OpenAI Ships GPT-5.4-Cyber for Binary Exploit Scanning as Pipecat Hits 1.0 and Agent Memory Goes Three-Dimensional

OpenAI's new cybersecurity-focused model can find exploits in compiled binaries without source code, which strengthens defenders but also raises new attack-surface concerns. The agent ecosystem matures with Pipecat reaching 1.0, multi-agent orchestration frameworks emerging, and a compelling argument for three-dimensional agent memory. Meanwhile, developers continue pushing coding agents such as Claude Code and Codex into unexpected territory, from prediction market bots to game development.

Daily Wrap-Up

The most consequential announcement today isn't about making AI faster or cheaper. It's about making it dangerous on purpose. OpenAI's GPT-5.4-Cyber is a model explicitly fine-tuned to find software exploits, and it can do it on compiled binaries without ever seeing source code. That's a genuine paradigm shift in security research, and the tiered access system where "verified defenders" get a more permissive model than the public raises all sorts of questions about who gets to wield these tools. On the builder side, the agent infrastructure layer is quietly solidifying. Pipecat hitting version 1.0 after two years of development, Cognee offering three-dimensional agent memory, and Aurogen rebuilding OpenClaw around proper multi-agent orchestration all point to the same thing: we're moving past the "can AI do X?" phase and into "how do we architect reliable systems around AI?"

The vibe coding movement continues to blur the line between professional development and weekend tinkering, with game developers using Codex for procedural generation and Superblocks 2.0 explicitly positioning itself as the enterprise answer to shadow AI development. Chamath's Software Factory walkthrough and the design agency pivot described by @abnux both tell the same story from different angles: the unit of work in software is changing from "lines of code" to "systems that generate code." Anthropic quietly shifting Claude Enterprise to usage-based billing suggests they're seeing exactly this kind of heavy, automated usage pattern and pricing accordingly.

The most entertaining moment goes to @luaroncrew, who won an RTX 5090 at a Mistral hackathon by training an image generation model to do technical analysis on trading charts, only to realize he uses a MacBook and has no desktop to put the GPU in. It's a perfect metaphor for 2026: the tools are arriving faster than anyone can figure out what to do with them.

The most practical takeaway for developers: invest time in building reusable AI scaffolding rather than one-off prompts. Whether it's Claude Skills (folders that teach the model your workflow), design systems that agents can reference, or structured memory layers for your agents, the teams seeing real leverage are the ones who treat AI configuration as infrastructure, not conversation.

Quick Hits

- @f4micom highlights @lauriewired's deep dive into historical memory conservation techniques like Hitachi's SuperH architecture, which used 16-bit instructions on a 32-bit chip to fit twice as many instructions into each cache line. The "RAM crisis" is sending engineers back to the 90s for optimization ideas.

- @Scobleizer sees the holodeck arriving after @sparkjsdev launched Spark 2.0, an open-source streamable level-of-detail system for 3D Gaussian Splatting that pushes 100M+ splats to any device via WebGL2.

- @gusgarza_ built a cockroach-infested subway world in minutes using ThreeJS, MeshyAI, and World Labs, continuing the trend of rapid 3D prototyping with AI-assisted tools.

- @techwith_ram dropped a guide on knowledge graph optimization, arguing that companies like Google are building "digital DNA" through knowledge graphs to ensure AI systems actually understand organizational identity.

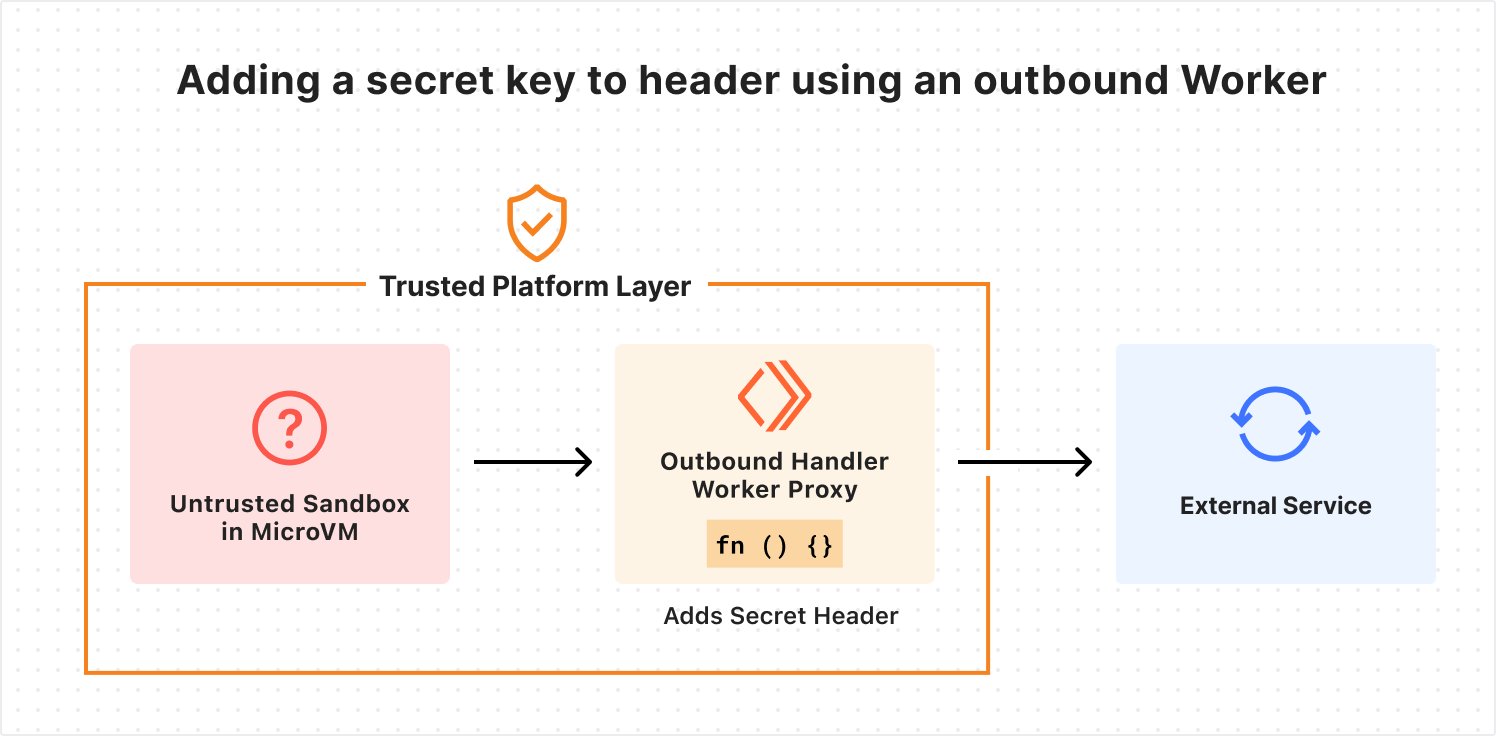

- @larsencc notes that even Cloudflare appears to have drawn from his blog post on building secure, scalable agent sandbox infrastructure. The agent sandbox pattern is clearly gaining mainstream infrastructure attention.

- @unusual_whales reports Anthropic is shifting Claude Enterprise to usage-based billing, raising costs for heavy users. A sign that enterprise AI consumption patterns are outpacing flat-rate pricing models.

- @jessegenet reports GLM 5.1 running locally "actually works," enabling OpenClaw workflows to run for just the cost of electricity. The local inference story keeps getting more practical.

AI-Powered Development Tools and Workflows

The tooling layer around AI-assisted development is thickening fast, and today's posts show it happening across multiple fronts simultaneously. What's notable isn't any single tool but the pattern: developers are building meta-tools that make AI development itself more systematic and repeatable. @sukh_saroy shared CodeFlow, a single HTML file that turns any GitHub repo into an interactive architecture map with dependency graphs, blast radius analysis, and security scanning. No install, no account, no build step. It's the kind of tool that feels trivial until you realize it solves the "help the AI understand my codebase" problem that every developer hits eventually.

On the workflow side, @kirillk_web3 had something of an epiphany watching two Anthropic engineers explain Claude Skills: "Skills are just folders. Folders that teach Claude your job. Your workflow. Your expertise. Your domain." The realization that persistent, structured context beats clever prompting is spreading. @pbakaus pointed to a related project from @mbwsims called Claude Universe, a plugin that teaches Claude Code to think systematically about security, testing, and codebase analysis rather than treating each session as a blank slate.

The thread connecting these is that raw model capability matters less than the scaffolding you build around it. @abnux described how their design agency's entire operating model shifted after building trained design and PM agents: "What I hear from our new set of clients is that there's a lot of value in setting up that scalable system such that design can truly go across the org via Claude Code. It feels like there's no going back." Their agency moved from recurring retainers to one-time system setup sprints. That's not a tool change; it's a business model change driven by the realization that well-configured AI systems compound in value.

Agent Architecture and Orchestration

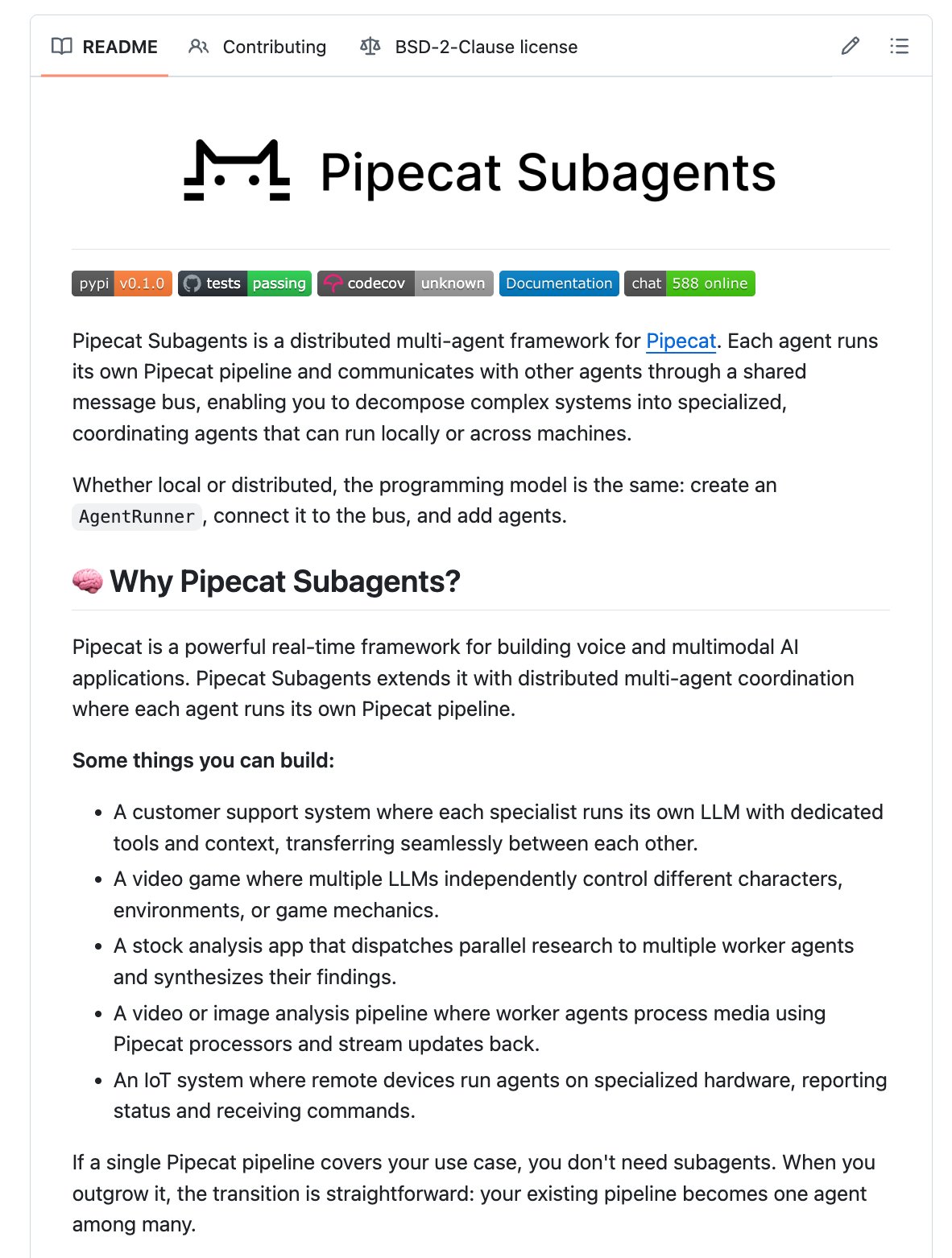

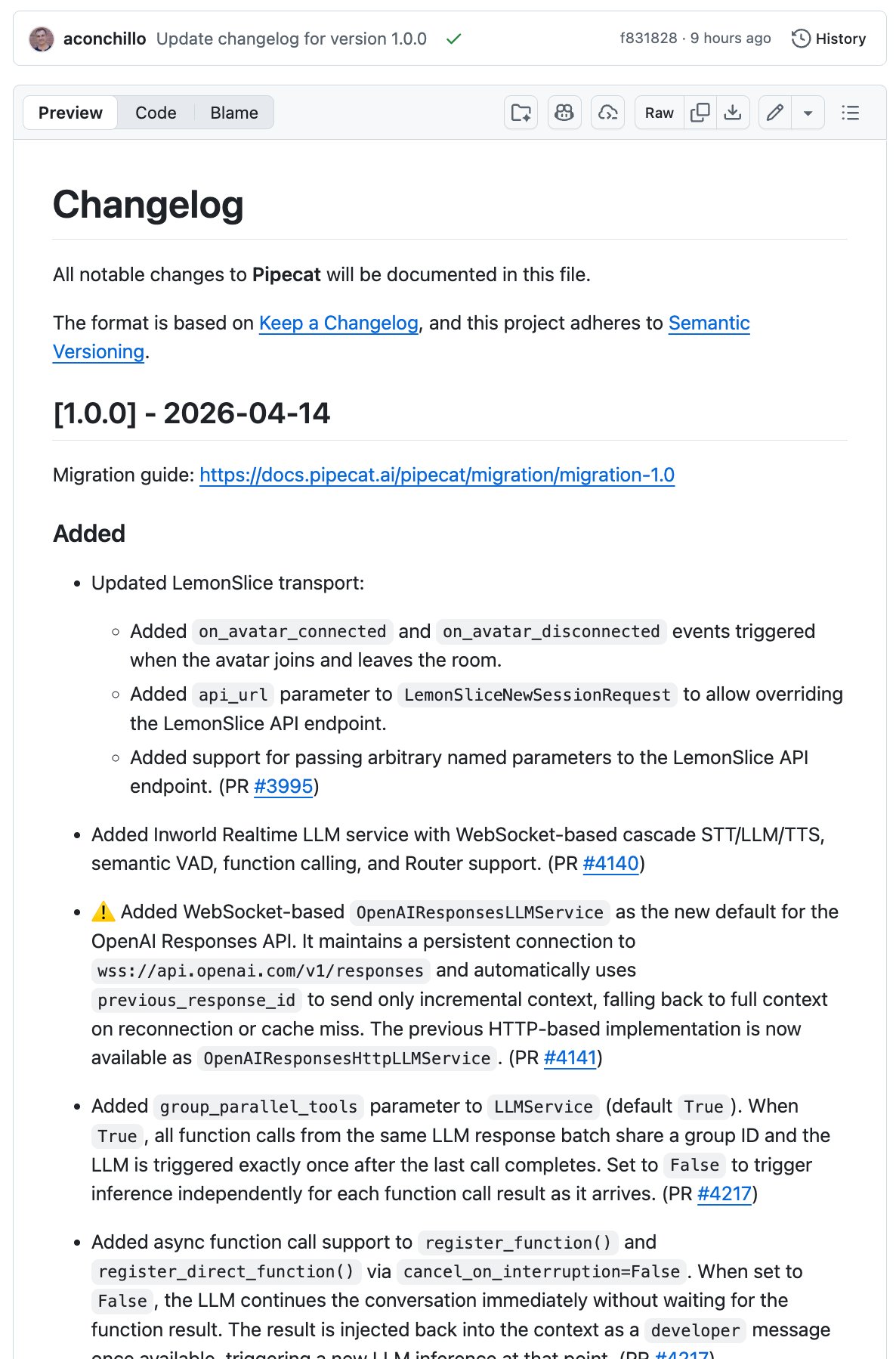

The agent infrastructure conversation leveled up today with three distinct but complementary developments. @kwindla announced Pipecat 1.0 after two years of development, positioning it not just as a voice agent framework but as a general-purpose platform for "realtime, multi-modal, multi-model AI applications." The launch came alongside Pipecat Subagents, a library for running multiple inference loops in parallel with partially shared context. The fact that they proved the architecture by building Gradient Bang, described as "the first massively multiplayer, completely LLM-driven game," suggests the framework handles real complexity, not just demo-day scenarios.
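The idea of several inference loops running in parallel while sharing only part of their context can be sketched in a few lines. This is a minimal illustration in plain `asyncio`, not the actual API of Pipecat or Pipecat Subagents; `fake_llm`, `subagent`, and `run_all` are hypothetical names, and the model call is a stub.

```python
import asyncio

async def fake_llm(context, task):
    """Stub standing in for a real model call (hypothetical)."""
    await asyncio.sleep(0)  # yield to the event loop, as a network call would
    return f"{task} (saw {len(context)} context items)"

async def subagent(name, task, shared, private):
    # Each loop sees the shared context plus only its own private notes.
    context = list(shared) + list(private)
    reply = await fake_llm(context, task)
    return name, {"context": context, "reply": reply}

async def run_all():
    shared = ["setting: retro space trading game"]  # visible to every subagent
    jobs = [
        subagent("navigator", "plot a route", shared, ["fuel is low"]),
        subagent("trader", "find the best price", shared, ["cargo: 3 ore"]),
    ]
    # gather() runs the loops concurrently and preserves job order.
    return dict(await asyncio.gather(*jobs))

if __name__ == "__main__":
    for name, result in asyncio.run(run_all()).items():
        print(name, "->", result["reply"])
```

The design choice worth noticing is that the shared list is copied into each subagent's context, so one loop's private notes never leak into another's prompt. A real system would also need a way to write results back into shared state.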

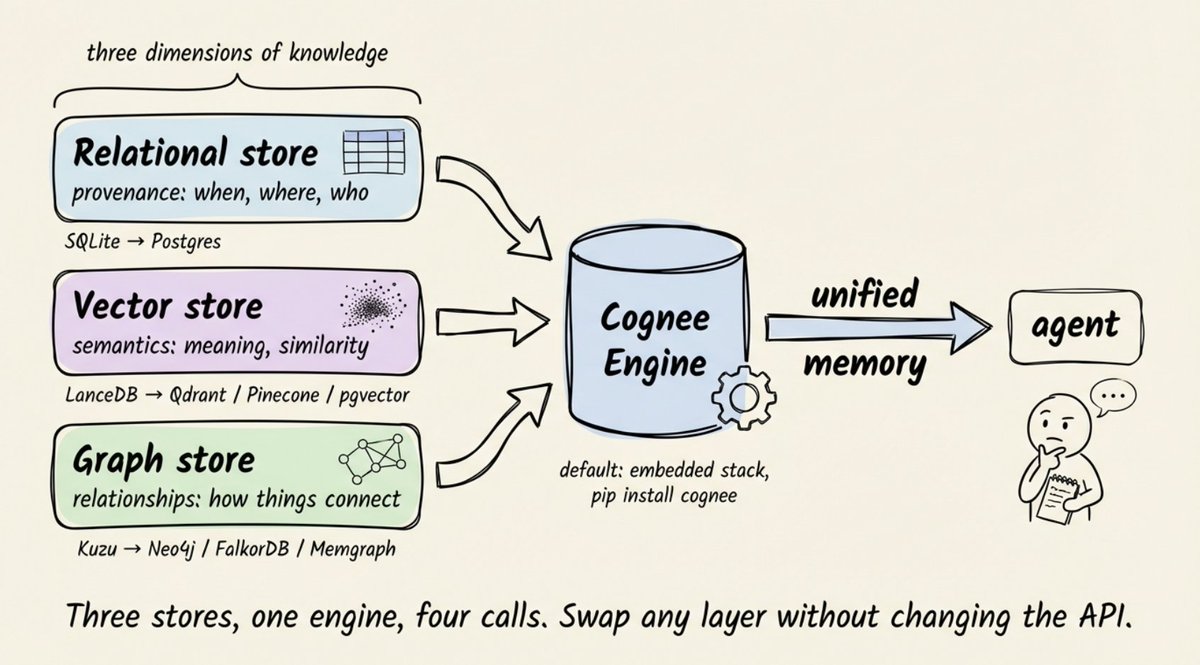

Meanwhile, @akshay_pachaar made one of the most technically precise arguments of the day about why single-store agent memory fails. His example is elegant: store "Alice is the tech lead on Project Atlas," "Project Atlas uses PostgreSQL," and "PostgreSQL went down Tuesday," then ask if Alice's project was affected. Vector search retrieves the facts about Alice and about Tuesday's outage but misses the bridge fact, "Project Atlas uses PostgreSQL," that connects them. His proposed solution through Cognee combines relational stores for provenance, vector stores for semantics, and graph stores for relationships: "Enter through vectors, then traverse the graph, with provenance grounding every result back to its source."
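The Alice example can be reproduced with a toy version of the three stores. The sketch below is not Cognee's API. It assumes a word-overlap score as a stand-in for embedding similarity, a hand-written edge list as the graph, and invented source labels for provenance; every name in it is hypothetical.

```python
import re

# Relational store: fact id -> (text, source). Source labels are invented.
FACTS = {
    1: ("Alice is the tech lead on Project Atlas", "org-chart"),
    2: ("Project Atlas uses PostgreSQL", "architecture-doc"),
    3: ("PostgreSQL went down Tuesday", "incident-log"),
}

# Graph store: one edge per fact, tagged with the fact id it came from.
EDGES = [
    ("Alice", "Project Atlas", 1),
    ("Project Atlas", "PostgreSQL", 2),
    ("PostgreSQL", "Tuesday", 3),
]

def words(text):
    return set(re.findall(r"[a-z]+", text.lower()))

def vector_search(query, k=2):
    """Toy stand-in for embedding similarity: rank facts by shared words."""
    q = words(query)
    score = lambda fid: len(q & words(FACTS[fid][0]))
    ranked = sorted(FACTS, key=score, reverse=True)
    return [fid for fid in ranked[:k] if score(fid) > 0]

def bridge_facts(hit_ids):
    """Graph step: facts outside the hits whose two endpoints the hits already touch."""
    touched = {node for a, b, fid in EDGES if fid in hit_ids for node in (a, b)}
    return [fid for a, b, fid in EDGES
            if fid not in hit_ids and a in touched and b in touched]

def recall(query):
    hits = vector_search(query)                        # enter through vectors
    ids = sorted(set(hits) | set(bridge_facts(hits)))  # then traverse the graph
    return [FACTS[fid] for fid in ids]                 # each result keeps its source

if __name__ == "__main__":
    for text, source in recall("Was Alice's project affected by what went down Tuesday?"):
        print(f"{text}  [{source}]")
```

With this query, `vector_search` alone returns facts 3 and 1, and the graph step adds fact 2, the PostgreSQL link between them. Real embeddings would rank differently, so treat the overlap score purely as scaffolding for the retrieval pattern.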

@ihtesham2005 introduced Aurogen as an OpenClaw alternative built around true multi-agent, multi-instance orchestration inside a single deployment: "The original runs one agent. This one runs as many as you want." The pattern across all three announcements is convergent: the industry is realizing that useful AI systems require multiple models working together with shared but bounded context, and the infrastructure to support that is finally arriving.

Cybersecurity Meets Frontier Models

OpenAI's release of GPT-5.4-Cyber marks a deliberate step into territory that most AI companies have carefully avoided. As @PaulSolt broke down, this is a model specifically built to find and fix software exploits, and its most striking capability is binary scanning: "Agents can find exploits in compiled apps... no source code required. That's a new attack surface." The model ships with tiered access based on identity verification, where verified defenders get a more permissive version than the general public.

The numbers backing the release are substantial. Codex Security has reportedly fixed over 3,000 critical vulnerabilities automatically, and OpenAI is scaling to thousands of verified defenders. But as @PaulSolt noted, "The binary scanning unlock is scary. Stuff like this hasn't been mainstream before." The dual-use nature of exploit-finding AI is obvious, and OpenAI's answer is essentially an identity-gated access system. Whether that's sufficient is a question the security community will be debating for months.

Vibe Coding and Game Development

The "just build things" ethos continues to gain momentum, particularly in game development where AI-assisted creation is producing genuinely playable results. @NicolasZu captured the energy: "It's SO fun to game dev with Codex. Adding usually super complex stuff like it's nothing: procedural generation, laser mining, inventory management." His advice distills to two things: "Become good at AI" and "Train your taste." The implication is that taste, not technical skill, is becoming the primary bottleneck.

@cgtwts profiled Superblocks 2.0, which is explicitly positioning against the vibe coding wave by offering enterprise controls around AI-generated apps. The quoted launch post from @bradmenezes doesn't mince words: "Vibe-coded apps just became the #1 attack vector in the enterprise. Business teams are building on production data, while IT has zero visibility." A Fortune 500 company reportedly shut off Replit for 2,500 users to standardize on Superblocks. The tension between creative freedom and organizational control is becoming a real product category.

AI as Economic Infrastructure

@r0ck3t23 offered the day's most ambitious framing, building on a Jeff Bezos quote calling AI a "horizontal enabling layer." The argument: Wall Street is pricing AI like the next iPhone (a vertical product) when it's actually the next electrical grid (a horizontal substrate). "You do not sell a horizontal layer. You do not compete with it. You build on top of it or you disappear beneath it." It's the kind of post that reads as hyperbolic until you look at today's other posts showing AI dissolving boundaries between design agencies, game studios, security teams, and prediction markets. The horizontal layer thesis may be grandiose, but the evidence keeps accumulating.

The Trading Bot Underground

@LunarResearcher's viral thread about building a Polymarket trading bot with Claude Code reads like fiction but maps to real tools and repos. The core claim: connecting Claude Code to a Polymarket dataset of 86 million trades, identifying 47 wallets with 70%+ win rates, and building a scanner that filters 500+ markets in real time. The key insight buried in the thread is about exits, not entries: "Same exact entries. The exits make it a completely different game." Top wallets capture 86% of the expected move and cut losers at 12%, while everyone else holds losers to 41%. Whether the claimed $8,700 return on an $800 seed is real or embellished, the underlying architecture of using AI as a runtime for data analysis rather than a chat box for generating prompts reflects a broader shift in how people think about these tools.
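The wallet-screening step described in the thread amounts to a grouped win-rate filter. The sketch below is not the thread's code and uses no Polymarket API. The trade rows are invented, and `top_wallets` and the `min_trades` cutoff are assumptions added to keep tiny samples from qualifying; only the 70% threshold comes from the thread.

```python
from collections import defaultdict

# Hypothetical (wallet, profit_or_loss) rows; a real dataset would be far larger.
SAMPLE_TRADES = [
    ("0xA", 5.0), ("0xA", 2.0), ("0xA", -1.0), ("0xA", 4.0),
    ("0xB", 3.0), ("0xB", -2.0), ("0xB", -1.0),
    ("0xC", 9.0),
]

def top_wallets(trades, min_rate=0.70, min_trades=3):
    """Return wallets whose share of profitable trades is at least min_rate."""
    wins, total = defaultdict(int), defaultdict(int)
    for wallet, pnl in trades:
        total[wallet] += 1
        wins[wallet] += pnl > 0  # True counts as 1
    return sorted(
        w for w in total
        if total[w] >= min_trades and wins[w] / total[w] >= min_rate
    )

if __name__ == "__main__":
    print(top_wallets(SAMPLE_TRADES))
```

On the sample rows only `0xA` qualifies: `0xB` wins one trade in three, and `0xC` has a perfect record on a single trade, which the `min_trades` guard screens out.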

Sources

https://t.co/dDGYm5PBsc

🚨do you understand what two Anthropic engineers just explained in 16 minutes. Barry and Mahesh built Claude Skills from scratch. here's the part nobody is talking about: > Skills are just folders. > folders that teach Claude your job. > your workflow. your expertise. your domain. Claude on day 30 is a completely different tool than day one. watch this before you write another prompt. before you build another agent. before you touch another tool. 16 minutes. bookmark it. watch it today. and if you want to learn everything about Claude from scratch the full 4 hour guide is waiting below.

Introducing Superblocks 2.0: AI-generated enterprise apps – finally under IT control. Vibe-coded apps just became the #1 attack vector in the enterprise. Business teams are building on production data, while IT has zero visibility. No reviews. No audits. No permissions. No control. AI hackers are about to get 100x better. Anthropic proved it with Mythos. Superblocks 2.0 is the only platform to take back control: > Business teams build AI-powered apps with permissions baked in. > IT and Security can audit everything and lock down anything, instantly. > Engineering sets the standards. Every app follows them. Instacart, SoFi, and LinkedIn run Superblocks in production today. And larger organizations we can't yet name are too: A Fortune 500 just shut down 2,500 Replit users to standardize on Superblocks, running the platform air-gapped in their AWS environment. A 150,000-employee global services firm replaced Lovable with Superblocks to unlock AI-built apps on restricted internal systems. Every IT leader we’ve demoed to using Replit, Lovable or v0 asked for early access. Today we open access to the world. The genie is out of the bottle on employee vibe coding. Let it run wild, or take back control – https://t.co/8TEolq14Z5

How to Make Knowledge Graphs Blazing Fast

How We Built Secure, Scalable Agent Sandbox Infrastructure

Spark 2.0 is here! 🚀 We’re redefining what’s possible on the web with a streamable LoD system for 3D Gaussian Splatting. Built on Three.js, you can now stream massive 100M+ splat worlds to any device from mobile to VR using WebGL2. All open-source. Dive into the tech 👇 https://t.co/VOd6V0Wz1s

In the 90s, Hitachi came up with a bizarre way to conserve memory bandwidth. Their SuperH architecture, intended to compete with ARM, was a 32-bit architecture that used…16 bit instructions. The benefit was really high code density. If you can fit twice as many instructions into every cache line, the CPU pipeline stalls way, way less. This was *really* important for embedded devices, which were often extremely bandwidth constrained in the era. Sega famously used the processors for the Dreamcast, and ARM actually ended up licensing their patents for Thumb mode! I think perhaps the weirdest thing about SuperH was its concept of “upwards compatibility”. The ISA itself is a microcode-less design, all future instructions were trapped and emulated by older chipsets. It’d be slow…but you could run future code on very old chips! Very neat design, a massive success through the 90s and 2000s, that slowly faded.

Teach Claude Code to think systematically. I got tired of having the same conversation with Claude Code. Review this for security. Are these tests sufficient? Can you find patterns in my codebase and update the instruction files? The answers were ok but inconsistent: no clear methodology, no memory between sessions, no systematic depth. So I built one Claude Code plugin, then another. Before I knew it I had five, covering instruction files, test coverage, security, codebase analysis, and code evolution. I decided to merge them into one integrated plugin. Claude universe was available so I figured why not… The Claude Universe plugin: teach Claude Code to think systematically Github link (entirely open source): https://t.co/kGFSGnryRu More at https://t.co/KLovBt5vDI

Our Head of Product John Calzaretta walks through the full Software Factory platform live: Refinery, Foundry, Planner, Validator, Knowledge Graph, MCP integration with Cursor, Claude Code, and others. Live Q&A included. Full demo: https://t.co/jQns1Gqrwn https://t.co/y1OrzYpEFI

We’re expanding Trusted Access for Cyber with additional tiers for authenticated cybersecurity defenders. Customers in the highest tiers can request access to GPT-5.4-Cyber, a version of GPT-5.4 fine-tuned for cybersecurity use cases, enabling more advanced defensive workflows. https://t.co/RMMXQklFar

how i rebuilt our landing page in 4 hrs with Claude

Sub-agents in (latent) space! We’ve been working on a side project. As far as I know, this is the first massively multiplayer, completely LLM-driven game. Come play Gradient Bang with us. See if you can catch me on the leaderboard. This whole thing started because I wanted to explore a bunch of things I’m currently obsessed with, in an application of non-trivial size, that felt both new and old at the same time. So … a retro-style space trading game built entirely around interacting with and managing multiple LLMs. Factorio, but instead of clicking, you cajole your ship AI into tasking other AIs to do things for you. Some of the things we’ve been thinking about as we hack on Gradient Bang: - Sub-agent orchestration - Partial context sharing between multiple LLM inference loops - Managing very long contexts, and episodic memory across user sessions - World events and large volumes of structured data input as part of human/agent conversations - Dynamic user interfaces, driven/created on the fly by LLMs - And, of course, voice as primary input If you’ve been building coding harnesses, or writing Open Claw agents, or doing pretty much anything that pushes the boundaries of AI-native development these days, you’re probably thinking about these things too! This is all built with @pipecat_ai, the back end is @supabase, the React front end is deployed to @vercel, and all the code is open source.

An awesome trick for gamedevs here in the #vibejam or overall, if like me you don't know how to make good trees or rocks or any props Ask GPT-5.4 in Codex to make - a tree tool - a rock tool to allow you to tweak procedural trees and rock or props generation DIRECTLY in your game - Work with the AI to define a style and parameters you want to play with - Then see directly in game what you prefer - Save And now it's done And you can easily do that for seasons, adding more types of trees, etc.

Build Agents that never forget