Kronos Foundation Model Targets Financial Markets as AI Community Debates Ideas vs. Execution

An open-source financial forecasting model trained on 12 billion records across 45 exchanges made waves today, while Ethan Mollick sparked discussion about whether AI commoditizes execution and elevates the value of truly original ideas. Meanwhile, Seedance 2 showed surprising progress on AI's longtime nemesis: rendering human hands.

Daily Wrap-Up

Today's discourse landed squarely on a tension that's been simmering for months: if AI makes building things dramatically cheaper, what actually matters? Ethan Mollick framed it sharply, noting that AI can generate plenty of interesting ideas but struggles with the truly outlier ones, the kind that create new categories rather than iterating on existing ones. Paul Smith pushed back with the pragmatic counter that execution hasn't gotten easier in the ways that count. The software might write itself, but focus, discipline, and simplicity remain stubbornly human problems. It's a useful corrective to the "ideas are all that matter now" narrative. The people who ship things know that the hard part was never typing the code.

On the technical side, the Kronos model out of Tsinghua University represents an interesting trend in domain-specific foundation models. Rather than fine-tuning a general-purpose LLM for finance, the team built something native to candlestick data from scratch. Whether the claimed 93% improvement over the leading time series model holds up in production trading environments is another question entirely, but the architectural decision to treat financial time series as a first-class data type rather than shoehorning it into a text model is sound engineering. David Cramer's skepticism about local model hype provides a nice counterweight here: open-source and runs-on-your-laptop sounds great until you actually try to run inference at scale. The gap between a demo and a deployable system remains wide.

The most entertaining moment was fofr marveling at Seedance 2's hand rendering, a callback to the years when "AI can't do hands" was the universal tell for generated imagery. It's not perfect yet, but the progress is genuinely striking.

The most practical takeaway for developers: if you're working in a specialized domain like finance or medicine, pay attention to the emerging wave of domain-native foundation models. General-purpose LLMs are incredibly versatile, but purpose-built models trained on domain-specific data representations are starting to show significant performance advantages, and many are shipping under permissive open-source licenses.

Quick Hits

- @matteocollina ran into Claude's usage policy filter while doing open-source coding work, a reminder that overzealous content filters remain a friction point for legitimate developer workflows.

- @loganthorneloe is promoting AI books releasing from early access at 35% off, covering RAG systems and core AI concepts for developers looking to level up.

- @github_skydoves shared photos from Shibuya and Shinjuku with the RevenueCat team, as the mobile monetization platform continues its developer relations push in Japan.

- @alexismediaco is studying @DannyLimanseta's "Tiny Skies" game from the #vibejam competition, noting that simplified game assets are key to achieving smooth performance in AI-assisted game development.

- @CNET shared footage of Jensen Huang walking through the Vera Rubin System at GTC 2026, part of Nvidia's continued push into next-generation GPU architecture for AI workloads.

- @techNmak posted a follow-request tweet that can be safely filed under "algorithmic noise."

The Ideas vs. Execution Debate

The most thought-provoking thread of the day centered on a deceptively simple question: as AI drives down the cost of building things, does the value shift entirely to having good ideas? @emollick kicked it off with a nuanced observation: "Really interesting ideas are going to be increasingly at a premium as the cost of executing those ideas drops." But he added an important caveat drawn from his own research, noting that AI "is quite good at generating interesting ideas, but not nearly as good at generating outlier really interesting ideas." This creates a fascinating paradox. AI lowers the barrier to execution while simultaneously flooding the zone with competent-but-unremarkable ideas, potentially making the truly novel ones even more valuable by contrast.

@realpaulsmith offered the practitioner's rebuttal, arguing that "the software/build side of execution gets easier with AI. The business side of execution - focus / discipline / simplicity - will still be the thing that trips most people up." This rings true for anyone who's shipped a product. The bottleneck was rarely the code itself. It was deciding what to build, saying no to feature creep, and maintaining coherence across a growing system. AI can write your functions, but it can't tell you which functions matter. The synthesis here is that we're not heading toward an "ideas-only" economy. We're heading toward one where the middle layer of execution, the rote translation of specs into code, gets compressed, while the top layer (vision, taste, judgment) and the bottom layer (operational discipline, user empathy) remain distinctly human advantages.

Domain-Specific Foundation Models Hit Finance

The biggest technical story today was Kronos, a foundation model built specifically for financial market prediction, coming out of Tsinghua University. What makes it architecturally interesting isn't the accuracy claims, though those are eye-catching, but the design philosophy. @heynavtoor laid out the core pitch: "Not a general AI repurposed for finance. An AI that speaks the native language of candlestick patterns." The model was trained on 12 billion records from 45 exchanges and ships in four sizes, from a 4M parameter version that runs on a laptop to a 499M parameter model for maximum accuracy. It handles price forecasting and volatility prediction, and it is pitched as working zero-shot across any asset class, market, or timeframe.

The claimed numbers are striking: "93% more accurate than the leading time series model" and "87% more accurate than the best non-pretrained baseline," all without fine-tuning. The model has been accepted at AAAI 2026, carries an MIT license, and has 11.6K GitHub stars. The live BTC/USDT demo, which updates hourly, adds a layer of accountability you don't usually see from academic papers. That said, anyone who's worked in quantitative finance knows that backtested performance and live trading performance are separated by a chasm of slippage, liquidity constraints, and regime changes. The real test for Kronos will be whether it maintains its edge when actual capital is on the line, not just in research benchmarks.
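
The backtest-versus-live gap can be made concrete with a little arithmetic. The sketch below is illustrative only: the per-trade edge, the cost figures, and the function name `net_return` are assumptions chosen for the example, not numbers from the Kronos paper or its demo. It models slippage and fees as a flat per-trade cost subtracted from a hypothetical backtested edge.

```python
def net_return(gross_edge_bps: float, cost_bps: float, trades: int) -> float:
    """Compound return after `trades` trades.

    gross_edge_bps: hypothetical average backtested gain per trade, in basis points.
    cost_bps: hypothetical slippage plus fees per trade, in basis points.
    """
    per_trade = (gross_edge_bps - cost_bps) / 10_000
    return (1 + per_trade) ** trades - 1


# Hypothetical strategy: 5 bps average edge per trade over 1,000 trades.
for cost in (0.0, 2.0, 5.0, 8.0):
    result = net_return(5.0, cost, 1000)
    print(f"cost={cost:.0f} bps -> net return {result:+.1%}")
```

Under these made-up numbers, a cost equal to the edge wipes out the return entirely, and anything above it turns a winning backtest into a losing live strategy.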

What's strategically significant is the trend Kronos represents. We're moving past the era where every AI application starts with a general-purpose LLM and a fine-tuning pipeline. Domain-native models that understand the fundamental data structures of their field, whether that's candlestick patterns, protein sequences, or medical imaging, are showing that specialization at the pre-training level can unlock performance that transfer learning alone can't match.

The Local Models Reality Check

@zeeg offered a characteristically blunt take on the local AI discourse: "an awful lot of people promote local models when they're unusable (hardware wise, perf wise, or simple outcomes)." He framed it as a litmus test for whether someone has meaningful contributions to the conversation. It's a pointed observation that cuts through the enthusiasm that often surrounds open-source model releases, including ones like Kronos that advertise laptop-friendly variants.

The tension is real and worth sitting with. Open-source models running locally offer genuine advantages in privacy, cost, and latency. But the gap between "technically runs" and "runs well enough to be useful in production" is enormous. A 4M parameter financial model on a laptop might produce predictions, but whether those predictions are actionable at the speed required for trading is a different question entirely. The local model conversation doesn't need less enthusiasm. It needs more honesty about these tradeoffs and more precision about where local inference actually makes sense versus where it's a hobbyist exercise dressed up as a production solution.
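
One way to add that precision is to separate "fits in memory" from "is useful." The back-of-the-envelope sketch below estimates weight storage for the smallest and largest Kronos sizes quoted above, assuming 4 bytes per parameter (fp32) and ignoring activations and runtime overhead. The helper name `weight_megabytes` is hypothetical.

```python
def weight_megabytes(num_params: int, bytes_per_param: int = 4) -> float:
    """Approximate size of the weights alone, in decimal megabytes.

    Assumes fp32 storage by default; real deployments may use other precisions.
    """
    return num_params * bytes_per_param / 1_000_000


for label, params in [("4M", 4_000_000), ("499M", 499_000_000)]:
    print(f"{label} parameters: ~{weight_megabytes(params):,.0f} MB in fp32")
```

By this rough estimate the weights come to about 16 MB and about 2 GB respectively, so loading the model is unlikely to be the obstacle on an ordinary laptop. The open questions are the ones raised above: latency and whether the predictions are good enough to act on.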

AI Image Generation Keeps Climbing

@fofrAI highlighted a quiet milestone in generative AI: Seedance 2 producing surprisingly competent hand renderings. "Do you remember when AI couldn't do hands?" they asked, sharing video samples that, while "still feels off in parts," demonstrate dramatic improvement over the mangled fingers that became a meme during the Stable Diffusion and Midjourney era. The qualifier matters: we're not at photorealistic perfection, but the trajectory is clear. The well-known failure modes of generative models (hands, text, consistent character identity) are falling one by one. For developers building products on top of image and video generation APIs, this steady improvement in edge cases means fewer manual touch-ups and broader applicability for commercial use cases.

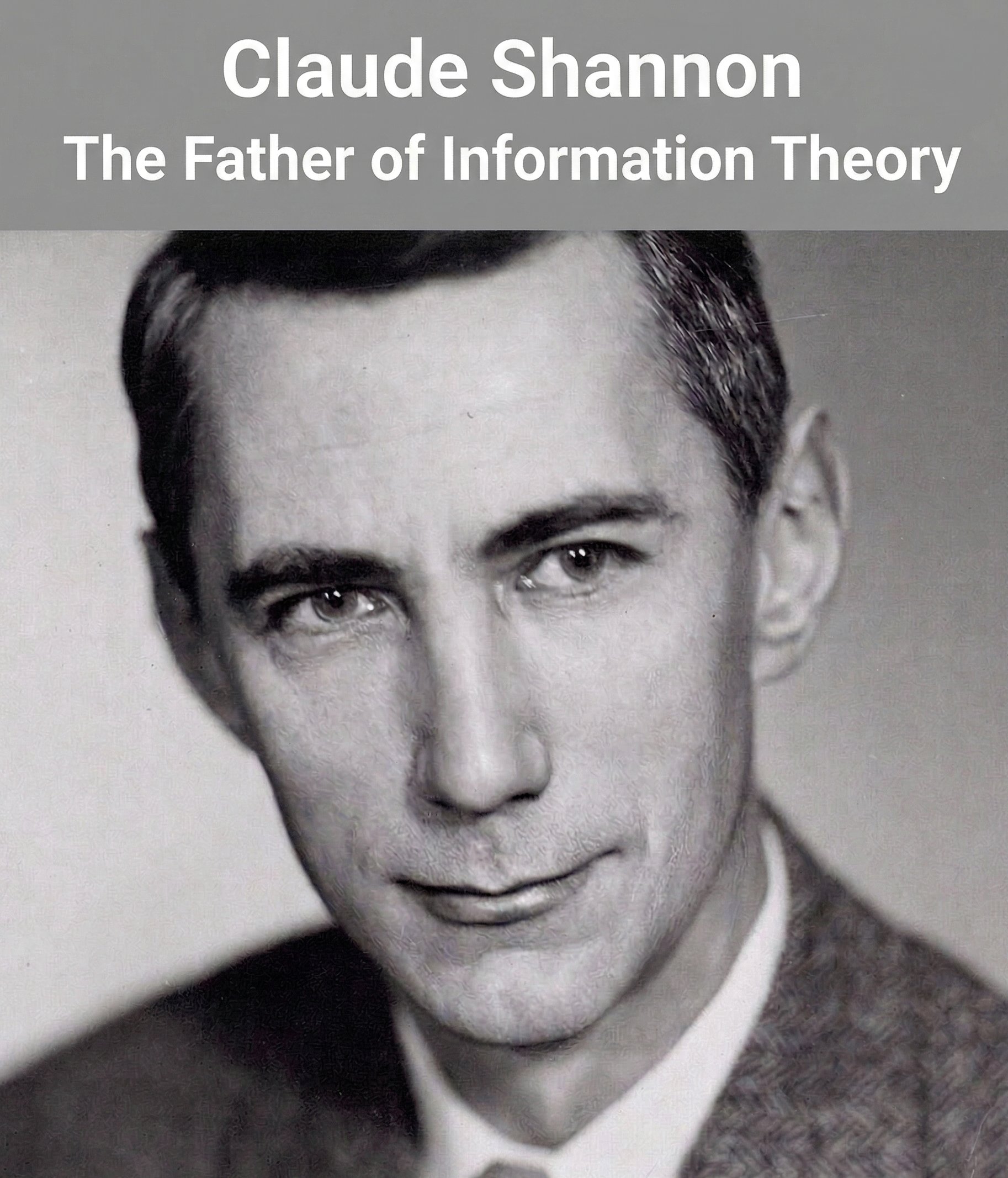

Claude Shannon: The Man Behind the Bit

@techNmak shared an extensive tribute to Claude Shannon, tracing the through-line from his 1937 master's thesis connecting Boolean algebra to electrical circuits through to modern deep learning. The post is a worthwhile read for anyone in the AI space who hasn't dug into the foundational history. The connection to today's models is direct and mathematical: "Cross-entropy loss, the function training every classifier and language model, is derived directly from" Shannon's entropy equation. Every gradient descent step that minimizes a cross-entropy loss is, in a fairly literal sense, optimizing a quantity built from Shannon's formula. It's a useful reminder that the current AI revolution didn't emerge from nowhere. It sits atop decades of theoretical work by people who were driven by curiosity rather than commercial ambition, work that only became practically relevant when compute caught up with the math.
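
The relationship in that quote can be checked numerically. This is a minimal sketch using only the standard library; the two distributions are made-up examples and the function names are illustrative. It shows that cross-entropy H(p, q) equals Shannon entropy H(p) plus the KL divergence D_KL(p || q), which is why cross-entropy bottoms out at H(p) when the predicted distribution q matches the true distribution p.

```python
import math


def entropy(p):
    """Shannon entropy H(p) = -sum(p_i * log2(p_i)), in bits."""
    return -sum(pi * math.log2(pi) for pi in p if pi > 0)


def cross_entropy(p, q):
    """H(p, q) = -sum(p_i * log2(q_i)); assumes q_i > 0 wherever p_i > 0."""
    return -sum(pi * math.log2(qi) for pi, qi in zip(p, q) if pi > 0)


def kl_divergence(p, q):
    """D_KL(p || q) = sum(p_i * log2(p_i / q_i)), in bits."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)


p = [0.5, 0.25, 0.25]   # made-up "true" distribution
q = [0.25, 0.25, 0.5]   # made-up model prediction

print("H(p)        =", entropy(p))
print("H(p, q)     =", cross_entropy(p, q))
print("H(p) + D_KL =", entropy(p) + kl_divergence(p, q))
print("H(p, p)     =", cross_entropy(p, p))
```

Since H(p) is fixed by the data, lowering the cross-entropy loss during training amounts to shrinking the KL term, that is, pulling the model's predictions toward the true distribution.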

Sources

Rainy day vibes! 🎵 (Turn on sound for cosy vibes) I think I've settled on the #vibejam game concept. It's called Tiny Skies, it's a charming, cosy flying game where you fly around a tiny world, delivering packages, exploring the world and getting to know its inhabitants. If you've played Nintendo Animal Crossing before, you would probably recognise the kind of cosy vibes I'm going for.