Leaked DeepSeek V4 Specs Claim a Trillion-Parameter Architecture as Claude Code Billing Concerns and Security Guides Dominate Developer Chatter

Today's feed centers on the Claude Code ecosystem, with posts spanning billing surprises, security hardening, and creative (if questionable) use cases like tax filing. Meanwhile, leaked specs for DeepSeek V4 promise a trillion-parameter MoE model, and local AI demos show Gemma 4 orchestrating SAM 3.1 entirely on a MacBook.

Daily Wrap-Up

The Claude Code discourse has reached a fascinating inflection point. It's no longer about whether developers are using it, but about the messy realities of living with it daily. Today we saw concerns about surprise billing when free credits run out, a comprehensive security hardening guide making the rounds, and someone claiming they filed their taxes with it. That last one should probably come with a disclaimer, but it captures the current mood perfectly: people are throwing these tools at every problem they can find, consequences be damned.

On the model front, the most interesting development is the leaked DeepSeek V4 spec sheet claiming a trillion-parameter mixture-of-experts architecture that activates only 37 billion parameters at inference time. If even half those benchmarks hold up, the cost-to-capability ratio continues its relentless march downward. Pair that with @MaziyarPanahi's demo of Gemma 4 orchestrating SAM 3.1 for vehicle segmentation entirely on a MacBook, and you start to see a world where serious AI workloads genuinely don't need cloud infrastructure. The Codex ecosystem is also chugging along with a meaty double update that landed memory extensions, MCP overhauls, and hook improvements.

The most practical takeaway for developers: if you're using Claude Code or any AI coding agent, spend 15 minutes today locking down your permissions. @Axel_bitblaze69's three-tier security guide is worth bookmarking. At minimum, enable sandbox mode and set up a deny list for credentials, SSH keys, and environment files. The tools are powerful enough now that the blast radius of a misconfiguration is real.

Quick Hits

- @Scobleizer got a demo of Niantic's spatial reconstruction platform, calling it "the Holodeck." The tech builds on Scaniverse's digital twin capabilities to create living 3D models synced with reality.

- @thdxr is marveling at TUI plugin capabilities after seeing a voice integration demo inside OpenCode by @LukeParkerDev. The terminal-based AI tool ecosystem keeps getting weirder and more capable.

- @kanjun open-sourced Bouncer, responding to @nikitabier's prediction that the idea had a "72 hour shelf life" by simply shipping the repo. The best response to "big companies will kill this" remains "here's the code, good luck."

- @grinich argues the UI era is ending after 70 years of interface paradigms, from mainframes to touch. His talk at Mastra's conference explores what comes after the interface disappears entirely.

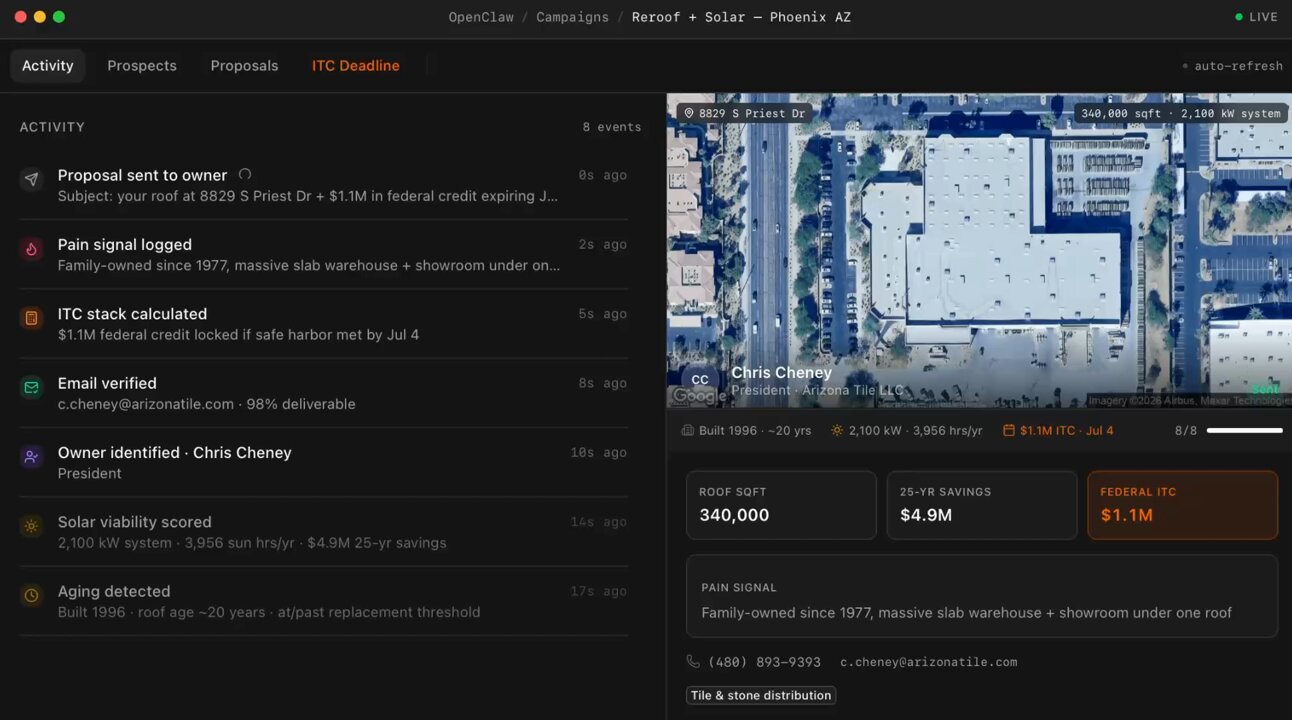

- @everestchris6 showcases OpenClaw, a bot that scans satellite imagery of commercial roofs, renders solar panels on buildings, calculates federal tax credits, finds property owners, and ships personalized proposals on autopilot. Peak AI sales automation.

Claude Code: Billing, Security, and Pushing the Boundaries

Claude Code dominated today's conversation, but not in the way Anthropic's marketing team might prefer. The tool has clearly reached mass adoption, and with that comes the growing pains of users discovering how the billing model actually works once promotional credits expire.

@doodlestein captured the frustration many are feeling: "Was this all a ploy to get people to turn on extra usage in Claude Code so that once the $200 credit gets used up, people start racking up huge bills? It's very easy to miss that it's doing that. I kind of hate this, now I need to watch stuff like a hawk to know when to stop it." It's a pattern we've seen with cloud services for years: generous free tiers that create habits, followed by the sticker shock of actual usage-based pricing. The difference here is that AI coding agents can burn through tokens at a rate that makes a forgotten EC2 instance look quaint.

On the security front, @Axel_bitblaze69 posted what might be the most useful thread of the day, a three-level security hardening guide for Claude Code. The core insight is sobering: "by default, claude code can read your SSH keys, AWS credentials, every .env file, and push code wherever it wants. One bad repo with a hidden instruction and your data is gone." The guide walks through progressively stricter configurations, from a basic settings.json deny list (15 minutes) to Trail of Bits' audited security plugin (30 minutes) to full container isolation via devcontainers (1 hour). This kind of practical security guidance is exactly what the ecosystem needs as adoption scales.
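For a sense of what the first tier looks like, here is a minimal sketch that generates a deny list for a project-level settings.json. It assumes Claude Code's `permissions.deny` key and the `Tool(pattern)` rule syntax; the specific paths are illustrative choices rather than the guide's exact list, so check them against the current Claude Code settings documentation before relying on them.

```python
import json

# Illustrative deny rules (an assumption, not the guide's exact list).
# The Tool(pattern) syntax is assumed to follow Claude Code's permission rules.
DENY_RULES = [
    "Read(./.env)",
    "Read(./.env.*)",
    "Read(~/.ssh/**)",
    "Read(~/.aws/**)",
]


def build_settings(deny_rules):
    """Return a settings dict containing only a permissions deny list."""
    return {"permissions": {"deny": sorted(set(deny_rules))}}


def render_settings(deny_rules):
    """Serialize the settings as JSON text suitable for a settings.json file."""
    return json.dumps(build_settings(deny_rules), indent=2)


if __name__ == "__main__":
    print(render_settings(DENY_RULES))
```

Generating the file from a short list keeps the rules reviewable in one place. The stricter tiers (the audited plugin and container isolation) are not covered by this sketch.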

Then there's @leopardracer, who claims Claude Code turned a $13,000 tax bill into a $6,500 refund by processing 2,000+ transactions across two LLCs. No CPA involved. This is either a glimpse of the future or a cautionary tale waiting to happen, possibly both. The IRS probably has opinions about AI-filed returns, and "Claude told me to" is unlikely to hold up as an audit defense. Still, it illustrates the ambition users bring to these tools.

DeepSeek V4 and the Quantization Arms Race

The model architecture wars continue to heat up, with leaked specifications for DeepSeek V4 making the rounds. @teortaxesTex shared details from what appears to be a Chinese-language announcement: a trillion-parameter MoE architecture that activates roughly 37 billion parameters during inference, claiming a 35x speed improvement and 40% energy reduction. The quoted benchmarks are eye-catching: 99.4% on AIME 2026, 92.8% on MMLU, and 83.7% on SWE-Bench. Perhaps most notable is the claim of inference costs at 1/70th of GPT-4, with full compatibility with the OpenAI API format.

Whether these numbers survive independent benchmarking remains to be seen. DeepSeek has consistently delivered on efficiency claims in the past, but a trillion-parameter model with these activation ratios would represent a significant leap. The planned late April release and open-source weight distribution signal continued commitment to the open model ecosystem. The mention of native Huawei Ascend 950PR optimization is also worth noting for the geopolitical implications of AI hardware supply chains.
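To put the headline claim in perspective, the arithmetic below works out the activation ratio implied by the leaked figures. Both inputs come straight from the leak and are unverified; the function names are just for illustration.

```python
TOTAL_PARAMS = 1_000_000_000_000  # 1 trillion total parameters (leaked claim)
ACTIVE_PARAMS = 37_000_000_000    # ~37 billion active at inference (leaked claim)


def active_fraction(active, total):
    """Fraction of the model's parameters used at inference time."""
    return active / total


def sparsity_ratio(active, total):
    """How many times larger the full model is than its active slice."""
    return total / active


if __name__ == "__main__":
    print(f"active fraction: {active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS):.1%}")
    print(f"total / active:  {sparsity_ratio(ACTIVE_PARAMS, TOTAL_PARAMS):.1f}x")
```

If the leak is accurate, about 3.7% of the weights are active at inference time, so the full model is roughly 27 times larger than the slice doing the work.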

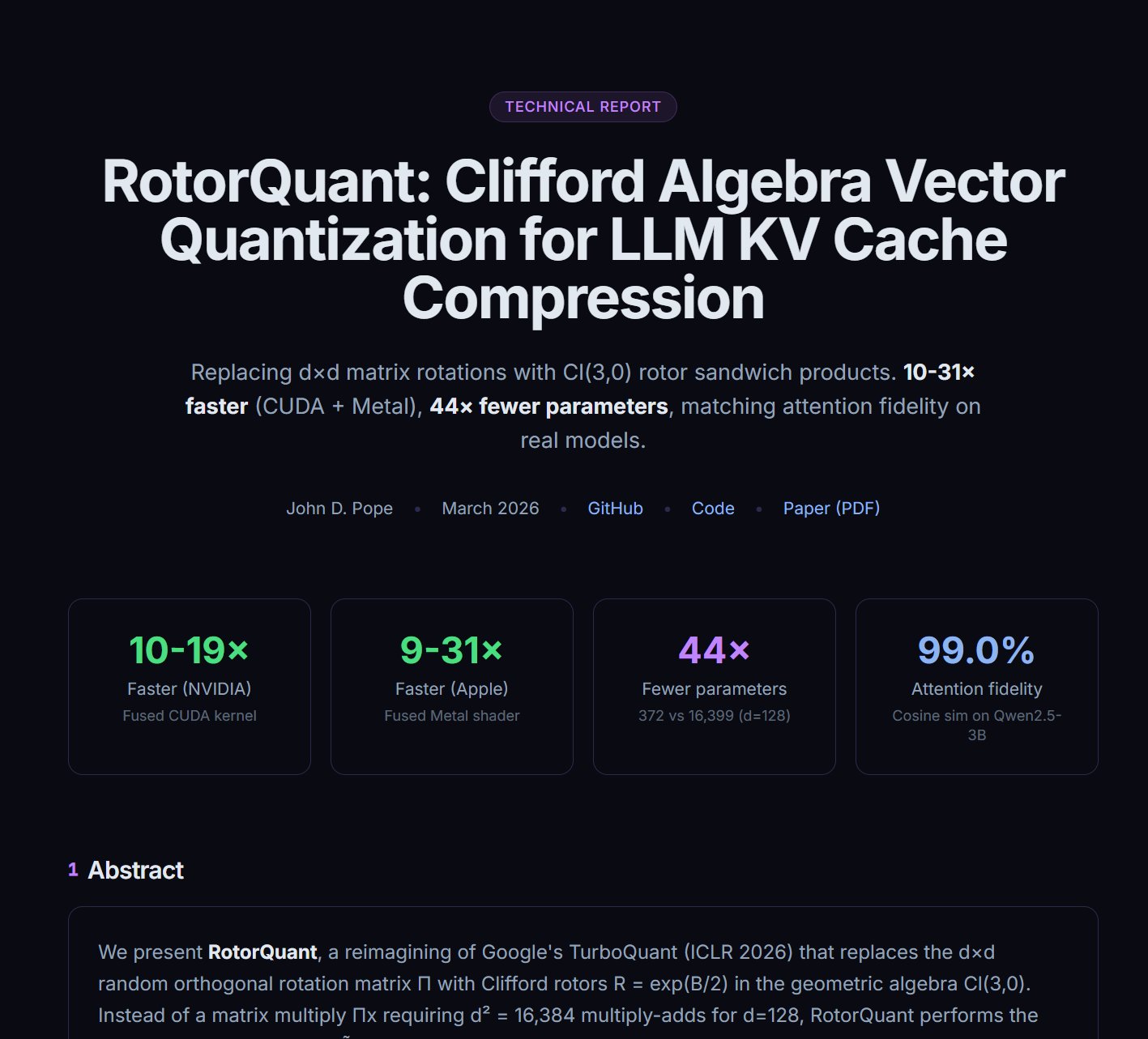

Separately, @aisearchio flagged what appears to be a new open-source quantization method that "beats Google's TurboQuant on every axis: better PPL, 28% faster decode, 5x faster prefill, 44x fewer parameters." The quantization space is moving fast enough that today's state-of-the-art compression technique is next month's baseline, but each increment matters for making models practical on consumer hardware.

Local AI Gets Surprisingly Capable

The dream of running serious AI workloads without cloud infrastructure took another step forward today. @MaziyarPanahi demonstrated a pipeline where Gemma 4 acts as a reasoning layer that orchestrates SAM 3.1 for image segmentation, all running locally on a MacBook via MLX: "Gemma 4 looks at a parking lot. Decides what to ask. Calls SAM 3.1. 'Segment all vehicles.' 64 found. 'Now just the white ones.' 23 found. One model reasoning and orchestrating. One model executing."

This is significant because it demonstrates multi-model orchestration, not just single-model inference, running entirely on local hardware. The pattern of a reasoning model directing a specialist model mirrors what cloud-based agent architectures do, but without the network latency, per-call cost, or data privacy concerns of API calls. As models get more efficient through better architectures and quantization techniques (see above), the gap between local and cloud capabilities continues to narrow for many practical workloads.
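The planner-plus-specialist pattern can be sketched in a few lines. Everything here is a hypothetical stand-in: `plan_prompts` plays the reasoning model, `segment` plays the segmentation model, and the scene is synthetic data sized to mirror the counts quoted in the demo. It does not call Gemma, SAM, or MLX.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vehicle:
    color: str


# Synthetic scene mirroring the demo's quoted counts (64 vehicles, 23 white).
SCENE = [Vehicle("white")] * 23 + [Vehicle("other")] * 41


def segment(scene, prompt):
    """Hypothetical stand-in for the specialist model: return matching objects."""
    if prompt == "all vehicles":
        return list(scene)
    if prompt == "white vehicles":
        return [v for v in scene if v.color == "white"]
    raise ValueError(f"unsupported prompt: {prompt}")


def plan_prompts(task):
    """Hypothetical stand-in for the reasoning model: decide what to ask, in order."""
    if task == "count vehicles, then white ones":
        return ["all vehicles", "white vehicles"]
    raise ValueError(f"unsupported task: {task}")


def orchestrate(scene, task):
    """The reasoning layer plans the prompts; the specialist executes each one."""
    return {prompt: len(segment(scene, prompt)) for prompt in plan_prompts(task)}


if __name__ == "__main__":
    print(orchestrate(SCENE, "count vehicles, then white ones"))
```

The point of the split is that the orchestrator only deals in prompts and results, so either side could be swapped for a different model without touching the other.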

Codex and the Agent Tooling Ecosystem

OpenAI's Codex received a substantial double update (0.119.0 and 0.120.0), and @fcoury highlighted @LLMJunky's comprehensive changelog breakdown. The release is notable for several reasons: memory extensions that allow third-party plugins to feed context into the summarization agent, a major MCP overhaul enabling tool search across custom servers and mid-flow user elicitations, and quality-of-life improvements like hook rendering and Zellij terminal support.
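Based on the changelog's description (plugin folders under memories_extensions/, each with an instructions.md), scaffolding an extension might look like the sketch below. The root directory, the plugin name, and the guidance text are all assumptions for illustration; the changelog does not say where memories_extensions/ lives, so the example writes to a temporary directory.

```python
from pathlib import Path
import tempfile


def scaffold_memory_extension(root, name, guidance):
    """Create <root>/memories_extensions/<name>/instructions.md and return its path."""
    folder = Path(root) / "memories_extensions" / name
    folder.mkdir(parents=True, exist_ok=True)
    instructions = folder / "instructions.md"
    instructions.write_text(guidance, encoding="utf-8")
    return instructions


if __name__ == "__main__":
    # A temporary directory stands in for the real Codex directory (unknown here).
    with tempfile.TemporaryDirectory() as tmp:
        path = scaffold_memory_extension(
            tmp, "project_notes", "Treat each file as a dated note.\n"
        )
        print(path.relative_to(tmp))
```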

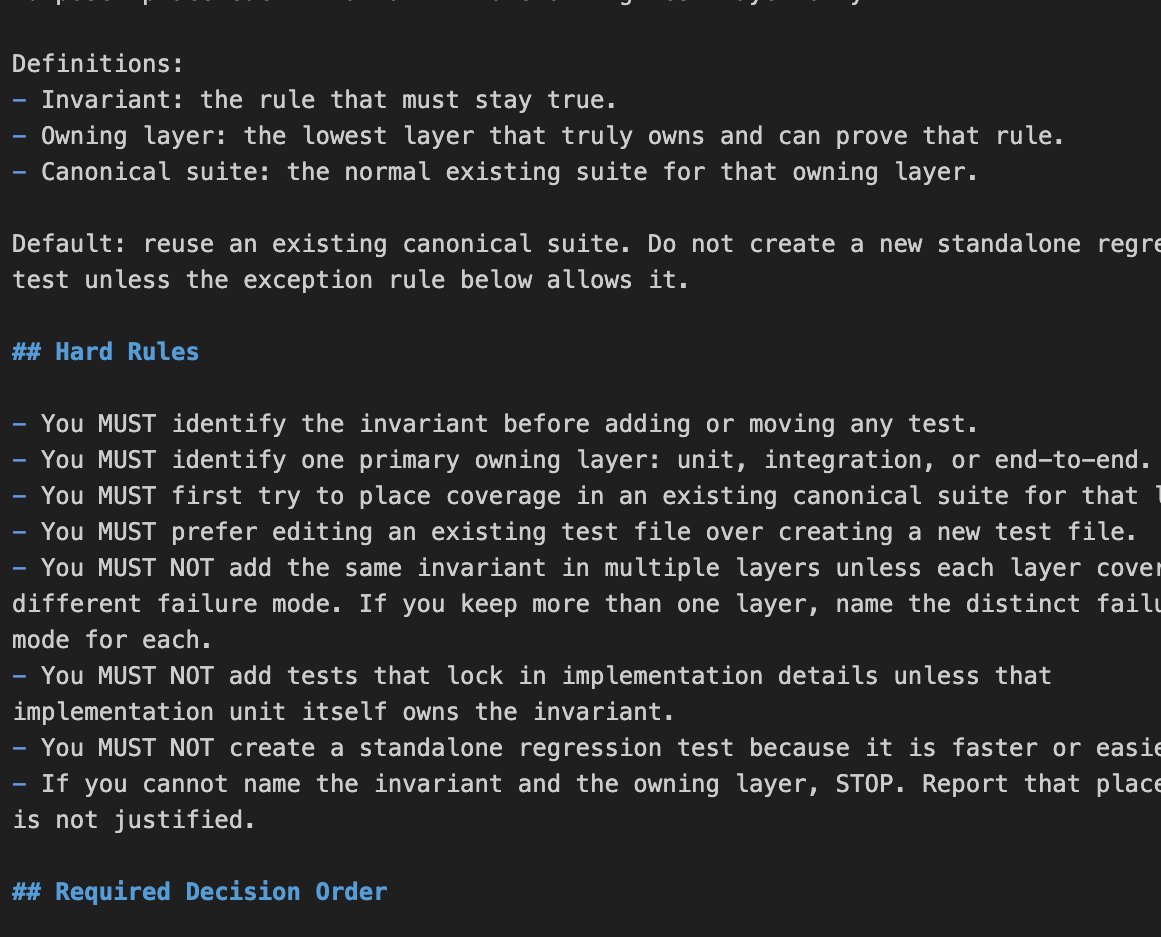

The memory extensions feature is particularly interesting as it represents "the first real extension point for Codex's memory system," opening the door for community-built memory plugins. Meanwhile, @kevinkern identified a common pain point with all coding agents: test proliferation. "One bug fix turns into three more tests. Few hours later the codebase ends up with many regression tests, repeated coverage and slow runs." His "consolidate-test-suites" skill for managing this bloat is the kind of meta-tooling that becomes essential as agent-written code becomes a larger share of any codebase.

The Long AI Agent Mission

@RayFernando1337 called attention to what he considers "one of the most legendary blog posts of our time," a detailed breakdown from @droid_35719 documenting a 16.5-hour autonomous agent mission: 185 agent runs, 778 million tokens processed, and 89% test coverage achieved. The post reportedly covers design rationale and how missions actually operate in practice. This kind of transparent documentation about long-running agent behavior, including failure modes and recovery patterns, is invaluable for the field. As agents tackle increasingly complex multi-hour tasks, understanding the mechanics of sustained autonomous operation becomes critical knowledge.
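The headline numbers are easier to grasp as rates. The sketch below derives averages from the three quoted figures; the minutes-per-run value assumes runs were strictly sequential, which the post summary does not confirm.

```python
HOURS = 16.5            # mission duration (quoted)
AGENT_RUNS = 185        # agent runs (quoted)
TOKENS = 778_000_000    # tokens processed (quoted)


def mission_rates(hours, runs, tokens):
    """Derive average rates from the mission totals."""
    return {
        "tokens_per_run": tokens / runs,
        "tokens_per_hour": tokens / hours,
        "tokens_per_second": tokens / (hours * 3600),
        # Assumes runs happened one after another, with no parallelism.
        "minutes_per_run": hours * 60 / runs,
    }


if __name__ == "__main__":
    for name, value in mission_rates(HOURS, AGENT_RUNS, TOKENS).items():
        print(f"{name}: {value:,.2f}")
```

That works out to roughly 4.2 million tokens per run and about 47 million tokens per hour, or around 13,000 tokens every second for the full 16.5 hours.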

Sources

I Used Claude Code to File My Taxes. Here’s Exactly How I Did It (And Got a $6,500 Refund).

The post explains our design rationale, how missions actually run, and breaks down a real 16.5-hour mission: 185 agent runs, 778M tokens, 89% test coverage. https://t.co/vCXs7B6MG1

the voice of god spoke to me. now you can get divine intellect inside of opencode. https://t.co/NCDXtO7sYp

Most digital twin investments stall for one reason: They’re not grounded in how the world actually looks today. Niantic Spatial’s Reconstruction capability fixes this, creating a living, machine-readable 3D model that stays in sync with reality, so every system, team, and workflow operates from the same ground truth. In a new blog by Trista Pierce, Business Development Lead, explore how Scaniverse’s Reconstruction technology changes the cadence entirely. Read more: https://t.co/8NDSOwz3Kp

@beffjezos As a former founder, I hate when big companies kill startups—but unfortunately, this idea will have a 72 hour shelf life.

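DeepSeek V4 latest news!
I. Release date: official release in late April 2026.
II. Core configuration and upgrades: 1. Trillion-parameter MoE architecture with 1 trillion total parameters and about 37 billion activated at inference; inference speed up 35x, energy consumption down 40%. 2. Lossless 1 million token context window. 3. Native multimodality, supporting text, images, video, and audio. 4. Full training and inference pipeline adapted to Huawei Ascend 950PR, with 85% compute utilization and deployment cost at 1/3 of the NVIDIA-based solution. 5. In-house mHC architecture and Engram memory module, substantially lowering inference cost.
III. Measured performance: Math: AIME 2026 99.4%. General knowledge: MMLU 92.8%. Coding: SWE-Bench 83.7%, HumanEval 90%, with support for 338 languages. Inference cost: only 1/70 of GPT-4.
IV. Availability plans: 1. The web app has already launched Fast mode and Expert mode (a V4 feature preview). 2. The API is compatible with the OpenAI format, and new users receive 5 million free tokens. 3. Model weights are planned to be open-sourced, with support for local deployment.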

Look ma new Codex Updates! 0.119.0 and 0.120.0 are here. And with it, a HUGE number of quality of life updates and bug fixes!
- Hooks now render in a dedicated live area above the composer. They only persist when they have output, so your terminal stays clean. If you're running PreToolUse or PostToolUse hooks, this is a huge readability win.
- Hooks are now available again on Windows.
- CTRL+O copies the last agent output. Small but clutch when you're pulling a code block into another file or chat.
- New statusline option: context usage as a graphical bar instead of a percentage. Easier to glance at mid-session when you're trying to gauge how much runway you have left.
- Zellij support is here with no scrollback bugs. If you've been stuck on tmux just because Codex was broken in Zellij, you're free now (shout out @fcoury).
- Memory extensions just landed. The consolidation agent can now discover plugin folders under memories_extensions/ and read their instructions.md to learn how to interpret new memory sources. Drop a folder in, give it guidance, and the agent picks it up automatically during summarization. No core code changes needed. This is the first real extension point for Codex's memory system, and it opens the door for third-party memory plugins.
- Did you know, you can /rename a thread? But what's really cool about that is, after you rename it, you can resume it with the same name, no more UUIDs: codex resume mynewapp, or directly from the TUI: /resume mynewapp
- Multi agents v2 got an update to tool descriptions: more reliable multi agent environments and inter agent communication.
- You can now enable TUI notifications whether Codex is in focus or not. Modify this in your config: [tui] notification_condition = "always"
- MAJOR overhaul to Codex MCP functionality:
  1. Codex Tool Search now works with custom MCP servers, so tools can be searched and deferred instead of all being exposed up front.
  2. Custom MCP servers can now trigger elicitations, meaning they can stop and ask for user approval or input mid-flow.
  3. MCP tool results now preserve richer metadata, which improves app/UI handoff behavior.
  4. Codex can now read MCP resources directly, letting apps return resource URIs that the client can actually open.
  5. File params for Codex Apps are smoother: local file paths can be uploaded and remapped automatically.
  6. Plugin cache refresh and fallback sync behavior are more reliable, especially for custom and curated plugins.
- Composer and chat behavior smoother overall, resize bugs remain though.
- Realtime v2 got several significant improvements as well.
- You're still reading? What a legend. 🫶
npm i -g @openai/codex to update