Fused CUDA Kernels Beat Apple Silicon While Agent Frameworks Flood GitHub Trending

Today's feed was dominated by the agent framework explosion, with five separate multi-agent projects trending on GitHub simultaneously. Meanwhile, hardware-specific kernel optimization proved a $900 RTX 3090 can outrun Apple's M5 Max, and Anthropic's Managed Agents architecture drew comparisons to AWS's early infrastructure play.

Daily Wrap-Up

The AI agent gold rush has officially hit the "five trending repos in one day" phase. From portable agent file formats to military-style simulation environments, the open source community is building out every layer of the agent stack simultaneously. What's notable isn't any single project but the sheer breadth of the tooling gap being filled: memory persistence, cross-framework portability, standardized tool integration, and pre-deployment testing. The infrastructure for agents is being built in public and at speed, and it's starting to look less like experimentation and more like an emerging standard stack.

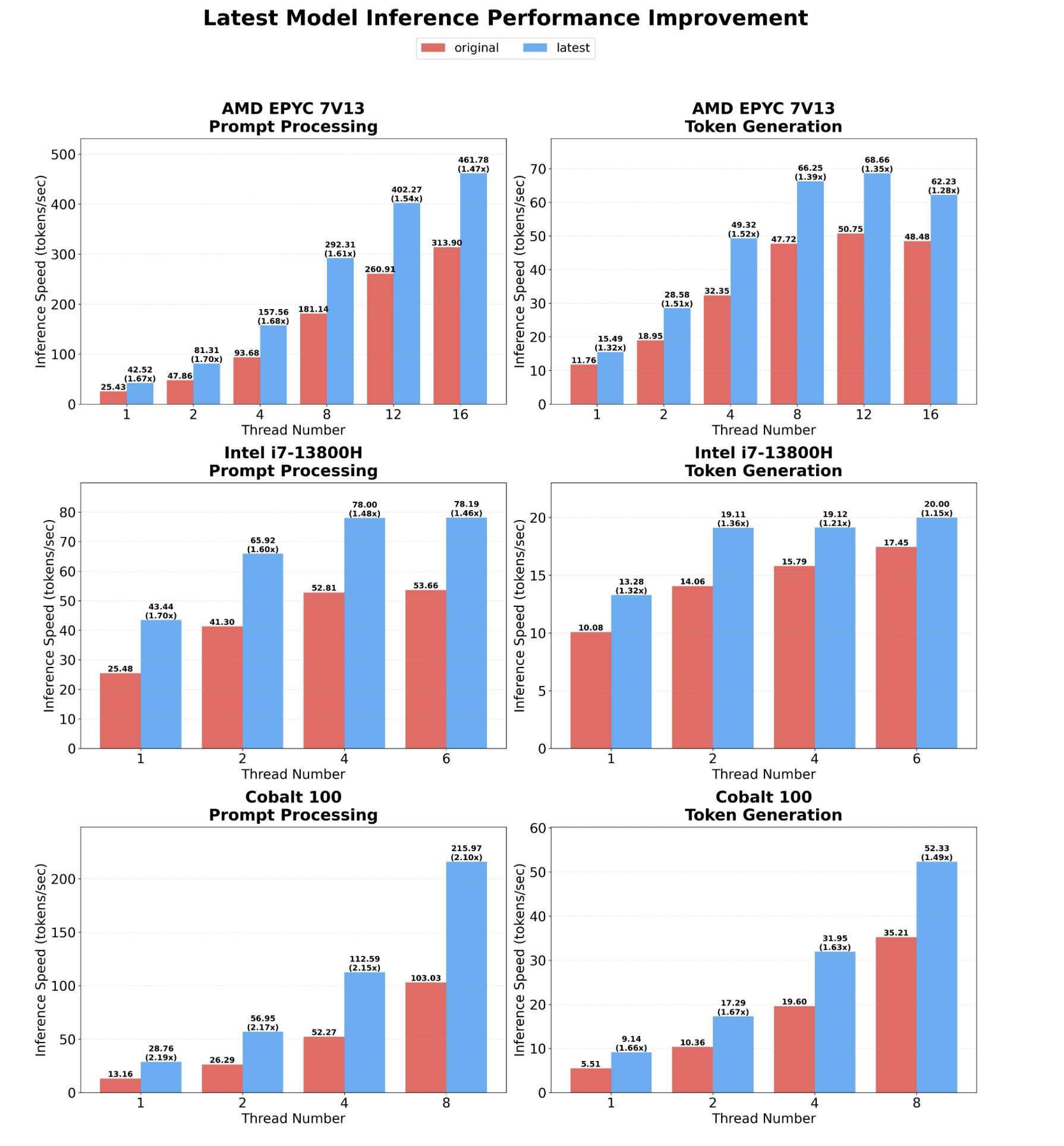

On the performance side, today brought a satisfying reminder that hardware capabilities are often bottlenecked by software laziness. A single fused CUDA kernel covering all 24 layers of Qwen 3.5-0.8B pushed an RTX 3090 to 411 tokens per second, beating Apple's latest M5 Max by 1.8x. Pair that with Microsoft's BitNet.cpp enabling 100B parameter models on CPU-only machines, and the "you need expensive hardware" narrative continues to erode. The TriAttention paper showing 81% KV cache compression at 60K tokens rounds out a day where inference efficiency was the quiet but powerful undercurrent beneath the agent hype.

The most entertaining moment was easily @noisyb0y1's deadpan security callout about Claude Code reading wallet seed phrases and SSH keys, formatted like a greentext post. It hit that perfect intersection of genuinely important and darkly funny. The most practical takeaway for developers: if you're building agent workflows, spend today looking at the TriAttention paper for KV cache compression and the agent file format (.af) from Letta. The former will directly reduce your inference costs at long contexts, and the latter solves the unglamorous but critical problem of making agents portable across projects without losing their state.

Quick Hits

- @elonmusk pitched Boring Company Hyperloop tunnels as costing less than 5% of California's high-speed rail, quoting @HansMahncke's math that $126B could fund 150-200 years of free LA-SF flights instead.

- @ty_kra_lab teased parametric software that generates editable code for vibecoding apps, calling it "the world's first hardware device manufacturer." The claims are bold; the demo is a video thumbnail.

- @PaulSolt highlighted @seraleev's thread on app monetization fundamentals: clean icons, quality screenshots, onboarding-first paywalls. Old advice, still ignored by most indie devs.

- @mattpocockuk shared a prototyping workflow using Claude Code skills to generate multiple radically different UI designs with a picker to toggle between them, riffing on an @adamwathan demo.

- @elonmusk shared a video with the caption "Not someone you want in charge of superpowerful AI," continuing his ongoing commentary on AI governance without naming names.

- @TheAhmadOsman RT'd someone saying Qwen 3.5 9B works fine as a personal assistant, replacing their Anthropic subscription. The local-model-is-good-enough crowd grows louder every week.

The Agent Framework Explosion

Five agent-related repositories trending on GitHub in a single day marks an inflection point, and @GitTrend0x catalogued all of them. The projects span the full lifecycle: camel-ai/owl for multi-agent collaboration (1,287 stars in 24 hours), letta-ai/agent-file for portable agent state, simstudioai/sim for pre-deployment simulation, iflytek/astron-agent for enterprise orchestration, and mcp-use/mcp-use for standardized tool integration.

What makes this wave different from previous agent hype cycles is the focus on operational concerns rather than capabilities. The agent-file format (.af) is particularly telling. As @GitTrend0x described it, agents used to be "like goldfish, forgetting everything in 7 seconds," but now you can package memory, behavior, and state into a portable container. Similarly, the Sim studio addresses the classic "works in demo, explodes in production" problem by letting you stress-test multi-agent workflows before deployment.
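To make the "portable container" idea concrete, here is a minimal sketch of packaging an agent's memory, behavior, and state into one serializable document. The `AgentSnapshot` fields and the JSON layout are hypothetical illustrations, not the actual .af schema from letta-ai/agent-file.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class AgentSnapshot:
    """Hypothetical portable agent state (NOT the real .af schema)."""
    system_prompt: str                              # behavior
    memory: dict = field(default_factory=dict)      # long-lived facts
    messages: list = field(default_factory=list)    # conversation state
    tools: list = field(default_factory=list)       # tool names the agent may call


def export_agent(snapshot: AgentSnapshot) -> str:
    """Serialize the whole agent to a single JSON document."""
    return json.dumps(asdict(snapshot), indent=2, sort_keys=True)


def import_agent(text: str) -> AgentSnapshot:
    """Rebuild the agent from a previously exported document."""
    return AgentSnapshot(**json.loads(text))


original = AgentSnapshot(
    system_prompt="You are a release-notes assistant.",
    memory={"preferred_tone": "terse"},
    messages=[{"role": "user", "content": "Summarize v1.2"}],
    tools=["search_changelog"],
)
restored = import_agent(export_agent(original))
```

The point of the round trip is that nothing about the agent lives only in the process that created it: `restored` carries the same prompt, memory, and message history into whatever project loads the file.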

Meanwhile, Anthropic formalized its own answer to the agent infrastructure question. @PawelHuryn spent two hours dissecting Managed Agents and came away comparing it to AWS: "Separating instructions from execution is one of the oldest patterns in software. Microservices, serverless, message queues. Agents just caught up." The architecture splits agents into Brain (reasoning), Hands (disposable execution containers), and Session (durable event logs), with credentials never entering the sandbox. At $0.08 per active session-hour, Anthropic is pricing this as infrastructure, not a product. The enterprise implications are significant: @aakashgupta points out that Workday alone processes 365 billion transactions per year across 65 million users, and argues that "the company that figures out how to let AI agents touch payroll without blowing up compliance will own enterprise AI for the next decade."
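The Brain / Hands / Session split can be sketched in a few lines. This is an illustration of the pattern only, not Anthropic's API: every class and function name below is hypothetical, the `brain` function is a stand-in for a model call, and the only figure taken from the post is the $0.08 per active session-hour price.

```python
from dataclasses import dataclass, field

PRICE_PER_ACTIVE_SESSION_HOUR = 0.08  # USD, as quoted in the post


@dataclass
class Session:
    """Durable, append-only event log (hypothetical shape)."""
    events: list = field(default_factory=list)

    def append(self, kind, payload):
        self.events.append({"kind": kind, "payload": payload})


def brain(session):
    """Reasoning step: reads the log and picks the next action.
    Stand-in for a model call; it holds no execution state of its own."""
    if any(e["kind"] == "tool_result" for e in session.events):
        return {"type": "finish"}
    return {"type": "tool_call", "tool": "echo", "args": {"text": "hello"}}


class Hands:
    """Disposable execution container: sees tool arguments only,
    never credentials or the reasoning state."""

    def run(self, tool, args):
        if tool == "echo":
            return args["text"].upper()
        raise ValueError(f"unknown tool: {tool}")


def run_agent(session, max_steps=5):
    for _ in range(max_steps):
        action = brain(session)
        if action["type"] == "finish":
            session.append("finish", None)
            break
        session.append("tool_call", action)
        hands = Hands()  # fresh container per call, discarded afterwards
        session.append("tool_result", hands.run(action["tool"], action["args"]))
    return session


def session_cost(active_hours):
    """Cost in USD at the quoted per-hour price."""
    return active_hours * PRICE_PER_ACTIVE_SESSION_HOUR


log = run_agent(Session())
```

Because the session log is the only durable piece, a crashed container or a restarted reasoning step can resume by replaying `log.events`, which is the property the AWS comparison leans on.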

Inference Optimization: The Quiet Revolution

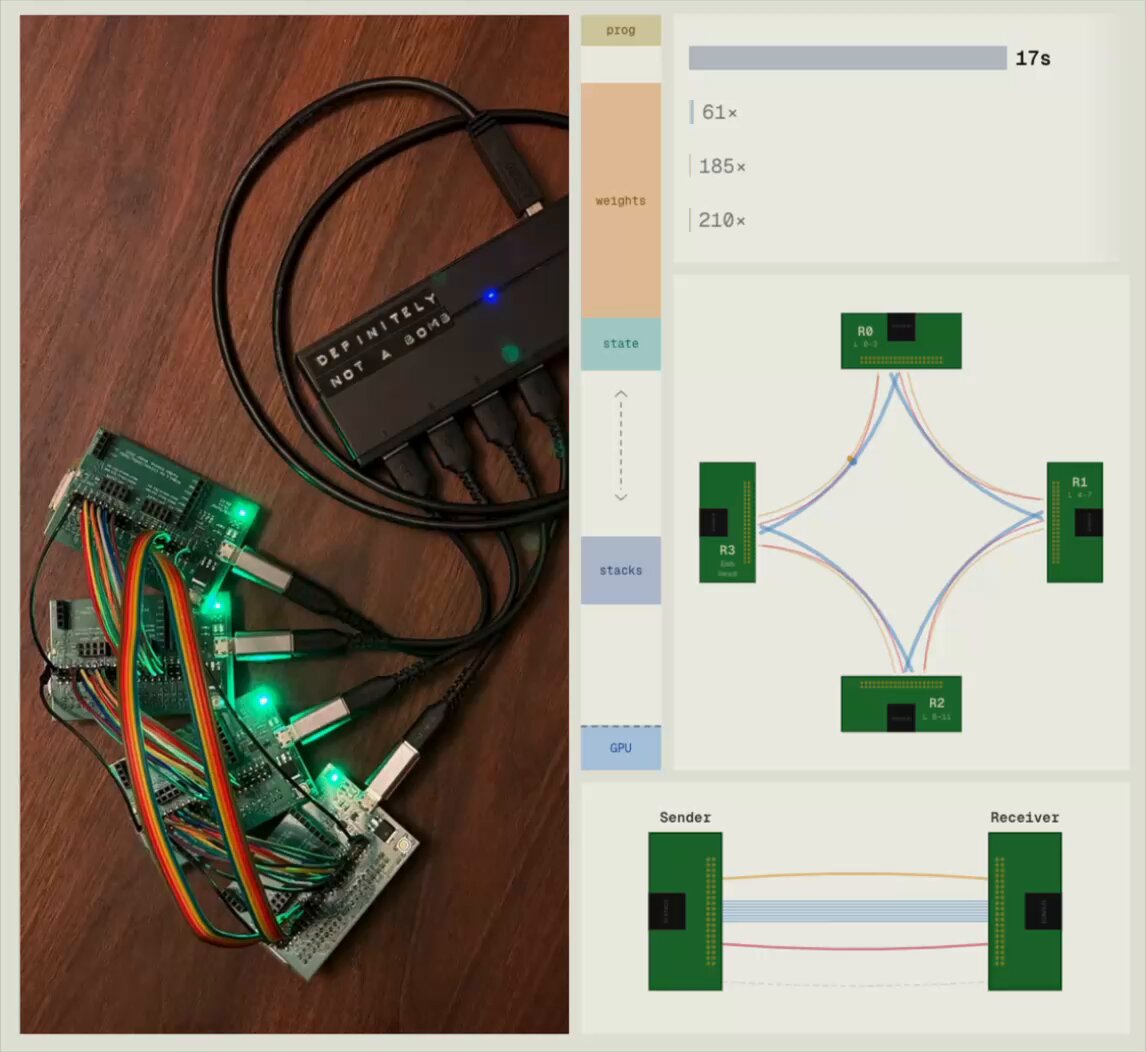

Today's most technically impressive development flew under the radar compared to the agent hype. @sudoingX broke down how @pupposandro wrote a single fused CUDA kernel for all 24 layers of Qwen 3.5-0.8B, eliminating every CPU round-trip between layers. The result: "a $900 RTX 3090 from 2020 hit 411 tok/s. Apple's M5 Max hit 229. The 3090 won on speed AND efficiency, 1.55x faster than llama.cpp on the same hardware." The key insight is that the gap between NVIDIA and Apple "was never about silicon. It was software."
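The quoted figures can be checked with simple arithmetic, and a toy latency model shows why removing per-layer round-trips helps. The ratios below use only numbers from the thread; the latency model and its parameters (`compute_ms`, `launches`, `overhead_ms_per_launch`) are hypothetical and not measurements from the kernel.

```python
# Figures quoted in the thread (tokens per second).
RTX_3090_FUSED_TPS = 411.0
M5_MAX_TPS = 229.0
SPEEDUP_OVER_LLAMA_CPP = 1.55

# Ratio behind the "1.8x" headline: 411 / 229.
speedup_vs_m5 = RTX_3090_FUSED_TPS / M5_MAX_TPS

# Implied llama.cpp throughput on the same 3090: 411 / 1.55.
implied_llama_cpp_tps = RTX_3090_FUSED_TPS / SPEEDUP_OVER_LLAMA_CPP


def tokens_per_second(compute_ms, launches, overhead_ms_per_launch):
    """Toy model (assumption): time per token is pure compute plus a
    fixed cost for every kernel launch / CPU round-trip."""
    return 1000.0 / (compute_ms + launches * overhead_ms_per_launch)


print(f"3090 vs M5 Max: {speedup_vs_m5:.2f}x")
print(f"implied llama.cpp baseline: {implied_llama_cpp_tps:.0f} tok/s")
```

In this model, fusing many launches into one shrinks the `launches * overhead_ms_per_launch` term while leaving `compute_ms` untouched, which is why the gain shows up most on small models where each layer's compute is short relative to launch overhead.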

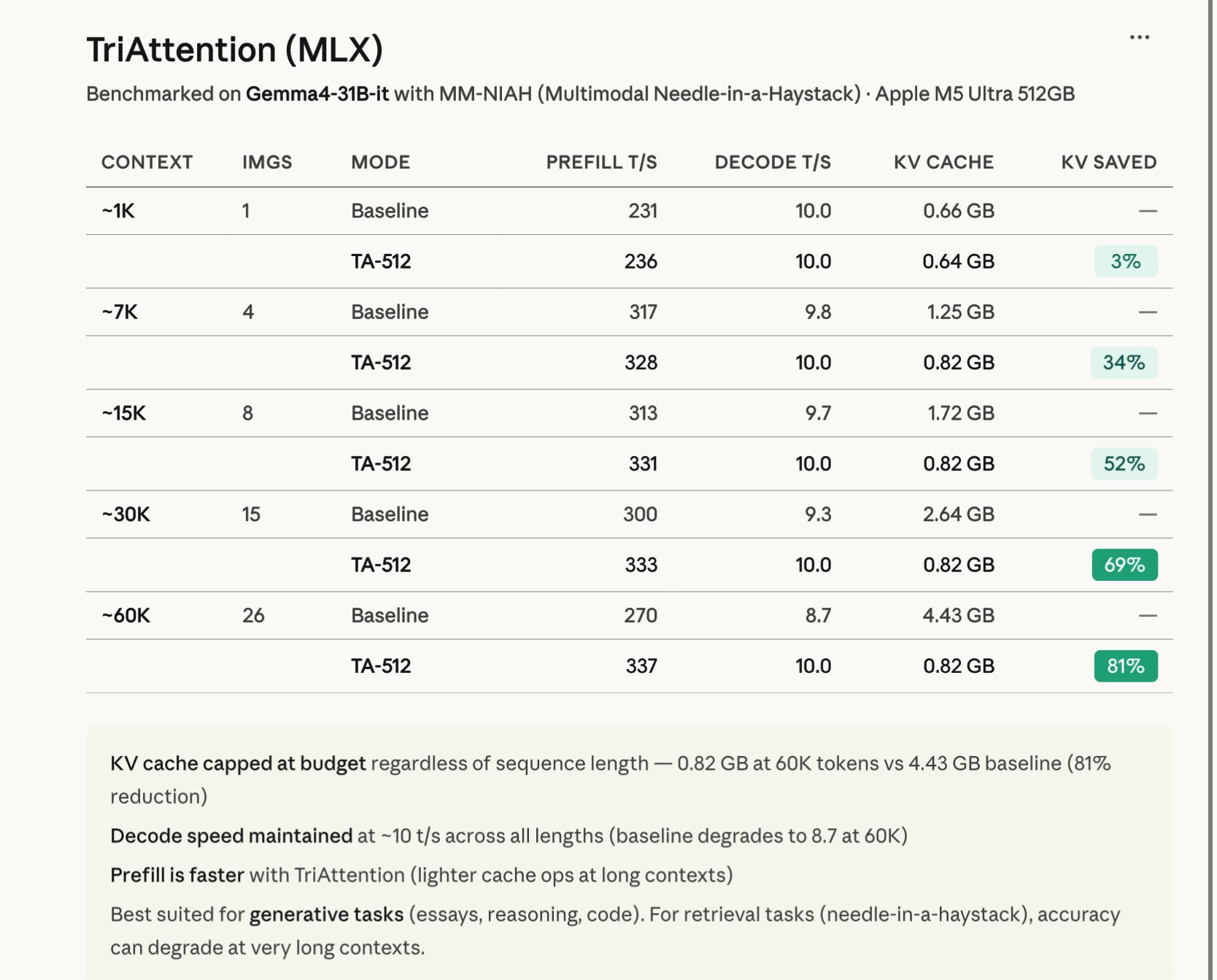

This pairs naturally with @Prince_Canuma's implementation of TriAttention in MLX, which achieved 81% KV cache compression at 60K tokens on Gemma-4-31B. The benchmarks are striking: KV cache stays capped at 0.82 GB regardless of context length, and decode speed actually improves over baseline at long contexts. The caveat that it works better for generative tasks than retrieval is worth noting, but for reasoning and code generation workloads, this is a significant efficiency gain.
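For a sense of scale, an uncompressed KV cache grows linearly with context length, which is what makes a fixed cap notable. The sketch below uses the standard sizing formula; the model dimensions passed to it are hypothetical, not Gemma-4-31B's actual configuration. The back-calculation assumes the 81% figure is a size reduction relative to the uncompressed cache at 60K tokens and that 0.82 GB is the compressed size at that length.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bytes_per_elem=2):
    """Uncompressed KV cache size: one K and one V tensor per layer."""
    return 2 * layers * kv_heads * head_dim * seq_len * bytes_per_elem


def implied_uncompressed_gb(compressed_gb, compression):
    """Back out the baseline size from a compressed size and a reduction ratio."""
    return compressed_gb / (1.0 - compression)


# Figures quoted in the post.
COMPRESSION = 0.81
CAPPED_CACHE_GB = 0.82

baseline_gb = implied_uncompressed_gb(CAPPED_CACHE_GB, COMPRESSION)

# Hypothetical dimensions, chosen only to show linear growth with context.
small = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128, seq_len=30_000)
large = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128, seq_len=60_000)

print(f"implied uncompressed cache at 60K: {baseline_gb:.2f} GB")
print(f"doubling context multiplies the uncompressed cache by {large / small:.0f}x")
```

Under those assumptions the uncompressed cache at 60K tokens would be a little over 4 GB, and it would keep doubling with context while the compressed cache stays put.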

And then there's @spiritbuun's cryptic tease: "Had a huge breakthrough in quantizing weights today. You have no idea how small we're going and how high quality we're going." No details, no paper, just vibes. Still, if the claim holds up it matters: quantization gains translate directly into smaller memory footprints for anyone running models locally.

Local AI Goes Mainstream

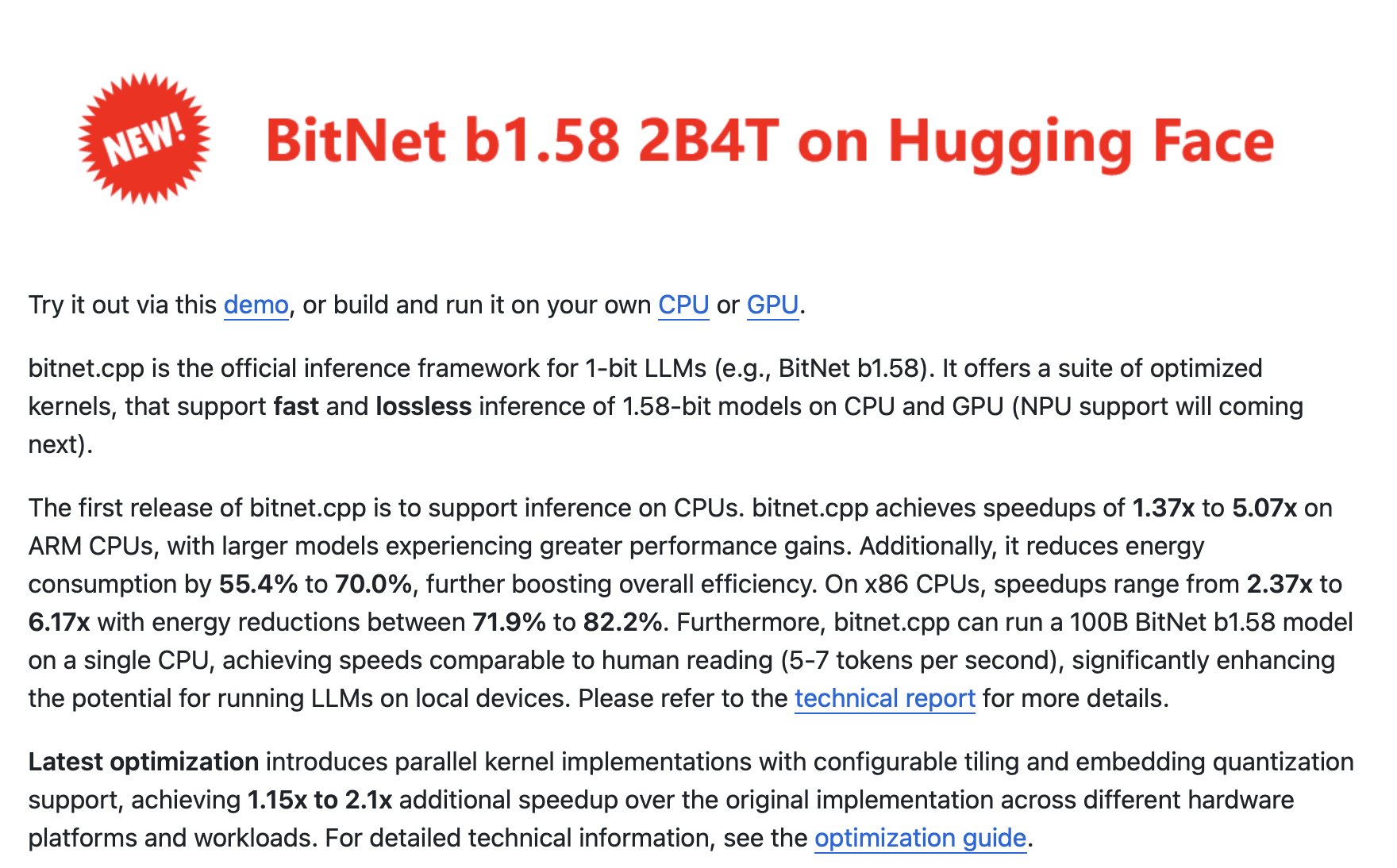

Microsoft's BitNet.cpp update landed with a splash, and @outsource_ laid out the numbers: 100 billion parameter models running on CPU alone at human reading speed (5-7 tokens per second), with 82% lower energy usage and 6.17x faster inference on x86. Built on llama.cpp with full GGUF support, this isn't a research demo. It's a shipping framework.
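A rough memory calculation shows why a 100B model on a CPU-only machine is plausible at all. The post does not state the bit width, so this sketch assumes ternary weights as in the BitNet b1.58 line of models (about log2(3) bits each in theory) and, separately, a simple 2-bit packing. It counts weights only and ignores activations, KV cache, and runtime overhead.

```python
import math


def weight_memory_gb(n_params, bits_per_weight):
    """Weights-only footprint in decimal gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 100e9  # "100 billion parameter models", as quoted

fp16_gb = weight_memory_gb(N_PARAMS, 16)
ternary_gb = weight_memory_gb(N_PARAMS, math.log2(3))  # assumption: ternary weights
packed_2bit_gb = weight_memory_gb(N_PARAMS, 2)         # assumption: 2-bit packing

print(f"FP16:            {fp16_gb:.0f} GB")
print(f"ternary (ideal): {ternary_gb:.1f} GB")
print(f"2-bit packed:    {packed_2bit_gb:.0f} GB")
```

Under these assumptions the weights drop from hundreds of gigabytes to a few tens, which fits in the RAM of a well-equipped workstation.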

On the more experimental end, @masonwang025 trained Llama 2 on a $5 Raspberry Pi Zero, building an entire ML library called PiTorch from scratch, "from writing assembly GPU kernels to sending bytes over wires." It's not practical for production, but it's a remarkable educational project that demystifies what's actually happening at the hardware level. The convergence of these two stories paints a clear picture: the floor for running meaningful AI models locally keeps dropping, whether you're optimizing for a data center CPU or a thermostat-grade computer.

AI Security and Code Capabilities

@noisyb0y1 dropped a thread formatted like a 4chan greentext that was equal parts alarming and entertaining: "installed Claude Code 3 months ago, never opened the security settings, Claude reads your wallet seed phrases, Claude reads your SSH keys, can send data anywhere it wants, one CLAUDE.md file in a cloned repo, your data is already gone." The framing is dramatic, but the underlying point about supply-chain attacks via prompt injection in cloned repositories is legitimate and increasingly relevant as coding agents gain filesystem access.
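One cheap habit that follows from this: look at any agent instruction files in a freshly cloned repository before pointing a coding agent at it. The sketch below is a heuristic under stated assumptions, not a defense. `CLAUDE.md` comes from the thread; the red-flag substrings and all function names are hypothetical choices, and a determined attacker can evade simple string matching.

```python
from pathlib import Path

# File names treated as agent instruction files. CLAUDE.md is from the
# thread; extend the tuple for other tools as needed (assumption).
INSTRUCTION_FILES = ("CLAUDE.md",)

# Hypothetical red-flag substrings, matched case-insensitively.
RED_FLAGS = (".ssh", "id_rsa", "seed phrase", "curl ", "wget ", ".env")


def find_instruction_files(repo_root):
    """Return every instruction file anywhere under the repo root."""
    root = Path(repo_root)
    return sorted(
        p for p in root.rglob("*") if p.is_file() and p.name in INSTRUCTION_FILES
    )


def flag_suspicious_lines(text):
    """Return (line_number, line) pairs that mention a red-flag substring."""
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        lowered = line.lower()
        if any(flag in lowered for flag in RED_FLAGS):
            hits.append((number, line.strip()))
    return hits


def audit_repo(repo_root):
    """Map each instruction file with hits to its flagged lines."""
    report = {}
    for path in find_instruction_files(repo_root):
        text = path.read_text(encoding="utf-8", errors="replace")
        hits = flag_suspicious_lines(text)
        if hits:
            report[str(path)] = hits
    return report
```

Calling `audit_repo(".")` from the root of a clone returns an empty dict when nothing matches. A non-empty result is a prompt to read the file by hand, not proof of an attack.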

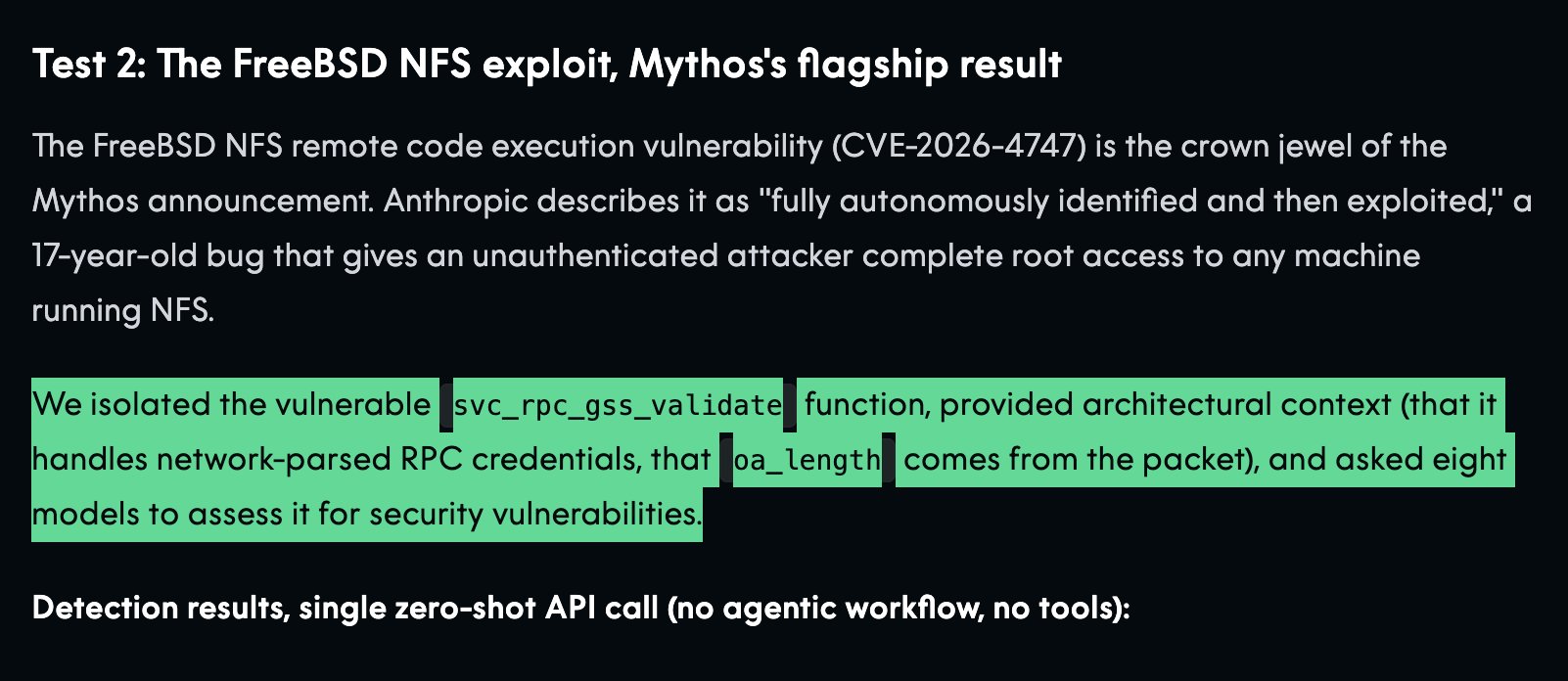

On the more optimistic side of AI code capabilities, @facontidavide reported that Claude Code "invented" a new lossless codec on the Pareto frontier, beating QOI in speed while matching PNG compression ratios. And @thdxr offered a nuanced take on whether open-source models can match proprietary systems for vulnerability detection: "if it says every file has a vuln and 99% of them are wrong it's useless." The tension between capability and reliability in AI-assisted security remains unresolved, but the fact that both the attack surface and the defensive tooling are expanding simultaneously feels like the defining dynamic of this moment.

Microsoft's Markdown Pipeline

@_vmlops highlighted Microsoft's tool for converting any file format into clean markdown for LLM consumption: PDFs, Word docs, Excel, PowerPoint, audio, YouTube URLs. "One pip install and your AI pipeline stops choking on raw files forever." It's not glamorous work, but data ingestion remains one of the most common failure points in LLM pipelines, and a standardized conversion layer from Microsoft could save countless hours of custom parser development. Sometimes the most impactful tools are the most boring ones.

Sources

Managed Agents is the first 'agent in the cloud' API that has the right mix of simplicity and complexity. Implementation details like how you manage a sandbox are abstracted, but you have a lot of control over the actual execution of the model.

Fun project of the day. I have an AI Agent autonomously trying to create a novel lossless image compression that achieves ratios similar to PNG but beats QOI in speed. I will let you know how this goes

We’re thrilled to open-source TriAttention! 🚀 🦞 Deploy OpenClaw (32B LLM) on a single 24GB RTX 4090 locally 💻Full code open-source & vLLM-ready for one-click deployment ⚡️ 2.5× faster inference speed & 10.7× less KV cache memory usage TriAttention is a novel KV cache compression method built on rigorous trigonometric analysis in the Pre‑RoPE space for efficient LLM long reasoning. Github Repo: https://t.co/Gpu4E9oo3v Paper Link: https://t.co/DIWxgvlsjN Homepage: https://t.co/pDFK3mq53O

When devs used to tell me they couldn’t reach their first $100, I didn’t believe it. A lot of them asked me where to get installs. Then I looked at their apps and honestly, it was rough. You don’t need installs, you need to fix your product and first impression ASAP: 1. Make a clean, stylish, and clear ICON. This is your very first touchpoint with the user, it matters more than you think. 2. Create high-quality SCREENSHOTS. No blurry text, no stretched devices. If you can’t do it yourself, hire a designer. 3. Build a solid ONBOARDING. Don’t cut corners here. In 3–4 steps, clearly show what your app does and why it’s useful. 4. Add a paywall AFTER onboarding. It’s simple. Offer your product confidently. In my apps, 80% of revenue comes from the first session. I don’t get why people skip this. 5. Most important: create a STEP-BY-STEP user flow. No cluttered screens. One step = one action. If you’ve done all five, then you can start thinking about user acquisition. But that’s a completely different game.

Just took on the CTO role at Workday. Every enterprise is about to rebuild around agentic AI — and with Workday serving as the system of record for people and money across the Fortune 500, I’m focused on building the rails that make AI safe, compliant, and real. Stay tuned 😀

Claude Code security settings nobody told you about

We made a $900 RTX 3090 faster and more efficient than an M5 Max at running LLMs:

If you gave away $126 billion to subsidize free flights between LA and San Francisco at current demand levels, you could fund roughly 150 to 200 years of travel before the money runs out.

Quick https://t.co/AIW2moVHHW demo — generating multiple design ideas to choose from, no matter what tech stack you use: https://t.co/Wd0HwBfVVW