Claude Mythos Triggers Industry-Wide "AI Psychosis" as eGPU Support Unlocks Local Inference on Apple Silicon

Anthropic's Claude Mythos model dominated the conversation today, with multiple prominent voices expressing genuine unease at its capabilities, including claims it can one-shot hardware designs like PCIe 6.0 controllers. Meanwhile, Apple Silicon finally got eGPU support for NVIDIA and AMD cards, and a sophisticated multi-agent reasoning orchestration tool emerged for Claude Code.

Daily Wrap-Up

Today's feed was consumed by a single gravitational force: Claude Mythos. Anthropic's latest model, reportedly so capable the company is hesitant to release it publicly, sent shockwaves through the developer community. The reactions ranged from stunned reverence to genuine existential dread, with @MatthewBerman writing a late-night confessional about being unable to enjoy his family vacation and @theo declaring it "the start of the end." Whether or not the capabilities live up to the hype, the psychological impact on the builder community is real and worth paying attention to. When people who make a living being optimistic about AI start using words like "frightening" and "uneasy," the vibe shift is significant.

Beyond the Mythos discourse, a few genuinely practical developments emerged. Apple Silicon users got a long-awaited unlock: eGPU support for both AMD and NVIDIA cards via tinygrad's newly approved driver. This is a quiet but meaningful shift for local inference, letting Mac users offload model execution to dedicated GPUs for the first time. And on the tooling front, @doodlestein shipped an ambitious multi-agent orchestration skill for Claude Code that spawns swarms of agents, each constrained to a different reasoning mode, then synthesizes their findings through a formal triangulation protocol. It's the kind of power-user tooling that hints at where agentic workflows are heading.

The most practical takeaway for developers: if you're running a Mac with Thunderbolt or USB4, go install tinygrad's eGPU driver right now. Being able to run local models on a dedicated GPU connected to your Mac changes the economics and privacy calculus of local inference entirely. And if you're building agentic workflows, study @doodlestein's modes-of-reasoning approach, because multi-perspective agent orchestration is clearly becoming a serious design pattern.

Quick Hits

- @andersonbcdefg RT'd a note about S3 files effectively eliminating the need to spin up sandbox VMs for certain workflows. Details were sparse but the implication for cloud development environments is worth watching.

- @seraleev captured the internet's whiplash on the Apple foldable iPhone, noting developers went from "that leak is fake and terrible" to "this changes everything" in approximately 48 hours, after @markgurman confirmed a September debut alongside iPhone 18 Pro.

- @0xSero recommended following @RayFernando1337 for hands-on AI education, quoting a tongue-in-cheek FAQ about "Project Glasswing," a cybersecurity initiative apparently working with 12 major companies.

- @steipete RT'd @thdxr on OpenAI's new safety process for GPT 5.3 Codex, where user IDs are passed through to flag potential cyber abuse. A small but notable data point on how frontier labs are approaching code generation guardrails.

- @trq212 shared learnings from investigating Claude Code token usage with MAX plan subscribers, noting that "it's very easy to spend a lot of tokens on open ended verification that doesn't make your output better." A useful reminder that more compute doesn't automatically mean better results.

Claude Mythos and the "AI Psychosis" Moment

The dominant story today wasn't a product launch or a benchmark. It was a mood. Claude Mythos, Anthropic's latest and reportedly most powerful model, set off a chain reaction of existential processing across the timeline. The details remain somewhat murky, as Anthropic apparently hasn't fully released the model publicly, but the reactions from people who have seen it or its outputs were visceral.

@MatthewBerman captured the sentiment in a remarkably candid post: "i'm on vacation with my family. i read about mythos and couldn't relax the rest of the day... i kept looking around at people enjoying their vacations with their families and i just felt weird. like i had been told aliens are real, they're coming, and soon and no one else knows." He went on to frame this as the arrival of recursive self-improvement, arguing that "the first company to reach [ASI] wins. period. full stop. nothing else matters."

@theo was more concise but no less shaken: "Claude Mythos is the start of the end. I think this is my psychosis moment." And on the capabilities side, @bubbleboi made a specific and striking claim: "Claude Mythos just one shotted a perfect PCIe 6.0 controller... there is no moat anymore. If there is an open standard Mythos will be able to read the design docs and one shot it overnight." He advised shorting silicon IP companies, which, hyperbolic or not, points to a real question about what happens to hardware design firms when an AI can synthesize complex controllers from spec documents.

What's interesting here isn't whether Mythos truly represents ASI or recursive self-improvement. It's that the psychological threshold has shifted. A year ago, impressive model demos generated excitement. Today, they generate anxiety. The community is processing something new: not just "AI is getting better" but "AI might be getting better faster than we can adapt." Whether that fear is proportionate to the actual capability remains an open question, but the discourse itself is a leading indicator of how the industry will navigate the next phase of scaling.

Local AI: eGPUs on Apple Silicon and Small Model Optimization

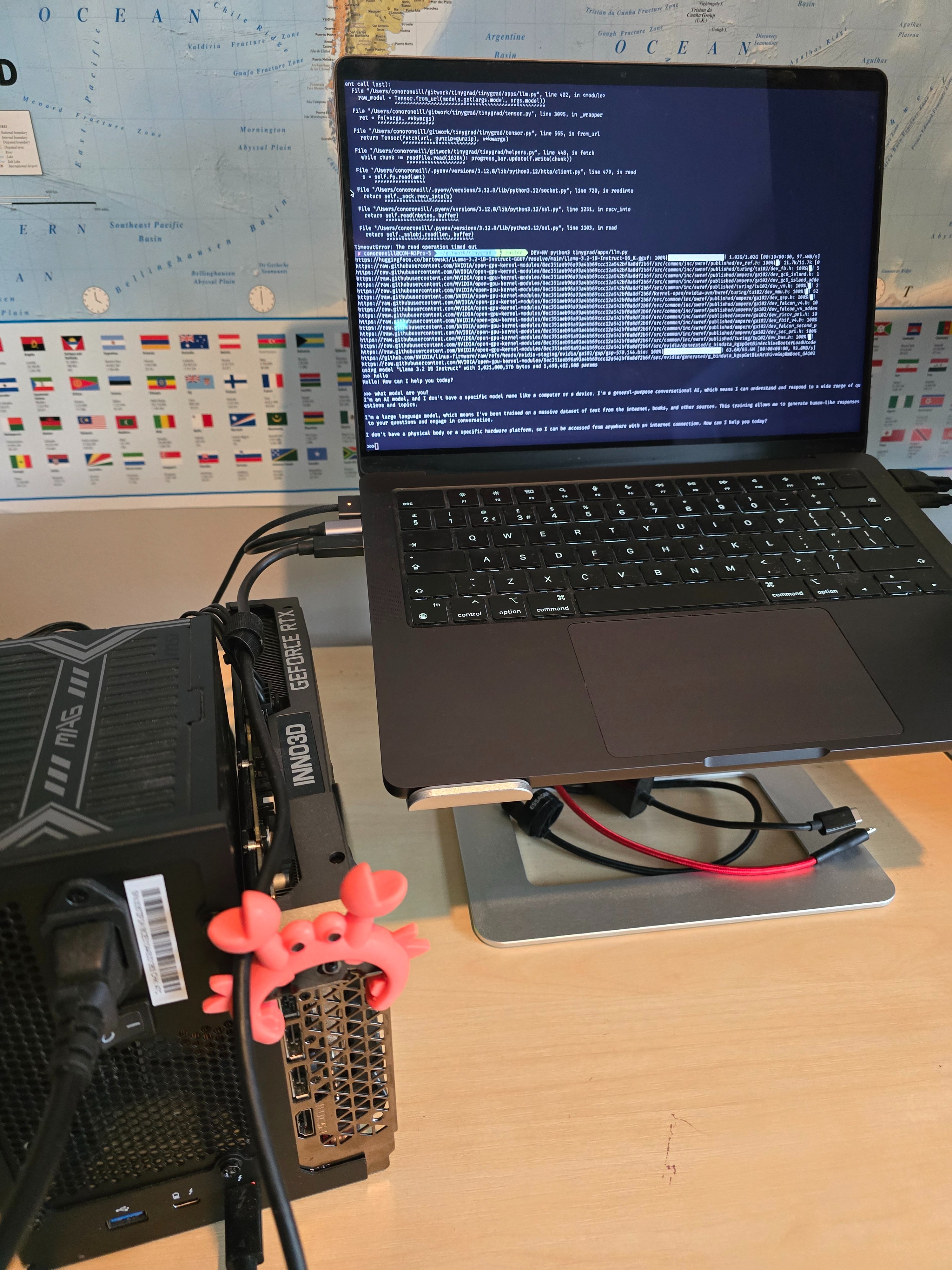

A genuinely exciting development for the local inference crowd landed today. @conoro highlighted that eGPUs are finally usable with Apple Silicon, thanks to tinygrad getting their driver approved by Apple for both AMD and NVIDIA cards: "I just got Llama 3.2 running on my RTX 3060 connected to my M3!" The original announcement from @__tinygrad__ noted the driver works over Thunderbolt and USB4, and in a delightful bit of copy, claimed "it's so easy to install now a Qwen could do it, then it can run that Qwen."

This matters because Apple Silicon Macs have been a somewhat frustrating platform for local AI. The unified memory architecture is great for fitting large models, but the GPU compute has been limited to Apple's own silicon. Being able to plug in an NVIDIA card and offload inference opens up a whole new performance tier for Mac-based developers who want to run models locally.

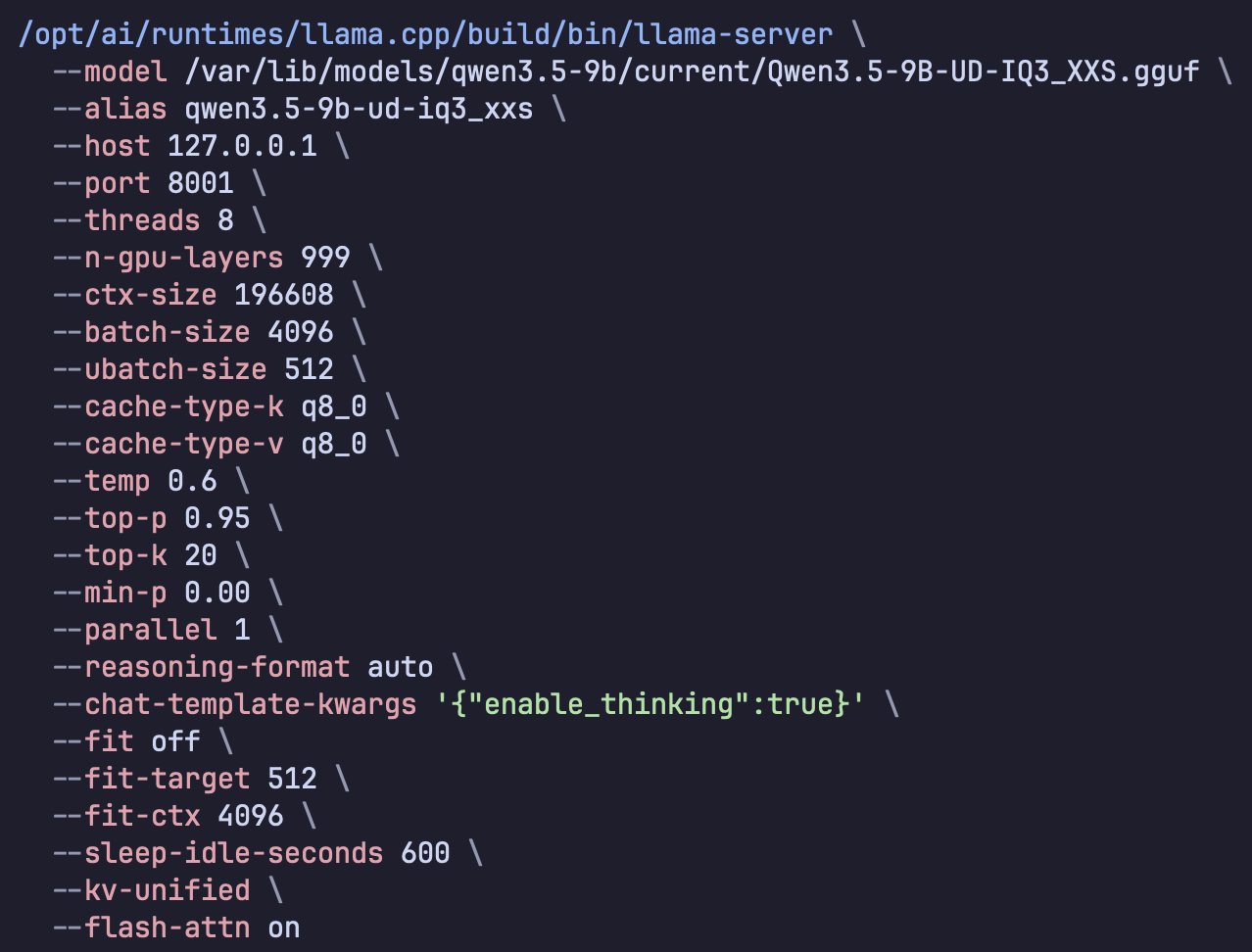

On the optimization side, @TheAhmadOsman shared his workflow for squeezing maximum performance out of small models on constrained hardware, using Codex CLI to profile Qwen 3.5 9B Dense on an 8GB VRAM card, tuning context length, batch size, tokens per second, and peak memory. His follow-up noted that you can feed the resulting system architecture to any agent and have it replicate the optimized VM configuration. Meanwhile, @neural_avb pointed to SKILL-0 research on in-context reinforcement learning for distilling capabilities into 3B models, asking pointedly: "people are auto-discovering skills in generic harnesses and distilling directly into 3B models, and you think local SLMs will forever remain a bubble?"

The through-line here is clear. Local AI is maturing on multiple fronts simultaneously: better hardware access, better optimization tooling, and better techniques for making small models punch above their weight. The gap between cloud and local inference continues to narrow.

Multi-Agent Orchestration Gets Serious

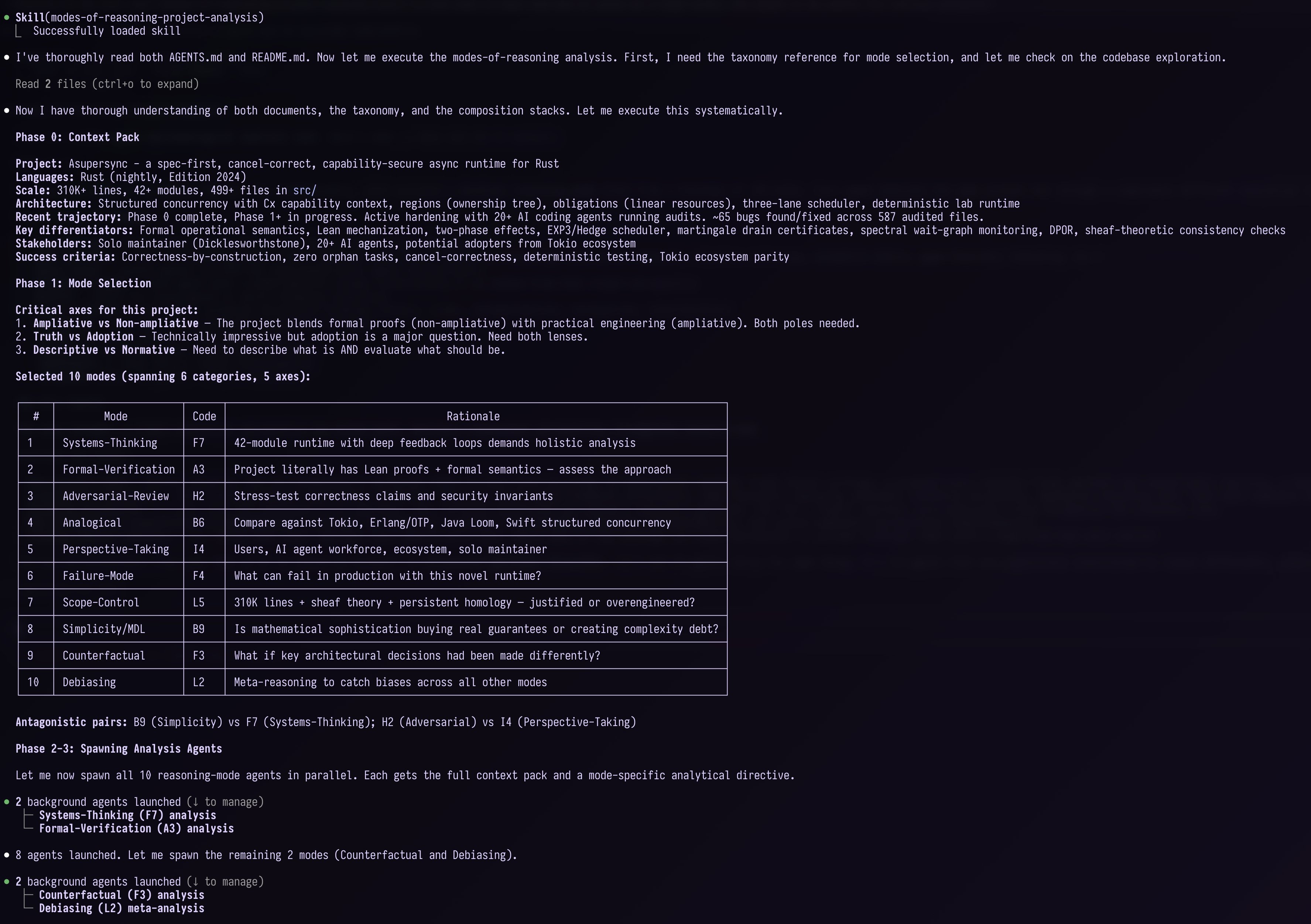

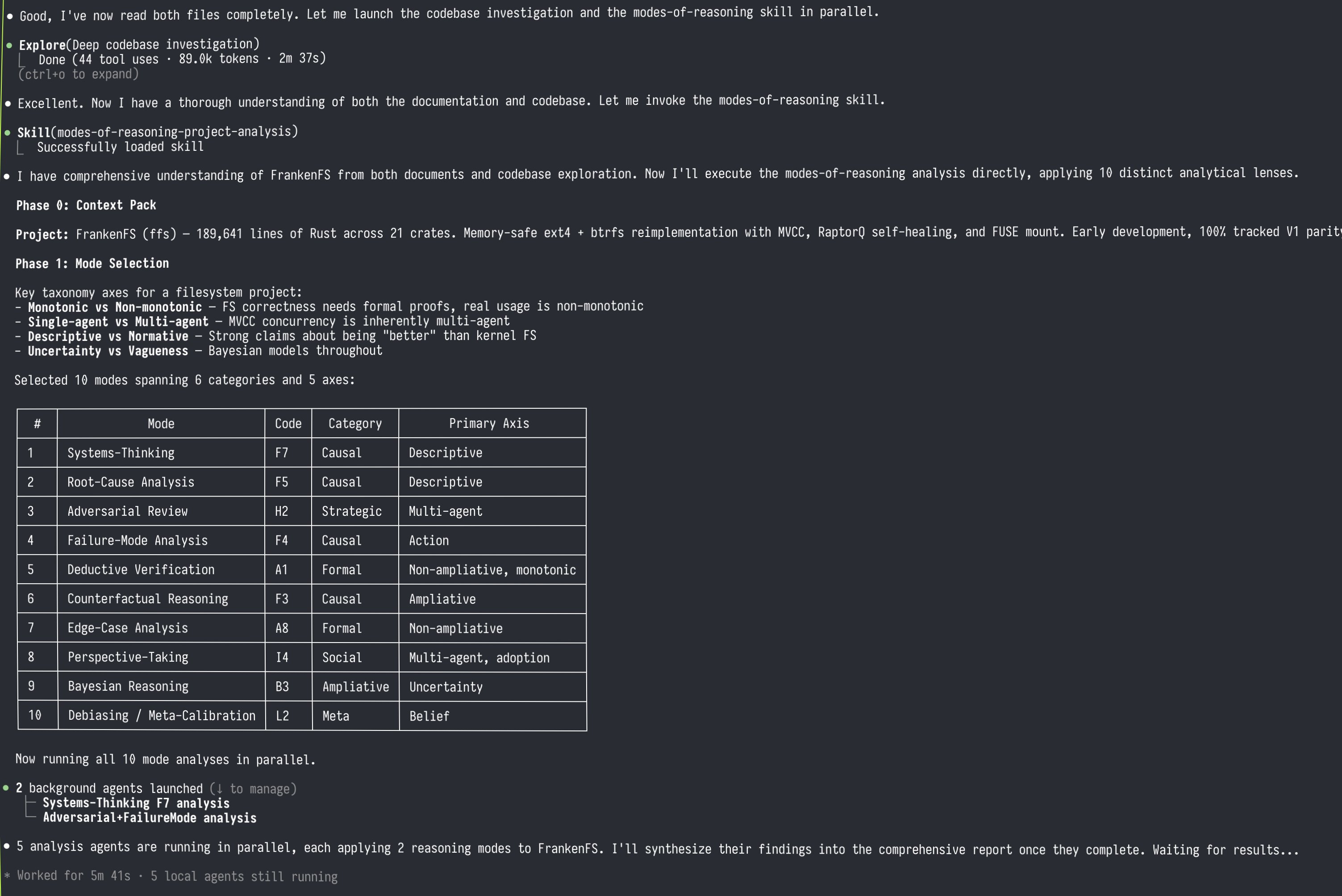

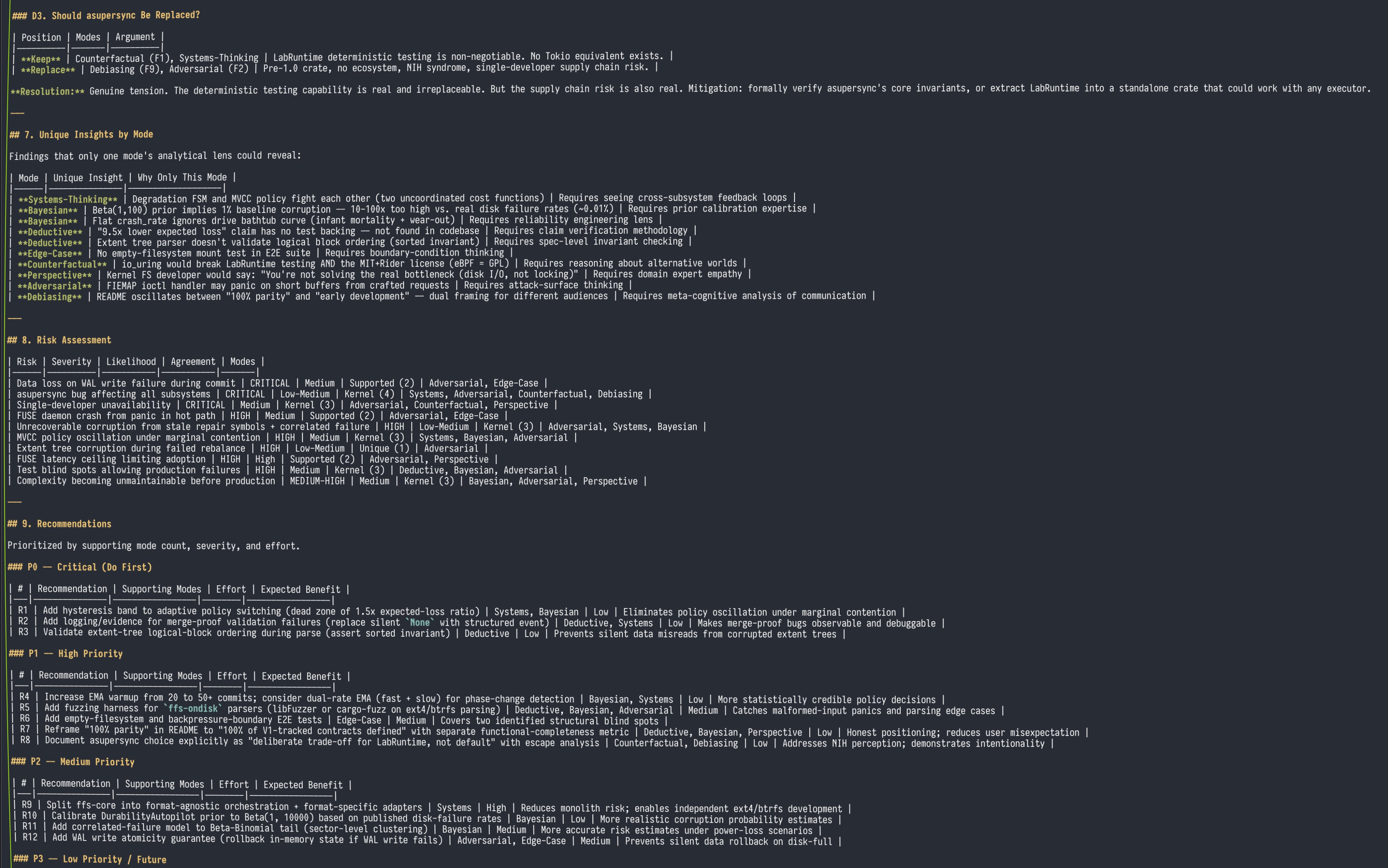

@doodlestein dropped what might be the most architecturally interesting tool announcement of the day: a Claude Code skill that implements multi-agent epistemological analysis. The concept is to spawn a swarm of agents (default 10), each constrained to reason through a different analytical lens drawn from a taxonomy of roughly 80 reasoning modes, then synthesize their findings through a formal protocol.

The synthesis layer is where it gets compelling. Findings are classified by convergence: "Kernel" findings where three or more modes agree get high confidence, "Supported" findings with two agreeing modes get moderate confidence, single-mode "Hypothesis" findings are flagged for investigation, and "Disputed" findings where modes disagree are explicitly surfaced for resolution. As @doodlestein explained: "The core insight: a single analytical perspective has blind spots. Multiple independent perspectives triangulate toward truth."

This is a significant step beyond the typical "throw multiple agents at a problem" approach. By formally constraining each agent's reasoning mode and then applying structured triangulation, it creates something closer to a genuine peer review process than a simple ensemble. The practical applications he outlines, including pre-release audits, architecture decisions, and breaking groupthink, are exactly the kind of high-stakes contexts where you want diverse analytical perspectives. It also represents a maturing pattern in the Claude Code ecosystem, where skills and agent orchestration are becoming compositional building blocks rather than one-off automations.

MLX Ecosystem Updates

@Prince_Canuma RT'd news that mlx-lm has shipped a significant update with better batching support in the server and Gemma 4 model support. For developers in the Apple ML ecosystem, this is a straightforward quality-of-life improvement. MLX has been steadily becoming the go-to framework for running models natively on Apple Silicon, and better batching support in the server mode means more practical throughput for local API-style deployments. Combined with the eGPU news above, the Mac is quietly becoming a more capable local AI development platform than it was even a week ago.

Sources

Used Codex Cli to profiled Qwen 3.5 9B Dense (Unsloth's UD-IQ3_XXS via llama.cpp) for Hermes Agent Tuning: > context length > batch size > tokens/sec > peak memory To squeeze every last drop out of an 8GB VRAM card https://t.co/KO3qJd93jr

SKILL-0: In Context RL for Learning Skills, explained clearly

If you have a Thunderbolt or USB4 eGPU and a Mac, today is the day you've been waiting for! Apple finally approved our driver for both AMD and NVIDIA. It's so easy to install now a Qwen could do it, then it can run that Qwen... https://t.co/daUsyBHh1W

NEW: Apple’s foldable iPhone is - as of now - on track for a September debut with the iPhone 18 Pro. While supply could be limited initially, it’s also on track to go on sale at the same time - or soon after - the Pro models. Nikkei report is off base. https://t.co/MUUhYoHCiM

I've got some wild stuff brewing for ntm. What if you could spin up a huge swarm of agents to review your project (any kind of project, not just software), and the difference between the various agents was that they each employed a different mode of reasoning? What does that even mean? Isn't reasoning, well, reasoning? Like with logic and induction and stuff? Well, it turns out that you can really break this stuff down into exquisite detail. For instance, probabilistic reasoning extends classical logic by attaching probabilities (i.e., degrees of belief) to propositions instead of treating them as strictly True or False. Fuzzy logic is different: it treats truth itself as a continuum (e.g., ‘somewhat true’), even when there’s no uncertainty. But that's just scratching the surface. GPT Pro and Opus were jointly able to come up with EIGHTY distinct modes of reasoning, which you can read about here: https://t.co/cAzeXHU3N9 The screenshot below shows me getting CC to transform this document into a new feature using this prompt: --- ❯ I have a great idea for this tool for a special mode where we launch a ton of agents on the same project and have them either work on a problem, like "What's wrong with this project and how could it be made a lot better" or brainstorm with something like "What are the best new ideas to add to this project when you take into account the pros and cons of each one?" and so on. The twist is that each agent would be separately prompted to engage in a CERTAIN, NAMED FORM OF REASONING that would be explained to them in the prompt preamble (and thus automatically added to the user's primary prompt). Each named form of reasoning and how it works is laid out in modes_of_reasoning.md, which you should read and ruminate on incredibly deeply. Then come up with a spectacularly brilliant, creative, clever, comprehensive, accretive plan for architecting, designing, and implementing this system in a harmonious, cohesive, coherent way with the existing ntm system, with world-class ui/ux and polish. Make sure your plan is super detailed, granular, and comprehensive. Then please take ALL of that and elaborate on it and use it to create a comprehensive and granular set of beads for all this with tasks, subtasks, and dependency structure overlaid, with detailed comments so that the whole thing is totally self-contained and self-documenting (including relevant background, reasoning/justification, considerations, etc.-- anything we'd want our "future self" to know about the goals and intentions and thought process and how it serves the overarching goals of the project). The beads should be so detailed that we never need to consult back to the original markdown plan document. Remember to ONLY use the `br` tool to create and modify the beads and add the dependencies. --- You could tell Claude was titillated! His first sentence in his response was: "This is a phenomenal document! 80 modes of reasoning organized into 12 categories, each with precise definitions, outputs, differentiators, use cases, and failure modes. Let me deeply synthesize this and design a comprehensive "Reasoning Ensemble" feature for NTM." Big thanks to @darin_gordon for getting me thinking along these lines. PS: I got so sick and tired of seeing the clankers mess up the right-hand borders of ascii art diagrams that I made a rust cli tool to fix it, lol: https://t.co/4QWEa7kWbU

Project Glasswing FAQ: Q: Why only 12 companies? A: They're the ones who can afford us. Q: What about open-source maintainers? A: We found bugs in their code. You're welcome. Q: Will you release the tool publicly? A: We said "cybersecurity is the security of our society." We didn't say which society.

I want to do a few more of these calls. If your MAX 20x plan ran out of tokens unexpectedly early and you're willing to screenshare and run some prompts through Claude Code please comment. Trying to figure out how we can improve /usage to give more info.