Claude Code Blocks Self-Analysis, Netflix Drops AI Object Removal, and the Local AI Hardware Debate Heats Up

Today's feed centered on local AI hardware realities and the growing tension between cloud and local inference, alongside two notable releases: Netflix's VOID video tool and the Falcon Perception open-vocabulary segmentation model. Meanwhile, Claude Code made waves for refusing to analyze its own source code, and developers debated whether premium AI subscriptions are sustainable.

Daily Wrap-Up

The conversation today split neatly into two camps: people excited about what AI can do right now, and people worried about what it will cost them tomorrow. On the capability side, Netflix dropped VOID, a tool that removes objects from video and corrects the physics afterward, and Falcon Perception showed off an impressively simple approach to visual segmentation. On the anxiety side, developers are staring at their hardware specs, their subscription bills, and their dependency on cloud providers, wondering which of those three will bite them first.

The local AI discourse has matured significantly. We've moved past the "just run it on your MacBook" phase into a genuine hardware taxonomy conversation, where memory bandwidth matters more than marketing stickers and the answer to "what should I buy?" is increasingly "it depends on five different things." That maturity is healthy, even if it makes the buying decision harder. The most entertaining moment of the day goes to Claude Code throwing an error when someone tried to use it to analyze its own source code, a delightful bit of recursive self-protection that feels like the AI equivalent of a magician refusing to explain the trick.

The most practical takeaway for developers: if you're investing in local AI hardware, stop comparing raw specs and start asking what models you need to run, what bandwidth tier those models require, and what software stack you're committed to. And if you're relying on cloud AI subscriptions, start building fallback workflows now, because as @thekitze pointed out, the subsidized pricing era won't last forever.

Quick Hits

- @melvynx shared that Claude Code now throws an error if you try to analyze its own source code, a fun bit of self-referential protection that got plenty of attention.

- @elonmusk highlighted major X API upgrades including pay-per-use going GA worldwide, an XMCP Server for agents, and official Python and TypeScript XDKs.

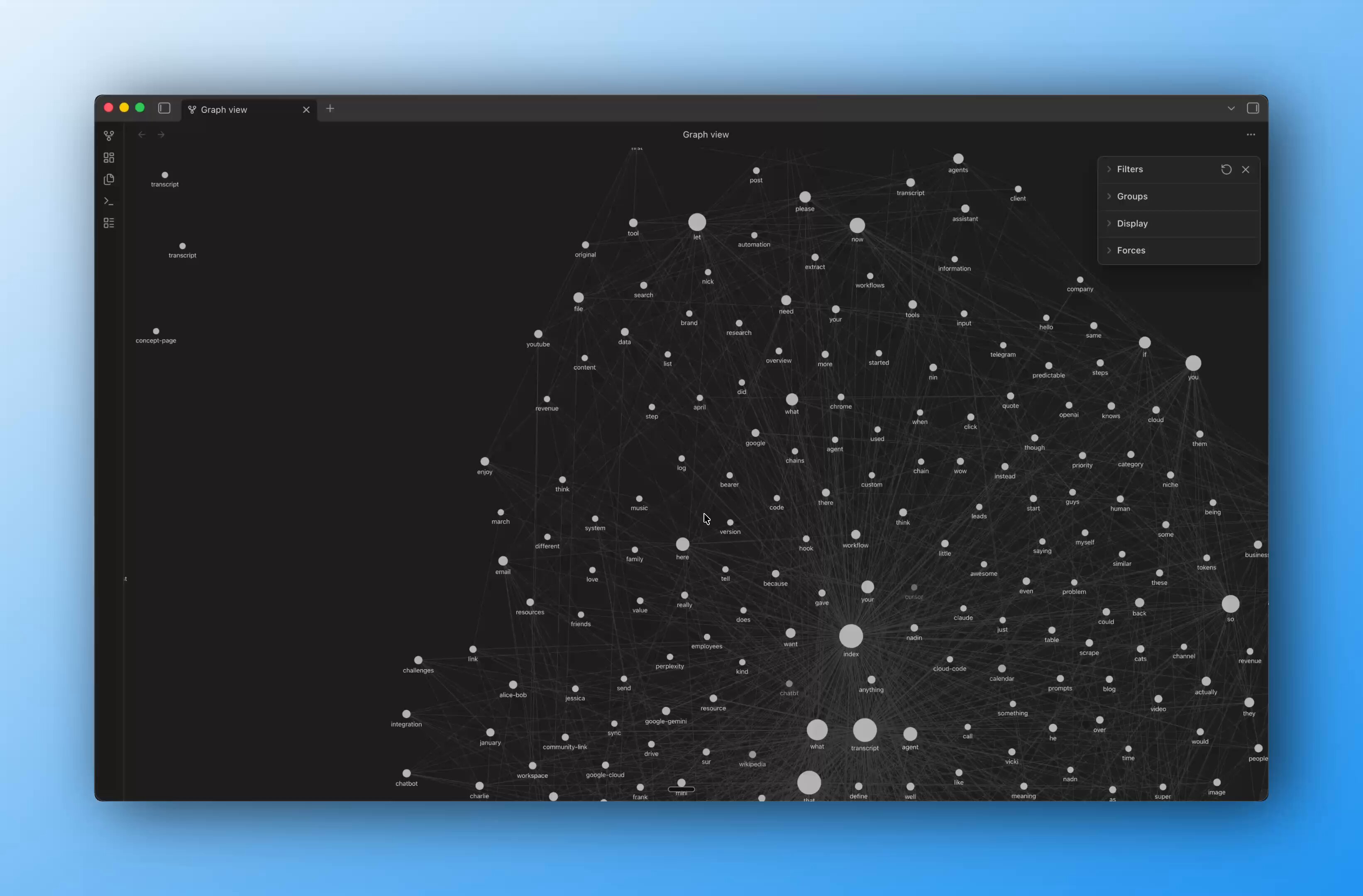

- @NickSpisak_ shared a weekend project building a "second brain" CLI using Karpathy's latest gist, Obsidian vaults, yt-dlp, and AI agents to index and query personal data across YouTube history, X archives, and agent logs.

- @alightinastorm spotlighted @boona11's stunning Three.js rendering study inspired by Tiny Glade, featuring procedural grass, animated water, and a 7-pass post-processing stack running at 120fps in the browser. A reminder that raw creative engineering talent is alive and well.

- @byteHumi highlighted Obsidian's philosophy: a $350M company built by 3 engineers that refuses VC funding, refuses analytics, and hired its CEO from its own Discord server.

Local AI: Hardware Ladders, Philosophy, and Self-Defense

Three posts today converged on what's becoming the defining tension in the AI developer ecosystem: the relationship between local inference and cloud dependence. The conversation has evolved well past hobbyist tinkering into a serious infrastructure discussion, with developers mapping out hardware tiers, bandwidth requirements, and software stack commitments like they're designing production systems. Because increasingly, they are.

@TheAhmadOsman dropped the most comprehensive local AI hardware breakdown I've seen in a single post, laying out the full landscape from NVIDIA's discrete cards through Apple Silicon to AMD and even Tenstorrent. The key insight isn't any single spec comparison but the framing: "The local AI market is really five different markets wearing the same buzzword." The post identifies five distinct categories: fastest raw speed (discrete NVIDIA), biggest one-box memory (Apple Ultra), coherent NVIDIA appliance (DGX Spark), first x86 unified-memory contender (Strix Halo), and open-source stack (Tenstorrent). The practical advice cuts through the noise: "Stop asking which box is best. Start asking: what must fit? What bandwidth tier do I need? What software stack do I trust? Which bottleneck am I buying?"
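The "what must fit?" and "what bandwidth tier do I need?" questions can be turned into back-of-the-envelope arithmetic. The sketch below is not from the post; it assumes a dense model whose weights are read once per generated token, so memory bandwidth divided by weight size gives a rough ceiling on single-stream decode speed. The `Box` class, the `overhead_gb` default, and every number in the example are hypothetical placeholders, not vendor specs.

```python
from dataclasses import dataclass


@dataclass
class Box:
    """A hypothetical machine; fill in your own figures, these are not vendor specs."""
    name: str
    memory_gb: float       # memory usable for weights and cache
    bandwidth_gb_s: float  # memory bandwidth in GB/s


def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight footprint: parameter count times bytes per parameter."""
    return params_billion * bits_per_weight / 8


def fits(box: Box, params_billion: float, bits_per_weight: float,
         overhead_gb: float = 2.0) -> bool:
    """True if the weights plus an assumed fixed overhead fit in the box's memory."""
    return weights_gb(params_billion, bits_per_weight) + overhead_gb <= box.memory_gb


def decode_ceiling_tok_s(box: Box, params_billion: float,
                         bits_per_weight: float) -> float:
    """Rough upper bound on tokens/s for a dense model: bandwidth / weight bytes."""
    return box.bandwidth_gb_s / weights_gb(params_billion, bits_per_weight)


if __name__ == "__main__":
    # Made-up boxes purely for illustration.
    fast_small = Box("fast-small", memory_gb=24, bandwidth_gb_s=1000)
    slow_big = Box("slow-big", memory_gb=128, bandwidth_gb_s=280)
    for box in (fast_small, slow_big):
        ok = fits(box, params_billion=70, bits_per_weight=4)
        ceiling = decode_ceiling_tok_s(box, params_billion=70, bits_per_weight=4)
        print(f"{box.name}: fits={ok}, ceiling={ceiling:.1f} tok/s")
```

Real throughput will land below this ceiling, and the estimate ignores mixture-of-experts models, batching, and compute-bound prompt processing. It is only meant to show why "which bottleneck am I buying?" is a more useful question than a raw spec comparison.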

@0xSero took a more philosophical angle, offering what amounts to a manifesto for local AI pragmatism. Rather than overselling local capabilities, the post is refreshingly honest about limitations while making a compelling case for why it still matters: "Local AI is self defence, it is a go kit, it is a rebalancing of power." The analogy that landed hardest was comparing cloud AI to renting a Ferrari for cheap while local AI is the beater Toyota you can depend on. "It's delusional to think it approaches or will ever approach SOTA," @0xSero writes, "the scale of private labs blows anything you can get for less than 25k USD out the water." But that's not the point. The point is autonomy and resilience.

These posts connect to @thekitze's complaint that the $200 Codex subscription is "super limited and useless" without the 2x usage multiplier: all three reflect a growing awareness that current AI pricing is artificially cheap. When @thekitze warns "we'll be so cooked when the subsidies will be over," that's the same instinct driving the local AI movement. The smart play isn't choosing one side but building competence in both, using cloud for peak capability while developing local fallbacks that keep you operational when pricing shifts or services degrade.
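One way to picture a "cloud first, local fallback" workflow is a thin wrapper that tries backends in order. This is a minimal sketch under stated assumptions: `complete_with_fallback` and both stub backends are hypothetical names invented for illustration, and a real setup would replace the stubs with calls to whichever cloud API and local runtime you actually use.

```python
from typing import Callable, Sequence, Tuple

Backend = Tuple[str, Callable[[str], str]]  # (label, function taking a prompt)


def complete_with_fallback(prompt: str, backends: Sequence[Backend]) -> Tuple[str, str]:
    """Try each backend in order; return (label, text) from the first that succeeds."""
    errors = []
    for name, backend in backends:
        try:
            return name, backend(prompt)
        except Exception as exc:  # e.g. outage, rate limit, exhausted quota
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all backends failed: " + "; ".join(errors))


def stub_cloud(prompt: str) -> str:
    """Hypothetical cloud backend that simulates a quota error."""
    raise ConnectionError("quota exhausted")


def stub_local(prompt: str) -> str:
    """Hypothetical local backend; stands in for a locally hosted model."""
    return f"[local] {prompt}"


if __name__ == "__main__":
    used, text = complete_with_fallback(
        "summarize today's feed",
        [("cloud", stub_cloud), ("local", stub_local)],
    )
    print(used, text)
```

The ordering of the list encodes the policy: put the most capable backend first and the most dependable one last.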

AI Vision and Video: Netflix VOID and Falcon Perception

Two significant releases today pushed the boundaries of what AI can do with visual content, and both represent meaningful shifts in their respective domains. One comes from a massive entertainment company, the other from an open research effort, but together they paint a picture of computer vision capabilities advancing on multiple fronts simultaneously.

@minchoi highlighted Netflix's release of VOID, an AI system that removes objects from video and, crucially, corrects the physics of the scene after removal. This isn't simple inpainting; it's understanding how a scene's dynamics change when an element is removed and reconstructing plausible physics for what remains. The implications for post-production workflows are significant, potentially eliminating hours of manual VFX work for common cleanup tasks.

On the research side, @ivanfioravanti called Falcon Perception "unbelievable," pointing to @dahou_yasser's release of an open-vocabulary referring expression segmentation model alongside a 0.3B OCR model matching competitors 3-10x its size. The technical approach is notable for its simplicity: rather than the complex multi-pipeline architectures that dominate the field, Falcon uses a single early-fusion Transformer with shared parameters. As @dahou_yasser describes it, they "developed a novel simpler 'bitter' approach: one early-fusion Transformer (image + text from first layer) with a shared parameter space, and let scale + training signal do the work." The code, paper, and playground are all publicly available, making this immediately useful for developers working on vision tasks.
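To make "early fusion" concrete, here is a toy illustration of the input side only. It is not Falcon Perception's code: raw pixel tiles and whitespace-split words stand in for learned embeddings, the image-then-text ordering is an assumption, and `patchify` and `early_fusion_sequence` are hypothetical helper names. The point is that both modalities end up in one sequence that a single model would process from its first layer, rather than passing through separate encoders that are merged later.

```python
from typing import List, Tuple

Token = Tuple[str, object]  # (modality tag, payload)


def patchify(image: List[List[int]], patch: int) -> List[Token]:
    """Cut a 2D pixel grid into non-overlapping patch x patch tiles, row-major."""
    height, width = len(image), len(image[0])
    tokens: List[Token] = []
    for top in range(0, height - patch + 1, patch):
        for left in range(0, width - patch + 1, patch):
            tile = tuple(
                image[row][col]
                for row in range(top, top + patch)
                for col in range(left, left + patch)
            )
            tokens.append(("image", tile))
    return tokens


def early_fusion_sequence(image: List[List[int]], text: str,
                          patch: int = 2) -> List[Token]:
    """Build one sequence: image patch tokens followed by text tokens."""
    text_tokens: List[Token] = [("text", word) for word in text.split()]
    return patchify(image, patch) + text_tokens


if __name__ == "__main__":
    # A 4x4 "image" whose pixel values are just 0..15.
    image = [[row * 4 + col for col in range(4)] for row in range(4)]
    for token in early_fusion_sequence(image, "the red car"):
        print(token)
```

In a real model each token would be projected into a shared embedding space before attention; the sketch stops at the point where the two modalities share one sequence.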

These releases share a common thread: the commoditization of capabilities that were research-grade problems just a year ago. Object removal with physics correction and efficient open-vocabulary segmentation are both moving from "impressive demo" to "tool you can actually ship with."

AI Pricing and the Sustainability Question

The economics of AI tooling surfaced today through a pointed observation that deserves attention. @thekitze's frustration with the $200 Codex subscription being "super limited and useless" without double usage credits is a canary in the coal mine for the entire AI-assisted development ecosystem. The blunt assessment that "we'll be so cooked when the subsidies will be over" reflects a growing unease among developers who've built their workflows around artificially cheap AI access.

This connects directly to @open_founder's announcement of a reasoning framework called SERV-nano that allegedly matches GPT-5.4 "at 20x lower cost and 3x the speed." Whether those claims hold up under scrutiny remains to be seen, but the pitch itself reveals market demand: developers want capable AI that doesn't bankrupt them at scale. The fact that @open_founder positions the product as a drop-in replacement, where "any builder or enterprise swaps two lines of code and their agents get much cheaper and much smarter instantly," tells you exactly what pain point they're targeting.

The sustainability question isn't going away. If anything, it's becoming the central strategic concern for teams building AI-dependent products. Today's posts suggest the market is responding in three ways: local inference for resilience, alternative providers for cost, and increasingly vocal pushback against pricing models that don't scale with real-world usage.

Sources

Obsidian is a $350M company for a note taking app built by 3 engineers working remotely. No other time in history was something like this possible. What a wonderful time to be building a company.

We’ve made major upgrades to X API:
• Pay-Per-Use now GA worldwide
• XMCP Server + xurl for agents
• Official Python & TypeScript XDKs
• API Playground - free realistic simulations
New releases coming will be a game changer. Start building → https://t.co/hiyP33PMVa 🚢

I built a Three.js rendering study inspired by Tiny Glade’s painterly aesthetic, and got it running at 120fps in the browser. Over the past few weeks, I’ve been studying how stylized games achieve that soft, handcrafted look in real time. Tiny Glade was a huge inspiration, and I wanted to use the browser as a constraint: no compute shaders, no native GPU access, and single-threaded JavaScript. As part of this study, I implemented:
- GPU-driven instanced brick walls with procedural noise jitter and elastic build animations
- Tree, bush, and flower rendering with billboard card expansion, wind sway, and grow animations
- Procedural grass with terrain conformance and interactive push deformation
- Animated water with layered noise, interactive ripples, and Fresnel-based reflections
- Procedural terrain with slope-aware triplanar materials, dirt paths, and rocks
- A 7-pass post-processing stack with TAA, bloom, depth of field, painterly filtering, ACES tonemapping, 3D LUT color grading, and film grain
The hardest part wasn’t writing any single shader. It was making all of these systems work together at high frame rates inside WebGL, where every millisecond counts and performance problems compound quickly across animation, materials, post-processing, and scene management. Some techniques in this study were inspired by analyzing Tiny Glade’s rendering approach, while others were original implementations built from scratch from visual reference. That contrast taught me a lot: recreating an effect is one challenge, but designing your own shaders and systems to achieve a similar feel is a very different one. This is a private educational rendering study. Some temporary placeholder content is being used during the research phase, and any public or production version would use original or properly licensed assets. Huge credit to Pounce Light for the incredible art direction and rendering work in Tiny Glade: https://t.co/pQpoh8rVe7 @threejs #gamedev #webgl #threejs #rendering #graphics #realtimerendering #shaderdev

We are releasing Falcon Perception, an open-vocabulary referring expression segmentation model. Along with it, a 0.3B OCR model that is on par with 3-10x larger competitors. Current systems solve this with complex pipelines (separate encoders, late fusion, matching algorithms). We developed a novel simpler "bitter" approach: one early-fusion Transformer (image + text from first layer) with a shared parameter space, and let scale + training signal do the work. Please check our work ! 📄 Paper: https://t.co/dWvK5t7MIt 💻 Code: https://t.co/AJ65GbMrUY 🎮 Playground: https://t.co/BIgisZkeid 🤗 Blogpost: https://t.co/J2IjlBPywF

A team just solved AI's hardest engineering problem.

How to Build Your Second Brain