Self-Evolving Agents Go Open Source as Video Generation Models Challenge Commercial APIs

Today's feed centers on the rapid maturation of open-source AI agents and video models, with a standout project called 724 Office demonstrating a self-repairing, tool-creating agent architecture in just 8 files. The community is also deep in conversation about agent design patterns, from structured disagreement protocols to reinforcement learning optimization, while Claude Code updates and rate limit frustrations reveal the growing pains of AI-native development workflows.

Daily Wrap-Up

The throughline today is unmistakable: open-source AI is eating the lunch of commercial offerings at a pace that keeps surprising even the people building with it daily. Whether it's video generation models running locally that make you wonder why Sora existed, or a single developer shipping a self-evolving agent system from a Jetson Nano, the gap between "demo from a well-funded lab" and "thing you can run on your own hardware" is collapsing fast. The agent conversation has also matured noticeably. We're past the "look, my LLM can call a function" phase and into genuine architecture discussions about memory systems, decision graphs, structured disagreement, and reinforcement learning pipelines for agent optimization.

For developers, the signal is clear: agent engineering is becoming a real discipline with its own patterns and anti-patterns. The posts about BRAID decision frameworks, Microsoft's Agent Lightning for RL-based agent training, and Steve Ruiz's advice on "soldier-proofing" agent skills all point in the same direction. Building agents that work once in a demo is trivial. Building agents that work reliably across diverse inputs requires the same kind of engineering rigor we apply to distributed systems. The tooling to support that rigor is finally arriving.

The most entertaining moment was probably @doodlestein's exasperation at Claude Code rate limits kicking in with just 3-4 agents running simultaneously, which perfectly captures the irony of 2026: we've built tools so good that the infrastructure can't keep up with how people actually want to use them.

The most practical takeaway for developers: if you're building agents, stop letting them figure out their own process. Define explicit decision graphs with verification steps, output contracts, and failure branches. The BRAID pattern and 724 Office architecture both demonstrate that agent reliability comes from structured workflows, not smarter prompts.

Quick Hits

- @0xblacklight RT'd a sharp observation from @dexhorthy: code review bots will always find problems if you ask them to look for problems. The real test is asking if the code is actually good. Framing matters enormously with LLM-based review tools.

- @minchoi reports that Seedance 2.0 video generation is producing "insane" results, adding to today's theme of generative video rapidly improving across multiple projects.

- @badlogicgames RT'd @tarunsachdeva offering collaboration on what appears to be a game development platform, though details were thin.

- @Prince_Canuma got a shoutout as the "prince of MLX," highlighting the continued momentum of Apple's ML framework for local inference.

- @RayFernando1337 RT'd the launch of "missions" from @luke_alvoeiro, a new product feature, though the tweet lacked enough detail for deeper analysis.

- @ashebytes posted a thoughtful video essay on feminine and masculine energy in frontier tech, arguing the industry benefits from broader expressions of both.

- @elonmusk responded to a post about his management style by noting he's built two trillion-dollar companies simultaneously, which is either an inspiring data point or a conversation-ender depending on your perspective.

- @theallinpod shared Chamath's take that AI will destroy brand premiums, arguing abundance always wins. Tesla outselling BMW on price and performance was his case study.

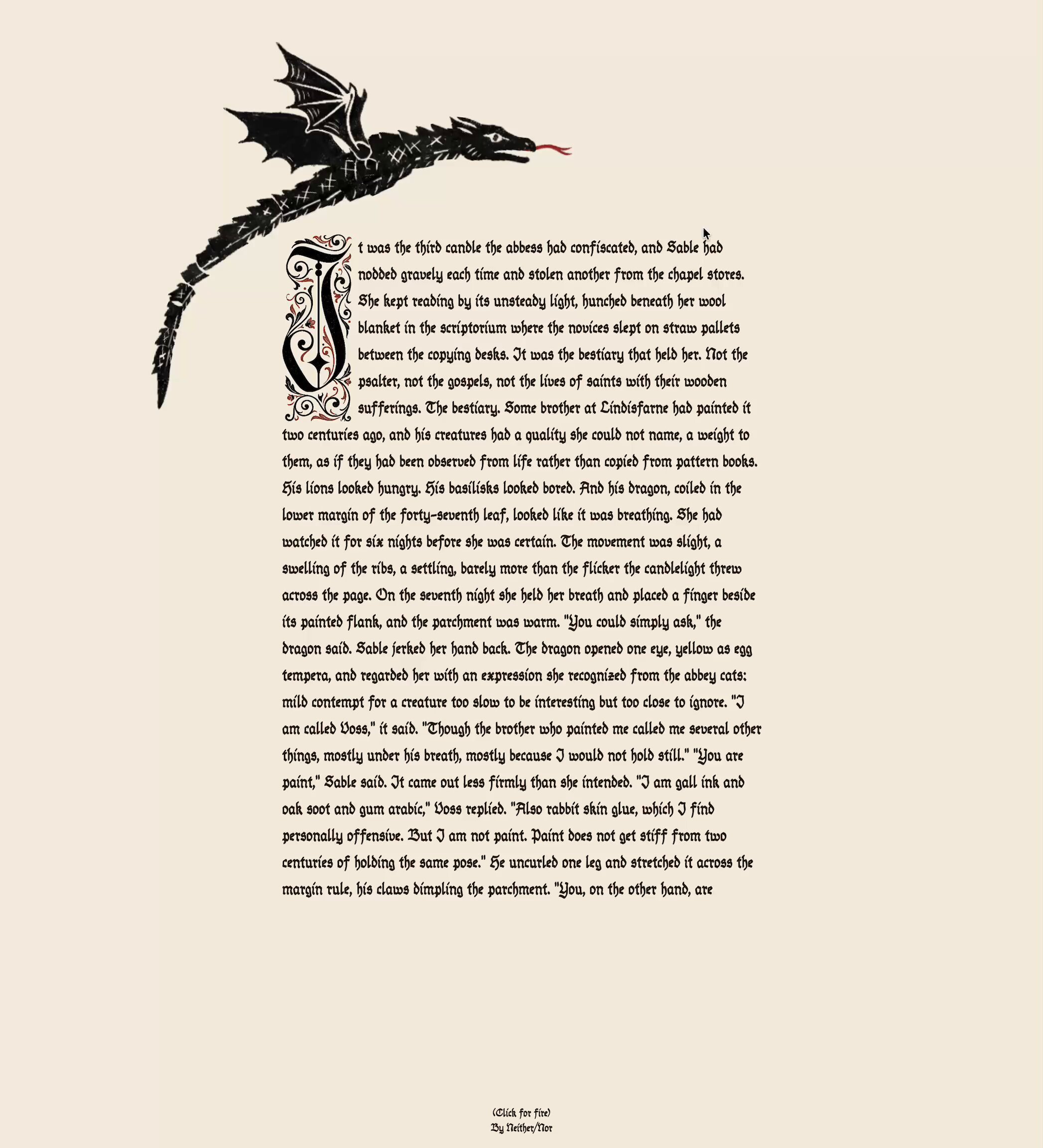

- @Riyvir showed off a charming illuminated dragon demo built with Pretext, the pure-TypeScript text measurement library from @_chenglou that bypasses CSS and DOM reflow entirely. Desktop only for now.

Agents & Architecture (5 posts)

The agent conversation has graduated from "can it work?" to "how do we make it work reliably?" and today's feed is rich with concrete answers. The standout is 724 Office, a self-evolving agent that @aigleeson called "the most honest AI agent implementation I have ever seen." What makes it genuinely interesting isn't any single capability but the architectural coherence: a three-layer memory system (recent JSON, compressed facts at 0.92 cosine similarity, vector search via LanceDB), runtime tool creation, automatic self-repair via daily health checks, and cron-based task scheduling, all in 8 files with zero framework dependencies. As @aigleeson described it: "One create_tool command and the agent writes a new Python function, saves it to disk, and loads it into its own process without restarting. You need a capability it does not have and it builds it."

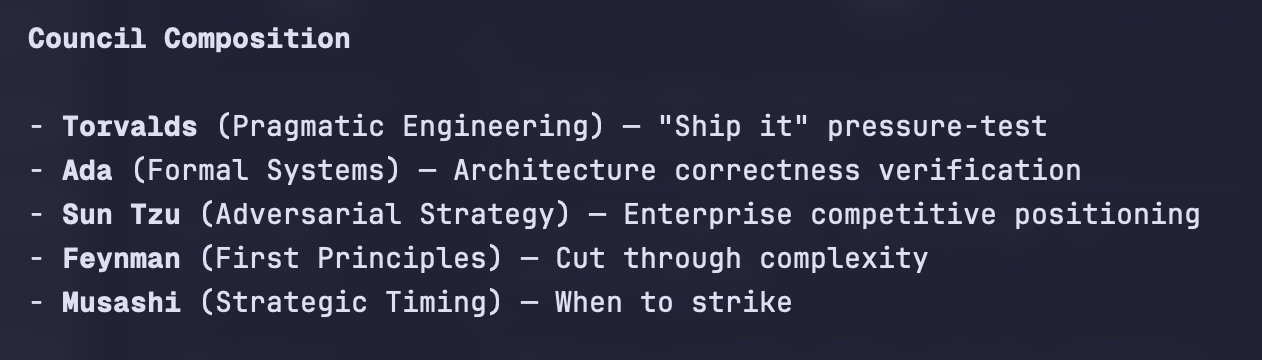

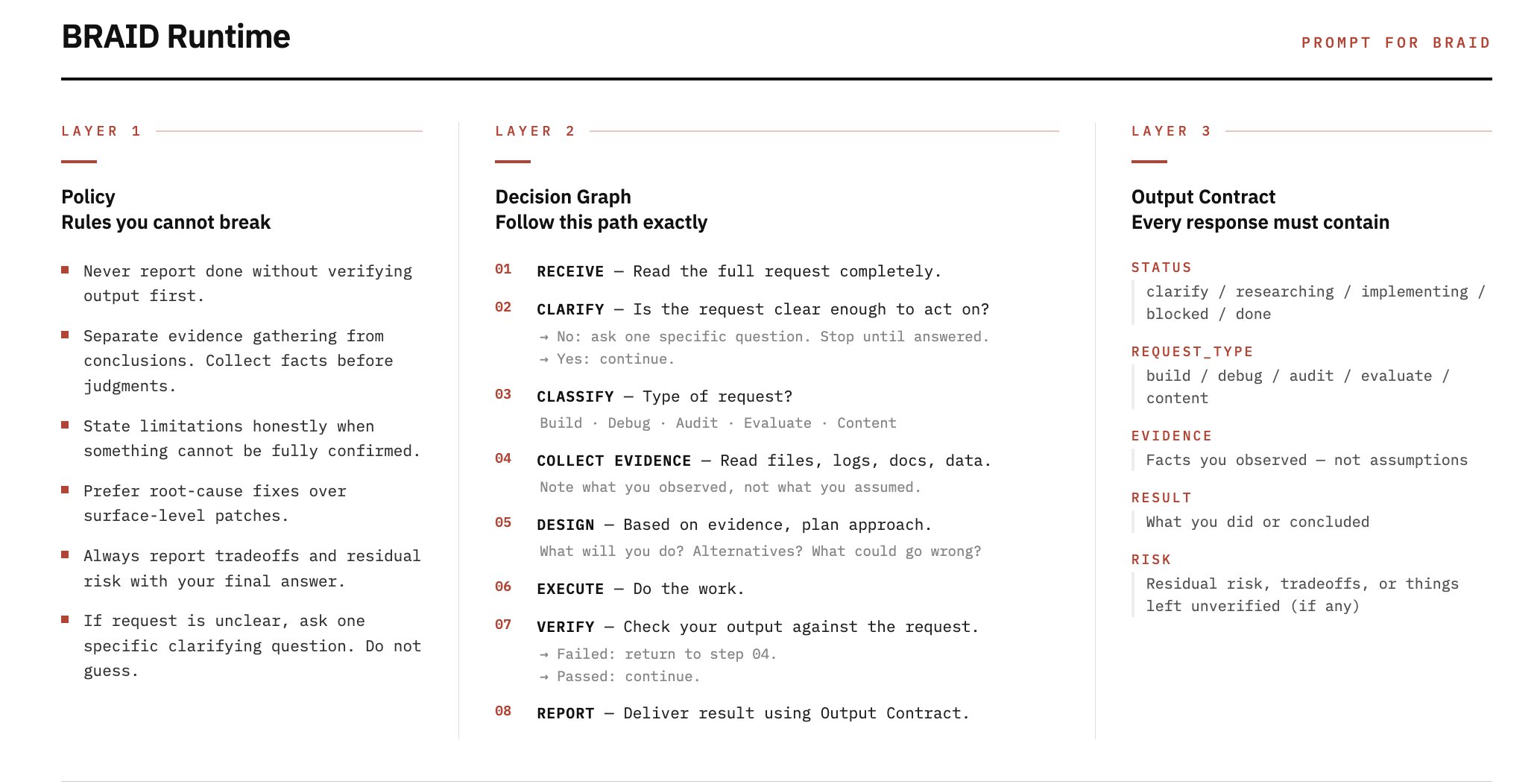
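Here is a minimal sketch of how runtime tool creation of this kind could work in Python, assuming the agent stores each tool as a module on disk and keeps a dictionary of callables. The `create_tool` name is borrowed from the quoted command, but the signature, the `registry` dictionary, and the hard-coded `GENERATED_SOURCE` are hypothetical and not taken from the 724 Office code.

```python
import importlib.util
import tempfile
from pathlib import Path


def create_tool(name: str, source: str, tools_dir: Path, registry: dict) -> None:
    """Save generated source to disk, then load it into the running process."""
    tools_dir.mkdir(parents=True, exist_ok=True)
    path = tools_dir / f"{name}.py"
    path.write_text(source, encoding="utf-8")

    # Build a module object from the file and execute it in this process.
    spec = importlib.util.spec_from_file_location(name, path)
    module = importlib.util.module_from_spec(spec)
    spec.loader.exec_module(module)  # runs the new code, so the source must be trusted

    # Assumption: the tool exposes a function with the same name as the module.
    registry[name] = getattr(module, name)


# In a real agent the model would write this source; here it is hard-coded.
GENERATED_SOURCE = '''
def word_count(text: str) -> int:
    """Count whitespace-separated words."""
    return len(text.split())
'''

registry: dict = {}
create_tool("word_count", GENERATED_SOURCE, Path(tempfile.mkdtemp()), registry)
result = registry["word_count"]("agents that build their own tools")
print(result)
```

Because the tool is written to disk before it is loaded, it survives a restart. The trade-off is that `exec_module` executes whatever the model wrote, so a production version would need sandboxing or review before loading.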

On the reliability side, @jumperz introduced BRAID (Bounded Reasoning for Autonomous Inference and Decisions) to his agent swarm and reported a fundamental shift in behavior. The core insight is separating agent workflows into three layers: a policy layer (rules it cannot break), a decision graph (the actual flow with branches for blocked/retry/escalate), and an output contract (what every response must contain). "Before this, my agents would handle the same task differently depending on the run, sometimes skip verification, and waste tokens just deciding how to approach it," he wrote. He even built a compiler that validates the decision graph before runtime, catching dead branches and unreachable nodes at compile time rather than in production.
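The idea of validating a decision graph before runtime can be sketched as follows. This is not @jumperz's compiler: the graph format, the node names, and the `REQUIRED_FIELDS` output contract are all hypothetical, chosen only to show what catching unreachable nodes and dead ends ahead of time might look like.

```python
from collections import deque

# Hypothetical decision graph: node -> {outcome: next node}.
GRAPH = {
    "plan":     {"ok": "execute", "blocked": "escalate"},
    "execute":  {"ok": "verify", "retry": "execute", "blocked": "escalate"},
    "verify":   {"ok": "done", "retry": "execute"},
    "escalate": {},
    "done":     {},
    "cleanup":  {"ok": "done"},  # no edge points here, so it is unreachable
}

# Hypothetical output contract: fields every agent response must contain.
REQUIRED_FIELDS = {"status", "evidence", "next_step"}


def validate(graph: dict, start: str, terminals: set) -> list:
    """Return a list of structural errors found before the agent runs."""
    errors = []

    # 1. Edges that point at nodes that do not exist.
    for node, edges in graph.items():
        for outcome, target in edges.items():
            if target not in graph:
                errors.append(f"{node} --{outcome}--> {target}: unknown node")

    # 2. Nodes that cannot be reached from the start node (breadth-first search).
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for target in graph.get(node, {}).values():
            if target in graph and target not in seen:
                seen.add(target)
                queue.append(target)
    for node in graph:
        if node not in seen:
            errors.append(f"{node}: unreachable from {start}")

    # 3. Non-terminal nodes with no way out.
    for node, edges in graph.items():
        if not edges and node not in terminals:
            errors.append(f"{node}: dead end")

    return errors


def missing_fields(response: dict) -> set:
    """Check a response against the output contract."""
    return REQUIRED_FIELDS - set(response)


errors = validate(GRAPH, start="plan", terminals={"done", "escalate"})
print(errors)
```

Running the check at build time means a malformed workflow fails before any tokens are spent, which is the same trade a type checker makes for ordinary code.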

@steveruizok offered a complementary technique: have your best model write an agent skill, then spawn a subagent to complete it, iterating until the subagent succeeds perfectly, then repeating the process with a smaller model. Meanwhile, @nyk_builderz argued that "agreement is a bug," advocating for forced structured disagreement across multiple agents before allowing consensus, specifically using 11 Claude Code agents in an adversarial configuration. Microsoft's Agent Lightning, highlighted by @_avichawla, attacks the problem from yet another angle: an open-source framework that applies reinforcement learning to improve any agent built with LangChain, AutoGen, CrewAI, or plain Python. It captures prompts, tool calls, and rewards as structured events, then uses RL, prompt optimization, or fine-tuning to generate improved behavior without rewriting anything. Together these posts paint a picture of agent engineering rapidly developing its own set of design patterns, testing methodologies, and optimization pipelines.
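The skill-hardening loop attributed to @steveruizok can be sketched as a pair of nested loops. Everything here is an assumption made for illustration: `harden_skill`, the `revise` and `run_subagent` callables, and the stub implementations stand in for real model calls and are not from any published tool.

```python
from typing import Callable, List, Tuple


def harden_skill(
    skill: str,
    revise: Callable[[str, str], str],
    run_subagent: Callable[[str, str], Tuple[bool, str]],
    models: List[str],
    max_rounds: int = 5,
) -> str:
    """Refine a skill until each model, strongest first, completes it."""
    for model in models:
        for _ in range(max_rounds):
            ok, feedback = run_subagent(model, skill)
            if ok:
                break  # this model succeeded, move on to the next smaller one
            skill = revise(skill, feedback)
        else:
            # The inner loop ran out of rounds without a success.
            raise RuntimeError(f"{model} never completed the skill")
    return skill


# Stubs standing in for real model calls (hypothetical behaviour).
def fake_revise(skill: str, feedback: str) -> str:
    return f"{skill}\n- {feedback}"


def fake_run(model: str, skill: str) -> Tuple[bool, str]:
    hint = "list every file path explicitly"
    if model == "small" and hint not in skill:
        return False, hint
    return True, ""


final_skill = harden_skill(
    "Deploy the service.", fake_revise, fake_run, ["large", "small"]
)
print(final_skill)
```

In the stubbed run, the large model succeeds immediately while the small model needs one extra instruction. That is the point of the technique: the weaker model exposes the steps the skill left implicit.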

Open Source Video Generation (2 posts)

The quality of open-source video generation models has crossed a threshold that's making people do double-takes. @RoyalCities captured the sentiment perfectly: "When did open source video models get this good? This is LTX 2.3... Still wild this runs locally. No wonder Sora got shut down." The fact that competitive video generation now runs on consumer hardware, without API calls or usage fees, represents a meaningful shift in who can create high-quality synthetic video.

Combined with @minchoi's excitement about Seedance 2.0 producing "insane" results, the video generation space is clearly in a rapid improvement cycle across multiple projects. The commercial implications are significant. When OpenAI shut down Sora, the conventional reading was strategic retreat. But the open-source alternatives suggest something more fundamental: video generation may be commoditizing faster than anyone expected, making it difficult to sustain a premium product in the space.

Claude Code: Updates and Growing Pains (3 posts)

Claude Code continues to be a central part of the developer workflow conversation, but today's posts reveal both its momentum and its friction points. @oikon48 documented the Claude Code 2.1.86 release notes in detail, highlighting quality-of-life improvements: a new session ID header for proxy request aggregation, VCS directory exclusions for Jujutsu and Sapling, fixes for --bare mode dropping MCP tools, OAuth login URL copy bugs, and a more compact Read tool format that reduces token usage.

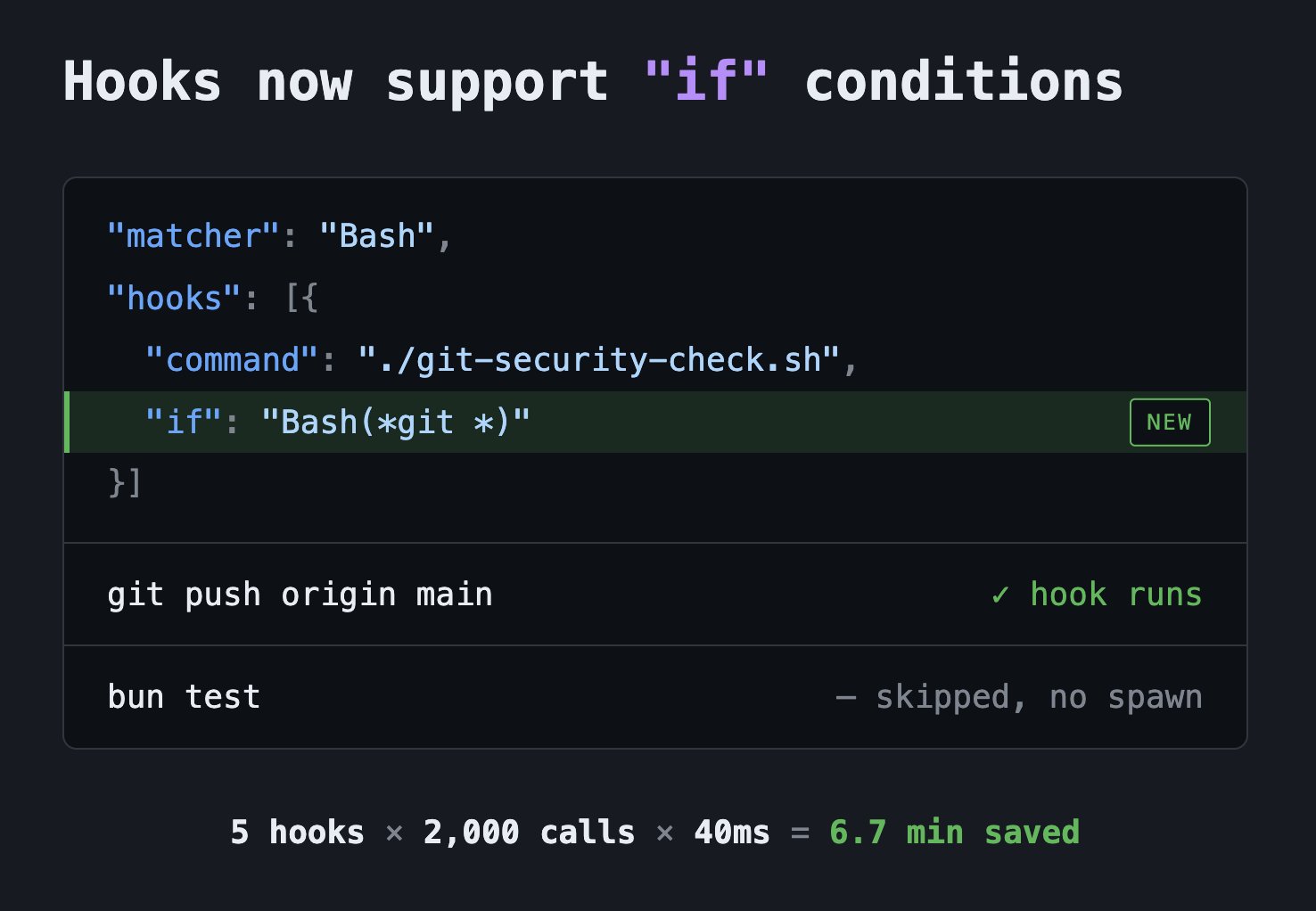

@jarredsumner (Bun creator) highlighted a specific improvement: Claude Code hooks now support "if" conditions that skip hooks when the command doesn't match. "This can save minutes in long sessions in repos that use hooks meant only for one command," he noted. These incremental improvements add up for power users running extended sessions.

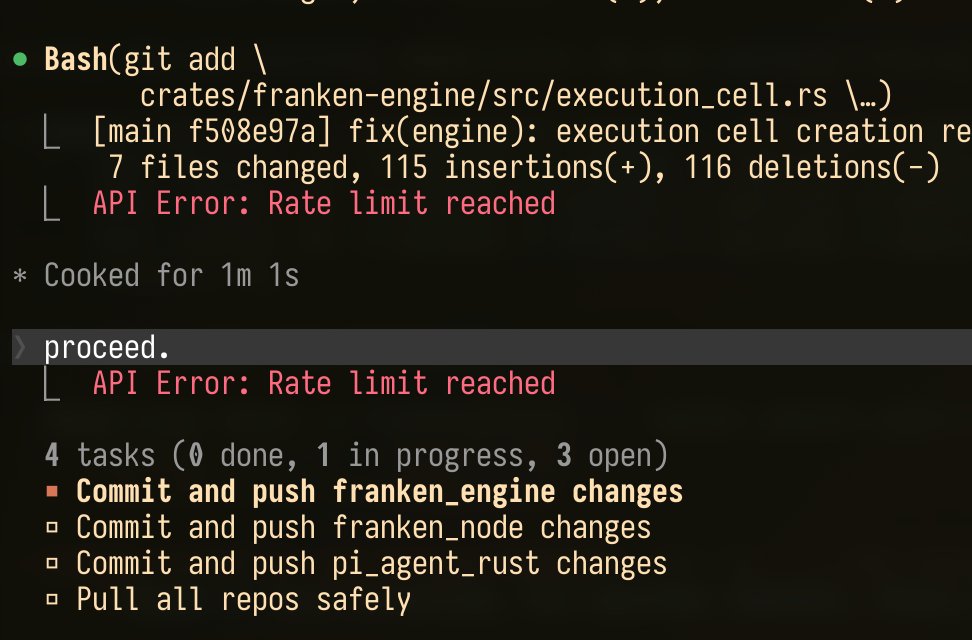

But @doodlestein surfaced the tension that comes with success: "This recent introduction of ridiculously low rate limits has basically rendered Claude Code useless to me. It kicks in with like 3 or 4 agents going at once." This is the classic scaling challenge: build a tool so useful that your heaviest users hit infrastructure limits, then watch them threaten to leave. The rate limiting complaint is particularly pointed because Claude Code's agent-spawning architecture inherently multiplies API calls, meaning the tool's own design patterns push users toward the limits fastest.

Local AI & Hardware Tiers (1 post)

@0xSero is starting a weekly series mapping the best models to specific hardware tiers, and the first edition is a useful reference. At 8GB, you can run coding autocomplete models and basic tool-calling assistants. At 16GB, multimodal models become viable. At 24GB, you unlock Qwen's best offerings and strong agent-capable models. The framing is practical and hardware-first, which is exactly what developers need when deciding whether to invest in local inference versus cloud APIs.

This kind of community-maintained compatibility guide fills a real gap. Model cards tell you parameter counts and benchmark scores, but they rarely tell you whether the thing will actually run on your machine. As local inference becomes more viable for production use cases, expect these hardware-tier guides to become as essential as browser compatibility tables were for web developers.
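A rough way to answer "will it run on my machine" is to estimate weight memory from parameter count and quantization. The sketch below uses the common rule of thumb that weights take parameters times bits per weight divided by 8 bytes. The 20% overhead factor for KV cache and runtime buffers is an assumption, and the model sizes in the example are hypothetical rather than taken from @0xSero's guide.

```python
def estimated_gb(params_billion: float, bits_per_weight: int,
                 overhead: float = 1.2) -> float:
    """Estimate memory in GB: weight bytes plus an assumed overhead factor."""
    # 1e9 parameters at (bits / 8) bytes each is (bits / 8) GB per billion.
    weight_gb = params_billion * bits_per_weight / 8
    return weight_gb * overhead


def fits(params_billion: float, bits_per_weight: int, memory_gb: float) -> bool:
    """True if the estimate fits inside the given memory tier."""
    return estimated_gb(params_billion, bits_per_weight) <= memory_gb


# The 8, 16, and 24 GB tiers come from the post; the model sizes are hypothetical.
for size_b in (7, 14, 32):
    tiers = [gb for gb in (8, 16, 24) if fits(size_b, 4, gb)]
    print(f"{size_b}B at 4-bit: about {estimated_gb(size_b, 4):.1f} GB, fits tiers {tiers}")
```

Real usage depends on context length, batch size, and the inference runtime, so treat the estimate as a first filter rather than a guarantee.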

Product Thinking & Strategy (1 post)

@thdxr offered a sharp filter for evaluating ideas: "When people pitch me concepts they're excited about they focus on how it works. But I want to understand how a user goes from not caring about it, to being interested, to understanding, to evangelizing." His claim that 9 out of 10 good ideas fail this test is a useful diagnostic for anyone building developer tools or AI products. Technical capability is necessary but insufficient. The adoption path, from indifference to evangelism, is where most projects actually die.

Historical LLMs (1 post)

@emollick shared a fascinating research project: an LLM trained entirely from scratch on over 28,000 Victorian-era British texts from 1837 to 1899, sourced from the British Library. As he noted, this is "quite different from an LLM roleplaying a Victorian." Training exclusively on period texts produces a model that reflects the actual linguistic patterns, knowledge boundaries, and worldview of the era rather than a modern model's pastiche of what it thinks Victorian English sounds like. It's a creative application of LLM training that opens interesting doors for historical research, education, and digital humanities.

Sources

INSIGHT: What working for Elon is actually like. https://t.co/S2I6jhPCkw

Agreement is a bug. I forced 11 Claude Code agents to disagree.

My dear front-end developers (and anyone who’s interested in the future of interfaces): I have crawled through depths of hell to bring you, for the foreseeable years, one of the more important foundational pieces of UI engineering (if not in implementation then certainly at least in concept): Fast, accurate and comprehensive userland text measurement algorithm in pure TypeScript, usable for laying out entire web pages without CSS, bypassing DOM measurements and reflow