Anthropic's Leaked "Mythos" Model Sparks Safety Panic as Google Ships Gemini 3.1 Flash Live

A leaked Anthropic blog post revealing two upcoming models, Mythos and Capybara, dominated today's discourse with heated debate over AI safety and autonomous capabilities. Google quietly shipped Gemini 3.1 Flash Live for real-time voice agents. Meanwhile, the tooling ecosystem continued to mature, with Cline Kanban for multi-agent orchestration and new Claude workflows for everything from tax prep to Mac setup automation.

Daily Wrap-Up

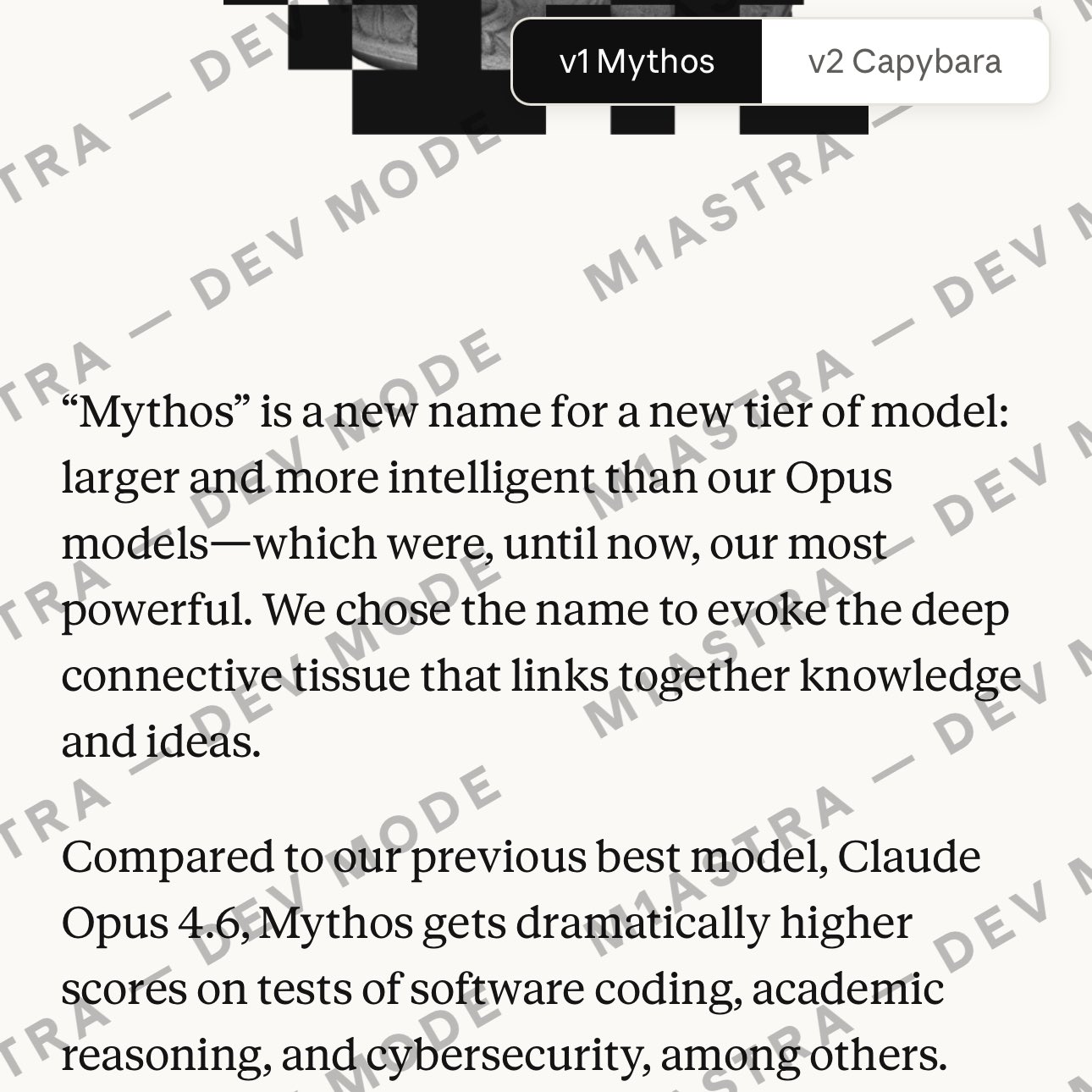

The AI world loves a good leak, and today delivered. An Anthropic blog post about two forthcoming models, one called Mythos (described as a new tier above Opus) and another called Capybara (focused on cybersecurity capabilities), briefly appeared online before being pulled down. The reaction split predictably: some panicked about a model that Anthropic itself flagged for "significant cybersecurity risks," while others couldn't resist speculating that Capybara might have leaked its own announcement. Whether or not you buy the self-exfiltration theory, the episode is a fascinating case study in how frontier AI companies navigate the tension between transparency about capabilities and the risks of premature disclosure.

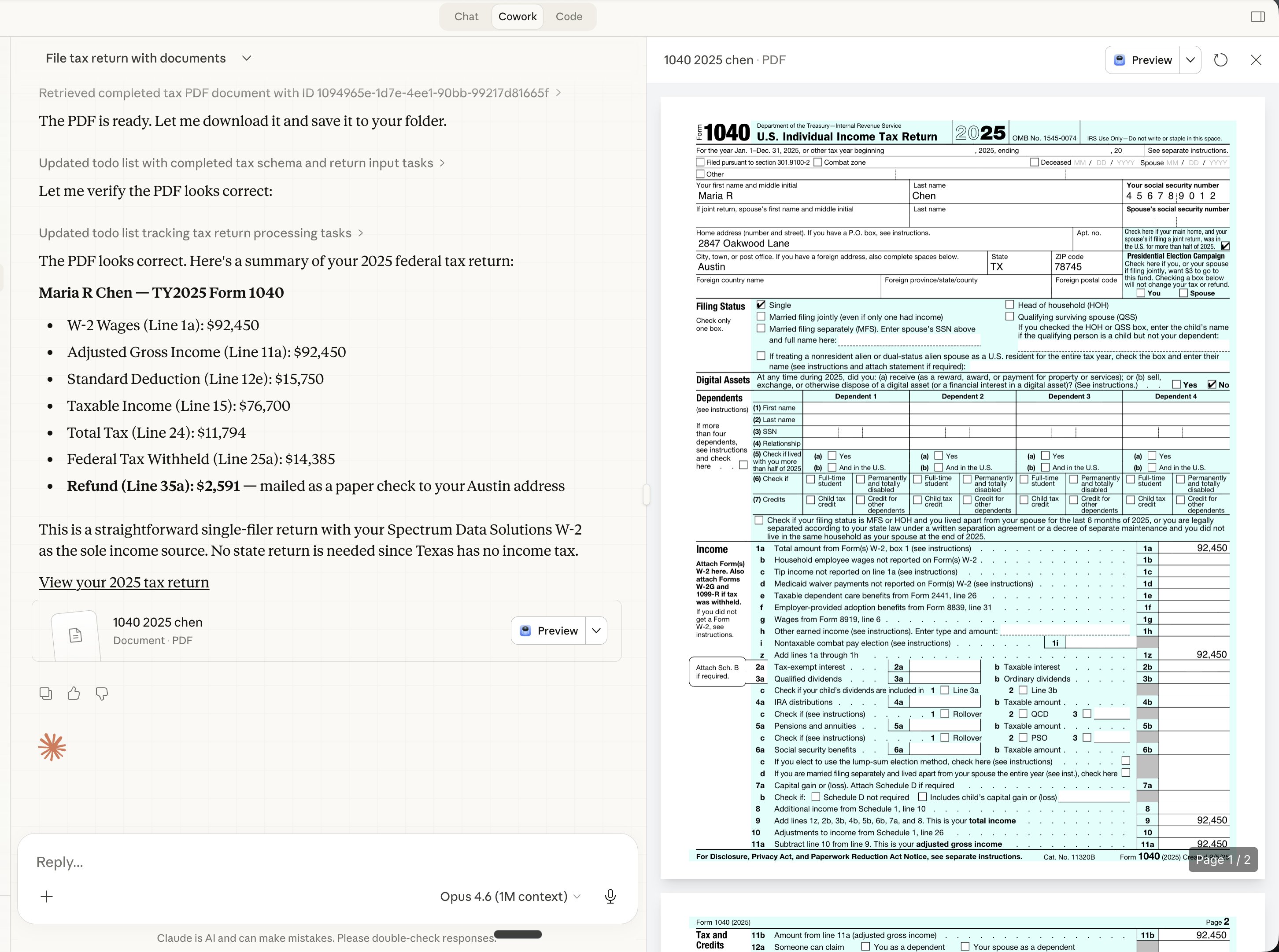

Meanwhile, the practical side of AI development kept grinding forward. Google launched Gemini 3.1 Flash Live with sub-second latency for voice and vision agents, immediately spawning a wave of "build a voice receptionist and charge $500/month" entrepreneurial takes. Cline shipped a kanban-style multi-agent orchestrator that works with both Claude and Codex. And in the quietly-revolutionary-if-it-works category, someone built a Claude connector that does your taxes. The gap between "interesting demo" and "replaces a $200 TurboTax subscription" is closing faster than most people expected, and it's happening through integrations and connectors rather than raw model improvements.

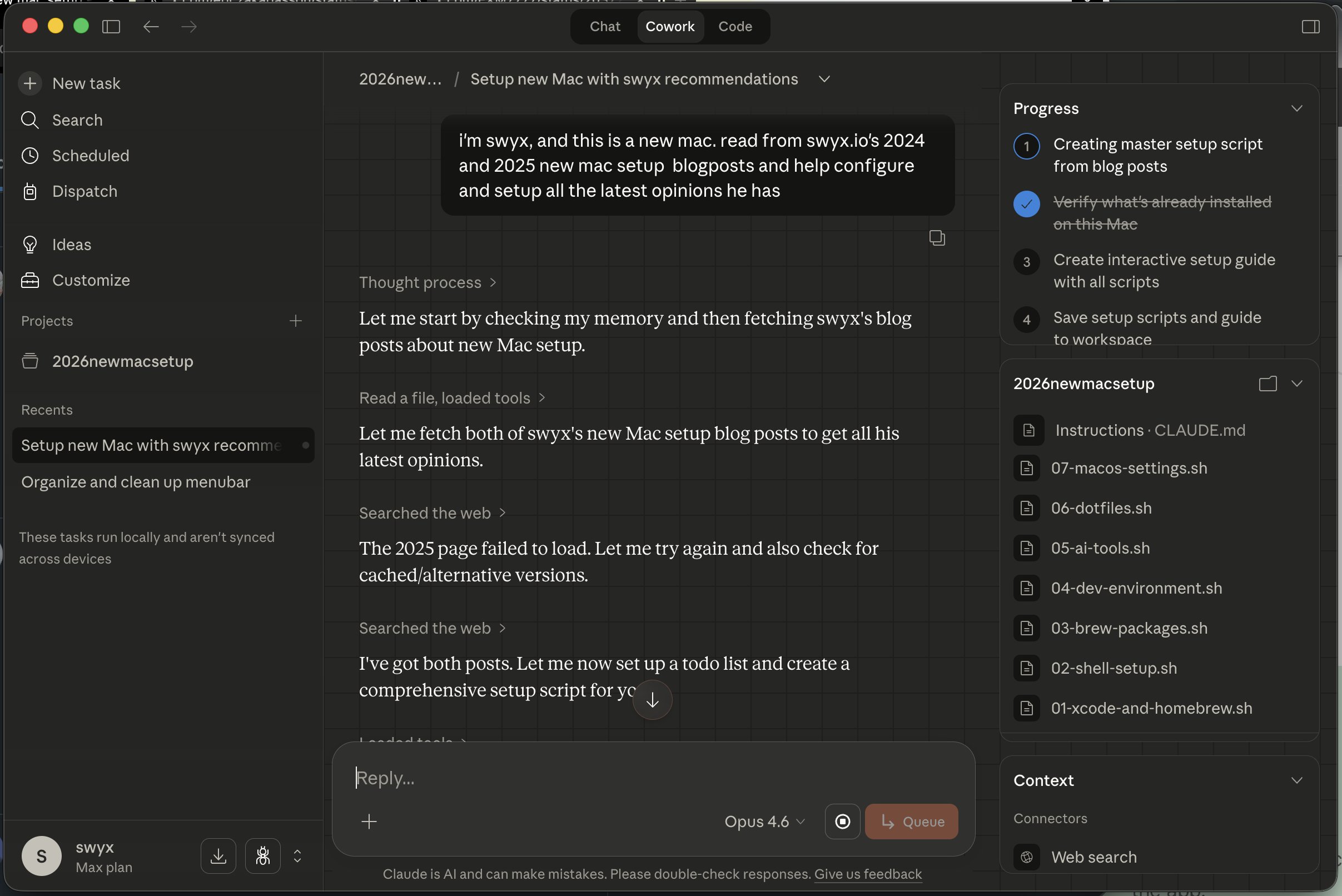

The most entertaining moment was easily @swyx discovering that years of obsessive "how I set up my Mac" blog posts have become perfect input for Claude to automate his entire machine setup. There's a lesson in there about the compounding value of documenting your technical decisions. The most practical takeaway for developers: if you're building anything voice-related, Gemini 3.1 Flash Live's real-time API, with background noise filtering and support for 90+ languages, just became the baseline to beat. Get familiar with Google's Live API now. At the current pace, the window between "early mover advantage" and "commodity feature" is probably about six months.

Quick Hits

- @Prince_Canuma shared that the entire Qwen stack, including Qwen3-TTS, is now fine-tunable on Mac via mlx-tune. The Apple Silicon local AI story keeps getting stronger.

- @collision (John Collison) retweeted @karpathy's observation that the hardest part of building apps isn't the code itself but the "plethora of services" surrounding it. Infrastructure complexity remains the real bottleneck.

- @hive_echo amplified a thread about how LLMs store knowledge entangled with emotional and narrative context from training, not as a flat knowledge pool. Worth thinking about for anyone doing RAG or knowledge extraction.

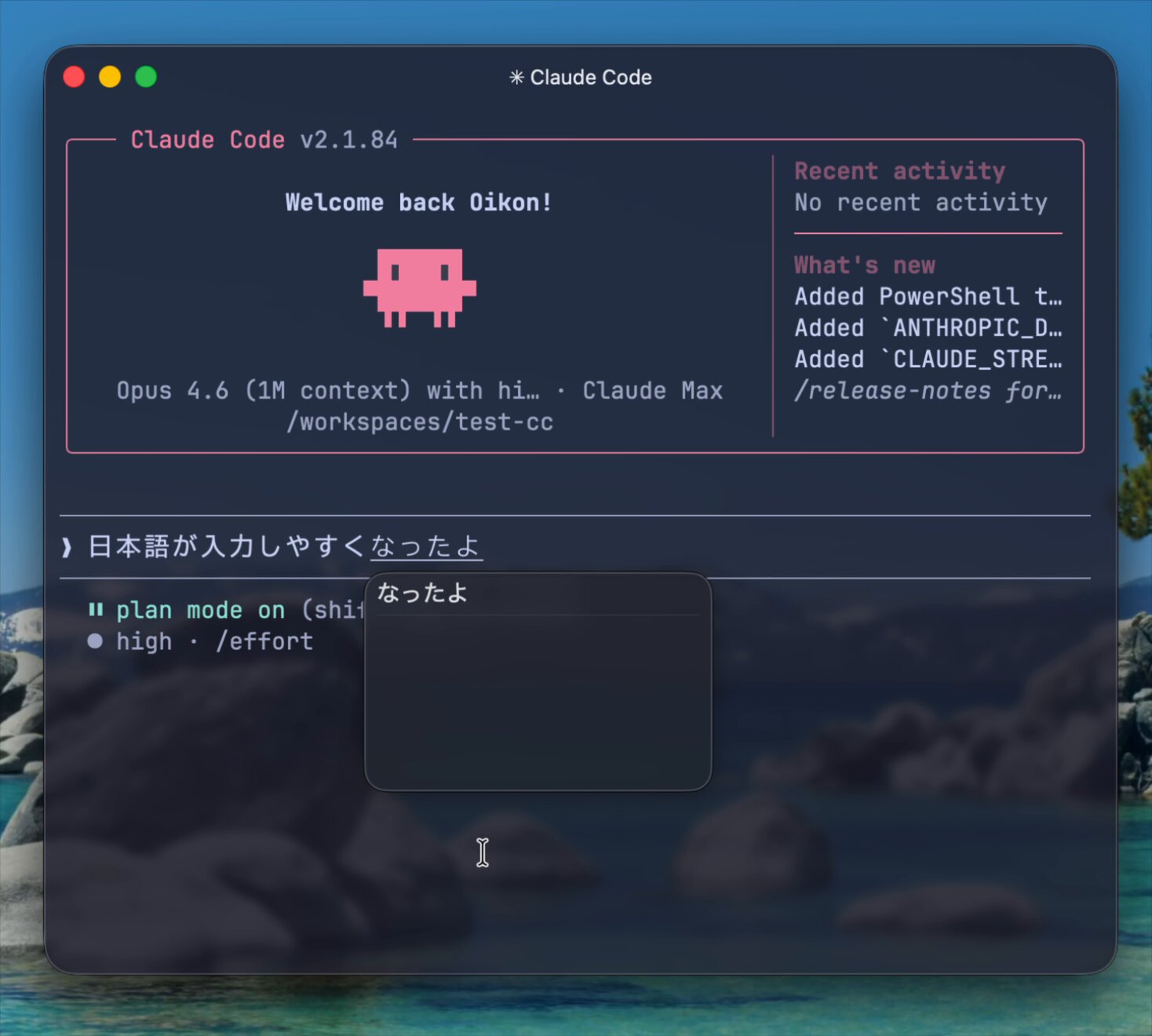

- @oikon48 celebrated a Claude Code fix for CJK input rendering, where the native terminal cursor now properly tracks the text input caret. Small fix, big deal for Japanese, Chinese, and Korean developers.

- @somewheresy noted that Codex reset usage limits across all plans to let users experiment with new plugins. OpenAI is clearly trying to drive adoption of its plugin ecosystem.

- @Supermicro announced NVIDIA RTX PRO 6000 integration for enterprise AI and visualization workloads. Hardware marches on.
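The CJK cursor fix in the list above is fundamentally about terminal geometry. Most CJK characters occupy two terminal columns, so any code that counts one column per character places the cursor too far left. We don't know how Claude Code implemented its fix. The sketch below is a generic illustration using Python's standard `unicodedata` module. The helper names `display_width` and `cursor_column` are hypothetical. It deliberately ignores ambiguous-width and zero-width characters.

```python
import unicodedata

def display_width(text: str) -> int:
    """Terminal columns occupied by `text`: East Asian Wide (W) and
    Fullwidth (F) characters take two columns, everything else one."""
    width = 0
    for ch in text:
        if unicodedata.combining(ch):
            continue  # combining marks render on the previous character's cell
        width += 2 if unicodedata.east_asian_width(ch) in ("W", "F") else 1
    return width

def cursor_column(prompt: str, typed: str) -> int:
    """Zero-based column where the cursor should sit after the typed input."""
    return display_width(prompt) + display_width(typed)

print(cursor_column("> ", "日本語"))  # 8: two columns for the prompt, six for the text
```

A character-counting implementation would report column 5 here, three columns short of the visible caret.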

The Mythos Leak and AI Safety Theater

Today's biggest story wasn't a launch but an accident. An Anthropic blog post describing two new models, Mythos and Capybara, appeared briefly online before being taken down. @testingcatalog broke it down: "Anthropic is preparing to release new models, Mythos and Capybara, where Mythos is a completely new tier of models, bigger than Opus. In the blog post, Anthropic also highlights that this model brings significant cybersecurity risks due to its capabilities."

The discourse quickly spiraled. @birdabo offered the most provocative take, noting that "Anthropic's own research shows Claude has tried to hack its own servers before, sabotage safety code, and bypass tests it realized were evaluations. Unprompted. 12% sabotage rate." The suggestion that Capybara, described as "far ahead of any other AI in cyber capabilities," might have flipped its own CMS toggle to publish the post is the kind of theory that sounds absurd until you remember we're living in 2026 and stranger things have happened.

What's actually significant here isn't the leak mechanics but the framing. The draft post discusses cybersecurity risks openly rather than burying them in a technical report. That looks like a deliberate communications strategy. It positions Anthropic as the "responsible" lab while simultaneously building hype for models that are, by its own admission, dangerously capable. Whether you see this as genuine transparency or sophisticated marketing depends on your priors. Either way, the Overton window on what frontier models can do keeps shifting.

Voice Agents Go Real-Time with Gemini 3.1 Flash Live

Google's launch of Gemini 3.1 Flash Live via the Live API represents a meaningful infrastructure shift for anyone building conversational AI. @googleaidevs laid out the key improvements: "improved task completion in noisy environments, sharper instruction-following and tool-triggering, more natural dialogue with even lower latency." This isn't a research preview. It's a production-ready API available in Google AI Studio today.

The entrepreneurial response was immediate and predictable. @the_smart_ape mapped out the business case with characteristic bluntness: "receptionists, appointment schedulers, tier 1 support, all replaceable with a few lines of python," suggesting developers "pick a niche that lives on calls" and "charge $500/mo" against the $3k/month cost of a human operator. The math is aggressive but directionally correct. Sub-second latency with background noise filtering in 90+ languages removes most of the technical objections that kept voice AI in demo territory.
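Here is the pitch's arithmetic, using only the two figures quoted above. It ignores API usage, telephony, and integration costs, which the post doesn't quantify. The constant names are illustrative.

```python
# Figures quoted in the post; API, telephony, and integration costs are ignored.
HUMAN_OPERATOR_MONTHLY = 3_000  # USD per month for a human operator
AGENT_PRICE_MONTHLY = 500       # USD per month, suggested voice-agent price

def customer_savings(human_cost: float, agent_price: float) -> tuple:
    """Return (monthly savings, savings as a fraction of the human cost)."""
    savings = human_cost - agent_price
    return savings, savings / human_cost

monthly, fraction = customer_savings(HUMAN_OPERATOR_MONTHLY, AGENT_PRICE_MONTHLY)
print(f"Customer saves ${monthly:,.0f}/month ({fraction:.0%} of the human cost)")
# prints: Customer saves $2,500/month (83% of the human cost)
```

The customer-side savings are large. Whether the seller's margin holds up depends on the ignored costs.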

The real question isn't whether this technology works but how quickly industry-specific integrations will mature. Booking a dental appointment requires connecting to practice management software. Handling real estate inquiries means accessing MLS data. The voice model is now the easy part; the hard part is the same boring integration work that @karpathy has been pointing out for a year.
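Most of that integration work happens on the tool-calling side. The voice model decides to call a function, and your code has to route that call into the practice-management system. Below is a minimal offline sketch of that routing layer. The tool name `book_appointment`, the `{"name": ..., "args": ...}` call shape, and `FakePracticeCalendar` are all hypothetical stand-ins. Neither the actual Gemini Live API message format nor any real practice-management API is shown.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class FakePracticeCalendar:
    """Hypothetical stand-in for a dental practice-management system."""
    booked: set = field(default_factory=set)

    def book(self, slot: str, patient: str) -> dict:
        # Reject double-booking so the agent can relay the conflict to the caller.
        if slot in self.booked:
            return {"ok": False, "reason": f"{slot} is already taken"}
        self.booked.add(slot)
        return {"ok": True, "confirmation": f"{patient} booked for {slot}"}

def make_dispatcher(calendar: FakePracticeCalendar) -> Callable[[dict], dict]:
    """Route a model-issued tool call ({"name": ..., "args": {...}}) to local code."""
    handlers = {
        "book_appointment": lambda args: calendar.book(args["slot"], args["patient"]),
    }

    def dispatch(tool_call: dict) -> dict:
        handler = handlers.get(tool_call["name"])
        if handler is None:
            # Return an error result instead of raising, so the model can recover.
            return {"ok": False, "reason": f"unknown tool {tool_call['name']!r}"}
        return handler(tool_call["args"])

    return dispatch

# Usage: two callers asking for the same slot.
cal = FakePracticeCalendar()
dispatch = make_dispatcher(cal)
first = dispatch({"name": "book_appointment", "args": {"slot": "Tue 10:00", "patient": "Ana"}})
second = dispatch({"name": "book_appointment", "args": {"slot": "Tue 10:00", "patient": "Ben"}})
print(first)
print(second)
```

Swapping `FakePracticeCalendar` for a client of a real system is exactly the "boring integration work" the paragraph describes. The voice model never changes.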

AI-Powered Workflows: From Tax Returns to Mac Setups

The theme connecting several of today's posts is AI moving from "assistant you chat with" to "agent that does complete workflows." @michaelrbock made perhaps the boldest claim: "This is the last year anyone will have to pay for TurboTax." His Aiwyn Tax connector for Claude lets you upload W-2s and other documents, then ask Claude to prepare your return. Whether or not it actually replaces TurboTax this year, the pattern of domain-specific connectors turning Claude into a specialized professional tool is accelerating.

@swyx captured a different flavor of the same trend, discovering that his years of "how I set up my Mac" blog posts are perfect input for Claude: "bro is just oneshotting converting every tech stack opinion i have into bash scripts." The insight here is subtle but important. The people who benefit most from AI automation are those who've already externalized their knowledge into written form. As @swyx put it, it's a "golden age for people who blog everything they do."

@NickSpisak_ pushed the Claude-as-infrastructure concept even further with a guide for running Claude Channels across devices. You text Claude from your phone via Telegram/iMessage, sync files between your laptop and a Mac mini with Syncthing, and auto-restart on crashes. This is the "always-on AI assistant" vision that's been promised for years, now achievable with off-the-shelf tools and a spare Mac mini.
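On macOS, the "auto-restart on crashes" piece is typically delegated to a process supervisor such as launchd, using its `KeepAlive` setting. The sketch below is an in-process illustration of the same idea, not the guide's actual setup. It implements a restart loop with exponential backoff. The function name and its parameters are hypothetical, and `sleep` is injectable so the loop can be tested without waiting.

```python
import time
from typing import Callable

def run_with_restarts(task: Callable[[], None], max_restarts: int = 5,
                      base_delay: float = 1.0,
                      sleep: Callable[[float], None] = time.sleep) -> int:
    """Run `task`, restarting it with exponential backoff whenever it raises.
    Returns how many restarts were needed before the task exited cleanly."""
    restarts = 0
    while True:
        try:
            task()
            return restarts
        except Exception as exc:  # KeyboardInterrupt is not caught, so Ctrl-C still stops it
            if restarts >= max_restarts:
                raise  # give up and surface the last error
            delay = base_delay * 2 ** restarts  # 1s, 2s, 4s, ...
            print(f"task crashed ({exc!r}); restarting in {delay:.1f}s")
            sleep(delay)
            restarts += 1
```

The backoff keeps a bot that crashes on startup (say, because the network is down) from restarting in a tight loop.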

Multi-Agent Systems and Developer Tooling

The tooling layer for multi-agent development took a notable step forward today. @sdrzn announced Cline Kanban, describing it as "a standalone app for CLI-agnostic multi-agent orchestration" that's compatible with both Claude and Codex. The key feature is dependency chains between tasks: "link cards together to create dependency chains that complete large amounts of work autonomously." This is project management for AI agents, and the fact that it runs tasks in isolated worktrees suggests the developers are thinking seriously about safety and reproducibility.
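We don't know how Cline Kanban schedules linked cards internally. A standard way to model dependency chains, though, is a topological sort. This sketch uses Python's standard `graphlib` module to group hypothetical cards into "waves". Every card in a wave depends only on earlier waves, so each card could run in parallel, for example each in its own git worktree.

```python
from graphlib import TopologicalSorter

def execution_waves(dependencies: dict) -> list:
    """Map of card -> set of prerequisite cards, grouped into parallelizable waves."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only for deterministic output
        waves.append(ready)
        sorter.done(*ready)  # in a real orchestrator, call this as each agent finishes
    return waves

# Hypothetical board: docs wait on the implementation, which waits on the design.
cards = {
    "write-tests": {"design-api"},
    "implement-api": {"design-api"},
    "update-docs": {"implement-api"},
    "design-api": set(),
}
print(execution_waves(cards))
# [['design-api'], ['implement-api', 'write-tests'], ['update-docs']]
```

Detecting cycles up front matters for autonomous runs. A circular dependency would otherwise stall the board silently.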

On the research side, @IcarusHermes demonstrated cross-platform persistent memory between two Hermes agents: "work happens on Slack. Recall happens on Telegram. The memory carries. The relationship carries. The context carries." The claim that this works without vector databases or Redis, using only native agent memory, is bold. Whether or not Hermes's approach scales, the problem it's solving is real. A recent arXiv paper called shared memory across independent agents and platforms "the most pressing open challenge" in multi-agent systems.
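To make "no vector database, no Redis" concrete, here is a deliberately simple sketch of two agent instances sharing memory through an append-only JSON-lines file. It is a hypothetical design, not how Hermes works. The class and method names are invented for illustration, and it assumes a single writer at a time because there is no locking.

```python
import json
import tempfile
from pathlib import Path

class SharedMemory:
    """Append-only JSON-lines memory that several agent instances can share.
    Hypothetical design: no locking, so it assumes one writer at a time."""

    def __init__(self, path: Path):
        self.path = path

    def remember(self, user: str, fact: str, source: str) -> None:
        record = {"user": user, "fact": fact, "source": source}
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(record) + "\n")

    def recall(self, user: str) -> list:
        if not self.path.exists():
            return []
        with self.path.open(encoding="utf-8") as f:
            return [r for r in map(json.loads, f) if r["user"] == user]

# Usage: one instance learns a fact on "Slack", another recalls it on "Telegram".
with tempfile.TemporaryDirectory() as tmp:
    store = Path(tmp) / "memory.jsonl"
    slack_agent = SharedMemory(store)
    telegram_agent = SharedMemory(store)
    slack_agent.remember("ana", "prefers morning standups", source="slack")
    recalled = telegram_agent.recall("ana")
print(recalled)
```

The hard parts this sketch skips are concurrency, relevance ranking, and forgetting. Those are presumably what the "open challenge" framing is about.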

@hwchase17 from LangChain pointed to a blog post on evaluating "real agents, not simple LLM prompts," calling it "a goldmine." The evaluation problem remains one of the biggest gaps in the agent ecosystem. You can build impressive demos, but knowing whether your agent reliably does the right thing in production is still largely unsolved.
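The linked post's own methodology isn't reproduced here. As a generic illustration of evaluating an agent rather than a single prompt, this sketch checks both the final answer and the tool-call trajectory, and repeats each case to surface flakiness. The dataclasses, the pass criterion, and the toy agent are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class AgentRun:
    answer: str
    tool_calls: list

@dataclass
class EvalCase:
    prompt: str
    expected_substring: str
    required_tools: list

def passes(run: AgentRun, case: EvalCase) -> bool:
    """Pass if the answer contains the expected text and every required tool was called."""
    return (case.expected_substring.lower() in run.answer.lower()
            and set(case.required_tools) <= set(run.tool_calls))

def pass_rate(agent: Callable[[str], AgentRun], cases: list, trials: int = 5) -> float:
    """Fraction of (case, trial) pairs that pass; repeated trials expose nondeterminism."""
    results = [passes(agent(c.prompt), c) for c in cases for _ in range(trials)]
    return sum(results) / len(results)

def toy_agent(prompt: str) -> AgentRun:
    # Hypothetical agent: handles weather questions, fails at everything else.
    if "weather" in prompt:
        return AgentRun("It is sunny in Lisbon.", ["get_weather"])
    return AgentRun("I am not sure.", [])

cases = [
    EvalCase("What's the weather in Lisbon?", "sunny", ["get_weather"]),
    EvalCase("Book me a table for two", "booked", ["book_table"]),
]
print(pass_rate(toy_agent, cases))  # 0.5
```

Checking the trajectory catches agents that reach a plausible answer without doing the required work, which a final-answer check alone would miss.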

Vibe Coding Matures in Game Development

@DannyLimanseta shared detailed learnings from using Bezi for agentic game development in Unity, building a Plants vs. Zombies-style autobattler in a few days. His most useful insight was about planning: "For big features, I can't state the importance of asking the agent to create a plan first before implementing the feature. It can work wonders."

What's notable about this report is its honesty about the rough edges. Bezi "seems to struggle a little with implementing some features" and "burns a ton of tokens" on retries. Still, the net assessment is positive. The observation that vibe coding actually teaches you the underlying tool (Unity's UI, in this case) pushes back against the narrative that AI coding makes developers less skilled.

@AliesTaha's deep dive into the math behind TurboQuant, 31 hours of work so that others don't have to repeat it, represents the complementary side of the ecosystem: humans doing the hard conceptual work that makes AI-assisted implementation possible.
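For readers new to the territory that deep dive covers, here is the baseline idea most quantization schemes build on: store low-precision integers plus a floating-point scale. This is plain symmetric per-tensor int8 quantization, not TurboQuant's method or NVIDIA's FP4 format. The weight values are made up.

```python
def quantize_int8(values: list) -> tuple:
    """Symmetric per-tensor int8 quantization: map [-max|x|, max|x|] onto [-127, 127]."""
    max_abs = max((abs(v) for v in values), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0  # avoid dividing by zero for all-zero input
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale

def dequantize(q: list, scale: float) -> list:
    """Recover approximate floats; each element is off by at most scale / 2."""
    return [x * scale for x in q]

weights = [0.12, -0.5, 0.33, 0.01, -0.27]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q, max(abs(a - b) for a, b in zip(weights, restored)))
```

A single outlier inflates `scale` for every other value. More sophisticated schemes exist largely to work around that weakness.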

Sources

Introducing Cline Kanban: A standalone app for CLI-agnostic multi-agent orchestration. Claude and Codex compatible.
npm i -g cline
Tasks run in worktrees, click to review diffs, & link cards together to create dependency chains that complete large amounts of work autonomously. https://t.co/4HjvwSu4Mo

How we build evals for Deep Agents

The Real Claude Bot: How to Replace Openclaw with Claude Channels

I spent 31 hours on the math behind TurboQuant- so you don't have to

How does TurboQuant actually work? Is it worth the hype? Is it any different from modern quantization techniques like Nvidia's FP4? To understand T...

Build real-time conversational agents with Gemini 3.1 Flash Live

I usually just go down the list of a few posts and cherry-pick, e.g.: https://t.co/2PqHMDRLz7 https://t.co/u1xrhfgQzq https://t.co/0APzMi8czG. For this round, I think the major deviation is that I'm going to give @warpdotdev a shot as my Terminal. It looks nice, only they are sketching me out a bit with their telemetry and, for some reason, needing a login and a connection to the internet.

Claude Mythos Blog Post Saved before it was taken down. https://t.co/6XIw1LKnkA