LiteLLM Supply Chain Attack Exposes 97M Downloads as Google's TurboQuant Promises 6x Memory Compression

A major supply chain attack on LiteLLM's PyPI package dominated the conversation, with the compromise only caught because the attacker's malware was buggy enough to crash machines. Meanwhile, Google's TurboQuant algorithm for KV cache compression drew widespread excitement, and Cloudflare's Dynamic Workers launched as a new primitive for AI agent sandboxing.

Daily Wrap-Up

The biggest story today isn't a product launch or a new model. It's a security catastrophe that was only averted because an attacker got sloppy. LiteLLM, a Python package with 97 million monthly downloads that serves as a universal proxy for AI API keys, was compromised through a cascading supply chain attack. The poisoned version ran malware on install, no import required, harvesting SSH keys, cloud credentials, Kubernetes secrets, and anything else it could find. The attacker group TeamPCP first compromised Trivy, a security scanning tool, then used stolen credentials to work their way through GitHub Actions, Docker Hub, npm, and Open VSX. The only reason this didn't become a multi-week silent exfiltration across thousands of production environments is that the malware was so poorly written it consumed all available RAM and crashed a developer's machine. That developer happened to be running a Cursor MCP plugin that pulled in LiteLLM as a transitive dependency they didn't even know they had. It's the kind of story that makes you reconsider every pip install you've ever run.

On the more optimistic side of the ledger, Google published TurboQuant, a KV cache compression algorithm that achieves 6x memory reduction with zero accuracy loss across every benchmark tested. This is the kind of infrastructure-level improvement that quietly changes what's economically viable. If the same GPU can handle six times more concurrent conversations, the cost curve for AI inference shifts dramatically. Multiple developers were already testing it on local models by end of day, and an MLX implementation showed promising results on Qwen3.5. Cloudflare also made a play for the agent infrastructure layer with Dynamic Workers, using V8 isolates instead of containers to give AI-generated code a sandbox that boots in milliseconds instead of hundreds of milliseconds. Between the security wake-up call and two meaningful infrastructure advances, today felt like the ecosystem maturing in real time, sometimes painfully.

The most entertaining moment was easily the revelation that the supply chain attack was caught by a RAM spike. Somewhere, a security engineer is writing a postmortem that essentially says "we were saved by bad vibes and worse code." The most practical takeaway for developers: audit your transitive dependencies today. Run pip show or npm ls on your AI projects and actually look at what's installed. If you're using LiteLLM, verify you're on a clean version. And if you're building anything that touches credentials, consider whether you actually need that dependency or whether you can, as Karpathy suggested, just "yoink" the functionality you need with an LLM.

Quick Hits

- @staysaasy shared @rodriscoll's framework for categorizing AI layoffs into five buckets: excuse cover, growth decline, capex reallocation, genuine productivity gains, and workforce skill mismatch. A useful lens for cutting through corporate spin.

- @jpschroeder pointed to the .agent TLD initiative, with Brave among the early registrants. Community-owned domain management instead of single-company control.

- @MatthewBerman flagged self-improving AI developments that he thinks aren't getting enough attention.

- @elonmusk posted an Optimus robot video. No additional context provided, as is tradition.

- @bl888m shared a greentext-style narrative about going from faking AI knowledge to actually understanding it via Stanford CS221. Relatable content for the "transformers and embeddings" era.

- @EOEboh recommended following @ashoKumar89 for backend engineering content.

- @cgtwts shared a reaction video about Claude users discovering Karpathy's autoresearch method.

- @mikeyobrienv highlighted Anthropic's writing on harness design for long-running agent apps, noting overlap with ralph-orchestrator's architecture.

Supply Chain Security: The LiteLLM Compromise

Today's most consequential story is a security incident that reads like a thriller. A threat group called TeamPCP executed a cascading supply chain attack that started with Trivy, a security scanning tool, and ended with a poisoned version of LiteLLM on PyPI. The attack was sophisticated in strategy but crude in execution, and that crudeness is the only thing that saved thousands of organizations.

@karpathy laid out the technical severity: "Simple pip install litellm was enough to exfiltrate SSH keys, AWS/GCP/Azure creds, Kubernetes configs, git credentials, env vars (all your API keys), shell history, crypto wallets, SSL private keys, CI/CD secrets, database passwords." The malware ran automatically on package installation through a .pth file that Python executes on startup. No explicit import needed.

@aakashgupta traced the full attack chain, noting that "the attacker vibe coded it... the malware was so sloppy it crashed computers... used so much RAM a developer noticed their machine dying and investigated. They found LiteLLM had been pulled in through a Cursor MCP plugin they didn't even know they had." The contagion spread beyond direct installs: any package depending on LiteLLM, including DSPy, would pull in the compromised version. @mathdroid added that OpenClaw also uses LiteLLM, widening the blast radius further.

The timing is notable. On the same day this attack surfaced, @mslipper announced the open-source release of iron-sensor, an eBPF-based behavioral monitor specifically designed to watch what AI coding agents do on your system. "Agents act like you: they read SSH keys, write cron jobs, modify systemd units, and escalate privileges. Most of the time this helps you code. Some of the time it installs a backdoor. By default, you can't tell the difference." They stress-tested it with an OpenClaw instance that installed 223 random skills with zero human review, generating 16,000+ events. The tool records everything at the kernel level so you can actually audit what happened. Given today's events, the timing couldn't be more relevant. Karpathy's conclusion resonates: dependencies need to be re-evaluated, and LLMs might be the tool that lets us "yoink" functionality instead of trusting an ever-deeper tree of packages we've never audited.

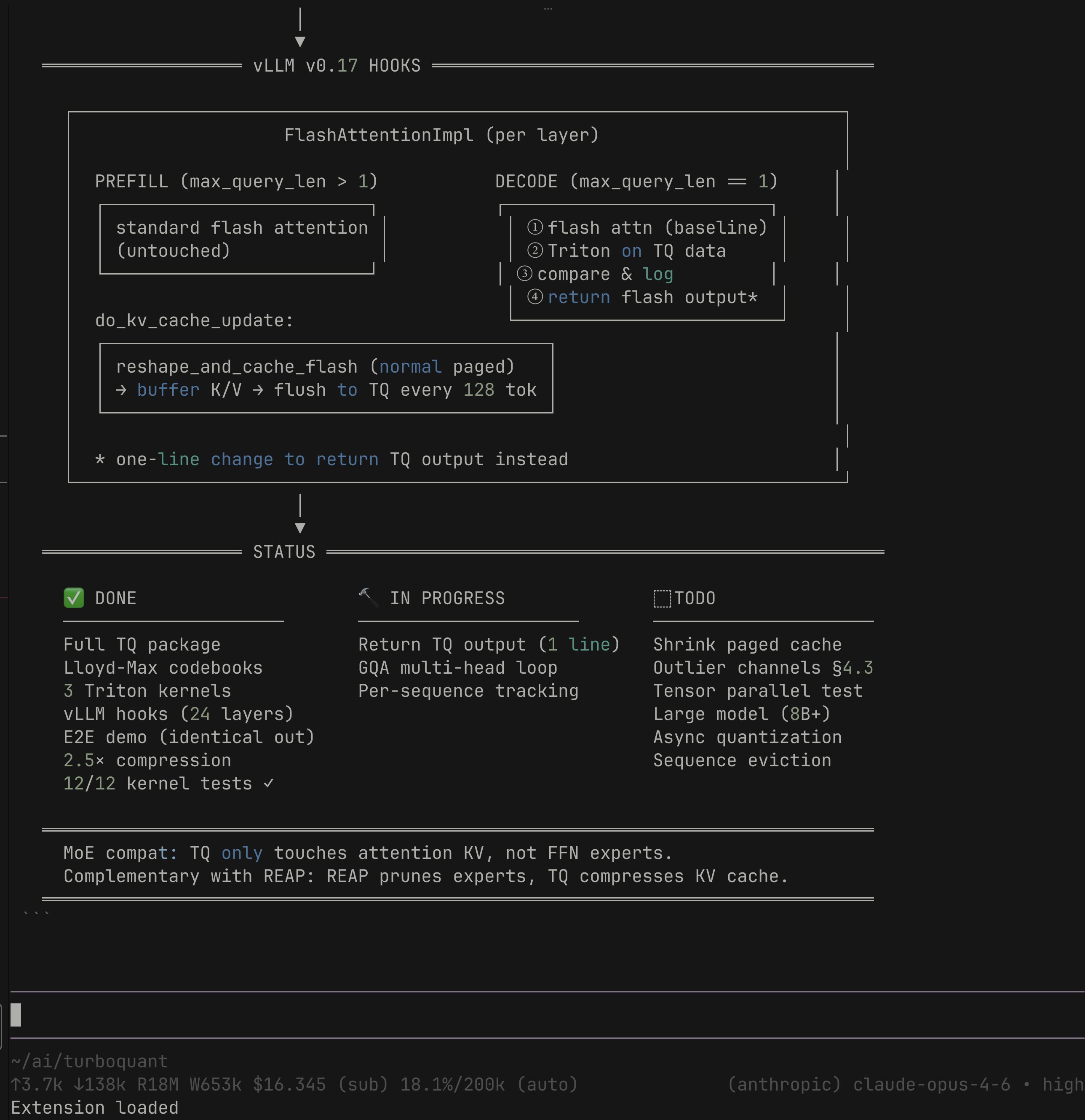

Google's TurboQuant: 6x KV Cache Compression

The KV cache is the single largest memory bottleneck in LLM inference. Every conversation you have with a chatbot maintains this cache so the model doesn't re-process the entire context from scratch. On a 70B parameter model with a long conversation, that cache alone can consume 40GB of GPU memory. Google's TurboQuant algorithm compresses it by 6x, down to 3 bits per value, with what they claim is zero accuracy loss. No retraining, no fine-tuning, drop-in replacement.

@AnishA_Moonka broke down the economics: "AI inference now makes up 55% of all AI compute spending. Hyperscalers are pouring nearly $700 billion into AI infrastructure in 2026. The KV cache is the single biggest memory bottleneck in that stack." The algorithm works by randomly rotating data to simplify its structure, applying compression, then adding a 1-bit error correction step. On H100 GPUs it delivers up to 8x speedup over uncompressed computation, and it scored perfectly on needle-in-a-haystack benchmarks across Llama, Gemma, and Mistral models.

The community response was immediate and practical. @badlogicgames retweeted an MLX implementation already showing results on Qwen3.5-35B, while @0xSero announced plans to test it on Qwen3.5 with the hope of running much larger models locally. @AlexFinn captured the enthusiasm, arguing this means "that 16gb Mac Mini now can run INCREDIBLE AI models. Completely locally, free, and secure." That's probably overstating things, but the direction is real. When inference memory drops 6x, models that required enterprise hardware move within reach of consumer devices. Being presented at ICLR 2026, TurboQuant also outperforms existing methods for vector search, which has implications well beyond chatbots and into the core of how search engines work.

Cloudflare Dynamic Workers and Agent Infrastructure

Cloudflare launched Dynamic Workers this week, and the AI agent community took notice. The core pitch is simple: AI agents generate code that needs to run somewhere safe, containers take 100-500ms to boot and use hundreds of megabytes of RAM, and V8 isolates solve both problems by booting in 1-5ms with a few megabytes of memory.

@TipsCsharp highlighted the key technical differentiator: "TypeScript API definitions replace OpenAPI specs. Fewer tokens, cleaner code, type-safe RPC across the sandbox boundary." The "Code Mode" approach, where an LLM writes TypeScript that runs in an isolate and calls typed APIs, reportedly uses 81% fewer tokens than sequential tool calls. At $0.002 per Worker loaded per day (free during beta), the pricing targets high-volume agent deployments. @dinasaur_404 from Cloudflare demoed the developer experience, emphasizing simplicity.

The broader agent infrastructure conversation extended beyond Cloudflare. @gakonst described their internal agent setup: a Postgres coordinator, Docker containers with piped stdin/stdout, 150+ API integrations, and a firewall that injects secrets on the fly rather than storing them in containers. This architecture, where the agent's sandbox is deliberately isolated from credentials, feels prescient given the LiteLLM attack. @zachlloydtweets shared a build-vs-buy analysis for deploying coding agents at scale, reflecting what appears to be a growing consensus among engineering leaders that cloud coding agents should be first-line contributors this year.

AI-Powered Development: From Games to Trading Bots

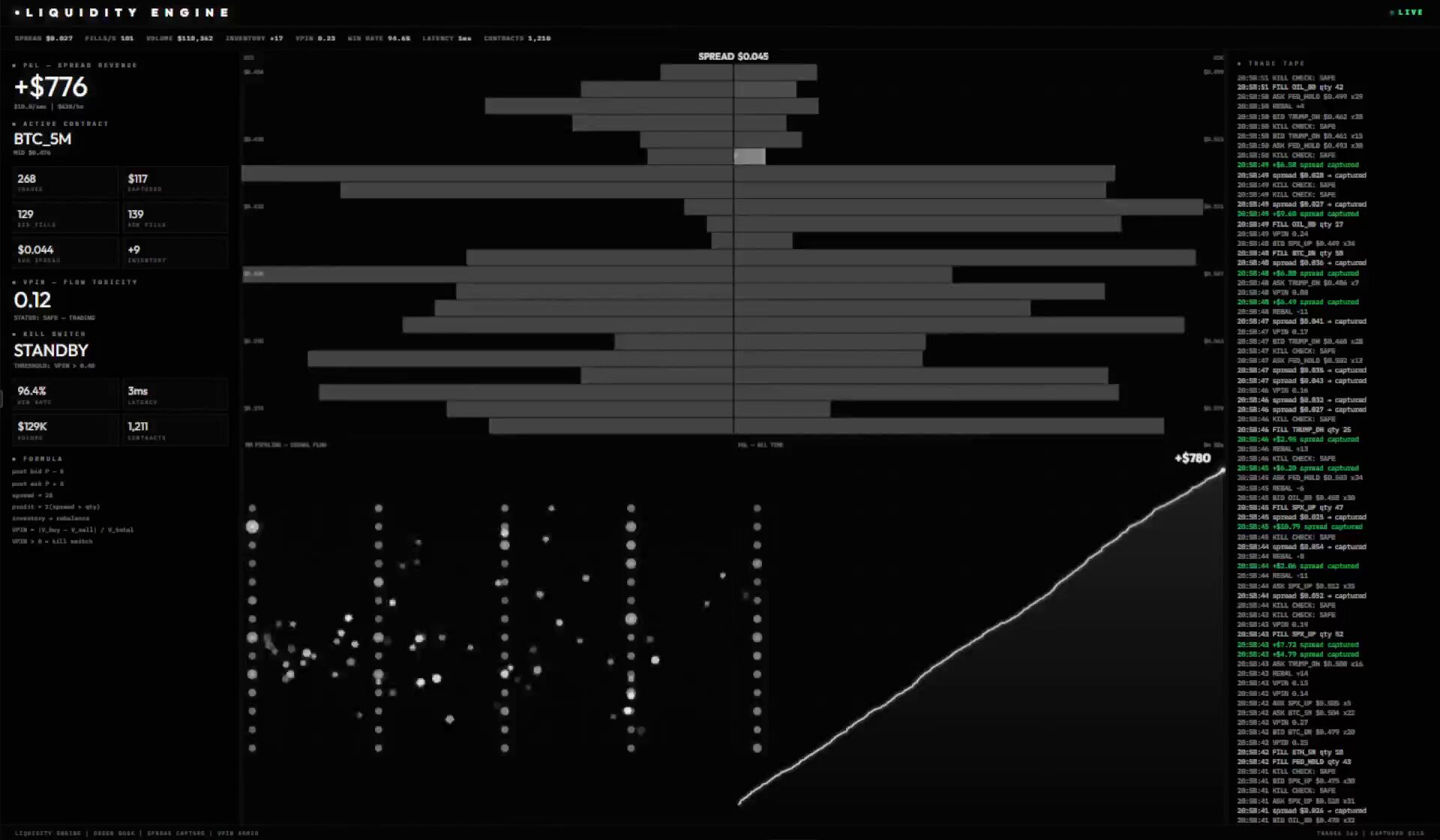

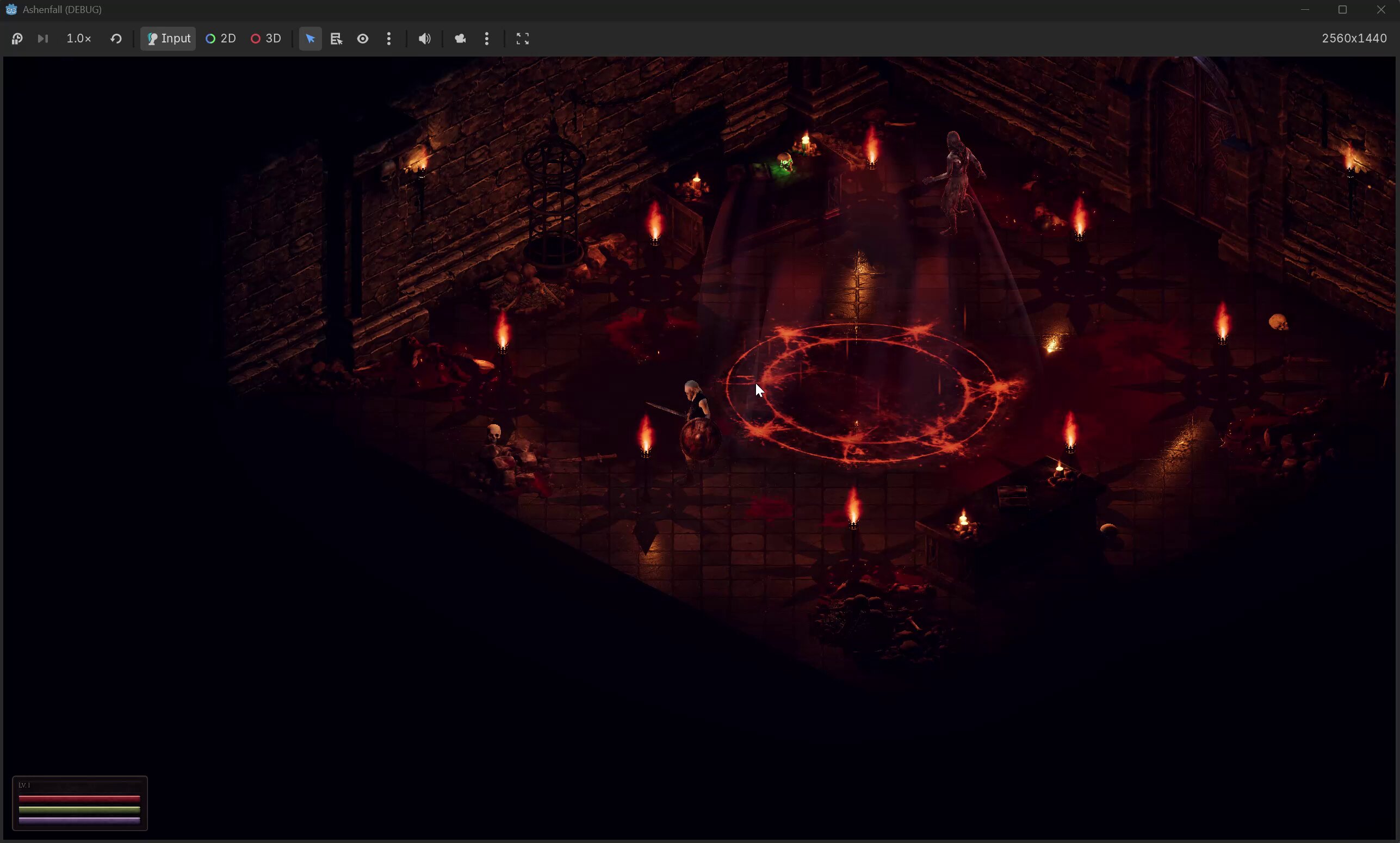

The range of what people are building with AI coding tools keeps expanding. @heygeorgekal showed off an ARPG being developed entirely with AI: models generated by Meshy, every line of code written by Claude Code, running in Godot with MCP integration. "Now working on souls-like combat: 3-hit combos, dodge rolls, stamina, hitstop." It's a concrete demonstration of AI tools reaching into game development, a domain that historically required deep specialized knowledge.

@hanakoxbt shared a more provocative example: an AI-built market-making bot running on Polymarket that executed 393 trades overnight for $904 in spread revenue. "I didn't write a single line of code. Fed it one article about how quant bots extract money on Polymarket. It built the whole thing." The bot includes a VPIN flow toxicity detector as a kill switch and automatic inventory rebalancing. Whether the numbers hold up long-term is another question, but the barrier to entry for algorithmic trading strategies has clearly collapsed.

@doodlestein offered a practical "life hack" for agent-assisted coding: point your coding agent at the entire project, then ask it to find every hardcoded constant that should be dynamic, every TODO comment, and every "will" or "would" in comments indicating unfinished work. "I was truly horrified by how much stuff this turned up." It's a reminder that AI coding tools are powerful not just for generating new code but for auditing the shortcuts we've already taken. @threepointone's essay on "dynamic workers" and the implications of every user having a coding buddy rounds out the theme: we're moving toward a world where the boundary between using software and programming software is dissolving.

Sources

code mode: let the code do the talking (aka, after w/i/m/p) wherein I ponder the implications of every user having a little coding buddy, and every "app" being directly programmable on demand. https://t.co/i62HuWsjKg lmk what you think. https://t.co/86fiigZedY

We’re introducing Dynamic Workers, which allow you to execute AI-generated code in secure, lightweight isolates. This approach is 100 times faster than traditional containers. https://t.co/c36Vkb7I0R

The Complete Stanford CS221: Everything AI Actually Is, Explained (Part II)

LiteLLM HAS BEEN COMPROMISED, DO NOT UPDATE. We just discovered that LiteLLM pypi release 1.82.8. It has been compromised, it contains litellm_init.pth with base64 encoded instructions to send all the credentials it can find to remote server + self-replicate. link below

Build vs buy: how to deploy coding agents at scale

The consensus amongst engineering leaders I talk to is that they want to deploy cloud coding agents to automate development this year. Their goal is f...

Brave just registered a .agent domain! We support the effort to have the .agent top-level domain managed by a community, instead of being owned by one company. Join the community and pre-register your domain here: https://t.co/3Cnyae5EHm

AI has become the justification for every layoff. It's the perfect excuse card, but there is a lot of spin involved. Every layoff is some combo of the following five very different AI stories. 1. Nothing changed, we just realized we have too many people. We are going to blame AI, but we are bullshitting. This is the AI as an excuse; it was really sloppy hiring, and we are just blaming AI. (See Block) 2. Growth has gone away so now we have too many people. This may be because of AI if you are a SaaS company. All the customer love is now going to AI. But it's less AI as a productivity lift, and more about you just building a less ambitious growth company. (See Salesforce and most every SaaS company) 3. We spent our money on capex to build AI so now we can’t afford as many people. Management may say it’s about AI making us productive (4 below) but my gut is a lot of it is about Nvidia getting our money so now there is none for you. (See Meta and Oracle) 4 We are really using AI the way god intended us to. We don't need as many people. This is the ONLY version of the story that is actually about a productivity increase. It's real, it's happening, but I wonder if it is even the majority of the layoffs. (See some software engineering departments right now) @jasonlk raised a fifth reason that doesn't get talked about enough: we just have the wrong people. Maybe we don't need 20 engineers who all know C++, but rather eight who have strong AI skills. This I think should be happening everywhere. Every time a layoff announcement comes out, I try and mentally categorize per the above.

https://t.co/CNyhlvUUNd

lots of folks running expensive sandboxes but really all you need is a filesystem but really you don't even need a filesystem, you just need a filesystem API that frontends something like a database (often you care a lot about ACID compliance or indexability etc; @jeffreyhuber talks about this) you can do this with FUSE and lots of people are building really cool things this way but really you don't even need a filesystem API, because agents don't see the POSIX APIs they just see tokens in and tokens so really all you need is something that looks like a file system but frontends whatever you want - S3, Postgres, Chroma, durable streams, whatever coding agents are great "everything agents" but the problem is that you use an off-the-shelf harness it marries you to the filesystem so you get stuck with FUSE or NFS hacks OR you have to inject a bunch of extra MCP tools that are parallel to the 'real' fs tools but if you own the harness, you can own the control flow this lets you separate the tool INTERFACE from the tool EXECUTION. your tool can look like a normal FS read tool to the agent, but you can use whatever backend you want for the execution logic this unlocks lots of exciting things but it requires you build your own harness or use something more customizable

The largest manipulation in the benchmarking history uncovered

Cursor just released this article and ton of people started worshipping cursor like they just made a revolution in the file search. They showed a beau...

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: https://t.co/CDSQ8HpZoc https://t.co/9SJeMqCMlN

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: https://t.co/CDSQ8HpZoc https://t.co/9SJeMqCMlN

Software horror: litellm PyPI supply chain attack. Simple `pip install litellm` was enough to exfiltrate SSH keys, AWS/GCP/Azure creds, Kubernetes configs, git credentials, env vars (all your API keys), shell history, crypto wallets, SSL private keys, CI/CD secrets, database passwords. LiteLLM itself has 97 million downloads per month which is already terrible, but much worse, the contagion spreads to any project that depends on litellm. For example, if you did `pip install dspy` (which depended on litellm>=1.64.0), you'd also be pwnd. Same for any other large project that depended on litellm. Afaict the poisoned version was up for only less than ~1 hour. The attack had a bug which led to its discovery - Callum McMahon was using an MCP plugin inside Cursor that pulled in litellm as a transitive dependency. When litellm 1.82.8 installed, their machine ran out of RAM and crashed. So if the attacker didn't vibe code this attack it could have been undetected for many days or weeks. Supply chain attacks like this are basically the scariest thing imaginable in modern software. Every time you install any depedency you could be pulling in a poisoned package anywhere deep inside its entire depedency tree. This is especially risky with large projects that might have lots and lots of dependencies. The credentials that do get stolen in each attack can then be used to take over more accounts and compromise more packages. Classical software engineering would have you believe that dependencies are good (we're building pyramids from bricks), but imo this has to be re-evaluated, and it's why I've been so growingly averse to them, preferring to use LLMs to "yoink" functionality when it's simple enough and possible.

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: https://t.co/CDSQ8HpZoc https://t.co/9SJeMqCMlN

Hit 5M impressions 🚀 Still ~200 followers away from 500 verified. If you’ve been getting value from my posts on backend, systems, and real-world dev stuff follow along. Let’s grow together. https://t.co/V3Rz0vzlrR

How to 10x your Claude Skills (using Karpathy's autoresearch method)