Karpathy Declares the End of Manual Coding as Agent Memory Wars Heat Up

Andrej Karpathy's wide-ranging interview on the No Priors podcast dominated discussion, declaring he hasn't typed code since December and outlining a future where humans direct autonomous agents. Meanwhile, a fierce debate emerged around agent memory architectures, with Hermes and OpenClaw representing two fundamentally different philosophies. The community also grappled with agent code quality problems, with Factory AI and others proposing lint-driven development as a solution.

Daily Wrap-Up

The AI developer community spent March 21st digesting a sprawling Karpathy interview that touched on everything from "AI psychosis" (the anxiety of unused tokens) to the claim that software engineering has permanently shifted from writing code to expressing intent. Whether you buy the maximalist framing or not, the signal underneath is real: the conversation has moved decisively from "can agents code?" to "how do we manage agents that code?" The practical questions dominating the timeline weren't about model capabilities but about memory management, code quality guardrails, and orchestration patterns.

The memory architecture debate is particularly worth watching. Two competing philosophies are crystallizing: stuff everything into context (OpenClaw style) versus keep the prompt minimal and retrieve on demand (Hermes style). The tradeoffs are stark and measurable, with one user reporting 5-second versus 60-second response times. This isn't an abstract design choice; it's the kind of architectural decision that compounds over weeks of agent usage. Meanwhile, Karpathy himself admitted that agents ignore his AGENTS.md instructions and produce bloated code, which Factory AI used as a springboard to promote lint-driven development and codebase "agent readiness" as necessary prerequisites. The tension between agent autonomy and code quality is becoming the defining challenge of this era.

The most practical takeaway for developers: if you're running persistent coding agents, audit your memory architecture now. The difference between a lean retrieval-based approach and an ever-growing context window isn't just speed; it's the difference between an agent that stays coherent over weeks and one that degrades as it accumulates history. Start with Hermes-style minimal prompts and search-on-demand, and only expand your persistent context when you can prove the tradeoff is worth it.
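A little back-of-envelope arithmetic shows why the difference compounds. The sketch below compares two simplified strategies. In the first, every prior turn is resent in full. In the second, each prompt carries only a fixed core plus a few retrieved snippets. All token counts are hypothetical illustration values, not measurements, and real harnesses truncate, compact, and cache in ways this ignores.

```python
# Back-of-envelope comparison of prompt growth under two memory strategies.
# All token counts are hypothetical illustration values, not measurements.

def replay_prompt_tokens(turn: int, base: int, per_turn: int) -> int:
    """Prompt size at `turn` when every earlier turn is replayed in full."""
    return base + per_turn * turn


def lean_prompt_tokens(core: int, retrieved: int, message: int) -> int:
    """Prompt size when only a fixed core plus retrieved snippets is sent."""
    return core + retrieved + message


TURNS = 200
replay = [replay_prompt_tokens(t, base=1_300, per_turn=400) for t in range(TURNS)]
lean = [lean_prompt_tokens(core=1_300, retrieved=300, message=100) for _ in range(TURNS)]

print("last prompt:", replay[-1], "vs", lean[-1])   # grows linearly vs stays flat
print("total sent:", sum(replay), "vs", sum(lean))  # grows quadratically vs linearly
```

With these made-up numbers, the 200th prompt is 80,900 tokens against 1,700. The total sent over 200 turns is 8,220,000 tokens against 340,000.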

Quick Hits

- OpenAI's Codex for Students: @OpenAIDevs is offering $100 in Codex credits to U.S. and Canadian college students, with @gdb boosting the announcement. A smart acquisition play targeting the next generation of developers.

- Tenstorrent office visit: @TheAhmadOsman shared photos from a Tenstorrent office tour, highlighting the AI accelerator hardware company's work.

- Elon on Von Neumann probes: @elonmusk floated Optimus+PV as "the first Von Neumann probe," a self-replicating machine built from raw materials found in space. Filed under: ambitious timelines.

- Energy tech meets AI: @ashebytes posted a conversation with Danielle Fong covering the intersection of frontier energy technology, data center power demands, and agentic tooling at Lightcell.

- Cloudways Copilot: @Cloudways promoted AI-powered managed hosting, another signal that AI copilot features are becoming table stakes across infrastructure products.

- Browser Use CLI 2.0: @shawn_pana praised the new Browser Use CLI, saying he hasn't touched Chrome manually while working with Claude Code. @browser_use touts 2x the speed at half the cost by driving Chrome directly over the Chrome DevTools Protocol (CDP).

Agent Memory Architecture: The Great Divergence

The hottest technical debate of the day centered on how AI agents should handle memory, and two sharply different philosophies are now competing for developer mindshare. @witcheer provided a detailed firsthand comparison after running both OpenClaw and Hermes on the same Mac Mini, distilling the core difference to a single sentence: "OpenClaw stores everything and searches it. Hermes keeps almost nothing in the prompt and retrieves the rest on demand."

The Hermes approach uses four memory layers, but the key insight is restraint. Its core context is roughly 1,300 tokens of curated facts, with everything else living in a SQLite archive queried only when needed. As @witcheer put it: "Hermes would rather search for a fact at the cost of one tool call than stuff it into every single message and break the cache." The performance difference was dramatic in practice: "my OpenClaw bot was 2 months of accumulated memory, deep context, but every message replays more history. Hermes is 2 weeks old with a fraction of the memory, but it responds in 5 seconds vs 60."
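The split can be sketched as a two-tier store. A short list of curated core facts goes into every prompt, and a SQLite archive is queried only when the agent calls a search tool. This is not Hermes's actual code. It collapses the four layers @witcheer describes into two, and the class and method names (`TwoTierMemory`, `archive`, `search`, `build_prompt`) are hypothetical. A real system would likely use full-text or embedding search rather than a `LIKE` match.

```python
import sqlite3


class TwoTierMemory:
    """Hypothetical two-tier memory: a tiny always-in-prompt core plus a searchable archive."""

    def __init__(self, core_facts: list[str]) -> None:
        self.core_facts = list(core_facts)     # curated and small, sent with every message
        self.db = sqlite3.connect(":memory:")  # archive; a file path in a real deployment
        self.db.execute("CREATE TABLE archive (id INTEGER PRIMARY KEY, ts TEXT, body TEXT)")

    def archive(self, ts: str, body: str) -> None:
        """Store a fact in the archive instead of the prompt."""
        self.db.execute("INSERT INTO archive (ts, body) VALUES (?, ?)", (ts, body))
        self.db.commit()

    def search(self, term: str, limit: int = 3) -> list[str]:
        """The 'one tool call': case-insensitive (ASCII) substring match, newest first."""
        rows = self.db.execute(
            "SELECT body FROM archive WHERE body LIKE ? ORDER BY ts DESC LIMIT ?",
            (f"%{term}%", limit),
        ).fetchall()
        return [row[0] for row in rows]

    def build_prompt(self, user_message: str) -> str:
        """Only the core facts ride along with each message."""
        core = "\n".join(f"- {fact}" for fact in self.core_facts)
        return f"Known facts:\n{core}\n\nUser: {user_message}"


memory = TwoTierMemory(["User prefers Python", "Main repo is agent-notes"])
memory.archive("2024-01-05", "Staging server moved to a new host")
memory.archive("2024-01-09", "staging database upgraded")
print(memory.build_prompt("Where does staging run?"))
print(memory.search("staging"))  # fetched only when the agent decides it needs it
```

The archived facts never appear in the prompt itself. Each lookup costs one extra tool call, and in exchange every message stays small.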

This dovetails with @tricalt's piece on "Memory as a Harness: Turning Execution Into Learning," which argues that the missing layer in agent development is the system around the intelligence. The article's premise, that models alone cannot give us learning systems, aligns closely with the Hermes philosophy: memory isn't about accumulation, it's about structured retrieval. For developers building with persistent agents, the practical lesson is that cache-friendly, minimal prompts with on-demand retrieval tend to outperform brute-force context stuffing, and the gap widens as an agent's history grows. The question isn't whether your agent remembers everything; it's whether it knows how to find what it needs.
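The "break the cache" remark in @witcheer's quote concerns prefix reuse. Provider-side prompt caching generally pays off only when the start of the prompt is identical across requests. The rough sketch below uses the shared character prefix of two consecutive prompts as a stand-in for cached tokens. Real caches work on tokens or blocks and have provider-specific minimums. It compares two prompt layouts. In one, a memory block at the top changes between turns. In the other, a stable core comes first and only the tail varies. The layouts and names are hypothetical.

```python
import os

CORE = "System: you are a coding agent.\nFacts: user prefers Python.\n"


def shared_prefix_len(a: str, b: str) -> int:
    """Characters two prompts share from the start (a proxy for cacheable tokens)."""
    return len(os.path.commonprefix([a, b]))


def stuffed_prompt(memories: list[str], message: str) -> str:
    # Recalled memories sit at the top, so any change to them invalidates
    # the reusable prefix for everything after the change point.
    return "Memory: " + "; ".join(memories) + "\n" + CORE + "User: " + message


def lean_prompt(message: str) -> str:
    # Stable core first; only the tail varies between turns.
    return CORE + "User: " + message


s1 = stuffed_prompt(["login uses JWT"], "fix the login bug")
s2 = stuffed_prompt(["login uses JWT", "tests use pytest"], "now add tests")
l1 = lean_prompt("fix the login bug")
l2 = lean_prompt("now add tests")

print(shared_prefix_len(s1, s2), "of", len(s2))
print(shared_prefix_len(l1, l2), "of", len(l2))
```

In the stuffed layout, the reusable prefix stops inside the memory block, so the whole core after it is resent uncached. In the lean layout, the entire core is shared from one turn to the next.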

Karpathy's Vision: Directors, Not Doers

Andrej Karpathy's appearance on the No Priors podcast generated the day's longest discussion thread, with @kloss_xyz providing a detailed 10-point breakdown. The headline claim, "I don't think I've typed a line of code since December," set the tone for a conversation about the permanent restructuring of software engineering workflows.

Several of Karpathy's points landed with particular force. His concept of "AI psychosis," the anxiety of knowing you have unused tokens sitting idle, resonated as a genuine new cognitive phenomenon in the developer community. His observation that "the limits aren't model capability anymore, they're orchestration skill" reinforced what many practitioners are discovering independently. And his prediction about specialized model ecosystems ("a team of focused models beats one mega model every time") challenges the scaling-maximalist narrative that still dominates some corners of AI discourse.

But perhaps the most grounded insight was about jobs data. Karpathy looked at real employment numbers and concluded that engineering demand is still rising. He compared the effect of cheaper AI-assisted engineering to ATMs, which ended up creating more bank teller jobs by enabling more branch locations. The framing of humans as "directors, not doers" is provocative, but the economic data he cites suggests this is additive rather than a replacement. For developers feeling anxious about relevance, Karpathy's message is that orchestration skill is the new leverage point, and there's more demand for it than ever.

The Agent Code Quality Problem

While the industry celebrates agent productivity, a candid admission from Karpathy himself exposed the elephant in the room. In a post quoted by @alvinsng, Karpathy wrote: "I'm not very happy with the code quality and I think agents bloat abstractions, have poor code aesthetics, are very prone to copy pasting code blocks." He noted that agents consistently ignore his style instructions, creating complex one-liners that call multiple functions and index arrays in a single expression despite explicit guidance to do otherwise.
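To make the complaint concrete, here is the pattern Karpathy describes next to the style his AGENTS.md asks for. `parse_scores` and `rank` are hypothetical helpers, and both versions compute the same value.

```python
def parse_scores(raw: str) -> list[int]:
    return [int(part) for part in raw.split(",")]


def rank(scores: list[int]) -> list[int]:
    return sorted(scores, reverse=True)


raw = "70,95,88"

# The "multitask" style: one line calls two functions, then indexes the result.
top_multitask = rank(parse_scores(raw))[0]

# The requested style: one operation per line, intermediate names as documentation.
scores = parse_scores(raw)
ranked_scores = rank(scores)
top_score = ranked_scores[0]

print(top_multitask, top_score)
```

The second version is longer, but each line can be read, debugged, and reviewed on its own. That is the trade Karpathy's instruction asks for.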

@alvinsng and the @FactoryAI team positioned their work as a direct response to this problem, advocating for what they call "Lint Driven Development" (LDD): "Using Linters to direct agents... Agent Readiness (Fixing the codebase before letting agents roam wild)." Their thesis is that you need to prepare your codebase before unleashing agents, through banned patterns (like React's useEffect), linter rules that agents can follow more reliably than prose instructions, and context compression for long sessions. The approach treats the codebase itself as the instruction set rather than relying on markdown files that agents may or may not respect. It's a pragmatic admission that natural language constraints are insufficient for maintaining code quality at scale, and that mechanical enforcement through existing tooling is the path forward.
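The banned-pattern example in the source is React's `useEffect`, which would be enforced by a JavaScript/TypeScript linter. The sketch below transposes the idea to Python using the standard-library `ast` module, so its rules are illustrative assumptions rather than Factory's. It flags a hypothetical banned call (`eval`). It also flags the exact "call two functions, then index the result" shape from Karpathy's complaint. Each finding comes back as a line-numbered message an agent can act on.

```python
import ast

BANNED_CALLS = {"eval"}  # hypothetical project policy, analogous to banning useEffect


class AgentStyleChecker(ast.NodeVisitor):
    """Collects (line, message) pairs for patterns the project has ruled out."""

    def __init__(self) -> None:
        self.problems: list[tuple[int, str]] = []

    def visit_Call(self, node: ast.Call) -> None:
        if isinstance(node.func, ast.Name) and node.func.id in BANNED_CALLS:
            self.problems.append((node.lineno, f"banned call: {node.func.id}()"))
        self.generic_visit(node)

    def visit_Subscript(self, node: ast.Subscript) -> None:
        # Flags f(g(x))[i]: indexing the result of a call whose positional
        # arguments contain another call.
        value = node.value
        if isinstance(value, ast.Call) and any(
            isinstance(inner, ast.Call) for arg in value.args for inner in ast.walk(arg)
        ):
            self.problems.append(
                (node.lineno, "nested calls plus indexing on one line; use intermediate variables")
            )
        self.generic_visit(node)


def lint(source: str) -> list[tuple[int, str]]:
    checker = AgentStyleChecker()
    checker.visit(ast.parse(source))
    return checker.problems


sample = "top = rank(parse_scores(raw))[0]\nvalue = eval(expr)\nscores = parse_scores(raw)\n"
for line, message in lint(sample):
    print(f"line {line}: {message}")
```

This kind of check can be wired into a pre-commit hook or CI step, or into the agent hooks Karpathy mentions. The agent then gets a concrete, mechanical failure to fix instead of a prose guideline it may ignore.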

Agentic Methods and the Harness Debate

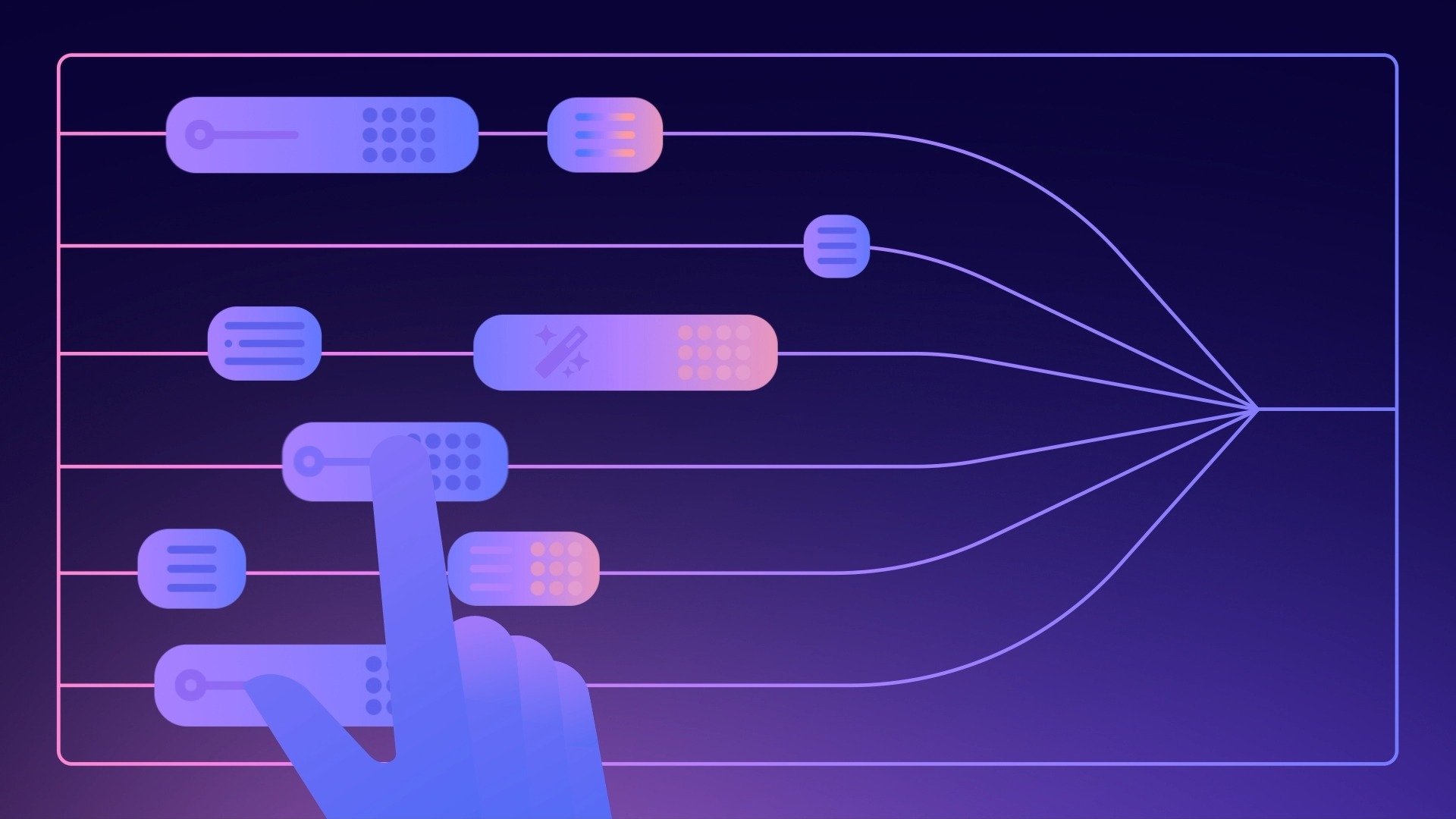

A cluster of posts explored the proliferating landscape of agent architectures and orchestration approaches. @neural_avb walked through how different agentic methods solve the same problem, from direct prompting through RAG, ReAct, CodeAct, subagents, and Recursive Language Models, providing a useful taxonomy for developers trying to navigate the options. Meanwhile, @joemccann sparked debate about open versus closed agent harnesses, arguing that open source will win despite Anthropic's "walled garden" approach with Claude Code.
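ReAct, one of the methods in that taxonomy, works as a loop. The model interleaves a reasoning step with a tool action, the harness executes the action and appends an observation, and the cycle repeats until the model answers. The minimal sketch below assumes a plain-text `Action: tool[arg]` convention (formats vary between harnesses) and uses a scripted stand-in instead of a real model. `react_loop`, `multiply`, and `scripted_model` are hypothetical names.

```python
import re
from typing import Callable

ACTION_RE = re.compile(r"Action: (\w+)\[(.*)\]")


def react_loop(
    model: Callable[[str], str],
    tools: dict[str, Callable[[str], str]],
    question: str,
    max_steps: int = 5,
) -> str:
    """Alternate model steps with tool calls until the model gives a final answer."""
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        step = model(transcript)  # a Thought plus either an Action or a Final Answer
        transcript += step + "\n"
        if "Final Answer:" in step:
            return step.split("Final Answer:", 1)[1].strip()
        match = ACTION_RE.search(step)
        if match is None:
            transcript += "Observation: no parsable action\n"
            continue
        name, arg = match.groups()
        tool = tools.get(name)
        observation = tool(arg) if tool else f"unknown tool {name!r}"
        transcript += f"Observation: {observation}\n"
    return "no answer within step budget"


def multiply(arg: str) -> str:
    left, right = arg.split("*")
    return str(int(left) * int(right))


def scripted_model(transcript: str) -> str:
    # Stand-in for an LLM: acts once, then answers from the latest observation.
    if "Observation:" not in transcript:
        return "Thought: I should use the calculator.\nAction: multiply[6*7]"
    observation = transcript.rsplit("Observation:", 1)[1].strip()
    return f"Thought: I have the result.\nFinal Answer: {observation}"


print(react_loop(scripted_model, {"multiply": multiply}, "What is 6 times 7?"))
```

Everything outside the `model` call is harness: parsing, tool dispatch, the step budget, and error observations. That is the layer the harness claims in this section are about.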

The quoted post from @_can1357 that catalyzed the harness discussion made a striking claim: "I improved 15 LLMs at coding in one afternoon. Only the harness changed." This frames the current competitive landscape not as a model race but as an orchestration race. @doodlestein reinforced this with his concept of "in-context recursive self-improvement," where learnings from agent sessions feed back into the skills agents use: "It doesn't take many iterations before you can start doing really extraordinary things." @mattshumer_ also weighed in with claims about a "simple setup change" that dramatically improves coding agent performance. Whether or not these individual claims hold up, the meta-pattern is consistent: the returns on better orchestration currently exceed the returns on better models.
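One way to picture @doodlestein's loop: after a session, distilled lessons about a custom CLI tool are appended to the skill document the agent reads next time. The sketch below makes three simplifying assumptions. The skill is a plain-text file whose lessons section comes last, deduplication uses exact string matches, and the step that extracts lessons from session logs is left out. `merge_lessons`, the heading text, and the `mytool` examples are all hypothetical.

```python
LESSONS_HEADING = "## Lessons from past sessions"  # hypothetical section name


def merge_lessons(skill_doc: str, lessons: list[str]) -> str:
    """Append new, non-duplicate lessons to the end of a skill document.

    Assumes the lessons section, if present, is the last section of the doc.
    """
    lines = skill_doc.rstrip("\n").splitlines()
    seen = {line[2:].strip() for line in lines if line.startswith("- ")}
    if LESSONS_HEADING not in lines:
        lines += ["", LESSONS_HEADING]
    for lesson in lessons:
        text = lesson.strip()
        if text and text not in seen:  # skip blanks and repeats
            lines.append(f"- {text}")
            seen.add(text)
    return "\n".join(lines) + "\n"


skill = "# mytool\nRun `mytool sync` before `mytool push`.\n"
updated = merge_lessons(skill, ["Check `mytool status` after a failed push", ""])
print(updated)
```

Merging the same lesson twice leaves the document unchanged. That matters when the loop runs after every session.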

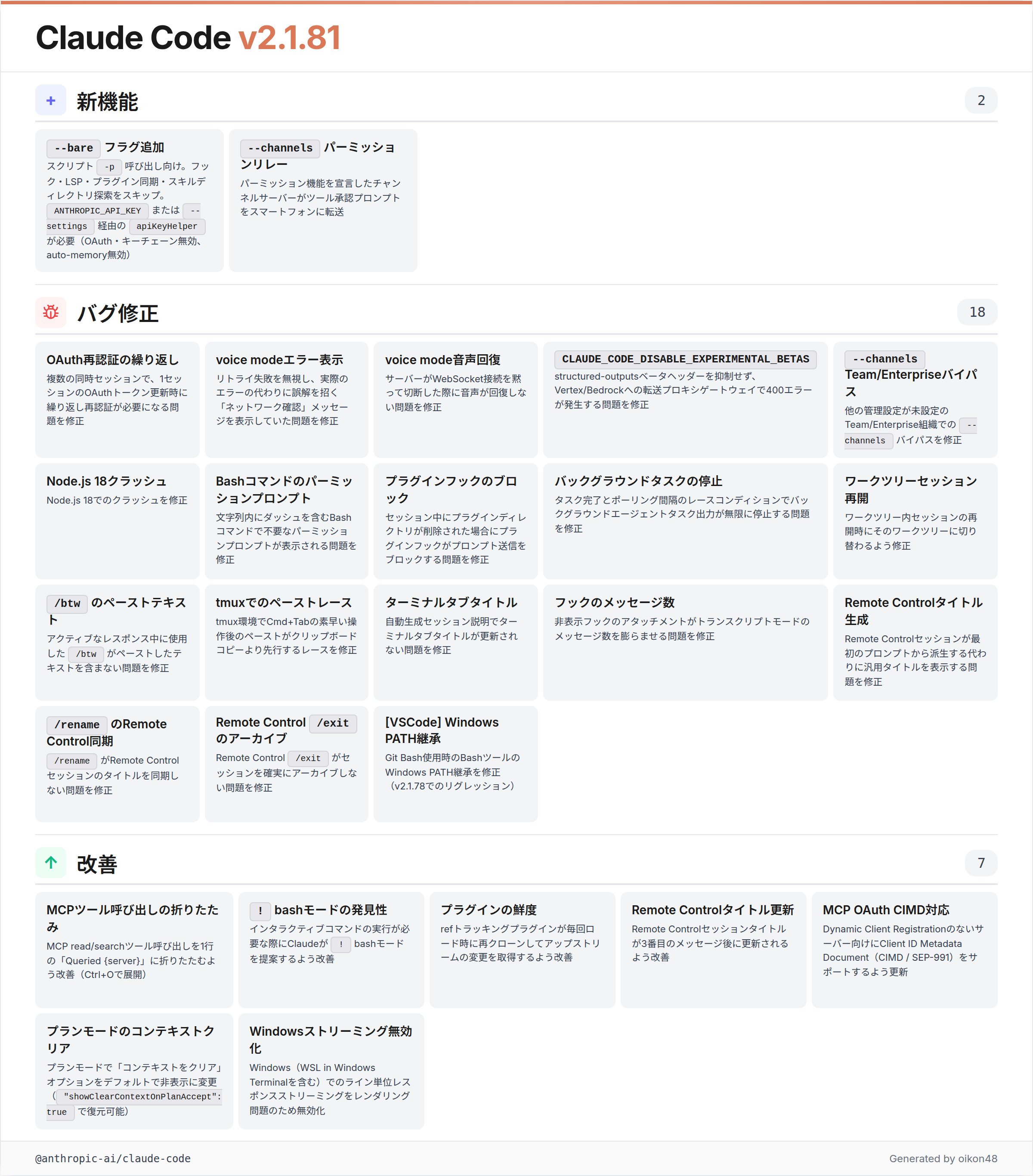

Claude Code Updates

@oikon48 shared detailed release notes for Claude Code 2.1.81, covering a range of improvements including a new --bare flag for scripted -p usage that skips hooks, LSP, and plugin scanning for leaner automation pipelines. The update also adds --channels for permission relay (enabling tool approvals from mobile), fixes for worktree session resumption, and collapsed MCP read/search output. These are incremental but meaningful quality-of-life improvements, particularly for developers running Claude Code in automated or multi-device workflows.
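For the automation case, a thin wrapper might look like the following. It assumes the `claude` binary is on PATH and at version 2.1.81 or later, takes the `-p` and `--bare` flags from the release notes summarized above, and places the prompt after them. Check `claude --help` on the installed version before relying on the exact invocation. `bare_print_command` and `run_bare` are hypothetical helper names.

```python
import shutil
import subprocess


def bare_print_command(prompt: str) -> list[str]:
    """argv for a scripted -p run that skips hooks, LSP, and plugin scanning (per the 2.1.81 notes)."""
    return ["claude", "--bare", "-p", prompt]


def run_bare(prompt: str, timeout: float = 300.0) -> str:
    """Run one non-interactive request and return its stdout; raises if the CLI is missing or fails."""
    if shutil.which("claude") is None:
        raise RuntimeError("claude CLI not found on PATH")
    result = subprocess.run(
        bare_print_command(prompt),
        capture_output=True,
        text=True,
        timeout=timeout,
        check=True,
    )
    return result.stdout


if __name__ == "__main__":
    print(bare_print_command("Summarize the failing tests in this repo"))
```

Building the argv as a list, rather than a shell string, avoids quoting problems when the prompt contains spaces or special characters.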

Sources

I Read Hermes Agent's Memory System, and It Fixes What OpenClaw Got Wrong

Memory as a Harness: Turning Execution Into Learning

"The missing layer that makes agents actually improve over time." Earlier this month the industry woke up: models can give us intelligence, but they ...

10x Your Coding Agent Productivity

Recursive Language Models - what finally gave me the 'aha' moment

I improved 15 LLMs at coding in one afternoon. Only the harness changed.

Introducing: Browser Use CLI 2.0 🔥 The most efficient browser automation CLI tool > 2x the speed, half the cost > Easily connect to running Chrome > Uses direct CDP Try it now 🔗↓ https://t.co/9YZ2oB5wVz

I'm not very happy with the code quality and I think agents bloat abstractions, have poor code aesthetics, are very prone to copy pasting code blocks and it's a mess, but at this point I stopped fighting it too hard and just moved on. The agents do not listen to my instructions in the AGENTS.md files. E.g. just as one example, no matter how many times I say something like: "Every line of code should do exactly one thing and use intermediate variables as a form of documentation" They will still "multitask" and create complex constructs where one line of code calls 2 functions and then indexes an array with the result. I think in principle I could use hooks or slash commands to clean this up but at some point just a shrug is easier. Yes I think LLM as a judge for soft rewards is in principle and long term slightly problematic (due to goodharting concerns), but in practice and for now I don't think we've picked the low hanging fruit yet here.

Why we banned React's useEffect

Caught up with @karpathy for a new @NoPriorsPod: on the phase shift in engineering, AI psychosis, claws, AutoResearch, the opportunity for a SETI-at-Home like movement in AI, the model landscape, and second order effects

- 02:55 - What Capability Limits Remain?
- 06:15 - What Mastery of Coding Agents Looks Like
- 11:16 - Second Order Effects of Coding Agents
- 15:51 - Why AutoResearch
- 22:45 - Relevant Skills in the AI Era
- 28:25 - Model Speciation
- 32:30 - Collaboration Surfaces for Humans and AI
- 37:28 - Analysis of Jobs Market Data
- 48:25 - Open vs. Closed Source Models
- 53:51 - Autonomous Robotics and Atoms
- 1:00:59 - MicroGPT and Agentic Education
- 1:05:40 - End Thoughts

It's also so powerful to take learnings from agent sessions where your custom CLI tools are used by agents and then feed them back into the skills the agents use to help them operate those tools. This is sort of "in-context recursive self-improvement" if you will, cyborg style: https://t.co/2auFrjre8I

Meet Codex for Students. We're offering college students in the U.S. and Canada $100 in Codex credits. Our goal is to support students to learn by building, breaking, and fixing things. https://t.co/WrOtW8E8Lk https://t.co/l2T81LgKCI