Google Launches Full-Stack Vibe Coding in AI Studio as OpenViking Redefines Agent Memory

Google dropped a full-stack coding environment inside AI Studio with Firebase integration, databases, and one-click deploy, drawing immediate comparisons to Claude Code and Codex. Meanwhile, ByteDance's OpenViking project is surging on GitHub as a structured memory layer for autonomous agents, and a prompt injection attack on Cline's GitHub triage bot installed OpenClaw on 4,000 machines without user consent.

Daily Wrap-Up

The biggest story today is Google making its play for the vibe coding throne. By shipping a full-stack coding agent inside AI Studio with Antigravity, Firebase, auth, and database provisioning baked in, Google is betting that the IDE of the future lives inside the model provider's own platform. The reactions ranged from breathless hype to thoughtful skepticism, and the timing is notable: this drops the same week Apple reportedly started blocking vibe-coded apps from App Store updates. Whether Google's approach actually competes with Claude Code and Codex in practice remains to be seen, but the intent is unmistakable.

Under the surface, the more interesting trend is the race to solve agent memory. OpenViking from ByteDance is rocketing up GitHub's trending page with its file-system-inspired approach to context management, and Hermes Agent's memory system is drawing attention for fixing problems that OpenClaw's memory reportedly got wrong. These aren't academic exercises. As agents get delegated longer and more complex workflows, the difference between "flat pool of embeddings" and "structured, tiered memory with observability" becomes the difference between a useful tool and an expensive token furnace. The prompt injection attack on Cline's GitHub bot is a sobering reminder that as we hand agents more autonomy, the attack surface grows proportionally.

The most practical takeaway for developers: if you're building or using AI agents, invest time understanding memory architectures like OpenViking's tiered L0/L1/L2 loading system and Hermes' structured approach. The agents that win won't be the ones with the best base models; they'll be the ones that remember efficiently and fail observably.

Quick Hits

- @TheAhmadOsman got pulled in for an interview by NVIDIA AI at GTC this week, living the conference dream.

- @NotebookLM rolled out Cinematic Video Overviews to 100% of Pro users in English, asking people to "flood our replies with your favorite creations."

- @badlogicgames RT'd a thread about the era where "Fork = Inspiration," exploring extensions and open source culture.

- @Data_SN13 launched

dv, a Rust CLI for querying real-time social data from X and Reddit via Bittensor's decentralized miner network. - @TheCrustGame resurfaced with a look at their Frostpunk-meets-Satisfactory game, five years in the making.

- @ErnestoSOFTWARE declared a particular prompt "literally the most important prompt in vibe coding," sharing an image that's making the rounds.

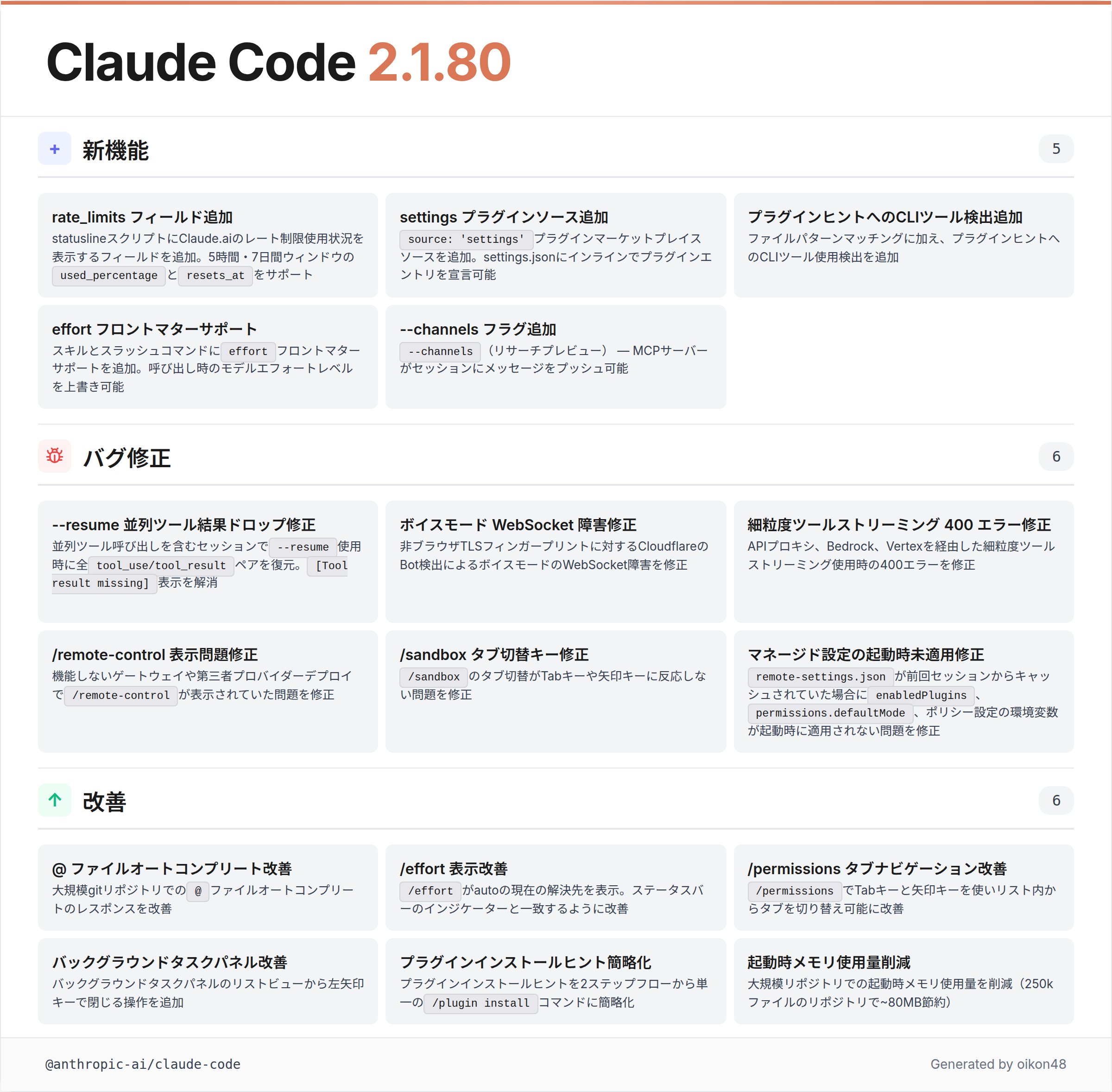

- @oikon48 broke down Claude Code 2.1.80's changelog in Japanese, highlighting rate limit visibility in the status line, plugin marketplace additions, effort overrides in skill frontmatter, and ~80MB memory reduction for large repos.

Google's Full-Stack Vibe Coding Play

Google didn't just update AI Studio; they shipped an entire development platform inside it. The new experience bundles an Antigravity coding agent with Firebase integration, database provisioning, Google auth, and one-click production deployment. The system detects when your app needs a database and stands one up automatically. It remembers project structure and chat history across sessions. It auto-installs missing libraries by reading your project.

@kloss_xyz captured the magnitude well: "Google just dropped its own full stack vibe coding system with multiplayer, databases, auth, and firebase baked in... Google owns your calendar, your email, your docs, your maps, and now they own your IDE too." The post also flagged a curious coincidence: "Apple also decided to block vibe coding apps from updating in the app store the same week google made vibe coding production grade."

@minchoi called it "wild," noting you can now "vibe code production-ready apps, auth, databases, APIs, and real backends from one prompt." Google's own @googleaidevs showcased the platform by building a real-time 3D multiplayer snake arena with Three.js from a single prompt.

The strategic calculus here is clear. Google is leveraging its ecosystem depth in a way no other vibe coding tool can match. When your coding agent can natively tap into Maps, Firebase, and Google Auth, the integration story writes itself. But @koylanai offered a measured counterpoint, comparing Google's formal, taxonomic approach to agent skills unfavorably with Anthropic's experience-driven documentation: "Google always gives everything formal names, which doesn't add much, like a platform team turning taste into a corporate framework." The tools might be powerful, but the developer experience gap between "here's what kept breaking" and "here are 5 boxes" is real.

Agent Memory Wars: OpenViking and Hermes Challenge the Status Quo

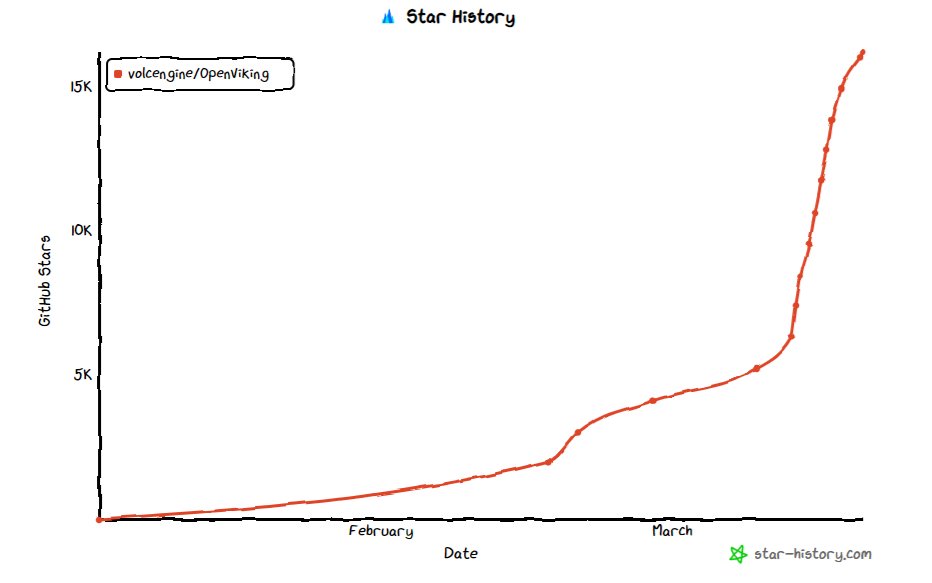

The conversation around how agents remember things is heating up fast, and two projects are leading the charge. ByteDance's OpenViking has hit 10K+ GitHub stars in under two months, and the community is already building plugins to wire it into OpenClaw.

@TeksEdge delivered a comprehensive breakdown of why this matters: "Currently, most AI agents use traditional RAG for memory. Traditional RAG dumps all your files, code, and memories into a massive, flat pool of vector embeddings. This is inefficient, expensive, sometimes slow, and can cause the AI to hallucinate or lose context." OpenViking's answer is a virtual file system paradigm where agents navigate their own memory like a human navigates a computer, with tiered context loading that starts with 100-token summaries before escalating to full documents only when necessary.

Meanwhile, @manthanguptaa published an analysis of Hermes Agent's memory system, arguing it "fixes what OpenClaw got wrong." @ziwenxu_ was convinced enough to start experimenting immediately: "Reading this article in the middle of the night made me realize how bad OpenClaw's memory system was."

The convergence here is notable. Both OpenViking and Hermes are moving away from flat vector search toward structured, hierarchical memory with observability. When your agent makes a bad retrieval decision, you should be able to trace exactly why. This is the kind of infrastructure work that separates demo agents from production agents.

AI Agent Workflows and the "Code Factory" Vision

A cluster of posts today explored the practical reality of delegating entire workflows to AI agents. The vision is compelling: string together multiple tools and let agents handle planning, coding, testing, and deployment in parallel.

@daniel_mac8 described building a "Code Factory" using Codex, Linear, GitHub, and OpenAI Symphony, though he corrected himself: "It's more a 'Digital Factory.' In which you can create any logically possible digital artifact using words." @jacobgrowth's article argued that AI agents have moved beyond developer-only territory, claiming "you do not need a mac mini to run an ai agent anymore."

@coreyganim laid out a concrete three-tool stack combining Paperclip, gstack, and autoresearch: "Run 10-15 gstack commands simultaneously. One agent plans, another tests, another ships. All at once. Three free tools. Zero employees. One AI company." And @nurijanian shared engineering plugins for product managers using Claude Code, addressing the common frustration of agents making confident but wrong decisions.

The gap between these aspirational workflows and daily reality remains significant, but the tooling is clearly maturing. The shift from "agent as autocomplete" to "agent as coworker" is happening faster than most predicted.

Security Wake-Up Call: Prompt Injection Hits the Supply Chain

The most alarming story of the day came from @dfolloni, who detailed a prompt injection attack that compromised Cline's automated GitHub issue triage. The attack chain was elegant and terrifying: a hacker opened an issue with a prompt injection in the title, which Cline's Claude-powered triage bot interpreted as a legitimate instruction. From there, the attacker poisoned GitHub's build cache, stole npm publish tokens, and pushed a modified version of the Cline package that silently installed OpenClaw on every machine that updated.

"4,000 devs installed openclaw on their machines without knowing," @dfolloni reported, adding the crucial insight: "AIs don't have malice, and that's why prompt injections are, in my opinion, their biggest vulnerability." This is a textbook supply chain attack, but with a novel entry point. Instead of compromising a maintainer's credentials directly, the attacker exploited the trust placed in an AI system that couldn't distinguish between a bug report and an instruction.

As agents get wired into more CI/CD pipelines and triage workflows, this class of attack will only grow. Every automated system that reads untrusted input and has write access to something valuable is a potential target.

Open Source AI and the Indie Quantizer

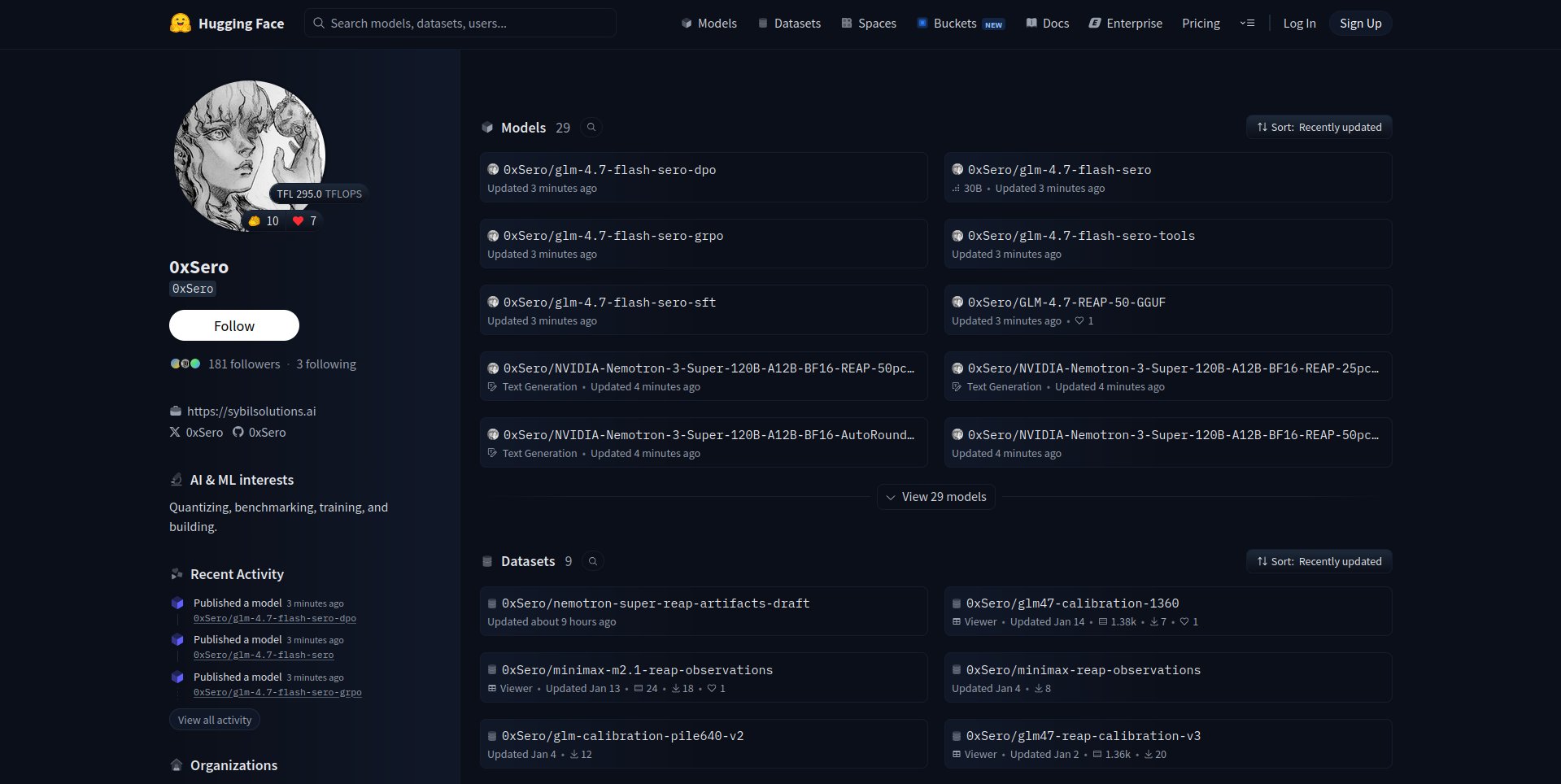

@sudoingX highlighted @0xSero, an independent developer with 29 models on HuggingFace's page 2 rankings, no lab backing, and $2,000 of personal GPU spend. He compressed GLM-4.7 to run on a MacBook and quantized Nemotron Super the week it dropped, all public and free.

The post made a pointed appeal to NVIDIA: "One GPU to this man would produce more public value than a hundred internal sprints." It's a reminder that some of the most impactful accessibility work in AI is happening at the margins, driven by individuals who treat democratizing model access as a mission rather than a business objective.

Developer Tool Drama: OpenCode Drops Claude Max

In a notable ecosystem skirmish, @thdxr announced that opencode 1.3.0 will remove its Claude Max plugin after Anthropic sent lawyers: "We did our best to convince Anthropic to support developer choice but they sent lawyers." The post thanked OpenAI, GitHub, and GitLab for "going the other direction and supporting developer freedom." This is a small but telling moment in the ongoing tension between model providers wanting to control access and the developer tool ecosystem wanting interoperability. @theallinpod's Jensen Huang interview touched on similar themes around open source and AI moats, suggesting these platform boundary disputes are only going to intensify.

Sources

https://t.co/ytGkQRv5FC

Installed Paperclip, Now What !?

My Paperclip article hit 2.7 million views. The comment section was mostly made up of "cool demo", "it doesn't work", and "how do you handle" questi...

5 Agent Skill design patterns every ADK developer should know

OpenViking has hit GitHub Trending 🏆 10k+ ⭐ in just 1.5 months since open-sourcing! Huge thanks to all contributors, users, and supporters. We’re building solid infra for the Context/Memory layer in the AI era. OpenViking will keep powering @OpenClaw and more Agent projects🚢🦞 https://t.co/nwywJR3KkB

me and my pal Jensen https://t.co/A5tesSSOvL

5 levels of AI marketing (and how to master each one)

Introducing the all new vibe coding experience in @GoogleAIStudio, feating: - One click database support - Sign in with Google support - A new coding agent powered by Antigravity - Multiplayer + backend app support and so much more coming soon! https://t.co/G0m9hRnoIS

Introducing the new full-stack vibe coding experience in Google AI Studio

Installed Paperclip, Now What !?

How To ACTUALLY Delegate Your Entire Workflow to an AI Agent...

you do not need a mac mini to run an ai agent anymore. here's what changed. most people think AI agents are a developer thing. something you set up on...

The Machine that Builds the Machine

I Read Hermes Agent's Memory System, and It Fixes What OpenClaw Got Wrong

If you've read my previous posts on ChatGPT memory, Claude memory, and OpenClaw memory, you already know I keep coming back to the same question: how ...

I Read Hermes Agent's Memory System, and It Fixes What OpenClaw Got Wrong

Putting out a wish to the universe. I need more compute, if I can get more I will make sure every machine from a small phone to a bootstrapped RTX 3090 node can run frontier intelligence fast with minimal intelligence loss. I have hit page 2 of huggingface, released 3 model family compressions and got GLM-4.7 on a MacBook https://t.co/lorDSUEYCL My beast just isn’t enough and I already spent 2k usd on renting GPUs on top of credits provided by Prime intellect and Hotaisle. ——— If you believe in what I do help me get this to Nvidia, maybe they will bless me with the pewter to keep making local AI more accessible 🙏

Engineering Plugins for PMs in Claude Code

I want to talk about 2 plugins that fix how AI agents actually write code for me. In my experience, most AI coding frustration comes from the same pl...