OpenSquirrel Reimagines the IDE Around Agents as Kimi and Nvidia Ship New Open-Source Models

The agent-native development toolchain is taking shape fast, with new IDEs, sandboxing debates, security guides, and collaborative editors all landing in the same 24-hour window. Meanwhile, Kimi's Attention Residuals paper and Nvidia's Nemotron-3 Super both dropped as open-source releases, continuing the trend of capable models going free. The spatial mapping discourse around Niantic's 30-billion-image Pokémon GO dataset offered the day's most fascinating detour into how games quietly build AI infrastructure.

Daily Wrap-Up

The most striking pattern today isn't any single model release or tool launch. It's that the entire scaffolding around AI-assisted development is being rebuilt simultaneously. We saw a new Rust-based IDE designed around agents as the primary unit of work, a collaborative document editor built for human-AI co-authorship, a formal security guide for agentic coding, a TLA+ prechecking tool for agent-generated designs, and an ongoing debate about how to properly sandbox Claude Code. Six months ago, the conversation was "can AI write code?" Now it's "what does the entire development environment look like when AI writes most of the code?" That shift from capability questions to infrastructure questions is the real story.

On the model side, the open-source drumbeat continues. Kimi's Attention Residuals paper introduces a genuinely interesting architectural idea (learned, input-dependent attention over preceding layers as a replacement for standard residual connections), and they open-sourced it. Nvidia shipped Nemotron-3 Super, a 120B-parameter MoE model with only 12B active parameters, purpose-built for agents. Z.ai released GLM-5-Turbo. The common thread: all optimized for agent workflows, all open. @TukiFromKL's breathless framing about Chinese labs versus Silicon Valley aside, the real dynamic is that agent-optimized inference is becoming a commodity faster than anyone expected. Meanwhile, @jamonholmgren's "Night Shift" workflow thread and @doodlestein's exhaustive multi-model planning process both point to the same conclusion: the developers getting the most out of these tools are the ones investing heavily in process design, not just prompt engineering.

The most practical takeaway for developers: if you're building with AI agents, invest your time in the surrounding infrastructure (sandboxing, security boundaries, verification tooling, structured planning documents) rather than chasing the latest model. The models are converging; your workflow is the differentiator.

Quick Hits

- @awilkinson RT'd a story about someone using ChatGPT to sell a house in 5 days with no real estate agent, getting 5 offers in 72 hours. The "AI replaces professional services" anecdote cycle continues.

- @naval RT'd @getjonwithit on how LLM success reveals how much human knowledge is fundamentally linguistic and pattern-based.

- @michaeljburry recommended an 1880 Smithsonian presentation as relevant to today's AI scaling debate, drawing parallels between historical technological optimism and current LLM hype. Weekend reading for the skeptically inclined.

- @theallinpod wrapped their SXSW Austin event with Michael Dell and Travis Kalanick, with the full show dropping tomorrow.

- @rohit4verse highlighted someone who reverse-engineered Anthropic's partner program features and released them for free, framing it as a "Claude architect" course.

- @nummanali made the case for Pi Coding Agent over OpenCode as a base for customizable AI development workflows, citing its plugin ecosystem and endorsement from Spotify's CEO.

- @mattpocockuk asked about alternatives to Docker sandbox for Claude Code on WSL, noting reliability issues and the inability to run truly AFK agent workflows with the built-in /sandbox command.

Agents as the New Unit of Development

The idea that agents, not files, should be the organizing principle of development tools went from Karpathy shower thought to shipping software in record time. @elliotarledge announced OpenSquirrel, a Rust-based IDE built with GPUI (the same framework behind Zed) that treats agents as the central unit rather than files. It supports Claude Code, Codex, OpenCode, and Cursor CLI. As Elliot put it: "This really forced me to think up the UI/UX from first principles instead of relying on common electron slop." He was responding directly to @karpathy's observation that "the basic unit of interest is not one file but one agent. It's still programming."

This wasn't the only agent-native tool to land. @danshipper launched Proof, an open-source collaborative document editor where humans and AI agents work in the same document with provenance tracking (green rail for human text, purple for AI). The pitch is straightforward: when agents generate most of the text in your planning docs, PRDs, and memos, you need a tool designed for that reality rather than markdown files on your laptop. And @KingBootoshi released TLA Precheck, a tool that uses TLA+ formal verification to catch design bugs in agent-generated output before they become code bugs, with skills for both Claude Code and Codex.

What connects all three is a recognition that the agent revolution needs its own native toolchain. You can't just bolt agents onto file-centric IDEs and markdown editors and expect good results. The developers building these tools are essentially asking: if we designed the entire workflow from scratch knowing that agents do most of the typing, what would it look like?
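To make the provenance idea behind Proof concrete, here is a minimal sketch of a document stored as authored spans, with a rail color derived from who wrote each span. Everything in it (`Span`, `append_text`, `rails`, `ai_share`, and the `RAIL_COLORS` mapping) is a hypothetical illustration; only the green-for-human, purple-for-AI convention comes from the announcement, and this is not Proof's actual data model.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Assumption: two author kinds, colored as described in the launch post.
RAIL_COLORS = {"human": "green", "ai": "purple"}


@dataclass(frozen=True)
class Span:
    """A run of text with a single author kind (hypothetical structure)."""
    text: str
    author: str  # "human" or "ai"


def append_text(doc: List[Span], text: str, author: str) -> List[Span]:
    """Return a new document with `text` appended.

    Adjacent text from the same author kind is merged into one span,
    so the rail only changes color where authorship changes.
    """
    if author not in RAIL_COLORS:
        raise ValueError(f"unknown author kind: {author}")
    if doc and doc[-1].author == author:
        merged = Span(doc[-1].text + text, author)
        return doc[:-1] + [merged]
    return doc + [Span(text, author)]


def rails(doc: List[Span]) -> List[Tuple[str, str]]:
    """Pair each span's text with the rail color a renderer would draw."""
    return [(RAIL_COLORS[span.author], span.text) for span in doc]


def ai_share(doc: List[Span]) -> float:
    """Fraction of characters in the document written by an AI agent."""
    total = sum(len(span.text) for span in doc)
    if total == 0:
        return 0.0
    return sum(len(span.text) for span in doc if span.author == "ai") / total
```

Keeping authorship on the span rather than on the whole file is the design choice that matters here: it lets a reviewer see at a glance which parts of a PRD or memo a person actually wrote.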

Agentic Workflows and Process Design

Two of the day's longest and most substantive posts were essentially workflow manifestos. @jamonholmgren shared hard-won principles from his "Night Shift" agentic workflow, which he says is "about 5x faster, better quality" than previous approaches. His rules are blunt: "I never want to review another agent-produced plan again. Waste of my time, overwhelming, not worth it. It's valuable to the agent, but not to me." His philosophy centers on burning tokens freely for validation while protecting his own time and energy, and investing in process documentation that pays dividends across future sessions rather than guiding agents interactively.

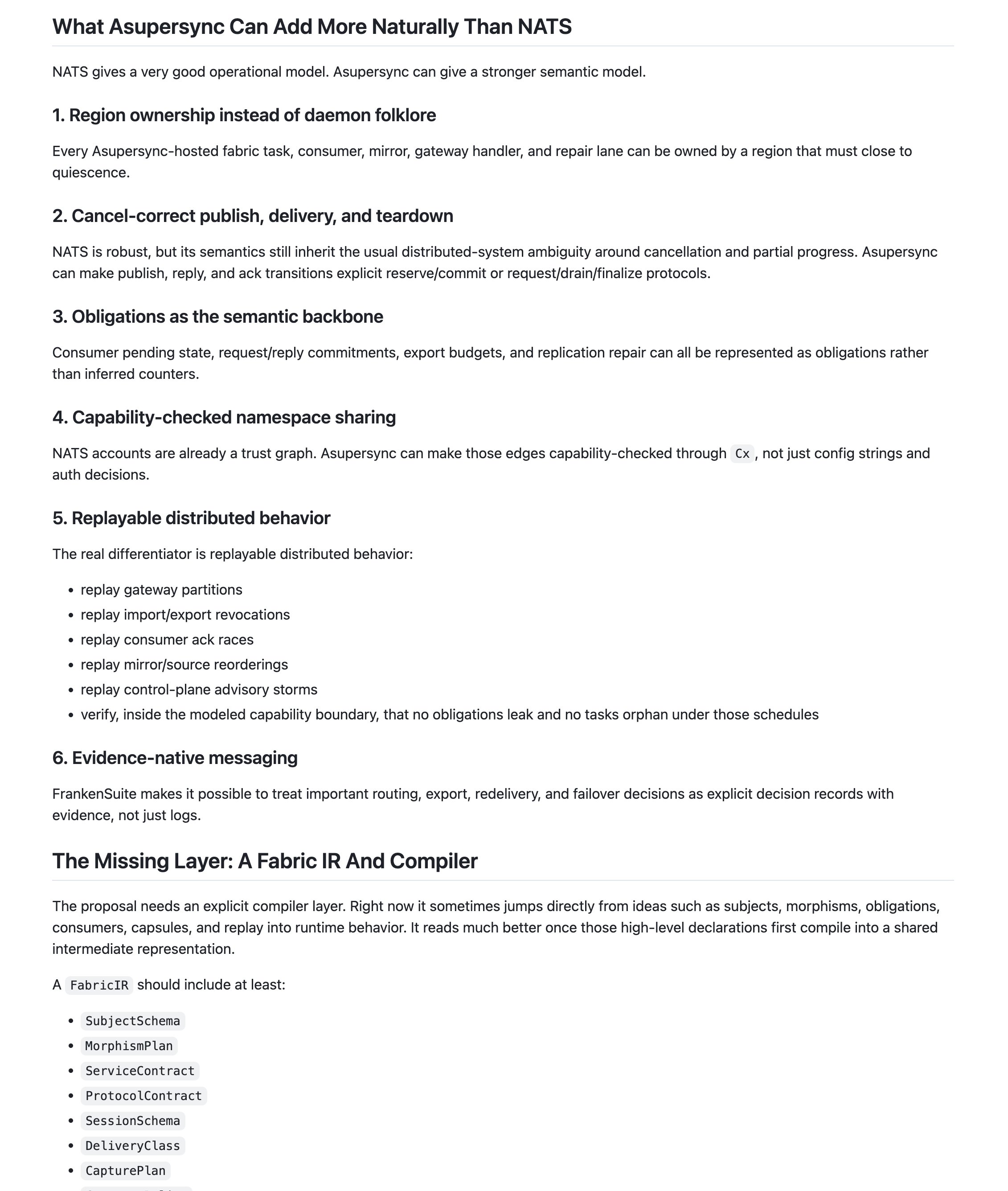

@doodlestein took the opposite approach to planning but arrived at a complementary conclusion. His post detailed an elaborate multi-model planning process for adding a messaging substrate to his Asupersync project: generate a proposal with GPT 5.4 in Codex, run it through five rounds of self-critique, then solicit feedback from GPT Pro, Gemini 3 Deep Think, Claude Opus 4.6, and Grok 4.2 Heavy, before synthesizing a "best of all worlds" hybrid. The process is intensive, but the logic is sound: "I'll work on the process, docs, and specs as much as I need to, in order to reap the benefits in future sessions."

The tension between these two approaches is productive. @jamonholmgren says don't review agent plans; @doodlestein says build elaborate multi-model planning pipelines. But both agree on the meta-principle: your competitive advantage is in process design, not in how cleverly you prompt a single model.
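The multi-model planning loop @doodlestein describes (draft, repeated self-critique, outside review, synthesis) can be sketched as a small pipeline. This is an illustration under assumptions, not his actual tooling: `Model` is a hypothetical prompt-in, text-out callable standing in for whatever client each model needs, and the prompt wording is invented for the example.

```python
from typing import Callable, Dict

# Hypothetical interface: any function that takes a prompt and returns text.
Model = Callable[[str], str]


def self_critique(draft: str, author: Model, rounds: int = 5) -> str:
    """Ask the authoring model to critique and revise its own draft."""
    for i in range(rounds):
        draft = author(f"Critique and revise (round {i + 1}):\n{draft}")
    return draft


def plan(task: str, author: Model, reviewers: Dict[str, Model],
         rounds: int = 5) -> str:
    """Draft a proposal, self-critique it, gather reviews, then synthesize."""
    draft = author(f"Write a proposal for: {task}")
    draft = self_critique(draft, author, rounds)

    # Each reviewer model sees the same revised draft independently.
    feedback = []
    for name, review in reviewers.items():
        feedback.append(f"[{name}] " + review("Review this proposal:\n" + draft))

    # The authoring model merges the draft and all feedback into one plan.
    synthesis_prompt = (
        "Synthesize a hybrid plan.\nDraft:\n" + draft
        + "\nFeedback:\n" + "\n".join(feedback)
    )
    return author(synthesis_prompt)
```

With the default of five critique rounds, the authoring model is called seven times per plan (one draft, five revisions, one synthesis) and each reviewer once, which is the "burn tokens, protect your own time" trade both posts endorse.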

Open-Source Models Keep Shipping

The model release cadence shows no signs of slowing. @minchoi covered Nvidia's Nemotron-3 Super, a 120B-parameter mixture-of-experts model with 12B active parameters, open-sourced and built specifically for AI agent workloads. @Zai_org announced GLM-5-Turbo, a speed-optimized variant of GLM-5 targeting agent-driven environments. And @TukiFromKL highlighted Kimi's Attention Residuals paper, which introduces learned, input-dependent attention over preceding layers as a drop-in replacement for standard residual connections, claiming a 1.25x compute advantage with negligible (<2%) inference latency overhead.

@TukiFromKL framed this as an existential threat to closed-model companies: "The AI race isn't US vs China anymore... It's closed vs open. And closed is losing." That's an overstatement, but the directional pressure is real. When agent-optimized open models keep landing weekly, the value proposition of $200/month subscriptions gets harder to defend on capability alone. The differentiator shifts to reliability, tooling integration, and enterprise support.
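For readers who want intuition for the Attention Residuals idea, the sketch below contrasts a standard residual stream, which is a fixed, unit-weight sum of every preceding layer's output, with a softmax-weighted mix whose weights depend on the representations themselves. It is a toy under stated assumptions: the scoring rule (a learned per-layer `query` vector dotted with each earlier output) and all function names are illustrative choices, not the formulation in Kimi's report, and the Block AttnRes compression step is omitted.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def residual_input(history: List[Vector]) -> Vector:
    """Standard residual stream: every earlier output is added with weight 1."""
    return [sum(column) for column in zip(*history)]


def attention_residual_input(history: List[Vector], query: Vector) -> Vector:
    """Input-dependent mix of earlier outputs (assumed scoring rule).

    `history` holds the embedding plus each preceding layer's output;
    `query` stands in for a learned per-layer parameter.
    """
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, h) / scale for h in history])
    return [
        sum(w * h[i] for w, h in zip(weights, history))
        for i in range(len(query))
    ]
```

Because the weights sum to one, the mixed input stays on the scale of a single layer's output rather than growing with depth, which is one way to read the announcement's claim about mitigating dilution and hidden-state growth.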

Security and Sandboxing for Agents

As agents gain more autonomy, the security conversation is catching up. @affaanmustafa released "The Shorthand Guide to Everything Agentic Security," covering fundamentals and implementation for both coding with agents and deploying them in production. His motivation: "if people even follow just this, I guarantee the number of exploits and stories going around would drop significantly, to the level of occurrences that would be normal (before vibe coding)."

Meanwhile, @mattpocockuk's frustration with Docker sandboxing for Claude Code on WSL highlights the practical gap between wanting autonomous agents and being able to safely run them. The built-in /sandbox "doesn't do what I want," he noted, because "cc can always get around it and it doesn't allow for properly AFK workflows." This is the unsexy but critical infrastructure work that determines whether agent-heavy development actually scales.

The World Is Being Mapped, One Game at a Time

The day's most fascinating tangent came from @bilawalsidhu's deep analysis of Niantic's disclosure that Pokémon GO players contributed 30 billion real-world images now being used to train visual navigation AI for delivery robots. Rather than treating this as a scandal, Bilawal contextualized it within the broader landscape of spatial mapping: "Fused together, from body cam to dashcam to doorbell to phone to satellite, every layer of physical reality is being mapped by somebody right now."

His key insight is about incentive design: "Google spends billions. Mapillary tried altruism. Hivemapper grinds with crypto. Pokémon GO cracked something none of them could: a game mechanic that subsidizes the scanning behavior." The implication for AI developers is that the most valuable training datasets may not come from deliberate data collection at all, but from products that make data generation a side effect of something people already want to do.

Local Inference Goes Peer-to-Peer

Two bookmarked posts pointed to the quiet growth of local and offline AI. @jameskharwood2 described building a local LLM setup using "WebLLM (Llama-3.2-1B-Instruct quantized) that downloads once, then runs fully offline." And @meme_terrorist shared an open-source Android app that runs AI on-device and can even spread from phone to phone peer-to-peer for grid-down scenarios. These are niche use cases today, but they represent the logical endpoint of the model-commoditization trend: when capable models are small enough to run on a phone and share over local networks, cloud-dependent AI infrastructure becomes optional rather than necessary.

Sources

My current agentic workflow is about 5x faster, better quality, I understand the system better, and I’m having fun again. My previous workflows have left me exhausted, overwhelmed, and feeling out of touch with the systems I was building. They also degraded quality too much. This is way better. I’m not ready to describe in detail. It’s still evolving a bit. But I’ll give you a high level here. I call this the Night Shift workflow.

I want to become a Claude architect (full course).

Build Something Just for Yourself: #2 Why not make your coding agent personal?

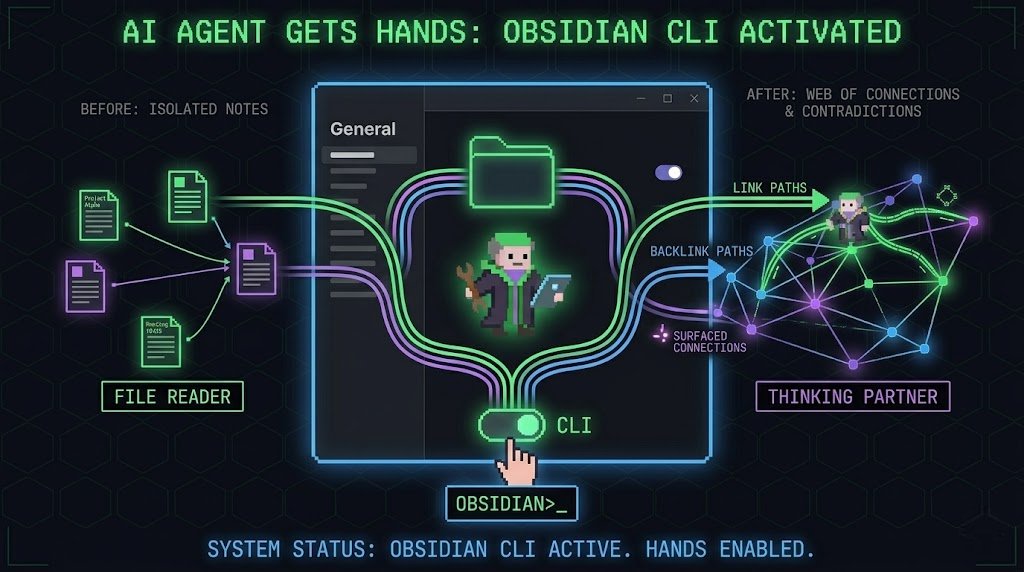

How I turned Obsidian into a second brain that runs itself

The Shorthand Guide to Everything Agentic Security

Expectation: the age of the IDE is over Reality: we’re going to need a bigger IDE (imo). It just looks very different because humans now move upwards and program at a higher level - the basic unit of interest is not one file but one agent. It’s still programming.

This is wild. 143 million people thought they were catching Pokémon. They were actually building one of the largest real-world visual datasets in AI history. Niantic just disclosed that photos and AR scans collected through Pokémon Go have produced a dataset of over 30 billion real-world images. The company is now using that data to power visual navigation AI for delivery robots. Players didn't just walk around with their phones. They scanned landmarks, storefronts, parks, and sidewalks from every angle, at every time of day, in lighting and weather conditions that staged photography would never capture. They documented the physical world at a scale no mapping company with a fleet of vehicles could have replicated on the same timeline or budget. Niantic collected this systematically, data point by data point, across eight years, while users thought the only thing at stake was catching a rare Charizard. The most valuable AI training datasets in the world aren't being assembled in data centers. They're being built by people who have no idea they're building them.

Introducing 𝑨𝒕𝒕𝒆𝒏𝒕𝒊𝒐𝒏 𝑹𝒆𝒔𝒊𝒅𝒖𝒂𝒍𝒔: Rethinking depth-wise aggregation. Residual connections have long relied on fixed, uniform accumulation. Inspired by the duality of time and depth, we introduce Attention Residuals, replacing standard depth-wise recurrence with learned, input-dependent attention over preceding layers. 🔹 Enables networks to selectively retrieve past representations, naturally mitigating dilution and hidden-state growth. 🔹 Introduces Block AttnRes, partitioning layers into compressed blocks to make cross-layer attention practical at scale. 🔹 Serves as an efficient drop-in replacement, demonstrating a 1.25x compute advantage with negligible (<2%) inference latency overhead. 🔹 Validated on the Kimi Linear architecture (48B total, 3B activated parameters), delivering consistent downstream performance gains. 🔗Full report: https://t.co/u3EHICG05h