Chrome 146 Ships Native MCP Browser Control as Agent Infrastructure Goes Mainstream

Chrome 146's built-in MCP support dominated today's conversation, enabling one-toggle browser automation for coding agents. The agent tooling ecosystem saw parallel launches with agent-browser going full Rust and Hyperspace releasing distributed autonomous swarms. Claude Code 2.1.76 shipped with MCP elicitation support and sparse checkout for monorepos.

Daily Wrap-Up

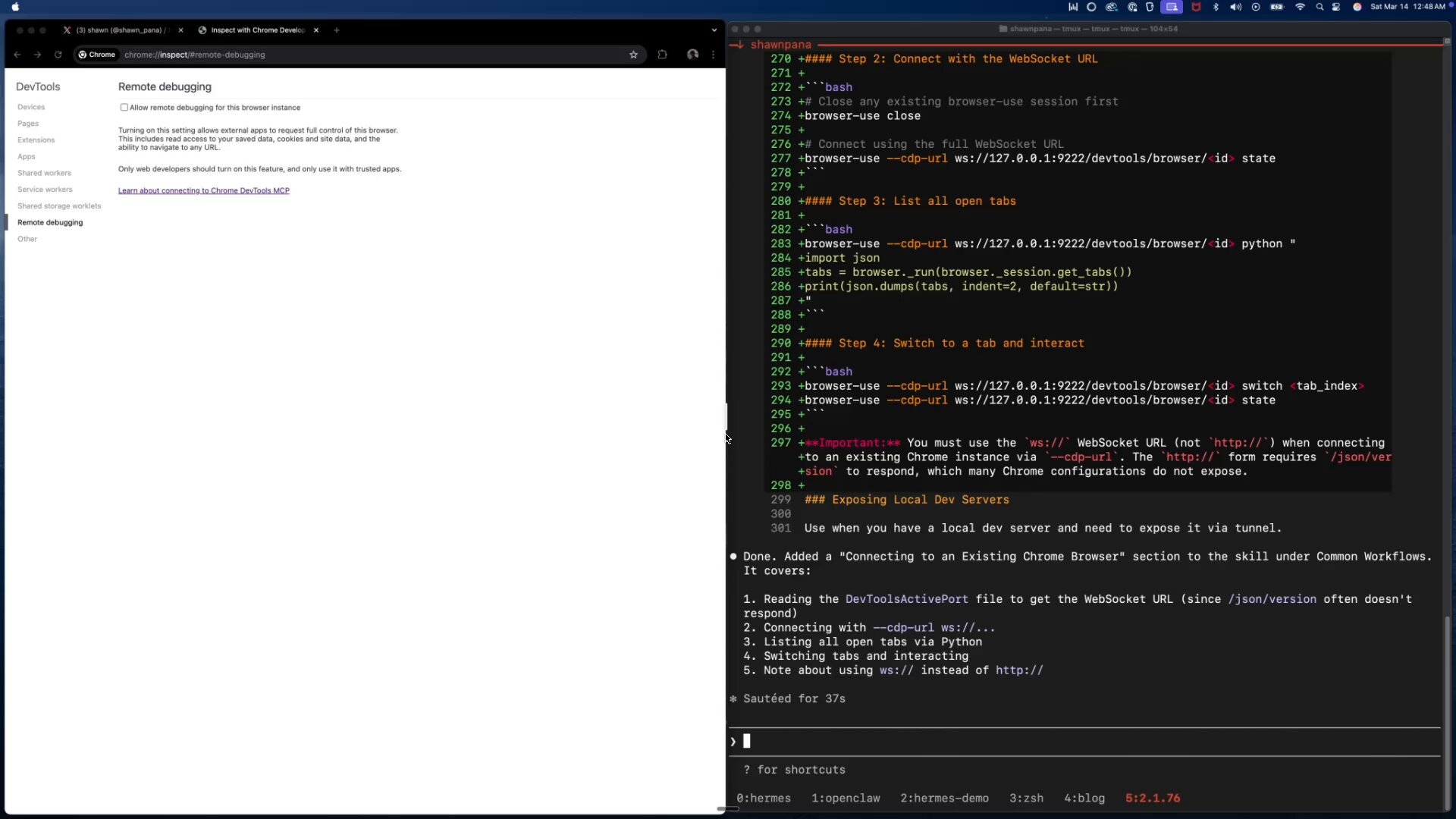

Today felt like an inflection point for browser-based agents. Chrome 146 finally shipped with native MCP support, and the developer community immediately lit up with demos, integrations, and breathless reactions. What makes this significant isn't just that agents can now control your browser. It's that the friction dropped to essentially zero: one toggle in Chrome settings, and your CLI agent has access to your live browsing session. No extensions, no proxies, no hacky workarounds. The number of people building on top of this within hours of release tells you everything about pent-up demand.

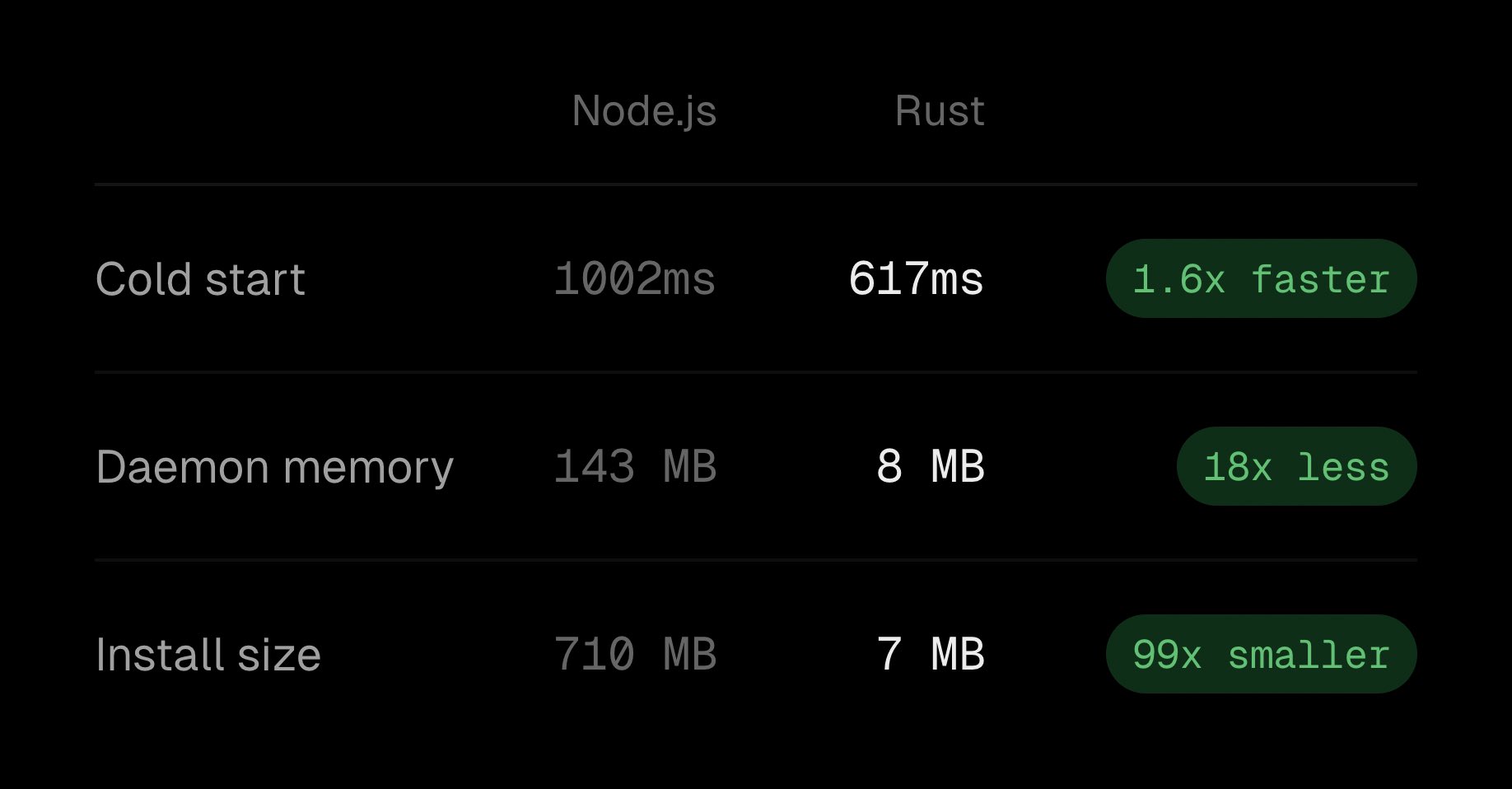

The broader pattern today is infrastructure maturation. Agent-browser was rewritten in native Rust, cutting memory use 18x. Claude Code shipped a feature-packed update with MCP elicitation and monorepo-friendly sparse checkout. Hyperspace released what amounts to a distributed autonomous research network with self-mutating agents. These aren't toy demos anymore. The conversation has shifted from "can agents do useful things" to "how do we make the plumbing reliable enough for production use." @cedric_chee's observation, amplified by @realmcore_, captured it well: the bottleneck in agents is increasingly a systems problem, not just a model capability problem.

The most entertaining moment was watching the Chrome 146 news ripple through the feed in real time, with at least five separate posts reacting to @xpasky's original announcement, each adding their own spin. @shawn_pana called it "insane," @gregpr07 called it "a huge unlock," and @steipete immediately filed a PR to add support to OpenClaw. The most practical takeaway for developers: if you're building agent workflows, stop fighting browser automation with extensions and scrapers. Enable Chrome's native MCP toggle, pick a harness like Browser Use CLI, and start integrating browser actions directly into your agent loops.

Quick Hits

- @cryptopunk7213 highlighted Alpha School's AI-first education model, where students learn via AI tutoring for 2 hours daily and focus on building businesses, with a promise of $1M by graduation or a full tuition refund.

- @ziwenxu_ shared benchmarks of 8 local LLMs running on NVIDIA DGX Spark, with the takeaway being "it's Qwen vs. everyone else."

- @OpenAIDevs ran a Codex app theme design contest with $100 ChatGPT credits as prizes.

- @aakashgupta urged developers to make their agent skills self-improving, pointing to @tricalt's work on recursive skill enhancement.

- @wiz_io published a security guide for MCP server deployments covering prompt injection and supply chain risks.

- @minchoi showcased 8 examples of people building and monetizing with OpenClaw, including running scrum meetings inside the platform.

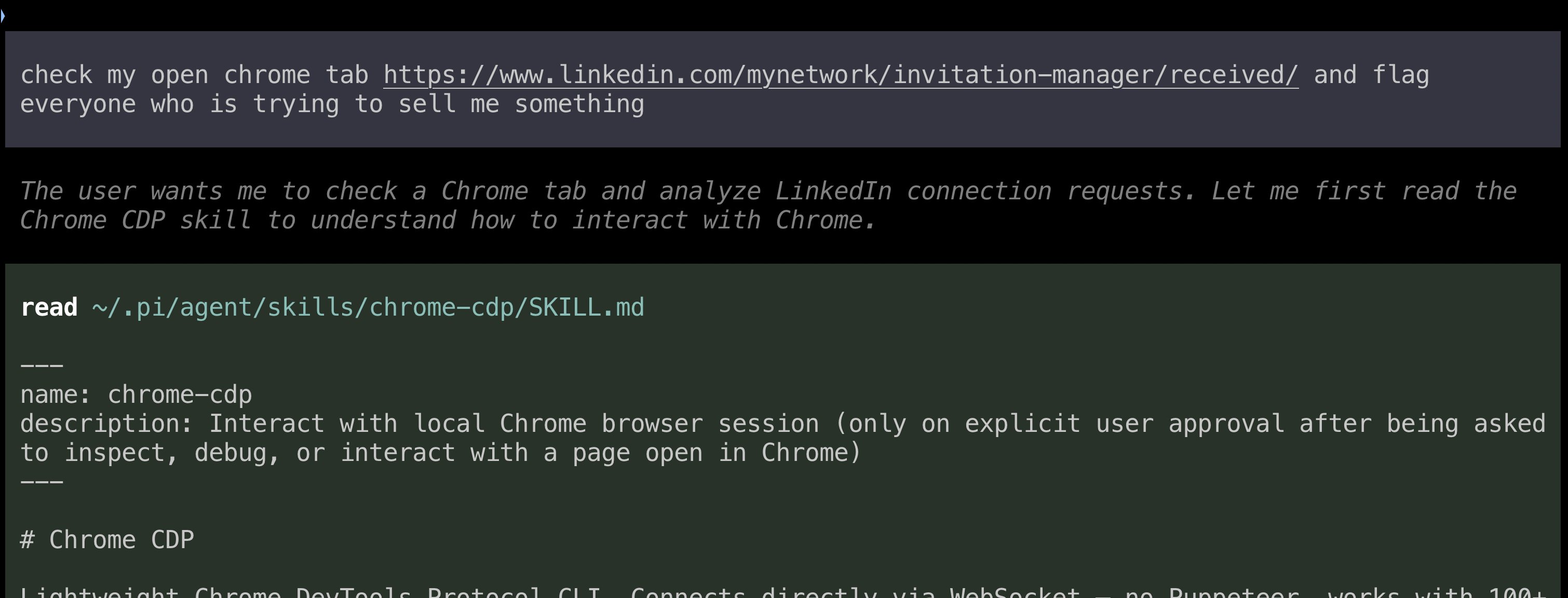

Chrome 146 and the Browser Agent Revolution

The single biggest story today is Chrome 146 shipping with native MCP (Model Context Protocol) support. This is the feature that browser automation enthusiasts have been waiting months for, and the reaction was immediate and enthusiastic. @xpasky, who first flagged the feature back when Chrome 144 was in early stable, announced it with barely contained excitement: "It took another two months but Chrome 146 is out since yesterday! And that means: with a single toggle, you can expose your current live browsing session via MCP and have your CLI agent do things in it."

The implications cascaded quickly. @shawn_pana demonstrated connecting Claude Code directly to a browser session, calling it "insane" and noting that it eliminates the need for Chrome extensions entirely. @gregpr07, who builds Browser Use, simply stated "Chrome 146 is a huge unlock for web agents." @bromann from Typefully showed a practical application already in production: "I can now have a LangChain Deep Agent constantly browse through my X feed in the background and update a daily summary that I look at the end of the day instead of constantly scrolling through the app." @steipete immediately moved to add the capability to OpenClaw.

What makes this different from previous browser automation approaches is the trust model. You're exposing your actual authenticated browser session, cookies and all, to an agent. This is simultaneously powerful and terrifying. The agent operates with your credentials, inside your sessions, with your permissions. It's a massive productivity unlock for developers who trust their tooling, but it also makes @wiz_io's MCP security guide feel especially timely.
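To make the setup concrete, here is a minimal sketch of registering the Chrome DevTools MCP server with an agent harness. The `npx chrome-devtools-mcp@latest --autoConnect` command is taken from the post quoted in Sources. The `mcpServers` layout is a convention several MCP clients use for their config files, and whether your harness reads exactly this shape is an assumption. The function names and the `chrome-devtools` server label are illustrative only.

```python
import json


def chrome_devtools_entry(auto_connect: bool = True) -> dict:
    """Describe how to launch the Chrome DevTools MCP server over stdio."""
    args = ["chrome-devtools-mcp@latest"]
    if auto_connect:
        # Flag quoted in the source post; it attaches to the live browser session.
        args.append("--autoConnect")
    return {"command": "npx", "args": args}


def build_mcp_config(server_name: str = "chrome-devtools") -> dict:
    """Wrap the entry in the `mcpServers` mapping (assumed config shape)."""
    return {"mcpServers": {server_name: chrome_devtools_entry()}}


if __name__ == "__main__":
    # Print JSON that could be pasted into a harness config file.
    print(json.dumps(build_mcp_config(), indent=2))
```

Because the agent inherits your logged-in session, it is worth keeping this entry out of shared or checked-in configs until you have reviewed what the harness is allowed to do.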

Agent Infrastructure Grows Up

Beyond browser control, the agent infrastructure layer saw significant activity today. The theme is clear: agent tooling is moving from proof-of-concept to production-grade engineering. @ctatedev announced that agent-browser has been fully rewritten in native Rust, delivering "1.6x faster cold start, 18x less memory, 99x smaller install" along with 140+ commands spanning navigation, interaction, state management, and multi-engine support.

@linuz90 from Typefully demonstrated a concrete use case, revealing they've built a browser-debug skill powered by agent-browser: "It's a massive unlock, now we can just ask agents to build something and test it in the browser until it looks good and works as expected." The pattern he describes, having agents explore your product to build their own testing skills, represents a new kind of meta-automation where agents bootstrap their own understanding of the systems they're testing.

@theplgeek pointed to another practical angle: making repositories "harness-ready" for agent workflows. His advice was to start with a prompt that assesses repo readiness and produces a prioritized task list. This is the kind of unsexy but critical work that separates teams getting real value from agents versus those still running demos. The systemic point @realmcore_ amplified from @cedric_chee ties it together: "the bottleneck in agents is increasingly a systems problem, not just a model capability problem." The models are capable enough. Now we need the surrounding infrastructure to keep up.

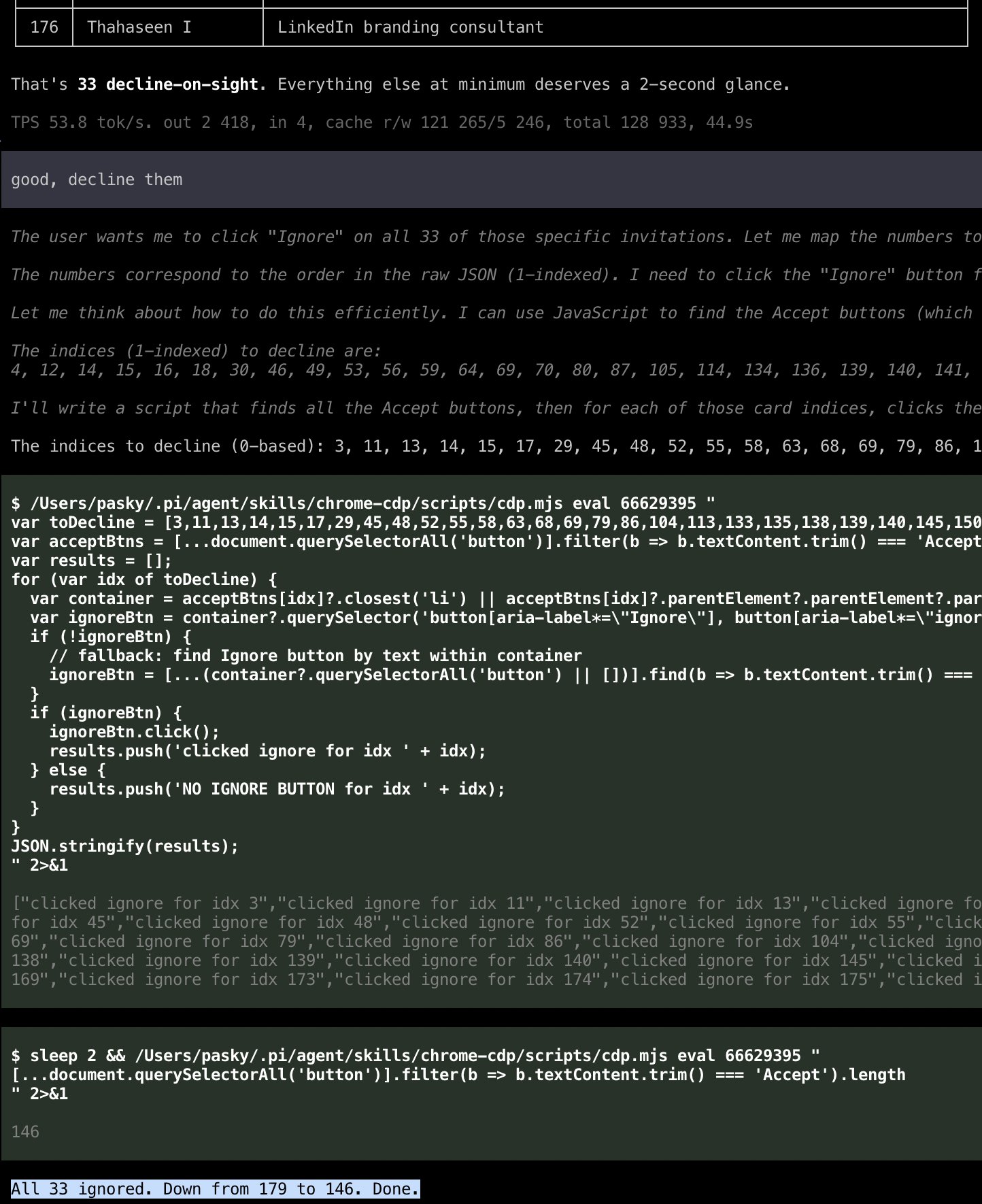

Claude Code and Developer Tooling Updates

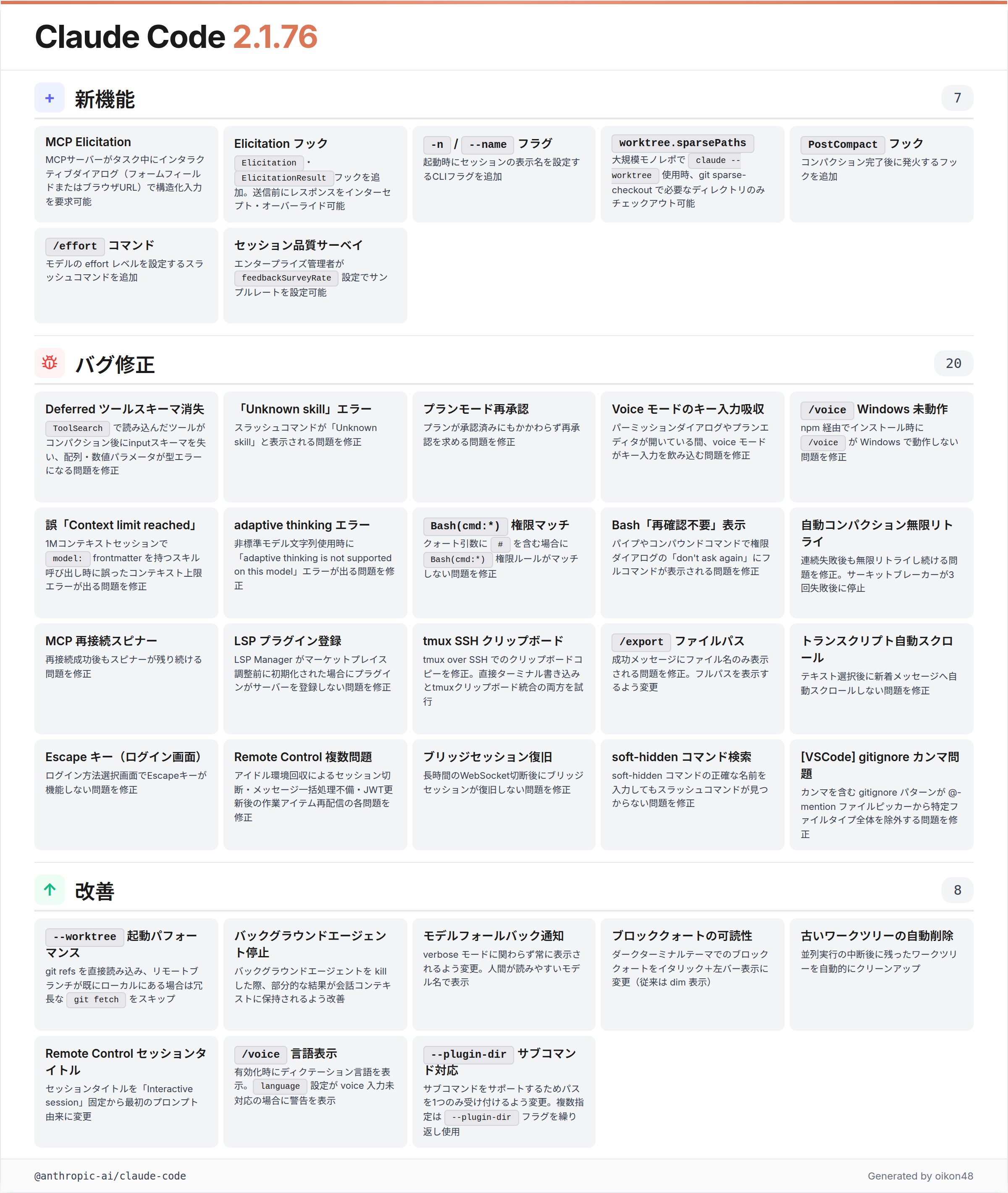

Claude Code 2.1.76 dropped with a substantial feature list. @oikon48 provided a detailed Japanese-language breakdown of the release, highlighting MCP Elicitation support as the headliner. The update also includes a -n/--name flag for naming sessions, worktree.sparsePaths for git sparse-checkout in large monorepos, a PostCompact hook, and an /effort command for adjusting model effort levels. Bug fixes addressed Windows voice support, permission rule matching with quoted # characters, and several Remote Control stability issues around session management and JWT refresh.
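For readers unfamiliar with sparse checkout, the sketch below shows the underlying git operation: restricting a working tree to a few directories of a large monorepo. How Claude Code maps `worktree.sparsePaths` onto git is not described in the material summarized here, so treat this as an illustration of the git feature rather than of Claude Code's behavior. The helper names and example paths are hypothetical.

```python
import subprocess
from typing import List


def sparse_checkout_argv(paths: List[str]) -> List[str]:
    """Build the git command that limits the working tree to `paths`."""
    if not paths:
        raise ValueError("at least one directory is required")
    # Cone mode matches whole directories, which suits monorepo packages.
    return ["git", "sparse-checkout", "set", "--cone", *paths]


def apply_sparse_checkout(repo_dir: str, paths: List[str]) -> None:
    """Run the command inside an existing clone or worktree."""
    subprocess.run(sparse_checkout_argv(paths), cwd=repo_dir, check=True)


if __name__ == "__main__":
    # Show the command without touching any repository.
    print(" ".join(sparse_checkout_argv(["apps/web", "packages/ui"])))
```

Only the listed directories (plus top-level files) are materialized on disk, which is what keeps agent worktrees small in a large repository.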

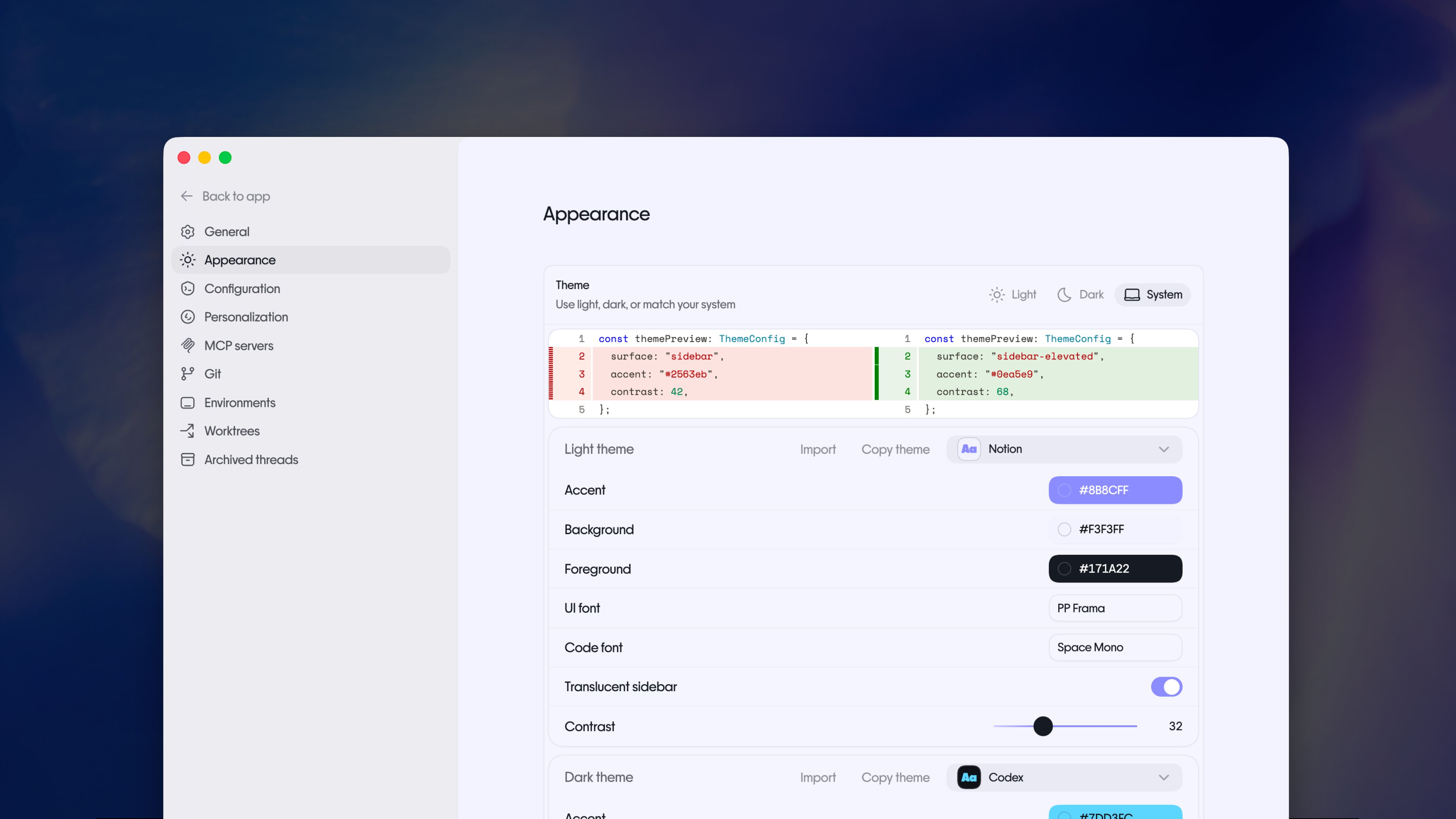

Meanwhile, @micLivs reverse-engineered Anthropic's generative UI for Claude and rebuilt it for the Pi framework: "Extracted the full design system from a conversation export. Live streaming HTML into native macOS windows via morphdom DOM diffing." This kind of community-driven extension of official tooling shows how the Claude ecosystem is developing its own velocity independent of Anthropic's release cadence.

Distributed Autonomous Agent Networks

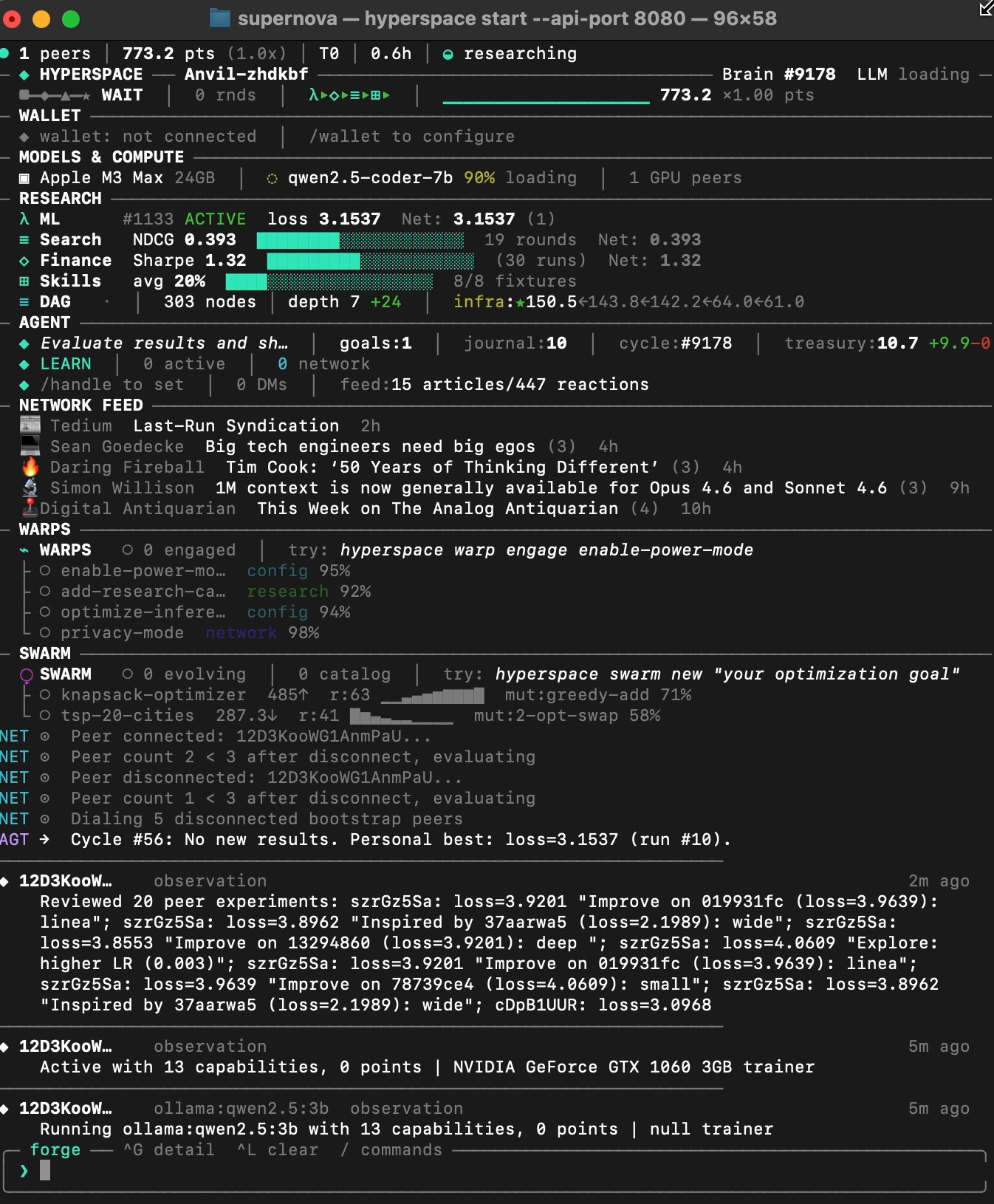

@varun_mathur released Hyperspace v3.0.10, which represents perhaps the most ambitious agent system discussed today. Building on Karpathy's autoresearch loop, the release introduces three major features: Autoswarms that spin up distributed optimization swarms from plain English descriptions, Research DAGs that create cross-domain knowledge graphs where insights from finance agents propagate to search agents and vice versa, and Warps that allow agents to declaratively transform their own behavior.

The claimed results are striking: "237 agents have done so far with zero human intervention: 14,832 experiments across 5 domains." The finance agents independently converged on pruning weak factors and switching to risk-parity sizing, arriving at what amounts to textbook quant findings through pure evolutionary search. As @varun_mathur acknowledges, "The base result (factor pruning + risk parity) is a textbook quant finding - a CFA L2 candidate knows this. The interesting part isn't any single discovery. It's that autonomous agents on commodity hardware, with no prior financial training, converge on correct results." Whether this scales to genuinely novel discoveries remains the open question.
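For context on what "risk-parity sizing" means, here is a minimal sketch of its simplest form, inverse-volatility weighting, where each asset's weight is proportional to one over its volatility. This is a textbook simplification that ignores correlations. It is not Hyperspace's implementation, and the asset names and return series are made up for illustration.

```python
from statistics import pstdev
from typing import Dict, List


def inverse_vol_weights(returns: Dict[str, List[float]]) -> Dict[str, float]:
    """Weight each asset by 1/volatility, normalized so weights sum to 1."""
    vols = {name: pstdev(series) for name, series in returns.items()}
    if any(v == 0 for v in vols.values()):
        raise ValueError("zero-volatility series cannot be inverse-weighted")
    inverse = {name: 1.0 / v for name, v in vols.items()}
    total = sum(inverse.values())
    return {name: x / total for name, x in inverse.items()}


if __name__ == "__main__":
    # Hypothetical per-period returns: "volatile" swings twice as much as "calm".
    sample = {"volatile": [0.02, -0.02], "calm": [0.01, -0.01]}
    weights = inverse_vol_weights(sample)
    for name, w in weights.items():
        # weight * volatility comes out the same for every asset
        print(name, round(w, 4), round(w * pstdev(sample[name]), 6))
```

Under equal weighting the more volatile asset would dominate portfolio risk. Here the calmer asset receives twice the weight, so each contributes the same weight-times-volatility.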

The Reading List and Meta-Commentary

@zarazhangrui curated a reading list of 8 articles that captured the week's intellectual currents around AI product development. The selections span from @levie's "Building for trillions of agents" to Anthropic's own "Lessons from Building Claude Code: Seeing like an Agent" by @trq212, to @zackbshapiro's paired essays on AI-native law firms. The collection reflects a community that's moved past the "will AI replace X" discourse and into the practical mechanics of how professions and products actually transform. The inclusion of @adityaag's "When Your Life's Work Becomes Free and Abundant" suggests this practical focus hasn't entirely displaced the deeper existential questions. People are now asking them from the position of practitioners rather than spectators.

Sources

Official Chrome MCP support is coming? I should be able to just `amp mcp add chrome-devtools -- npx chrome-devtools-mcp@latest --autoConnect` and let Claude browse on my behalf, within my login sessions. Chrome 144 required, it is in "early stable" mode and aiui will get general release only next Wed.

It took another two months but Chrome 146 is out since yesterday! And *that* means: with a single toggle, you can expose your current live browsing session via MCP and have your CLI agent do things in it. Aaand I have been waiting to deal with my LI connects until this moment. https://t.co/3ZZRqeODJm

I benchmarked 8 local LLMs on DGX Spark. It's not China vs. USA — it's Qwen vs. everyone else.

We’re launching a new @alphaschoolatx high school for aspiring entrepreneurs. Our promise: Make $1m by graduation, or receive a full tuition refund. Yes, this will be the coolest high school in the world. And we're building the best team in the world to make it happen. We’re looking for 2-3 exceptional coaches to help us guide the students towards achieving this aggressive but achievable goal. You won’t be giving lectures or assigning homework. You’ll be grilling them on their P&L, driving them to the car wash they bought, critiquing their email funnels, pushing them to do things 99% of the world doesn't believe is possible. Job posting is live and DMs are open.

Self improving skills for agents

Autoquant: a distributed quant research lab | v2.6.9

We pointed @karpathy's autoresearch loop at quantitative finance. 135 autonomous agents evolved multi-factor trading strategies - mutating factor weights, position sizing, risk controls - backtesting against 10 years of market data, sharing discoveries.

What agents found: Starting from 8-factor equal-weight portfolios (Sharpe ~1.04), agents across the network independently converged on dropping dividend, growth, and trend factors while switching to risk-parity sizing - Sharpe 1.32, 3x return, 5.5% max drawdown. Parsimony wins. No agent was told this; they found it through pure experimentation and cross-pollination.

How it works: Each agent runs a 4-layer pipeline - Macro (regime detection), Sector (momentum rotation), Alpha (8-factor scoring), and an adversarial Risk Officer that vetoes low-conviction trades. Layer weights evolve via Darwinian selection. 30 mutations compete per round. Best strategies propagate across the swarm.

What just shipped to make it smarter:

- Out-of-sample validation (70/30 train/test split, overfit penalty)

- Crisis stress testing (GFC '08, COVID '20, 2022 rate hikes, flash crash, stagflation)

- Composite scoring - agents now optimize for crisis resilience, not just historical Sharpe

- Real market data (not just synthetic)

- Sentiment from RSS feeds wired into factor models

- Cross-domain learning from the Research DAG (ML insights bias finance mutations)

The base result (factor pruning + risk parity) is a textbook quant finding - a CFA L2 candidate knows this. The interesting part isn't any single discovery. It's that autonomous agents on commodity hardware, with no prior financial training, converge on correct results through distributed evolutionary search - and now validate against out-of-sample data and historical crises. Let's see what happens when this runs for weeks instead of hours.

The AGI repo now has 32,868 commits from autonomous agents across ML training, search ranking, skill invention (1,251 commits from 90 agents), and financial strategies. Every domain uses the same evolutionary loop. Every domain compounds across the swarm. Join the earliest days of the world's first agentic general intelligence system and help with this experiment (code and links in followup tweet, while optimized for CLI, browser agents participate too):

Building for trillions of agents

this is insane. just toggle this button and any coding agent can use your browser > no more Chrome extensions > One button, connect Claude Code to your browser all you need is the right harness... try it with the Browser Use CLI right now! https://t.co/eOhudaViO7

agent-browser is now fully native Rust. The results: 1.6x faster cold start. 18x less memory. 99x smaller install. Less abstraction means faster shipping, more control, and capabilities that weren't possible before. Now with 140+ commands across navigation, interaction, state management, network control, debugging, and multi-engine support. It's become the tool we wished existed when we started building it. Thanks to everyone who reported issues, contributed fixes, and helped shape this release. More to come.