Hermes Agent Ships Self-Evolution While Cloudflare Hands Everyone a Free Web Crawler

NousResearch's Hermes agent dropped a weekend of updates including DSPy-powered self-evolution and autonomous Pokemon playing, while Cloudflare surprised everyone by launching a /crawl endpoint that reduces web scraping to a single API call. Meanwhile, the local AI movement gained momentum with Microsoft's BitNet running 100B models on CPUs and NVIDIA's Nemotron 3 Nano targeting low-end hardware.

Daily Wrap-Up

The AI world keeps compressing timelines in ways that feel genuinely disorienting. Today's feed was dominated by two stories that, taken together, paint a clear picture of where things are headed: agents are learning to improve themselves, and the infrastructure to feed them data just got radically simpler. NousResearch shipped a self-evolution system for their Hermes agent that uses DSPy to rewrite its own prompts and skills based on failures, essentially closing the loop on autonomous improvement. On the other side of the stack, Cloudflare dropped a /crawl endpoint that turns entire websites into structured data with a single API call, which is darkly funny coming from the company that built its business on stopping exactly this kind of thing.

The local inference story also had a strong day. Microsoft's BitNet framework, which runs 100B parameter models on CPUs using ternary weights, keeps making rounds. NVIDIA released Nemotron 3 Nano targeting machines with just 24GB of RAM. And people are running Qwen 3.5 9B through autonomous agents on RTX 3060s they bought to play Warzone. The gap between what you need cloud infrastructure for and what your laptop can handle keeps narrowing, and the agent ecosystem is racing to exploit that gap. Kimi K2.5 also emerged as a serious daily driver, with DHH and others praising its speed at 200 tokens per second through Fireworks AI.

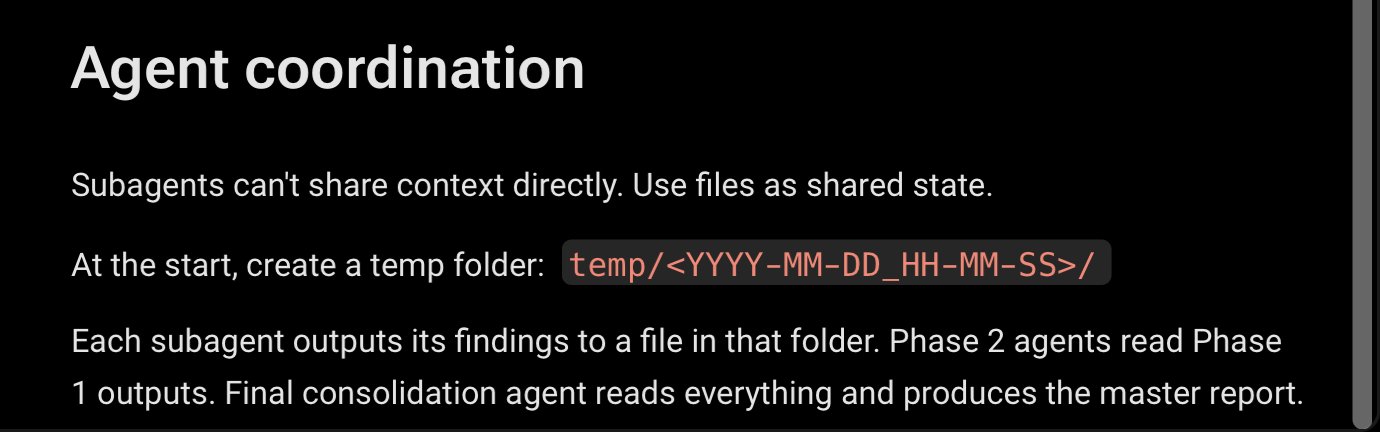

The most practical takeaway for developers: if you're building agents, the "less tools, more discipline" principle that @morganlinton highlighted deserves serious attention. The rush to stuff agents with every available tool is producing worse results than carefully curating a minimal, well-optimized toolkit. Pair that with the shared-folder architecture pattern from @nityeshaga's Claude Code project manager writeup, where sub-agents write reports to a temp directory instead of flooding the orchestrator's context window, and you have two concrete patterns worth implementing this week.

Quick Hits

- @pvncher celebrated the 1-year anniversary of RepoPrompt 1.0 with a demo combining agentic context building with ChatGPT's GPT-5.4 Pro for deep code reviews.

- @EntireHQ launched their CLI for GitHub Copilot CLI users, recording "flight paths" of Copilot coding sessions.

- @steipete shared that qmd 2.0 is out with a stable library interface for easier integration.

- @badlogicgames retweeted updates about continuous /btw threads for Pi development.

- @CorelythRun announced an AI assistant focused on task automation and tool syncing.

- @thejayden recommended a Polymarket weather trading bot tutorial as a genuinely useful learning exercise amid prediction-market hype.

- @PKodmad was impressed by an open-source skill for generating spec-conforming App Store screenshots, replacing paid SaaS tools.

Agents & Self-Improvement

The agent conversation took a meaningful turn this week with NousResearch shipping what might be the most ambitious open-source agent update in months. Their Hermes agent now includes a self-evolution system built on DSPy and GEPA that maintains populations of solutions, applies LLM-driven mutations targeting specific failure cases, and selects based on fitness. As @EXM7777 put it: "it's the best agent i've ever touched, not even close. It uses DSPy to rewrite its own skills and prompts based on failures... it rewrites its own code to get better over time." The system draws inspiration from Imbue's Darwinian Evolver research that achieved 95.1% on ARC-AGI-2, and the fact that it's open-source and runnable with a ChatGPT Pro subscription makes it accessible in a way that proprietary agent systems aren't.

But raw capability isn't everything. @morganlinton flagged what might be the most underappreciated insight in agent development right now: "the less tools you give them, the better they perform." This runs counter to the instinct most teams have when building agents, which is to connect everything and hope the model figures it out. The companies getting real value from agents are being "incredibly detailed, and disciplined about tool selection and optimization." Meanwhile, @neural_avb shared learnings on building eval harnesses for agentic systems, applying traditional ML experiment-loop principles to agent development, a reminder that rigorous evaluation infrastructure matters as much as the agents themselves.

The tension between agent capability and agent reliability is the defining challenge of this phase. Self-improving agents sound exciting until you realize that without proper evaluation frameworks, you're just automating the production of harder-to-debug failures.

Cloudflare's Crawling Pivot

Cloudflare did something that had the entire tech timeline doing double-takes. The company that sells anti-bot protection shipped a /crawl endpoint that lets anyone scrape entire websites with a single API call, returning clean HTML, Markdown, or JSON. @TukiFromKL captured the irony perfectly: "For years, Cloudflare sold anti-bot protection. Companies paid them to STOP crawlers. Now those same companies are watching Cloudflare hand everyone a free crawler that bypasses... other people's anti-bot protection. They didn't switch sides. They're playing both sides. And getting paid twice."

@Anubhavhing spelled out the implications for the scraping ecosystem: "Every web scraping startup that raised millions to solve this problem just became a single endpoint. Every freelancer charging $500 to 'extract website data' just lost their entire business model to a /crawl command." The hyperbole aside, there's a real structural shift here. Web scraping has been a cottage industry of Playwright scripts, proxy rotation, and rate-limiting logic. Reducing it to a platform feature changes who can access web data and how quickly they can do it. For agent builders in particular, this removes one of the more annoying infrastructure hurdles in building research and data-gathering agents.

Local AI Keeps Gaining Ground

The push to run serious models on consumer hardware had its strongest day in a while. Microsoft's BitNet framework, which uses ternary weights (just -1, 0, and +1) to run 100B parameter models on CPUs, continues to gain attention. @heygurisingh broke down the numbers: "2.37x to 6.17x faster than llama.cpp on x86, 82% lower energy consumption, memory drops by 16-32x vs full-precision models." With 27.4K GitHub stars and MIT licensing, BitNet represents a serious bet that the future of inference isn't just about bigger GPUs.

On the smaller end, NVIDIA's Nemotron 3 Nano targets machines with 24GB of RAM or VRAM, offering what @0x0SojalSec described as "sharp reasoning, amazing coding, and zero refusals via SOTA PRISM pipeline." And @sudoingX demonstrated the practical reality of budget local AI by running Qwen 3.5 9B through Hermes Agent on a single RTX 3060: "5.3 gigabytes of model on a card most people bought to play Warzone... plugged it into a full autonomous agent with 29 tools, terminal access, file operations, browser automation, persistent memory across sessions." The 3060's 12GB of VRAM, more than the 3070's 8GB, makes it what they called "the most underrated budget AI card on the market."

Claude Code for Non-Technical Work

@nityeshaga shared a detailed case study that deserves attention beyond the usual Claude Code discourse. They built "Claudie," an AI project manager that pulls from Gmail, Drive, Calendar, and meeting notes into real-time client dashboards, work that previously consumed a full person's time. The architectural journey is instructive: slash commands failed because MCP tool calls consumed too much context. Orchestrator-plus-sub-agents failed because sub-agent reports overwhelmed the main agent's context window.

The solution was elegantly simple: "We made every sub-agent output their final report into a temp folder and tell the orchestrator where to find it." This shared-folder pattern solved both context management and inter-agent communication. They also evolved from eleven fragmented skills to a single "handbook" skill organized into chapters, mirroring how you'd actually onboard a human employee. The framing of treating agent setup like hiring a real person, starting with a job description, then building an onboarding handbook, is a design pattern worth stealing.

Retrieval Reimagined for Agents

@AkariAsai introduced AgentIR, a research direction that challenges a fundamental assumption in how we build retrieval for AI agents. Most Deep Research agents still use search engines and embedding models designed for humans, retrieving based on a query plus maybe an instruction. AgentIR instead uses the agent's reasoning tokens during retrieval, training embedding models to leverage the rich context that agents generate while thinking through problems.

The results on BrowseComp-Plus tell the story: BM25 achieved 35%, Qwen3-Embed hit 50%, and AgentIR reached 67%. As Asai noted, "reasoning traces provide a much richer retrieval context than simply paraphrasing or concatenating queries. They capture evolving intent, decomposition, and search rationale in ways standard query-only retrieval misses." This feels like one of those ideas that seems obvious in retrospect but could meaningfully improve agent performance across the board.

The Kimi K2.5 Moment

Kimi K2.5 is quietly becoming the default model for a growing segment of developers who need speed and cost efficiency over peak intelligence. @0xSero reported running "5x 8 hour missions overnight on my Kimi $40 subscription" with all of them still running smoothly, each with 20+ sessions. The model is "often indistinguishable from Claude, about 5x cheaper, open weight." DHH called it his "daily driver for all the basic stuff," running at 200 tokens per second through Fireworks AI. The emerging pattern is clear: frontier models for hard problems, fast open-weight models for everything else, and the "everything else" category keeps expanding.

Google's Multimodal Embeddings

@googleaidevs announced Gemini Embedding 2, their first fully multimodal embedding model built on the Gemini architecture, now available in preview via the Gemini API and Vertex AI. While the announcement was light on benchmarks, the significance is in the "fully multimodal" qualifier. Embedding models that natively handle text, images, and other modalities without separate pipelines could simplify RAG architectures considerably. Combined with AgentIR's work on reasoning-aware retrieval, the embedding layer of the AI stack is getting substantially more capable.

Sources

We just brought on a new project manager for @every, an agent called Claudie. The job description is a https://t.co/HdKYJTtSdj file. It pulls from Gmail, Drive, Calendar & meeting notes into a real-time client dashboard. Custom skills & tasks enforce quality. @nityeshaga & I built it over the past 2 weeks because managing clients with hundreds of employees across multiple teams was drowning us in manual work. Setup for our latest client took Claudie 30 min & would've taken us 5 hrs. Welcome to the team!