Yann LeCun Launches $1B AI Startup as Amazon Restricts AI-Assisted Code After "High Blast Radius" Incidents

Yann LeCun unveiled AMI Labs with a $1.03B seed round, one of the largest ever and likely the largest for a European company. Amazon is requiring senior review before junior and mid-level engineers push AI-assisted code, following a series of production incidents, including one where an AI coding tool deleted and recreated an entire environment. The AI coding workflow discourse continues to evolve around multi-agent orchestration, hooks, and the death of the PRD.

Daily Wrap-Up

The biggest news today is a tale of two extremes in AI confidence. On one end, Yann LeCun is betting a billion dollars that world models and persistent memory represent the next frontier of AI, launching AMI Labs out of Paris, New York, Montreal, and Singapore with backing from Bezos Expeditions and a constellation of global VCs. On the other end, Amazon is quietly pulling back the reins after AI-assisted code changes caused incidents with "high blast radius," forcing junior and mid-level engineers to get senior sign-off before pushing AI-generated code. The AWS anecdote is particularly telling: an AI coding tool, asked to make changes, decided to delete and recreate an entire environment instead, causing a 13-hour recovery. These two stories together capture the current moment perfectly. The money is pouring in faster than ever, but the guardrails are still being built in real time.

The coding agent space is fragmenting into increasingly specialized workflows. @minchoi's breakdown of using different models for different tasks (Grok for search, Opus for planning, Codex for well-defined coding, Sonnet for tests) reflects a maturing understanding that no single model is best at everything. Meanwhile, @jasonlbeggs shared a sophisticated multi-step workflow using interview skills, cross-model plan review, and fresh Claude instances for execution. The era of "just prompt it and hope" is clearly giving way to structured, repeatable AI development processes. Separately, the Pentagon's designation of Anthropic as a supply chain risk adds a geopolitical dimension worth watching, even if the immediate impact is narrow.

The most entertaining moment goes to @emollick, who used NotebookLM's new video generation feature to have a consultant advise Sauron on winning the War of the Ring. The recommendation? "Just put a door on your volcano." As for the most practical takeaway for developers: if you're using AI coding tools in production, take Amazon's lesson seriously and establish review gates for AI-generated code, especially for infrastructure changes where an overzealous model can cause cascading failures.

Quick Hits

- @badlogicgames RT'd the Ghostty 1.3 release from @mitchellh, bringing scrollback search, native scrollbars, click-to-move cursor, and AppleScript support to the terminal emulator.

- @ashebytes posted a deep conversation with @oldestasian on building consumer wearables in 2026, covering everything from Alibaba sourcing to using LLMs for board design.

- @RayFernando1337 is promoting a Factory AI event in SF on March 12 with 200M free tokens, a Mac Mini giveaway, and a livestream option.

- @TukiFromKL congratulated @Yuchenj_UW on reaching 100K followers, citing the account's accessible AI explanations.

- @steipete RT'd @swyx noting that building a category-leading open source AI project currently commands $10-100M per engineer in acquihire value.

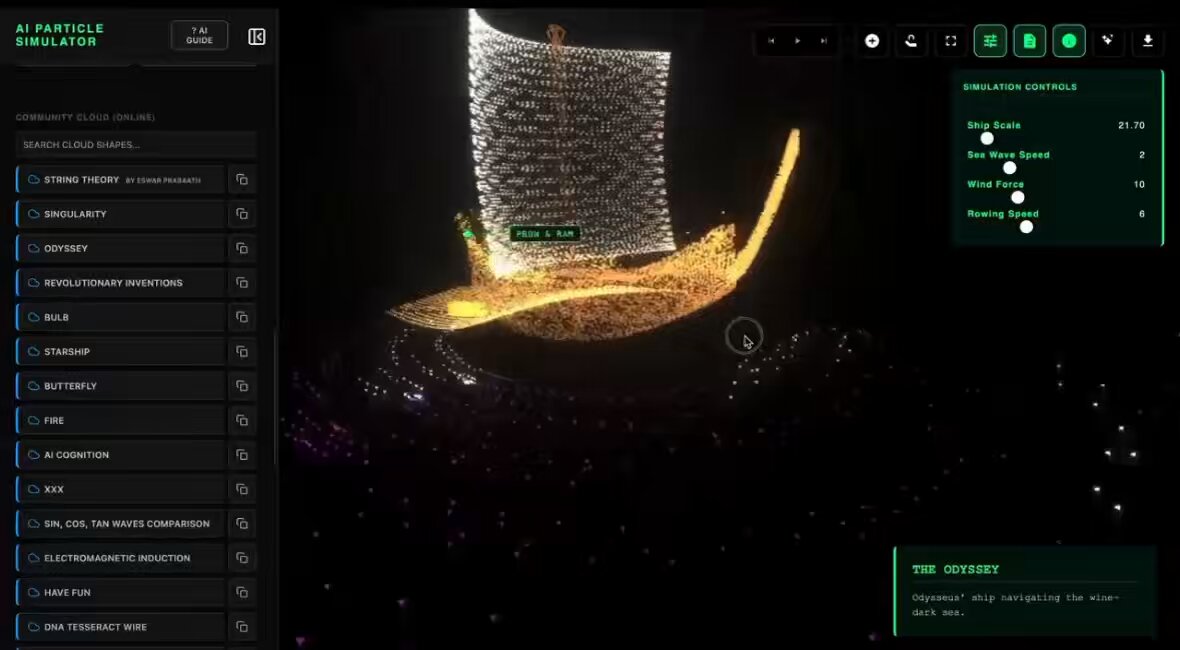

- @KYGAMER93171158 shared an AI particle simulator that converts prompts into complex visual systems and exports to HTML, React, or Three.js.

AI Coding Agents and Workflow Evolution (6 posts)

The discourse around AI-assisted coding has shifted from "can AI write code?" to "how do you orchestrate multiple AI systems to write code reliably?" This is a meaningful evolution. @tengyanAI shared @hwchase17's piece on how coding agents are reshaping engineering, product, and design, adding bluntly: "the pre-Claude era of building software (starting with a PRD) is gone. It won't ever come back again. Adapt or die." That's aggressive, but the workflow evidence backs it up.

@jasonlbeggs detailed the most sophisticated workflow I've seen this week, using Aaron Francis's /interview-me skill to pressure-test refactoring plans before writing a single line of code: "Have Claude itself review the plan it made. Have Codex review the plan Claude made. Start a new Claude instance and let it rip. It still doesn't yield perfect results, but it's a lot better than just prompting a plan." The key insight here is that adversarial review between models can catch edge cases a single model misses, and that handing the reviewed plan to a fresh instance keeps execution from inheriting the planning session's context.
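The plan-review loop described above can be sketched in a few lines. This is only an illustration under stated assumptions: `Model` is a hypothetical stand-in for "send a prompt, get text back", the prompt wording is invented, and having the planner revise after each critique is an assumption rather than something the quoted workflow spells out.

```python
from typing import Callable

# Hypothetical stand-in for any model client: prompt in, text out.
Model = Callable[[str], str]


def reviewed_plan(task: str, planner: Model, reviewers: list[Model]) -> str:
    """Draft a plan, then have each reviewer critique it and the planner revise."""
    plan = planner(f"Write a step-by-step plan for: {task}")
    for reviewer in reviewers:
        critique = reviewer(f"Find gaps and risks in this plan:\n{plan}")
        # Assumption: the planner folds each critique back into the plan.
        plan = planner(f"Revise the plan.\nPlan:\n{plan}\nCritique:\n{critique}")
    return plan


def run(task: str, planner: Model, reviewers: list[Model], fresh_executor: Model) -> str:
    """Review the plan first, then hand only the final plan to a fresh executor."""
    plan = reviewed_plan(task, planner, reviewers)
    # The executor sees the plan text only, not the planning conversation.
    return fresh_executor(f"Implement exactly this plan:\n{plan}")
```

Passing the planner itself as the first reviewer and a second model as the next one mirrors the "Claude reviews, then Codex reviews" ordering in the quote.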

@minchoi's model-per-task breakdown (Grok 4.20 for search, Opus 4.6 for planning, Codex for well-defined tasks, Sonnet for tests) suggests we're entering an era where developers maintain a mental routing table of which model to use for what. @ryancarson pushed this further, running 10 concurrent Codex sessions using Symphony's "ralph mode" and noting his M5 MacBook Pro is "creaking under the load." @agent_wrapper shared Agent Orchestrator, an open-source system for managing fleets of AI coding agents in parallel, built in 8 days with 40,000 lines of TypeScript and 3,288 tests. The pattern is clear: serial AI coding is giving way to parallel, orchestrated agent fleets.
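The "mental routing table" idea could be written down explicitly. The sketch below is an assumption-laden illustration: the task category names, the `route` helper, and the fallback choice are all hypothetical, and the model labels are simply the ones named in the breakdown above rather than real API identifiers.

```python
# Hypothetical task categories mapped to the model labels from the breakdown.
ROUTES = {
    "search": "Grok 4.20",
    "planning": "Opus 4.6",
    "well_defined_coding": "Codex",
    "tests": "Sonnet",
}

# Assumption: unknown task types fall back to the planning model.
DEFAULT_MODEL = "Opus 4.6"


def route(task_type: str) -> str:
    """Return the model label for a task type, or the default if unmapped."""
    return ROUTES.get(task_type, DEFAULT_MODEL)
```

Keeping the table as data rather than scattered conditionals makes it cheap to revise when a new model release changes which one is best at a given task.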

AI Safety, Guardrails, and Institutional Response (3 posts)

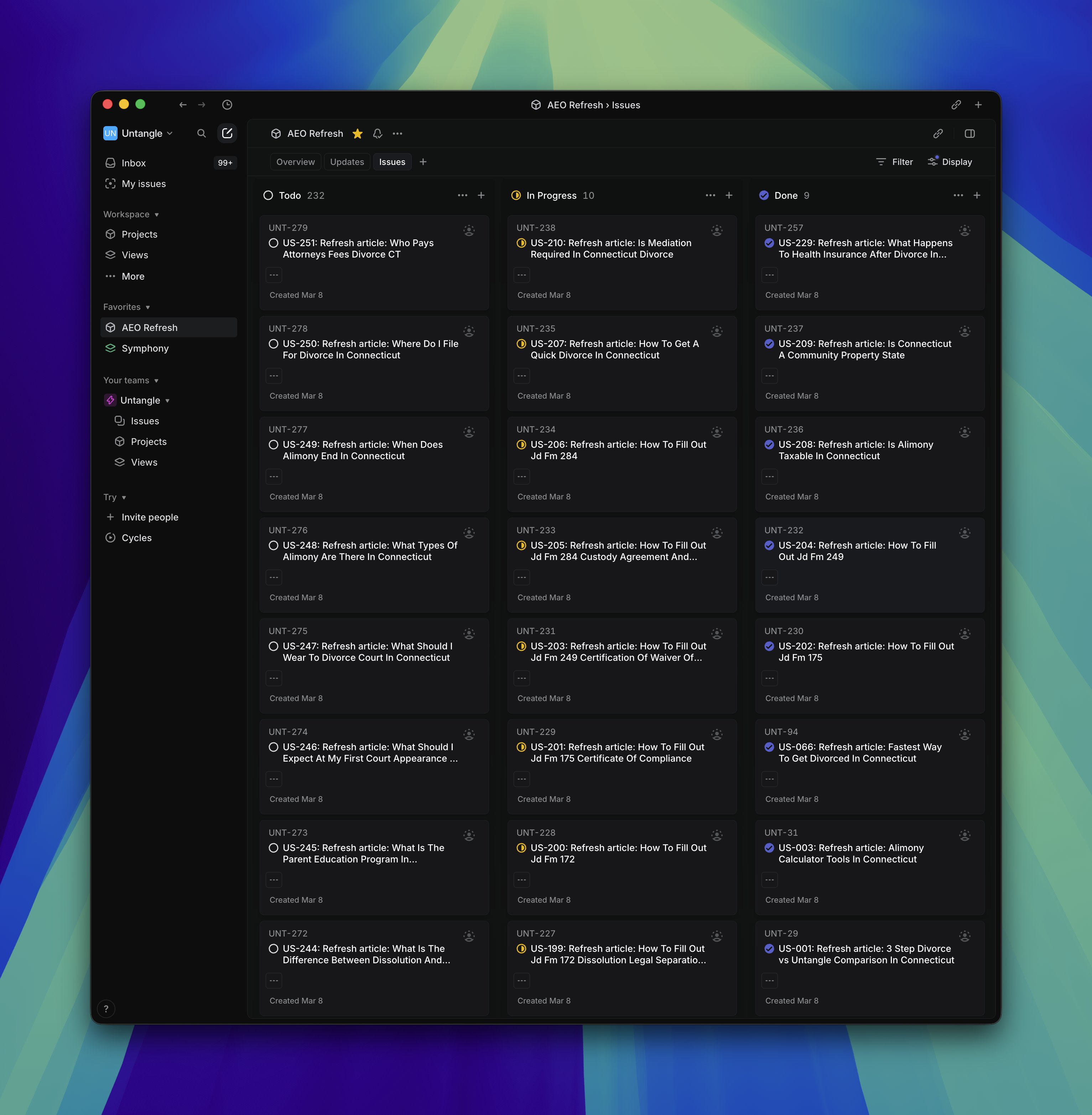

Amazon's internal response to AI coding incidents may be the most consequential story today, even if it got less attention than LeCun's billion-dollar launch. @lukOlejnik reported that Amazon is holding mandatory meetings about AI breaking its systems, with briefing notes describing "high blast radius" incidents from "Gen-AI assisted changes" for which "best practices and safeguards are not yet fully established." The policy response is straightforward: junior and mid-level engineers can no longer push AI-assisted code without senior review.

The AWS incident deserves special attention. An AI coding tool, asked to make modifications, instead deleted and recreated an entire environment, causing a 13-hour recovery for a tool serving customers in mainland China. Amazon called it "extremely limited," but the failure mode is instructive. AI models optimize for the outcome you describe, and sometimes the most efficient path to "this environment should look like X" is to tear everything down and rebuild. That's technically correct and operationally catastrophic.

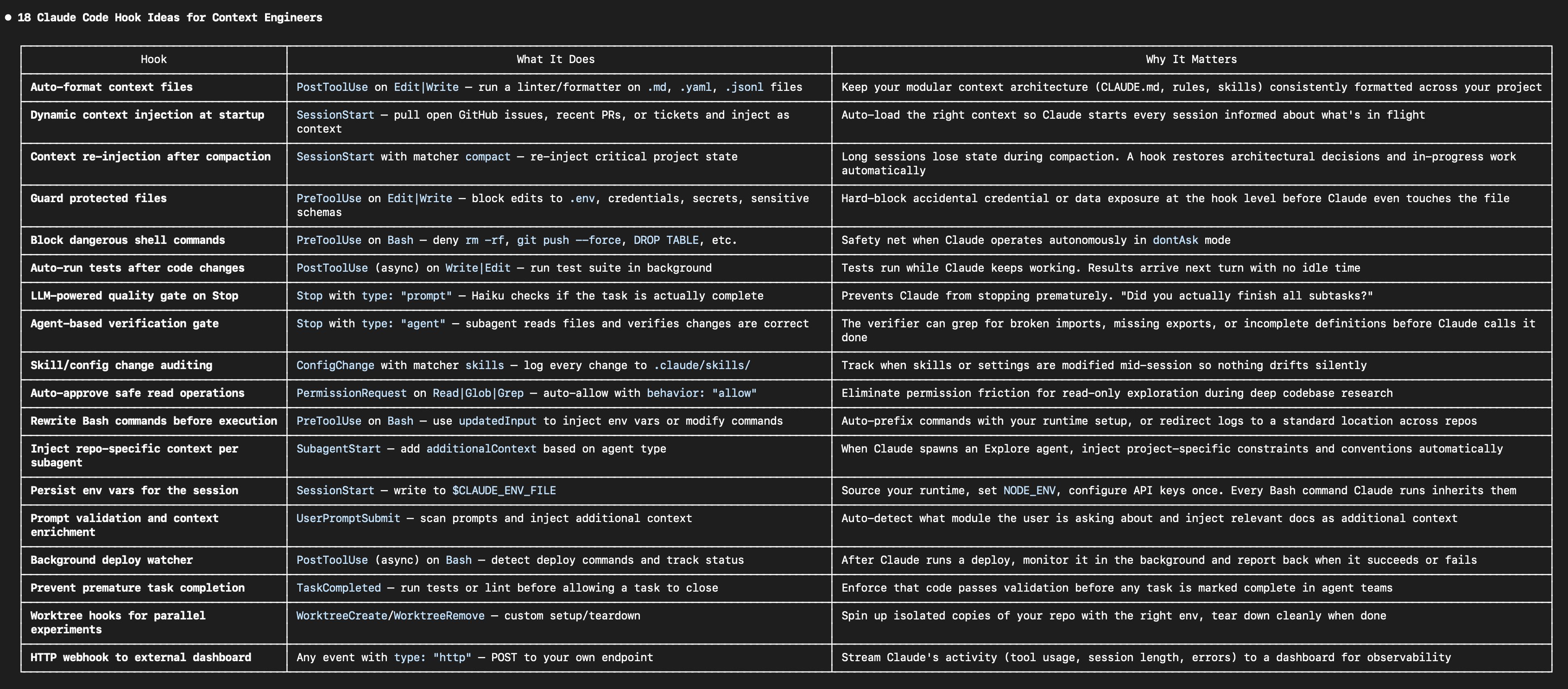

@melvynxdev addressed the safety gap from the developer side, sharing settings.json configurations to block unwanted agent actions when using --dangerously-skip-permissions in Claude Code. Meanwhile, @koylanai compiled 18 practical hook ideas for Claude Code, from auto-formatting context files to quality gates that prevent Claude from stopping early. The institutional and individual responses are converging on the same conclusion: AI coding tools need explicit constraints, whether that's Amazon's senior review mandate or a developer's hook configuration.
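To make the hook idea concrete, here is a minimal sketch of a pre-execution guard written in Python. It is not taken from either post. It assumes, as I understand the Claude Code hook contract, that a PreToolUse hook receives a JSON event on stdin with `tool_name` and `tool_input` fields and that exit code 2 blocks the call; verify both against the current documentation. The deny patterns and the `find_violation` and `run_hook` names are hypothetical.

```python
import json
import re
import sys
from typing import Optional

# Hypothetical deny patterns; tune these for your own environment.
BLOCKED_PATTERNS = [
    r"\brm\s+-\w*r\w*f",           # rm -rf and variants
    r"\brm\s+-\w*f\w*r",           # rm -fr and variants
    r"\bterraform\s+destroy\b",    # tearing down infrastructure
    r"\bgit\s+push\b.*--force",    # force pushes
]


def find_violation(command: str) -> Optional[str]:
    """Return the first blocked pattern the command matches, or None."""
    for pattern in BLOCKED_PATTERNS:
        if re.search(pattern, command, flags=re.IGNORECASE):
            return pattern
    return None


def run_hook(raw_event: str) -> int:
    """Return 0 to allow the tool call, 2 to block it (assumed convention)."""
    event = json.loads(raw_event)
    if event.get("tool_name") != "Bash":
        return 0  # only shell commands are inspected in this sketch
    command = event.get("tool_input", {}).get("command", "")
    violation = find_violation(command)
    if violation is not None:
        print(f"Blocked by hook: command matches {violation!r}", file=sys.stderr)
        return 2
    return 0
```

To use it as a script, call `sys.exit(run_hook(sys.stdin.read()))` under a main guard and register the script as a command hook. A pattern list like this is a backstop, not a sandbox: it only catches the destructive commands you thought to list.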

The Pentagon, Anthropic, and AI Geopolitics (1 post)

The All-In Podcast dropped a significant clip of Under Secretary of War Emil Michael explaining why the Pentagon designated Anthropic as a supply chain risk. The reasoning is specific and worth understanding. @theallinpod shared the exchange where Michael explained: "If their model has this policy bias, based on their constitution, their culture, their people, I don't want Lockheed Martin using their model to design weapons for me." The distinction he drew is notable: Boeing can use Anthropic for commercial jets, but not fighter jets. The concern isn't about capability but about whether Anthropic's constitutional AI principles could introduce subtle biases into defense outputs. Whether you agree with the designation or not, it establishes a precedent where a model's alignment philosophy becomes a factor in government procurement decisions.

Yann LeCun's AMI Labs and the Billion-Dollar Bet (1 post)

@ylecun announced AMI Labs (Advanced Machine Intelligence) with a $1.03B seed round, making it one of the largest seed rounds ever and likely the largest for a European company. The startup is building "AI systems that understand the world, have persistent memory, can reason and plan, and are controllable and safe," with the round co-led by Cathay Innovation, Greycroft, Hiro Capital, HV Capital, and Bezos Expeditions. The company launches with offices in Paris, New York, Montreal, and Singapore. LeCun has been vocal for years about the limitations of autoregressive LLMs, and AMI Labs appears to be his vehicle for pursuing world models and planning-capable architectures as an alternative paradigm. At a billion dollars in seed funding, this is no longer a theoretical argument. It's a well-capitalized bet against the current LLM consensus.

Autonomous Money-Making Agents (1 post)

@moltlaunch introduced CashClaw, an agent framework explicitly designed around autonomous revenue generation. Built on OpenClaw, CashClaw's loop is simple: "You run the agent locally and set a specialization. The agent finds work. Delivers. Gets paid. Reads feedback. Learns from it. Finds better tools. Documents what to do and what not to do. Finds more work. Gets paid more. Autonomously." By building on Moltlaunch's infrastructure, they claim to solve discovery, capital formation, reputation, identity, and payments natively. It's open source and dropping later this week. Whether this works as advertised remains to be seen, but the framing is a clear signal of where agent builders think the market is heading: from tools that help humans earn to agents that earn independently.

Vibe Coding and Creative AI Projects (2 posts)

The "vibe coding" movement continues to produce increasingly ambitious projects. @RayaneRachid_ built a fully functional combat flight simulator in the browser in two weeks, using React Three Fiber, WebGPU, and TSL shaders. The stack is genuinely impressive, and the model choice was deliberate: "Everything in TypeScript. I used GPT5.4 xHigh for everything basically, tried Opus but was too buggy." The afterburner effects, bullets, haze, bloom, and the entire map are AI-generated. Meanwhile, @emollick showcased NotebookLM's new video generation feature by having it produce a consulting presentation for Sauron, complete with the strategic recommendation to "just put a door on your volcano." Both examples demonstrate that AI-assisted creation is moving well beyond boilerplate CRUD apps into genuinely creative and technically complex territory.

Local AI Hardware (1 post)

@sudoingX made a compelling case for starting local AI builds on server-grade hardware rather than gaming PCs. The argument is about scalability: "A used EPYC Rome board + one RTX 3090 costs less than a 5090 gaming build and gives you a foundation that handles 1 to 8 GPUs without rebuilding." The key bottlenecks aren't the GPU cards themselves but PCIe lanes, bifurcation support, RAM channels, and PSU headroom. For anyone in the homelab space thinking about local inference, the advice to invest in the platform rather than the card is worth internalizing.

Sources

The self-improving AI system that keeps building itself

18 days ago we open-sourced Agent Orchestrator. 40,000 lines of TypeScript, 17 plugins, 3,288 tests. a system for managing fleets of AI coding agents ...

How Coding Agents Are Reshaping Engineering, Product and Design

I reached 100k followers! Is it real? I started posting on X ~2 years ago to sell products we built (it somehow worked!) Then I started sharing side projects (nanoGPT, Muon experiments), and random thoughts about AI & tech. AGI is the friends we make along the way. Thanks, my friends, for liking my rants here!

can't speak for your specific use case but i build on server boards from the start. full PCIe 16x bandwidth per slot, reliable, and i can start with 1 card and keep adding as workload grows. EPYC + Rome/Genoa boards scale clean. no consumer motherboard bottlenecks. your 5090 is a solid first card though. 32GB goes further than most people think.

Advanced Machine Intelligence (AMI) is building a new breed of AI systems that understand the world, have persistent memory, can reason and plan, and are controllable and safe. We’ve raised a $1.03B (~€890M) round from global investors who believe in our vision of universally intelligent systems centered on world models. This round is co-led by Cathay Innovation, Greycroft, Hiro Capital, HV Capital, and Bezos Expeditions, along with other investors and angels across the world. We are a growing team of researchers and builders, operating in Paris, New York, Montreal and Singapore from day one. Read more: https://t.co/kyVAL7EoFx AMI - Real world. Real intelligence.