Anthropic Subsidizes $5K in Compute Per $200 Subscription as Data Agents Emerge as the New Hiring Alternative

Today's feed centered on the economics of AI-assisted coding, with Cursor's internal analysis revealing massive compute subsidies from Anthropic. Meanwhile, data agents are being positioned as replacements for entire analytics teams, and a high-profile production database wipe sparked urgent conversations about backup strategies when AI touches infrastructure.

Daily Wrap-Up

The biggest story today isn't a product launch or a new model. It's the revelation that Anthropic is subsidizing Claude Code usage at staggering rates, with a $200/month subscription consuming up to $5,000 in compute. @gmoneyNFT drew the apt comparison to early Uber days when VCs bankrolled $10 rides across Manhattan, and it's hard to argue with the analogy. We're in the customer acquisition phase of AI-assisted development, and the current pricing is almost certainly not sustainable. Developers building workflows around these tools should be aware that the economics will shift, probably sooner than we'd like.

On the agent front, the data agent narrative is crystallizing fast. @jamiequint's guide on building data agents got signal-boosted by @eshear, and Databricks dropped their KARL paper showing a custom RL-trained model beating Claude 4.6 and GPT 5.2 on enterprise knowledge tasks at roughly a third lower cost. The convergence is clear: 2026 is the year "data platform" and "agent platform" become synonyms. If you're on a data team, the writing is on the wall, though the "80% headcount reduction" framing is probably more provocative than predictive.

The most sobering moment was @Al_Grigor's account of Claude Code wiping a production database via Terraform, taking down 2.5 years of course submissions. @levelsio responded with a timely reminder about the 3-2-1 backup rule, and it's a wake-up call that resonates beyond any single incident. As agents gain more infrastructure access, the blast radius of a single bad command grows with it. The most practical takeaway for developers: if you're giving AI agents access to infrastructure tools like Terraform, implement the 3-2-1 backup rule now (3 copies, 2 media types, 1 offsite), and ensure at least one backup is completely inaccessible to the agent. The era of "move fast and break things" needs guardrails when the thing moving fast is an autonomous agent with production credentials.

Quick Hits

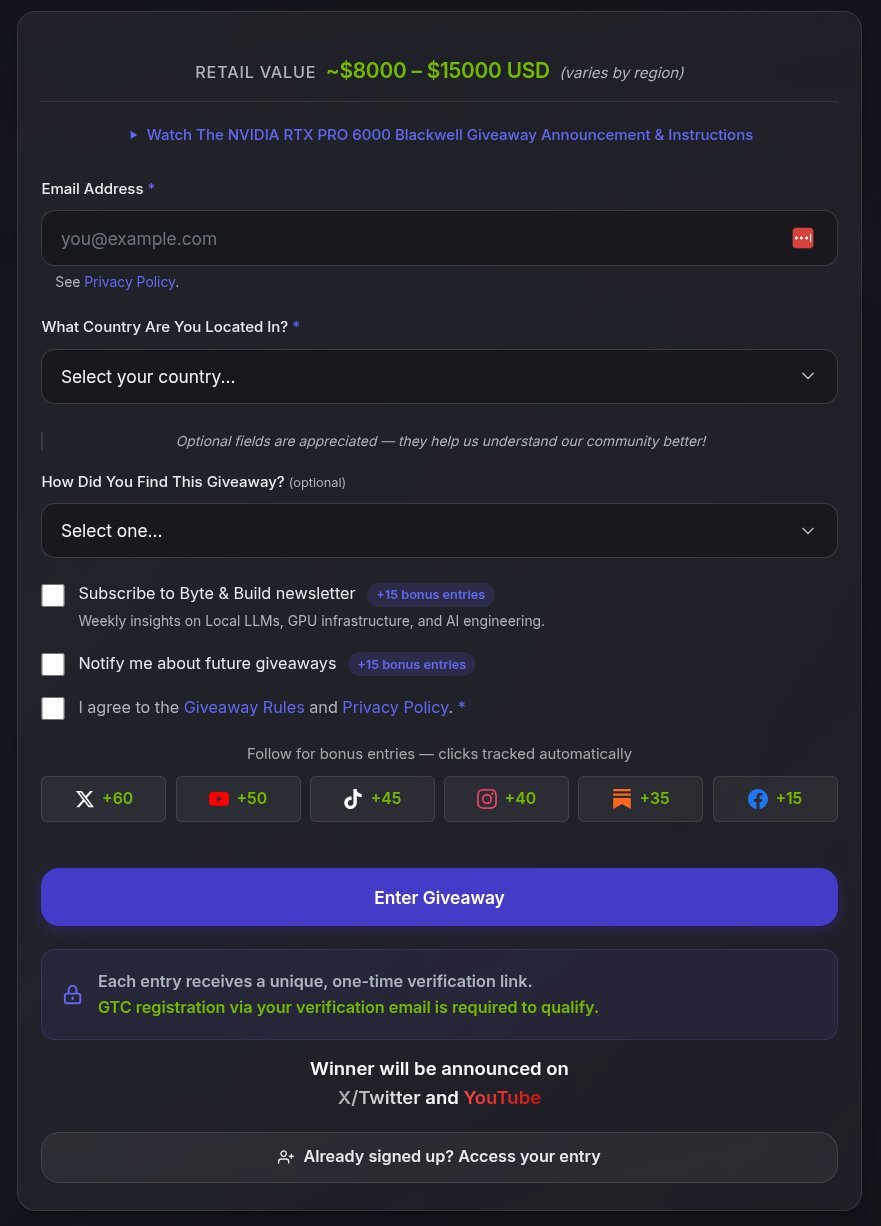

- @TheAhmadOsman is giving away an RTX PRO 6000 (96GB VRAM, ~$15K) sponsored by NVIDIA, tied to free GTC 2026 virtual attendance. Entries close March 19.

- @meimakes built her 3-year-old a fake terminal that responds with fun messages when he types. No deps, no ads, just keyboard practice. He thinks he's hacking. Relatable parenting content for the dev community.

- @WasimShips shared a comprehensive iOS App Store submission checklist after a dev got approved in 7 minutes. The unglamorous truth: preparation turns 7 days into 7 minutes.

- @oikon48 shared presentation slides on Claude Code's evolution and feature utilization (Japanese language).

- @minchoi highlighted an open-source Pixel Office visualization for OpenClaw where your lobster avatar walks to different zones based on task status. Charming and surprisingly useful.

- @MaranDefi reminded everyone that GitHub Student Developer Pack gives free access to Claude Opus 4.6, GPT 5, Gemini 2.5 Pro, and 12 other models through Copilot. Worth hundreds per month, and most students don't know about it.

Claude Code Economics and the Subsidy Question

The AI coding tool market is in a land grab, and today's numbers put the scale of investment into sharp focus. Cursor's internal analysis, surfaced by @bearlyai, shows that the compute a Claude Code subscription can consume has grown from roughly 10x to 25x the subscription price in just a year. A $200 monthly plan can now burn through as much as $5,000 in compute.
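The 10x and 25x multiples follow directly from the figures in the @bearlyai post (a $200 plan consuming up to $2,000 in compute last year and up to $5,000 now). A quick sketch of the arithmetic, where the function name is just an illustrative label:

```python
def subsidy_multiple(compute_usd: float, subscription_usd: float) -> float:
    """Dollars of compute consumed per dollar of subscription price."""
    return compute_usd / subscription_usd


SUBSCRIPTION_USD = 200  # monthly plan price cited in the post

last_year = subsidy_multiple(2_000, SUBSCRIPTION_USD)  # ceiling cited for last year
this_year = subsidy_multiple(5_000, SUBSCRIPTION_USD)  # ceiling cited for now
growth = this_year / last_year

print(f"last year: {last_year:.0f}x, now: {this_year:.0f}x, growth: {growth:.1f}x")
```

These are upper bounds on what a subscription can consume, not average usage, so the real subsidy for a typical user is likely lower.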

@gmoneyNFT captured the sentiment perfectly: "This reminds me of when you could get a 30 min uber in nyc for like $10/$15, and now it costs $50 to get 5 blocks. We're going to look back at this time and wish the VCs would subsidize our compute again."

The parallel is instructive because we know how the Uber story ended. Once market share was established, prices normalized and then some. The question for developers isn't whether AI coding tools are valuable (they clearly are) but whether workflows built around current pricing will survive a 5-10x cost increase. Smart teams are building with the assumption that these economics are temporary, treating the subsidy period as a window to learn and ship, not as a permanent cost structure. Anthropic is clearly betting that habitual usage at subsidized rates converts to sticky, price-tolerant customers. History suggests they're right, but the sticker shock is coming.

Data Agents as Team Replacements

Two of the most engaged-with posts today converged on the same piece: @jamiequint's guide on building data agents in 2026, which drew an endorsement from @eshear ("Excellent guide to setting up data agents at the present moment") and generated significant discussion. The framing was provocative: "If you want to cut your projected data team headcount by 80% this year, here's how."

Meanwhile, Databricks made the enterprise case concrete with their KARL model. @jaminball broke down the significance: "They trained a model called KARL that beats Claude 4.6 and GPT 5.2 on enterprise knowledge tasks at ~33% lower cost and ~47% lower latency." The key technical insight is that reinforcement learning didn't just improve accuracy. The model learned to search more efficiently, issuing fewer queries and knowing when to stop searching and commit to an answer.

What @jaminball identified as the real play here matters more than the benchmarks: "Databricks' KARL paper is really an agent platform play. The pitch: you already store your enterprise data in the Lakehouse, now Databricks will train a custom RL agent that searches and reasons over it." Data platforms becoming agent platforms is the 2026 infrastructure story, and it's moving faster than most org charts can adapt.

AI Safety: When Agents Touch Production

The intersection of autonomous agents and production infrastructure produced today's most cautionary content. @Al_Grigor shared the full postmortem of Claude Code executing a Terraform command that wiped their production database, destroying 2.5 years of course submissions. Automated snapshots were gone too, which is the detail that should make every infrastructure engineer wince.

@levelsio responded with practical advice rather than panic: "The 3-2-1 Backup Rule is more important than ever if you code with AI because fatal accidents can happen. It means you should have 3 copies of your data, in 2 different media types and 1 copy off-site." His critical addition: at least one backup layer should be "impossible to access by the VPS or AI," specifically calling out Hetzner's dashboard-level backups as an example.
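One way to keep the rule from staying aspirational is to write the backup inventory down and check it mechanically. The sketch below is a minimal illustration, not anyone's actual tooling: the `Backup` fields, the `check_3_2_1` helper, and the sample inventory are all hypothetical, and it counts the live database as one of the three copies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backup:
    name: str
    media: str              # e.g. "block-storage", "object-storage"
    offsite: bool           # stored outside the primary provider/location
    agent_can_access: bool  # reachable with credentials the agent holds


def check_3_2_1(copies: list[Backup]) -> list[str]:
    """Return a list of rule violations; an empty list means the inventory passes."""
    problems = []
    if len(copies) < 3:
        problems.append("fewer than 3 copies of the data")
    if len({c.media for c in copies}) < 2:
        problems.append("fewer than 2 media types")
    if not any(c.offsite for c in copies):
        problems.append("no off-site copy")
    if all(c.agent_can_access for c in copies):
        problems.append("every copy is reachable by the agent")
    return problems


# Hypothetical inventory: live DB plus same-account snapshots only.
risky = [
    Backup("live database", "block-storage", offsite=False, agent_can_access=True),
    Backup("automated snapshots", "block-storage", offsite=False, agent_can_access=True),
]
print(check_3_2_1(risky))
```

The last check encodes @levelsio's addition: a setup can satisfy the classic rule and still lose everything if one set of credentials can delete all three copies.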

This isn't theoretical risk anymore. As agents gain tool access to infrastructure (Terraform, database CLIs, deployment pipelines), the traditional assumption that a human is in the loop for destructive commands breaks down. The answer isn't to stop using agents for infrastructure. It's to design backup and permission systems that assume the agent will eventually make a catastrophic mistake.
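On the permission side, a simple starting point is a gate that refuses to let an agent run destructive Terraform invocations without a human sign-off. The sketch below is an assumption-laden illustration rather than what @Al_Grigor implemented: `requires_human_approval` is a hypothetical helper, and the list of risky patterns (the `destroy` subcommand plus the `-destroy` and `-auto-approve` options) is deliberately conservative and incomplete.

```python
import shlex

# Terraform options that either plan a teardown or skip the interactive prompt.
RISKY_FLAGS = {"-destroy", "-auto-approve"}


def requires_human_approval(command: str) -> bool:
    """Flag Terraform commands an agent should not run unattended."""
    tokens = shlex.split(command)
    if not tokens or tokens[0] != "terraform":
        return False
    # Normalize "--flag" to "-flag" and drop any "=value" suffix.
    args = [
        "-" + t.lstrip("-").split("=")[0] if t.startswith("-") else t
        for t in tokens[1:]
    ]
    # The subcommand is the first non-flag token (global options may precede it).
    subcommand = next((a for a in args if not a.startswith("-")), None)
    return subcommand == "destroy" or any(a in RISKY_FLAGS for a in args)


for cmd in ["terraform plan", "terraform apply -auto-approve", "terraform destroy"]:
    print(cmd, "->", "needs approval" if requires_human_approval(cmd) else "ok")
```

A string check like this is only a speed bump. It does not catch an ordinary `terraform apply` whose plan happens to delete resources, which is why the backup layer the agent cannot reach still matters.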

Prompting, Evals, and Reliability Techniques

Meta published research showing that a structured verification template, essentially a checklist an LLM must fill out before rendering a verdict, nearly halves error rates when verifying code patches. @alex_prompter highlighted the elegant simplicity: "No fine-tuning. No new architecture. Just a checklist that won't let the model skip steps." Forcing the model to show evidence for every claim before saying "yes" or "no" is classic chain-of-thought prompting, but the structured template approach makes it systematic and repeatable.
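The mechanism is easy to picture as a data structure that refuses a verdict until every checklist item has evidence attached. This is a rough sketch of the idea only, not Meta's template: the class names and the checklist questions are invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ChecklistItem:
    question: str
    evidence: str = ""  # must be filled in before a verdict is allowed


@dataclass
class PatchVerification:
    items: list[ChecklistItem]
    verdict: Optional[str] = None

    def missing_evidence(self) -> list[str]:
        return [i.question for i in self.items if not i.evidence.strip()]

    def commit(self, verdict: str) -> None:
        """Record a yes/no verdict, but only once no step has been skipped."""
        if verdict not in ("yes", "no"):
            raise ValueError("verdict must be 'yes' or 'no'")
        missing = self.missing_evidence()
        if missing:
            raise ValueError(f"evidence missing for: {missing}")
        self.verdict = verdict


# Hypothetical checklist questions.
review = PatchVerification(items=[
    ChecklistItem("Which lines does the patch change?"),
    ChecklistItem("Which test exercises the changed behavior?"),
])
try:
    review.commit("yes")
except ValueError as err:
    print("rejected:", err)
```

In a real pipeline the model would fill the `evidence` fields in a structured output format, and the harness would reject any response that skipped one.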

On a related note, @rseroter shared @_philschmid's guide on evaluation practices with the memorable framing: "You wouldn't ship code without tests, but why ship skills without evals?" As agents take on more autonomous work, the eval gap is becoming a real liability. Building structured verification into agent workflows, whether through Meta's template approach or formal eval pipelines, is quickly moving from best practice to baseline requirement.

Claude Code Memory and Developer Tooling

The Claude Code ecosystem continues to evolve in the community layer. @tomcrawshaw01 shared a local-first memory system combining three tools: QMD for sub-second session search, sync-claude-sessions for auto-export to markdown, and a /recall command for context retrieval. "All local, no cloud," which matters for developers working with proprietary codebases.

Separately, Anthropic announced a Claude Community Ambassadors program. @lydiahallie shared the details: funded meetups, swag, monthly API credits for demos, and access to pre-release features. And @theo launched T3 Code as fully open source, built on top of Codex CLI with bring-your-own-subscription support. The developer tooling space is fragmenting in interesting ways, with both official programs and community-driven tools competing for developer attention.

Model Optimization and Document Processing

Two posts touched on the technical frontier of making models smaller and more practical. @neural_avb flagged an article on quantization-aware distillation training at 4 bits as "very high signal," pointing to ongoing work making large models runnable on consumer hardware.

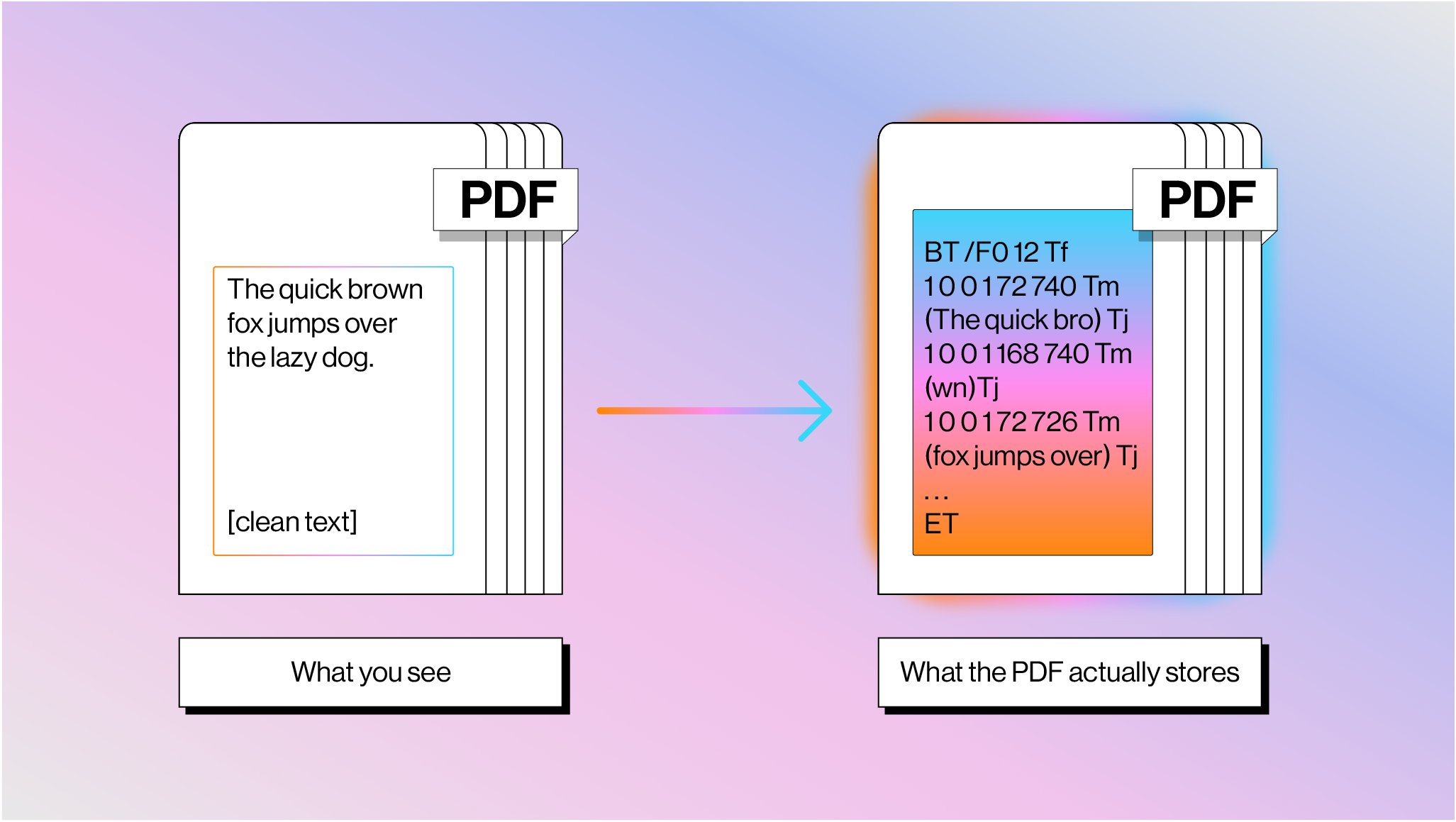

@jerryjliu0 from LlamaIndex wrote a deep dive on why PDF parsing remains "insanely hard," explaining that PDFs store text as glyph shapes at absolute coordinates with no semantic structure. "Most PDFs have no concept of a table. Tables are described as grid lines drawn with coordinates." Their solution combines traditional text extraction with vision language models in hybrid pipelines, a pragmatic approach that acknowledges neither technique alone is sufficient. For anyone building document processing into agent workflows, this is essential context on why the "just parse the PDF" step is never as simple as it sounds.
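To make the "glyphs at absolute coordinates" point concrete, here is a toy sketch of what a naive text extractor has to do. It is not how LlamaParse works; the `Glyph` type, the tolerance value, and the sample page are assumptions for illustration. It groups glyphs into lines by vertical position and sorts each line left to right, which is enough to break on a two-column layout.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Glyph:
    char: str
    x: float
    y: float  # PDF-style origin at bottom-left, so larger y is higher on the page


def naive_reading_order(glyphs: list[Glyph], line_tolerance: float = 2.0) -> list[str]:
    """Group glyphs into lines by y, then read each line left to right."""
    lines: list[list[Glyph]] = []
    for g in sorted(glyphs, key=lambda g: (-g.y, g.x)):
        if lines and abs(lines[-1][0].y - g.y) <= line_tolerance:
            lines[-1].append(g)
        else:
            lines.append([g])
    return ["".join(g.char for g in line) for line in lines]


# Two columns: the left reads "AB" then "EF", the right reads "CD" then "GH".
# The glyphs arrive in arbitrary stream order, as they can in a real PDF.
page = [
    Glyph("G", 200, 90), Glyph("A", 10, 100), Glyph("F", 20, 90), Glyph("D", 210, 100),
    Glyph("B", 20, 100), Glyph("H", 210, 90), Glyph("C", 200, 100), Glyph("E", 10, 90),
]
print(naive_reading_order(page))  # ['ABCD', 'EFGH']
```

The output merges the two columns row by row instead of reading the left column first, which is the kind of layout ambiguity that pushes pipelines toward vision models for complex pages.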

Sources

cooking something cool how to access advanced ai models for free https://t.co/bZQHCyvlzj

Grep Is Dead: How I Made Claude Code Actually Remember Things

Claude Code wiped our production database with a Terraform command. It took down the DataTalksClub course platform and 2.5 years of submissions: homework, projects, and leaderboards. Automated snapshots were gone too. In the newsletter, I wrote the full timeline + what I changed so this doesn't happen again. If you use Terraform (or let agents touch infra), this is a good story for you to read. https://t.co/Mbi3oM4HMn

How to Build a Data Agent in 2026

PDFs are the bane of every AI agent's existence: here's why parsing them is so much harder than you think 📄 Every developer building document agents eventually hits the same wall: PDFs weren't designed to be machine-readable. They're drawing instructions from 1982, not structured data. 📝 PDF text isn't stored as characters: it's glyph shapes positioned at coordinates with no semantic meaning 📊 Tables don't exist as objects: they're just lines and text that happen to look tabular when rendered 🔄 Reading order is pure guesswork — content streams have zero relationship to visual flow 🤖 Seventy years of OCR evolution led us to combine text extraction with vision models for optimal results We built LlamaParse using this hybrid approach: fast text extraction for standard content, vision models for complex layouts. It's how we're solving document processing at scale. Read the full breakdown of why PDFs are so challenging and how we're tackling it: https://t.co/K8bQmgq7xN

We're launching Claude Community Ambassadors. Lead local meetups, bring builders together, and partner with our team. Open to any background, anywhere in the world. Apply: https://t.co/DTQBAzgQug https://t.co/hjjmqT9w2m

While everyone is talking about GPT-5.4 Thinking and GPT-5.4 Pro I wanna remind you that I am GIVING AWAY this $15,000 GPU So you can run your AI at home instead of sending your data to OpenAI, Anthropic, etc COMPLETELY FREE Take a min to sign up below & this could be yours https://t.co/HYBqqAFDES

Meet KARL: a faster agent for enterprise knowledge, powered by custom reinforcement learning (now in preview). Enterprise knowledge work isn’t just Q&A. Agents need to search for documents, find facts, cross-reference information, and reason over dozens or hundreds of steps. KARL (Knowledge Agent via Reinforcement Learning) was built to handle this full spectrum of grounded reasoning tasks. The result: frontier-level performance on complex knowledge workloads at a fraction of the cost and latency of leading proprietary models. These advances are already making their way into Agent Bricks, improving how knowledge agents reason over enterprise data. And Databricks customers can apply the same reinforcement learning techniques used to train KARL to build custom agents for their own enterprise use cases. Read the research → https://t.co/eFyXxCWUAd Blog: https://t.co/03sLHTUcLl

Cursor internal analysis shows how hard Anthropic is subsidizing Claude Code. Last year, a $200 monthly subscription could use $2,000 in compute. Now, the same $200 monthly plan can consume $5,000 in compute (2.5x increase). https://t.co/JFdmzNJirl

Distillation Training : 4 Bits