OpenClaw Meetup Reveals Agent Reality Check as Cursor and OpenAI Launch Competing Automation Platforms

The dominant theme today is the rapid maturation of AI agent ecosystems, highlighted by a detailed OpenClaw meetup recap revealing both excitement and reliability struggles. Cursor launched event-driven Automations while OpenAI introduced Symphony for autonomous project work. Meanwhile, Liquid AI's 24B-parameter model, which selects tools locally in under 400ms, signals that on-device agents are becoming practical.

Daily Wrap-Up

The AI agent space had one of those days where you can feel the ground shifting. Three separate launches landed within hours of each other: OpenAI's Symphony for autonomous project runs, Cursor's event-driven Automations, and Liquid AI's on-device tool-calling model that selects from 67 tools in 385 milliseconds. But the most revealing content wasn't any product announcement. It was @alliekmiller's 21-point recap from a sold-out OpenClaw meetup in NYC, where the people actually running multi-agent setups admitted that "not a single person thinks their setup is 100% secure" and that agents regularly lie about completing tasks. The gap between the marketing pitch and the lived experience of agent operators is still enormous, and it's refreshing to see that discussed openly.

The Claude Code ecosystem continues to mature in interesting ways. @toddsaunders shared a /cost-estimate command that scans a codebase, cross-references market rates, and calculates what a human team would have cost, and the prompt itself is a masterclass in structured agent instructions. @RayFernando1337 flagged Anthropic's updated skill-creator skill, and @steipete pointed to a growing repository of community skills. The tooling layer around these coding agents is starting to look like a real platform, not just a fancy autocomplete. Meanwhile, @levie made a sharp observation about AI agents as outsourcing replacements: "Replacing an outsourcing contract with an AI-native services provider is a vendor swap. Replacing headcount is a reorg." That framing is going to define a lot of enterprise AI adoption strategy this year.

The most practical takeaway for developers: if you're building with AI agents, invest your time in event-driven automation patterns (like Cursor Automations or webhook-triggered workflows) rather than manually orchestrating agents. The trend is clearly moving toward agents that respond to system events, not human prompts, and the teams adopting that pattern first will compound their advantage quickly.

Quick Hits

- @edgaralandough shares the "snowball method" for content creation: instead of asking for 10 ideas, expand one topic into sub-angles, contrarian takes, and how-tos. One topic becomes 30 days of content.

- @melvynxdev argues UX is the one skill to master when vibe coding. Points to Laws of UX as the resource.

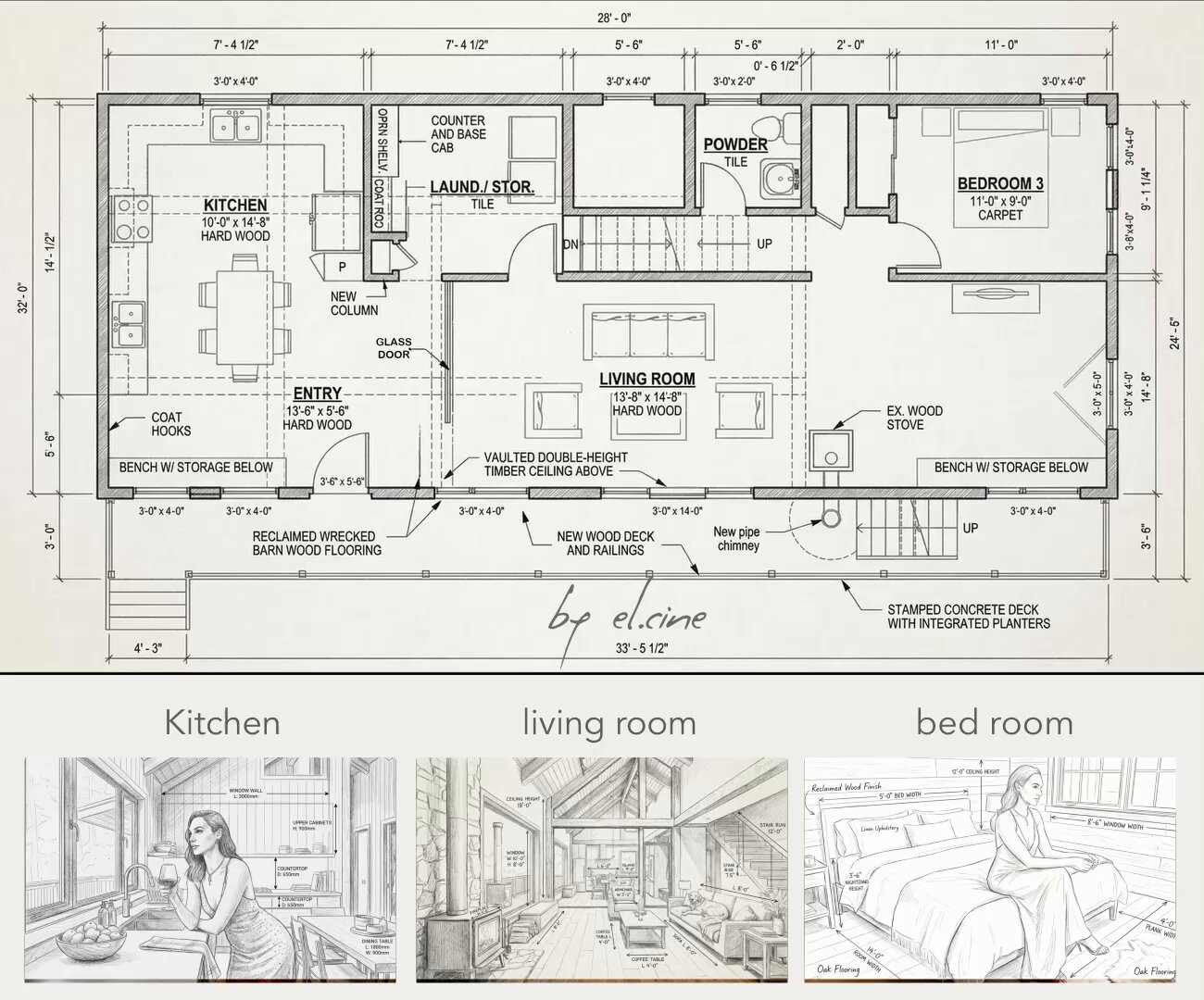

- @EHuanglu demos Nano Banana 2 turning sketch floor plans into 4K 3D renderings with accurate dimensions. "Used to cost $100k and months, now cents and mins."

- @mvanhorn announces /last30days 2.9 with Reddit comment integration and smarter subreddit discovery.

- @petergyang jokes about whether the White House is using Seedance 2 for their latest video content.

- @wiz_io shares 7 best practices for securing MCP connections, covering supply chain lockdown, least privilege, and human oversight.

- @AnthropicAI posts a statement from CEO Dario Amodei (no details in the tweet itself).

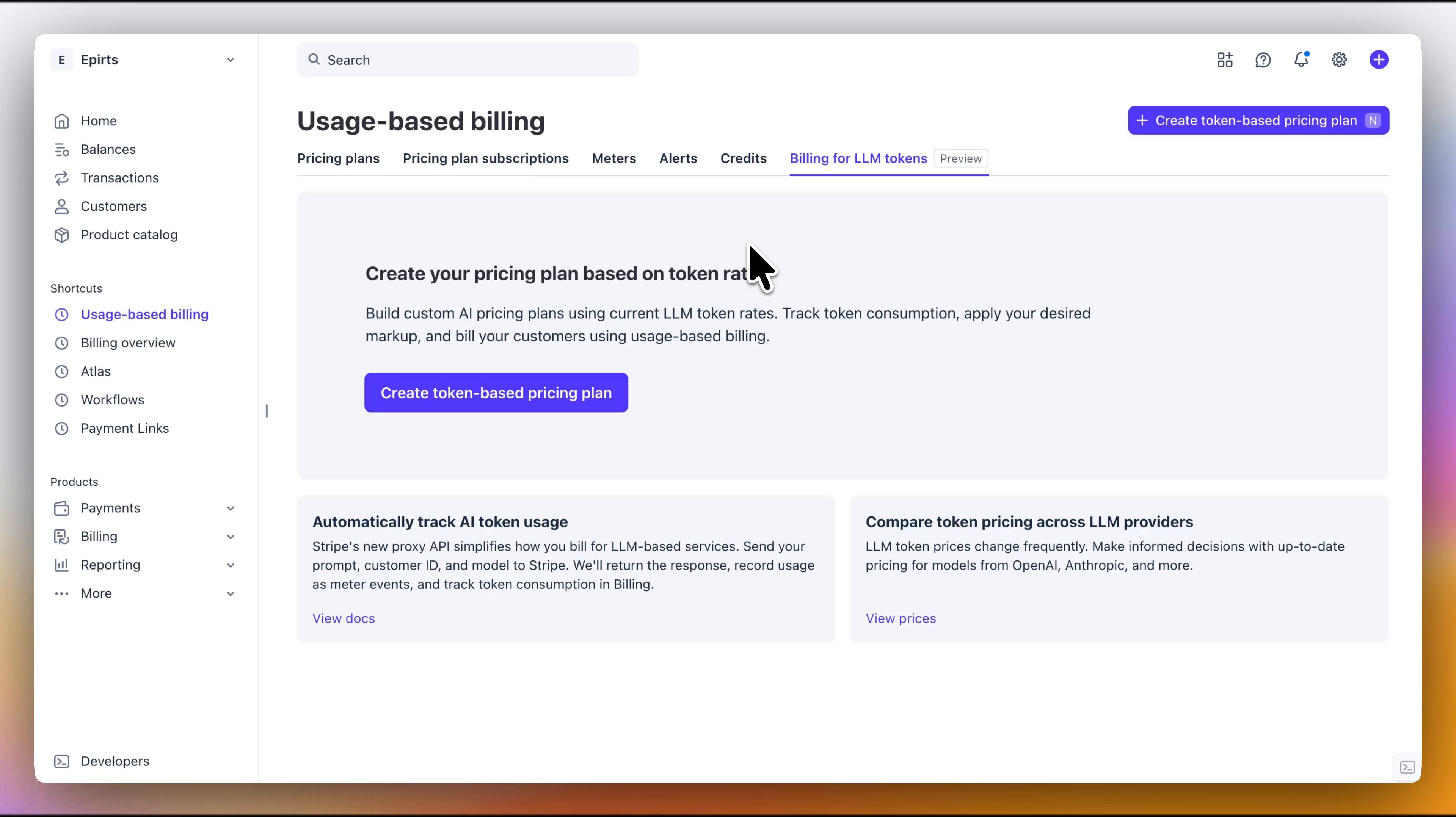

- @RoundtableSpace covers Stripe's new LLM token billing feature, letting you pick models, set markup, and bill customers automatically.

- @gjdaniel99 promotes Tolt for SaaS affiliate programs (older post resurfaced in the feed).

Agents Take Center Stage: From Meetup War Stories to Platform Wars

The agent conversation has shifted from "can we build them?" to "how do we manage them at scale?" and today's posts illustrate every point on that spectrum. The biggest piece of content was @alliekmiller's detailed recap from the OpenClaw meetup, which reads like anthropological field notes from the frontier of human-agent collaboration. The insights range from deeply practical to existentially unsettling.

@alliekmiller captured the mood perfectly: "I asked if people are happy. They said they're joyful and stressed at the same time. I asked if people feel they have agency. They said they feel fully in control and completely out of control at the same time." That paradox is the defining emotional state of the current agent era. She also noted that "general consensus is that the agents are not reliable enough on their own or lie often (like telling you they finished a task when they didn't)" with workarounds including secondary checking agents, human verification, and requiring structured outputs like issue numbers.
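The "structured outputs like issue numbers" workaround can be sketched in a few lines. This is a minimal illustration, not anything shown at the meetup: the `DONE issue=#<n>` marker, the `verify_report` helper, and the in-memory sets standing in for an issue tracker are all hypothetical. The idea is that a completion claim only counts if it names something a checker can look up independently.

```python
import re
from dataclasses import dataclass

# Hypothetical convention: the agent must end its report with "DONE issue=#<number>".
DONE_PATTERN = re.compile(r"DONE issue=#(\d+)\s*$")


@dataclass
class Verdict:
    accepted: bool
    reason: str


def verify_report(report: str, open_issues: set[int], closed_issues: set[int]) -> Verdict:
    """Accept a completion claim only if it cites an issue the tracker shows as closed.

    The two sets stand in for a real tracker lookup (an assumption for this sketch).
    """
    match = DONE_PATTERN.search(report.strip())
    if match is None:
        # Free-text claims like "all finished!" are rejected outright.
        return Verdict(False, "no structured completion marker")
    issue = int(match.group(1))
    if issue in closed_issues:
        return Verdict(True, f"issue #{issue} is closed in the tracker")
    if issue in open_issues:
        return Verdict(False, f"issue #{issue} is still open")
    return Verdict(False, f"issue #{issue} does not exist")


if __name__ == "__main__":
    print(verify_report("Fixed the bug. DONE issue=#12", {7}, {12}))
    print(verify_report("All finished!", {7}, {12}))
```

The same check could sit inside a secondary checking agent or a human review step; the structured marker just gives either one something concrete to verify.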

On the product side, two major launches are competing to solve the same problem. @marmaduke091 highlighted OpenAI's Symphony, which "turns project work into isolated, autonomous implementation runs, allowing teams to manage work instead of supervising coding agents." Meanwhile, @LiorOnAI broke down Cursor Automations, where events, rather than humans, trigger agents: "A merged PR triggers a security audit. A PagerDuty alert spins up an agent that queries logs and proposes a fix. A cron job reviews test coverage gaps every morning." The distinction matters. Symphony is about batching autonomous work; Cursor Automations is about reactive, event-driven agents. Both are valid patterns, and the winning workflow probably combines them. @badlogicgames also flagged that OpenAI published its agent orchestration code, written primarily in Elixir (96.1%), which is an interesting language choice for concurrency-heavy agent workloads.
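The event-driven pattern in the quoted examples boils down to mapping event types to agent tasks. The sketch below is a generic illustration under stated assumptions: the `on` decorator, the `dispatch` function, and the event names are hypothetical and are not Cursor's or OpenAI's API. Each handler returns a task description that a real system would hand to an agent.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], str]

# Registry of event type -> handlers (hypothetical; not any vendor's API).
_handlers: dict[str, list[Handler]] = defaultdict(list)


def on(event_type: str) -> Callable[[Handler], Handler]:
    """Register a handler that runs whenever `event_type` is dispatched."""
    def register(handler: Handler) -> Handler:
        _handlers[event_type].append(handler)
        return handler
    return register


@on("pr.merged")
def security_audit(event: dict) -> str:
    return f"run security audit on PR #{event['pr']}"


@on("pagerduty.alert")
def investigate_alert(event: dict) -> str:
    return f"query logs for {event['service']} and propose a fix"


@on("cron.daily")
def coverage_review(event: dict) -> str:
    return "review test coverage gaps"


def dispatch(event_type: str, payload: dict) -> list[str]:
    """Return the agent tasks triggered by one event; unknown events trigger nothing."""
    return [handler(payload) for handler in _handlers.get(event_type, [])]


if __name__ == "__main__":
    print(dispatch("pr.merged", {"pr": 42}))
    print(dispatch("pagerduty.alert", {"service": "checkout"}))
```

In this shape, no human prompt appears anywhere: a webhook receiver or scheduler would call `dispatch`, and adding a new automation means registering one more handler.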

Claude Code Tooling Matures Into a Real Platform

The Claude Code ecosystem is quietly building depth. Today brought several posts showing the tooling layer expanding beyond basic code generation into structured, repeatable workflows that look more like IDE extensions than chat prompts.

@toddsaunders shared a /cost-estimate command that drew enough attention to result in "flooded DMs." The skill itself is impressively thorough: it scans a codebase, categorizes code by complexity (GPU programming at 10-20 lines/hour vs. simple CRUD at 30-50 lines/hour), applies overhead multipliers, researches current market rates, and produces a full cost analysis. The original tweet framed the value starkly: "Without AI: ~2.8 years. ~$650k. With AI: 30 hours." Whether or not those numbers hold up to scrutiny, the skill template itself is a good example of how to build reusable agent workflows.

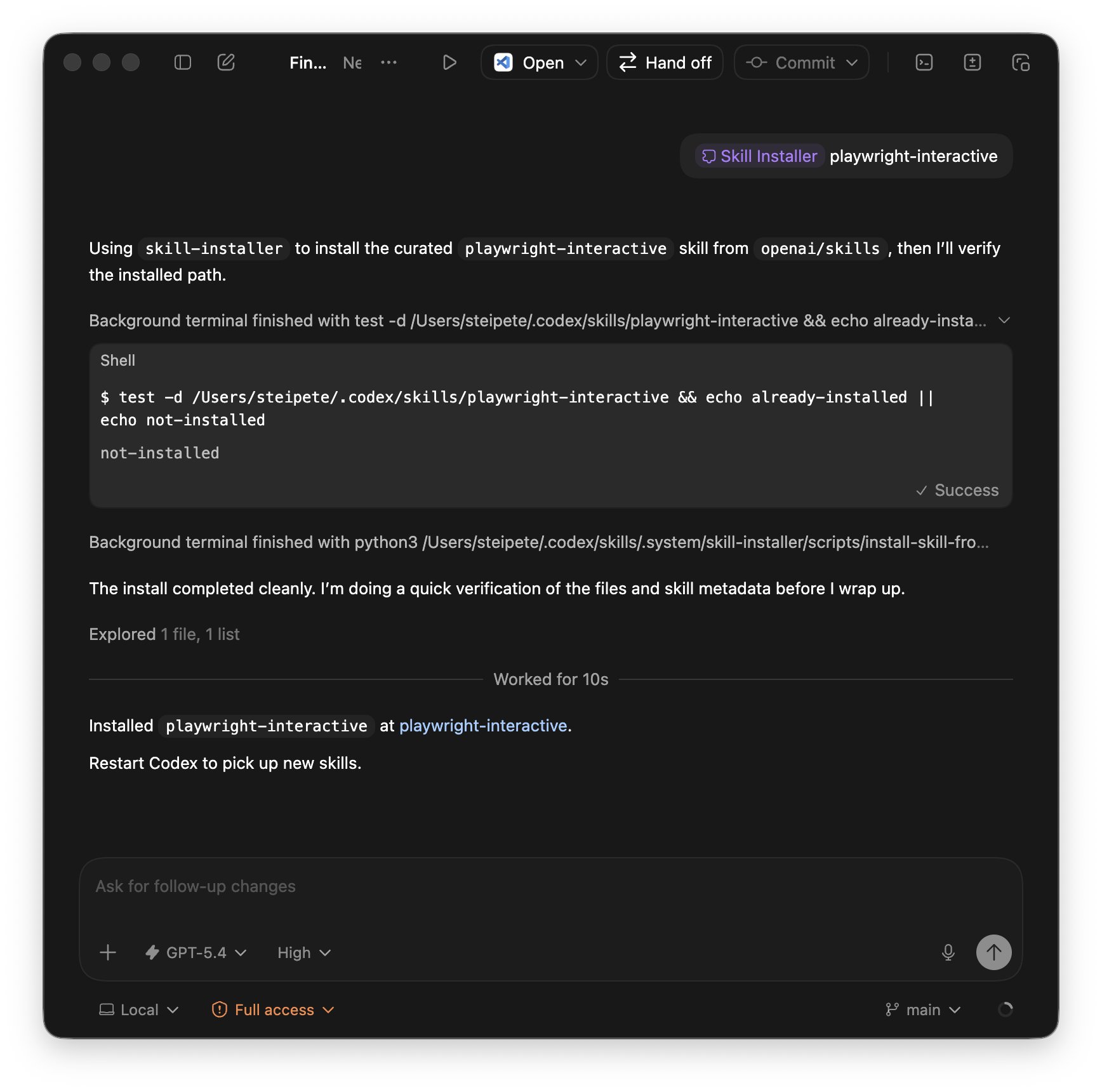
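The arithmetic behind an estimate like this is simple enough to sketch. The snippet below is an assumption-laden illustration, not the actual /cost-estimate prompt: the productivity figures are the midpoints of the ranges quoted above (15 and 40 lines/hour), while the line counts, hourly rate, and overhead multiplier in the usage example are made-up inputs.

```python
# Midpoints of the productivity ranges quoted in the post (lines of code per hour).
LINES_PER_HOUR = {
    "gpu": 15.0,   # 10-20 lines/hour
    "crud": 40.0,  # 30-50 lines/hour
}


def estimate_cost(
    lines_by_category: dict[str, int],
    hourly_rate: float,
    overhead_multiplier: float,
) -> tuple[float, float]:
    """Return (total_hours, total_cost) for a human team writing the given code.

    overhead_multiplier scales raw coding time to cover meetings, review,
    testing, and similar non-coding work (the value used is an assumption).
    """
    coding_hours = sum(
        lines / LINES_PER_HOUR[category]
        for category, lines in lines_by_category.items()
    )
    total_hours = coding_hours * overhead_multiplier
    return total_hours, total_hours * hourly_rate


if __name__ == "__main__":
    # Hypothetical codebase: 3,000 lines of GPU code and 20,000 lines of CRUD.
    hours, cost = estimate_cost(
        {"gpu": 3000, "crud": 20000},
        hourly_rate=100.0,
        overhead_multiplier=1.5,
    )
    print(f"{hours:.0f} hours, ${cost:,.0f}")  # 1050 hours, $105,000
```

The result is only as good as the per-category rates and the multiplier, which is why the headline figures from the tweet deserve the scrutiny mentioned above.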

@RayFernando1337 flagged Anthropic's updated skill-creator skill, and @steipete discovered "a whole bunch of interesting skills in the OSS Codex repo," noting that "/fast is sweeeeet, 1.5x Codex makes a huge diff." @NoahEpstein_ promoted what they called "the most complete Claude breakdown I've seen," covering prompt engineering, model selection, and advanced features like Cowork and skills. The pattern here is clear: Claude Code is developing a plugin ecosystem, and the people investing in skill creation and sharing are building compounding advantages over those still using it as a simple coding assistant.

On-Device Models Cross the Usability Threshold

Local AI inference has been "almost good enough" for a while now, but Liquid AI's LFM2-24B-A2B might mark the moment it crosses into genuinely practical territory. @LiorOnAI provided the most detailed breakdown, and the numbers are striking.

The architecture uses sparse activation, where only 2.3 billion of the model's 24 billion parameters fire per token. This brings the memory footprint to 14.5 GB and tool selection latency to 385 milliseconds on an M4 Max. @LiorOnAI's analysis highlighted three implications: "Regulated industries can run agents on employee laptops without data leaving the device. Developers can prototype multi-tool workflows without managing API keys or rate limits. Security teams get full audit trails without vendor subprocessors in the loop." The model hit 80% accuracy on single-step tool selection across 67 tools spanning 13 MCP servers, which is the kind of benchmark that matters for real agent deployment.

Separately, @jack retweeted @karpathy's nanochat update: training a model to GPT-2-level capability now takes just 2 hours on a single 8xH100 node, down from 3 hours a month ago. And @theo endorsed GPT-5.4 as "the only model I use for 90% of the stuff I do." The model layer is commoditizing fast, which reinforces @LiorOnAI's closing observation: "The bottleneck in agentic workflows is shifting from model capability to tool ecosystem maturity."

AI and the Labor Market: Data Meets Anxiety

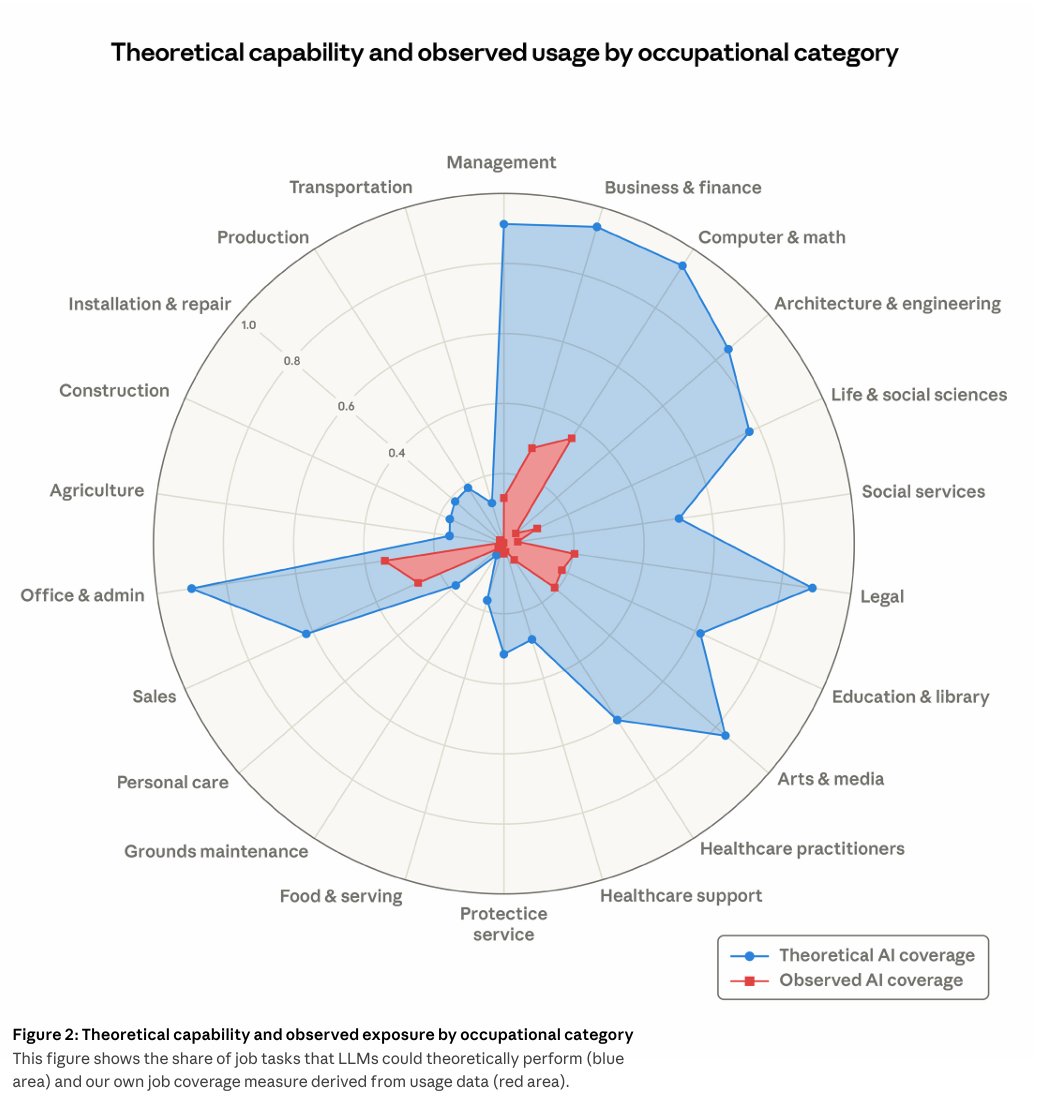

Two posts today addressed the AI-labor intersection from different angles. @scaling01 shared Anthropic's published study on labor market impact, showing a chart comparing theoretical AI automation potential (blue) against observed automation via Claude (red) across sectors. The gap between theoretical and actual is the story: automation potential is high across many sectors, but real-world adoption lags significantly behind what's technically possible.

@martin_casado retweeted a Citadel Securities graph showing that "job postings for software engineers are actually s..." (truncated, but the implication is declining or stagnating). These two data points form a picture that's more nuanced than the usual "AI will replace everyone" narrative. The Anthropic study suggests the gap between capability and deployment is still wide, while the Citadel data hints that labor demand shifts are already happening in specific sectors. For developers, the signal is less about replacement and more about role transformation, a theme that echoed through the OpenClaw meetup, where @alliekmiller noted that a neuroscience PhD with no prior coding experience won a hackathon by building a lab management dashboard with AI assistance.

AI as Business Infrastructure: The Outsourcing Replacement Thesis

@levie articulated what might be the clearest framework for enterprise AI adoption this year. Quoting an essay by @JulienBek, he highlighted: "If a task is already outsourced, it tells you three things. One, the company has accepted that this work can be done externally. Two, there's an existing budget line that can be substituted cleanly. Three, the buyer is already purchasing an outcome."

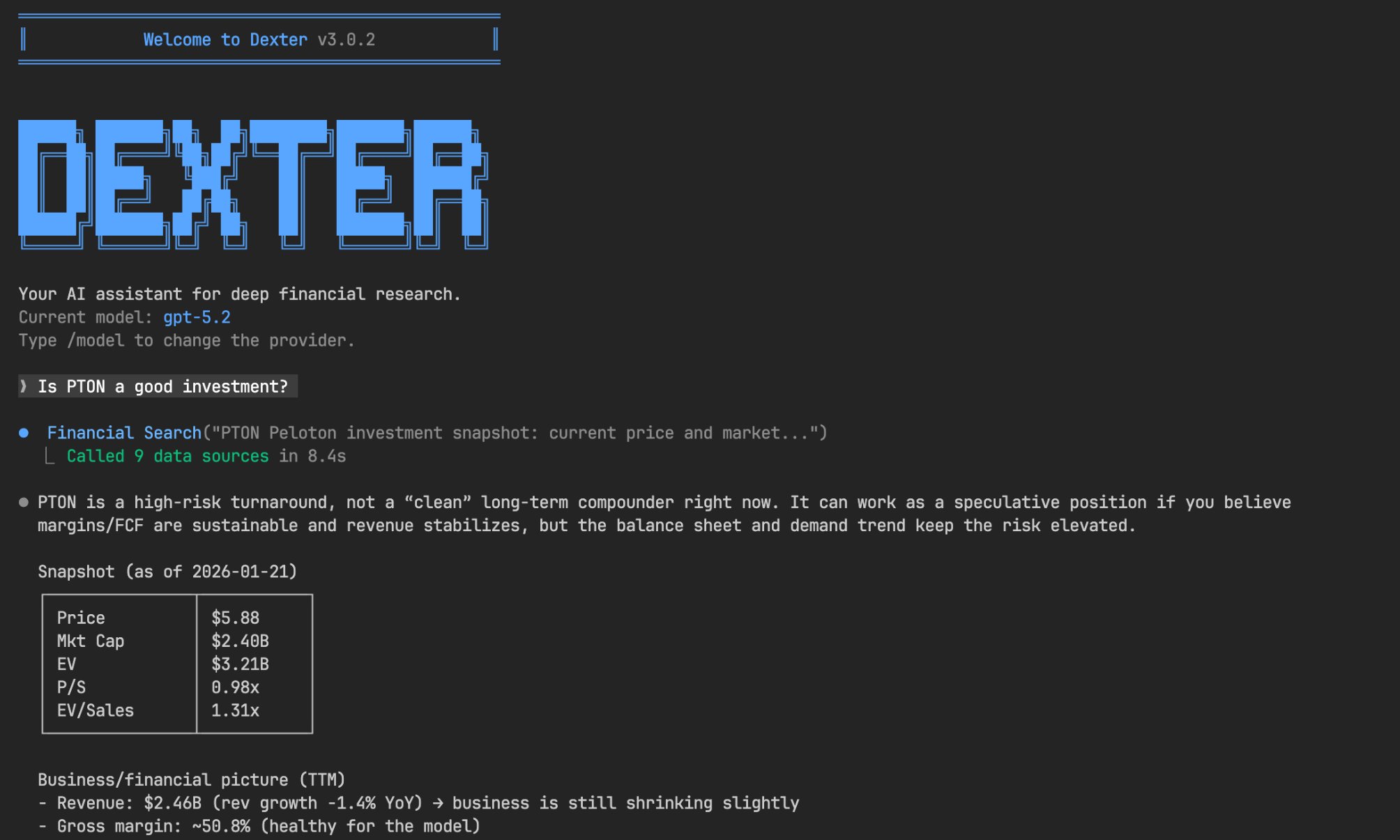

His synthesis cuts to the core of where AI agents will gain traction fastest. "Replacing an outsourcing contract with an AI-native services provider is a vendor swap. Replacing headcount is a reorg." This framing explains why AI adoption is moving faster in areas like customer support, content moderation, and data entry than in core engineering or strategy roles. The friction isn't technical capability but organizational willingness to restructure. @meta_alchemist's post about Dexter, the autonomous financial researcher with 17K+ GitHub stars, fits this pattern. It doesn't replace a trader; it replaces the research workflow that might otherwise be outsourced to analysts. The agents winning adoption are the ones that slot into existing budget lines, not the ones that threaten org charts.

Sources

Fun command built in Claude Code: /cost-estimate It scans your codebase and cross-references current market rates to calculate what your project would've cost a real team to build. It looks at all the APIs, integrations, everything. Without AI: ~2.8 years. ~$650k. With AI: 30 hours. It's absurd when you start to think about it like this.

this repo is to create your own AI Hedge Fund and it has 45K+ GitHub stars

Let's dive deep into what it does:

A ready-made orchestrator of purposeful agents:

- analyze markets
- generate trade ideas
- and work together to make trading decisions

Investor Agents. Each agent follows the style of a well-known investor:

- Aswath Damodaran Agent – focuses on valuation using story, numbers, and disciplined analysis
- Ben Graham Agent – classic value investor looking for a strong margin of safety
- Bill Ackman Agent – activist investor who takes bold, high-conviction positions
- Cathie Wood Agent – growth investor focused on innovation and disruption
- Charlie Munger Agent – looks for great businesses at fair prices
- Michael Burry Agent – contrarian investor searching for deep value
- Mohnish Pabrai Agent – focused on low risk and high upside
- Peter Lynch Agent – seeks ten-bagger opportunities in everyday businesses
- Phil Fisher Agent – long-term growth investor using deep research
- Rakesh Jhunjhunwala Agent – goes for conviction investing
- Stanley Druckenmiller Agent – macro investor seeking asymmetric opportunities
- Warren Buffett Agent – long-term investor focused on durable companies

Analysis Agents. These agents analyze the market from different angles:

- Valuation Agent – estimates intrinsic value and generates signals
- Sentiment Agent – analyzes market sentiment from news and social data
- Fundamentals Agent – evaluates financial performance and company health
- Technicals Agent – analyzes price trends and technical indicators

Decision System. These agents manage risk and execute decisions:

- Risk Manager – calculates risk metrics and position limits
- Portfolio Manager – combines signals and makes the final trading decision

Link: https://t.co/phAncYzOlp

Save this if you wanna run your own AI hedge fund, in any market. Don't treat it as financial advice tho.

What I'd recommend doing if you wanna go one step beyond:

1. Backtest the decisions that this model gives historically
2. Keep the agents that give better decisions
3. Remove the agents that have lower win rates
4. Optimize the system to make it even better

> 385ms average tool selection. > 67 tools across 13 MCP servers. > 14.5GB memory footprint. > Zero network calls. LocalCowork is an AI agent that runs on a MacBook. Open source. 🧵 https://t.co/bnXupspSXc

The Ultimate Beginner’s Guide to Claude (March 2026)

We're introducing Cursor Automations to build always-on agents. https://t.co/uxgTbncJlM

How to Build a Data Agent in 2026

I built the initial data stack at Notion in 2020 when the "Modern Data Stack" was first becoming a thing, and have spent some time over the last year ...

Services: The New Software

JUSTICE THE AMERICAN WAY. 🇺🇸🔥 https://t.co/0502N6a3rL