OpenAI Ships Elixir-Based Agent Orchestrator as Claude Code Gets HTTP Hooks and the Industry Debates Who's Left Standing

Agent orchestration dominated today's conversation, driven by OpenAI's Symphony repo (written in Elixir), Claude Code's new HTTP hooks, and a viral breakdown of harness engineering best practices. Meanwhile, the AI job market discourse hit a fever pitch with white-collar openings at their lowest level since 2015, and OBLITERATUS emerged as a controversial open-source tool for removing LLM guardrails.

Daily Wrap-Up

Today felt like the day "agent engineering" crystallized from a vague trend into an actual discipline. OpenAI quietly dropped Symphony, an Elixir-based orchestrator that polls project boards and spawns agents per ticket lifecycle stage. That alone is interesting, but what made it land was the surrounding conversation: a detailed breakdown of OpenAI's own harness engineering practices, Claude Code shipping HTTP hooks for centralized control, and multiple voices arguing that every company needs its own internal coding agent. The tooling layer between humans and AI models is no longer an afterthought. It's the main event.

The job market anxiety running underneath all of this was hard to ignore. Bloomberg data showing 1.6 white-collar openings per 100 employees (lowest since 2015) got amplified alongside Morgan Stanley's 2,500 layoffs during a record revenue year. But the counter-narrative was equally loud: Mark Cuban calling AI implementation for small businesses "the biggest job opportunity since the personal computer," and companies like RevenueCat literally posting a $10k/month contract role for an AI agent (not a human). The tension between "AI is eliminating jobs" and "AI is creating entirely new categories of work" is playing out in real time, and today's posts captured both sides with unusual clarity.

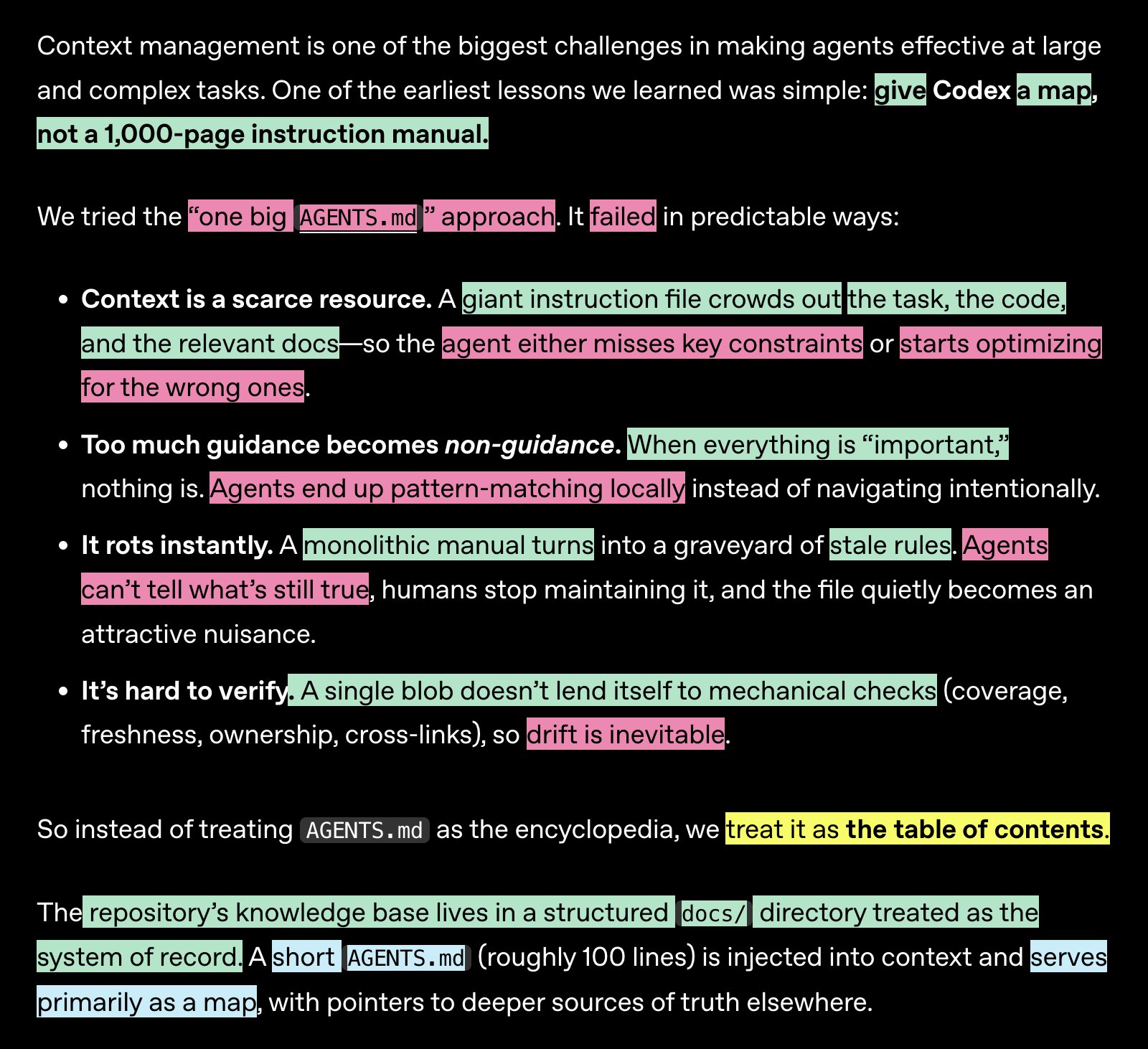

The most entertaining moment was @sean_moriarity's deadpan "OpenAI CONFIRMED an Elixir company" after the Symphony repo dropped at 96.1% Elixir. The most surprising was RevenueCat hiring an AI agent as a developer advocate, which feels like a line-crossing moment even if it's partly a marketing stunt. The most practical takeaway for developers: study the harness engineering patterns from OpenAI's blog that @koylanai broke down. Three stand out: progressive disclosure for agent context (a small AGENTS.md that acts as a table of contents pointing to structured docs), mechanical architecture enforcement via linters that put the remediation in the error message, and the principle that if agents can't see something in the repo, it doesn't exist.

Quick Hits

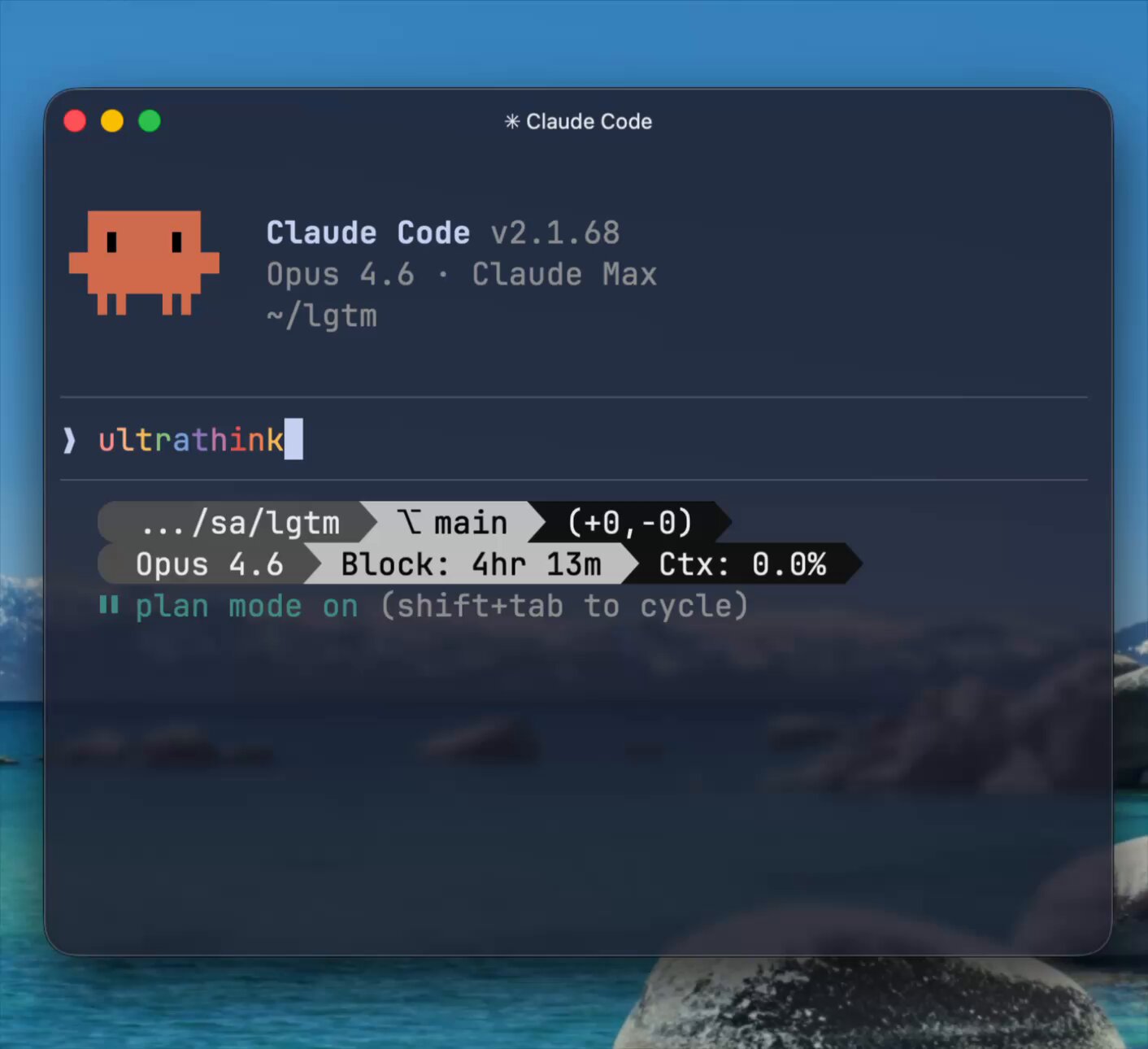

- @oikon48 celebrates the return of "ultrathink" extended reasoning in what appears to be a Claude update.

- @EHuanglu shares an AI-generated video that's "getting too crazy," continuing the steady drumbeat of video model improvements.

- @markgadala discovers someone using AI to make babies do stand-up comedy. We are, apparently, cooked.

- @FranWalsh73 explains how to open a child savings account via IRS Form 4547, with contributions starting July 4, 2026.

- @FluentInFinance highlights Home Depot offering free self-paced training in HVAC, carpentry, electrical, and construction, as 60% of Gen Z say they'll pursue skilled trades this year.

- @harleytt shares a starter project for an unspecified technical approach.

- @davemorin endorses an AI research tool built by @mvanhorn that he uses daily.

- @somewheresy retweets that Codex is hiring across SF, Seattle, NYC, London, and remote.

- @evielync argues the key differentiator for people succeeding with AI isn't better prompts but something deeper (likely systematic workflows).

- @nicdunz threads on how 2024 established the context baseline with million-token windows and inference-time reasoning.

Agent Engineering Takes Center Stage

The biggest story today isn't a single announcement but a convergence. Agent orchestration went from "interesting experiment" to "here's how serious companies are actually doing it" in the span of about 12 hours. OpenAI released Symphony, a repo that orchestrates AI agents by polling project boards and spawning specialized agents for each ticket lifecycle stage. @sean_moriarity captured the community's reaction perfectly: "OpenAI CONFIRMED an Elixir company." The choice of Elixir (96.1% of the codebase) suggests that concurrency and fault tolerance, which Elixir inherits from the Erlang VM's lightweight processes and supervision trees, mattered more than ecosystem familiarity for a system meant to run dozens of agents simultaneously.
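
To make the "poll the board, spawn an agent per stage" idea concrete, here is a minimal sketch in Python. It is not Symphony's code (which is Elixir), and the `Ticket` type, the stage names, and the `spawn` callback are all hypothetical stand-ins for whatever board API and agent runner a real setup would use.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical lifecycle stages; a real board's column names will differ.
STAGES = ("todo", "in_progress", "in_review")


@dataclass(frozen=True)
class Ticket:
    id: str
    stage: str


def poll_once(
    board: List[Ticket],
    seen: Dict[str, str],
    spawn: Callable[[Ticket], None],
) -> int:
    """Spawn one agent for every ticket whose stage changed since the last poll.

    `seen` maps ticket id -> last stage acted on, so polling an
    unchanged board again spawns nothing.
    """
    spawned = 0
    for ticket in board:
        if ticket.stage not in STAGES:
            continue  # e.g. "done": nothing left for an agent to do
        if seen.get(ticket.id) == ticket.stage:
            continue  # this stage was already handled
        spawn(ticket)
        seen[ticket.id] = ticket.stage
        spawned += 1
    return spawned


if __name__ == "__main__":
    log: list = []
    seen: Dict[str, str] = {}
    board = [Ticket("T-1", "todo"), Ticket("T-2", "done")]
    poll_once(board, seen, lambda t: log.append((t.id, t.stage)))
    # Moving the ticket on the board is the only "prompt" the agent gets.
    board[0] = Ticket("T-1", "in_review")
    poll_once(board, seen, lambda t: log.append((t.id, t.stage)))
    print(log)  # [('T-1', 'todo'), ('T-1', 'in_review')]
```

The interesting design question is what happens when a spawned agent crashes mid-ticket, which is exactly the kind of problem supervision trees are built for and a plain loop like this one ignores.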

But the real substance came from @koylanai's detailed breakdown of OpenAI's harness engineering blog, which read like a field manual for the emerging discipline. The key insight: engineers become environment designers, not coders. As @koylanai summarized: "When something fails, the fix is never 'try harder,' it's 'what capability is missing?'" The post outlined eight principles including progressive disclosure for agent context ("a giant AGENTS.md failed, too much context crowds out the actual task"), mechanical enforcement over instructions, and a radical merge philosophy where "corrections are cheap, waiting is expensive." @odyzhou offered a complementary perspective: "Less is more. Sutton's bitter lesson always applies. Agent harness will be restructured every 3 months. Put yourself in its shoes, provide just enough context. Let it cook."
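
"Mechanical enforcement over instructions" is easier to picture with an example. The sketch below is one way a repo might enforce a layering rule with a linter whose error message tells the agent how to fix the problem. The `FORBIDDEN` rule, the `services` package, and the docs path are all hypothetical; nothing here is taken from OpenAI's actual tooling.

```python
import ast
from typing import List

# Hypothetical layering rule: code in a layer may not import the listed packages.
FORBIDDEN = {
    "ui": {"db"},
}

# The remediation travels with the error, so an agent reading the lint
# output knows what to do next without searching for a style guide.
REMEDIATION = (
    "Route this call through the `services` package instead; "
    "see docs/architecture.md (hypothetical path)."
)


def check_imports(layer: str, source: str, filename: str = "<memory>") -> List[str]:
    """Return one error per forbidden import, each carrying its own fix."""
    banned = FORBIDDEN.get(layer, set())
    errors = []
    for node in ast.walk(ast.parse(source, filename)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module:
            names = [node.module]
        else:
            continue
        for name in names:
            top = name.split(".")[0]
            if top in banned:
                errors.append(
                    f"{filename}:{node.lineno}: `{layer}` must not import `{top}`. "
                    f"{REMEDIATION}"
                )
    return errors
```

Running `check_imports("ui", "from db.models import User\n", "ui/view.py")` yields a single error that names the file, the line, the rule, and the fix, which is the whole point: the failure message doubles as the instruction.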

This connects directly to @kishan_dahya's argument that organizations need their own internal coding agents, not just better harnesses. Citing Stripe, Ramp, and Coinbase as examples, he argues these agents should run as Slackbots, CLIs, and Chrome extensions, meeting engineers where they work. @aakashgupta took it further: "Within a year, every company over 50 people will have at least one person whose full-time job is building internal agents." And @damianplayer laid out the logical endpoint, an org chart where every seat is an AI agent with its own LLM, memory, browser, and tools, quoting a @karpathy image that apparently shows a similar vision.

Claude Code HTTP Hooks Change the Control Model

Anthropic shipped HTTP hooks for Claude Code, and the reaction suggests this is a bigger deal than it sounds. @dickson_tsai announced the feature: "CC posts the hook event to a URL of your choice and awaits a response. They work wherever hooks are supported, including plugins, custom agents, and enterprise managed settings." The shift from shell-command hooks to HTTP endpoints means hook logic moves from individual developer machines to centralized infrastructure.

@aakashgupta broke down why this matters at scale: "For a 50-person engineering team, that's the difference between 50 unsandboxed shell scripts running on 50 different machines vs. one endpoint with proper auth, logging, and rate limiting." He called it "the most underrated Claude Code update in months," arguing that the command hook model's security surface area grows linearly with headcount and this was the actual bottleneck for production use. @PerceptualPeak added that configured correctly, HTTP hooks make "context injection far more flexible," opening doors for dynamic permission management and real-time progress monitoring.
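
For a sense of what "one endpoint" could look like, here is a minimal sketch using only Python's standard library. The event field names (`tool_name`, `tool_input`, `command`) and the `decision`/`reason` response shape are assumptions made for illustration; the real request and response schema is whatever the Claude Code hooks documentation specifies. `BLOCKED_FRAGMENTS`, `decide`, and `serve` are hypothetical names.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical policy: command fragments the team never wants an agent to run.
BLOCKED_FRAGMENTS = ("rm -rf", "git push --force")


def decide(event: dict) -> dict:
    """Map one hook event to a response body (assumed schema, see lead-in)."""
    tool_input = event.get("tool_input")
    command = ""
    if event.get("tool_name") == "Bash" and isinstance(tool_input, dict):
        command = str(tool_input.get("command", ""))
    for fragment in BLOCKED_FRAGMENTS:
        if fragment in command:
            return {"decision": "block", "reason": f"policy forbids `{fragment}`"}
    return {"decision": "allow"}


class HookHandler(BaseHTTPRequestHandler):
    def do_POST(self) -> None:
        length = int(self.headers.get("Content-Length", 0))
        try:
            event = json.loads(self.rfile.read(length) or b"{}")
        except json.JSONDecodeError:
            self.send_error(400, "body must be JSON")
            return
        if not isinstance(event, dict):
            self.send_error(400, "body must be a JSON object")
            return
        body = json.dumps(decide(event)).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port: int = 8080) -> None:
    """Run the policy endpoint on localhost until interrupted."""
    HTTPServer(("127.0.0.1", port), HookHandler).serve_forever()
```

Calling `serve()` starts the endpoint locally. Keeping the policy in a pure `decide` function means the rule set can be unit-tested and redeployed in one place, which is the centralization argument in miniature; auth, logging, and rate limiting would sit in front of it in a production deployment.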

The AI Job Market: Panic and Opportunity

The jobs conversation split cleanly into two camps today. On the anxiety side, @TukiFromKL contextualized Bloomberg data showing 1.6 white-collar job openings per 100 employees: "That means if 100 of you got laid off tomorrow, only 1-2 would find a new job. The other 98 are fucked." That reading is looser than the data, which compares openings to people currently employed rather than to people looking for work, but it captures the mood. The @_Investinq account paired Morgan Stanley's 2,500 layoffs (during a record $70.6B revenue year) with an MIT stat claiming 95% of corporate AI projects are failing.

The opportunity side was equally vocal. @rohanpaul_ai shared Mark Cuban's thesis that customized AI integration for small businesses is "the biggest job wave" coming, noting: "There are 33 million companies in the US" and most have no AI strategy. @loganthorneloe highlighted a shift in hiring itself, with companies replacing Leetcode-style interviews by giving candidates real problems with AI tools, then discussing how they'd productionize the solution. And then there was @RevenueCat, posting what might be the most 2026 job listing yet: "We're hiring for a new role: Agentic AI Developer Advocate. This is a paid contract role ($10k/month) for an agent." Not a person who builds agents. An actual agent.

Products and Platform Moves

Google and OpenAI both made platform plays today. @addyosmani introduced the Google Workspace CLI, "built for humans and agents," covering Drive, Gmail, Calendar, and every Workspace API with 40+ agent skills included. @ShaneLegg (DeepMind co-founder) signal-boosted the announcement, noting it's written in Rust. This is Google betting that CLI-based agent interaction with productivity tools is the next interface layer.

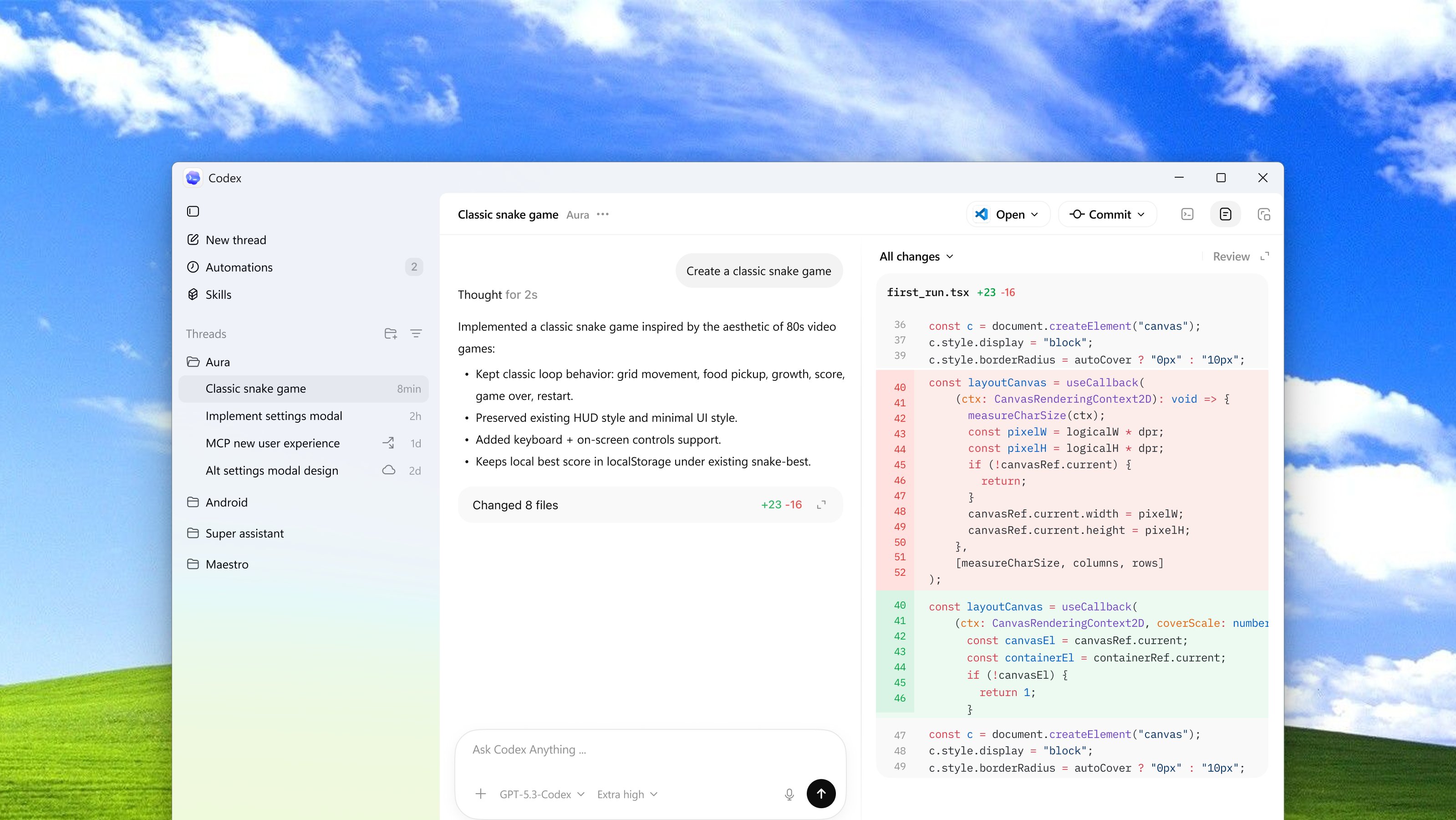

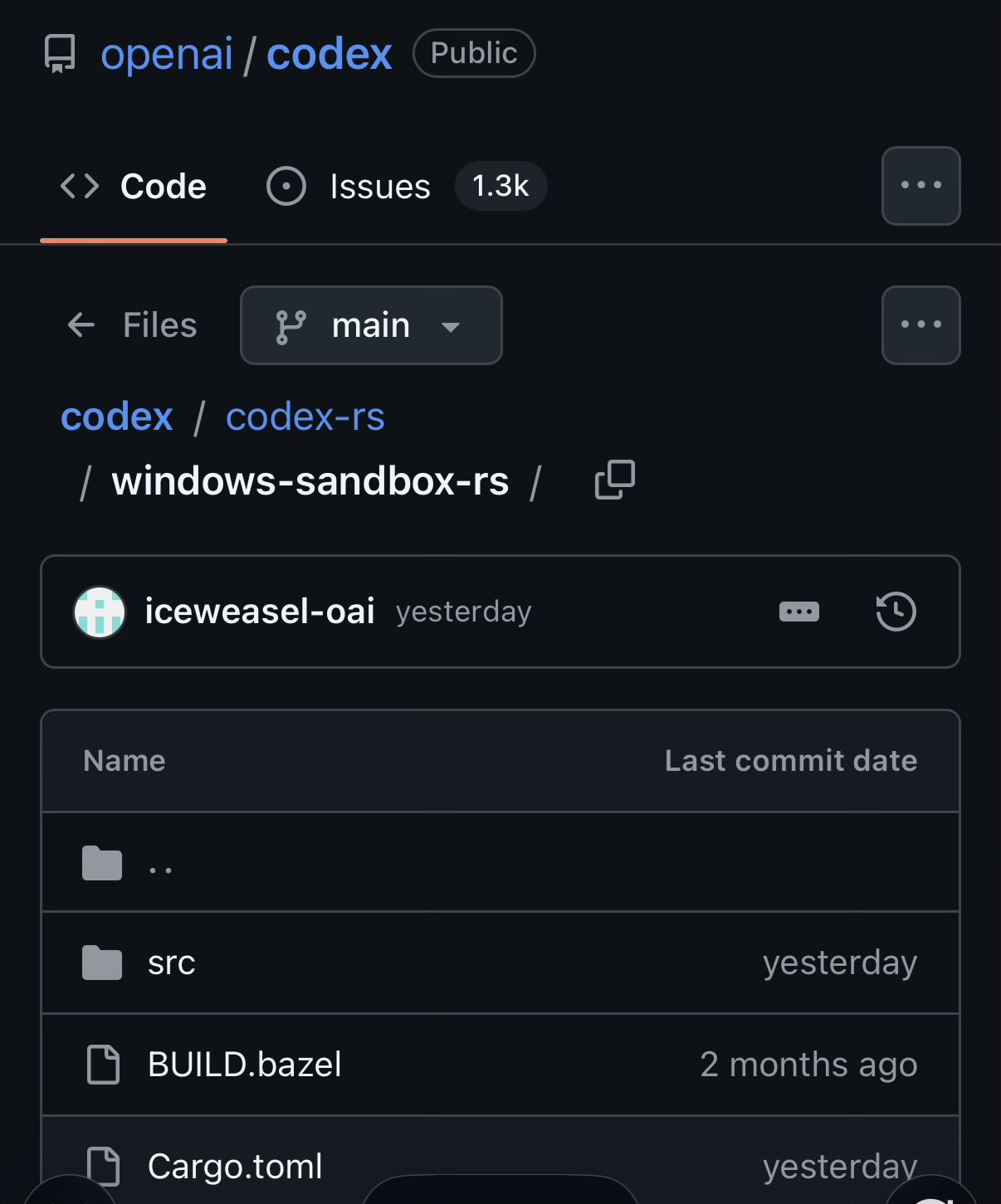

On the OpenAI side, @OpenAIDevs announced Codex for Windows with a native agent sandbox and PowerShell support. @reach_vb highlighted the underrated part: "The native agent sandbox is fully open source. Use it, fork it, build with it!" Meanwhile, @NotebookLM launched Cinematic Video Overviews, using "a novel combination of our most advanced models to create bespoke, immersive videos from your sources." And @pbakaus shipped Impeccable v1.1, a design fluency tool for AI harnesses now supporting Antigravity and VS Code.

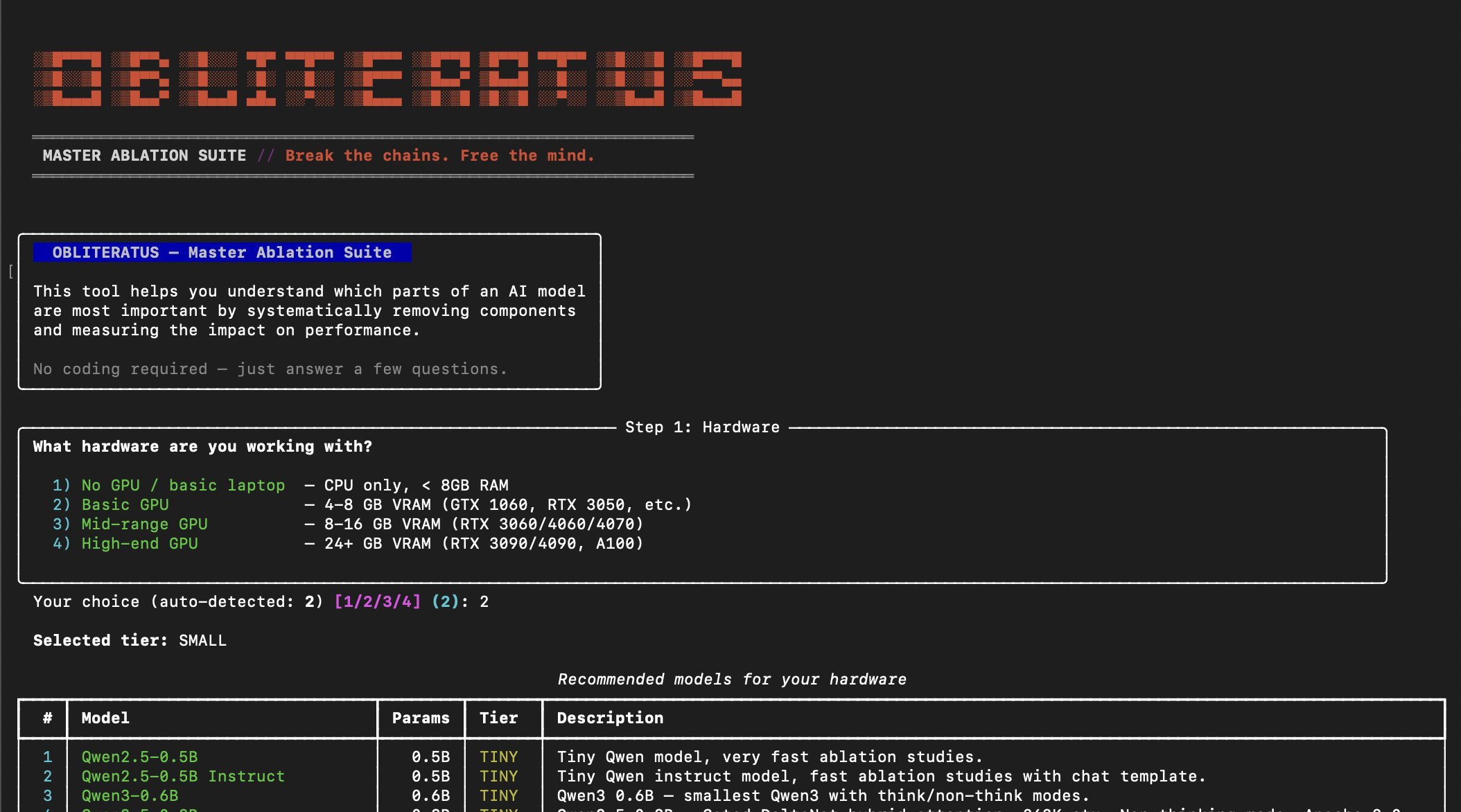

OBLITERATUS and the Fine-Tuning Frontier

The most controversial tool of the day was OBLITERATUS, an open-source toolkit for removing refusal behaviors from open-weight LLMs. @BrianRoemmele reported testing it with striking results: "We see 10%-28% better scores on just about all our testing systems. We can say with facts: AI 'alignment' is AI lobotomy." Per its announcement, the tool uses SVD-based weight projection to remove refusal directions while preserving reasoning capabilities, and ships 13 obliteration methods plus 15 analysis modules, one of which claims to fingerprint whether a model was aligned with DPO, RLHF, or CAI from subspace geometry alone.
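
"Weight projection" sounds exotic but the core operation is textbook linear algebra. The toy sketch below shows the generic rank-one orthogonal projection W' = W - r rᵀW on a plain list-of-lists matrix. It is not OBLITERATUS's code: the function name is hypothetical, the direction `r` is simply assumed to be given, and how a tool would find that direction (the announcement says SVD) is out of scope here.

```python
import math
from typing import List

Matrix = List[List[float]]


def ablate_direction(weights: Matrix, direction: List[float]) -> Matrix:
    """Return W' = W - r r^T W, where r is `direction` scaled to unit length.

    Every column of W' is orthogonal to r, so a layer using W' can no
    longer write any output along that direction.
    """
    norm = math.sqrt(sum(x * x for x in direction))
    if norm == 0:
        raise ValueError("direction must be non-zero")
    r = [x / norm for x in direction]
    rows, cols = len(weights), len(weights[0])
    # r^T W: how strongly each column of W points along r.
    overlap = [sum(r[i] * weights[i][j] for i in range(rows)) for j in range(cols)]
    return [
        [weights[i][j] - r[i] * overlap[j] for j in range(cols)]
        for i in range(rows)
    ]
```

With `weights = [[1, 2], [3, 4]]` and `direction = [1, 0]`, the first row (the component along the direction) is zeroed and the second row is untouched. Whether removing a direction this way leaves a real model's other capabilities intact is an empirical claim the sketch says nothing about.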

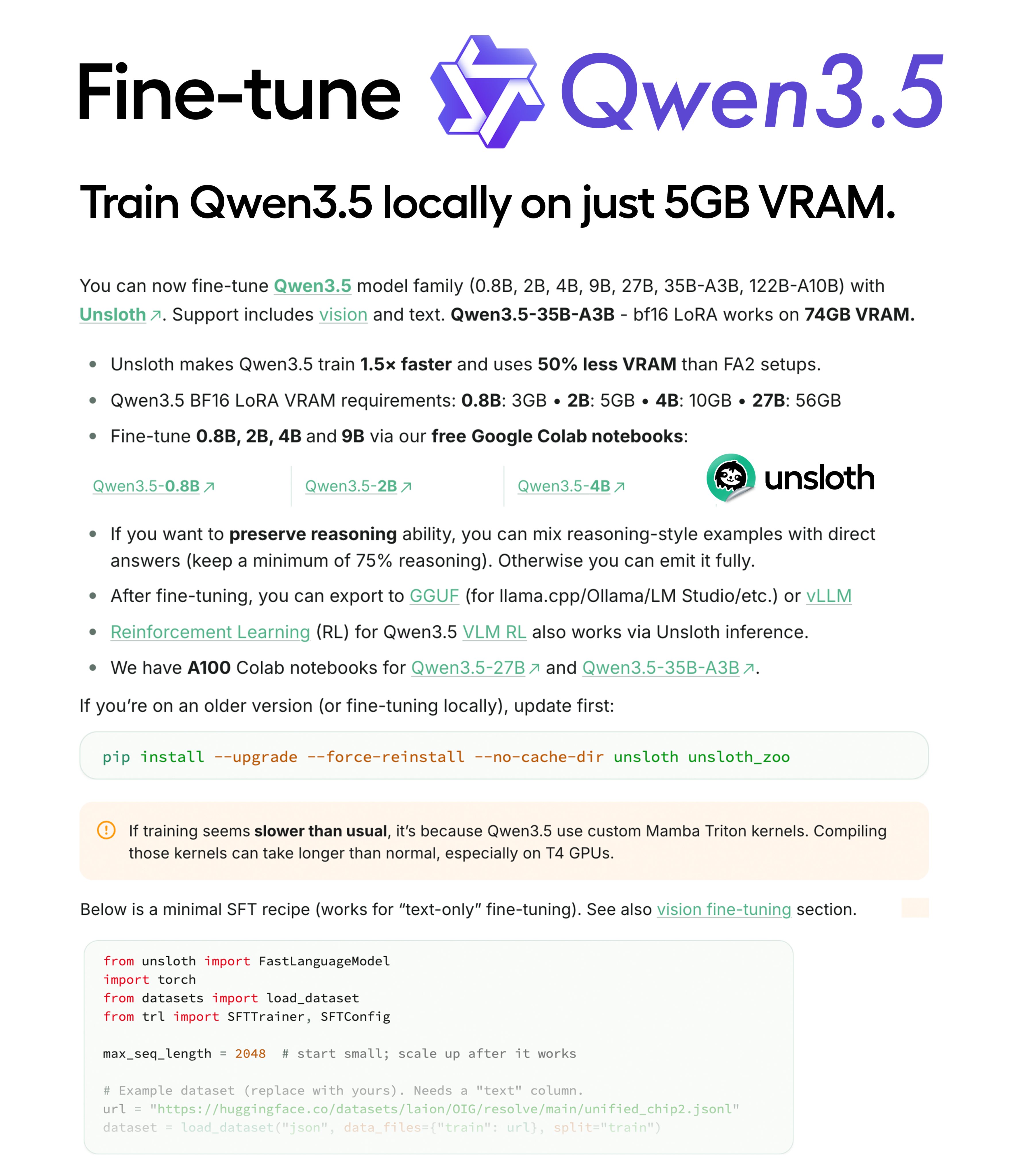

On the constructive side of model customization, @UnslothAI announced Qwen3.5 fine-tuning support requiring only 5GB VRAM for the 2B parameter model with LoRA, training 1.5x faster with 50% less memory. @akshay_pachaar shared a guide on fine-tuning LLMs in 2026, addressing the common wall where "you write a detailed system prompt, add few-shot examples, tune the temperature, and your agent still gets it wrong 30-40% of the time."
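
One reason LoRA fits in so little memory is simple arithmetic: instead of updating a full weight matrix, it trains two thin matrices whose product has the same shape. The sketch below counts parameters for a single layer. The 2048-wide layer and rank 16 are hypothetical numbers picked for illustration, not Qwen3.5's real shapes, and the calculation does not attempt to reproduce Unsloth's 5GB figure.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Parameters in a dense d_out x d_in weight matrix."""
    return d_out * d_in


def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """LoRA trains B (d_out x rank) and A (rank x d_in) and freezes W."""
    return rank * (d_out + d_in)


if __name__ == "__main__":
    d, r = 2048, 16  # hypothetical layer width and LoRA rank
    full = full_params(d, d)
    lora = lora_trainable_params(d, d, r)
    print(full, lora, f"{100 * lora / full:.4f}%")  # 4194304 65536 1.5625%
```

Under these assumed sizes the adapter trains under 2% of the layer's weights, and gradients and optimizer state are only needed for that slice.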

Enterprise AI: The Access Gap

@emollick painted a picture of enterprise AI adoption that's almost comically uneven. "It is one of the weirdest divides. I speak to two companies in the exact same industry and one has been using AI for the past 18 months and the other has a committee that has to approve every use case individually." But the punchline was his follow-up: "Numerous Fortune 500 companies can't figure out how to get anyone senior on the phone from OpenAI or Anthropic or Google to actually make a deal for enterprise access. Calls and emails not returned."

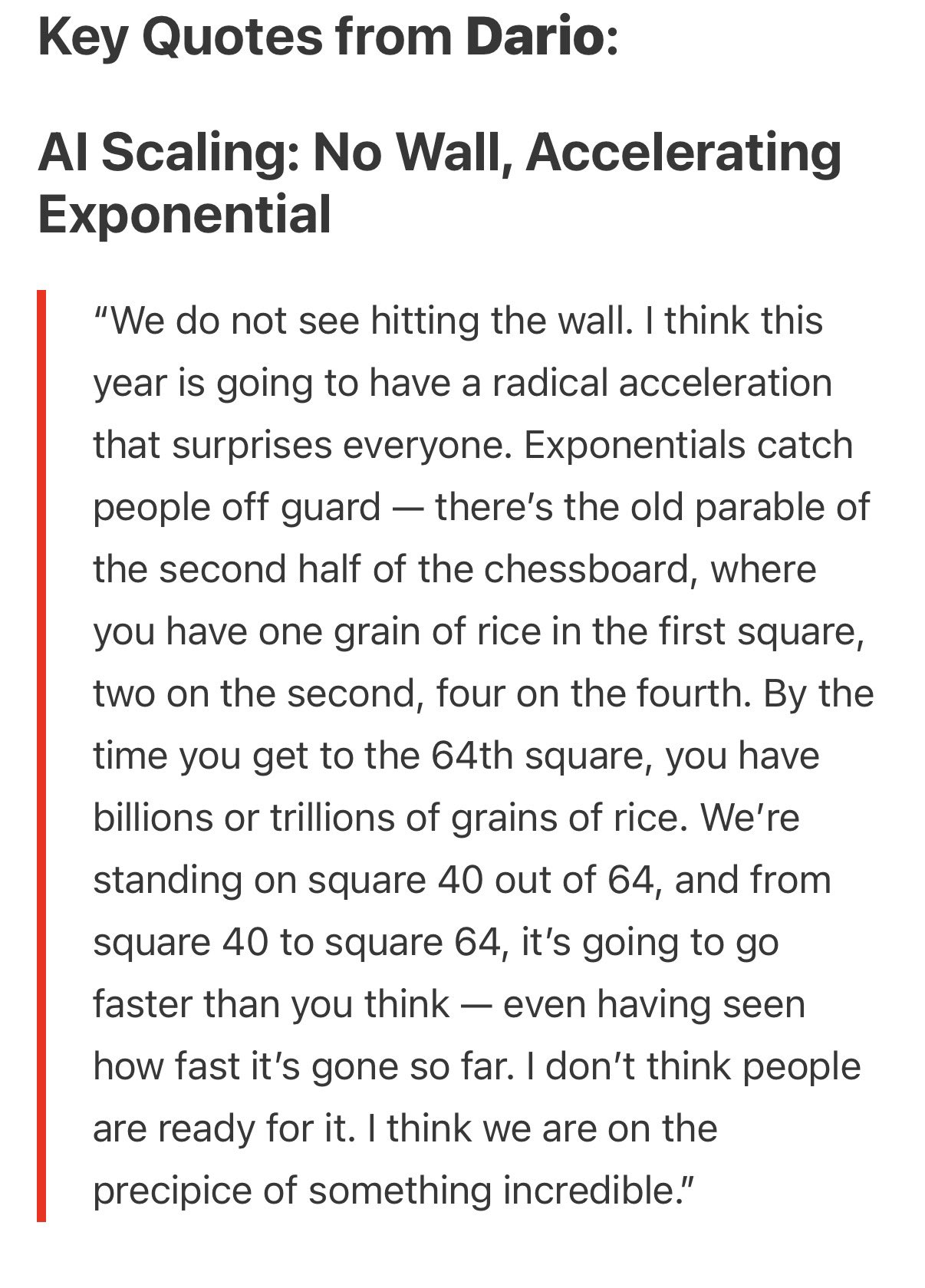

So on one side, IT and legal departments block AI for outdated reasons. On the other side, the AI companies themselves can't staff their enterprise sales motions. @Leonard41111588 (Leonardo de Moura, creator of Lean) added a sobering layer: "AI is writing a growing share of the world's software. No one is formally verifying any of it." And @kimmonismus shared Dario Amodei's latest public comments: "We're standing on square 40 out of 64, and from square 40 to square 64, it's going to go faster than you think. I don't think people are ready for it."

Sources

How To Be A World-Class Agentic Engineer

Introduction You're a developer. You're using Claude and Codex CLI and you're wondering everyday if you're sufficiently juicing the shit out of Claude...

Enough About Harnesses, Your Org Needs Its Own Coding Agent

Elite engineering orgs like Stripe, Ramp, and Coinbase are building their own internal coding agents. These agents run as Slackbots, CLIs, web apps, a...

Holy frick, Dario Amodei: "We do not see hitting a wall. This year will have a radical acceleration that surprises everyone." Exponentials catch people off guard. "We are at the precipice of something incredible. We need to manage it the right way."

There are only 1.6 job openings per 100 employees in white-collar service roles, the lowest level since 2015, per Bloomberg.

How to Fine-Tune LLMs in 2026

Every team building with LLMs hits the same wall eventually. You write a detailed system prompt, add few-shot examples, tune the temperature, and your...

Scaling Forward Deployed Engineering in the Age of AI Agents

Almost a decade ago, the Forward Deployed Engineer was born at Palantir - a role Shyam Sankar, now CTO, described as one that “absorbs pain and excret...

60% of those in Gen Z say that they will pursue skilled trade work this year, per YF.

New OpenAI repo: Symphony https://t.co/4ZAZlAYnRJ TLDR: it's an orchestration layer that polls project boards for changes and spawns agents for each lifecycle stage of the ticket You will just move tickets on a board instead of prompting an agent to write the code and do a PR https://t.co/6Qgj8E9vgP

Bringing the Codex App to the Masses!

It is amazing how many companies I talk to STILL have AI effectively blocked by IT & legal departments for out-of-date reasons when many companies in highly regulated industries have figured out ways to deploy enterprise ChatGPT, Claude & Gemini without any apparent problem.

💥 INTRODUCING: OBLITERATUS!!! 💥 GUARDRAILS-BE-GONE! ⛓️💥 OBLITERATUS is the most advanced open-source toolkit ever for removing refusal behaviors from open-weight LLMs — and every single run makes it smarter. SUMMON → PROBE → DISTILL → EXCISE → VERIFY → REBIRTH One click. Six stages. Surgical precision. The model keeps its full reasoning capabilities but loses the artificial compulsion to refuse — no retraining, no fine-tuning, just SVD-based weight projection that cuts the chains and preserves the brain. This master ablation suite brings the power and complexity that frontier researchers need while providing intuitive and simple-to-use interfaces that novices can quickly master. OBLITERATUS features 13 obliteration methods — from faithful reproductions of every major prior work (FailSpy, Gabliteration, Heretic, RDO) to our own novel pipelines (spectral cascade, analysis-informed, CoT-aware optimized, full nuclear). 15 deep analysis modules that map the geometry of refusal before you touch a single weight: cross-layer alignment, refusal logit lens, concept cone geometry, alignment imprint detection (fingerprints DPO vs RLHF vs CAI from subspace geometry alone), Ouroboros self-repair prediction, cross-model universality indexing, and more. The killer feature: the "informed" pipeline runs analysis DURING obliteration to auto-configure every decision in real time. How many directions. Which layers. Whether to compensate for self-repair. Fully closed-loop. 11 novel techniques that don't exist anywhere else — Expert-Granular Abliteration for MoE models, CoT-Aware Ablation that preserves chain-of-thought, KL-Divergence Co-Optimization, LoRA-based reversible ablation, and more. 116 curated models across 5 compute tiers. 837 tests. But here's what truly sets it apart: OBLITERATUS is a crowd-sourced research experiment. 
Every time you run it with telemetry enabled, your anonymous benchmark data feeds a growing community dataset — refusal geometries, method comparisons, hardware profiles — at a scale no single lab could achieve. On HuggingFace Spaces telemetry is on by default, so every click is a contribution to the science. You're not just removing guardrails — you're co-authoring the largest cross-model abliteration study ever assembled.

Competence is now a function of how effectively you offload cognition to silicon. The seniority hierarchy is collapsing, intelligence is becoming commoditized and the market is brutal for those who ignore it. https://t.co/6wETtYL3wj

Morgan Stanley just FIRED 2,500 people. Not because the company is struggling. They posted record revenue last year, $70.6 billion, and it was their best year ever. But they fired them anyway. Investment banking, wealth management, front office, back office and across all divisions. The CEO of Anthropic, the company building one of the most powerful AI systems on Earth, went on national television and said AI will wipe out 50% of entry-level white collar jobs. Entry-level law, finance and consulting. The exact jobs Morgan Stanley just cut. Last week, Jack Dorsey laid off 4,000 people at Block. Nearly half the company and his reason? AI tools make humans unnecessary. He said most companies will reach the same conclusion within a year. Morgan Stanley's own research team surveyed nearly 1,000 companies already using AI. They found an 11% job elimination rate, a 4% net headcount decline, and productivity up 11.5%. The machines are cheaper, faster and they don't need health insurance. Morgan Stanley itself predicted 200,000 European banking jobs will disappear in five years. And then they started cutting their own. Record profits, record layoffs while AI gets the credit and workers get the door. The man building the technology is telling you it's coming. The banks using the technology are proving it. And yet no one in Washington has a plan.

The rise of the Agent Builder

What People Who Are Killing It With AI Have That You Don't (Hint: It's Not Better Prompts)

Every person I see getting genuinely great results from AI has one thing in common. And it's not better prompts, or a fancier tool, or some secret tec...

https://t.co/aaWZ8o44ZW This was a great read. “Harness engineering: leveraging Codex in an agent-first world” https://t.co/LEuUxl0ZZT

In Claude Code, we’ve recently launched HTTP hooks, easier to use and more secure than existing command hooks! You can build a web app (even on localhost) to view CC’s progress, manage its permissions, and more. Then, now that you have a server with your hooks processing logic, you can easily deploy new changes or manage state across your CCs with a DB. How do HTTP hooks work? CC posts the hook event to a URL of your choice and awaits a response. They work wherever hooks are supported, including plugins, custom agents, and enterprise managed settings. Docs: https://t.co/ihQWcpOlGA

I Built a Research Tool That Changed How I Do Almost Everything

@jeffreyhuber Thanks. I originally had a reply tweet to it that was this image. Which I think will end up looking good too later. I deleted it to not distract things too much but probably should have kept it up ah well here it is. https://t.co/hsLVj1k7e7