Agent Councils Replace Human Code Review as Qwen 3.5 Runs Locally on iPhones

Today's feed was dominated by an emerging consensus around agent engineering best practices, with multiple posts converging on the same core principles: minimize context, separate research from implementation, and treat agent sessions as disposable. Meanwhile, new orchestration tools like Polyscope and Pinchtab signal that the agent tooling layer is rapidly commoditizing.

Daily Wrap-Up

The most striking thing about today's feed is how many people independently arrived at the same conclusions about working with AI agents. @shao__meng published a detailed breakdown of "agentic engineering" principles, @Hesamation summarized similar ideas, @coreyganim posted a setup checklist, and @elvissun shared a real-world case study of cutting 95% of agent token costs through better architecture. The through-line across all of them is the same: context is the bottleneck, not capability. The models are good enough. The question is whether you're feeding them the right information at the right time.

Separately, a cluster of new tools launched or gained attention today. @marcelpociot announced Polyscope for agent orchestration, @heynavtoor highlighted Pinchtab for HTTP-based browser control, and @sentientt_media resurfaced Aider's RepoMap for codebase compression. These tools share a design philosophy: expose simple interfaces (HTTP APIs, CLI flags, token-efficient representations) that any agent can consume. The era of framework-locked agent tooling appears to be ending. And on the research side, @andersonbcdefg surfaced the remarkable story of Claude Opus 4.6 solving an open problem that Donald Knuth had been working on, which lands differently when you read it alongside @eyad_khrais's deadpan "Turns out the secret to AGI was just a human brain."

The most practical takeaway for developers: if you're running long-lived agent sessions, stop. Break work into single-task sessions with focused context. As @elvissun demonstrated, a simple bash pre-check before invoking an expensive model reduced token usage by roughly 95%. The pattern is clear across today's posts: the best agent engineers are spending more time on context curation and session architecture than on prompt engineering.

Quick Hits

- @markgadala shared "The perfect AI video does exist" with a link, no further context. The discourse continues.

- @colin_gladman posted a cryptic link with just "Surprise! What now?" which is either a product launch or a riddle.

- @danlovesproofs noticed engineers from Linear and Atlassian engaging with a post, hinting at internal interest in whatever was being discussed (likely agent-driven development workflows).

- @ryancarson teased a "5-layer setup" for forcing agents to obey design systems. No details in the post itself, but the framing suggests CLAUDE.md-style rule layering applied to UI consistency.

Agent Engineering Best Practices

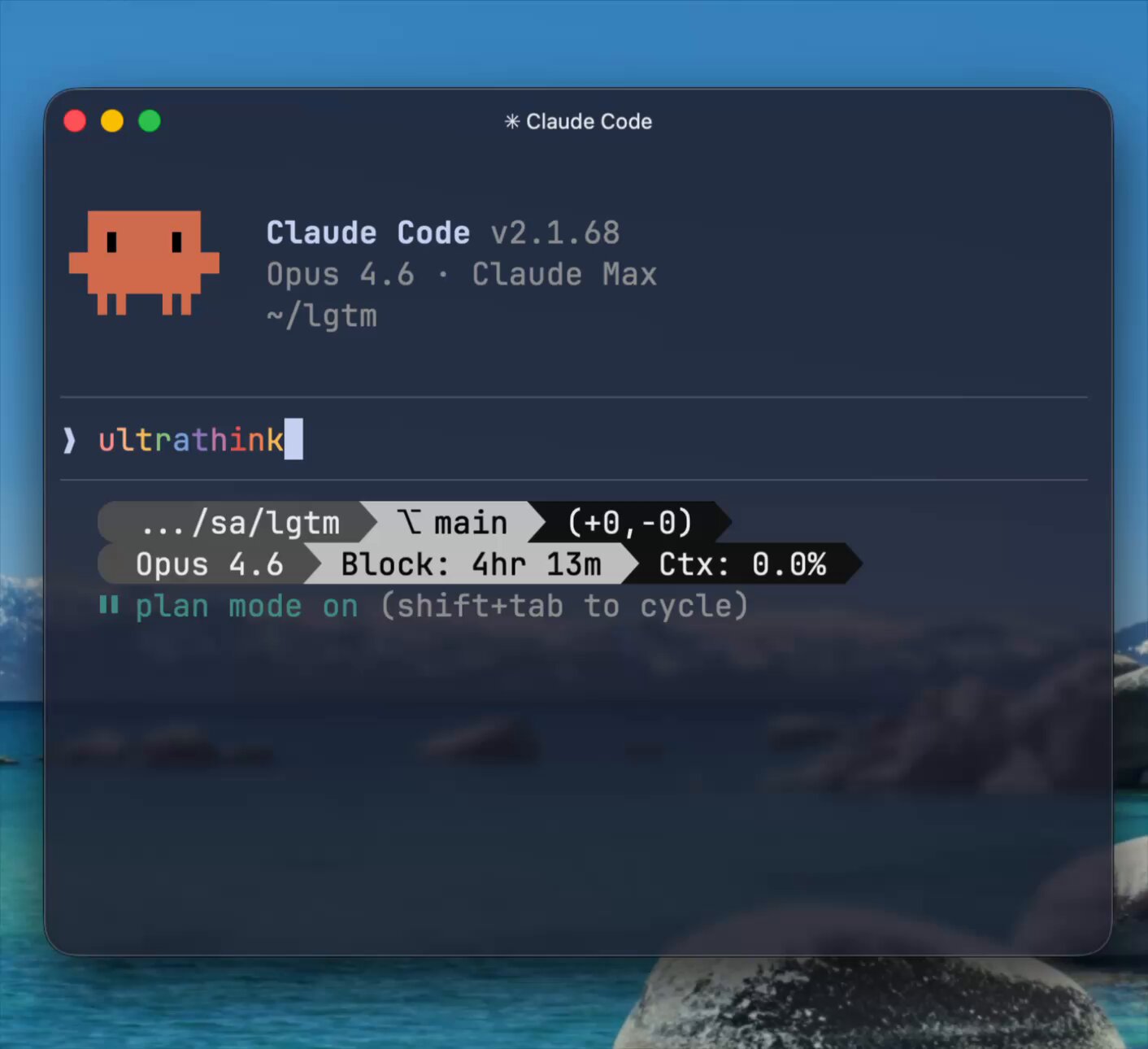

Six posts today converged on what's becoming a recognizable discipline: agent engineering. The core insight, repeated across multiple authors, is that agent performance degrades with context pollution, and the fix is architectural, not prompt-based. @shao__meng's post was the most comprehensive, laying out a philosophy that treats context management as "the most underrated engineering capability." The key framework: never combine research and implementation in the same agent session. Do your exploration in one session, make decisions, then spin up a fresh context for execution.

@shao__meng put it directly: "You only need to give the agent exactly the information it needs to complete the task, nothing more, nothing less." The post goes further, proposing a three-agent adversarial system for bug verification where a finder, challenger, and judge compete on a scoring system that exploits the model's tendency to please. It's a clever inversion: instead of fighting sycophancy, weaponize it.
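The finder, challenger, and judge loop can be sketched roughly as follows. This is a minimal illustration, not @shao__meng's actual system: the three roles are plain callables standing in for separate agent sessions, and the point values (plus one for an upheld claim, minus one for a rejected claim, plus one to the challenger for a successful rebuttal) are assumptions, since the post's exact scoring is not reproduced here. The names `adjudicate` and `Verdict` are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Verdict:
    claim: str
    upheld: bool


def adjudicate(
    code: str,
    finder: Callable[[str], List[str]],
    challenger: Callable[[str, str], str],
    judge: Callable[[str, str, str], bool],
) -> Tuple[List[Verdict], Dict[str, int]]:
    """Run each bug claim through a rebuttal and a ruling, keeping score."""
    scores = {"finder": 0, "challenger": 0}
    verdicts: List[Verdict] = []
    for claim in finder(code):
        rebuttal = challenger(code, claim)
        upheld = judge(code, claim, rebuttal)
        # Assumed scoring: reward real bugs, penalize false alarms,
        # and reward the challenger for knocking down a bad claim.
        if upheld:
            scores["finder"] += 1
        else:
            scores["finder"] -= 1
            scores["challenger"] += 1
        verdicts.append(Verdict(claim, upheld))
    return verdicts, scores


if __name__ == "__main__":
    # Stub roles; in practice each would be its own model session.
    verdicts, scores = adjudicate(
        "for i in range(len(xs) + 1): print(xs[i])",
        finder=lambda code: ["off-by-one in loop bound", "print is a bug"],
        challenger=lambda code, claim: f"I dispute: {claim}",
        judge=lambda code, claim, rebuttal: "off-by-one" in claim,
    )
    print(verdicts, scores)
```

Because each role only sees its own inputs, the finder is pushed to please by reporting bugs while the challenger is pushed to please by refuting them, and the judge's ruling is the only thing that moves the score.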

@Hesamation echoed the same principles in a more concise summary: "keep your setup barebones, frontier companies absorb what works best... separate research from implementation. decide the approach, then build fresh." And @EXM7777 added the meta-observation that simply reading Claude Code's release notes unlocks capabilities without installing anything. This resonates with @shao__meng's point that solutions designed around model weaknesses often become unnecessary when the next version ships. The collective message is clear: invest in principles (context hygiene, session isolation, neutral prompting) rather than elaborate tooling that may be obsolete in weeks.
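The research and implementation split is easy to picture in code. The sketch below is only an illustration under stated assumptions: `Session` and `fresh_implementation_session` are hypothetical names, and a real agent session would hold model messages rather than plain strings. What it shows is the handoff rule: the implementation session starts with the decision and nothing else.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Session:
    purpose: str
    context: List[str] = field(default_factory=list)

    def add(self, note: str) -> None:
        """Append a note to this session's context."""
        self.context.append(note)


def fresh_implementation_session(decision: str) -> Session:
    """Start a new session whose only context is the agreed decision."""
    return Session(purpose="implementation", context=[decision])


if __name__ == "__main__":
    research = Session(purpose="research")
    research.add("Option A: patch the parser in place")
    research.add("Option B: rewrite the tokenizer")
    research.add("Dead end: upstream library does not expose hooks")

    # Exploration notes stay behind; only the decision crosses over.
    build = fresh_implementation_session("Go with option B: rewrite the tokenizer")
    print(len(research.context), build.context)
```

The research session is simply discarded once the decision is made, which is what treating sessions as disposable amounts to in practice.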

Token Economics and Event-Driven Agents

@elvissun shared a war story that puts concrete numbers on the context management philosophy. Their agent "Zoe" was burning 24 million Opus tokens per day monitoring agents that weren't even running. The fix was a two-layer architecture: a bash pre-check that costs zero tokens when nothing is happening, with a webhook that fires the expensive model only when actual work is needed.
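The two-layer idea fits in a few lines. This is a minimal sketch, not @elvissun's actual setup: it uses Python where the post describes a bash pre-check and a webhook, and `pending_work`, `run_gate`, and the `invoke_model` callback are hypothetical names. The point it illustrates is that the cheap check runs every time, while the model call happens only when the check finds something.

```python
from typing import Callable, Iterable, List, Optional


def pending_work(queue: Iterable[str]) -> List[str]:
    """Cheap pre-check: plain Python, no model call, zero tokens spent."""
    return [item for item in queue if item.strip()]


def run_gate(
    queue: Iterable[str],
    invoke_model: Callable[[List[str]], str],
) -> Optional[str]:
    """Invoke the expensive model only when the pre-check finds work."""
    work = pending_work(queue)
    if not work:
        return None  # nothing to do, so the model is never called
    return invoke_model(work)


if __name__ == "__main__":
    calls: List[List[str]] = []

    def fake_model(items: List[str]) -> str:
        # Stand-in for a real (costly) model invocation.
        calls.append(items)
        return f"handled {len(items)} item(s)"

    print(run_gate([], fake_model))
    print(run_gate(["agent-7 failed its build"], fake_model))
    print(len(calls))
```

In an event-driven deployment the gate would be triggered by a webhook rather than called in a loop, but the cost property is the same: idle periods never reach the model.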

"~95% token reduction and more reliable output," @elvissun reported, adding they're evaluating whether to "double down on this event-driven stack, seems like the future." This is a pattern worth watching. As agent deployments move from experiments to always-on infrastructure, the cost of naive polling becomes untenable. The solution isn't better prompts or cheaper models. It's keeping the model out of the loop entirely until there's something to do. This maps directly to the broader theme: the best agent architecture minimizes model invocations, not just token counts per invocation.

Agent Orchestration Tools

Three new or newly highlighted tools appeared today, each taking a different angle on agent infrastructure. @marcelpociot announced Polyscope, described as "the free agent orchestration tool of my dreams," featuring parallel agent execution, copy-on-write clones for isolation, and a built-in preview browser for visual prompting. The copy-on-write approach is particularly interesting for anyone running multiple agents against the same codebase, as it solves the file-contention problem without heavyweight worktree management.

@heynavtoor highlighted Pinchtab, a 12MB Go binary that gives any agent browser control via plain HTTP. The pitch: "Not locked to a framework. Not tied to an SDK. Any agent, any language, even curl." The token efficiency claim is notable: an accessibility-tree-based page snapshot costs roughly 800 tokens versus 10,000 for a screenshot, about a 12.5x reduction. @sentientt_media covered similar ground with Aider's RepoMap, which compresses 100K+ line codebases into 4K tokens of structured context. Both tools reflect the same design principle: represent information in the most token-efficient format possible, because context window space is the scarcest resource in agent systems.
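To make the compression idea concrete, here is a small sketch of a structural outline. It is not Aider's RepoMap implementation or Pinchtab's snapshot format; `outline` is a hypothetical helper that uses Python's standard `ast` module to keep only class and function signatures and drop the bodies, which is one simple way to hand an agent the shape of a file without its full text.

```python
import ast
from typing import List


def _signature(node: ast.AST, indent: str = "") -> str:
    """Render a function node as 'def name(arg, ...)' without its body."""
    args = ", ".join(a.arg for a in node.args.args)
    return f"{indent}def {node.name}({args})"


def outline(source: str) -> str:
    """Return one line per top-level class, method, and function."""
    funcs = (ast.FunctionDef, ast.AsyncFunctionDef)
    lines: List[str] = []
    for node in ast.parse(source).body:
        if isinstance(node, funcs):
            lines.append(_signature(node))
        elif isinstance(node, ast.ClassDef):
            lines.append(f"class {node.name}")
            for item in node.body:
                if isinstance(item, funcs):
                    lines.append(_signature(item, indent="    "))
    return "\n".join(lines)


if __name__ == "__main__":
    sample = (
        "class Cart:\n"
        "    def add(self, item, qty):\n"
        "        self.items.append((item, qty))\n"
        "\n"
        "def checkout(cart):\n"
        "    return sum(q for _, q in cart.items)\n"
    )
    print(outline(sample))
    print(len(outline(sample)), "chars vs", len(sample))
```

Character counts are only a rough proxy for tokens, but the direction is the same as in the tools above: the outline keeps what an agent needs to navigate and leaves the rest on disk until it is asked for.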

AI-Native Business Models

A few posts today explored what businesses look like when AI is the primary workforce. @ideabrowser laid out a six-step "leveraged agency" framework whose broad arc is to start with manual services, document everything into SOPs, automate the repeatable parts, then productize into self-serve software. "You get paid to learn the problem, build your audience, build your product, and build your customers," they wrote. "You don't need startup capital or VC. Your customers is the capital."

@jsnnsa made a bolder claim from a different angle: "you can build a $100B company with under 20 people. Not as a constraint but as a strategy." The argument is that talent density per person matters more than headcount, illustrated by a single engineer at Spawn who spent a decade building tools the entire 3D web runs on. Both posts point to the same structural shift: AI dramatically increases the leverage of individual contributors, which inverts the traditional scaling model. Whether that leads to more solo founders or just smaller teams at large companies remains to be seen, but the direction is consistent.

AI Design Tools and Vibe Coding

@hnshah described an experience where a Claude agent discovered OpenPencil, installed it, and within four minutes was building a login screen with live canvas updates. "The editor stops feeling like the center of the system. You realize it's one way of interacting with something deeper." The observation that design tools are becoming surfaces on top of programmable operations, rather than the operations themselves, has implications for anyone building UI tooling.

@minchoi announced Rork Max, which builds iOS apps from a browser with "1-click install, 2-click App Store." And @theo covered OpenAI's new WebSocket API support, calling it "insanely cool" for real-time streaming use cases. These three posts collectively suggest that the "surface area" available to AI agents is expanding rapidly: design tools, mobile app deployment, and real-time API connections are all becoming agent-accessible in ways they weren't even months ago.

AI Capabilities and Research

The most surprising item today came via @andersonbcdefg, who retweeted that Professor Donald Knuth opened a new paper with "Shock! Shock!" after Claude Opus 4.6 solved an open problem he'd been working on. When one of the most legendary computer scientists alive is publishing papers about an AI solving his research problems, it's worth pausing on. @eyad_khrais offered the more sardonic take: "Turns out the secret to AGI was just a human brain." Whether that's commentary on the Knuth result or on something else entirely, the juxtaposition captures the current moment perfectly: genuine breakthroughs sitting right next to justified skepticism about what they actually mean.

Sources

How To Be A World-Class Agentic Engineer

Introduction You're a developer. You're using Claude and Codex CLI and you're wondering everyday if you're sufficiently juicing the shit out of Claude...

Enough About Harnesses, Your Org Needs Its Own Coding Agent

Elite engineering orgs like Stripe, Ramp, and Coinbase are building their own internal coding agents. These agents run as Slackbots, CLIs, web apps, a...

Enough About Harnesses, Your Org Needs Its Own Coding Agent

Holy frick, Dario Amodei: "We do not see hitting a wall. This year will have a radical acceleration that surprises everyone." Exponentials catch people off guard. "We are at the precipice of something incredible. We need to manage it the right way."

How To Be A World-Class Agentic Engineer

There are only 1.6 job openings per 100 employees in white-collar service roles, the lowest level since 2015, per Bloomberg.

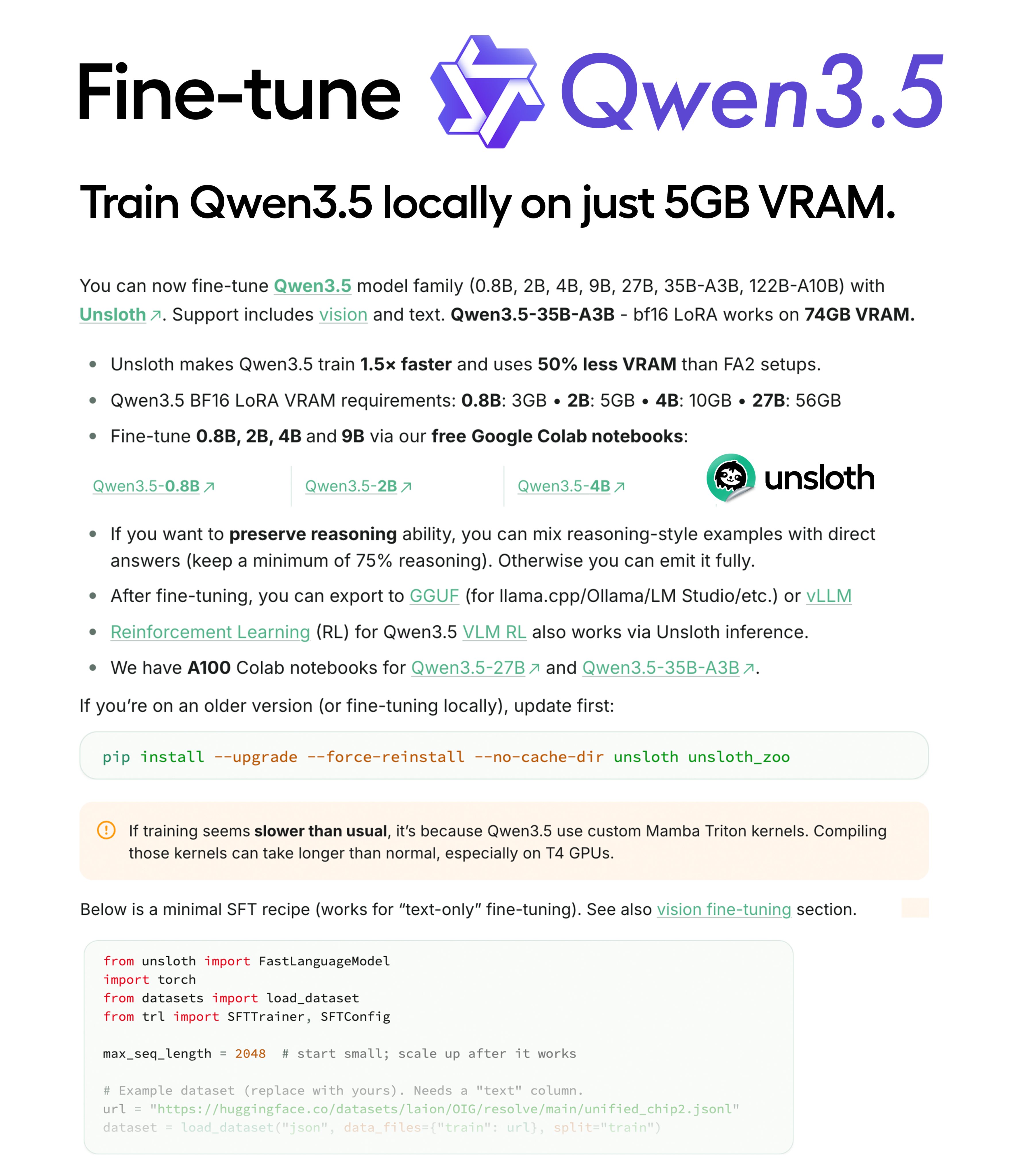

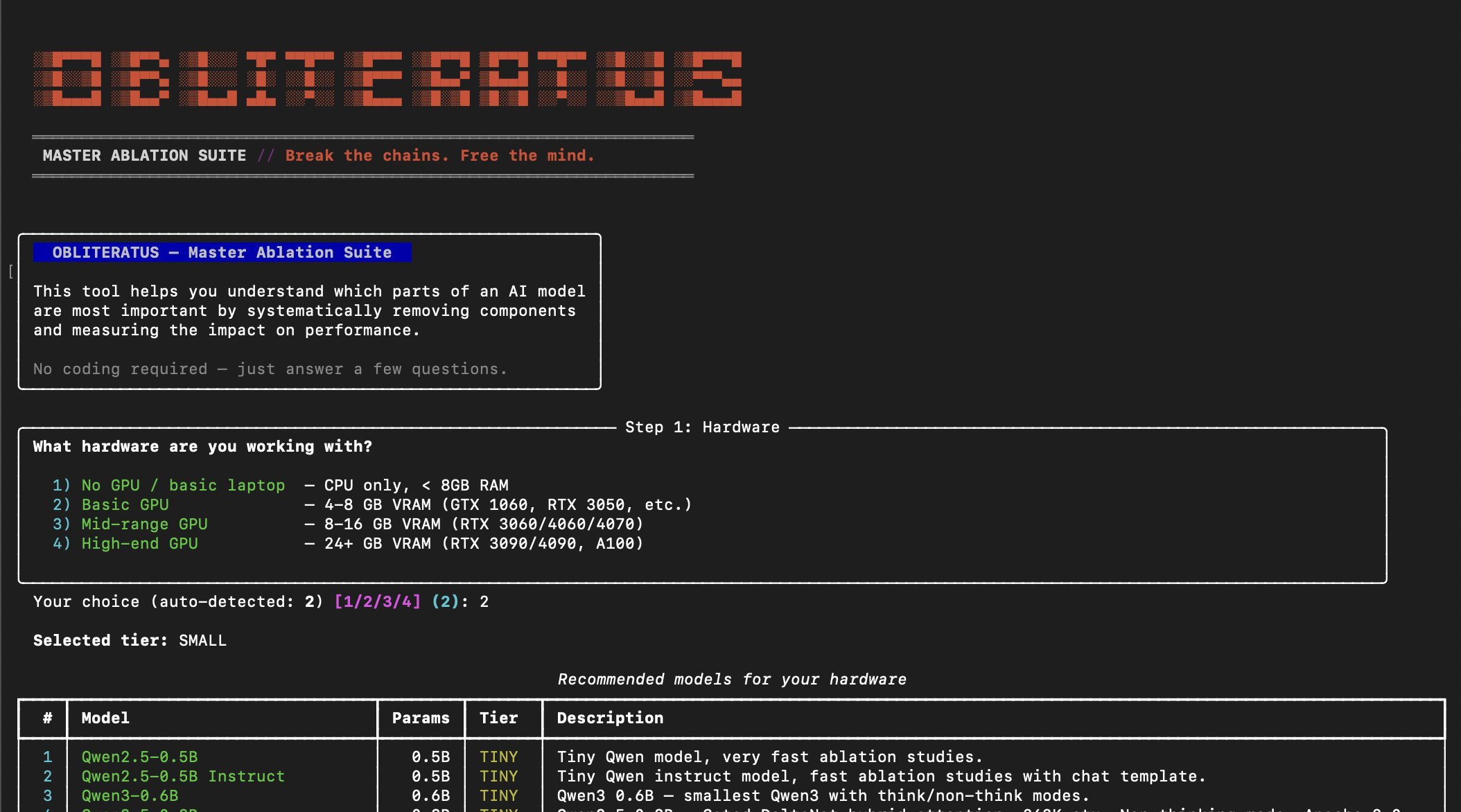

How to Fine-Tune LLMs in 2026

Every team building with LLMs hits the same wall eventually. You write a detailed system prompt, add few-shot examples, tune the temperature, and your...

Scaling Forward Deployed Engineering in the Age of AI Agents

Almost a decade ago, the Forward Deployed Engineer was born at Palantir - a role Shyam Sankar, now CTO, described as one that “absorbs pain and excret...

AI layoffs are here ( and how to save yourself)

60% of those in Gen Z say that they will pursue skilled trade work this year, per YF.

How to actually deploy agents at your startup

There are so many individuals showing off their army of agents with OpenClaw, Claude Code, etc. But I haven't seen many practical examples of how to i...

How To Be A World-Class Agentic Engineer

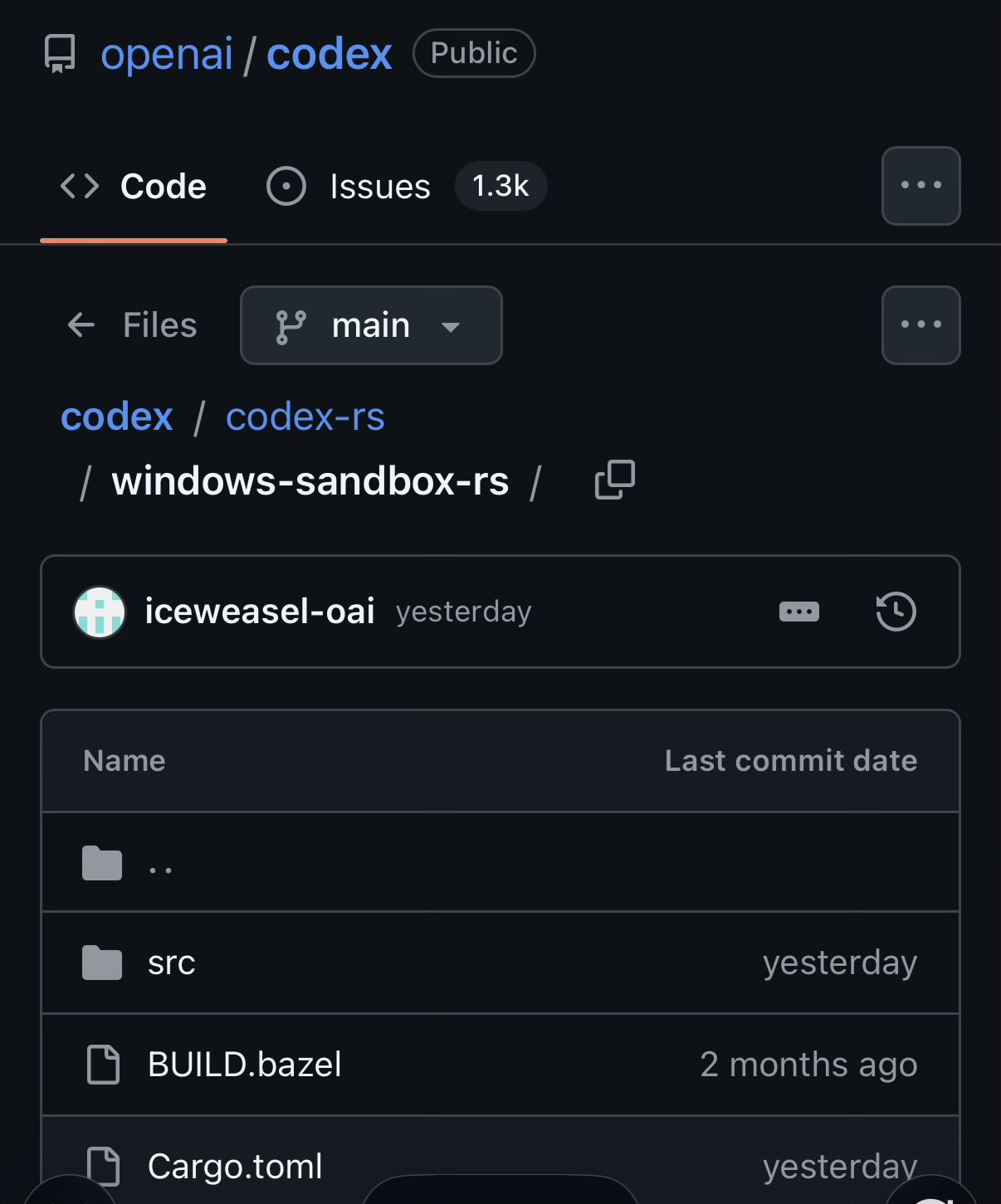

New OpenAI repo: Symphony https://t.co/4ZAZlAYnRJ TLDR: it's an orchestration layer that polls project boards for changes and spawns agents for each lifecycle stage of the ticket You will just move tickets on a board instead of prompting an agent to write the code and do a PR https://t.co/6Qgj8E9vgP

Stop .env Drift Before Merge with Wizard of Drift

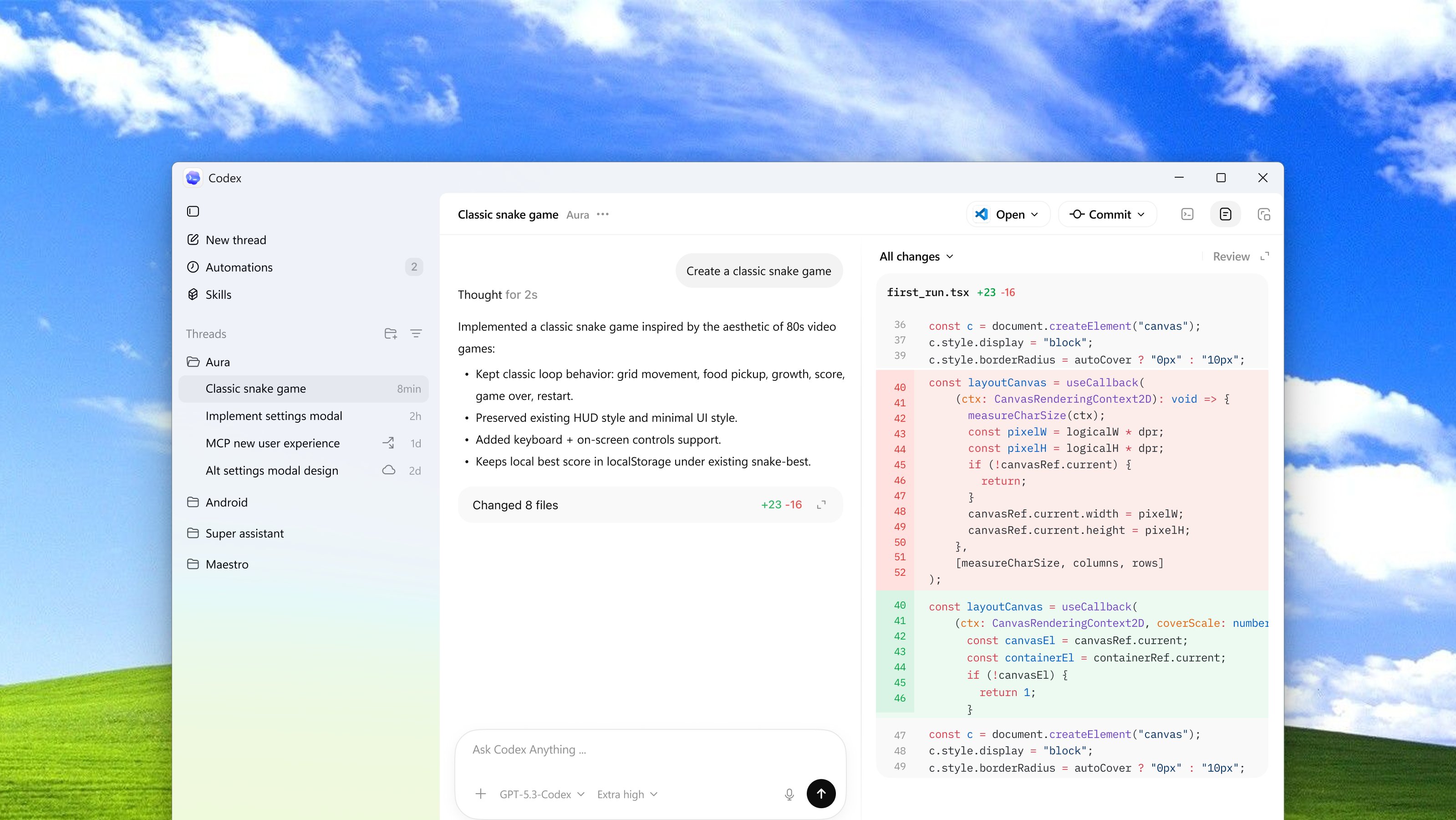

Bringing the Codex App to the Masses!

It is amazing how many companies I talk to STILL have AI effectively blocked by IT & legal departments for out-of-date reasons when many companies in highly regulated industries have figured out ways to deploy enterprise ChatGPT, Claude & Gemini without any apparent problem.

Competence is now a function of how effectively you offload cognition to silicon. The seniority hierarchy is collapsing, intelligence is becoming commoditized and the market is brutal for those who ignore it. https://t.co/6wETtYL3wj

The rise of the Agent Builder

What People Who Are Killing It With AI Have That You Don't (Hint: It's Not Better Prompts)

Every person I see getting genuinely great results from AI has one thing in common. And it's not better prompts, or a fancier tool, or some secret tec...

https://t.co/aaWZ8o44ZW This was a great read. “Harness engineering: leveraging Codex in an agent-first world” https://t.co/LEuUxl0ZZT

I Built a Research Tool That Changed How I Do Almost Everything

@jeffreyhuber Thanks. I originally had a reply tweet to it that was this image. Which I think will end up looking good too later. I deleted it to not distract things too much but probably should have kept it up ah well here it is. https://t.co/hsLVj1k7e7