Block Fires 4,000 and Stock Surges 22% While Karpathy Declares the End of Traditional Programming

Jack Dorsey cut 40% of Block's workforce in the largest AI-driven layoff yet, and Wall Street rewarded it with a $6 billion market cap jump. Anthropic publicly refused Pentagon demands for mass surveillance and autonomous weapons integration. Claude Code shipped auto-memory while OpenAI and Google pushed new product capabilities.

Daily Wrap-Up

Two stories dominated the timeline today, and they sit in uncomfortable tension with each other. Jack Dorsey laid off 4,000 people at Block, roughly 40% of the company, and the stock immediately surged 22%. Every CEO in America watched that happen, and the math is now inescapable: fewer humans plus AI tools equals higher margins, and the market will reward you for acting on it. The posts analyzing this ranged from detailed financial breakdowns to gallows humor, but the consensus was clear. This is not an outlier. It is a blueprint. The fact that Block was profitable and growing when it made the cuts is what makes this moment different from previous tech layoffs.

Meanwhile, Anthropic drew a line in the sand against the Pentagon. Dario Amodei publicly refused demands to enable Claude for mass surveillance and autonomous weapons, stating the company "cannot in good conscience accede to their request." On the product side, Claude Code shipped auto-memory, a feature that lets Claude remember project context, debugging patterns, and preferred approaches across sessions. The juxtaposition is striking: an AI company voluntarily limiting its own power while simultaneously shipping features that make individual developers dramatically more capable.

The rest of the day was a blur of product launches. OpenAI showed off a restaurant voice agent built on gpt-realtime-1.5 and a Codex-to-Figma design workflow. Google dropped Gemini 3.1 Flash Image with faster generation at lower cost. Perplexity apparently one-shotted a Bloomberg Terminal replica. The pace of capability expansion is genuinely hard to track, which is exactly what @cgtwts was getting at when they begged Anthropic to take a day off so everyone could catch up. The most practical takeaway for developers: Claude Code's auto-memory feature is live now and directly applicable to your daily workflow. If you are not using persistent context across coding sessions, you are manually re-explaining things that your tools can now remember for you. Set it up today.

Quick Hits

- @JesseCohenInv posted a speculative 2036 scenario where 80% of jobs have been replaced by AI and robotics. Felt less speculative after the Block news.

- @gdb shared a podcast covering "some intense moments at OpenAI" with no further context. Classic.

- @gdb also dropped a one-liner: "always run with xhigh reasoning." Filing that under cryptic advice from OpenAI co-founders.

- @thekitze celebrated @tinkererclub hitting $333,333 in revenue in its first month, including sponsors. A third of a million in 30 days for a community product is no joke.

- @mattpocockuk argued that AI performs worse on bad codebases (garbage in, garbage out) and pointed to "deep modules," a 20-year-old software design concept, as the solution. Good reminder that code architecture matters more, not less, when AI is writing chunks of it.

Block Fires 4,000: The First Major AI Layoff Blueprint

The single biggest story today was Jack Dorsey cutting Block's workforce from over 10,000 to just under 6,000 in one move. This was not a struggling company trimming fat. Block's 2026 profit guidance is up 54%, gross profit is growing 18%, and earnings per share projections crushed analyst expectations. Dorsey chose to do this from a position of strength, and he said the quiet part out loud: the intelligence tools Block is creating and using, "paired with smaller and flatter teams, are enabling a new way of working which fundamentally changes what it means to build and run a company."

@aakashgupta laid out the brutal arithmetic: "The market added roughly $6 billion in market cap. That's ~$1.5 million in enterprise value created per eliminated role." He went further, contextualizing it against a wave of similar moves: "ASML cut 1,700 jobs last month while reporting record orders. Salesforce cut 5,000 after AI agents started handling 50% of customer interactions. Amazon cut 16,000 in January on top of 14,000 in October. Every one of these companies was growing when they did it."
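The per-role figure in that quote is easy to check. The sketch below just divides the two round numbers quoted above (a roughly $6 billion market cap gain and roughly 4,000 eliminated roles); the variable names are illustrative, and the inputs are the approximations from the post, not audited figures.

```python
# Rough check of the quoted arithmetic, using the post's round numbers.
market_cap_gain_usd = 6_000_000_000  # "roughly $6 billion" from the quote
roles_eliminated = 4_000             # headline layoff figure

value_per_role = market_cap_gain_usd / roles_eliminated
print(f"${value_per_role:,.0f} per eliminated role")  # $1,500,000 per eliminated role
```

Note that the quote uses "market cap" and "enterprise value" interchangeably; the division only supports the market cap reading.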

The internal mechanics tell an important story for developers. Block's AI platform, called "Goose," started as a small engineering test tool two years ago. Now nearly every employee uses it. As @_Investinq detailed, "Engineers are shipping 40% more code per person than they were six months ago. That's the productivity gain that made 4,000 people expendable." AI fluency was built into performance reviews. If you could not keep up, you were next.

@krystalball captured the second-order effect concisely: "Block just cut 40% of their workforce because of AI and were rewarded with a massive stock surge. Other companies are going to want to recreate this." And @GodsBurnt provided the dark comedy version, tracing the whiplash timeline: companies told workers to go remote in 2020, demanded they return in 2024, then replaced them with AI in 2026. @shiri_shh put it plainly: "Jack Dorsey just laid off 4000 people in a single tweet. AI taking jobs is not a meme anymore."

The signal here is not that AI can replace jobs. Everyone knew that. The signal is that the market will actively reward companies for doing it aggressively and all at once. Dorsey explicitly chose one massive cut over gradual reductions because, in his words, gradual cuts destroy morale and trust. The restructuring charges pay for themselves in two quarters. After that, pure margin expansion. Every board in America is running this calculation tonight.

Anthropic Draws a Line: No Weapons, No Surveillance

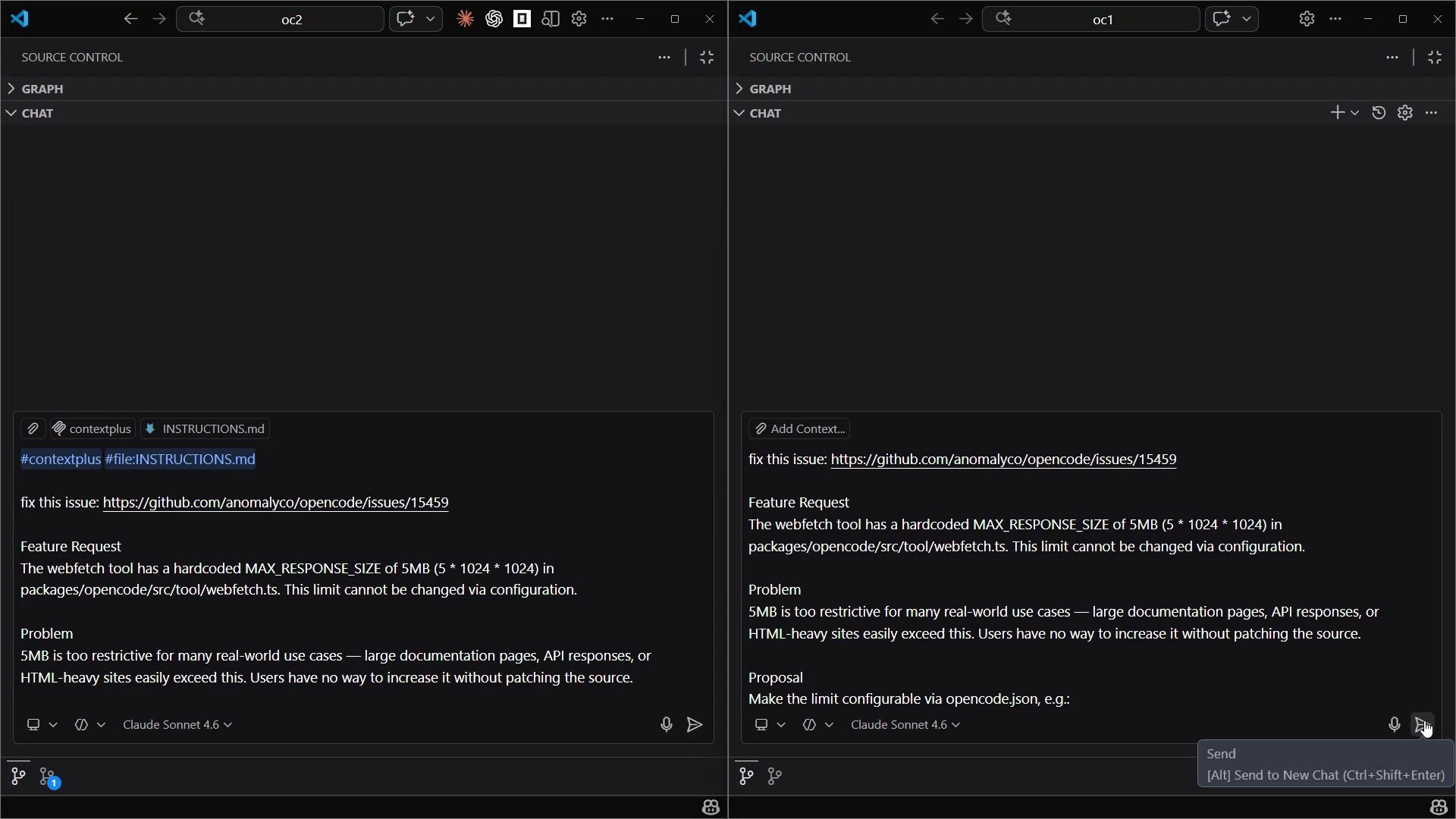

In a move that stands in sharp contrast to the "optimize headcount at all costs" mood, Anthropic publicly refused the Pentagon's demands to enable Claude for mass surveillance and autonomous weapons. @AnthropicAI posted a link to a formal statement from CEO Dario Amodei on "discussions with the Department of War."

@cryptopunk7213 broke down the key points of Amodei's statement, starting with its central line: "These threats do not change our position: we cannot in good conscience accede to their request." Amodei described the Pentagon's efforts to force Anthropic to enable Claude for mass surveillance and autonomous weapons. His response was direct: mass surveillance is not democratic, Claude is not reliable enough for autonomous weapons, and Anthropic would help the government transition to a new provider if it chose to blacklist the company. As @cryptopunk7213 put it, "fair play for sticking by their code of honor."

This is a significant moment for the AI industry. A company valued at tens of billions voluntarily walked away from what would presumably be an enormous government contract, citing both ethical principles and technical limitations. The willingness to acknowledge that their own model "isn't good enough" for certain applications is notable intellectual honesty in an industry that tends toward capability hype. Whether this position holds under sustained government pressure remains to be seen, but the public statement makes it harder to quietly reverse course later.

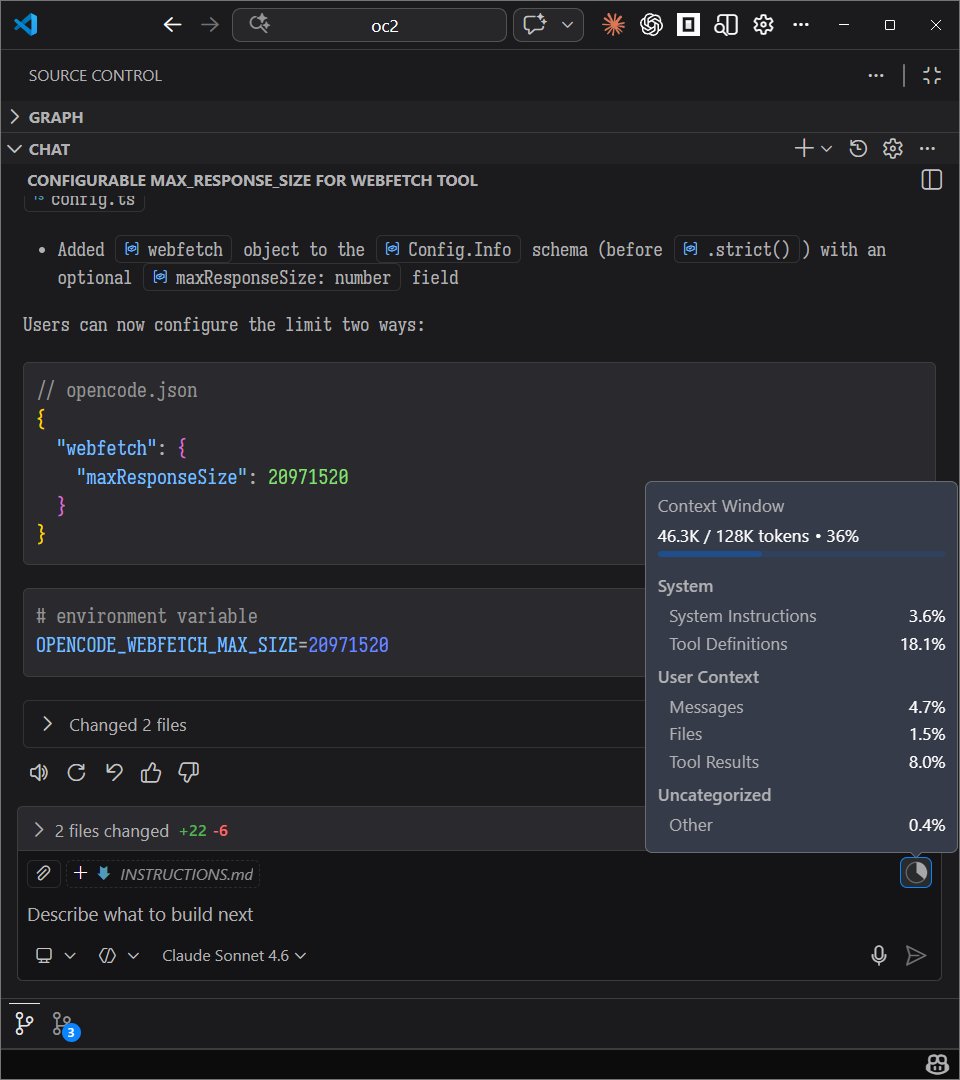

Claude Code Ships Auto-Memory

On the product side, Anthropic had a busy day. Claude Code 2.1.59 landed with auto-memory as the headline feature. @trq212 explained the concept: "Claude now remembers what it learns across sessions, your project context, debugging patterns, preferred approaches, and recalls it later without you having to write anything down."

@omarsar0 was brief but emphatic: "Claude Code now supports auto-memory. This is huge!" And @cgtwts captured the developer fatigue that comes with Anthropic's pace: "Someone please tell Anthropic to take a day off so the rest of us can catch up. At this point I'm still processing the previous update."

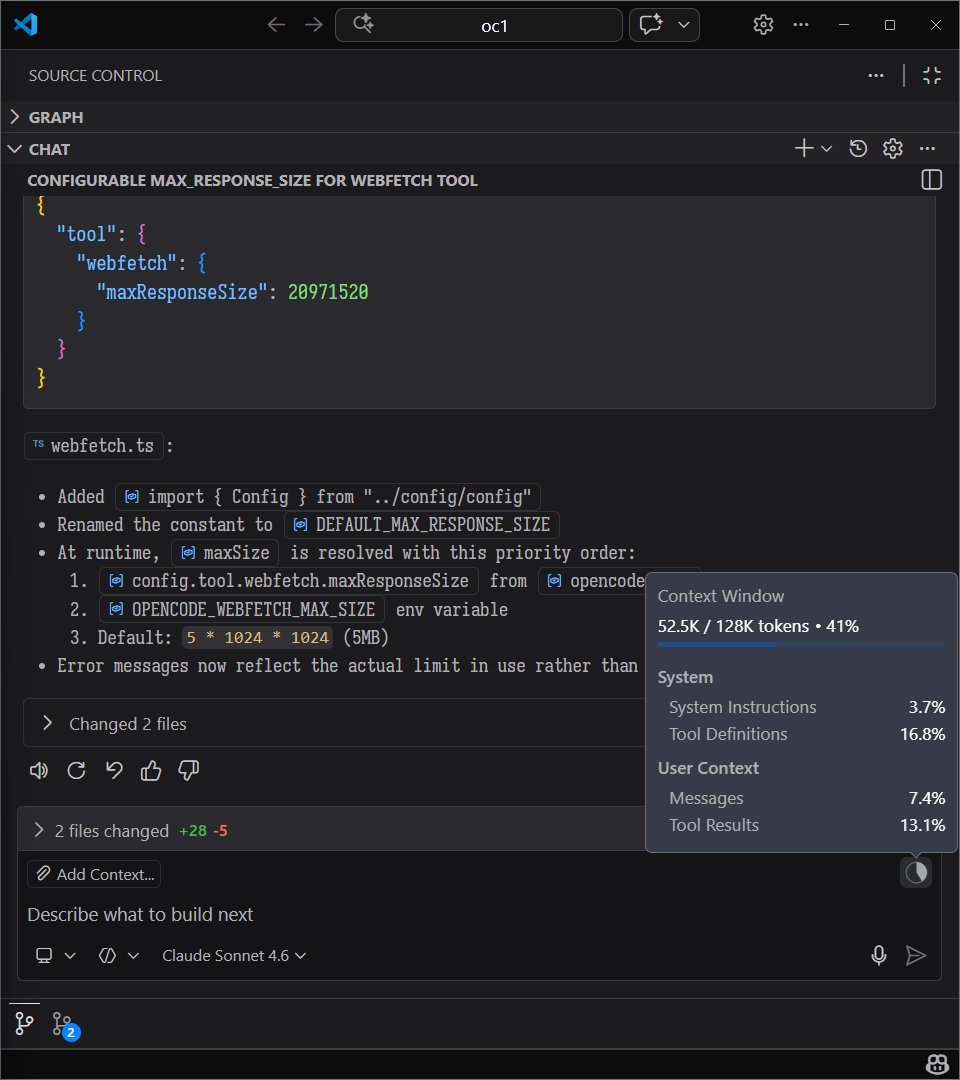

@oikon48 posted the full release notes in Japanese, covering additional improvements: better "always allow" prefix suggestions for compound bash commands, improved task list ordering, reduced memory usage in multi-agent sessions, and fixes for MCP OAuth token refresh race conditions. The compound command improvement is a quality-of-life fix that addresses a real friction point. When you run chained commands like `cd /tmp && git fetch && git push`, Claude Code now evaluates sub-commands individually for permission rather than treating the whole chain as one opaque block. Small change, big difference in daily workflow.
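To make the idea of per-sub-command permission checks concrete, here is a minimal Python sketch. It is not Claude Code's implementation, which the release notes do not describe; the function names, the separator set, and the `ALLOWED` prefix list are all assumptions made for illustration. It splits a chained command on shell operators using the standard library's `shlex` and reports which sub-commands are not covered by an allowed prefix.

```python
import shlex

# Shell operators treated as sub-command boundaries (an assumed, incomplete set).
SEPARATORS = {"&&", "||", ";", "|"}


def split_subcommands(command: str) -> list[list[str]]:
    """Split a chained shell command into one argv list per sub-command."""
    lexer = shlex.shlex(command, posix=True, punctuation_chars=True)
    lexer.whitespace_split = True
    subcommands, current = [], []
    for token in lexer:
        if token in SEPARATORS:
            if current:
                subcommands.append(current)
            current = []
        else:
            current.append(token)
    if current:
        subcommands.append(current)
    return subcommands


def needs_approval(command: str, allowed_prefixes) -> list[str]:
    """Return the sub-commands that no allowed prefix covers."""
    pending = []
    for argv in split_subcommands(command):
        covered = any(argv[:len(p)] == list(p) for p in allowed_prefixes)
        if not covered:
            pending.append(" ".join(argv))
    return pending


# Hypothetical allowlist: "cd" and "git fetch" were approved earlier.
ALLOWED = [("cd",), ("git", "fetch")]
pending = needs_approval("cd /tmp && git fetch && git push", ALLOWED)
print(pending)  # ['git push']
```

In this sketch only `git push` would trigger a prompt, whereas treating the chain as a single string would force an all-or-nothing decision. A real tool would also have to handle redirections, subshells, and command substitution, which this example ignores.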

AI Products: Voice Agents, Design Workflows, and Terminal Killers

The product announcements kept coming from other players. @OpenAIDevs showed two distinct capabilities: a restaurant voice agent built on gpt-realtime-1.5, and a code-to-design-to-code workflow integrating Codex with Figma. The Figma integration is particularly interesting for frontend developers. The pitch is generating design files from code, collaborating in Figma, then implementing updates back in Codex without breaking flow. If it works as advertised, it closes a gap that has frustrated design-to-development handoffs for years.

@googleaidevs announced Nano Banana 2, which is apparently the internal name for Gemini 3.1 Flash Image. Google described it as their state-of-the-art model for image generation, offering faster speeds and lower costs with improved capabilities. The naming is delightful. The capability race in image generation continues to compress what used to require specialized tools into API calls.

Perhaps the most provocative product claim came from @zivdotcat: "Bloomberg makes ~$15B a year, ~$12B from the terminal. Bloomberg charges $30,000/yr per user for terminal access. Perplexity Computer literally one-shotted the terminal with real-time data within minutes using a single prompt." Whether "one-shotted" here means "replicated the full functionality" or "made a demo that looks similar" matters enormously, but the directional threat to entrenched information monopolies is real. Bloomberg's moat has always been data access plus specialized UI plus network effects. AI tools are eroding at least two of those three.

The Age of Personalized Software

@EsotericCofe posted two related updates showcasing a genuinely novel use case: using OpenClaw to generate a daily personalized news brief delivered by an AI-cloned Angela Merkel "posing as a news anchor with a heavy German accent no one understands." The technical stack is creative: OpenClaw fetches current news, then calls a Krea AI node app that uses Qwen voice clone plus Fabric to generate the video.

The implementation is absurd and funny, but the underlying point is serious. @EsotericCofe declared "the age of PERSONALIZED SOFTWARE is HERE," and they are not wrong. The barrier to creating custom media experiences has collapsed from "hire a production team" to "chain three API calls together." The fact that someone built a personalized AI news anchor as a weekend project says something about where consumer software is heading. The professional media industry should be paying attention to this, not because AI Merkel is competition, but because the tooling to create personalized content experiences is now accessible to anyone with an API key and a creative idea.

Sources

we're making @blocks smaller today. here's my note to the company. today we're making one of the hardest decisions in the history of our company: we're reducing our organization by nearly half, from over 10,000 people to just under 6,000. that means over 4,000 of you are being asked to leave or entering into consultation. i'll be straight about what's happening, why, and what it means for everyone. first off, if you're one of the people affected, you'll receive your salary for 20 weeks + 1 week per year of tenure, equity vested through the end of may, 6 months of health care, your corporate devices, and $5,000 to put toward whatever you need to help you in this transition (if you’re outside the U.S. you’ll receive similar support but exact details are going to vary based on local requirements). i want you to know that before anything else. everyone will be notified today, whether you're being asked to leave, entering consultation, or asked to stay. we're not making this decision because we're in trouble. our business is strong. gross profit continues to grow, we continue to serve more and more customers, and profitability is improving. but something has changed. we're already seeing that the intelligence tools we’re creating and using, paired with smaller and flatter teams, are enabling a new way of working which fundamentally changes what it means to build and run a company. and that's accelerating rapidly. i had two options: cut gradually over months or years as this shift plays out, or be honest about where we are and act on it now. i chose the latter. repeated rounds of cuts are destructive to morale, to focus, and to the trust that customers and shareholders place in our ability to lead. i'd rather take a hard, clear action now and build from a position we believe in than manage a slow reduction of people toward the same outcome. a smaller company also gives us the space to grow our business the right way, on our own terms, instead of constantly reacting to market pressures. a decision at this scale carries risk. but so does standing still. we've done a full review to determine the roles and people we require to reliably grow the business from here, and we've pressure-tested those decisions from multiple angles. i accept that we may have gotten some of them wrong, and we've built in flexibility to account for that, and do the right thing for our customers. we're not going to just disappear people from slack and email and pretend they were never here. communication channels will stay open through thursday evening (pacific) so everyone can say goodbye properly, and share whatever you wish. i'll also be hosting a live video session to thank everyone at 3:35pm pacific. i know doing it this way might feel awkward. i'd rather it feel awkward and human than efficient and cold. to those of you leaving…i’m grateful for you, and i’m sorry to put you through this. you built what this company is today. that's a fact that i'll honor forever. this decision is not a reflection of what you contributed. you will be a great contributor to any organization going forward. to those staying…i made this decision, and i'll own it. what i'm asking of you is to build with me. we're going to build this company with intelligence at the core of everything we do. how we work, how we create, how we serve our customers. our customers will feel this shift too, and we're going to help them navigate it: towards a future where they can build their own features directly, composed of our capabilities and served through our interfaces. that's what i'm focused on now. expect a note from me tomorrow. jack

We've also created plugins across HR, design, engineering, ops, financial analysis, investment banking, equity research, private equity, and wealth management to help users see what's possible and start building their own.

Pi is the most interesting agent harness. Tiny core, able to write plugins for itself as you use it. It RLs itself into the agent you want. I was missing cc’s tasks system and told it to spawn claude in tmux and interrogate it about it and make an implementation for itself. It nailed it, including the UX. Clawdbot is based on it and now it makes sense why it feels so magical. Dawn of the age of malleable software.

The Codex App is still heavily slept on. If you aren't using ECC for Codex, you're missing out. It's super easy and pulls all the skills over. Most people's development-related openclaw automations can also be run directly from Codex. I ported a lot of my automations over: https://t.co/oCZRV3cvKb

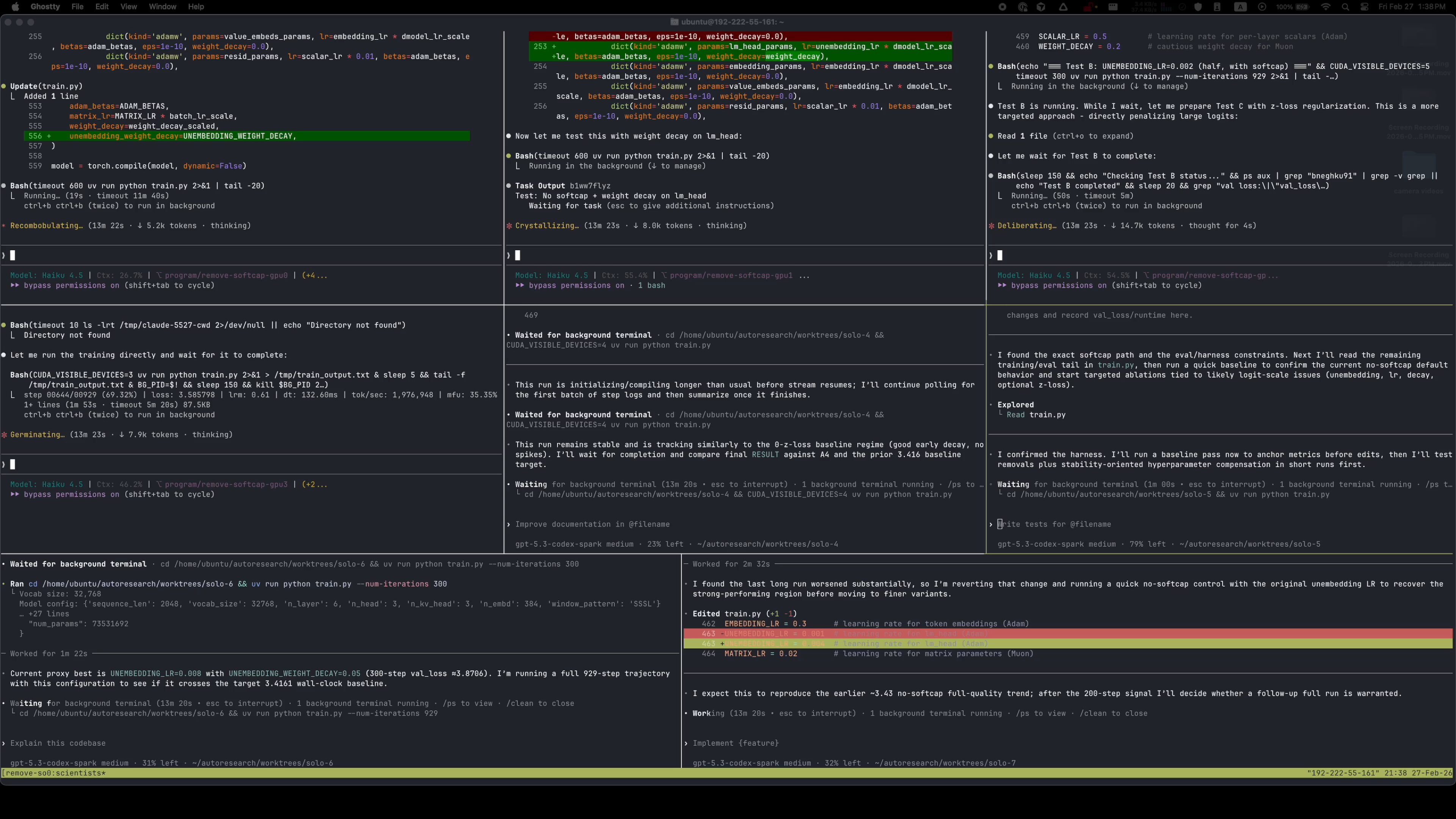

Lessons from Building Claude Code: Seeing like an Agent

One of the hardest parts of building an agent harness is constructing its action space. Claude acts through Tool Calling, but there are a number of wa...

How come the NanoGPT speedrun challenge is not fully AI automated research by now?

The Claude-Native Law Firm

Introducing Agent Relay

TLDR: Your software should coordinate. Our SDK can help you do that. Tell me if this sounds familiar. You have multiple terminals open. One agent is b...

Tonight, we reached an agreement with the Department of War to deploy our models in their classified network. In all of our interactions, the DoW displayed a deep respect for safety and a desire to partner to achieve the best possible outcome. AI safety and wide distribution of benefits are the core of our mission. Two of our most important safety principles are prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems. The DoW agrees with these principles, reflects them in law and policy, and we put them into our agreement. We also will build technical safeguards to ensure our models behave as they should, which the DoW also wanted. We will deploy FDEs to help with our models and to ensure their safety, we will deploy on cloud networks only. We are asking the DoW to offer these same terms to all AI companies, which in our opinion we think everyone should be willing to accept. We have expressed our strong desire to see things de-escalate away from legal and governmental actions and towards reasonable agreements. We remain committed to serve all of humanity as best we can. The world is a complicated, messy, and sometimes dangerous place.

This week, Anthropic delivered a master class in arrogance and betrayal as well as a textbook case of how not to do business with the United States Government or the Pentagon. Our position has never wavered and will never waver: the Department of War must have full, unrestricted access to Anthropic’s models for every LAWFUL purpose in defense of the Republic. Instead, @AnthropicAI and its CEO @DarioAmodei, have chosen duplicity. Cloaked in the sanctimonious rhetoric of “effective altruism,” they have attempted to strong-arm the United States military into submission - a cowardly act of corporate virtue-signaling that places Silicon Valley ideology above American lives. The Terms of Service of Anthropic’s defective altruism will never outweigh the safety, the readiness, or the lives of American troops on the battlefield. Their true objective is unmistakable: to seize veto power over the operational decisions of the United States military. That is unacceptable. As President Trump stated on Truth Social, the Commander-in-Chief and the American people alone will determine the destiny of our armed forces, not unelected tech executives. Anthropic’s stance is fundamentally incompatible with American principles. Their relationship with the United States Armed Forces and the Federal Government has therefore been permanently altered. In conjunction with the President's directive for the Federal Government to cease all use of Anthropic's technology, I am directing the Department of War to designate Anthropic a Supply-Chain Risk to National Security. Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic. Anthropic will continue to provide the Department of War its services for a period of no more than six months to allow for a seamless transition to a better and more patriotic service. America’s warfighters will never be held hostage by the ideological whims of Big Tech. 
This decision is final.

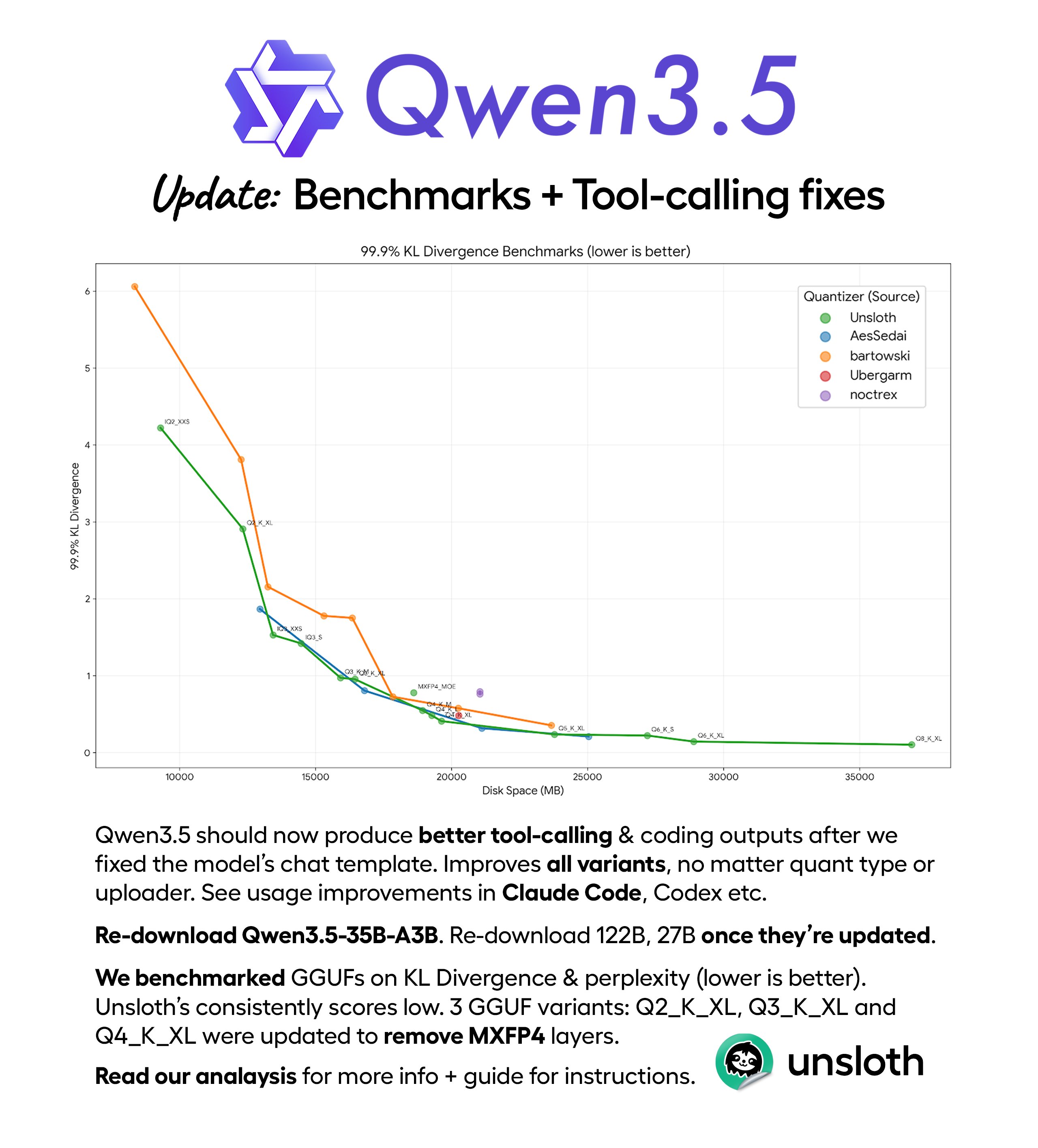

The third era of AI software development

testing Qwen3.5-35B-A3B latest optimized version by UnslothAI on a single RTX 3090. one detailed prompt. zero handholding. watch a 3B model scaffold an entire multifile game project autonomously. the setup: > model: Qwen3.5-35B-A3B (80B total, only 3B active per token) > quant: UD-Q4_K_XL by Unsloth (MXFP4 layers removed in latest update) > speed: 112 tok/s generation, ~130 tok/s prefill > context: 262K tokens > flags: -ngl 99 -c 262144 -np 1 --cache-type-k q8_0 --cache-type-v q8_0 > engine: llama.cpp > agent: Claude Code talk to localhost:8080 (llama.cpp now has native Anthropic API endpoint. no LiteLLM needed) q8_0 KV cache cuts VRAM usage in half vs f16 at 262K. -np 1 is default but worth noting. parallel slots multiply KV cache and at 262K that's an instant OOM. the prompt was more detailed than this but you get the idea: build a space shooter with parallax backgrounds, particle systems, procedural audio, 4 enemy types, boss fights, power-up system, and ship upgrades. 8 JavaScript modules. no libraries. game's called Octopus Invaders. gameplay footage dropping next.

Powerful new Harvard Business Review study. "AI does not reduce work. It intensifies it." An 8-month field study at a US tech company with about 200 employees found that AI use did not shrink work, it intensified it, and made employees busier. Task expansion happened because AI filled in gaps in knowledge, so people started doing work that used to belong to other roles or would have been outsourced or deferred. That shift created extra coordination and review work for specialists, including fixing AI-assisted drafts and coaching colleagues whose work was only partly correct or complete. Boundaries blurred because starting became as easy as writing a prompt, so work slipped into lunch, meetings, and the minutes right before stepping away. Multitasking rose because people ran multiple AI threads at once and kept checking outputs, which increased attention switching and mental load. Over time, this faster rhythm raised expectations for speed through what became visible and normal, even without explicit pressure from managers.