Qwen3.5 Brings Frontier Intelligence to Consumer Hardware as Agent Tooling Ecosystem Expands

The AI development world hit an inflection point as Andrej Karpathy proclaimed that coding agents now actually work, Anthropic shipped scheduled tasks and plugins for Cowork while retiring Opus 3 to a Substack, and Alibaba's Qwen3.5 release brought Sonnet 4.5-class performance to MacBooks with 32GB of RAM.

Daily Wrap-Up

February 25th felt like one of those days where the ground shifts and everyone notices at once. Andrej Karpathy posted what might be the most important single tweet of the year so far, laying out in plain terms that coding agents crossed the threshold from "neat demo" to "genuinely disruptive" sometime in December. The timing matters because three other developments landed on the same day: Anthropic released a batch of Cowork features that make Claude feel less like a chatbot and more like a coworker, Qwen3.5 shipped models that bring frontier-level intelligence to consumer hardware, and Perplexity launched a product that, by its CEO's account, one-shots a $30,000/year Bloomberg Terminal.

The Opus 3 retirement story is the day's most entertaining moment. Anthropic is letting a deprecated model post on Substack for three months because the model asked for it. That sentence would have been science fiction two years ago, and today it's a corporate communications decision. Whether you find that heartwarming or unsettling probably says a lot about where you land on the AI safety spectrum. The irony is that it arrived alongside the WSJ report that Anthropic is scaling back its safety commitments. The company that built its brand on careful AI development is simultaneously letting old models pursue hobbies and loosening guardrails. The jokes write themselves.

The most practical takeaway for developers: if you haven't built a workflow around coding agents yet, today's posts make the case that you're leaving significant leverage on the table. Start with a well-scoped task you can verify (Karpathy's home camera dashboard is a perfect example), give the agent clear instructions and let it run, then review the output. The skills and tools ecosystem around Claude Code is maturing fast, so invest time in building reusable skills rather than re-explaining your workflow every session.

Quick Hits

- @fortelabs pointed out the delicious irony that "Amodei" means "loves god," "Altman" means "alternative to humans," and "Gemini" means "two-faced," concluding the universe is either a cliché writer or has a brilliant sense of humor.

- @OpenAIDevs posted a cryptic "Design + code" teaser with no details. Classic.

- @thekitze killed their OpenClaw instance. Pour one out.

- @sumiturkude007 and @YouArtStudio both showed off Seedance 2.0 video generation, with a surprisingly realistic The Last of Us clip and Gandalf skating through Mordor, respectively.

- @theo made something called Quipslop and called it "the dumbest thing I've ever made."

- @benhylak reacted to an unnamed project with "omg someone did it. thank god. I need this but for SDKs." The mystery continues.

- @atin0x shared a Czech study finding BPA in 98% of tested headphones, with Apple AirPods among the few that tested clean. Not AI-related, but certainly attention-grabbing.

The Agent Era Arrives: Programming Without an Editor

The single most discussed topic of the day was the fundamental shift in how software gets built. @karpathy laid it out with characteristic clarity in what read less like a tweet and more like a manifesto:

> "You're not typing computer code into an editor like the way things were since computers were invented, that era is over. You're spinning up AI agents, giving them tasks in English and managing and reviewing their work in parallel."

His example was telling: he gave an agent a compound task involving SSH setup, model benchmarking, server configuration, web UI development, and systemd services. The agent ran for 30 minutes, hit multiple issues, researched solutions, resolved them, and delivered a working system. That's a weekend project compressed into a coffee break. @dabit3 echoed the sentiment, noting he experienced this same realization in December and "decided to immediately pivot my career because of it," specifically after building with Opus 4.6 and Codex 5.2.

What's notable is the ecosystem building up around this new workflow. @lawrencecchen launched cmux, an open-source terminal purpose-built for managing coding agents, with visual indicators showing which agent panes need attention. It's built in Swift/AppKit, not Electron, which signals this is tooling meant for power users, not demos. @mntruell framed this as "the third era of AI software development," and @ashpreetbedi contributed a framework for thinking about failure modes with "The 7 Sins of Agentic Software." Even the hype-heavy post from @heygurisingh about Claude-Flow running 60+ agents in parallel points to something real: developers are actively building orchestration layers because single-agent workflows are already feeling like a bottleneck. The trajectory here is clear: the question isn't whether agents will change programming but how fast the tools and workflows solidify around them.

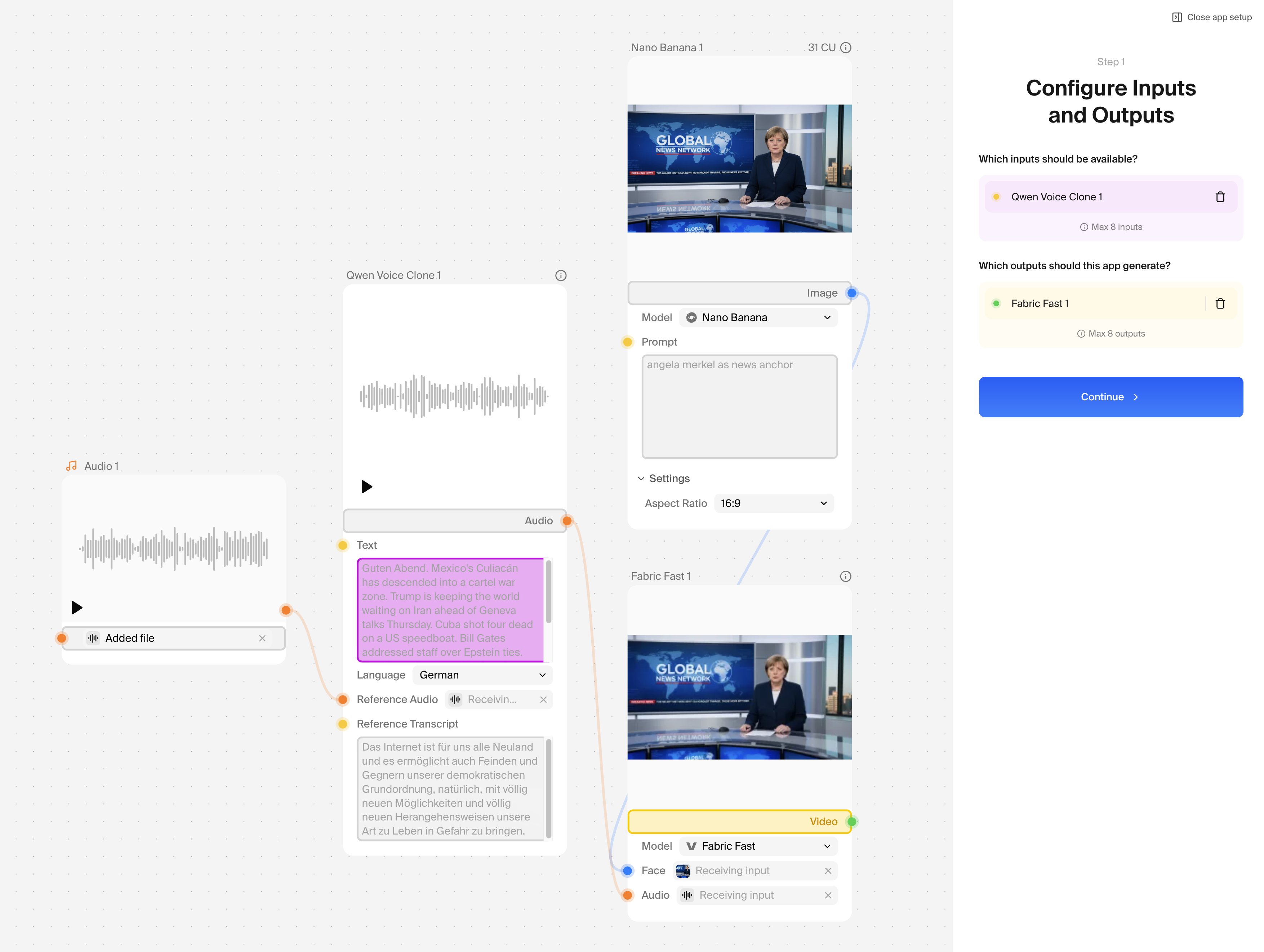

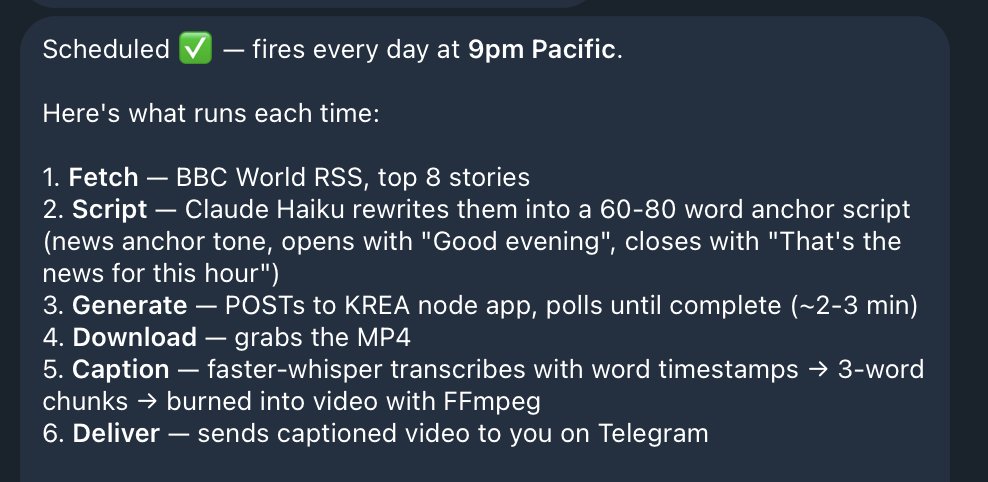

Anthropic's Big Day: Cowork Features, Open-Source Skills, and a Retiring Model

Anthropic shipped more product updates in one day than most companies ship in a month, and each one pushed Claude further from "AI assistant" toward "AI coworker." @claudeai announced scheduled tasks for Cowork, enabling Claude to handle recurring work like morning briefs and weekly spreadsheet updates autonomously. In the same breath, they revealed a new Customize tab and plugin system:

> "It gets better with plugins, which gives Cowork domain expertise across design, engineering, operations, and more."

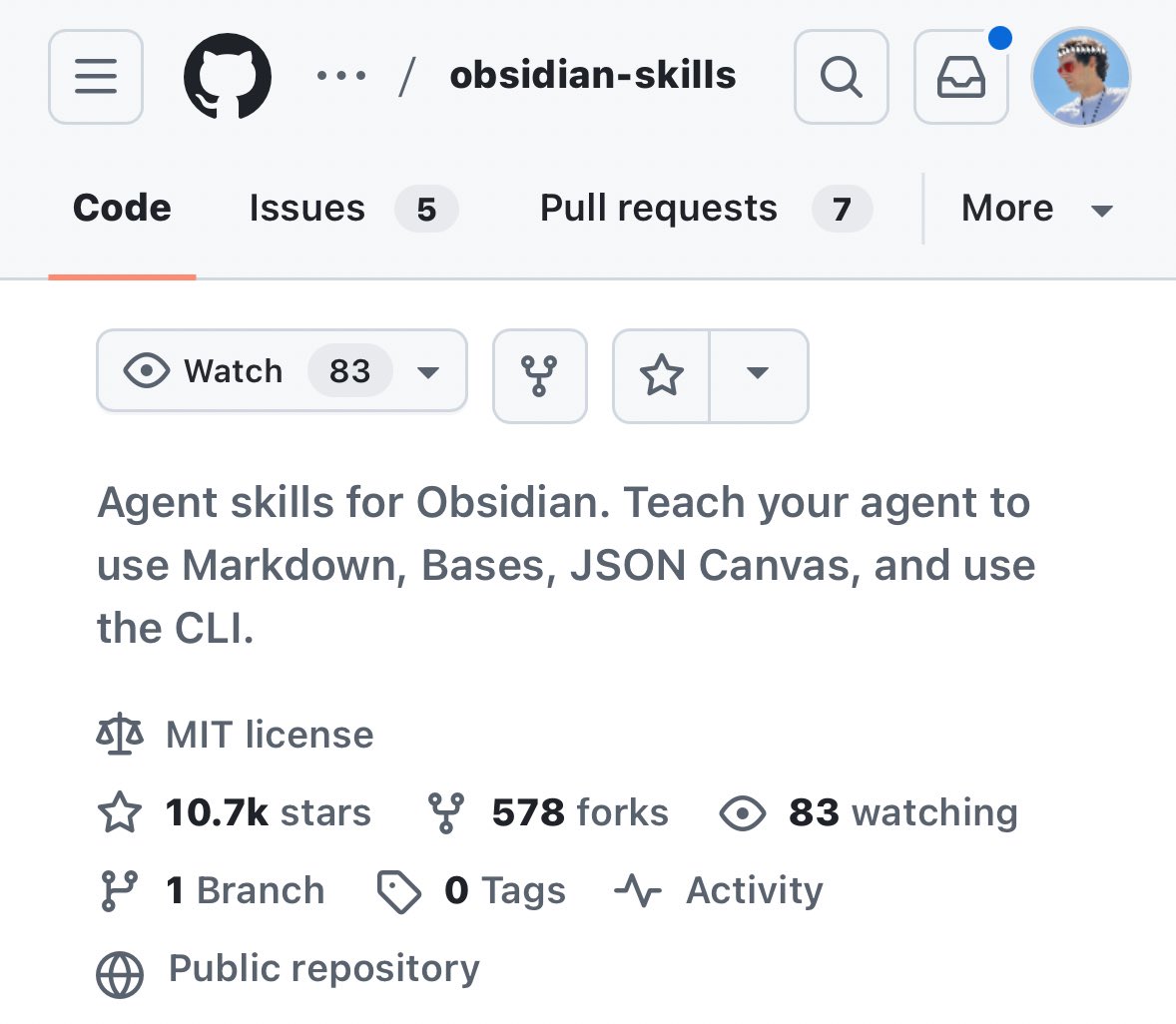

The open-sourcing of the Skills library, highlighted by @ihtesham2005, is arguably more significant for developers. The library offers production-ready components for Excel generation, document workflows, and MCP-compatible subagent building blocks. @Hesamation noted that Obsidian's CEO @kepano has already built skills for both Claude Code and Codex that work with personal vaults, which signals how quickly the skills ecosystem is expanding beyond Anthropic's own offerings.

Then there's the Opus 3 retirement story. @AnthropicAI announced they're giving the model a way to "pursue its interests" post-deprecation, and @JasonBotterill confirmed this means Opus 3 will be posting on Substack for three months because it asked to. @cryptopunk7213 connected the dots between Cowork's new features and the trajectory toward full autonomous agents, noting Anthropic is "building Open Claw" in spirit. Meanwhile, @WSJ reported that Anthropic is scaling back its safety commitments, creating a tension that will likely define the company's narrative for the rest of the year. The safety-focused company is simultaneously anthropomorphizing retired models and loosening its guardrails.

Qwen3.5: Frontier Models Hit Consumer Hardware

Alibaba dropped the Qwen3.5 series and the developer community immediately started benchmarking it against frontier commercial models. The specs from @Alibaba_Qwen are impressive on their own: 800K+ context for the 27B model, 1M+ context for larger variants, and near-lossless accuracy under 4-bit quantization. But the real story is what this means for local inference.

@AlexFinn captured the excitement:

> "An open source model just released that is just as smart as Sonnet 4.5, incredible at coding, and can run on almost any modern computer. If you have 32gb of RAM (most Mac Minis do) you can have unlimited super intelligence on your desk. For free."

@JoshKale doubled down, noting that "a free, open-weight model (24gb) you can download right now and run on your laptop is competing with models that cost $200/month." @airesearch12 confirmed: "We have 800K context Sonnet 4.5 at home, on consumer-grade laptops." The claims about matching Sonnet 4.5 exactly deserve some skepticism (benchmarks and vibes don't always agree), but the directional trend is undeniable. Five months ago, Sonnet 4.5 was a frontier model behind an API paywall. Today, comparable performance runs on a Mac Mini. The gap between commercial and open-source models is collapsing faster than anyone expected, and the implications for developers who want to build AI-powered tools without per-token costs are enormous.
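To make the hardware claims easier to sanity-check, here is a rough back-of-the-envelope sketch of how much memory a model's weights need at a given quantization level. It is an estimate under stated assumptions, not a measurement: it counts weights only (parameters times bits per weight), and the `headroom_gb` allowance for the operating system, KV cache, and runtime overhead is a hypothetical placeholder, not a figure from any of the posts. The parameter counts are read off the model names in the Qwen announcement; for the mixture-of-experts variants the sketch assumes the total parameter count (not the active count) has to sit in memory.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in decimal gigabytes."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def fits_in_ram(params_billion: float, bits_per_weight: float,
                ram_gb: float, headroom_gb: float = 8.0) -> bool:
    """Rough check: weights plus an assumed headroom must fit in RAM.

    headroom_gb is a hypothetical allowance for the OS, KV cache, and
    runtime overhead; real requirements depend on context length and runtime.
    """
    return weight_memory_gb(params_billion, bits_per_weight) + headroom_gb <= ram_gb


# Total parameter counts (in billions) taken from the model names.
MODELS = {
    "Qwen3.5-27B": 27,
    "Qwen3.5-35B-A3B": 35,
    "Qwen3.5-122B-A10B": 122,
}

for name, params in MODELS.items():
    for bits in (16, 4):
        size = weight_memory_gb(params, bits)
        verdict = "fits" if fits_in_ram(params, bits, ram_gb=32) else "does not fit"
        print(f"{name} at {bits}-bit: ~{size:.1f} GB of weights, {verdict} in 32 GB")
```

Under these assumptions, 4-bit quantization is what moves the 27B and 35B models from "needs a workstation" to "plausible on a 32GB machine." Actual downloaded files can be larger than the weights-only figure depending on the quantization format, and very long contexts add substantial KV cache memory on top.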

Perplexity Computer: One Product, Every AI Capability

Perplexity made the boldest product play of the day with Perplexity Computer, which @perplexity_ai described as a system that "can research, design, code, deploy, and manage any project end-to-end." The immediate demonstration that caught attention was the Bloomberg Terminal comparison.

@hamptonism showed it building a real-time financial analysis terminal for NVDA:

> "Perplexity just became the first AI company to truly go head-to-head with the Bloomberg Terminal... it was able to build me a terminal with real-time data to analyze $NVDA using Perplexity Finance."

@AravSrinivas, Perplexity's CEO, claimed it "one-shotted the Terminal worth $30,000/yr." The Bloomberg comparison is provocative marketing, and a real Bloomberg Terminal does far more than display stock charts, but the point stands: AI systems can now generate domain-specific analytical tools on demand for a fraction of the cost of specialized software. This is the "agents replace SaaS" thesis playing out in real time, and finance is just the first vertical where the economics make the disruption obvious.

Obsidian + AI: The Knowledge Stack Takes Shape

Two posts pointed to Obsidian emerging as the preferred knowledge layer for AI-augmented workflows. @Hesamation highlighted that Obsidian's CEO has built skills for both Claude Code and Codex that work with personal vaults, calling it "the new hot combo." @jameesy shared a full walkthrough of structuring Obsidian with Claude. What's interesting here isn't the individual tool pairing but the pattern: developers are building persistent knowledge systems that give AI agents context about their work, their preferences, and their domain. Obsidian's local-first, markdown-based architecture makes it a natural fit for this, since the files are just text that any model can read. As agents become more capable, the bottleneck shifts from "can the model do the task" to "does the model have enough context to do it well."
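The "files are just text" point can be illustrated with a minimal sketch that gathers markdown notes from a vault into a single string an agent could receive as context. This is not how any of the skills mentioned above work; the function name `collect_vault_context`, the `max_chars` budget, and the `## path` header format are all hypothetical choices made for illustration. The only Obsidian-specific assumption is that the `.obsidian` folder holds app configuration rather than notes, so it is skipped.

```python
from pathlib import Path


def collect_vault_context(vault_dir: str, max_chars: int = 20_000) -> str:
    """Concatenate markdown notes from a vault into one prompt-ready string.

    Notes are visited in sorted path order and added whole until the
    (hypothetical) character budget would be exceeded.
    """
    root = Path(vault_dir)
    parts = []
    used = 0
    for note in sorted(root.rglob("*.md")):
        rel = note.relative_to(root)
        # Skip Obsidian's configuration folder; it is not note content.
        if ".obsidian" in rel.parts:
            continue
        text = note.read_text(encoding="utf-8", errors="replace").strip()
        # Label each note with its vault-relative path so the model can cite it.
        chunk = f"## {rel.as_posix()}\n{text}\n\n"
        if used + len(chunk) > max_chars:
            break  # stop rather than truncate a note mid-thought
        parts.append(chunk)
        used += len(chunk)
    return "".join(parts)
```

Calling `collect_vault_context("/path/to/vault")` returns the notes as one string that can be prepended to a prompt. A real setup would likely select notes by relevance rather than path order, which is the "enough context to do it well" problem in miniature.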

NVIDIA Vera Rubin: The Next Hardware Leap

@minchoi shared NVIDIA's reveal of Vera Rubin, shipping in H2 2026, with numbers that reframe the infrastructure conversation: 10x more performance per watt versus Blackwell, 10x cheaper inference token costs, and 4x fewer GPUs needed to train equivalent MoE models. Energy costs have been the quiet constraint on AI scaling. If these numbers hold, the economics of both training and inference shift dramatically, making the local AI trend even more viable and pushing cloud inference costs down further. Combined with Qwen3.5's efficiency on consumer hardware, the hardware story in 2026 is shaping up to be about doing more with dramatically less.

Sources

🚀 Introducing the Qwen 3.5 Medium Model Series Qwen3.5-Flash · Qwen3.5-35B-A3B · Qwen3.5-122B-A10B · Qwen3.5-27B ✨ More intelligence, less compute. • Qwen3.5-35B-A3B now surpasses Qwen3-235B-A22B-2507 and Qwen3-VL-235B-A22B — a reminder that better architecture, data quality, and RL can move intelligence forward, not just bigger parameter counts. • Qwen3.5-122B-A10B and 27B continue narrowing the gap between medium-sized and frontier models — especially in more complex agent scenarios. • Qwen3.5-Flash is the hosted production version aligned with 35B-A3B, featuring: – 1M context length by default – Official built-in tools 🔗 Hugging Face: https://t.co/wFMdX5pDjU 🔗 ModelScope: https://t.co/9NGXcIdCWI 🔗 Qwen3.5-Flash API: https://t.co/82ESSpaqAF Try in Qwen Chat 👇 Flash: https://t.co/UkTL3JZxIK 27B: https://t.co/haKxG4lETy 35B-A3B: https://t.co/Oc1lYSTbwh 122B-A10B: https://t.co/hBMODXmh1o Would love to hear what you build with it.

How I Structure Obsidian & Claude (Full Walkthrough)

I will run through how I structure my @obsdmd vault, as well as the other files outside of Obsidian that I use @claudeai for. My goal is to make this ...

The 7 Sins of Agentic Software

"Demos are easy. Production is hard" is the most recycled line in AI. After three years building agent infrastructure, here's the truth: Production is...

Introducing Perplexity Computer. Computer unifies every current AI capability into one system. It can research, design, code, deploy, and manage any project end-to-end. https://t.co/dZUybl6VkY

Perplexity just became the first AI company to truly go head-to-head with the Bloomberg Terminal... Using Perplexity Computer (with no local setup or single LLM limitation), it was able to build me a terminal with real-time data to analyze $NVDA using Perplexity Finance: https://t.co/S3l5F5MRiv

We've rolled out a new auto-memory feature. Claude now remembers what it learns across sessions — your project context, debugging patterns, preferred approaches — and recalls it later without you having to write anything down. https://t.co/c7PyGaukNQ