Claude Code Ships Built-in Git Worktree Support as Psychology Paper Reframes AI Memory Design

Claude Code's new built-in worktree support dominated the feed today, enabling parallel agent sessions without code conflicts. Meanwhile, a deep analysis of Karpathy's NanoClaw philosophy challenged decades of software configuration patterns, and multiple posts converged on the same uncomfortable truth: the vast majority of the world still hasn't touched AI tools.

Daily Wrap-Up

The biggest practical development today was Anthropic rolling out native git worktree support across all Claude Code surfaces. This is one of those features that sounds incremental but changes how you actually work. If you've ever had a Claude Code session editing files while you needed to kick off another task in the same repo, you know the pain. Worktrees solve it cleanly, and the fact that subagents can now spin up their own isolated worktrees means parallelism just got a lot more practical for large migrations and multi-file refactors. @bcherny from Anthropic walked through the full feature set in a five-post thread, and @mattpocockuk was already calling it his new default workflow.

The more intellectually interesting thread of the day came from @rryssf_, who wrote what amounts to a research paper on why AI memory systems are fundamentally broken. The core argument: we've been modeling agent memory on databases when we should be modeling it on human identity construction. Drawing from Conway's Self-Memory System, Damasio's somatic markers, and Bruner's narrative psychology, the post makes a compelling case that flat vector stores and conversation summaries miss the hierarchical, emotionally weighted, goal-filtered nature of how humans actually remember. It's the kind of post that makes you stop and reconsider your architecture, which is rare on the timeline.

The most practical takeaway for developers: if you're using Claude Code for anything beyond single-file edits, start every session with claude --worktree. The isolation guarantees mean you can run parallel sessions on the same repo without merge conflicts, and the new subagent worktree support means Claude itself can parallelize its own work across branches. This is a genuine workflow multiplier, not a nice-to-have.

Quick Hits

- @dani_avila7 shared a Ghostty terminal tip: setting unfocused-split-opacity = 0.85 in your config dims inactive panes so you always know where your cursor is. Small quality-of-life win for multi-pane workflows.

- @jliemandt claims 43% of Alpha School students prefer school over vacation, crediting AI-driven mastery learning. Take with appropriate salt, but the "AI tutoring" thread keeps producing surprising data points.

- @p_misirov flagged a Steam game called "Data Center" that lets you build and manage your own data center. Called it "lowkey genius" as an edutainment model that hyperscalers should learn from.

- @gdb showed off a Codex API accessible via codex app-server. Brief post, no details, but notable that OpenAI is exposing local API surfaces for their agent tooling.

- @mnedoszytko received an award at Anthropic's Claude Code 1st Birthday hackathon at SHACK15 in SF. The celebration of builders shipping with AI tools continues.

- @yacineMTB posted a cryptic "That's it. OpenAI won. They did the thing." No context provided. The timeline was left to speculate.

- @RhysSullivan shared the universal experience of Claude estimating "1-2 weeks" for a task that takes considerably less (or more, depending on your luck).

- @LinusEkenstam posted about what happens "when you take a creative human that learns to whisper commands to AI," sharing visual work that demonstrates the creative-technical intersection.

- @thekitze urged people not to quit OpenClaw yet, which is interesting context given the security concerns raised in other posts today about the platform's 400K+ line codebase.

- @vasuman shared a collection of articles for "founders, builders, AI enthusiasts, C-Suite executives." Link roundup with no specific thesis.

Claude Code Worktree Support and the Skills Ecosystem

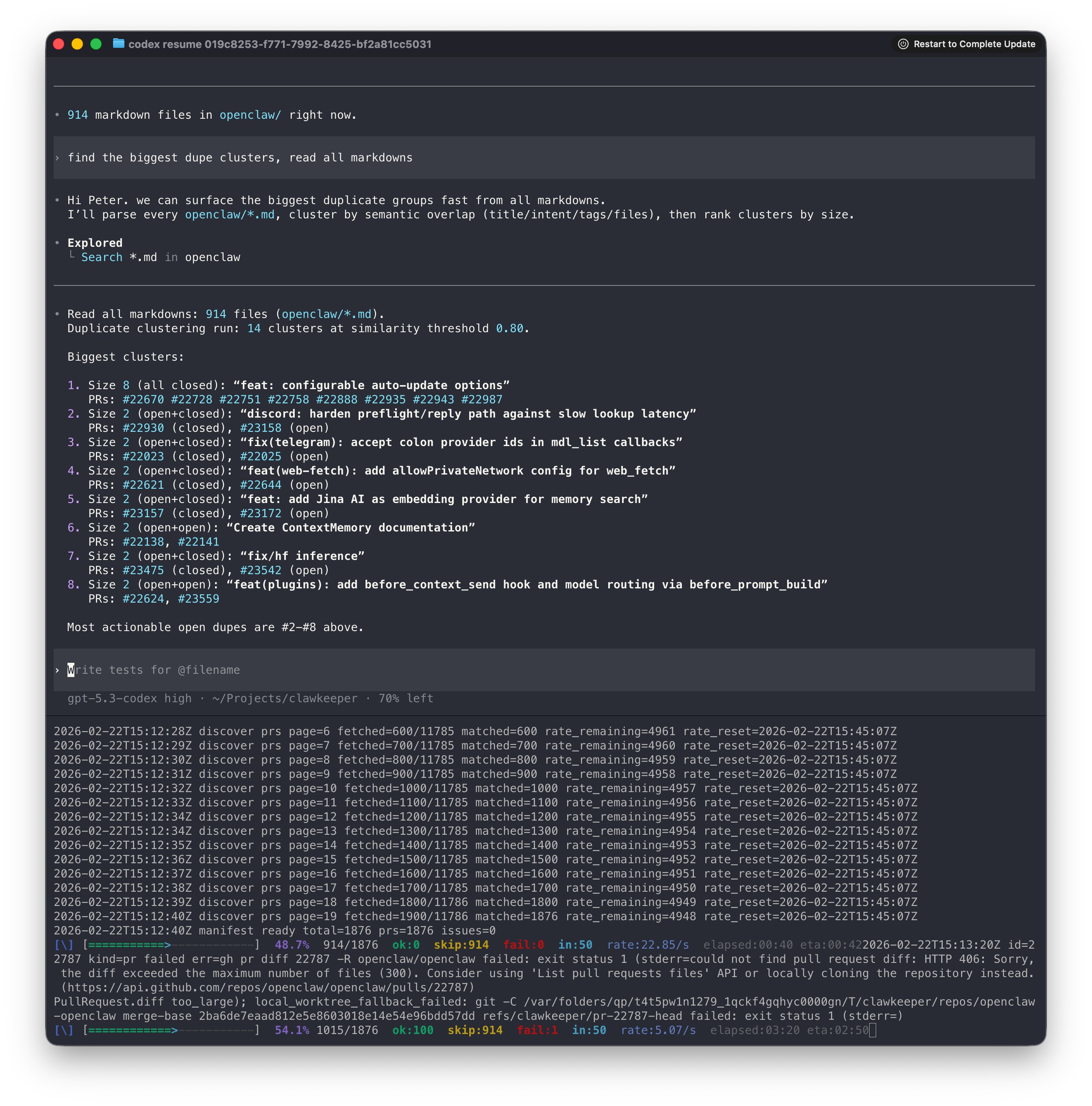

Anthropic shipped one of the most requested Claude Code features today: native git worktree integration across CLI, Desktop, IDE extensions, and mobile. The feature addresses a fundamental friction point in AI-assisted development. When an AI agent is editing your files, your working directory is effectively locked. Worktrees give each session its own isolated copy of the repo, making true parallelism possible without the risk of clobbering edits.

@bcherny laid out the full feature set in a detailed thread. The CLI usage is straightforward: claude --worktree starts a session in its own worktree, and you can name them or let Claude auto-name. But the more powerful angle is subagent isolation: "Subagents can also use worktree isolation to do more work in parallel. This is especially powerful for large batched changes and code migrations." Custom agents can also declare isolation: worktree in their frontmatter to always run isolated.

@mattpocockuk captured the practical sentiment: "claude --worktree is so good I'm making it my new default." His follow-up on parallelizing subagents gets at why this matters beyond convenience: "Especially parallelizing subagents makes spawning a bunch of agents to do a lot of work a lot simpler. Especially when merge conflicts are so cheap." That last point is key. In a worktree model, each agent produces clean commits on separate branches, and Git's merge machinery handles the integration. The conflict surface is usually small.
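To make the isolation and merge story concrete, here is a minimal sketch of the underlying Git mechanism, written with plain git worktree commands rather than Claude Code itself. The branch names (task/ui, task/tests), directory names, and the demo_parallel_worktrees helper are all hypothetical; how Claude Code actually names its worktrees and branches is not covered in the thread.

```python
import subprocess
import tempfile
from pathlib import Path


def git(cwd: Path, *args: str) -> str:
    """Run a git command in cwd with a throwaway identity and return stdout."""
    cmd = [
        "git",
        "-c", "user.name=demo",
        "-c", "user.email=demo@example.com",
        "-c", "commit.gpgsign=false",
        *args,
    ]
    result = subprocess.run(cmd, cwd=cwd, check=True, capture_output=True, text=True)
    return result.stdout.strip()


def demo_parallel_worktrees(base: Path) -> list[str]:
    """Create a repo, do isolated work in two worktrees, then merge both."""
    repo = base / "repo"
    repo.mkdir()
    git(repo, "init")
    (repo / "README.md").write_text("hello\n")
    git(repo, "add", "README.md")
    git(repo, "commit", "-m", "initial commit")

    # One worktree per task, each on its own branch (names are hypothetical).
    # Each has a separate working directory, so edits never touch the other.
    for task in ("ui", "tests"):
        tree = base / f"wt-{task}"
        git(repo, "worktree", "add", "-b", f"task/{task}", str(tree))
        (tree / f"{task}.txt").write_text(f"work from the {task} session\n")
        git(tree, "add", f"{task}.txt")
        git(tree, "commit", "-m", f"add {task} work")

    # Integrate: the branches touched different files, so both merges are clean.
    for task in ("ui", "tests"):
        git(repo, "merge", "--no-edit", f"task/{task}")

    return sorted(p.name for p in repo.iterdir() if p.is_file())


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        print(demo_parallel_worktrees(Path(tmp)))
```

Because each session commits on its own branch from its own directory, the only point of contact is the final merge, which is where any conflict would surface.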

Separately, @LexnLin shipped an open-source Claude Code skill called Taste-Skill, aimed at fixing the "AI slop" problem in frontend generation. The insight is sound: "Without strict rules, they statistically default to the most likely patterns, that's where AI slop comes from. To get clean and production-grade UI, you need to override these biases with some engineering constraints." This is the skills ecosystem maturing. Rather than fighting the model's defaults in every prompt, you encode your aesthetic constraints once and let them apply consistently. Combined with worktrees, you're looking at a workflow where multiple specialized agents can ship UI, backend, and tests in parallel, each with their own quality guardrails.

We're Still Early: AI Adoption by the Numbers

A cluster of posts today all pointed at the same uncomfortable reality for anyone living inside the AI bubble: almost nobody else is here yet. The stats, the anecdotes, and even the jokes all converge on one conclusion. We are very, very early.

@AoverK laid out the numbers bluntly: "Paying $20/mo for AI puts you in the 0.3% globally. Using AI for tasks like coding puts you in the 0.04% globally with only 2-5 million doing this." @damianplayer made the same point from a different angle: "your timeline convinced you AI is in a bubble. talk to a boomer above the age 35 for 5 minutes. most people don't even know what claude is." He doubled down in a separate post: "6.5 billion people have NEVER used AI. think about that."
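A quick sanity check on how those quoted figures relate to each other, as a sketch rather than a verification of the underlying data. The world population of roughly 8 billion is an assumption introduced here; every other number comes from the quotes above.

```python
# Assumption (not from the posts): world population of roughly 8 billion.
WORLD_POPULATION = 8_000_000_000


def percent_of_world(people: int) -> float:
    """Return the share of the assumed world population, in percent."""
    return 100 * people / WORLD_POPULATION


# "2-5 million" people coding with AI, expressed as a global percentage.
coding_low = percent_of_world(2_000_000)
coding_high = percent_of_world(5_000_000)

# "0.3% globally" paying $20/mo, converted to a head count (integer math).
paying_users = WORLD_POPULATION * 3 // 1000

# "6.5 billion people have NEVER used AI" implies this many have.
ever_used = WORLD_POPULATION - 6_500_000_000

if __name__ == "__main__":
    print(f"coding share: {coding_low}% to {coding_high}%")
    print(f"paying users implied by 0.3%: {paying_users:,}")
    print(f"people who have used AI: {ever_used:,} ({percent_of_world(ever_used)}%)")
```

Under that population assumption, the quoted 0.04% falls inside the range implied by 2-5 million people, so the two halves of the claim are at least consistent with each other.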

Meanwhile, Sam Altman provided the quote of the day, picked up by both @TheChiefNerd and @MorningBrew: "People talk about how much energy it takes to train an AI model... But it also takes a lot of energy to train a human. It takes like 20 years of life and all of the food you eat during that time before you get smart." @5eniorDeveloper responded with a Matrix meme suggesting Altman's next move is using humans as literal fuel for AI, which is the kind of dark humor that writes itself.

The tension here is real. Inside the bubble, worktree support and custom skills feel like table stakes. Outside it, most people haven't had their first conversation with a chatbot. That gap represents both a massive adoption runway and a reminder that the current discourse is being shaped by an extremely small and non-representative group.

Maximally Forkable Code and the Death of Configuration

@aakashgupta wrote the most thought-provoking post of the day, unpacking a pattern that Karpathy mentioned in passing about NanoClaw's approach to customization. The thesis: when AI can cheaply modify source code, the entire abstraction layer of configuration files, plugin registries, and feature flags becomes dead weight.

The contrast he draws is sharp. OpenClaw: "400,000+ lines of vibe-coded TypeScript trying to support every messaging platform, every LLM provider, every integration simultaneously. The result is a codebase nobody can audit." NanoClaw: "~500 lines of TypeScript. One messaging platform. One LLM. One database. Want something different? The LLM rewrites the code for your fork. Every user ends up with a codebase small enough to audit in eight minutes." The security implications alone are significant, with CrowdStrike having published advisories on OpenClaw's sprawling attack surface.
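The difference between the two styles can be sketched in a few lines. This is an illustration of the general pattern only, not code from either project; the provider names and both functions are hypothetical.

```python
from typing import Callable, Dict

# Style 1: configuration-driven. Every supported platform ships in the
# codebase, and a config value selects one at runtime. Each extra entry is
# code that every user carries and has to audit, whether they use it or not.
PROVIDERS: Dict[str, Callable[[str], str]] = {
    "slack": lambda text: f"[slack] {text}",
    "discord": lambda text: f"[discord] {text}",
    "sms": lambda text: f"[sms] {text}",
}


def send_configurable(config: Dict[str, str], text: str) -> str:
    """Dispatch through the registry based on a config key."""
    provider = config.get("provider", "slack")
    if provider not in PROVIDERS:
        raise ValueError(f"unknown provider: {provider}")
    return PROVIDERS[provider](text)


# Style 2: forkable. One platform, hard-coded. A user who wants a different
# platform asks an LLM to rewrite this function in their own fork.
def send(text: str) -> str:
    """Send via the single platform this fork supports."""
    return f"[slack] {text}"


if __name__ == "__main__":
    print(send_configurable({"provider": "discord"}, "hi"))
    print(send("hi"))
```

The second style has no registry, no config parsing, and no error path for unknown providers, which is the sense in which the abstraction layer becomes dead weight once rewriting source is cheap.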

The "maximally forkable repo" pattern resonates beyond personal agents. @inazarova demonstrated a related principle with Rails and Claude Code, building Evil Martians' entire planning, HR, and financial system in two weeks: "Twenty years of conventions. LLMs love opinions. The more opinionated your framework, the better they perform. Convention over configuration just became a compound advantage nobody saw coming." Meanwhile, @victorianoi offered the poetic counterpoint: "In 20 years, vibe coders will look at the Linux kernel repo the way we look at the pyramids." There's something real in that observation. The era of hand-crafted, deeply understood codebases may be giving way to AI-generated, purpose-built, disposable ones. Whether that's progress depends on what you value.

Psychology Already Solved the AI Memory Problem

@rryssf_ posted what might be the most substantive single post of the week: a detailed argument that AI agent memory architectures are fundamentally misconceived, and that cognitive psychology has had the answers for decades. The post draws from Conway's Self-Memory System, Damasio's Somatic Marker Hypothesis, and research on autobiographical memory to identify five specific gaps in current approaches.

The core framework is compelling: "Current architectures all fail for the same reason: they treat memory as storage, not identity construction." The five missing principles are hierarchical temporal organization (memories aren't flat), goal-relevant filtering (retrieval should be gated by current context), emotional weighting (significant interactions should be encoded more deeply), narrative coherence (memories need a story structure), and co-emergent self-modeling (the agent's sense of self should evolve with its memory).

What makes this more than armchair theorizing is that the post maps each psychological principle to existing engineering primitives. Hierarchical memory maps to graph databases with temporal clustering. Emotional weighting maps to sentiment-scored metadata. Goal-relevant filtering maps to attention mechanisms conditioned on task state. The argument isn't that we need new technology. It's that we need a new conceptual framework for assembling technology we already have. For anyone building agent memory systems, this post is worth reading in full and probably bookmarking.
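As a rough illustration of how three of those principles could combine at retrieval time, here is a minimal in-memory sketch. It is not the post's design: a plain list stands in for the graph database, a hand-set salience number stands in for sentiment scoring, and a set-membership check stands in for task-conditioned attention. The Memory fields, the retrieve function, and the 0.5 period bonus are all assumptions made for the example. Narrative coherence and self-modeling are not covered.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class Memory:
    text: str
    period: str        # coarse time bucket (hierarchical temporal organization)
    goals: Set[str]    # goals this memory is relevant to (goal filtering)
    salience: float    # 0..1 significance score (emotional weighting)


def retrieve(
    memories: List[Memory],
    current_goal: str,
    current_period: str,
    k: int = 2,
) -> List[Memory]:
    """Return the top-k memories for the current goal, ranked by significance."""
    # Goal-relevant filtering: drop anything unrelated to the active goal.
    candidates = [m for m in memories if current_goal in m.goals]

    def score(m: Memory) -> float:
        # Memories from the current period get a fixed bonus (assumed value).
        period_bonus = 0.5 if m.period == current_period else 0.0
        return m.salience + period_bonus

    return sorted(candidates, key=score, reverse=True)[:k]


if __name__ == "__main__":
    store = [
        Memory("User was frustrated by flaky CI", "migration", {"fix-ci"}, 0.9),
        Memory("User prefers tabs", "onboarding", {"style"}, 0.2),
        Memory("CI runs on a hosted runner", "onboarding", {"fix-ci"}, 0.3),
        Memory("Deploy script lives in tools/", "migration", {"fix-ci", "deploy"}, 0.3),
    ]
    for m in retrieve(store, current_goal="fix-ci", current_period="migration"):
        print(m.text)
```

The point of the sketch is that a flat similarity search would treat all four memories as equal candidates, while this version never considers the style preference and ranks the emotionally significant, same-period memory first.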

Sources

xurl 1.0.3 (our X API CLI tool) is now available with huge updates! • Added agent-friendly shortcuts for endpoints • Better app & user management Most importantly, we added a https://t.co/782afvBw2c and merged it to OpenClaw (thanks, @steipete) npm i -g @xdevplatform/xurl https://t.co/uWOkNE6iIx

Our latest Claude Code hackathon is officially a wrap. 500 builders spent a week exploring what they could do with Opus 4.6 and Claude Code. Meet the winners:

If you're using SQLite with Cloudflare Durable Objects, I would love to hear why you're using that over D1. What workloads benefit from this approach the most?

First time seeing a representative of an AI Lab confirm that models are trained on their harness. Doesn't mean it hasn't been mentioned. But first seeing it for me. Anthropic has been ahead with Claude Code because Claude Code came out of the gate first. But OpenAI is catching up *FAST*. My intuition is that OpenAI has the most rapid RL pipeline capability, which is why you saw such a rapid succession of: > 5.1-Codex --> 11/12/25 > 5.2-Codex --> 12/18/25 > 5.3-Codex --> 2/5/26 If OpenAI hasn't already surpassed Anthropic and Opus 4.6 with GPT-5.3-Codex... They certainly will with the next iteration.