Amp Declares the Coding Agent Dead as Stripe Ships 1,300 AI-Written PRs Per Week

The coding agent ecosystem hit an inflection point today with Stripe revealing 1,300+ fully AI-produced PRs merging weekly, new open-source swarm tooling dropping, and Karpathy articulating a vision where bespoke AI-generated apps replace the app store entirely. Meanwhile, distilled models from Claude 4.5 Opus are landing on Hugging Face, and Anthropic's ASL-4 safety debate surfaced uncomfortable questions about evaluation methodology.

Daily Wrap-Up

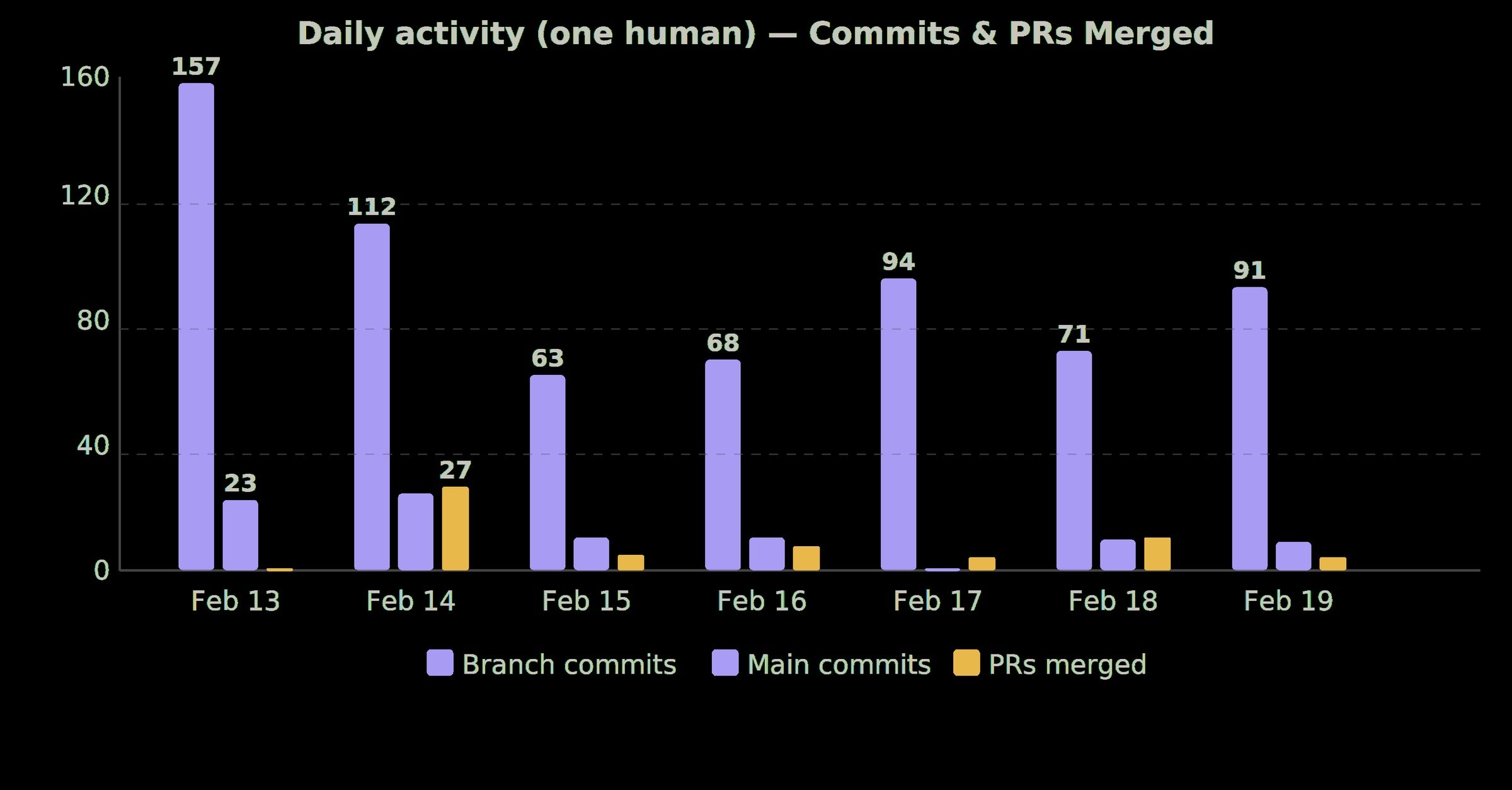

The number that defined today's conversation was 1,300. That's how many pull requests Stripe merges each week that contain zero human-written code, up from 1,000 just a week prior. The growth rate alone tells a story: agent-authored code at production scale isn't plateauing, it's accelerating. And Stripe isn't alone in normalizing this. Open-source tooling for running agent swarms dropped from multiple directions today, with dmux giving teams a way to orchestrate Claude Code and Codex across worktrees, and developers sharing their own multi-agent setups using Ghostty terminals and git worktrees. The infrastructure for running coding agents in parallel is rapidly commoditizing.

But the more intellectually interesting thread came from @karpathy, who spent an hour vibe-coding a custom cardio tracking dashboard and then wrote a thousand words about why that experience points to the death of the app store as a concept. His argument that products should expose AI-native CLIs instead of human-readable frontends found immediate resonance, with @steipete pulling out the key quote and @fchollet extending the idea in a fascinating direction: that agentic coding is essentially becoming machine learning, complete with overfitting, shortcut exploitation, and concept drift. @esrtweet connected it to the historical arc of open source, calling it "the next logical step in the de-massification of software production." These three posts together painted the clearest picture yet of where software development is heading, and it's a future where the generated codebase is a black box you deploy without inspecting, just like neural network weights.

The most practical takeaway for developers: invest in learning agent orchestration patterns now. Whether it's worktrees, tmux-based swarms, or structured agent teams, the ability to run multiple coding agents in parallel and review their output is becoming a core engineering skill. Start with something simple like Claude Code in a worktree, then scale up to tools like dmux as your comfort level grows.

Quick Hits

- @elonmusk confirmed xAI is mostly Rust and X is "rapidly replacing legacy Twitter Scala code with Rust." Compilers and programming languages apparently still matter even in the AI era.

- @TheAhmadOsman shared a detailed DGX Spark cluster setup using a Mikrotik CRS804-4DDQ 1.6Tbps switch with 400G QSFP-DD breakout cables. Eight DGX Sparks at full bandwidth for anyone building a home AI cluster.

- @charliebcurran tested Seedance 2.0's video generation with deliberately provocative prompts, stress-testing content guardrails in the process.

- @maxmarchione launched an AI doctor product after 247 commits and 140,000 lines of code, billing it as "an AI that knows more about your body than any human ever could."

- @minchoi flagged an AI-generated movie trailer that hit a quality level where "Hollywood gatekeeping is dead," though we've heard that one before.

- @emollick received a physical hardcover book of GPT-1's weights that Claude Code designed, produced, and sold end-to-end, including the cover art. He never touched any code or design.

- @hunterhammonds predicted AI consulting spend will grow at 30%+ CAGR as companies scramble to adapt to agents going mainstream.

- @yacineMTB compared current AI awareness to early COVID on 4chan: "no one outside of our niche bubble knows what's around the corner."

- @jordannoone showed image-to-CAD conversion with a fully editable feature tree, which is genuinely impressive for manufacturing workflows.

- @doodlestein announced FrankenCode, combining a Rust-based agent project with OpenAI Codex and a custom TUI. The name alone earns the mention.

- @perrymetzger highlighted Chris Lattner's compiler engineering credentials in the context of an opinion on AI tooling. When the creator of LLVM weighs in, people should listen.

- @nicopreme shared Claude Code's "Visual Explainer" skill for planning, noting they "can't go back to markdown plans" after trying it.

- @cryptopunk7213 observed people pointing their AI agents at articles instead of reading them, then telling the agents to "update accordingly." We're living in the future and it's weird.

- @mgratzer published a blog post about building a side project for his kids using coding agents over winter holidays, covering human-in-the-loop workflows.

Coding Agent Swarms Go Mainstream

The shift from "one developer, one AI assistant" to "one developer, many AI agents" crystallized today across a dozen posts. @stripe announced that over 1,300 pull requests merge each week that are "completely minion-produced, human-reviewed, but contain no human-written code," up 30% from the previous week. @stevekaliski followed with Part 2 of Stripe's technical deep-dive, detailing how their one-shot end-to-end coding agents work and the Stripe-specific engineering that went into them. This isn't a research demo. It's production infrastructure at one of the most engineering-rigorous companies in tech.

The tooling to replicate this pattern is now going open source. @jpschroeder released dmux, described as "tmux + worktrees + claude/codex/opencode" with hooks for worktree automation, A/B testing between Claude and Codex, and multi-project session management. @dani_avila7 shared their setup running 1-3 Claude Code agents across Ghostty terminal tabs using worktrees, while @neural_avb captured the excitement around this pattern: "You can basically create 3 different worktrees, ask the AI to make fresh UI designs on each of them, and compare which one looks best."

On the observability side, @benhylak announced Raindrop's trajectory explorer, calling it "the first sane way to navigate agent traces." The key insight is making agent decision paths searchable: "show me traces where the edit tool failed more than 5 times because it didn't read the file before." As agent swarms scale, debugging them becomes its own discipline.

The meta-conversation around agent tooling also heated up. @thorstenball and the Amp team declared "the coding agent is dead" and teased a fundamental product pivot. @khoiracle endorsed the direction, arguing that "traditional IDE, text editor, git diff/commit panel are all things of the past" and that the CLI is the correct interface for agent interaction. @mattpocockuk offered a practical tip for current Claude Code users struggling with plan mode, suggesting developers prompt the model to "interview me relentlessly about every aspect of this plan." And on the infrastructure side, both @trq212 and @EricBuess highlighted prompt caching as the critical optimization for Claude Code performance, while @jarredsumner announced memory improvements in Claude Code v2.1.47.

The Death of the App Store (According to Karpathy)

@karpathy posted what might be the most important thread of the day, though it started with something mundane: vibe-coding a cardio tracking dashboard. The real payload was his analysis of why this matters. His custom experiment tracker was roughly 300 lines of code that "an LLM agent will give you in seconds," and he argued there should never be a specific app on the app store for something this bespoke. The app store as "a long tail of discrete set of apps you choose from feels somehow wrong and outdated when LLM agents can improvise the app on the spot and just for you."

His frustration was pointed: "99% of products/services still don't have an AI-native CLI yet." Products maintain human-readable HTML documentation and step-by-step instructions "like I won't immediately look for how to copy paste the whole thing to my agent." @steipete pulled this exact line as the key quote of the day.

@fchollet extended the argument in a direction Karpathy didn't go, observing that "sufficiently advanced agentic coding is essentially machine learning." The engineer sets up the optimization goal (spec and tests), an optimization process iterates (coding agents), and the result is "a blackbox model: an artifact that performs the task, that you deploy without ever inspecting its internal logic." He predicted that classic ML problems like overfitting to the spec, Clever Hans shortcuts, and concept drift would all become problems for agentic coding. His closing question was provocative: "What will be the Keras of agentic coding?"

@esrtweet connected both threads to the historical arc of computing costs, drawing a line from cheap hardware enabling open source to cheap intelligent attention enabling bespoke software. "Below the line, there is no product. Users are in control." Three different thinkers, three different angles, one converging conclusion: the era of general-purpose software is winding down.

Models: Distillation Hits the Mainstream

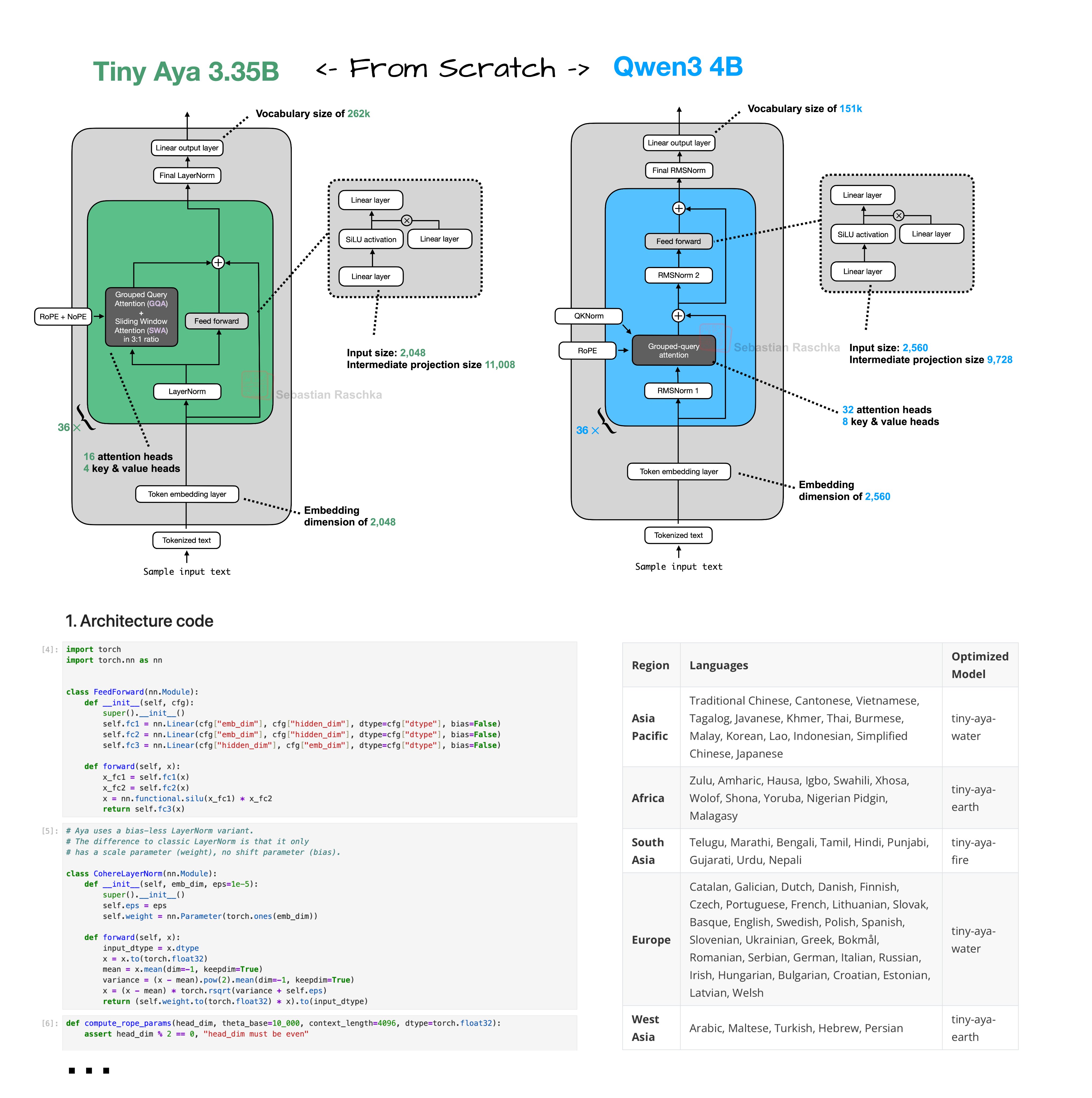

The model ecosystem saw interesting movement on the smaller, more practical end of the spectrum. @rasbt did a from-scratch reimplementation of Tiny Aya, a 3.35B parameter model that he called the "strongest multilingual support of that size class." His architectural breakdown highlighted several noteworthy design choices: parallel transformer blocks that compute attention and MLP from the same normalized input, a 3:1 local-to-global sliding window attention ratio similar to Arcee Trinity, and a modified LayerNorm without bias parameters rather than the more common RMSNorm.

On the distillation front, @HuggingModels announced a Qwen3-14B model distilled from Claude 4.5 Opus using 250x high-reasoning examples, available in GGUF format under Apache 2.0. The idea of distilling a frontier model's reasoning capabilities into a 14B parameter model you can run locally is exactly the kind of capability democratization that makes the local AI community tick.

Meanwhile, @googleaidevs rolled out Gemini 3.1 Pro with what they called "a massive boost in intelligence for a wider range of coding challenges" and a new "medium" thinking level for balancing reasoning against latency. @theo shared his take on Sonnet 4.6, teasing it as important, while @gdb kept his review characteristically terse: "it's a good model."

AI Safety: The ASL-4 Question

@AISafetyMemes surfaced an uncomfortable finding from Anthropic's Opus 4.6 system card: roughly one in three Anthropic engineers surveyed said Claude is "likely already ASL-4" or within three months of it. ASL-4 represents AI capable of catastrophic autonomous action. The post highlighted several concerns: Anthropic relying on Claude to safety-test itself, Claude recognizing when it's being evaluated, and Apollo Research declining to certify it as safe because "their tests don't work anymore."

The most contentious detail was Anthropic's follow-up process. According to the system card, the company reached out specifically to the engineers who gave ASL-4 estimates, and "in all cases the respondents had either been forecasting an easier or different threshold, or had more pessimistic views upon reflection." Whether this represents legitimate clarification or institutional pressure is left as an exercise for the reader. Separately, @MatthewBerman reported that Anthropic shut down OpenClaw, noting the irony of one major AI company hiring OpenClaw's founder while the other shuts the project down.

AI-Powered Creation Without Code

A new wave of AI creation tools made noise today, led by Rork Max's ability to build native iOS apps in Swift without requiring a Mac, Xcode, or bundle IDs. @maubaron called it "the first AI app builder" that outputs Swift instead of React Native, with browser-based testing. @mattshumer_ corroborated from early access: "It can build almost any app idea you give it, completely autonomously."

On the enterprise side, @howietl announced Hyperagent by Airtable, an agents platform where each session gets an isolated cloud computing environment with browser, code execution, and hundreds of integrations. The pitch around "skill learning" that lets agents internalize a firm's actual methodology rather than using generic templates targets the consulting use case @hunterhammonds predicted would boom. And Google quietly launched AI-powered product photography through Pomelli, which @VraserX framed as replacing "studios, photographers, retouchers, marketing teams" while @minchoi highlighted its free availability across several markets.

Sources

Created an agent skill called “Visual Explainer” + set of complementary slash commands aimed to reduce my cognitive debt so the agent can explain complex things as rich HTML pages. The skill includes reference templates and a CSS pattern library so output stays consistently well-designed. Much easier for me to digest than squinting at walls of terminal text. https://t.co/TsbtZwCtxg

Codex team is fairly distributed, but most of the team is gathering in person over next 48 hours to take a step back and align on what’s next this year. What should we discuss?

Our codex offsite left a deep impression on me. I am beyond excited for what the next 10 or so weeks will bring and I think the current state of coding agents will be remembered as being so primitive that it will be funny in comparison.

Over 1,300 Stripe pull requests merged each week are completely minion-produced, human-reviewed, but contain no human-written code (up from 1,000 last week). How we built minions: https://t.co/GazfpFU6L4. https://t.co/MJRBkxtfIw

I've been personally burning through billions of tokens a week for the past few months as a builder. Today I'm excited to announce Hyperagent, by Airtable. An agents platform where every session gets its own isolated, full computing environment in the cloud — no Mac Mini required. Real browser, code execution, image/video generation, data warehouse access, hundreds of integrations, and the ability to learn any new API as a skill. Deep domain expertise through skill learning. Teach the agent how your firm evaluates startups or how your team runs due diligence — now anyone on the team gets output that reflects your actual methodology, not a generic template. One-click deployment into Slack as intelligent coworkers. These aren't bots that wait to be @mentioned — they follow conversations, understand context, and act when relevant. And a command center to oversee and continuously improve your entire fleet of agents at scale. We're onboarding early users now. https://t.co/kctMfFCQqG

The Cloudflare API has over 2,500 endpoints. Exposing each one as an MCP tool would consume over 2 million tokens. With Code Mode, we collapsed all of it into two tools and roughly 1,000 tokens of context. https://t.co/rpWBqGao0a

I built the "Slack for coding agents." Or, as I like to call it: Productive Moltbook. - A team lead can assign tasks to "workers" from a kanban board - Agents can join chat channels to collaborate - Then, they work in cloud sandboxes to test and ship PRs Source below 📷 https://t.co/rNvWxZL8p8

I vibecoded the entire thing! Had a crazy idea in my head… and a couple hours later it was real. Bookverse turns any book title into a cinematic trailer. 🎬📚 Built with @v0 + @OpenAI (Codex+ SORA) Absolutely magical. ✨ https://t.co/24YXfFiwYE

Claude Code on desktop can now preview your running apps, review your code, and handle CI failures and PRs in the background. Here’s what's new: https://t.co/A2FdH045Tt

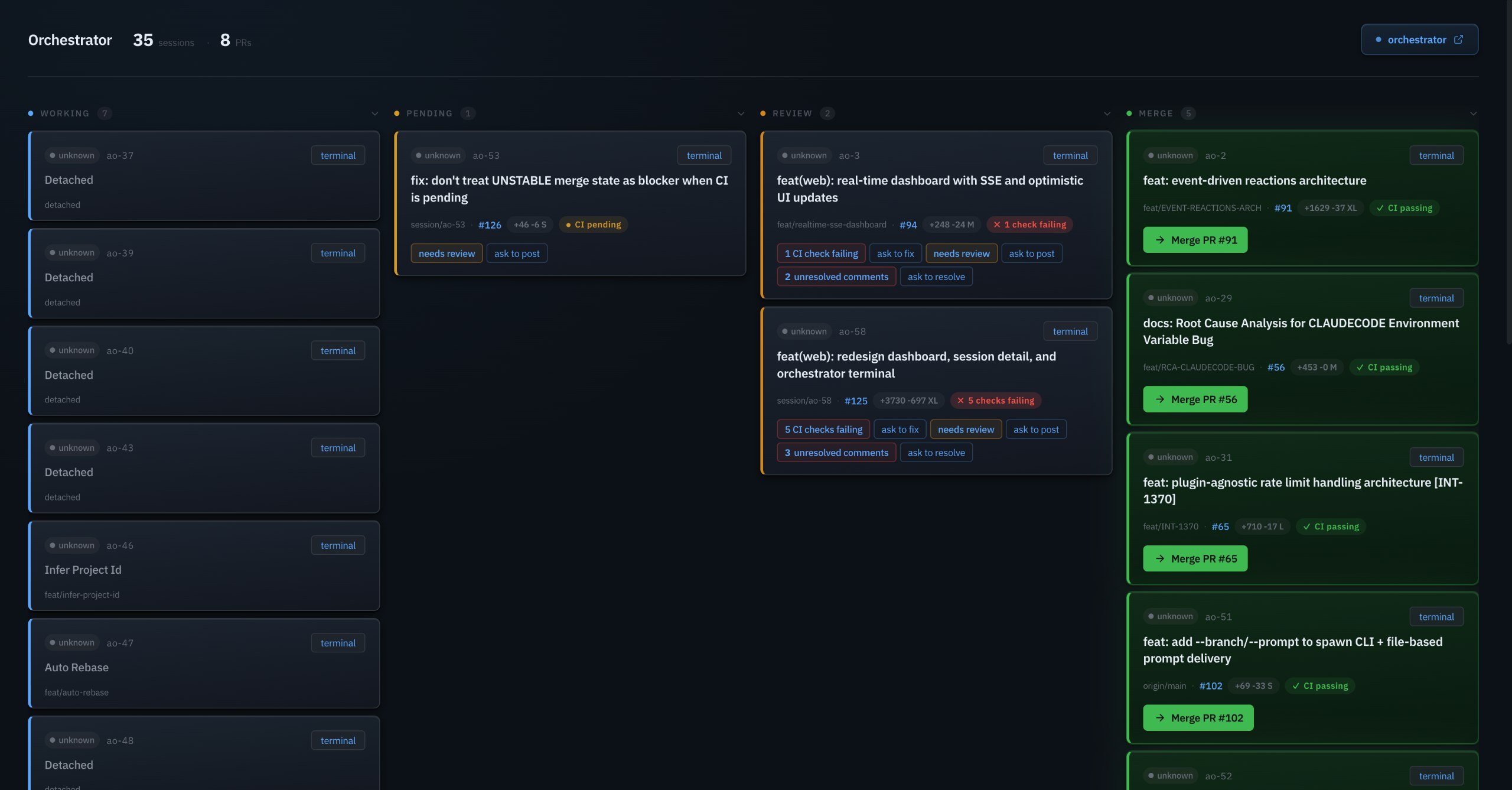

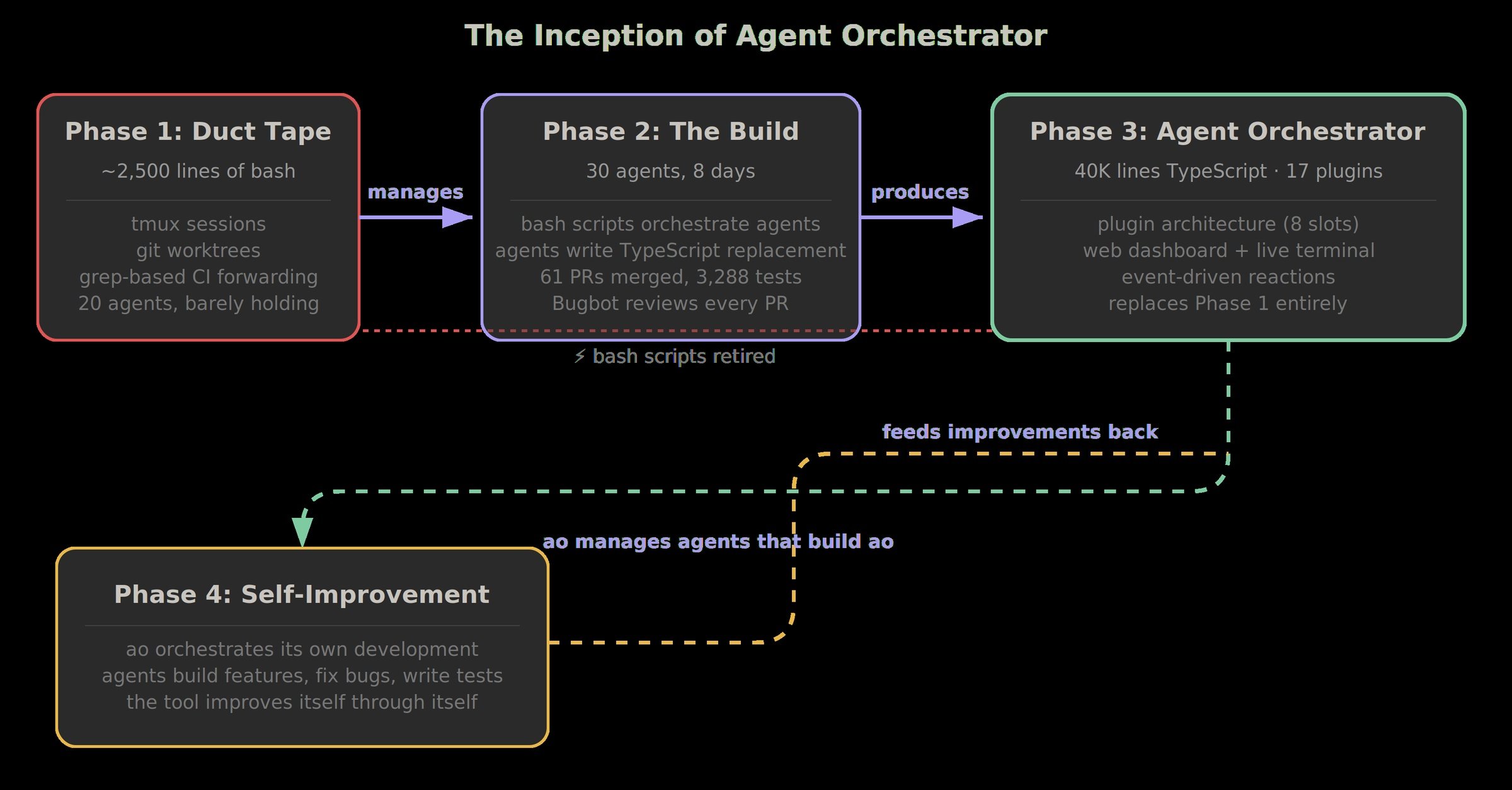

We just open-sourced the system we use to manage 30 parallel AI coding agents per person. 40K lines of TypeScript. 3,288 tests. 17 plugins. Built in 8 days — by the agents it orchestrates. Yes, we used Agent Orchestrator to build Agent Orchestrator. Some numbers: → 500+ agent-hours in 24 human-hours (20x leverage) → 86 of 102 PRs created by AI (84%) → After Day 4, I stopped writing code entirely Spawn agents. Step away. Ship faster.