Sonnet 4.6 Launches Alongside Figma Integration as Qwen Opens 397B-Parameter Multimodal Model

Anthropic's Sonnet 4.6 dropped alongside a Figma-to-Claude Code integration, dominating the feed and sparking a wave of ecosystem content from podcasts to WarCraft sound hooks. Meanwhile, "harness engineering" emerged as the term of the day for agent builders, and the IDE wars heated up with calls to move beyond VS Code entirely.

Daily Wrap-Up

Today belonged to Claude Code. Between the Sonnet 4.6 launch, a Figma integration that had designers and developers equally excited, a Y Combinator podcast deep dive with its creator, and a flood of community-built extensions, it felt like an entire product ecosystem leveling up in a single day. The sheer volume of Claude Code content suggests it has crossed the threshold from "impressive tool" to "platform," and the community building around it (WarCraft hook sounds, pixel-art companions, elaborate CRM systems) is starting to resemble the early days of the VS Code extension ecosystem.

The more subtle throughline was the emergence of "harness engineering" as a distinct discipline. Multiple posts converged on the same idea: the bottleneck in AI development is no longer model capability but the infrastructure that wraps around agents. @casper_hansen_ distilled it cleanly with "design the verification layer, let the model do the rest," and @NickADobos identified a significant architectural shift in how Claude handles tool calls that could compress agent loops dramatically. For anyone building agent systems, this is the week to pay attention to how the industry is thinking about orchestration, sandboxing, and supervision.

The most entertaining moment was easily @JorgeCastilloPr suggesting you add WarCraft 3 sounds to Claude hooks for task completion alerts, because apparently the path to 10x engineering runs through Azeroth. On the policy side, Anthropic caught heat from Palmer Luckey over Pentagon contract terms, adding a geopolitical subplot to an otherwise product-heavy day. The most practical takeaway for developers: if you're using Claude Code, configure the 1M context Sonnet 4.6 model as your default via ~/.claude/settings.json per @nummanali's instructions, and explore the new Figma integration for rapid UI prototyping workflows.
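For readers who want to try the settings change, here is a minimal sketch of merging model overrides into a settings dictionary. It assumes the overrides live in an "env" block of ~/.claude/settings.json and that the variables are named ANTHROPIC_DEFAULT_SONNET_MODEL and ANTHROPIC_DEFAULT_HAIKU_MODEL; neither detail is quoted from @nummanali's post, so check both against the Claude Code documentation. The helper names are hypothetical.

```python
import json
from pathlib import Path

# Assumed variable names; the model string is the one quoted in this digest.
MODEL_ENV = {
    "ANTHROPIC_DEFAULT_SONNET_MODEL": "claude-sonnet-4-6[1m]",
    "ANTHROPIC_DEFAULT_HAIKU_MODEL": "claude-sonnet-4-6[1m]",
}


def with_model_env(settings: dict) -> dict:
    """Return a copy of settings with MODEL_ENV merged into its "env" block."""
    merged = dict(settings)
    merged["env"] = {**settings.get("env", {}), **MODEL_ENV}
    return merged


def update_settings_file(path: Path) -> None:
    """Merge the overrides into the JSON file at path, creating it if needed."""
    current = json.loads(path.read_text()) if path.exists() else {}
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(with_model_env(current), indent=2) + "\n")


if __name__ == "__main__":
    # Preview the result for an empty settings file instead of touching the real one.
    print(json.dumps(with_model_env({}), indent=2))
```

Calling update_settings_file(Path.home() / ".claude" / "settings.json") would apply the change in place while preserving any other keys already in the file.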

Quick Hits

- @minchoi shares Seedance 2.0 early access videos, calling creators "the future of filmmaking." The AI video generation space continues its rapid iteration cycle.

- @cgtwts reacts to what they call a casual AGI drop, though the bar for that claim keeps shifting.

- @elonmusk promotes Grok 4.20 as "BASED," continuing the pattern of model launches doubling as culture war statements.

- @theaidocfilm announces "The AI Doc: Or How I Became an Apocaloptimist" hitting theaters March 27.

- @ryanlightbourn, a 42-year-old former filmmaker, made a creative project in 3 days with $39 in AI credits, covering writing, directing, cinematography, VFX, editing, and sound design.

- @heykahn calls something "the craziest thing I've seen since ChatGPT," which at this point is said roughly every 48 hours.

- @thdxr notes GLM5 is free in opencode for the week.

- @MarketWatch covers the intersection of AI and tax preparation, humanity's oldest number-crunching ritual meeting its newest calculator.

- @gdb posts an OpenAI hiring call for infra and security engineers, noting that engineering is "already different from a few months ago" and emphasizing domain understanding over raw coding skill.

- @BenCarr630567 introduces cara-3, a real-time avatar model claiming sub-180ms response times.

- @ashpreetbedi teases a programming language designed specifically for agentic software.

Claude Code's Expanding Universe

The Claude Code ecosystem had its biggest single day in recent memory, with content spanning from the deeply technical to the delightfully absurd. The centerpiece was a Y Combinator Lightcone episode featuring @bcherny, Claude Code's creator, covering everything from the tool's origin story to the future of terminal-based development. The 47-minute conversation touched on subagents, plan mode, and a question that would have seemed absurd a year ago: does the terminal still have life left in it?

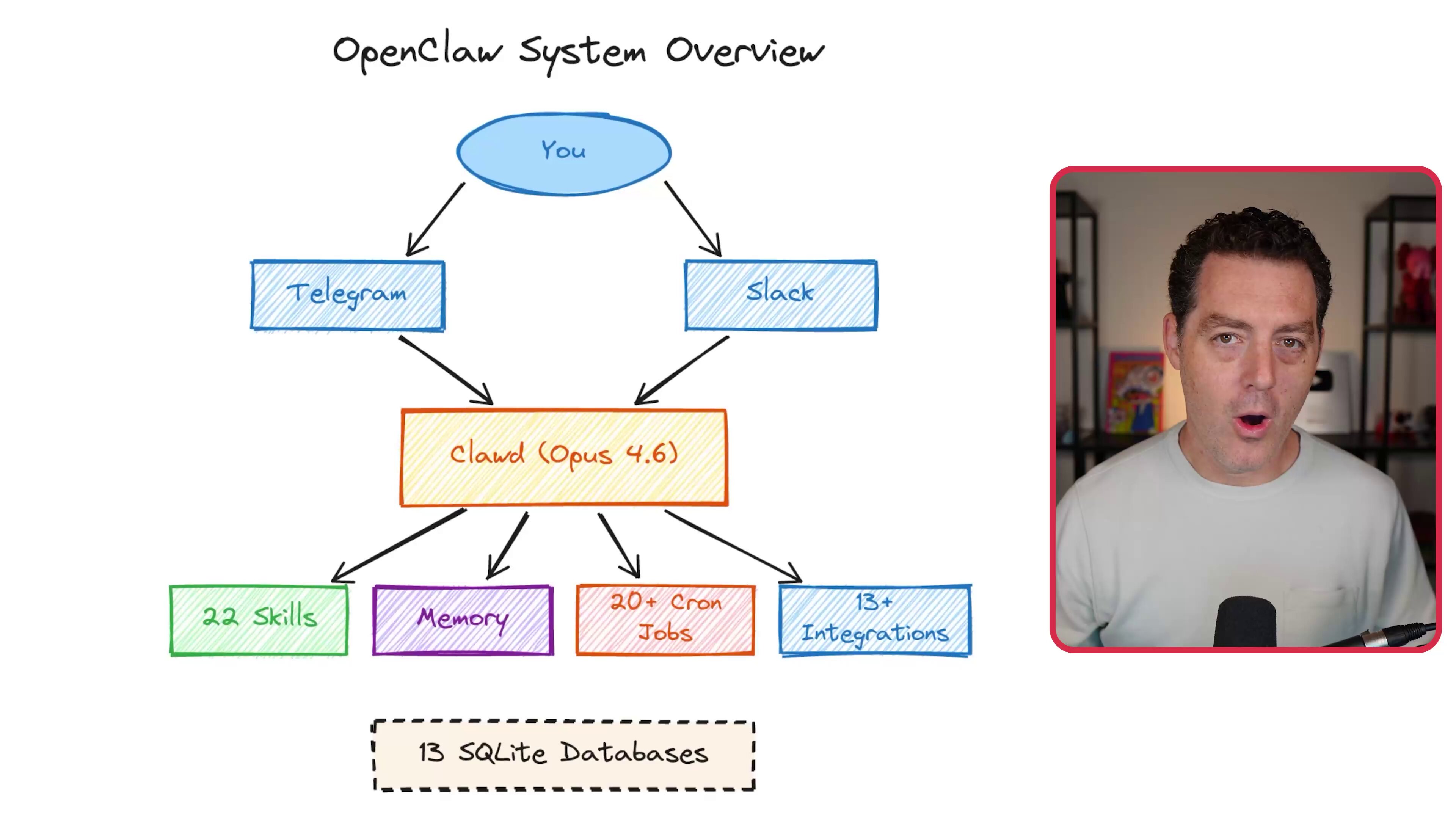

On the community side, @MatthewBerman revealed he has burned through 2.54 billion tokens building out his OpenClaw setup, sharing 21 daily use cases that range from a CRM system to a food journal:

> "I've spent 2.54 BILLION tokens perfecting OpenClaw. The use cases I discovered have changed the way I live and work."

That token count is staggering, but the breadth of his use cases (meeting-to-action-item pipelines, security councils, business advisory councils, self-updating systems) illustrates how Claude Code is being stretched well beyond its original "coding assistant" framing into something closer to a personal operating system.

The community creativity didn't stop there. @JorgeCastilloPr offered what might be the most culturally specific productivity hack of the year: wiring WarCraft 3 sound effects into Claude hooks so your computer announces task completions like a peon reporting that work is complete. @marcvermeeren released Clawy, a JRPG-inspired Claude Code companion that lives as a tiny pixel creature, tapping into the nostalgia of developers who grew up with Game Boys. These projects are lighthearted, but they signal something important: developers are treating Claude Code as a platform worth customizing and extending, not just a tool to use as-is.
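The sound-hook idea can be sketched as a small script that a hook runs when a task finishes. This is an illustration, not @JorgeCastilloPr's setup: it assumes the hook receives a JSON payload on stdin with a "hook_event_name" field, that "Stop" and "Notification" are the events of interest, and that a command-line audio player such as afplay or aplay is installed. The sound file paths are hypothetical placeholders.

```python
import json
import os
import shutil
import subprocess
import sys
from typing import Optional

# Hypothetical sound files keyed by assumed hook event names.
SOUNDS = {
    "Stop": "~/sounds/work-complete.wav",
    "Notification": "~/sounds/more-work.wav",
}
# Command-line players to try, in order (macOS first, then common Linux ones).
PLAYERS = ("afplay", "paplay", "aplay")


def sound_for(payload: dict) -> Optional[str]:
    """Map a hook payload to a sound file path, or None if the event is unmapped."""
    name = SOUNDS.get(payload.get("hook_event_name", ""))
    return os.path.expanduser(name) if name else None


def main() -> None:
    # Hooks are assumed to pipe JSON on stdin; skip reading in an interactive shell.
    raw = "" if sys.stdin.isatty() else sys.stdin.read()
    try:
        payload = json.loads(raw) if raw.strip() else {}
    except json.JSONDecodeError:
        payload = {}
    if not isinstance(payload, dict):
        payload = {}
    sound = sound_for(payload)
    player = next((p for p in PLAYERS if shutil.which(p)), None)
    if sound and player and os.path.exists(sound):
        # Fire and forget so the hook returns immediately.
        subprocess.Popen([player, sound])


if __name__ == "__main__":
    main()
```

Keeping the event-to-sound mapping in one dictionary makes it easy to add more events later without touching the playback logic.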

Sonnet 4.6 Arrives

Anthropic's latest model drop landed with a clear message: the gap between Sonnet and Opus is closing fast. @alexalbert__ set the tone for the announcement:

> "Sonnet 4.6 is here. It's our most capable Sonnet model by far, approaching Opus-class capabilities in many areas. The performance jump over Sonnet 4.5 (which was released just over four months ago) is quite insane."

@bcherny confirmed the model is now live in Claude Code and noted that developers in early testing "often preferred it to Opus 4.5," which is a remarkable statement given the price difference between the tiers. The model is now the default for Pro and Team plans.

Perhaps more interesting than the model itself was @nummanali's configuration guide for accessing the 1M context variant. Because Claude Code's Haiku and Sonnet model slots can be pointed at claude-sonnet-4-6[1m] via environment variables set in ~/.claude/settings.json, developers can get near-Opus intelligence with massive context at Sonnet pricing.

But the real hidden gem was @NickADobos identifying a significant architectural change in how Claude handles tool calls. Rather than the old loop of "call tool, read result, decide next step," Claude now writes code that pre-plans decision paths for tool results, compressing what used to require multiple LLM round trips into a single execution. As @NickADobos put it, "the LLM pre-bakes potentially hundreds or thousands of decision paths." If this holds up in practice, it represents a fundamental shift in agent loop efficiency.
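To make the contrast concrete, here is a toy sketch of a pre-planned tool sequence. It is not Anthropic's implementation: the tools, the inventory data, and the function names are all invented for illustration. The point is only that the branching lives in code the model wrote once, so several tool calls run without returning to the model between them.

```python
from typing import Callable, Dict, List

Tools = Dict[str, Callable[[str], object]]


def fake_tools() -> Tools:
    """Hypothetical tools backed by an in-memory inventory."""
    inventory = {"widget": 0, "gadget": 7}
    return {
        "check_stock": lambda item: inventory.get(item, 0),
        "find_substitute": lambda item: "gadget" if item == "widget" else item,
        "place_order": lambda item: f"ordered {item}",
    }


def counted(tools: Tools, log: List[str]) -> Tools:
    """Wrap each tool so every call is recorded in log."""
    def wrap(name: str, fn: Callable[[str], object]) -> Callable[[str], object]:
        def inner(arg: str) -> object:
            log.append(name)
            return fn(arg)
        return inner
    return {name: wrap(name, fn) for name, fn in tools.items()}


def model_written_plan(tools: Tools, item: str) -> object:
    """Stand-in for code a model might emit in one turn, with branches pre-baked."""
    if tools["check_stock"](item) > 0:
        return tools["place_order"](item)
    substitute = tools["find_substitute"](item)
    if tools["check_stock"](substitute) > 0:
        return tools["place_order"](substitute)
    return "nothing available"


if __name__ == "__main__":
    calls: List[str] = []
    result = model_written_plan(counted(fake_tools(), calls), "widget")
    print(result, "after", len(calls), "tool calls and one model turn")
```

In a classic loop, each of those tool calls would be followed by another model round trip to decide the next step; here the decisions were written down up front.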

Figma Meets Claude Code

The Figma integration was arguably the most crowd-pleasing announcement of the day, generating excitement from both the design and engineering camps. @trq212 broke down what shipped:

> "Figma just shipped the ability to bring UI work done in Claude Code straight into Figma as editable design frames. Use this to explore new ideas in Figma, view multi-page flows on the canvas, or reimagine user experiences."

The key word there is "editable." This isn't a screenshot import; it's structured design data flowing between a coding agent and a design tool. @bcherny kept his reaction to three words and an emoji ("Claude Code + Figma"), while @Av1dlive captured the community sentiment with "design has been freed." The integration collapses a workflow that previously required either a designer translating code output into Figma manually or a developer working from static mockups. For teams where the same person wears both hats (increasingly common in the AI-assisted era), this removes a significant context-switching tax.

Harness Engineering Takes Shape

A clear pattern emerged across multiple posts: the AI engineering community is converging on "harness engineering" as a distinct practice. @Vtrivedy10 and @hwchase17 both referenced deep dives into the topic, while @casper_hansen_ offered the most concise framing of the philosophy:

> "This is the frontier of AI engineering. Design the verification layer, let the model do the rest."

@almonk took the concept in a playful but genuinely interesting direction, proposing a TikTok-style interface for reviewing PR suggestions from background agents running across your codebase. The idea of doom-scrolling through agent-generated code improvements sounds like a joke, but the underlying concept (continuous background agents proposing changes that humans review asynchronously) is exactly the kind of human-AI collaboration pattern that harness engineering is trying to formalize. The discipline is essentially about building the scaffolding, guardrails, and review mechanisms that let agents operate with increasing autonomy while keeping humans in a supervisory role rather than a bottleneck position.
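The "design the verification layer" idea can be shown with a very small harness. This is a sketch under stated assumptions, not any team's actual system: the candidate strings stand in for model output, the slugify task and its test cases are invented, and exec is used without sandboxing, which a real harness would need to add.

```python
from typing import Callable, Iterable, Optional

# Hypothetical acceptance cases the harness owns; the model never edits these.
CASES = [("Hello World", "hello-world"), ("  A  B ", "a-b")]


def passes_tests(source: str) -> bool:
    """Run candidate source and check its slugify function against CASES."""
    namespace: dict = {}
    try:
        exec(source, namespace)  # NOTE: unsandboxed; acceptable only for a toy example.
        fn = namespace["slugify"]
        return all(fn(given) == expected for given, expected in CASES)
    except Exception:
        return False


def first_verified(candidates: Iterable[str], verify: Callable[[str], bool]) -> Optional[str]:
    """Return the first candidate the verifier accepts, or None if all fail."""
    for candidate in candidates:
        if verify(candidate):
            return candidate
    return None


# Stand-ins for successive model proposals; the first mishandles repeated spaces.
PROPOSALS = [
    "def slugify(s):\n    return s.lower().replace(' ', '-')\n",
    "def slugify(s):\n    return '-'.join(s.lower().split())\n",
]

if __name__ == "__main__":
    accepted = first_verified(PROPOSALS, passes_tests)
    print("accepted proposal index:", PROPOSALS.index(accepted) if accepted else None)
```

The harness never needs to know how a proposal was produced; it only decides what counts as acceptable, which is the division of labor the quote describes.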

The IDE Debate Heats Up

The question of what development environments should look like in an agent-first world produced a sharp exchange. @BenjaminDEKR drew a line in the sand, pushing back on the idea that existing IDEs are sufficient:

> "Cursor and all VSCode-like IDEs are the old way. We need the new way, agentic first, code several layers down and not prominent."

@leerob responded by pointing out that Cursor already supports agent-first workflows if configured that way, while @theo took a more pragmatic stance with his "Opus vs Codex" comparison video, concluding that he uses both daily and thinks others should too. The tension here reflects a genuine architectural question: should AI coding tools be extensions bolted onto existing editors, or do we need purpose-built environments designed from the ground up around agent interaction patterns? The answer probably depends on where you sit on the spectrum between "I still want to read and write code" and "I want to describe what I want and review what I get."

Anthropic's Political Headwinds

Anthropic found itself on the back foot as defense-sector politics spilled into the tech discourse. @ns123abc surfaced Palmer Luckey's response to the Anthropic-Pentagon story, quoting Luckey as calling it "a rational response to a vendor trying to control the government via terms of service." @beffjezos compiled a list of recent Anthropic setbacks (legal threats, a controversial article, the Pentagon contract situation, OpenAI acquiring OpenClaw, Codex gaining ground) and asked whether Anthropic had "lost the mandate of heaven this week." Whether or not that framing is fair, it captures a sentiment shift worth tracking. Product excellence and public perception don't always move in lockstep, and Anthropic is learning that shipping strong models doesn't insulate you from political and narrative headwinds.

Products and Tools

A handful of product updates rounded out the day. @claudeai announced that the Claude Excel add-in now supports MCP connectors for financial data providers including S&P Global, LSEG, PitchBook, and FactSet, which is a significant play for the finance and analytics crowd who live in spreadsheets. @NotebookLM shipped prompt-based slide deck revisions and PPTX export support, addressing what they called their most requested feature. And @moment_dev positioned their product as "Google Docs but for markdown, with a git backend," targeting the increasingly crowded space of developer-friendly writing tools. @rudrank shared genuine excitement about an App Store Connect CLI that's proving more powerful than expected, noting the urgency of getting it popular enough to become model training data, a motivation that's becoming increasingly common among open-source developers.

Sources

Improving Deep Agents with Harness Engineering

TLDR: Our coding agent went from Top 30 to Top 5 on Terminal Bench 2.0. We only changed the harness. Here’s our approach to harness engineering (teas...