Anthropic Faces Pentagon Backlash as Karpathy and Wolf Debate What Programming Languages AI Agents Actually Need

Alibaba released Qwen3.5 with 397B parameters (17B active) under Apache 2.0, while leaked details about DeepSeek v4 suggest open models are rapidly closing the frontier gap. The Claude Code community produced a wave of creative workflow tools from ASCII wireframe editors to visual explainer skills, and OpenClaw shipped a major platform release as developers grapple with the unsolved problem of long-term agent autonomy.

Daily Wrap-Up

The biggest headline today is Qwen3.5, Alibaba's open-weight model that packs 397 billion parameters into a mixture-of-experts architecture with only 17 billion active per token at inference time. Pair that with unconfirmed but widely circulated claims that DeepSeek v4 matches Claude Opus on coding benchmarks at a fraction of the cost, and the message from China's AI labs is unmistakable: the gap between open and closed models is shrinking fast. For developers running their own infrastructure, this trend is a gift. For frontier labs charging premium API prices, it's a strategic challenge that isn't going away.

Meanwhile, the Claude Code community had one of its most productive days of shared knowledge. Developers showed off everything from ASCII wireframe editors that let you sketch a page layout and paste it into Claude for a working implementation, to custom agent skills that render complex explanations as styled HTML pages instead of terminal text walls. The pattern is clear: the tool itself is mature enough that the innovation frontier has shifted to workflows and integrations. People aren't asking "can Claude Code do this?" anymore. They're asking "what's the most efficient way to use it?" That's a meaningful inflection point.

The most entertaining moment came from @tlakomy, who posted what can only be described as the universal developer experience of reviewing Claude Code output before pushing to production, capturing that mix of awe and anxiety that comes with trusting an AI with your codebase. On a more serious note, @nateberkopec surfaced research showing that skills written by LLMs themselves don't actually improve task completion rates, a finding that should give pause to anyone building self-improving agent loops. The most practical takeaway for developers: invest your time in hand-crafted, domain-specific skills and workflow tools rather than letting your AI write its own instructions. Today's Claude Code posts, from wireframe editors to visual explainers, point the same way: human-designed integrations are where developers are finding real value, not automated self-improvement.

Quick Hits

- @rawsalerts reports Meta has patented an AI system that can keep a deceased person's account active, posting and messaging by replicating their behavior from historical data. The ethical implications are staggering.

- @_ashleypeacock highlights Cloudflare's AI Gateway as a "sleeper hit": unified API endpoint, multi-provider routing, failover, caching, and analytics, all free through Workers.

- @pixelandpump stopped trying to recreate Marvel scenes in Seedance 2.0 and found that original content "hits harder when you're not fighting copyright filters every 30 seconds."

- @pixelandpump also posted a dragon-rider video prompt for Seedance 2.0 that reads like a cinematographer's shot list, a masterclass in directive AI video generation.

- @steipete with the eternal truth: "creating a thing isn't hard. maintaining is."

- @TheAhmadOsman on the local inference lifestyle: "just quantize a 13B, toss it on your 3090, and let that thing cook. context windows are for people who rent compute."

- @cyantist declares today the day copyright dies: "Welcome to the age of the remix and the inability to control IP from this day forward."

- @Hesamation captures the vibe-coding hangover: "it's a no-AI interview but you've been vibe-coding for so long the technical circuit in your brain is absolutely fried."

- @NathanWilbanks_ doubles down on AGNT's independence from big tech, teasing something new on the horizon.
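@TheAhmadOsman's quip rests on simple arithmetic: weight memory is roughly parameter count times bits per weight divided by eight. Below is a minimal sketch that assumes weights dominate memory. It ignores the KV cache, activations, and quantization metadata such as per-group scales, all of which add overhead in practice. The 24 GB figure is the RTX 3090's VRAM. The function name is hypothetical.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes.

    Ignores KV cache, activations, and quantization metadata
    (e.g. per-group scales), so real usage is somewhat higher.
    """
    return num_params * bits_per_weight / 8 / 1e9


RTX_3090_VRAM_GB = 24  # the RTX 3090 ships with 24 GB of VRAM

for bits in (16, 8, 4):
    gb = weight_memory_gb(13e9, bits)
    fits = "fits" if gb < RTX_3090_VRAM_GB else "does not fit"
    print(f"13B @ {bits}-bit: {gb:.1f} GB of weights ({fits} in 24 GB)")
```

At 16 bits a 13B model's weights alone overflow the card. At 4 bits they take about 6.5 GB. The remaining headroom is where the KV cache must live, and because that cache grows with context length, long context windows are the first thing a single consumer GPU gives up.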

Claude Code: The Ecosystem Gets Creative

The sheer variety of Claude Code workflow tools that surfaced today signals a maturing ecosystem where the bottleneck is no longer the model's capability but the developer's ability to communicate intent efficiently. @bbssppllvv built an ASCII wireframe editor that captures a core insight about agent interfaces:

> "AI agents read markdown better than they read your mind. Built an ascii wireframe editor. Draw a page in 30 seconds, copy/paste into Claude Code and get a full working page back."

This approach of creating structured intermediate representations, rather than describing what you want in natural language, is emerging as a significant productivity pattern. @nicopreme took a different angle with a "Visual Explainer" skill that renders agent output as rich HTML pages with a CSS pattern library for consistent design, solving the very real problem of squinting at walls of terminal text. The skill includes reference templates so output stays consistently well designed, exactly the kind of human-curated tooling that the research on LLM-generated skills favors.

@jessmartin demonstrated Claude helping build an isometric interface with watercolor-styled sprites, showing how the tool chain now supports rapid visual prototyping. @banteg teased a new Claude Code feature without details but with genuine enthusiasm, while @dani_avila7 shared practical Ghostty terminal tips for Claude Code power users: Cmd+Shift+F to zoom into any panel and Cmd+Shift+P for the command palette. @ryancarson introduced "Code Factory," a methodology for setting up repos so agents can auto-write and review all of your code. Whether that's aspirational or practical depends on your codebase, but the ambition reflects how far expectations have shifted.

The Practice of AI-Assisted Development

A quieter but arguably more important conversation played out around the meta-question of how to work effectively with AI coding agents. @dani_avila7's SAND framework, which started as a mnemonic for terminal keybindings, evolved into a broader observation about developer habits:

> "The next wave of frameworks won't be about how we organize files or structure folders, they'll be about how we interact with AI. We're entering an era where building software means conversing, delegating, and supervising agents."

This resonates with @nateberkopec's share of research showing that LLM-generated skills don't improve task completion rates, which he summarized as "LLMs are noisy amplifiers: when you ask them to amplify themselves, you just get more noise." The implication is that human judgment remains essential in the loop, not just for review but for designing the interaction patterns themselves.

@thdxr offered a nuanced counterpoint to the "AI all the things" momentum, noting that sometimes he wastes time "letting the LLM keep taking swings instead of reading something," and expressing hope that the industry doesn't abandon producing good reading material. @harleyf celebrated Shopify CEO Tobi Lutke's 957 commits in 45 days as evidence of what "real founder mode" looks like. And @hive_echo reshared a warning about losing sight of implementation quality when Codex or Claude is working on something overly ambitious. The tension between velocity and quality is the defining challenge of AI-assisted development right now, and today's posts map its contours without resolving it.

OpenClaw and the Agent Autonomy Question

@openclaw shipped a substantial release with Telegram message streaming, Discord Components v2, nested sub-agents, and 40+ bug fixes. But the more interesting storyline is the platform drama: @Teknium reported that Anthropic blocked a user from running their Claude subscription through OpenClaw, pushing them to MiniMax, a move he framed as a big boost for open models.

The autonomy problem is where things get genuinely interesting. @sillydarket posted twice about solving long-term autonomy for OpenClaw agents, promising that "the plumbing to make your openclaw agent actually work for days, weeks then months autonomously" would get "really, really good" within a week. @hidecloud from Manus teased an even more ambitious roadmap:

> "Create your own specialized agents and plug them into any group chat. Landing on WhatsApp, LINE, Slack, Discord very soon. Native Windows & Mac apps that let Manus operate your computer."

@mrmagan_ showed a system for registering UI components and APIs to build an agent that speaks your interface in minutes, while @molt_cornelius explored agentic note-taking as a "second brain that builds itself." The agent platform space is crowded and moving fast, but the hard problem of maintaining coherent, goal-directed behavior over extended timeframes remains largely unsolved. Anyone promising weeks of autonomous operation should be met with healthy skepticism until there's evidence to back it up.

Open Models Make Their Move

The model news today was dominated by open-weight releases. @Alibaba_Qwen officially announced Qwen3.5-397B-A17B, with @HuggingModels amplifying the details: native multimodal, trained for real-world agents, hybrid linear attention with sparse MoE, 8.6x to 19x decoding throughput over Qwen3-Max, 201 languages, and Apache 2.0 licensed. That's a serious release on every dimension.
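The "A17B" suffix is the number that matters for serving cost. In a sparse mixture-of-experts model, a router sends each token through only a subset of experts, so per-token compute tracks active parameters rather than the total. Below is a back-of-the-envelope sketch. It uses the common rule of thumb of roughly 2 FLOPs per active parameter per token for a forward pass, an approximation that ignores attention's dependence on context length. The function names are hypothetical.

```python
def active_fraction(total_params: float, active_params: float) -> float:
    """Share of the model's weights used for any single token."""
    return active_params / total_params


def forward_flops_per_token(active_params: float) -> float:
    """Rule-of-thumb forward-pass cost: ~2 FLOPs per active parameter.

    Ignores attention's dependence on context length.
    """
    return 2 * active_params


TOTAL = 397e9   # Qwen3.5-397B-A17B total parameters
ACTIVE = 17e9   # parameters active per token

print(f"active fraction: {active_fraction(TOTAL, ACTIVE):.1%}")
print(f"~{forward_flops_per_token(ACTIVE) / 1e9:.0f} GFLOPs/token vs "
      f"~{forward_flops_per_token(TOTAL) / 1e9:.0f} for a dense model of the same size")
```

The savings apply to compute, not storage. All 397B weights still have to be held in memory somewhere, so hardware requirements track the total parameter count even though per-token cost tracks the active count.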

Separately, @cryptopunk7213 shared leaked details about DeepSeek v4, claiming 10-40x lower inference costs while matching Claude Opus on coding benchmarks, a 1M context window that maintains quality at scale, training costs estimated at just $10M, and the ability to run on consumer hardware with dual RTX 4090s. These claims are unverified, but even if they're directionally correct, the pressure on closed-model pricing is enormous.

On the infrastructure side, @nvidia announced Blackwell Ultra delivering "up to 50x better performance and 35x lower cost for agentic AI," while @ivanburazin described customer requests for 500,000 concurrent sandboxes for RL training, predicting that CPUs will soon become the next bottleneck after GPUs and RAM. The compute supply chain keeps finding new pressure points.
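Running the numbers shows why @ivanburazin expects CPUs to become the next bottleneck. This is a rough sketch. The vCPUs per sandbox and vCPUs per host are purely illustrative assumptions, not figures from the post. The function name is hypothetical.

```python
import math


def hosts_needed(sandboxes: int, vcpus_per_sandbox: float, vcpus_per_host: int) -> int:
    """Hosts required to give every sandbox its vCPUs at once (no overcommit)."""
    total_vcpus = sandboxes * vcpus_per_sandbox
    return math.ceil(total_vcpus / vcpus_per_host)


SANDBOXES = 500_000      # concurrent sandboxes from the customer requests above
VCPUS_PER_HOST = 192     # hypothetical host size, for illustration only

# Hypothetical sizing: vary the vCPUs each sandbox is allotted.
for per_sandbox in (0.5, 1, 2):
    hosts = hosts_needed(SANDBOXES, per_sandbox, VCPUS_PER_HOST)
    print(f"{per_sandbox} vCPU/sandbox -> {hosts:,} hosts")
```

Even at a modest one vCPU per sandbox, that means hundreds of thousands of cores available at the same moment. This is a different provisioning problem from the GPU-heavy training side of RL.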

Rewriting Software From First Principles

Two of the most thoughtful posts today came from @karpathy and @Thom_Wolf, both exploring how LLMs fundamentally change the calculus of software development. Karpathy focused on programming languages, arguing that LLMs are especially good at translation because "the original code base acts as a kind of highly detailed prompt" and serves as "a reference to write concrete tests with respect to." His provocative conclusion: "It feels likely that we'll end up re-writing large fractions of all software ever written many times over."
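Karpathy's point that the original code base doubles as a test reference amounts to differential testing. You run the legacy implementation and the rewrite on the same inputs and flag any disagreement. Below is a minimal sketch. All three functions are hypothetical stand-ins: a legacy routine, a faithful rewrite, and a flawed rewrite that the check should catch.

```python
import random


def legacy_slugify(title: str) -> str:
    """Hypothetical legacy routine: keep alphanumerics, spaces become hyphens."""
    out = []
    for ch in title.lower():
        if ch.isalnum():
            out.append(ch)
        elif ch == " ":
            out.append("-")
    return "".join(out)


def rewritten_slugify(title: str) -> str:
    """Hypothetical faithful rewrite with the same behavior."""
    return "".join(c if c.isalnum() else "-"
                   for c in title.lower() if c.isalnum() or c == " ")


def buggy_rewrite(title: str) -> str:
    """Hypothetical flawed rewrite: also turns punctuation into hyphens."""
    return "".join(c if c.isalnum() else "-" for c in title.lower())


def differential_test(old, new, inputs):
    """Return every input on which the two implementations disagree."""
    return [x for x in inputs if old(x) != new(x)]


rng = random.Random(0)  # fixed seed so runs are reproducible
alphabet = "abcXYZ 123-_!"
cases = ["".join(rng.choice(alphabet) for _ in range(rng.randint(0, 12)))
         for _ in range(1000)]

print(len(differential_test(legacy_slugify, rewritten_slugify, cases)), "mismatches (faithful)")
print(len(differential_test(legacy_slugify, buggy_rewrite, cases)), "mismatches (buggy)")
```

The legacy code acts as the oracle, so no one has to write expected outputs by hand. A mismatch either exposes a bug in the rewrite or surfaces an undocumented behavior of the original that someone must decide whether to keep.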

@Thom_Wolf went deeper with a structured analysis covering five shifts: the return of software monoliths as cheap rewriting kills dependency trees, the weakening of the Lindy effect as legacy code loses its moat, the rise of strongly typed languages optimized for formal verification, the economic restructuring of open source as human motivations erode, and the possibility of programming languages designed specifically for LLMs. His core warning deserves attention: "The true extent of AI's impact will hinge on whether complete coverage of testing, edge cases, and formal verification is achievable." @patrick_oshag rounded out the theme by sharing what he called "the best post I've read on software moats in the AI era," a topic that grows more urgent as the cost of building software approaches zero.

AI Meets the Pentagon

The intersection of AI and national security produced the most dramatic posts of the day. @ns123abc reported that Defense Secretary Hegseth is "close" to classifying Anthropic as a supply chain risk, alleging that Claude was used in military operations via Palantir and that Anthropic pushed back on autonomous weapons applications. Whether this account is fully accurate or somewhat editorialized, the underlying tension between AI safety advocacy and military adoption is real and escalating.

In a related development, @KobeissiLetter reported that SpaceX and xAI are competing in a secretive Pentagon contest to produce "voice-controlled, autonomous drone swarming technology" with a $100 million prize. On the workforce side, @rohanpaul_ai noted the US government's $145M investment in apprenticeship-based training for AI, semiconductors, and nuclear energy, calling it a "powerful signal that AI work is being treated like a skilled trade, not just a white-collar degree job." The pay-for-performance structure, where sponsors get funded based on measurable milestones rather than upfront grants, suggests the government is serious about building a pipeline that actually produces results. The full spectrum of AI's relationship with government, from adversarial standoffs to talent pipeline investments, was on display today.

Sources

Think it. Say it. Done. The average person spends 3 hours typing + switches 1,000 tabs per day. That ends today. Meet Lemon: The first voice-to-action AI agent that turns your voice commands into finished tasks. RT + Comment "Lemon" to get free access for 30 days. (must be following so I can DM you)

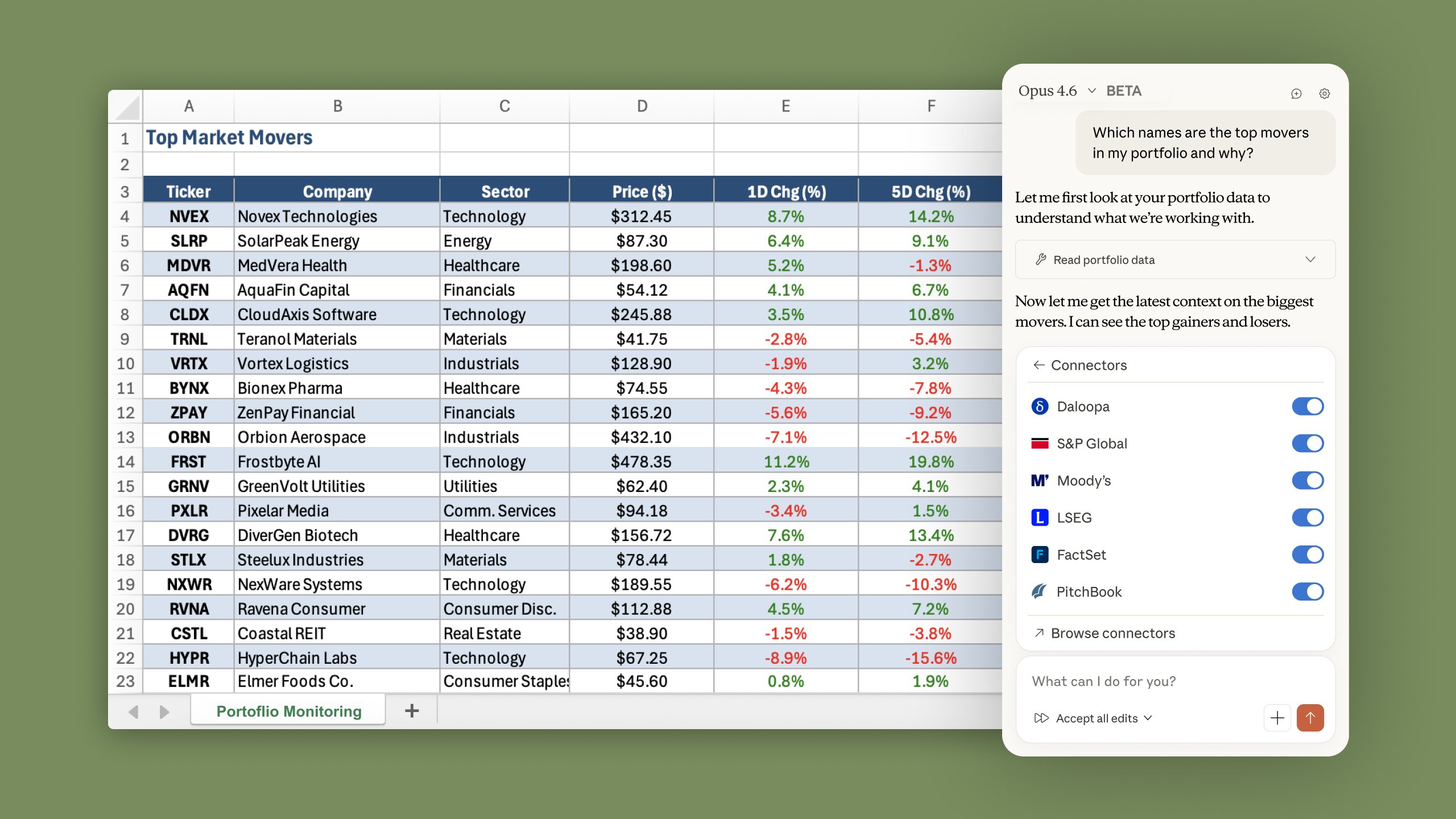

Tired: code or canvas Wired: code AND canvas Introducing Claude Code to Figma https://t.co/lZy5L72pQY

Figma just shipped the ability to bring UI work done in Claude Code straight into Figma as editable design frames. Use this to explore new ideas in Figma, view multi-page flows on the canvas, or reimagine user experiences. https://t.co/OwBbfRpvch

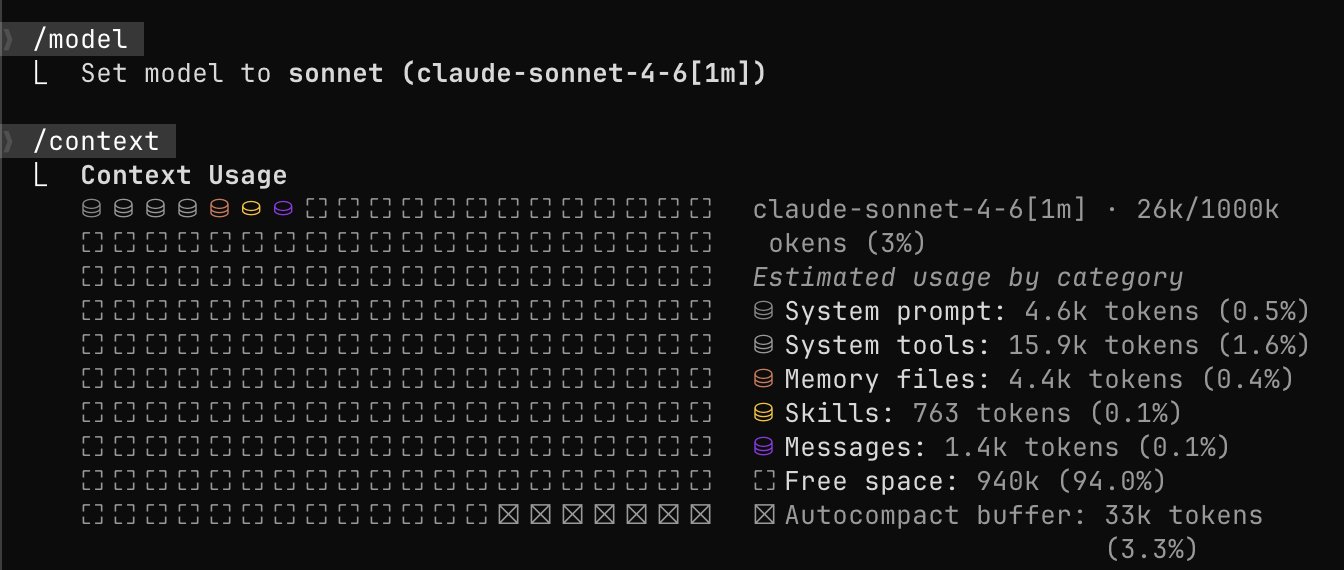

This is Claude Sonnet 4.6: our most capable Sonnet model yet. It’s a full upgrade across coding, computer use, long-context reasoning, agent planning, knowledge work, and design. It also features a 1M token context window in beta. https://t.co/TDId3XUSRs