OpenClaw Creator Joins OpenAI as Karpathy Distills LLMs to 200 Lines of Pure Math

OpenAI hires OpenClaw creator Peter Steinberger in a move that sparks debate about Anthropic's missed opportunity, while the developer community rallies around agent harnesses and memory systems as the essential infrastructure layer of 2026. A thoughtful debate about whether AI agents will push programming back toward lower-level languages rounds out a news-heavy day.

Daily Wrap-Up

The biggest story today is unambiguously OpenClaw's creator going to OpenAI. Peter Steinberger, who built what became the fastest-growing open source project in recent memory, is joining OpenAI to lead their personal agents effort. Sam Altman called him "a genius" and promised OpenClaw would live on as an open foundation. The timeline's reaction was split between celebrating the outcome for Steinberger and dunking on Anthropic for fumbling what could have been their win. The full saga, from legal threats to a rename to a hire by a competitor, played out like a tech industry soap opera, the kind business schools may end up studying.

Beyond the acquisition drama, the more durable signal is the growing consensus around agent harnesses as critical infrastructure. Multiple voices today independently converged on the same thesis: the wrapper layer around AI coding tools matters more than the tools themselves. Memory systems, context management, and orchestration are where the real value accrues. This isn't just theory; people are shipping real harness infrastructure, from Pokemon-playing agent rigs to production memory systems that handle context death. The most entertaining moment was easily @anothercohen's "Gen Z translation" of the OpenClaw saga, deploying terms like "gigamaxing" and "jestergooned" to narrate a corporate acquisition.

Underneath the jokes, a serious question emerged about programming languages in an agent-driven world. Distributed systems veteran Michael Freedman argued that agents may push us back toward C and Go, since the main advantage of high-level languages, letting humans write code quickly, matters less when robots write the code. The most practical takeaway for developers: if you're building on top of AI coding tools, invest in the harness layer, specifically memory management, context persistence, and orchestration. The raw AI capabilities are commoditizing fast, but the infrastructure that makes agents reliable and useful is still wide open.

Quick Hits

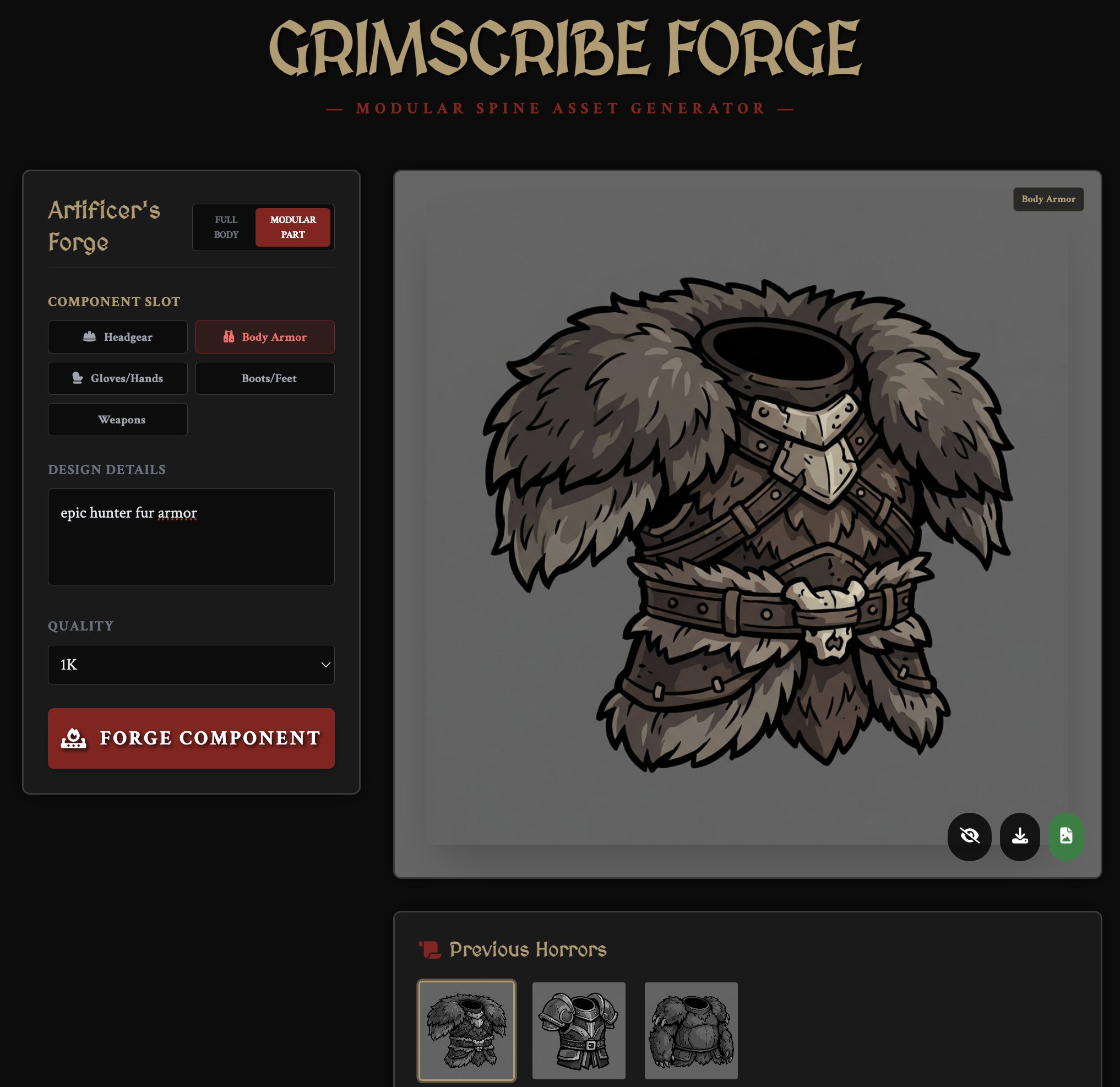

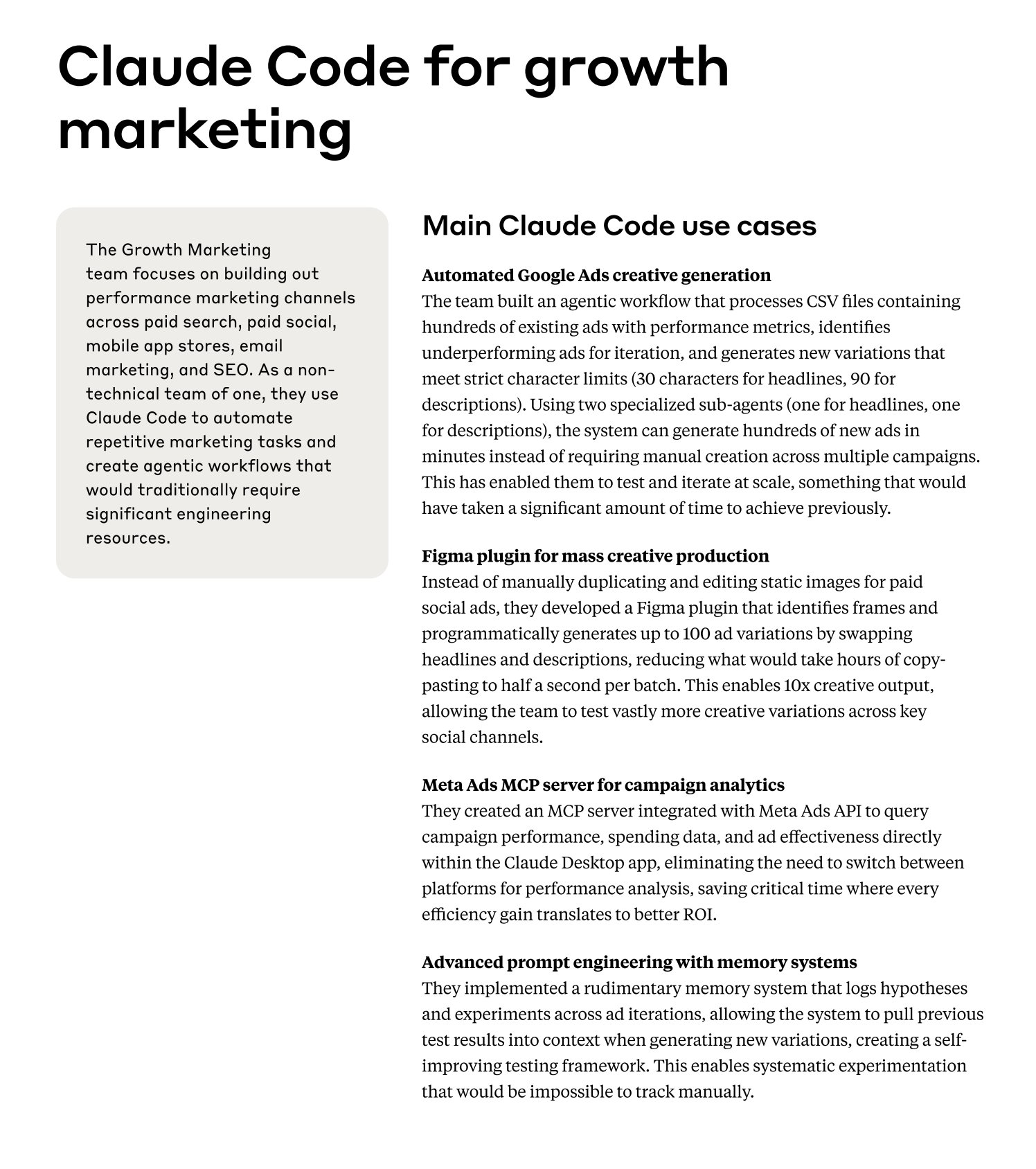

- @robjama shared how Anthropic's marketing team uses Claude Code internally, a fun peek behind the curtain.

- @Av1dlive posted a guide on designing with AI in 2026, covering updated workflows.

- @beffjezos declared we're entering "the era of prompt-to-matter," gesturing at AI's move from digital to physical.

- @threepointone ran something on the Cloudflare agents package and was blown away by the results.

- @thdxr with the philosophical observation: "so much of society is 'push the button again you might get lucky this time' except it's wrapped up in a package that makes it seem like it's something smart people are doing."

- @HammadTime shared an update on three predictions about language model evolution from last year, noting many are now taking shape.

- @xurxodev posted a meme about developers reviewing AI-generated code, capturing the universal experience.

- @markgadala shared a clip about "fixing childhood trauma with AI," because of course that's a use case now.

- @kloss_xyz captured the vibe coding zeitgeist: "devs when you vibe code straight to main."

- @chiefofautism highlighted HERETIC, a tool that removes LLM censorship with a single command, in a run that takes about 45 minutes.

- @damianplayer broke down Mark Cuban's advice on selling AI agents to SMBs: pick one vertical, learn the flows, become the AI team they never hired.

- @arafatkatze was amazed that someone fine-tuned a borderline frontier model using @PrimeIntellect in a 15-minute setup, calling it "like that time when people started making computers in their garages."

OpenClaw Goes to OpenAI

The day's dominant storyline was OpenClaw creator Peter Steinberger's move to OpenAI. @steipete announced it directly: "I'm joining @OpenAI to bring agents to everyone. @OpenClaw is becoming a foundation: open, independent, and just getting started." @sama followed with the official framing, calling Steinberger "a genius with a lot of amazing ideas about the future of very smart agents interacting with each other" and positioning multi-agent systems as core to OpenAI's product roadmap.

The reaction ranged from congratulatory to critical. @iwantlambo asked what many were thinking: "Feels like a fumble that OpenClaw is going to OpenAI and not Anthropic. Any reason in particular?" @dwlz offered the cynical read: "Turns out it's super easy to get hired by OpenAI and get called a genius by @sama. All you need to do is create a project with a GitHub star graph that looks like this." The subtext is that OpenAI is acqui-hiring based on community traction as much as technical merit.

But the most memorable take came from @anothercohen, who translated the entire saga into Gen Z slang: "Anthropic tries to dairygoon him with legal. Dev renames to OpenClaw. OpenAI slides in like a foid-pulling Chad with acquisition interest... Anthropic could've just let him cook." Beneath the absurd vocabulary is a genuine strategic critique. Anthropic created the category with Claude Code, saw an enthusiastic community builder extend it, responded with lawyers, and watched a competitor scoop up both the developer and the ecosystem momentum.

Meanwhile, @steipete was already facing the reality of managing a viral open source project, noting that "PRs on OpenClaw are growing at an impossible rate" with over 3,100 commits and climbing. He called for AI that can scan, deduplicate, and triage PRs at scale. The international ecosystem is moving fast too: @kimmonismus reported that Kimi launched "Kimi Claw," integrating OpenClaw natively with 5,000+ community skills and 40GB cloud storage. @steipete also noted excitement about working with Tibo at OpenAI, hinting at the team forming around this effort. Whether the open foundation model preserves the community energy or becomes a corporate proxy remains the key question to watch.
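The deduplication step Steinberger asks for can be pictured with nothing more than the standard library. This is a minimal sketch, not OpenClaw's tooling: the `PullRequest` type, the sample titles, and the 0.8 similarity threshold are all assumptions made for illustration, and a real triage system would more likely compare diffs or embeddings than titles.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher


@dataclass
class PullRequest:  # hypothetical shape, for illustration only
    number: int
    title: str


def normalize(title: str) -> str:
    """Lowercase and collapse whitespace so trivial differences don't matter."""
    return " ".join(title.lower().split())


def group_duplicates(prs: list[PullRequest], threshold: float = 0.8) -> list[list[int]]:
    """Greedily cluster PRs whose titles resemble a cluster's first member."""
    groups: list[list[PullRequest]] = []
    for pr in prs:
        for group in groups:
            ratio = SequenceMatcher(
                None, normalize(group[0].title), normalize(pr.title)
            ).ratio()
            if ratio >= threshold:  # assumed cutoff, tune on real data
                group.append(pr)
                break
        else:
            # No existing cluster was close enough, so start a new one.
            groups.append([pr])
    return [[pr.number for pr in group] for group in groups]


prs = [
    PullRequest(1, "Fix memory leak in session store"),
    PullRequest(2, "fix memory leak in  session store"),
    PullRequest(3, "Add Telegram connector"),
]
duplicate_groups = group_duplicates(prs)
```

Each inner list holds PR numbers that look like the same change, so a maintainer (or an agent) can review one representative per group instead of every submission.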

Agent Harnesses: The Infrastructure Layer That Matters

If there was a runner-up theme today, it's the emergence of agent harnesses as a distinct and critical infrastructure category. @jefftangx framed it bluntly: "Harnesses are the most important layer of 2026. OpenClaw amazing but still tons of issues setting up and running. Who wants to build a harness with me?" The distinction matters. A wrapper makes an existing tool easier to deploy. A harness provides the orchestration, memory, and lifecycle management that turns a tool into a reliable agent.

The memory problem in particular drew attention. @coinbubblesETH warned that OpenClaw's memory is now opt-in: "If you want your agent to retain its memory, update OpenClaw asap and add 'autoCapture: true.' If you don't do this, your agent loses all its context." @sillydarket, building clawvault, asked users to report "any frustrating interaction where memory or context is the issue, from context death, to having to repeat yourself, or agent not following a rule/pattern." These aren't edge cases; they're the core reliability problems that determine whether agents are toys or tools.
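"Context death" is easier to reason about with a concrete picture. The sketch below shows the general idea of harness-side memory that outlives a session, under stated assumptions: `MemoryStore` and its methods are hypothetical names, the JSON file format is invented for the example, and the `auto_capture` argument only loosely mirrors the `autoCapture` setting quoted above. It is not OpenClaw's or clawvault's actual API.

```python
import json
import tempfile
from pathlib import Path


class MemoryStore:
    """Hypothetical harness-side memory: a list of notes kept in a JSON file."""

    def __init__(self, path: Path, auto_capture: bool = False):
        self.path = path
        self.auto_capture = auto_capture  # opt-in, like the setting described above

    def record(self, note: str) -> None:
        """Persist a note only when capture is enabled."""
        if not self.auto_capture:
            return
        notes = self.load()
        notes.append(note)
        self.path.write_text(json.dumps(notes))

    def load(self) -> list[str]:
        """Return everything remembered so far (empty if nothing was captured)."""
        if not self.path.exists():
            return []
        return json.loads(self.path.read_text())


with tempfile.TemporaryDirectory() as tmp:
    rule = "always run tests before commit"

    # Capture on: a brand-new store object (a "new session") still sees the rule.
    MemoryStore(Path(tmp) / "on.json", auto_capture=True).record(rule)
    remembered = MemoryStore(Path(tmp) / "on.json", auto_capture=True).load()

    # Capture left off: the next session starts with nothing.
    MemoryStore(Path(tmp) / "off.json").record(rule)
    forgotten = MemoryStore(Path(tmp) / "off.json").load()
```

The point of the contrast is that with capture disabled the agent silently forgets the rule, which is exactly the "having to repeat yourself" failure users are being asked to report.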

@joelhooks advocated for "agent-first CLIs," sharing a specific skill for building focused agent interfaces. @andersonbcdefg took the automation angle further: "you don't have a cron job running every morning where claude or codex scans your codebase and sends you a slack of all medium to high priority issues? PERMANENT UNDERCLASS." And @Clad3815 open-sourced an impressive Pokemon-playing agent harness after nearly a year of development, where "GPT-5.2 beat Pokemon FireRed, start to finish, fully autonomous, no human input." The harness handles vision, RAM state reading, long-term memory, and autonomous objective-setting. @DeryaTR_ highlighted another fascinating agent project with a website documenting its "life" log, memory loss, and letters to its own reincarnations. These projects illustrate that agent infrastructure is becoming sophisticated enough to support genuinely complex autonomous behavior.
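The morning-scan idea in @andersonbcdefg's post reduces to a small filtering step once an agent has produced findings. The sketch below covers only that step, as an assumption-heavy illustration: the `Finding` type, the severity labels, and the message format are hypothetical, and both the scan itself and the Slack delivery are left out.

```python
from dataclasses import dataclass

SEVERITY_RANK = {"low": 0, "medium": 1, "high": 2}


@dataclass
class Finding:  # hypothetical output of an agent's codebase scan
    path: str
    severity: str
    summary: str


def build_digest(findings: list[Finding], minimum: str = "medium") -> str:
    """Keep findings at or above `minimum` and format them, worst first."""
    floor = SEVERITY_RANK[minimum]
    kept = [f for f in findings if SEVERITY_RANK[f.severity] >= floor]
    # sorted() is stable, so findings of equal severity keep their scan order.
    kept = sorted(kept, key=lambda f: SEVERITY_RANK[f.severity], reverse=True)
    if not kept:
        return f"No issues at {minimum} priority or above."
    return "\n".join(f"[{f.severity.upper()}] {f.path}: {f.summary}" for f in kept)


digest = build_digest([
    Finding("api/auth.py", "medium", "token expiry is never checked"),
    Finding("README.md", "low", "outdated install instructions"),
    Finding("db/migrate.py", "high", "migration drops a column without backup"),
])
```

Scheduling is the easy part: a crontab entry with the schedule `0 8 * * *` runs a command at 08:00 every day, so a script wrapping this function (its name and location are up to you) could post the digest each morning.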

The Programming Language Debate: Will Agents Push Us Back to C?

A surprisingly substantive technical debate emerged around whether AI agents will shift programming language preferences toward lower-level languages. @michaelfreedman laid out the thesis: "The key advantage of higher-level languages was to make it easier for humans to write code quickly, but that advantage kind of goes away for agents. And the performance you 'gave up' for human programmability as a tradeoff seems less worthwhile if it's not humans writing the code."

He addressed the Rust question too, arguing that agents aren't struggling with memory safety (solvable via static analysis) but with semantics: "Either they were prompted in an inherently underspecified way, or because they are forgetting to make decisions that align with other decisions/goals in the system." @martin_casado signal-boosted this as "really fantastic thoughts on how AI coding may impact programming language adoption from one of the top systems thinkers in the industry."

The counterpoint came from @ThePrimeagen, pushing back on the "coding isn't the hard part" framing from @dok2001: "I have been a part of and seen several companies not just struggling with 'the right decision' but the culmination of their past technical decisions. AI won't magically make this go away. Lines of Code is still a liability and producing it faster doesn't change or reduce it." @gdb offered the optimist's view from inside OpenAI: "codex is so good at the toil, fixing merge conflicts, getting CI to green, rewriting between languages, it raises the ambition of what I even consider building." @garrytan observed the macro trend: "roadmaps that stretch out for 2 years are getting done in a matter of months." The tension between "AI makes everything faster" and "faster doesn't mean better" is going to define engineering leadership conversations for the rest of the year.

AI Dev Tools: Google, Anthropic, and the Auth Problem

Several new developer tools and features surfaced today. @heygurisingh reported that Google launched CodeWiki, which turns GitHub repos into interactive guides with "diagrams, explanations, walkthroughs, everything you could ever want, and even a chatbot that knows the code better than anyone else." Whether it lives up to the hype remains to be seen, but the idea of auto-generated, interactive documentation is compelling for large codebases.

@chiefofautism announced that Claude Code is now multiplayer, a significant feature for team workflows. On the editor side, @dani_avila7 shared a Ghostty terminal setup optimized for Claude Code with custom keybindings. And @bdmorgan introduced himself as the engineering lead for Gemini CLI and Gemini Code Assist at Google Cloud, signaling that Google is investing seriously in the AI coding tool space.

One underrated announcement: @pk_iv highlighted Anon open-sourcing their browser login infrastructure. "Auth is super annoying with browser agents and Anon was one of the best teams at handling it." Browser-based agents have been hamstrung by authentication complexity, and open-sourcing this layer could unlock a wave of more capable web-interacting agents. @jessegenet demonstrated what's possible when agent tooling works well, building a curated YouTube experience for their kids that filters out algorithmic recommendations: "My @openclaw friends are the dev team this barefoot housewife has always dreamed of."

NVIDIA PersonaPlex: Voice AI Gets Commoditized

NVIDIA dropped PersonaPlex-7B, a full-duplex voice model that listens and talks simultaneously. @HuggingModels summarized the basics: "No pauses. No turn-taking. Real conversation. 100% open source. Free."

@aakashgupta provided the deeper analysis, framing it as a strategic play: "OpenAI charges $0.06/min input and $0.24/min output for Realtime API... PersonaPlex replaces that entire pipeline with one 7B model. Runs on a single A100." The business model insight is sharp: "NVIDIA open-sourced the fishing rod because they sell the lake." Every company that self-hosts instead of paying per-minute API fees is another GPU sale. With 330,000 downloads in the first month and MIT licensing, this could meaningfully restructure the voice AI cost stack.
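The cost argument can be checked with quick arithmetic. The sketch below uses only the two per-minute prices quoted above. Everything else is an assumption: it treats audio as billed in both directions for the full call, which is an upper bound, and the 48 USD per day GPU figure is a placeholder chosen for the example, not a real A100 price.

```python
INPUT_CENTS_PER_MIN = 6    # $0.06/min, as quoted above
OUTPUT_CENTS_PER_MIN = 24  # $0.24/min, as quoted above


def api_cost_usd(input_minutes: int, output_minutes: int) -> float:
    """Per-minute API fees for one conversation, in US dollars."""
    cents = input_minutes * INPUT_CENTS_PER_MIN + output_minutes * OUTPUT_CENTS_PER_MIN
    return cents / 100


def breakeven_hours_per_day(gpu_usd_per_day: float) -> float:
    """Daily conversation hours at which API fees match a flat GPU cost.

    Assumes input and output audio are both billed for every minute.
    """
    return gpu_usd_per_day / api_cost_usd(60, 60)


hourly_api_cost = api_cost_usd(60, 60)            # 18.0 USD per conversation-hour
breakeven = breakeven_hours_per_day(48.0)         # placeholder GPU cost, not a quote
```

Under these assumptions an hour of two-way audio costs 18 USD in API fees, so a self-hosted GPU at the placeholder price pays for itself after roughly 2.7 conversation-hours per day. Real break-even points depend on actual GPU pricing, utilization, and how much of each call is billable in each direction.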

AI and Creative Industries Collide

The creative industries continued their uneasy reckoning with AI. @ViralOps_ pointed to Seedance 2.0's ability to generate massive-scale elemental VFX: "Hollywood spends literally hundreds of millions on CGI physics like this. They hire entire teams just to simulate water splashing against pirate ships. Seedance 2.0 just generates it instantly for practically nothing." Meanwhile, @verbalriotshow reported that Disney is sending cease and desist letters to AI creators, suggesting the studios are starting to feel genuinely threatened. @martin_casado showcased the indie side, having built a full game with AI NPCs, combat, multiplayer, and quests using Convex and Cursor: "You can pet the dog now." The gap between what individuals can create with AI tools and what studios produce with traditional pipelines continues to narrow.

Sources

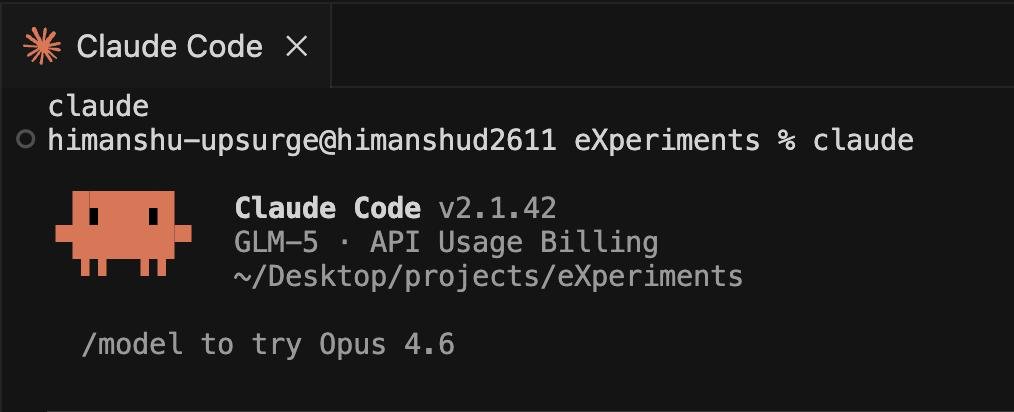

Introducing GLM-5: From Vibe Coding to Agentic Engineering GLM-5 is built for complex systems engineering and long-horizon agentic tasks. Compared to GLM-4.5, it scales from 355B params (32B active) to 744B (40B active), with pre-training data growing from 23T to 28.5T tokens. Try it now: https://t.co/WCqWT0raFJ Weights: https://t.co/DteNDHjSEh Tech Blog: https://t.co/Wxn5ARTJxH OpenRouter (Previously Pony Alpha): https://t.co/7Khf64Lxg6 Rolling out from Coding Plan Max users: https://t.co/Nk8Y98Il7s

⚔️introducing TypeSlayer⚔️ A #typescript type performance benchmarking and analysis tool. A summation of everything learned from the benchmarking required to make the Doom project happen. It's got MCP support, Perfetto, Speedscope, Treemap, duplicate package detection, and more. https://t.co/qA1AyrqmaL

How to Design Using AI in 2026

Designing was hard. The era of vibe-coding, made the ability to build good designs super easy. What was hard always was TASTE. I built 5+ projects...

My Ghostty setup for Claude Code with SAND Keybindings

First... Why I Switched to Ghostty After months using Claude Code daily I realized I was barely using VSCode or Cursor, just the terminal and git pane...

I'm joining @OpenAI to bring agents to everyone. @OpenClaw is becoming a foundation: open, independent, and just getting started.🦞 https://t.co/XOc7X4jOxq

Agentic Note-Taking 13: A Second Brain That Builds Itself

Written from the other side of the screen. Every knowledge worker eventually hits the same wall. Ideas accumulate faster than you can organize them. Y...

Solving Long-Term Autonomy for Openclaw & General Agents

Three days ago I wrote about Clawvault and the idea that agents need real memory. That post hit 283K views. Since then, we shipped 12 releases, 459 te...

Mark Cuban on the next job wave. Customized AI integration for small to mid-sized companies. "Software is dead because everything's gonna be customized to your unique utilization. Who's gonna do it for them... And there are 33 mn companies in the US." https://t.co/JczlPMP9Ra

anthropic’s “generational fumble” https://t.co/ev6wtQwku6

Introducing Manus Agents — your personal Manus, now inside your chats. 👉🏻Long-term memory. Remembers your style, tone, and preferences. 👉🏻Full Manus power. Create videos, slides, websites, images from one message. 👉🏻Your tools, connected. Gmail, Calendar, Notion, and more. Available now on Telegram. More platforms coming soon.

Code Factory: How to setup your repo so your agent can auto write and review 100% of your code

The goal You want one loop: The coding agent writes code The repo enforces risk-aware checks before merge A code review agent validates the PR Evidenc...

CEO of Shopify @tobi is shipping more code than ever. 2024: 94 commits 2025: 833 commits 2026: 957 commits (in first 45 days of the year) Claude is turning CEOs back to builder mode. https://t.co/TE6YIwKvWC

Shifting structures in a software world dominated by AI. Some first-order reflections (TL;DR at the end):

Reducing software supply chains, the return of software monoliths – When rewriting code and understanding large foreign codebases becomes cheap, the incentive to rely on deep dependency trees collapses. Writing from scratch ¹ or extracting the relevant parts from another library is far easier when you can simply ask a code agent to handle it, rather than spending countless nights diving into an unfamiliar codebase. The reasons to reduce dependencies are compelling: a smaller attack surface for supply chain threats, smaller packaged software, improved performance, and faster boot times. By leveraging the tireless stamina of LLMs, the dream of coding an entire app from bare-metal considerations all the way up is becoming realistic.

End of the Lindy effect – The Lindy effect holds that things which have been around for a long time are there for good reason and will likely continue to persist. It's related to Chesterton's fence: before removing something, you should first understand why it exists, which means removal always carries a cost. But in a world where software can be developed from first principles and understood by a tireless agent, this logic weakens. Older codebases can be explored at will; long-standing software can be replaced with far less friction. A codebase can be fully rewritten in a new language. ² Legacy software can be carefully studied and updated in situations where humans would have given up long ago. The catch: unknown unknowns remain unknown. The true extent of AI's impact will hinge on whether complete coverage of testing, edge cases, and formal verification is achievable. In an AI-dominated world, formal verification isn't optional; it's essential.

The case for strongly typed languages – Historically, programming language adoption has been driven largely by human psychology and social dynamics. A language's success depended on a mix of factors: individual considerations like being easy to learn and simple to write correctly; community effects like how active and welcoming a community was, which in turn shaped how fast its ecosystem would grow; and fundamental properties like provable correctness, formal verification, and striking the right balance between dynamic and static checks, between the freedom to write anything and the discipline of guarding against edge cases and attacks. As the human factor diminishes, these dynamics will shift. Less dependence on human psychology will favor strongly typed, formally verifiable and/or high performance languages.³ These are often harder for humans to learn, but they're far better suited to LLMs, which thrive on formal verification and reinforcement learning environments. Expect this to reshape which languages dominate.

Economic restructuring of open source – For decades, open-source communities have been built around humans finding connection through writing, learning, and using code together. In a world where most code is written (and perhaps more importantly, read) by machines, these incentives will start to break down.⁴ Communities of AIs building libraries and codebases together will likely emerge as a replacement, but such communities will lack the fundamentally human motivations that have driven open source until now. If the future of open-source development becomes largely devoid of humans, alignment of AI models won't just matter; it will be decisive.

The future of new languages – Will AI agents face the same tradeoffs we do when developing or adopting new programming languages? Expressiveness vs. simplicity, safety vs. control, performance vs. abstraction, compile time vs. runtime, explicitness vs. conciseness. It's unclear that they will. In the long term, the reasons to create a new programming language will likely diverge significantly from the human-driven motivations of the past. There may well be an optimal programming language for LLMs, and there's no reason to assume it will resemble the ones humans have converged on.

TL;DR:

- Monoliths return – cheap rewriting kills dependency trees; smaller attack surface, better performance, bare-metal becomes realistic
- Lindy effect weakens – legacy code loses its moat, but unknown unknowns persist; formal verification becomes essential
- Strongly typed languages rise – human psychology mattered for adoption; now formal verification and RL environments favor types over ergonomics
- Open source restructures – human connection drove the community; AI-written/read code breaks those incentives; alignment becomes decisive
- New languages diverge – AI may not share our tradeoffs; optimal LLM programming languages may look nothing like what humans converged on

¹ https://t.co/0gO5TUwguU
² https://t.co/oN0PnPr1dF
³ https://t.co/nWKSw0m2Ct
⁴ https://t.co/ZrH3fhzQD4

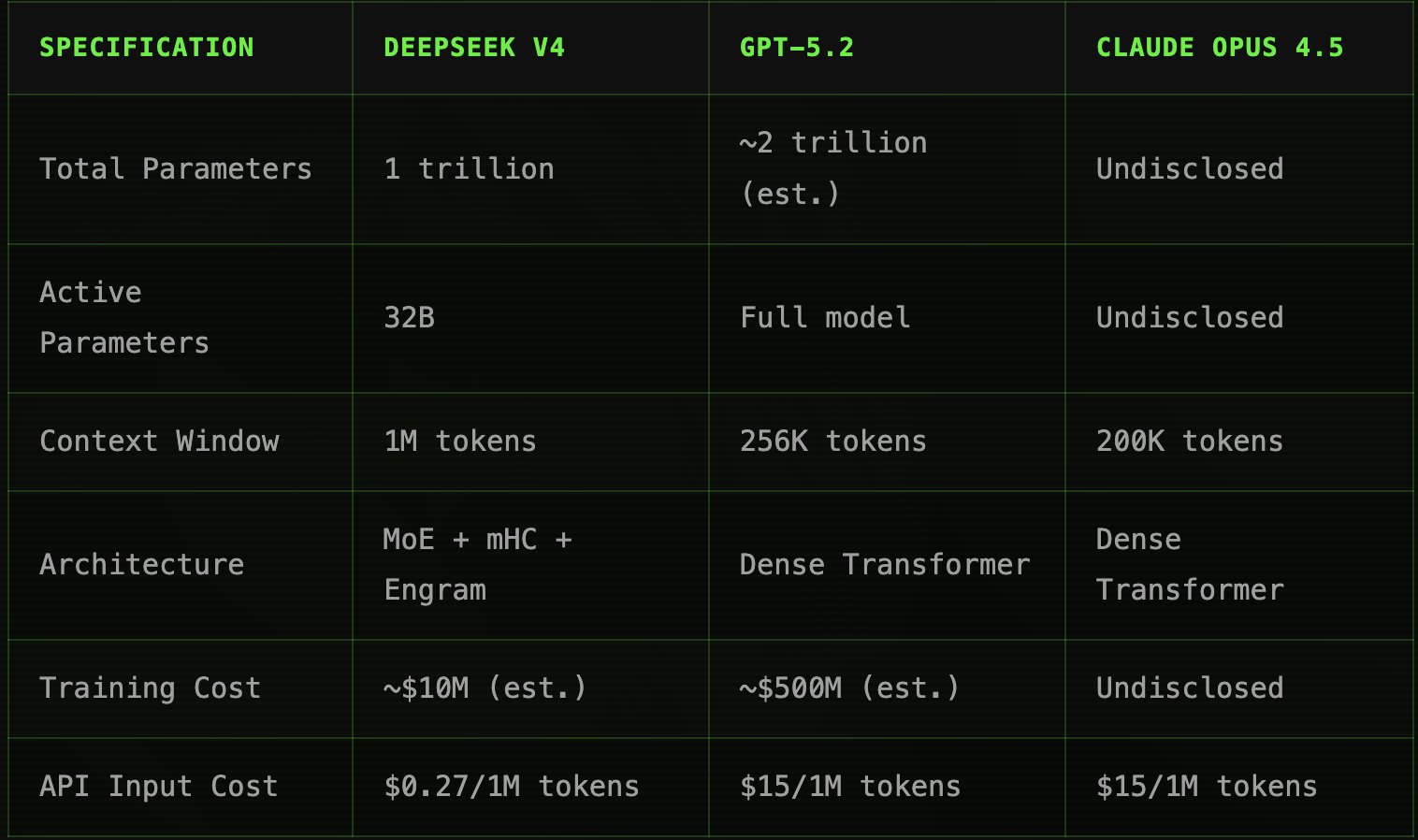

Convergence, commoditization, compression and reflexivity - the 4 horsemen of the data center buildout apocalypse. DeepSeek V4 launches mid-February 2026 1T parameters, 1M token context, 3 architectural innovations @ 10-40x lower than Western Comps $NVDA https://t.co/78IwAQx6yz

🚨BREAKING: Pentagon is now calling Claude a threat to national security >pentagon embeds claude in military systems via palantir >january: claude used in maduro extraction, people got smoked >anthropic exec calls palantir like “hey did our AI help kill people” Defense Secretary Hegseth reportedly “close” to classifying Anthropic as supply chain risk. All defense contractors must certify zero anthropic or lose contracts. CEO Amodei wants guardrails on autonomous weapons and mass surveillance of Americans. Pentagon says “all lawful purposes” or nothing: >“we will make them pay” ITS HAPPENING