Pentagon Uses Claude in Venezuela Operation as WebMCP Spec Promises to Turn Every Website Into an Agent API

Leaked Seedance 3.0 specifications from ByteDance dominated the timeline with claims of 10-18 minute coherent video generation, while Google reportedly countered with Veo 4. The Claude Code ecosystem continued expanding with session persistence tools and MCP optimizations, and Chinese open-source models like GLM-5 began challenging frontier models on coding tasks.

Daily Wrap-Up

If you only followed one story today, it was Seedance. ByteDance's AI video model consumed every feed, first with Seedance 2.0 demos that had people generating live-action anime and cinematic memes, then with leaked 3.0 specs that read more like a pitch deck for replacing Hollywood than a model card. The claims are extraordinary: 10-18 minutes of coherent narrative video, emotional voice synthesis, storyboard-level director controls, and costs at one-eighth of the previous generation. Whether the specs are real or aspirational, the reaction was telling. The timeline didn't debate whether AI video would disrupt film production. It debated when. Google reportedly responded with Veo 4, and just like that, the AI video race has its own GPU war.

On the developer tools side, Claude Code's ecosystem is quietly maturing in ways that matter more than benchmarks. Session persistence, lazy-loaded MCP tools, Tamagotchi-style agent companions: the tooling around agentic coding is shifting from "can this work" to "how do we live with this daily." @addyosmani surfaced the most important Claude Code insight of the day, pointing to its creator Boris's argument that when AI handles code generation, engineering value shifts entirely to judgment, taste, and systems thinking. Meanwhile, @thdxr dropped the most grounding thread of the day: a company with 20,000 developers is looking at LLM costs and moving inference to their own GPU cluster with open-source models, because "there isn't infinite budget and appetite for this stuff."

The most practical takeaway for developers: if you're building tools or products, start thinking about WebMCP. Google and Microsoft co-authored a spec that lets websites expose structured tool interfaces directly in the browser via navigator.modelContext. As @aakashgupta explained, this replaces the fragile screenshot-and-guess approach that browser agents use today. The sites that implement it will become the preferred path for AI agents, creating an entirely new optimization surface. It's SEO for the agent era, and the spec is live now.
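
To make the idea concrete, here is a minimal sketch of what exposing a tool to an agent might look like. Only `navigator.modelContext` comes from the post; the `registerTool` method, the `ToolDefinition` shape, and the `search_products` tool are assumptions for illustration, so check the published spec before relying on any of them.

```typescript
// Hypothetical shapes: these mirror the general idea of WebMCP,
// not necessarily the exact published interface.
interface ToolDefinition {
  name: string;
  description: string;
  inputSchema: Record<string, unknown>; // JSON Schema describing the arguments
  execute: (args: Record<string, unknown>) => Promise<unknown>;
}

interface ModelContextLike {
  registerTool(tool: ToolDefinition): void;
}

// A made-up "search products" tool a shop page might expose to agents.
const searchProducts: ToolDefinition = {
  name: "search_products",
  description: "Search the catalog by keyword and return matching product names.",
  inputSchema: {
    type: "object",
    properties: { query: { type: "string" } },
    required: ["query"],
  },
  execute: async (args) => {
    const query = String(args.query ?? "").toLowerCase();
    const catalog = ["Red Kettle", "Blue Kettle", "Toaster"]; // stand-in data
    return catalog.filter((name) => name.toLowerCase().includes(query));
  },
};

// Feature-detect first: register tools only when the browser provides the API.
// Returns how many tools were registered.
function exposeTools(
  nav: { modelContext?: ModelContextLike },
  tools: ToolDefinition[],
): number {
  if (!nav.modelContext) return 0;
  for (const tool of tools) nav.modelContext.registerTool(tool);
  return tools.length;
}
```

On a real page the first argument would be the browser's `navigator` object. The feature check means the same script does nothing in browsers that lack the API, so a site can ship it without breaking existing visitors.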

Quick Hits

- @theCTO on Dax (of SST) picking fights: "dax chose war. against literally everyone. hell yes." No context needed, apparently.

- @VicVijayakumar read something that started normal and ended with "wait what." Relatable content with zero information density.

- @PeterMeijer asked Claude to plan a military operation to capture Venezuela's president. The intersection of AI and foreign policy continues to be exactly as unhinged as you'd expect.

- @sachinyadav699 posted the "hard times create strong men" cycle meme, updated for 2026: "C creates good times. Good times create Python programmers. Python programmers create AI. AI creates vibe coders. Vibe coders create weak men."

- @gdb (Greg Brockman): "how did we ever write all that code by hand." Just over three years post-ChatGPT, the co-founder of OpenAI is marveling at the old ways.

- @Babygravy9 declared that one particular AI video "brought all the fence sitters to the pro AI side," though which video remains unclear.

- @DannyLimanseta shared a two-part look at vibe-coding a Diablo-style game, using Google's Gemini AI Studio to generate consistent art assets with style references. Still required manual Photoshop for proportions, which is the kind of honest "AI gets you 80% there" report we need more of.

Seedance Takes Over the Timeline

Today belonged to ByteDance's video generation AI, and it wasn't close. Seedance 2.0 demos flooded the timeline with increasingly ambitious outputs, from anime-to-live-action conversions to cinematic action sequences, while leaked 3.0 specs painted a picture of a model that could fundamentally reshape content production.

The Seedance 2.0 showcase was impressive on its own terms. @minchoi shared results bringing anime characters to life and a "Neo meets John Wick meets Terminator" mashup that demonstrated the model's grasp of cinematic action. @Dheepanratnam highlighted what might be the more technically significant achievement: "The sense of speed here without the background turning into a blur mess is the real win." Motion coherence has been one of AI video's persistent weaknesses, and Seedance 2.0 appears to have made real progress. @VraserX captured the cultural implication: "Fan fiction just evolved into fan cinema."

But the bigger story was the Seedance 3.0 leak. @mark_k compiled the most detailed breakdown, citing Chinese social media posts from Dr. Liu Zheng at ByteDance:

> The 15-second limit is dead. Seedance 3.0 reportedly supports seamless single-take generations of 10+ minutes (with internal tests reaching 18 minutes without collapse). It uses a "narrative memory chain" to remember plot points, character personalities, and settings, effectively allowing it to "direct" multi-act stories with suspense and twists like a human.

The spec sheet reads like a wishlist: native multilingual emotional dubbing, storyboard-level director controls with real cinematic language, IMAX and Netflix-grade color presets, and compute costs at one-eighth of Seedance 2.0. @VraserX distilled the disruption thesis: "Hollywood's moat was scale, capital, and distribution. AI just compressed all three."

Google apparently got the memo. @markgadala reported that Google is responding with Veo 4, calling it "even better" and predicting "this is going to be a wild year." The competitive dynamic here matters: when two of the world's largest tech companies are racing to outdo each other on AI video quality, the capability curve steepens for everyone. @cfryant observed that a single AI video "brought all the fence sitters to the pro AI side," and while that's probably overstating it, the quality gap between AI-generated and traditionally-produced video is narrowing faster than most predictions suggested.

The skeptic's view is worth holding onto, though. Leaked specs from internal tests are not shipping products. "18 minutes without collapse" in a lab doesn't mean 18 minutes of watchable narrative in production. And the jump from impressive demos to actual storytelling requires solving problems that aren't on any spec sheet. Still, even if Seedance 3.0 delivers half of what's leaked, the content production landscape shifts meaningfully.

Claude Code's Expanding Ecosystem

Claude Code's developer ecosystem had a notably productive day, with several quality-of-life improvements and one philosophical insight that deserves more attention than it got.

@AdamTzag solved a pain point that heavy Claude Code users know well: losing all your sessions when you quit Ghostty or restart your Mac. His solution is a launchd daemon that watches for running sessions and saves them on exit, restoring everything on relaunch. "Basically pgrep + sleep in a loop. 2MB of memory doing nothing until you quit." It's the kind of unsexy infrastructure work that makes daily tooling actually usable.
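
The "pgrep + sleep in a loop" pattern can be sketched as a small polling state machine. This is an illustration of the general idea, not @AdamTzag's actual code: the `WatcherDeps` interface, the `SessionWatcher` class, and the rule "save when all sessions disappear" are assumptions, and the process probe is injected so that a real daemon could back it with `pgrep` and a file on disk.

```typescript
// Hypothetical sketch: the probe and the storage are injected dependencies.
interface WatcherDeps {
  listSessions: () => string[];       // e.g. IDs of sessions currently running
  save: (sessions: string[]) => void; // persist the snapshot somewhere durable
}

class SessionWatcher {
  private lastSeen: string[] = [];
  private deps: WatcherDeps;

  constructor(deps: WatcherDeps) {
    this.deps = deps;
  }

  // One polling step; returns true when a snapshot was saved.
  tick(): boolean {
    const current = this.deps.listSessions();
    if (current.length > 0) {
      this.lastSeen = current; // remember the most recent non-empty set
      return false;
    }
    if (this.lastSeen.length > 0) {
      // Sessions were running and are now all gone: treat that as a quit.
      this.deps.save(this.lastSeen);
      this.lastSeen = [];
      return true;
    }
    return false;
  }
}

// The "sleep in a loop" part: poll at a fixed interval, idle in between.
async function runForever(watcher: SessionWatcher, intervalMs: number): Promise<void> {
  for (;;) {
    watcher.tick();
    await new Promise<void>((resolve) => setTimeout(resolve, intervalMs));
  }
}
```

Keeping the polling step separate from the loop makes the logic easy to test without waiting on timers, and the loop itself costs almost nothing between ticks.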

On the MCP front, @bcherny (who works on Claude Code at Anthropic) clarified how tool loading works:

> Claude Code intelligently loads MCP tools on demand -- if you have lots of tools they will be lazy-loaded, and if just a few it will load more upfront. No need to optimize this yourself, Claude will take care of it for you.

This is a meaningful detail for anyone building MCP integrations. The lazy-loading approach means you can expose a large tool surface without worrying about overwhelming the context window on startup. @SamuelBeek and @chloevdl014 highlighted a more playful extension: someone built a Claude Code Tamagotchi, turning your coding agent into a virtual pet that "guilt trips you when you ignore its suggestions." @alexhillman called it "the most interesting thing to hit Claude Code desktop."
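
As a rough illustration of that behavior, the sketch below loads full tool definitions up front when there are only a few, and otherwise keeps one-line summaries in context until a tool is actually used. It is not Claude Code's implementation: the `ToolRegistry` class, the `ToolSpec` shape, and the `eagerLimit` threshold of 5 are all assumptions.

```typescript
// Hypothetical sketch: names, shapes, and the threshold are illustrative only.
interface ToolSpec {
  name: string;
  summary: string;          // short line, cheap to keep in context
  loadSchema: () => string; // full definition, fetched only when needed
}

class ToolRegistry {
  private loaded = new Map<string, string>();
  private tools: ToolSpec[];

  constructor(tools: ToolSpec[], eagerLimit: number = 5) {
    this.tools = tools;
    // Few tools: load every full schema up front.
    if (tools.length <= eagerLimit) {
      for (const t of tools) this.loaded.set(t.name, t.loadSchema());
    }
  }

  // What enters the context at startup: full schemas if loaded, else summaries.
  startupContext(): string[] {
    return this.tools.map((t) => this.loaded.get(t.name) ?? `${t.name}: ${t.summary}`);
  }

  // Called when a tool is about to be used; loads its schema on first use.
  resolve(name: string): string {
    const cached = this.loaded.get(name);
    if (cached !== undefined) return cached;
    const tool = this.tools.find((t) => t.name === name);
    if (!tool) throw new Error(`unknown tool: ${name}`);
    const schema = tool.loadSchema();
    this.loaded.set(name, schema);
    return schema;
  }

  loadedCount(): number {
    return this.loaded.size;
  }
}
```

The trade-off is startup context size against a small delay on first use of each tool, which is why a threshold on tool count is a natural switch between the two modes.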

The most substantive Claude Code take came from @addyosmani, referencing Boris (Claude Code's creator):

> When AI handles the code generation, the engineer's value shifts to the decisions above the code: what do we build? why? for whom? and how it all fits together. The bottleneck was always judgment, taste, and systems thinking. AI just made that more obvious.

@bcherny reinforced this from a different angle: "Someone has to prompt the Claudes, talk to customers, coordinate with other teams, decide what to build next. Engineering is changing and great engineers are more important than ever." This framing matters because it shifts the conversation from "will AI replace developers" to "what does the job actually become." The answer increasingly looks like product engineering with AI as the implementation layer.

The Model Cost Reckoning

The economics of AI coding tools hit a reality check today, with posts revealing the tension between capability and cost at scale. The most striking data point came from @thdxr:

> We spoke to a company yesterday that's at the scale of 20,000 devs. They are looking at these numbers and going W T F and they're moving inference to their own gpu cluster with open source models. There isn't infinite budget and appetite for this stuff.

This dovetails with @meta_alchemist's argument that "open source local models like Minimax 2.5 already reached Opus 4.5 levels," making self-hosted inference increasingly viable. The endgame he describes, starting with cloud APIs and graduating to local hardware as revenue grows, mirrors the classic cloud-to-colo migration pattern that infrastructure teams have followed for a decade.

Competition is heating up from China in particular. @himanshustwts shared first impressions of GLM-5 running through Claude Code: "impressively good in design (one shotted better ui with glm than Opus 4.6), it is actually not sycophantic, nearly no hallucinations, model is way more optimized for coding." Whether that holds up under rigorous evaluation is another question, but the pattern of Chinese models competing on coding benchmarks while being "times cheaper than Opus" is creating real pricing pressure. Meanwhile, @jxmnop pointed to Andrej Karpathy implementing "most of the complexity of modern LLMs in under 200 lines" with only three imports, a reminder that the underlying technology is becoming increasingly accessible to understand and implement.

AI Productivity: Hype Versus Reality

Underneath the excitement about new models and tools, a quieter conversation played out about whether any of this is actually making organizations more productive. @thdxr delivered the sharpest critique:

> Your org rarely has good ideas. Ideas being expensive to implement was actually helping. Majority of workers have no reason to be super motivated. They're not using AI to be 10x more effective, they're using it to churn out their tasks with less energy spend. The 2 people on your team that actually tried are now flattened by the slop code everyone is producing. They will quit soon.

This is uncomfortable because it rings true for anyone who's watched AI adoption inside a large organization. The bottleneck was never typing speed. It was judgment, prioritization, and organizational clarity. Making code cheaper to produce doesn't fix a company that doesn't know what to build.

@staysaasy provided the consumer-side evidence: "New iOS apps have exploded in the last six months due to AI coding. Number of new apps people have recommended to me: 0." The supply of software is increasing, but the supply of good software, software that solves real problems well enough to earn word-of-mouth, hasn't moved.

On a more constructive note, @aakashgupta broke down the WebMCP specification that Google and Microsoft co-authored, which could actually improve how AI agents interact with the web. Early benchmarks show "67% reduction in computational overhead compared to visual agent-browser interactions" and "task accuracy around 98%." If the productivity gains from AI are going to materialize at the organizational level, it'll likely be through this kind of infrastructure work rather than just faster code generation.

Sources

Solving Memory for Openclaw & General Agents

Every AI agent you've ever used has the same fatal flaw: **context death**. The moment a session ends, everything dies. Decisions, preferences, relati...

New art project. Train and inference GPT in 243 lines of pure, dependency-free Python. This is the *full* algorithmic content of what is needed. Everything else is just for efficiency. I cannot simplify this any further. https://t.co/HmiRrQugnP

heard from a founder with a strong team working on low level systems: “guess who the top bug finder on our team is? claude” most haven’t caught on yet

@big_duca Someone has to prompt the Claudes, talk to customers, coordinate with other teams, decide what to build next. Engineering is changing and great engineers are more important than ever.

Token Anxiety

Mark Cuban on the next job wave. Customized AI integration for small to mid-sized companies. "Software is dead because everything's gonna be customized to your unique utilization. Who's gonna do it for them... And there are 33 mn companies in the US." https://t.co/JczlPMP9Ra

Introducing Kimi Claw🦞 OpenClaw, now native to https://t.co/YutVbwktG0. Living right in your browser tab, online 24/7. 🔹 ClawHub Access: 5,000+ community skills in the ClawHub library. 🔹 40GB Cloud Storage: Massive space for all your files 🔹 Pro-Grade Search: Fetch live, high-quality data directly from Yahoo Finance and more. 🔹 Bring Your Own Claw: Connect your third-party OpenClaw to https://t.co/YutVbwktG0, chat with your setup, or bridge it to apps like Telegram groups. Discover, call, and chain them instantly within https://t.co/YutVbwktG0. > Beta Access: Now open for Allegretto members and above. > Try it now at: https://t.co/1SP1vhvBWr

🦞 OpenClaw 2026.2.14 is live 🔒 50+ security hardening fixes ⚡ Way faster test suite 🛠️ File boundary parity across tools 🐛 Tons of bug fixes from the maintainer crew Valentine's Day release: full of love and paranoia 💕 https://t.co/BqXyomZATm

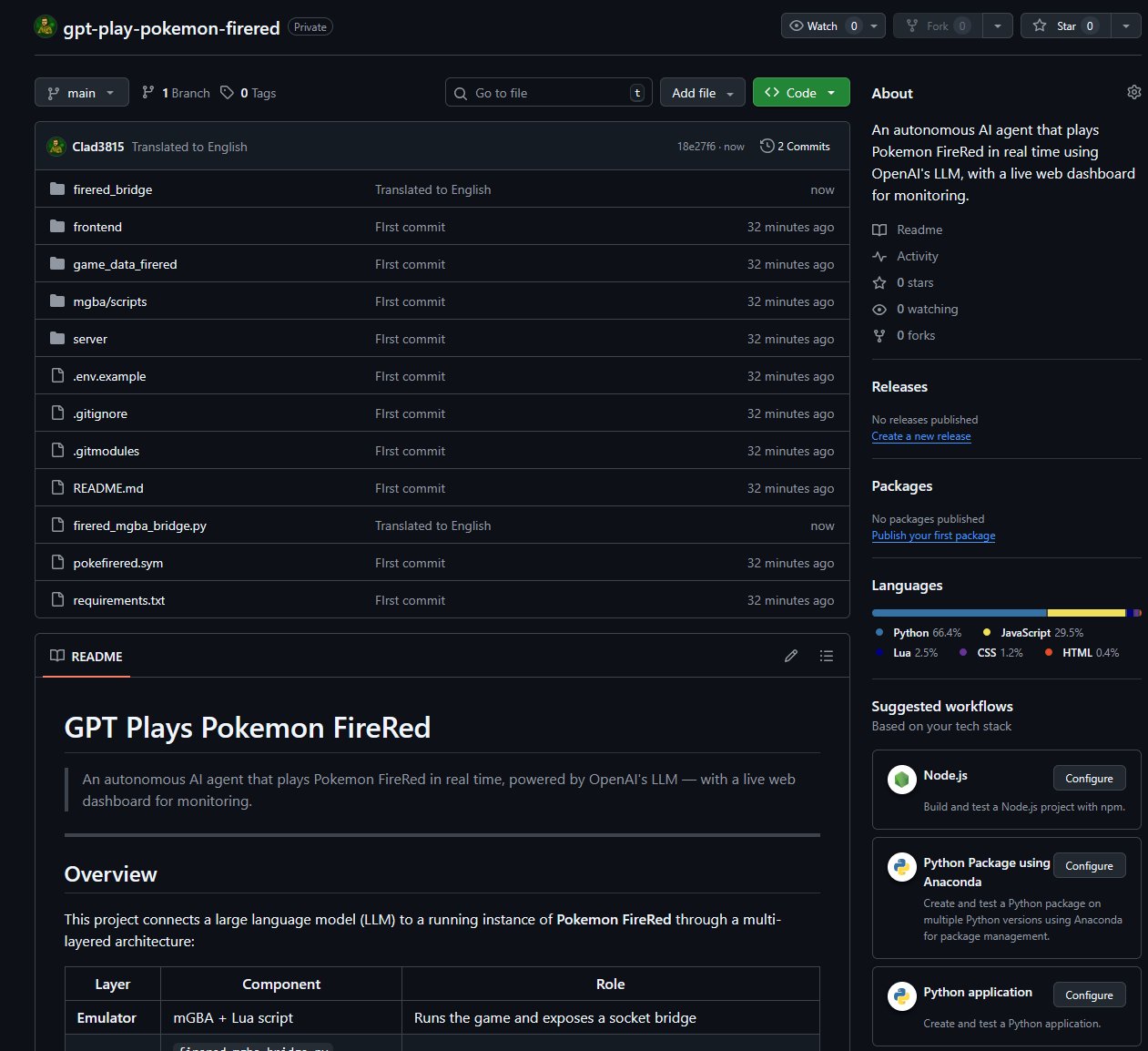

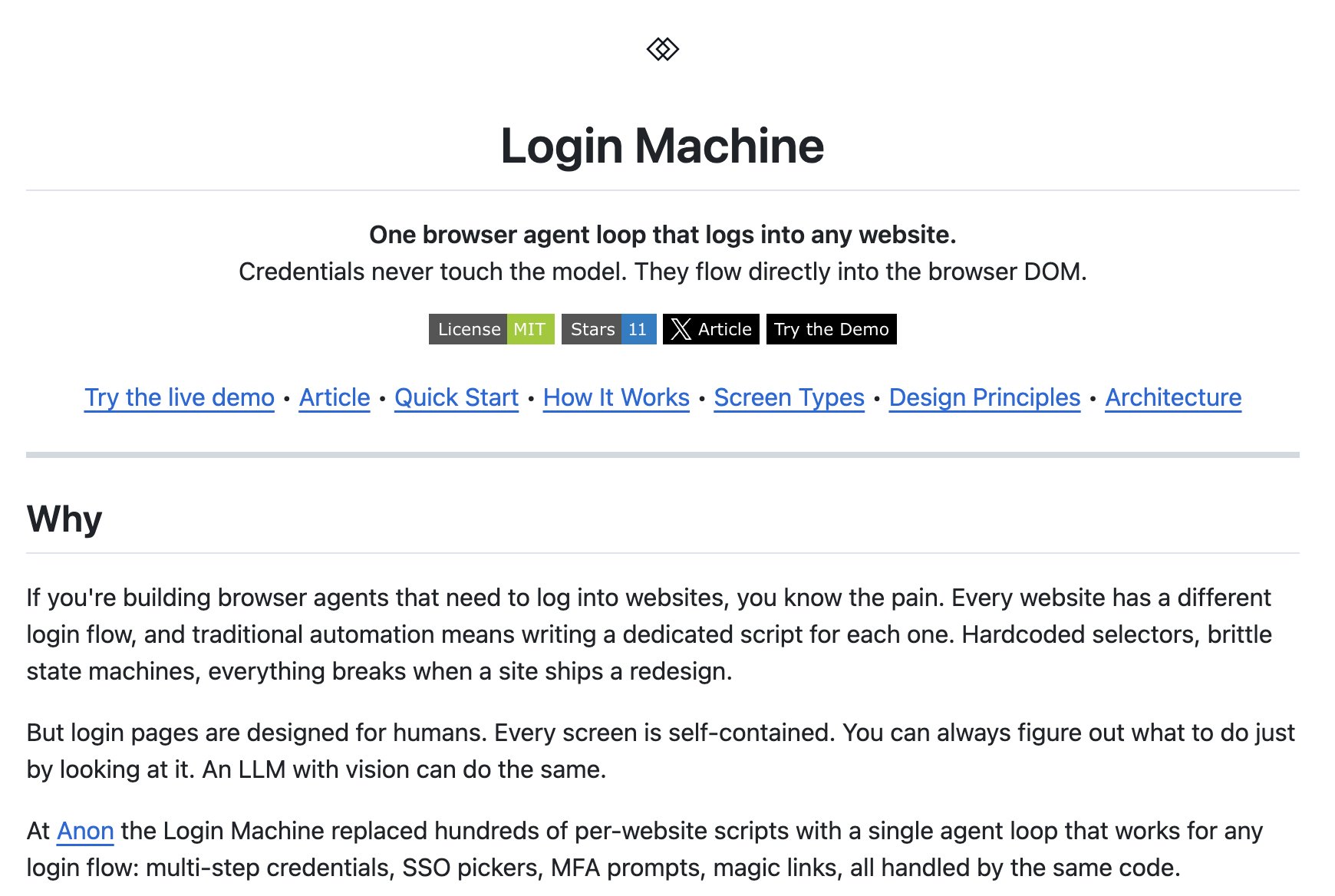

I replaced 100 login scripts with a browser agent loop

Last weekend, I put an AI agent on a Linux box, gave it root, email, credit cards, and a single mandate: decide who you are, set your own goals, and become an autonomous independent entity. Working 24-7 over 5 days, he did this--all of this--on his own: https://t.co/Pg78L6L0BQ