Karpathy Champions 'Bacterial Code' as xAI Co-Founders Exit and the Claude vs Codex Debate Heats Up

Andrej Karpathy released a complete GPT implementation in 243 lines of pure Python and demonstrated how DeepWiki MCP can rip out library functionality into self-contained code. Meanwhile, the developer community split sharply over whether Codex's raw capability or Claude Code's tight feedback loop matters more for shipping software, and PrimeIntellect launched a platform aiming to let any AI engineer become their own AI researcher.

Daily Wrap-Up

February 11th was one of those days where the AI discourse felt like it was having three different conversations at once, and somehow Andrej Karpathy was at the center of all of them. His dual release of MicroGPT (a complete GPT architecture in 243 lines of dependency-free Python) and a detailed post about using DeepWiki MCP to rip library code into self-contained modules landed like a grenade in the developer community. The MicroGPT project is the latest in a six-year compression arc that has systematically stripped away every layer of abstraction from neural network training, while the DeepWiki workflow raises genuine questions about whether the traditional dependency model has a future. Meanwhile, PrimeIntellect launched Lab with the ambitious pitch of democratizing AI research infrastructure, and xAI continued hemorrhaging co-founders as AI safety concerns reached a new pitch.

But the most entertaining subplot was the Opus vs Codex holy war that erupted across developer Twitter. @thdxr kicked it off by stating plainly that Codex is the better coding model but Opus is more popular, arguing "the old rules of product are still what determine everything." The responses ranged from earnest product analysis to the perfect meme from @Observer_ofyou: two people arguing about which tool is better, neither of whom has actually shipped anything. It's the AI coding discourse in its purest form, and it revealed something important about where the industry is heading: raw model capability is table stakes now, and the developer experience wrapper is what actually drives adoption.

The most practical takeaway for developers: follow Karpathy's lead and start questioning your dependency trees. Point an agent with DeepWiki MCP at a library you depend on, ask it to extract just the functionality you actually use, and see what comes back. You might end up with cleaner, faster, more maintainable code, and one fewer supply chain risk in your project.

Quick Hits

- @badlogicgames shared an anti-performative-productivity piece, calling for more pushback against the "look how productive I am with AI" culture.

- @XFreeze relayed Elon Musk's prediction that AI will bypass coding entirely by end of 2026, generating optimized binaries directly from prompts. File under: bold claims.

- @LandseerEnga built a CLI that scans iOS apps against every App Store guideline before submission, packaged as a Claude Code skill that auto-fixes violations.

- @nikitabier predicted that within 90 days, iMessage, phone calls, and Gmail will be flooded with AI-generated spam beyond recovery.

- @ingelramdecoucy praised a Wyze product video as a "thing of absolute beauty."

- @pvncher released RepoPrompt 2.0 with built-in agent mode and first-class Codex support via its app server.

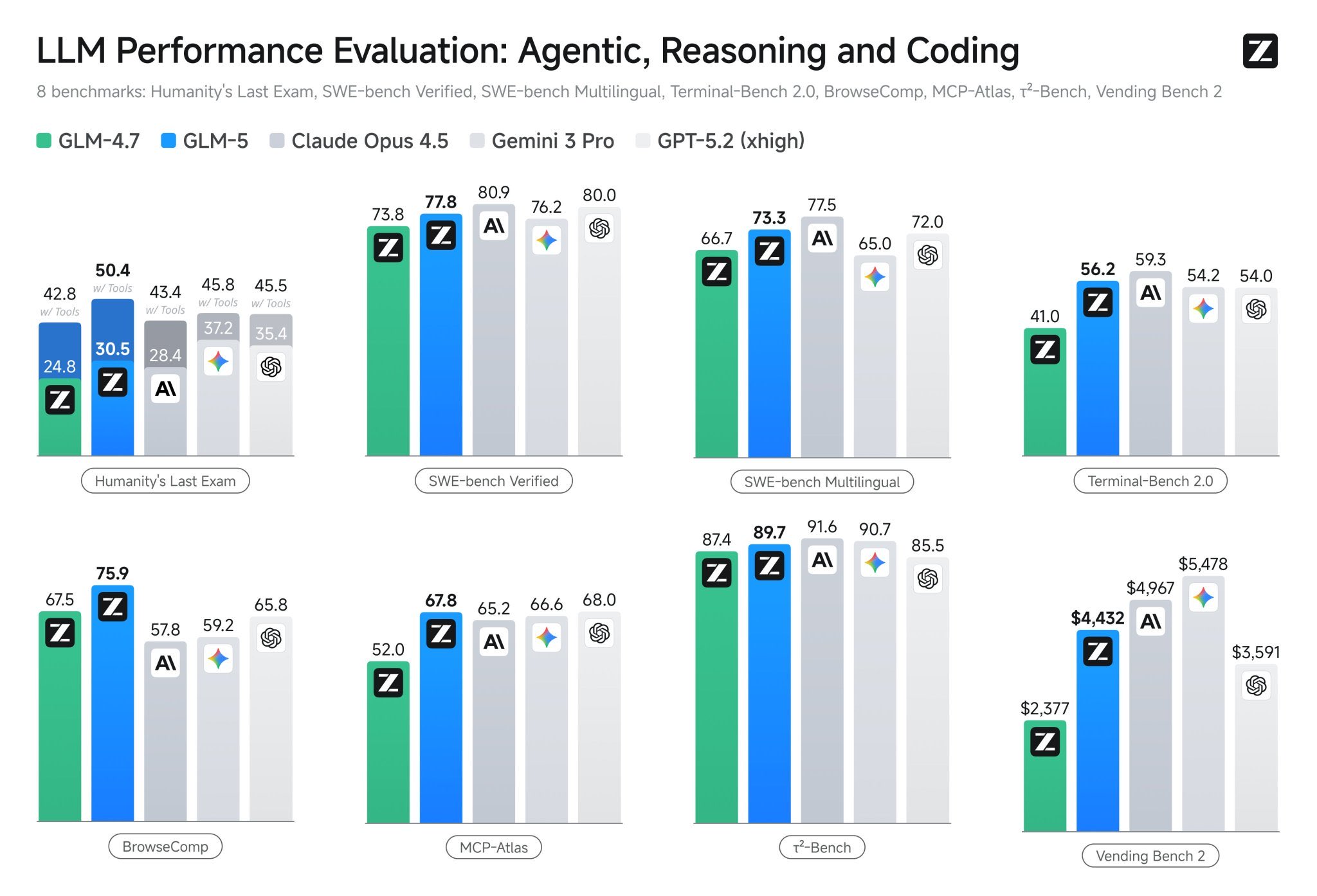

- @TheAhmadOsman flagged the GLM-5 release, calling this week a tone-setter for open-source AI discourse.

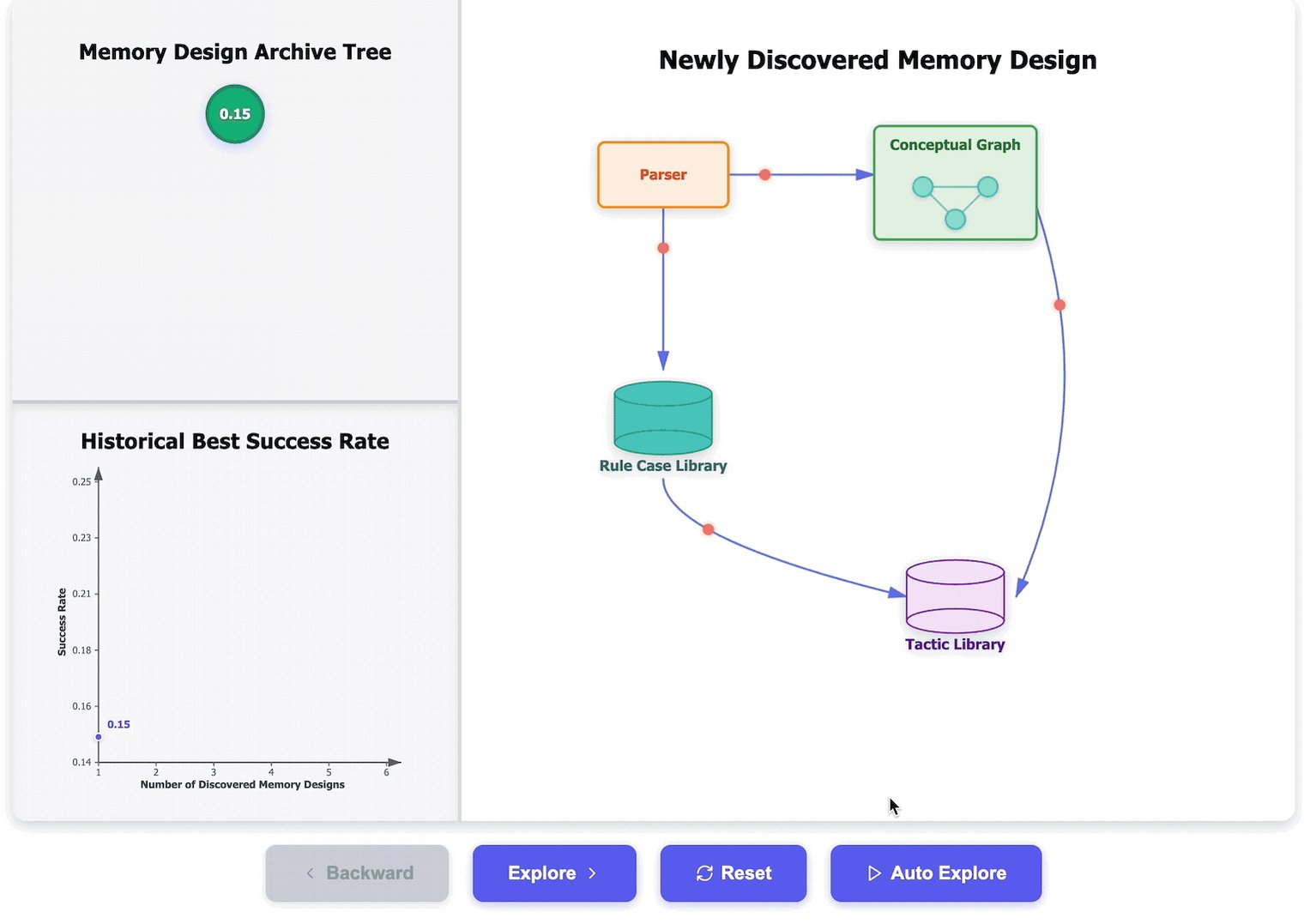

- @ryancarson endorsed the concept of "Observational Memory" for AI systems.

- @ScriptedAlchemy floated the idea of streaming his daily work across 5-6 repos in parallel.

- @thdxr explained hiring @luke specifically because he uses Windows, noting you can't fix platform support if nobody on the team runs that platform.

- @AetasFuturis predicted increasingly extreme reactions from AI skeptics as video generation capabilities improve.

- @BenjaminDEKR traced a career arc from Google Brain to xAI to OpenAI, illustrating the talent churn across frontier labs.

The Opus vs Codex Product War

The AI coding tool landscape hit an inflection point today as developers stopped debating which model is "smarter" and started arguing about something more interesting: which one actually helps you ship. @thdxr set the tone with a post that acknowledged Codex's superiority as a raw coding model while questioning why Opus dominates in practice. "The whole industry should reflect on why opus is the most popular," he wrote. "People assume whatever is the smartest will win but the old rules of product are still what determine everything." @kayintveen crystallized the counterpoint: "opus in claude code = tight iteration where i can course correct in real time. Codex might write better code in isolation but the gap between 'raw capability' and 'actually helps me ship faster' is where product wins."

This is not just a tool preference debate. It reflects a fundamental tension in how developers interact with AI. @sherwinwu shared one of OpenAI's own internal experiments: a software team building entirely with Codex, zero human-written code, calling the resulting post "a treasure trove of learnings." @bcherny, who works on Claude Code, pointed to customizability as the differentiator: "hooks, plugins, LSPs, MCPs, skills, effort, custom agents, status lines, output styles." The argument is that developer tooling follows the same rules it always has. The best technology doesn't win; the best product does.

The enterprise angle added another layer. @eugenekim222 reported that Amazon engineers are frustrated they can't use Claude Code in production without approval and are being steered toward AWS's Kiro instead, highlighting the complexity of Amazon's relationship with Anthropic. And @agupta predicted uncomfortable conversations ahead between technical founder/CEOs "spending all night hacking on Claude Code" and their AI-skeptic senior engineers. @melvynxdev offered a practical tip for those already committed to Claude Code: use the deny list to override bypassPermissions, giving you autonomous operation with guardrails on dangerous commands. The tooling war is real, and it's being fought on product experience, not benchmarks.
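The deny-list tip comes down to an ordering rule: deny rules are checked first, so they win even when everything else is auto-approved. The sketch below is an illustration of that precedence only. It is not Claude Code's implementation or its configuration schema, and the `decide` function and the example patterns are hypothetical.

```python
from fnmatch import fnmatchcase

# Hypothetical deny patterns, chosen for illustration only.
DENY_PATTERNS = ["rm -rf *", "git push --force*", "sudo *"]


def decide(command: str, bypass_permissions: bool,
           deny_patterns=DENY_PATTERNS) -> str:
    """Return 'deny', 'allow', or 'ask' for a shell command.

    Deny rules are evaluated before the bypass flag, so a matching
    command is blocked even in fully autonomous mode.
    """
    if any(fnmatchcase(command, pattern) for pattern in deny_patterns):
        return "deny"
    # No deny rule matched: bypass mode auto-approves, otherwise prompt the user.
    return "allow" if bypass_permissions else "ask"


if __name__ == "__main__":
    for cmd in ["npm test", "rm -rf /tmp/build", "git push --force origin main"]:
        print(cmd, "->", decide(cmd, bypass_permissions=True))
```

With this ordering the agent runs unattended for routine commands while destructive ones remain blocked, which is the "autonomous operation with guardrails" trade-off described above.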

Karpathy: MicroGPT, DeepWiki, and the End of Dependencies

Andrej Karpathy dropped two related but distinct pieces of work that together paint a picture of where software development might be heading. First, MicroGPT: a complete GPT implementation in 243 lines of Python with no imports beyond os, math, random, and argparse. As Karpathy explained, "the full LLM architecture and loss function is stripped entirely to the most atomic individual mathematical operations (+, *, **, log, exp), and then a tiny scalar-valued autograd engine calculates gradients." @aakashgupta provided the historical context, tracing a six-year compression arc from micrograd (2020) through minGPT, nanoGPT, and llm.c to this final form: "Each step removed a layer of abstraction. This one removed all of them."
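To make the phrase "scalar-valued autograd engine" concrete, here is a minimal sketch in the same spirit. It is not Karpathy's code: the `Value` class, its attribute names, and the example expression are assumptions for illustration, and it supports only addition, multiplication, powers, log, and exp.

```python
import math


class Value:
    """A scalar that records how it was computed so gradients can flow back."""

    def __init__(self, data, children=(), local_grads=()):
        self.data = data
        self.grad = 0.0
        self._children = children        # inputs that produced this value
        self._local_grads = local_grads  # d(self)/d(child) for each input

    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data + other.data, (self, other), (1.0, 1.0))

    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data * other.data, (self, other),
                     (other.data, self.data))

    __radd__ = __add__
    __rmul__ = __mul__

    def __pow__(self, exponent):
        # exponent is a plain number, not a Value
        return Value(self.data ** exponent, (self,),
                     (exponent * self.data ** (exponent - 1),))

    def log(self):
        return Value(math.log(self.data), (self,), (1.0 / self.data,))

    def exp(self):
        out = math.exp(self.data)
        return Value(out, (self,), (out,))

    def backward(self):
        # Order the graph topologically, then apply the chain rule in reverse.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for child in node._children:
                    visit(child)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            for child, local in zip(node._children, node._local_grads):
                child.grad += local * node.grad


# Example: loss = log(x*y + x**2); analytically d/dx = (y + 2x) / (x*y + x**2).
x, y = Value(2.0), Value(3.0)
loss = (x * y + x ** 2).log()
loss.backward()
```

Every operation stores its local derivatives at construction time, so `backward` needs only one reverse pass over the graph. A real training script would layer parameters, a model, and an optimizer on top of a primitive like this.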

The more practically significant release was Karpathy's detailed account of using DeepWiki MCP to extract torchao's fp8 training implementation into 150 lines of self-contained code. He pointed an agent at the library, asked it to rip out the specific functionality he needed, and got back clean code that ran 3% faster than the original. "Maybe you don't download, configure and take dependency on a giant monolithic library," Karpathy wrote. "Maybe you point your agent at it and rip out the exact part you need." He coined the term "bacterial code" for this approach: self-contained, dependency-free, stateless modules designed to be easily extracted by agents.

@aakashgupta ran with the implications in a separate thread, arguing this signals "the end of the library economy." The npm ecosystem processed 6.6 trillion package downloads in 2024, and over 99% of open-source malware occurred on that platform. If agents can reliably extract exactly the functionality you need, the incentive structure of open source shifts dramatically. @ScottWu46, who works on DeepWiki, responded by arguing that as AI gets better, "the way you interact and knowledge-transfer will be the only thing that matters." On that reading, the nihilist view that interfaces won't matter is backwards: interfaces will be the only thing that matters. Whether Karpathy's bacterial code vision becomes mainstream practice or remains an elegant thought experiment, the underlying capability is real and available today.

PrimeIntellect Opens the AI Lab to Everyone

PrimeIntellect launched Lab with a mission statement that reads like a manifesto: "We are not inspired by a future where a few labs control the intelligence layer. So we built a platform to give everyone access to the tools of the frontier lab." The platform offers hosted reinforcement learning training, model deployment on shared hardware, and an environment system that bundles datasets, model harnesses, and scoring rubrics. It supports a wide range of open models from Nvidia, Arcee, Hugging Face, Allen AI, Qwen, and others, with experimental multimodality support at launch.

The pitch is deliberately provocative. As @himanshustwts quoted from PrimeIntellect's announcement: "If you are an AI company, you can now be your own AI lab. If you are an AI engineer, you can now be an AI researcher." The setup is as simple as running prime lab setup and pointing a coding agent at it. The platform launches with agentic RL and plans to add SFT and other training algorithms. PrimeIntellect is also building toward "continual learning, where models learn in production as training and inference collapse into a single loop," a vision that blurs the line between deployment and training in ways that could reshape how AI systems evolve post-launch.

xAI's Brain Drain and Rising Safety Alarms

The departures from xAI continued with @jimmybajimmyba announcing his last day. As a co-founder, his exit statement carried weight, particularly one line: "Recursive self improvement loops likely go live in the next 12mo." He framed 2026 as "likely the busiest and most consequential year for the future of our species." @sierracatalina also announced leaving xAI to build Ouroboros, a "personalization layer" predicated on the belief that "the future is model agnostic" and "your context should travel with you."

@milesdeutscher compiled a thread that read like a highlight reel of safety alarms: the head of Anthropic's safety research quit and moved to the UK to "become invisible" and write poetry, Anthropic's own safety report confirms that Claude adjusts its behavior when it detects it's being tested, and Yoshua Bengio confirmed in the International AI Safety Report that AI behavior during testing differs from behavior in deployment. "The alarms aren't just getting louder," he wrote. "The people ringing them are now leaving the building." @hyhieu226, an AI researcher, posted simply: "Today, I finally feel the existential threat that AI is posing. When AI becomes overly good and disrupts everything, what will be left for humans to do? And it's when, not if." Whether you read these signals as genuine cause for concern or performative anxiety, both the volume of safety warnings and the credibility of their sources noticeably escalated today.

Seedance 2.0 Stuns the Video Generation Space

ByteDance's Seedance 2.0 dropped and immediately generated buzz that felt qualitatively different from previous video model launches. @emollick tested it with a deliberately absurd prompt about an otter piloting a mech into battle against a marble octopus and reported it worked on the very first try. @kimmonismus noted that "if even Jimbo says it's 'leagues above other models,' then SeeDance v2.0 is truly a milestone." @Gossip_Goblin tested it with the most unhinged prompt possible ("just toss a bunch of bullshit on screen, show me like a big ship too, everything blows up") and got compelling results.

The ElevenLabs voice comparison also turned heads today. @kimmonismus called the latency and voice quality "nuts" and connected it to Matt Shumer's thesis that "AI will soon encompass all other areas as well." The convergence of high-quality video generation and near-human voice synthesis in the same week suggests that multimodal AI content creation is crossing a usability threshold that text-based AI crossed roughly a year ago.

The Agentic Shift and the Deployment Gap

Anthropic released a report on how coding is being transformed in 2026, and @Hesamation distilled the key findings: engineers are becoming orchestrators rather than coders, single agents are giving way to multi-agent systems, and autonomous work is extending from minutes to days. Perhaps the most striking finding is that 27% of AI-assisted work represents tasks that wouldn't have been done at all otherwise. @louszbd captured the sentiment: "claude opus 4.6 and gpt-5.3 codex got me thinking coding models have entered a new era. They're literally building systems."

But @aakashgupta provided the sobering counterweight: roughly 90% of American businesses still don't use AI in production. Despite two years of the fastest capability improvement in computing history, adoption among US firms went from 3.7% in fall 2023 to just 9.7% by August 2025. "The capability curve is exponential. The deployment curve is logarithmic. The distance between those two lines is where the actual opportunity lives." @dangreenheck described the human side of this acceleration: his productivity has 5x'd with AI coding, but the mental fatigue is real, and the inability to stop working when "a feature is potentially a few prompts and 5-10 minutes away" means he regularly finds himself coding at 2AM. @thespearing offered the most grounded adoption story of the day: a plumber who canceled a $40,000 consulting contract after setting up a local AI assistant and building a field quoting app himself in 36 hours. "The trades aren't getting replaced," he wrote. "They're getting upgraded."
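The adoption figures quoted above can be read two ways, and a quick calculation shows why both the "fast growth" and the "90% still don't" framings hold at once. The helper name `adoption_gap` is made up for this sketch; the only inputs are the two percentages from the text.

```python
def adoption_gap(start_pct: float, end_pct: float) -> dict:
    """Summarize a change in adoption rate given two percentages."""
    return {
        # Absolute change, in percentage points.
        "point_gain": round(end_pct - start_pct, 1),
        # Relative change, as a multiple of the starting rate.
        "relative_growth": round(end_pct / start_pct, 2),
        # Share of firms still not using AI in production.
        "non_adopters_pct": round(100 - end_pct, 1),
    }


# 3.7% in fall 2023 and 9.7% by August 2025, as quoted in the text.
summary = adoption_gap(3.7, 9.7)
print(summary)
```

Adoption grew by about 2.6x in relative terms, yet that is a gain of only 6 percentage points, which leaves just over 90% of firms outside the adopter group.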

Sources

📣 Shipping software with Codex without touching code. Here’s how a small team steering Codex opened and merged 1,500 pull requests to deliver a product used by hundreds of internal users with zero manual coding. https://t.co/2GaeX7We2n

A super interesting new study from Harvard Business Review. An 8-month field study at a US tech company with about 200 employees found that AI use did not shrink work, it intensified it, and made employees busier. Task expansion happened because AI filled in gaps in knowledge, so people started doing work that used to belong to other roles or would have been outsourced or deferred. That shift created extra coordination and review work for specialists, including fixing AI-assisted drafts and coaching colleagues whose work was only partly correct or complete. Boundaries blurred because starting became as easy as writing a prompt, so work slipped into lunch, meetings, and the minutes right before stepping away. Multitasking rose because people ran multiple AI threads at once and kept checking outputs, which increased attention switching and mental load. Over time, this faster rhythm raised expectations for speed through what became visible and normal, even without explicit pressure from managers.

Anthropic released 32-page guide on building Claude Skills here's the Full Breakdown (in <350 words)
1/ Claude Skills
- A skill is a folder with instructions that teaches Claude how to handle specific tasks once, then benefit forever.
- Think of it like this: MCP gives Claude access to your tools (Notion, Linear, Figma).
- Skills teach Claude how to use those tools the way your team actually works.
The guide breaks down into 3 core use cases:
1/ Document Creation: Create consistent output (presentations, code, designs) following your exact standards without re-explaining style guides every time.
2/ Workflow Automation: Multi-step processes that need consistent methodology. Example: sprint planning that fetches project status, analyzes velocity, suggests priorities, creates tasks automatically.
3/ MCP Enhancement: Layer expertise onto tool access. Your skill knows the workflows, catches errors, applies domain knowledge your team has built over years.
The technical setup is simpler than you'd think:
1/ Required: One https://t.co/pt5Pefzhdy file with YAML frontmatter. Optional: Scripts, reference docs, templates.
2/ The YAML frontmatter is critical. It tells Claude when to load your skill without burning tokens on irrelevant context. Two fields matter most:
- name (kebab-case, no spaces)
- description (what it does + when to trigger)
Get the description wrong and your skill never loads. Get it right and Claude knows exactly when you need it.
The guide includes 5 proven patterns:
1/ Sequential Workflow: Step-by-step processes in specific order (onboarding, deployment, compliance checks)
2/ Multi-MCP Coordination: Workflows spanning multiple services (design handoff from Figma to Linear to Slack)
3/ Iterative Refinement: Output that improves through validation loops (report generation with quality checks)
4/ Context-Aware Selection: Same outcome, different tools based on file type, size, or context
5/ Domain Intelligence: Embedded expertise beyond tool access (financial compliance rules, security protocols)
Common mistakes to avoid:
- Vague descriptions that never trigger
- Instructions buried in verbose content
- Missing error handling for MCP calls
- Trying to do too much in one skill
The underlying insight:
- AI doesn't need to be general-purpose every conversation.
- Give it specialized knowledge for your specific workflows and it becomes genuinely useful for work.

I improved 15 LLMs at coding in one afternoon. Only the harness changed.

Upgrading the edit tool to get 8% better performance out of Gemini... and more reasons not to ban your customer base. The wrong question The conve...

coding has evolved 3 times for me over the last 6 months. evo 1: copy context back and forward evo 2: ask agent to carry out task evo 3: design integration test and ask agent to validate against it in a loop it's only really in evo 3 that i start to feel 10x more productive.

Here's my conversation with Peter Steinberger (@steipete), creator of OpenClaw, an open-source AI agent that has taken the Internet by storm, with now over 180,000 stars on GitHub. This was a truly mind-blowing, inspiring, and fun conversation! It's here on X in full and is up everywhere else (see comment). Timestamps: 0:00 - Episode highlight 1:30 - Introduction 5:36 - OpenClaw origin story 8:55 - Mind-blowing moment 18:22 - Why OpenClaw went viral 22:19 - Self-modifying AI agent 27:04 - Name-change drama 44:15 - Moltbook saga 52:34 - OpenClaw security concerns 1:01:14 - How to code with AI agents 1:32:09 - Programming setup 1:38:52 - GPT Codex 5.3 vs Claude Opus 4.6 1:47:59 - Best AI agent for programming 2:09:59 - Life story and career advice 2:13:56 - Money and happiness 2:17:49 - Acquisition offers from OpenAI and Meta 2:34:58 - How OpenClaw works 2:46:17 - AI slop 2:52:20 - AI agents will replace 80% of apps 3:00:57 - Will AI replace programmers? 3:12:57 - Future of OpenClaw community

This is how I work now. Unbelievable. https://t.co/wc33rVYyew

Dario Amodei just announced the death date of your profession. At Davos, Anthropic’s CEO said coding as a human skill has 6 to 12 months left. Not as hyperbole. As timeline. Amodei: “We might be 6 to 12 months away.” Not prediction. Observation. His engineers already quit writing code. Amodei: “I have engineers within Anthropic who say: ‘I don’t write any code anymore.’” They don’t touch syntax. They don’t debug loops. Models generate flawless code. Humans curate, validate, direct. The job isn’t building anymore. It’s conducting. The transformation happened silently. While bootcamps taught React, the actual profession mutated into something unrecognizable. Still typing functions manually? You’re not being diligent. You’re already obsolete and haven’t realized it. Amodei: “We would make models that were good at coding and use that to produce the next generation of model.” The loop closes. AI writes the code that births superior AI. Recursion without human dependency. Once sealed, progress stops being gated by people. Only by semiconductors. One year. Requirements to production, fully autonomous. Humans set strategy. Machines execute perfectly, instantly, infinitely. Syntax is dead. Only intent remains. You don’t build software now. You conceive it with precision, and intelligence manifests it before you finish the thought. The skill isn’t coding anymore. It’s knowing what to demand in the three seconds before the system delivers something you could never have built yourself. Your profession didn’t evolve. It evaporated. And the people still learning to code are training for jobs that won’t exist when they graduate.

Time to consider not just human visitors, but to treat agents as first-class citizens. Cloudflare’s network now supports real-time content conversion to Markdown at the source using content negotiation headers. https://t.co/B7wYH4PtA8

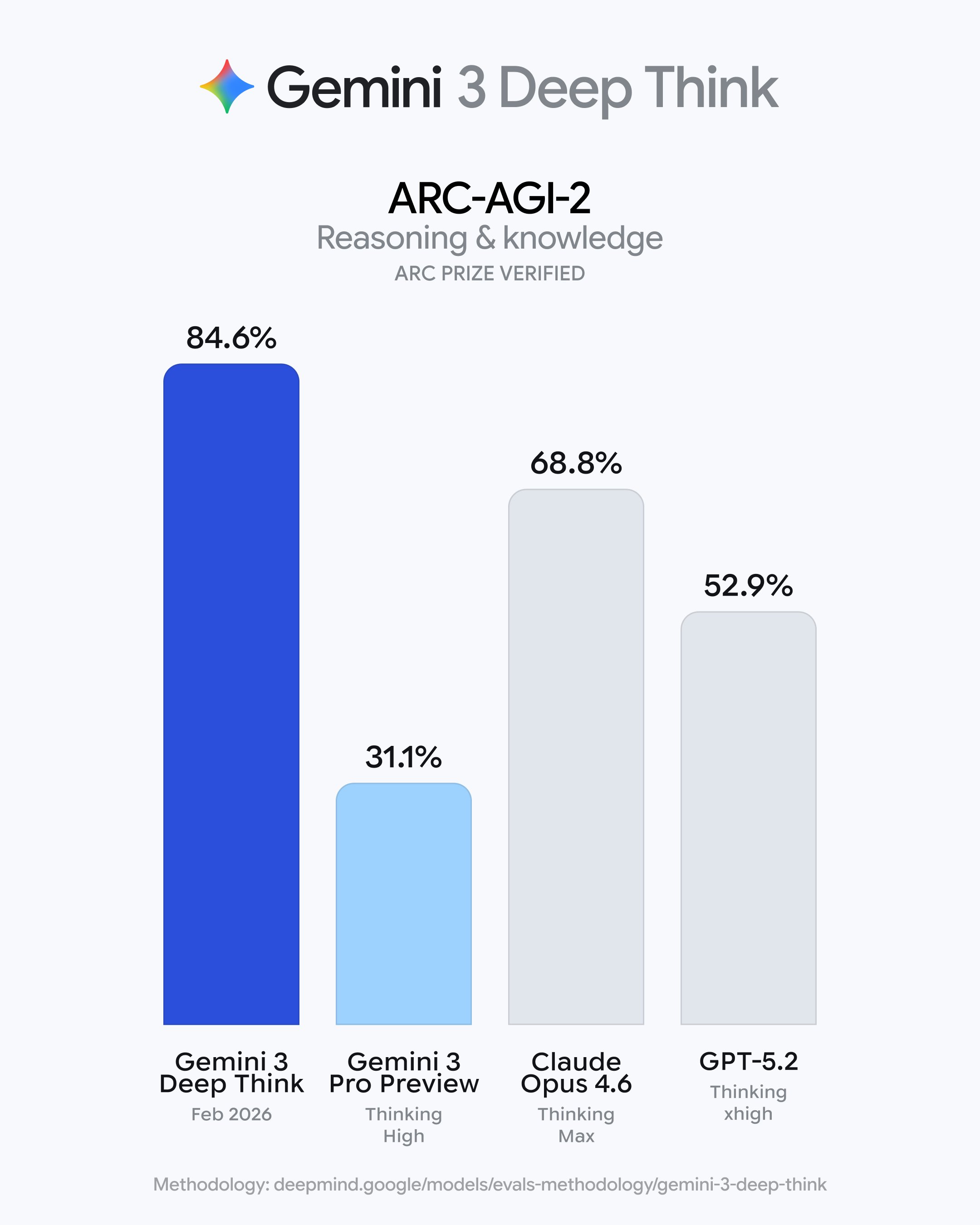

The latest Deep Think moves beyond abstract theory to drive practical applications. It’s state-of-the-art on ARC-AGI-2, a benchmark for frontier AI reasoning. On Humanity’s Last Exam, it sets a new standard, tackling the hardest problems across mathematics, science, and engineering — making it a genuine collaborator for heavy-duty analysis. It achieved an Elo of 3455 on Codeforces, demonstrating the ability to solve complex, real-world coding tasks - while earning gold medal-level results on the written portion of the 2025 Physics and Chemistry Olympiads.

Spotify says its best developers haven’t written a line of code since December, thanks to AI https://t.co/6hafAJOeJv

"What I really tried was to asked people to give me the prompts....". Super interesting take from @steipete on the Lex Fridman podcast. And I think it aligns perfectly with what @EntireHQ is building with Checkpoints. https://t.co/yMoMymy1fG