Anthropic Launches $100K Hackathon as AI Industry Braces for 'Fast Takeoff' Discourse

The developer community is converging on multi-agent orchestration and persistent context management as the next critical infrastructure layer, with OneContext earning its creator a Google interview and one developer solving context compaction with a creative mix of cron jobs and vector search. Meanwhile, Seedance 2.0 demos out of China have the film industry reassessing its future, and the AI acceleration discourse continues to intensify.

Daily Wrap-Up

The throughline today is infrastructure for persistence. Whether it's agent memory, context windows, or orchestration layers, the people actually building with AI are converging on the same problem: how do you keep these systems coherent across sessions, tasks, and teams? @JundeMorsenWu got cold-emailed by Google's Gemini team after building OneContext, a persistent context layer for coding agents. @PerceptualPeak spent a grueling day solving context transfer across compaction boundaries in their Clawdbot system. @ryancarson laid out the thesis plainly: nobody wants a single agent anymore; they want one agent that runs teams of agents. The tooling layer between "single prompt" and "autonomous workforce" is where the real engineering is happening right now, and it's still wide open.

On the creative side, Seedance 2.0 out of China is generating the kind of reaction that Sora got a year ago, but with capabilities that feel a generation ahead. @EHuanglu described features that sound almost too aggressive: upload screenshots from any movie and generate new scenes that match the original's look, or upload clips and swap characters, add VFX, change backgrounds on the fly. Chinese indie filmmakers are already producing fully AI-generated movies with it. The "Will Smith eating spaghetti" benchmark that @SpecialSitsNews referenced feels quaint now. The gap between "impressive demo" and "production tool" is collapsing faster than anyone expected.

The acceleration discourse is louder than ever, with multiple voices warning that 2026 is the inflection point. But @backseats_eth offered the most grounded take of the day: ignore the AI theatre, skip the vibe coders who learned last month, and spend your time learning from experienced engineers who are updating their processes with AI. The most practical takeaway for developers: invest your learning time in persistent context management for your AI tooling. Whether it's OneContext, custom memory systems, or simple file-based approaches, the developers who solve context continuity across agent sessions will have a massive productivity edge over those still treating each AI interaction as a blank slate.

Quick Hits

- @elonmusk announced SpaceX has shifted focus to building a self-growing Moon city first, citing the 10-day launch cadence vs. Mars's 26-month alignment windows. Mars plans remain on the table, on a 5-to-7-year timeline.

- @SawyerMerritt summarized the SpaceX pivot: "The overriding priority is securing the future of civilization and the Moon is faster."

- @elonmusk also promoted the Starlink Super Bowl ad. Busy day for the timeline's most prolific poster.

- @exolabs teased what they call the future of local AI architecture: separate specialized chips for prefill and decode phases. Worth watching if you're into inference optimization.

- @thdxr announced improved traffic routing for Kimi K2.5 on Zen, calling the speed "something different." The inference provider wars continue heating up.

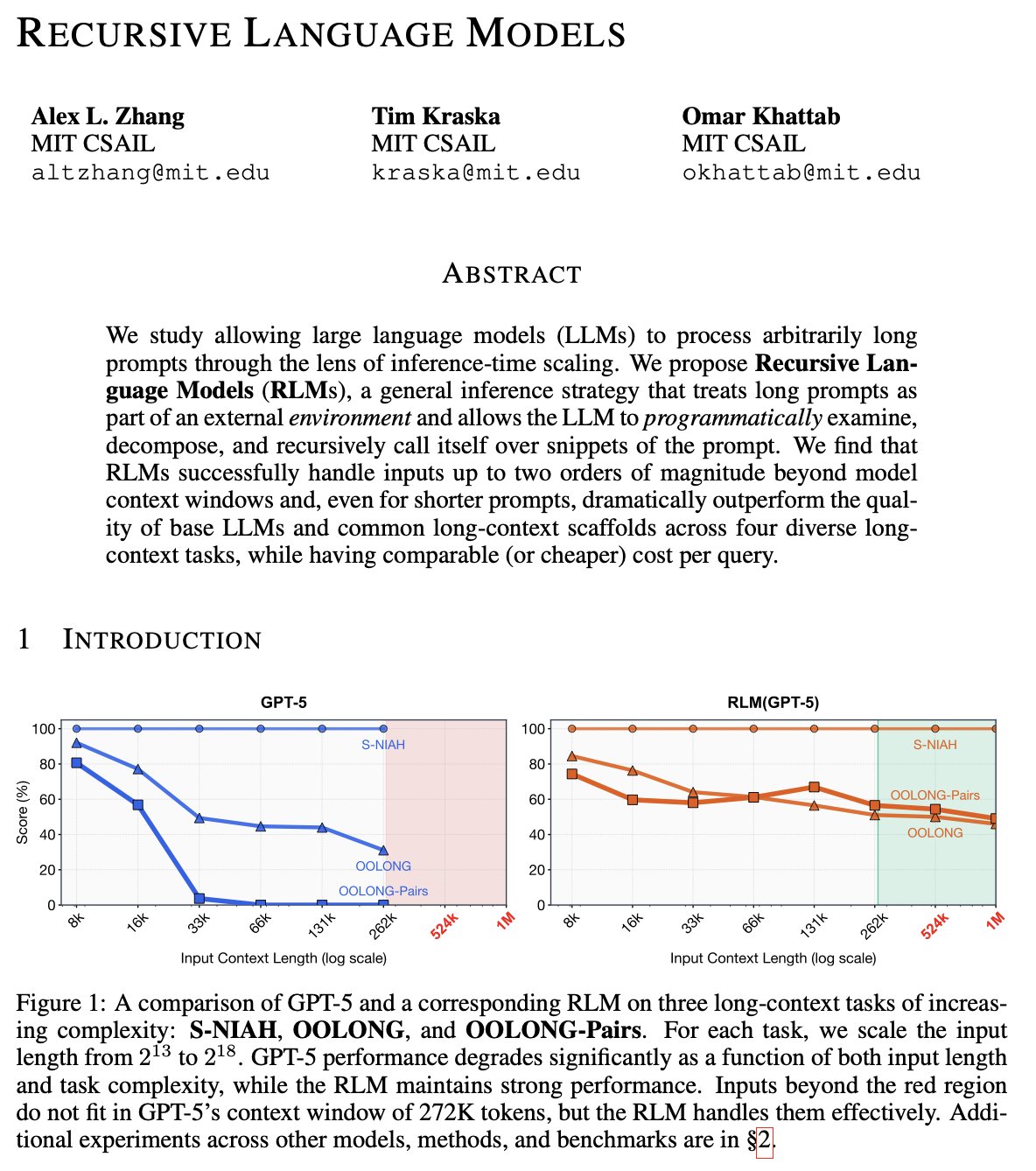

- @inductionheads dropped a brief but emphatic endorsement of RLMs (likely Recursive Language Models) as a breakthrough. No details, just "please make sure you understand this."

- @andrewmccalip revealed their app received its first acquisition offer. No details on the app or the offer, but the vibes were good.

Agent Orchestration and Persistent Context

The single biggest cluster of conversation today revolved around how to make AI agents remember things and work together. This isn't the "build an agent" hype from six months ago. The people posting about this are deep in implementation, fighting real problems around context windows, session boundaries, and multi-agent coordination.

The standout project is OneContext by @JundeMorsenWu, which @LLMJunky described as "a persistent context layer that sits above your coding agents." The pitch is straightforward: it automatically manages and syncs context across agent sessions so any new agent you spin up already knows everything about your project. The simplicity of the setup and cross-agent compatibility (Codex, Claude Code, Gemini) clearly resonated. As @LLMJunky put it:

> "There are other similar strategies surrounding agent memory, but I don't think I've seen one quite like this. It's incredibly simple to set up, works across all of your various coding agents."

The project earned @JundeMorsenWu a cold email from Google's Gemini team, a reminder that building in public still works as a career strategy.
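One way to picture a persistent context layer is a shared file that every agent session appends notes to and reads on startup. The Python sketch that follows is a hypothetical, file-based illustration of that idea, not OneContext's implementation (the posts don't describe its internals); `ContextStore`, its JSON layout, and `preamble()` are invented names.

```python
import json
import time
from pathlib import Path


class ContextStore:
    """Hypothetical shared context file that every agent session reads and appends to."""

    def __init__(self, path: Path):
        self.path = Path(path)
        if not self.path.exists():
            # Start with an empty list of notes so reads never fail.
            self.path.write_text("[]", encoding="utf-8")

    def _load(self) -> list:
        return json.loads(self.path.read_text(encoding="utf-8"))

    def add_note(self, agent: str, text: str) -> None:
        """Append one note, tagged with the agent that wrote it and a timestamp."""
        notes = self._load()
        notes.append({"agent": agent, "ts": time.time(), "text": text})
        # Write to a temp file, then rename it over the original in one step.
        tmp = self.path.with_suffix(".tmp")
        tmp.write_text(json.dumps(notes, indent=2), encoding="utf-8")
        tmp.replace(self.path)

    def preamble(self, max_notes: int = 20) -> str:
        """Render the most recent notes as text to prepend to a new session's first prompt."""
        recent = self._load()[-max_notes:]
        lines = [f"- [{n['agent']}] {n['text']}" for n in recent]
        return "Project context from earlier sessions:\n" + "\n".join(lines)


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as d:
        store = ContextStore(Path(d) / "project_context.json")
        store.add_note("claude-code", "API handlers live in src/api; tests run with pytest.")
        store.add_note("codex", "Auth now uses JWT; the old session module is unused.")
        # A fresh session (or a different agent) opens the same file and sees both notes.
        print(ContextStore(Path(d) / "project_context.json").preamble())
```

Writing to a temporary file and renaming it keeps the store intact if a session dies mid-write; a real tool shared by concurrent agents would also need file locking to avoid lost updates.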

On the more DIY end of the spectrum, @PerceptualPeak shared an exhaustive breakdown of how they solved context transfer between pre-compacted and post-compacted states in their Clawdbot system. The approach layers multiple strategies: hourly cron jobs that summarize work into a running memory file, injection of 24 hours of summaries into post-compaction context, a persistent JSONL conversation log that survives compaction, and a vector database with bi-hourly embedding of learnings from chat logs. The result, in their words:

> "Anytime I submit a prompt to Clawdbot, it will embed my prompt, find relevant memories in the vector database, and inject them alongside my prompt before it even begins processing. These additions have completely changed the game for me."
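A rough Python sketch of the two injection steps in that breakdown: pull the summaries written in the last 24 hours, then retrieve the stored memories most similar to the incoming prompt and prepend both. It assumes a toy bag-of-words `embed()` in place of a real embedding model and an in-memory list in place of a vector database; `Summary`, `Memory`, and `build_prompt` are hypothetical names, not Clawdbot's code.

```python
import math
import re
import time
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional


def embed(text: str) -> Counter:
    """Toy stand-in for a real embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


@dataclass
class Summary:
    ts: float  # Unix time when the periodic summary was written
    text: str


@dataclass
class Memory:
    text: str
    vector: Counter  # embedding computed when the memory was stored


def build_prompt(user_prompt: str, summaries: List[Summary], memories: List[Memory],
                 now: Optional[float] = None, window_s: int = 24 * 3600, k: int = 3) -> str:
    """Prepend recent summaries and the k most similar memories to the prompt."""
    now = time.time() if now is None else now
    # 1) Summaries written within the time window (24 hours by default).
    recent = [s.text for s in summaries if now - s.ts <= window_s]
    # 2) Memories ranked by similarity to the prompt; zero-overlap ones are dropped.
    query = embed(user_prompt)
    scored = sorted(((cosine(query, m.vector), m.text) for m in memories),
                    key=lambda pair: pair[0], reverse=True)
    relevant = [text for score, text in scored[:k] if score > 0]

    parts = []
    if recent:
        parts.append(f"Summaries from the last {window_s // 3600}h:\n"
                     + "\n".join(f"- {t}" for t in recent))
    if relevant:
        parts.append("Relevant memories:\n" + "\n".join(f"- {t}" for t in relevant))
    parts.append("User prompt:\n" + user_prompt)
    return "\n\n".join(parts)


if __name__ == "__main__":
    now = time.time()
    summaries = [Summary(now - 3600, "Refactored the hourly summarizer cron job.")]
    memories = [Memory(t, embed(t)) for t in (
        "Tests use pytest and live in tests/.",
        "Deploys go through docker compose.",
    )]
    print(build_prompt("How should I structure the new tests?", summaries, memories, now=now))
```

Swapping `embed()` for a real embedding model and the list for a vector database would change retrieval quality, not the shape of the flow: retrieval happens before the model sees the prompt, so it works the same on either side of a compaction.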

@ryancarson connected this to the broader market opportunity, arguing that the winning solution for agent orchestration won't come from a single lab. "The solution will be a clever mix of closed/open source models + deterministic orchestration," he wrote, noting that everyone wants "1 agent who runs teams of agents." @ScriptedAlchemy shared that their own AI orchestration system caught attention from Kent (likely Kent C. Dodds), while @ashebytes captured the weekend warrior energy of anyone deep in this space with a simple "mood when orchestrating agents on the weekend."
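One reading of "deterministic orchestration" is that the lead agent's control flow is ordinary code, and models only fill in individual steps whose outputs are checked by plain assertions. The Python sketch below is a hypothetical interpretation of that idea, not @ryancarson's design; `Step`, `Orchestrator`, and the plain-function "workers" (stand-ins for calls to closed or open models) are invented names.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# A "worker agent" here is just a function from task text to output text.
# In practice each one could wrap a different model, closed or open source.
Worker = Callable[[str], str]


@dataclass
class Step:
    role: str                     # which worker handles this step
    task: str                     # instruction passed to the worker
    check: Callable[[str], bool]  # deterministic acceptance test on the output
    max_attempts: int = 2


@dataclass
class Orchestrator:
    workers: Dict[str, Worker]
    log: List[str] = field(default_factory=list)

    def run(self, plan: List[Step]) -> List[str]:
        """Run steps in a fixed order; each step sees earlier outputs as context."""
        outputs: List[str] = []
        for i, step in enumerate(plan):
            context = "\n".join(outputs)
            for attempt in range(1, step.max_attempts + 1):
                result = self.workers[step.role](f"{context}\n{step.task}".strip())
                ok = step.check(result)
                self.log.append(f"step {i} ({step.role}) attempt {attempt}: "
                                f"{'ok' if ok else 'rejected'}")
                if ok:
                    outputs.append(result)
                    break
            else:
                # No attempt passed the check: fail loudly instead of continuing.
                raise RuntimeError(f"step {i} ({step.role}) failed after "
                                   f"{step.max_attempts} attempts")
        return outputs


if __name__ == "__main__":
    # Fake workers standing in for model calls.
    workers = {
        "planner": lambda prompt: "PLAN: add a /health endpoint",
        "coder": lambda prompt: "def health():\n    return 'ok'",
    }
    plan = [
        Step("planner", "Plan the change.", check=lambda out: out.startswith("PLAN:")),
        Step("coder", "Implement the plan.", check=lambda out: "def " in out),
    ]
    orchestrator = Orchestrator(workers)
    print(orchestrator.run(plan))
    print("\n".join(orchestrator.log))
```

Keeping the plan, retries, and acceptance checks in code makes runs reproducible and debuggable, while the model behind each role can be swapped freely.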

The convergence here is real. Three independent builders, all solving variations of the same problem, all getting traction. The infrastructure layer for agent persistence and orchestration is the bottleneck, and the market knows it.

The Acceleration Consensus

A significant chunk of today's posts carried the same message from different angles: the pace of AI progress is about to become undeniable to everyone, not just the people paying close attention. The tone ranged from urgent to anxious.

@kimmonismus set the stakes directly, calling 2026 "the year everything changes" and claiming "the take-off will be felt by everyone." @chatgpt21 cited Gabriel (a former Sora lead at OpenAI) warning that this is "the last time to get employment before the fast takeoff," adding their own interpretation that people should "lock in your current job and buckle down for the singularity." @deredleritt3r quoted someone describing models that are "10x faster, smarter, and more capable in specific domains" and admitted:

> "Imagining AI progress continuing at its current velocity is already difficult. I must confess that imagining it relentlessly accelerating over the foreseeable future is almost beyond me."

The career implications showed up in two sharp observations. @cgtwts noted that "engineers' worst nightmare has come true, they all have to become product managers," while @hkarthik simply wrote "as the cost of code falls to zero," letting the implication hang. Whether or not you buy the strongest versions of these claims, the directional shift toward product thinking and away from pure implementation skill is hard to argue with. The builders who thrive will be the ones who can define what to build, not just how to build it.

Building at AI Speed

While some people debated timelines, others just shipped. Today's coding posts showed what's actually possible when you lean hard into AI-assisted development, along with some wisdom about how to approach it.

@martin_casado posted an update on a multiplayer game world built in just 8 hours of development time: item layer, object interactions, multi-world portals, live editing, persistent backend, and multiplayer with movement prediction. Built with Cursor and Convex, primarily using Codex 5.2 and Opus 4.6. The scope of what fits into a single sitting now is genuinely different from even six months ago. @garybasin reinforced this with a raw stat: "After ripping through a billion tokens in 8 hours I can attest this is the future."

@steipete noted that "even the amp folks fell in love with Codex," adding a pointed aside about VS Code agent sidebars: "I know exactly one guy that uses a VS Code agent sidebar. Burn it." The terminal-first agent workflow continues to gain ground over IDE-embedded approaches.

The most useful counterweight came from @backseats_eth, who pushed back on the noise-to-signal ratio in the AI development space:

> "Spend more time on content of experienced engineers updating their processes with AI than vibe coders who learned last month."

Good advice. The people getting real results aren't the ones making flashy demo threads. They're the ones quietly integrating AI into established engineering workflows and sharing what actually works.

Seedance 2.0 and the Future of Film

The most visceral reactions of the day came from @EHuanglu's two posts about Seedance 2.0, a video generation model out of China that appears to have leapfrogged Western competitors in practical capability.

The feature list reads like science fiction for anyone who worked in post-production even two years ago. Upload a script, get generated scenes with VFX, voice, sound effects, and music, all edited together. Upload storyboard frames from existing movies and generate matching scenes. Upload film clips and edit anything: swap characters, add effects, change backgrounds. As @EHuanglu described:

> "Literally every job in film industry is gone. You upload a script, it generates scenes with VFX, voice, SFX, music all nicely edited... this feels like the quiet end of traditional film industry and the beginning of something we don't know."

The second post noted that Chinese indie filmmakers have already gone "full insane mode" and started producing 100% AI-generated movies with the tool. @SpecialSitsNews offered a lighter take, calling the "Will Smith eating spaghetti" video the true benchmark for AI video progress. That clip, generated in 2023 with the open-source ModelScope text-to-video model, became a meme for uncanny valley artifacts.

The geographic dimension matters here. @EHuanglu noted that Seedance 2.0 isn't available outside China and said some of its features "feel so illegal," a nod to their intellectual property implications. The regulatory and copyright questions around these tools are going to become very loud, very fast. But the capability genie is out of the bottle, and the creative industries are going to look fundamentally different by the end of this year.

Sources

a new ai video model Seedance 2.0 is beta testing in china.. this is going to blow ur mind https://t.co/upBeN2SOOR

by popular demand, here are my agent coding tips and tricks that YOU MUST know or be LEFT BEHIND FOREVER: 1⃣the best model is task dependent. codex 5.2/5.3 has been consistently much better at AI, pytorch, ML. opus 4.5/4.6 is more pragmatic and obviously fast. at your actual task, model capabilities and styles may be wildly different. figure out what works for you rapidly. given the above... 2⃣dual wield two models in whatever harness works for you. come up with a workflow where you can shift between models easily when one gets stuck. for me this looks like claude code in the terminal and cursor with codex 5.3. don't sleep on cursor, it has a very good harness that is battle tested across models. at times where a third model (or a cheaper model like K2.5) is in the arena, it can be very helpful to be able to flip back and forth in a normal agentic chat environment. but also... if you're comfortable with what you use, stick with it. workflow optimization is the enemy of productivity. thus... 3⃣minimize skills, mcp, rules as much as possible and add them slowly if at all. i use no skills, no mcp in any of my workflows. treat your context window like a life bar and have respect for the core competency of the models. over time tool use, capabilities will continue to improve and you'll be wasting time explaining skills or tools that can natively be used by the model. there are exceptions to this and sometimes its fun to experiment with a prompt someone else has made (this is all a skill is). in the long term, i can imagine skills being a great way to, for example, inject some of the latest updates and knowledge of the most recent next js capabilities into a model without that inherent knowledge. or to copy a prompt from someone who has had great results in a particular task. however, generally... avoid loading up here. 4⃣ have something to actually build. the more time you spend optimizing without a target the less effective you are. 
the most aggressive breakthrough moments for me were about obsessing over a problem. these are the times that your workflow gets rebuilt, but you will have an actual metric internal to build intuition against: is all of this actually helping me get things done faster or not? 5⃣ add measurement to kill noise. as "orchestration" methods and other "infinite agent loop" structures re-emerge, treat them all with suspicion. they may work very well for your use case and they can be super fun to try out esp for a side hobby project. but when you're working in production or on a serious goal, try to build some minimal measurement to keep yourself honest. it can feel like you're making progress in the short term very rapidly. this might as simple as writing down how much time you're actually spending checking in / correcting the bot that's running "autonomously" versus if you just sat down and hand prompted over an hour. additionally, use straight forward, verifiable tests to better understand if your agents are making progress or not against the goal. very simple, nothing ground breaking but easy to get lost in the sauce with ralph loops etc. and then finally, most important: 🚨ignore the noise🚨 there will always be a HOT NEW TRICK to OPTIMIZE YOUR PRODUCTIVITY x2. ignore them. hate them. banish them. just do work. do more work. every minute you spend watching a youtube tutorial is a minute you could have been screaming at the computer to do its job better. the models will change, the behaviors will get trained in, orchestration will get trained in. the tips and the tricks of today are not always going to translate. build the intuition on what works personally for you now and then use YOUR criteria to judge the next new thing, not someone else's.

OH MY FKING GODDDDDD 😱😱😱 indie filmmakers in china have already gone FULL INSANE MODE and started making movies using Seedance 2.0.. 100% AI https://t.co/ljUg7tTbjn

Will Smith eating spaghetti is the true test of AI $msft $goog $meta $nvda https://t.co/GM1M0r40Ru

Wow. This clever new project got Junde an instant interview at @GoogleAI. OneContext is a persistent context layer that sits above your coding agents. It automatically manages and syncs context across all your agent sessions, so any new agent you spin up already knows everything about your project. There are other similar strategies surrounding agent memory, but I don't think I've seen one quite like this. It's incredibly simple to set up, works across all of your various coding agents like Codex, Claude Code, Gemini, and more, and it allows you to share context between team members via a simple link. Bookmark this one. I'm following it closely.

Strongly recommend explicitly telling Claude Code to only use Sonnet or Opus for sub agents Explore Agent defaults to Haiku, and Task Agent is specified by parent For large, complex repos, this means high potential of missing key logic You will see the model used as below: https://t.co/gvtO7679cc

Anthropic CPO Mike Krieger says that Claude is now effectively writing itself Engineers regularly ship 2–3,000-line pull requests generated entirely by Claude Dario predicted a year ago that 90% of code would be written by AI, and people thought it was crazy "today it's effectively 100%"

If you're coding with AI agents, check out @doodlestein's destructive_command_guard. It just saved me from losing hours of work by catching a dangerous shell command before it executed. A genuinely useful safety net. https://t.co/as1mWTFMkB https://t.co/xBIYCvGyuK

Claude Code Desktop now supports --dangerously-skip-permissions! This skips all permission prompts so Claude can operate fully autonomously. Great for workflows in a trusted environment where you want no interruptions, no approval prompts, just uninterrupted work. But as the name suggests... use it with caution! 🙏