Xcode Integrates Claude Agent SDK as Industry Standardizes on .agents/skills

Apple's Xcode 26.3 launched with full Claude Agent SDK integration while .agents/skills rapidly emerged as the industry-standard format for coding agent customization, with VS Code, Copilot, Codex, and Cursor all adopting it. Meanwhile, Alibaba's Qwen dropped a 3B-parameter coding model matching Sonnet 4.5 performance, and the community debated whether agentic search has definitively beaten RAG for codebase understanding.

Daily Wrap-Up

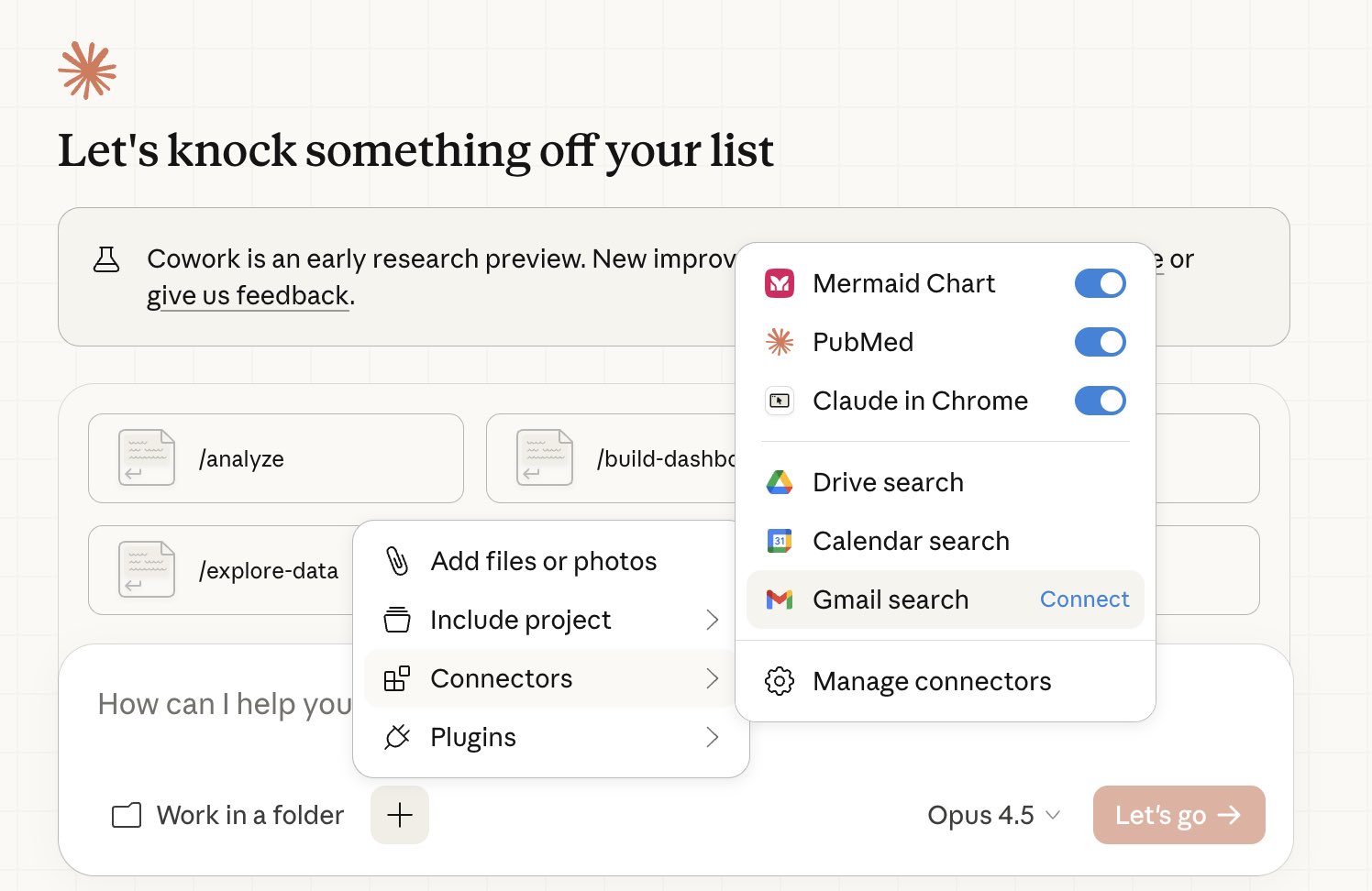

Today felt like a tipping point for coding agents as platform infrastructure rather than novelty tools. The biggest signal was Apple shipping Xcode 26.3 with native Claude Agent SDK integration, giving iOS and Mac developers the same subagent, background task, and plugin architecture that powers Claude Code. That is not an experiment or a beta flag. That is Apple building agent-native workflows into its flagship IDE for millions of developers. Combine that with VS Code, Copilot, Cursor, and Codex all converging on the .agents/skills directory format, and you start to see a world where agent customization is as portable as a .gitignore file.

The model layer had its own moment. Alibaba quietly released a Qwen coding model with only 3B active parameters that benchmarks near Sonnet 4.5 levels, small enough to run locally on modest hardware. @karpathy continued his GPT-2 speedrun saga, pushing training time down to 2.91 hours with fp8 and noting the whole thing costs about $20 on spot instances. The gap between frontier capability and local inference keeps narrowing. @simonw flagged an Unsloth quantized model at 46GB that might actually drive a coding agent harness effectively, which would be a meaningful threshold if confirmed.

The most entertaining moment was @banteg throwing down a gauntlet, challenging "claude boys, ralph boys" to take two decompiled C files and rewrite an entire game in a different engine, ideally running in a browser. A serious peace offering wrapped in competitive energy. The most practical takeaway for developers: adopt the .agents/skills directory convention now. With five major tools converging on the same format, skills you write today will be portable across Claude Code, Copilot, Cursor, Codex, and VS Code without modification.

Quick Hits

- @theworldlabs showed off persistent 3D scenes from their world model, no 60-second time limits, just stay and build.

- @minchoi dropped a creative piece with the caption "Haters will say no AI was used." No further commentary needed.

- @OpenAIDevs announced a live Codex app workshop with @romainhuet and @dkundel building apps end to end.

- @kloss_xyz shared what a $200/mo Claude setup looks like in practice.

- @pierceboggan asked the VS Code + GitHub Copilot CLI crowd what to prioritize improving.

- @minchoi highlighted Higgsfield AI's Vibe-Motion, prompt-to-motion-design powered by Claude reasoning.

- @felixleezd published a Claude Code guide specifically for designers.

- @HuggingModels surfaced GLM-4.7-Flash-Uncensored-Heretic, a zero-guardrails text generator that the community is buzzing about.

- @jukan05 raised eyebrows about potential OpenAI staff layoffs.

- @banteg challenged AI coding enthusiasts to rewrite a fully decompiled game from two C files into any language and engine, ideally browser-runnable.

- @KaranKunjur reflected on K2 Space's journey from "why would anyone need a 100kW satellite?" to mainstream orbital data center ambitions.

- @cb_doge laid out Elon Musk's five-step plan to reach Kardashev Type II civilization through orbital AI data centers.

- @micLivs offered blunt advice on the Claude Code configuration discourse: "just create a symlink and get on with your life."

Claude Code: Slack, Xcode, and a Growing Ecosystem

Claude Code had one of its most feature-dense days in recent memory. The headline announcement was Apple integrating the Claude Agent SDK directly into Xcode 26.3. As @mikeyk put it: "Devs get the full power of Claude Code (subagents, background tasks, and plugins) for long-running, autonomous work directly in Xcode." This is not a chat sidebar or autocomplete feature. It is the full agent runtime embedded in Apple's development environment, covering everything from iPhone to Apple Vision Pro.

On the web side, @claudeai announced Slack integration for Pro and Max plans, letting users "search your workspace channels, prep for meetings, and send messages back to keep work moving forward." @_catwu showed the real-world impact: "We have a user feedback channel where we regularly tag in @Claude to investigate issues and push fixes." That workflow, user reports a bug in Slack, Claude investigates and ships the fix, is becoming increasingly normal.

> "Claude Code 2.1.30 is out. 19 CLI, 1 flag, and 1 prompt changes." -- @ClaudeCodeLog

@lydiahallie announced session sharing across web, desktop, and mobile, and @Yampeleg highlighted the new /insights command in 2.1.30. The browser got attention too, with @trq212 showing Claude connecting to Chrome through the VS Code extension for frontend debugging and browser automation. On the business side, @rockatanescu noted Anthropic offers premium seats at $125/mo with limits similar to the $100 consumer plan, while @OrenMe did the math showing that $1,000/mo on GitHub Copilot yields roughly 8,500 Opus-level requests, arguing the value proposition is stronger than many realize.

The .agents/skills Standard Takes Hold

Something quietly significant happened today: the industry converged on a directory convention. @theo tracked the adoption with characteristic directness: "Products that moved to .agents/skills so far: Codex, OpenCode, Copilot, Cursor. Not Claude Code." The conspicuous absence of Claude Code from the list drew attention, but the broader signal is unmistakable. When four major coding tools adopt the same file structure independently, that is a de facto standard.

> "We're adding support for .agents/skills in the next release! This will make it easier to use skills with any coding agent." -- @leerob

@pierceboggan confirmed .agents/skills coming to VS Code proper, and @haydenbleasel showed Vercel's AI Elements Skills installable via a single npx skills add command. The marketplace layer is forming too, with @EXM7777 promoting SkillStack as an audited distribution channel: "investing in skills is the best play you can make in 2026." Whether or not you buy the marketplace hype, the portability story is real. A skill written once can now run across most major agent-powered development tools.

Models: Qwen's 3B Coder, Sonnet 5 Whispers, and fp8 Training

The model landscape shifted in several directions simultaneously. The most striking announcement was Alibaba Qwen releasing a coding model with only 3B active parameters. @itsPaulAi captured the reaction: "Coding performance equivalent to Sonnet 4.5. Comparable to models with 10x-20x more active parameters. But you can run it LOCALLY." If those benchmarks hold in real-world usage, this compresses the gap between cloud-hosted frontier models and what fits on consumer hardware.

@karpathy shared a detailed update on his GPT-2 speedrun, pushing training time to 2.91 hours with fp8 precision on H100s. The economics are striking: roughly $20 on spot instances. His candid assessment of fp8's complexity was refreshing: "On paper, fp8 on H100 is 2X the FLOPS, but in practice it's a lot less." The nuance around tensorwise vs. rowwise scaling, and the tradeoff between step quality and step speed, is the kind of detail that separates benchmarks from production.

On the frontier side, @synthwavedd spotted what appears to be a soft launch of Sonnet 5 on claude.ai, noting that "Anthropic have stealth launched models hours before release almost every time." @kimmonismus flagged Anthropic's image model going live on LMArena. @simonw raised an important practical question about whether Unsloth's 46GB quantized model can actually drive a coding agent harness, noting he has "had trouble running those usefully from other local models that fit in <64GB." @wzhao_nlp shared the emotional side of model development, describing how they redid midtraining entirely because models "failed to follow instructions on out-of-distribution scaffolds," choosing fundamental fixes over surface-level patches. @DrJimFan teased "The Second Pre-training Paradigm" without elaboration.

Agent Architecture and Multi-Agent Workflows

The conversation around agent orchestration matured noticeably today. @rauchg articulated the thesis clearly: "Agents give developers horizontal scalability." His vision spans from simple tmux sessions running CLI agents in parallel to sandboxed environments offering "infinite parallelism, run while you sleep, on PRs, when an incident is filed." The punchline: "Automating the full product development loop is now your job, and your edge."

@addyosmani confirmed this is not just startup enthusiasm, noting at Google: "I use a multi-agent swarm for most of my daily development. This is a future we're planning for more of." His advice was practical: be intentional about deep vs. shallow review, and audit which Skills and MCPs actually help. @tobi praised Pi as "the most interesting agent harness," highlighting its ability to write plugins for itself and effectively RL into the agent you want. @zeeg asked a question many are wrestling with: "What's the best user interface for managing multiple claude code sessions?" The tooling for orchestrating agents is still catching up to the capability of the agents themselves.

@hasantoxr highlighted a fully local desktop automation agent from China that runs without internet, and @flaviocopes endorsed Docker sandboxes as "a fantastic way to run agents in YOLO mode without anxiety." The infrastructure layer for running agents safely and in parallel is solidifying.

Making Coding Agents Smarter

A cluster of posts today focused on improving what coding agents can actually perceive and do. @aidenybai shared how he made Claude Code 3x faster and promoted React Grab, which "extracts file sources rather than DOM selectors" because "agents can't actually do much with selectors, while sources are the source of truth." @e7r1us suggested parsing JS/TS projects with Babel to create compact representations of hooks, constants, and function signatures to feed as agent context.

> "RAG + vector DB gives decent results, but agentic search over the repo (glob/grep/read, etc) consistently worked better on real-world codebases." -- @dani_avila7

@dani_avila7 shared extensive experience comparing RAG with agentic search, concluding that "fast models + bash-style agentic search ended up outperforming general RAG search, even if it requires more tool calls." The tradeoffs with RAG around staleness and privacy, requiring continuous re-indexing with code living on your servers, pushed them toward the simpler approach. @o_kwasniewski reinforced the quality angle: "Build & lint passing is not enough to ensure what your agent built is actually working. Testing the flow end to end is crucial."

AI and the Changing Nature of Work

The human side of the AI acceleration got thoughtful attention today. @TheGeorgePu reported that Meta now tracks over 200 data points on employee AI usage, with high adoption earning 300% bonuses and low adoption leading to being managed out. @nomoreplan_b distilled it to four words: "AI fluency is becoming job security."

@adityaag wrote the most emotionally honest post of the day: "I spent a lot of time over the weekend writing code with Claude. And it was very clear that we will never ever write code by hand again... Something I was very good at is now free and abundant. I am happy...but disoriented." That tension between capability and identity resonated. @naval offered the strategic reframe: "Vibe coding is the new product management. Training and tuning models is the new coding." Whether that framing is premature or prescient depends on how fast the agent infrastructure covered above continues to mature. Based on today's posts, the answer is: very fast.

Sources

Introducing Agent Device: token‑efficient iOS & Android automation for AI agents 𝚗𝚙𝚡 𝚊𝚐𝚎𝚗𝚝-𝚍𝚎𝚟𝚒𝚌𝚎 https://t.co/6hfs2LDyxq

nanochat can now train GPT-2 grade LLM for <<$100 (~$73, 3 hours on a single 8XH100 node). GPT-2 is just my favorite LLM because it's the first time the LLM stack comes together in a recognizably modern form. So it has become a bit of a weird & lasting obsession of mine to train a model to GPT-2 capability but for much cheaper, with the benefit of ~7 years of progress. In particular, I suspected it should be possible today to train one for <<$100. Originally in 2019, GPT-2 was trained by OpenAI on 32 TPU v3 chips for 168 hours (7 days), with $8/hour/TPUv3 back then, for a total cost of approx. $43K. It achieves 0.256525 CORE score, which is an ensemble metric introduced in the DCLM paper over 22 evaluations like ARC/MMLU/etc. As of the last few improvements merged into nanochat (many of them originating in modded-nanogpt repo), I can now reach a higher CORE score in 3.04 hours (~$73) on a single 8XH100 node. This is a 600X cost reduction over 7 years, i.e. the cost to train GPT-2 is falling approximately 2.5X every year. I think this is likely an underestimate because I am still finding more improvements relatively regularly and I have a backlog of more ideas to try. A longer post with a lot of the detail of the optimizations involved and pointers on how to reproduce are here: https://t.co/vhnK0d3L7B Inspired by modded-nanogpt, I also created a leaderboard for "time to GPT-2", where this first "Jan29" model is entry #1 at 3.04 hours. It will be fun to iterate on this further and I welcome help! My hope is that nanochat can grow to become a very nice/clean and tuned experimental LLM harness for prototyping ideas, for having fun, and ofc for learning. The biggest improvements of things that worked out of the box and simply produced gains right away were 1) Flash Attention 3 kernels (faster, and allows window_size kwarg to get alternating attention patterns), Muon optimizer (I tried for ~1 day to delete it and only use AdamW and I couldn't), residual pathways and skip connections gated by learnable scalars, and value embeddings. There were many other smaller things that stack up. Image: semi-related eye candy of deriving the scaling laws for the current nanochat model miniseries, pretty and satisfying!

Claude Opus 4.6 has been spotted. This is separate from the misinterpretation of Sonnet 5 information that led people to definitively assert the release date was this Tuesday.

Claude is built to be a genuinely helpful assistant for work and for deep thinking. Advertising would be incompatible with that vision. Read why Claude will remain ad-free: https://t.co/Dr8FOJxINC

Claude is built to be a genuinely helpful assistant for work and for deep thinking. Advertising would be incompatible with that vision. Read why Claude will remain ad-free: https://t.co/Dr8FOJxINC

If you work in tech in 2026, you’re either at the beginning of your career or at the end of it. If you’re acting like you’re anywhere else I’m sorry to tell you but you’re actually at the end. This holds for VCs too.

You told us you’re running multiple AI agents and wanted a better UX. We listened and shipped it! Here’s what’s new in the latest @code release: 🗂️ Unified agent sessions workspace for local, background, and cloud agents 💻 Claude and Codex support for local and cloud agents 🔀 Parallel subagents 🌐 Integrated browser And more...

https://t.co/jEWDjs30kf