OpenAI Launches Codex App as SpaceX Acquires xAI and Multi-Agent Workflows Hit Mainstream

OpenAI's Codex app launch dominated the conversation, with Sam Altman admitting that building with it left him feeling "a little useless," while a CTO's account of migrating from Copilot to Cursor to Claude Code in under a year crystallized just how fast the AI IDE market is moving. Meanwhile, a push to standardize agent skills under `.agents/skills/` signaled the ecosystem maturing beyond single-tool silos, and, almost as an aside, SpaceX announced it had acquired xAI.

Daily Wrap-Up

The biggest story today was the launch of OpenAI's Codex app, a dedicated workspace for managing multiple coding agents in parallel. But what made it memorable wasn't the product specs. It was @sama admitting that after building with Codex and asking it for feature ideas, "at least a couple of them were better than I was thinking of. I felt a little useless and it was sad." When the CEO of the company building the thing feels displaced by the thing, you know the ground is shifting under everyone's feet. The Codex launch also came with doubled rate limits on all paid plans for two months, the kind of aggressive move that signals OpenAI sees the AI coding space as an existential battleground.

What made today's feed especially interesting was how it captured the full spectrum of reactions to this moment. On one end, @GergelyOrosz reported a CTO at a 600-engineer company describing their tool migration: GitHub Copilot to Cursor nine months ago, then Claude Code just 1.5 weeks ago, with Copilot cancelled entirely. That's the entire product lifecycle of an AI coding tool compressed into less than a year. On the other end, @alexhillman articulated a counter-position: not everyone should be building factories optimized for output and scale. Some developers are choosing depth and understanding over speed, maintaining open loops and low error tolerance with more human input, not less. Both approaches are valid, but the tension between them is going to define how teams ship software in 2026.

The quieter but potentially more impactful story was @embirico's open call for agent builders to standardize on `.agents/skills/` as the shared directory for agent skill definitions, with Codex already making the move. If that convention sticks across Claude Code, Cursor, and the growing crop of open-source alternatives, it would be the first real interoperability standard in agentic coding. The most practical takeaway for developers: start organizing your project-level agent instructions under `.agents/skills/` now. Whether you're on Codex, Claude Code, or Cursor, that directory convention is converging fast, and having your automation portable across tools will matter more than which IDE you pick this quarter.

Quick Hits

- @SpaceX announced the acquisition of xAI, forming what they called "one of the most ambitious, vertically integrated innovation engines on (and off) Earth." Rockets meet reasoning.

- @rough__sea reacted to projections of terawatts of AI compute launched annually, asking "Is this how von Neumann probes start?" Fair question.

- @argosaki posted about researchers implanting procedural memories via transcranial magnetic stimulation, claiming piano novices played intermediate pieces after 20 minutes. File this one firmly under "extraordinary claims requiring extraordinary evidence."

- @PalmerLuckey discovered a team in the AI Grand Prix using cultured mouse brain cells to control a drone. His verdict: "At first look, this seems against the spirit of the software-only rules. On second thought, hell yeah."

- @Google highlighted using AI tools to sequence animal genomes in days. For comparison, the first human genome took 13 years and $3 billion.

- @jamesvclements built a tool that adds before-and-after screenshots to all PRs, which is the kind of small automation that compounds into massive review quality improvements.

- @thdxr announced a beta channel for opencode with SQLite migration testing. The open-source AI coding tool space keeps growing.

- @badlogicgames declared "pi is now a certified game engine," adding another unexpected use case to the AI coding tool landscape.

- @aliasaria launched the public beta of Transformer Lab for Teams, positioning it as "the open-source OS for modern AI research."

- @GOROman shared a workflow combining Moshi, Mosh, tmux, and Tailscale for remote AI development. The tool-stacking meta continues.

- @nummanali called for an open-source version of 8090, an AI-native SDLC platform for cross-functional collaboration.

- @doodlestein's Agent Flywheel Hub Discord hit 339 members, a growing community for developers swapping agent workflows.

- @marty posted a meme about every tech person working on their @openclaw "productivity" system, which hit a little too close to home for the homelab crowd.

The Codex App Launch and the AI IDE Arms Race

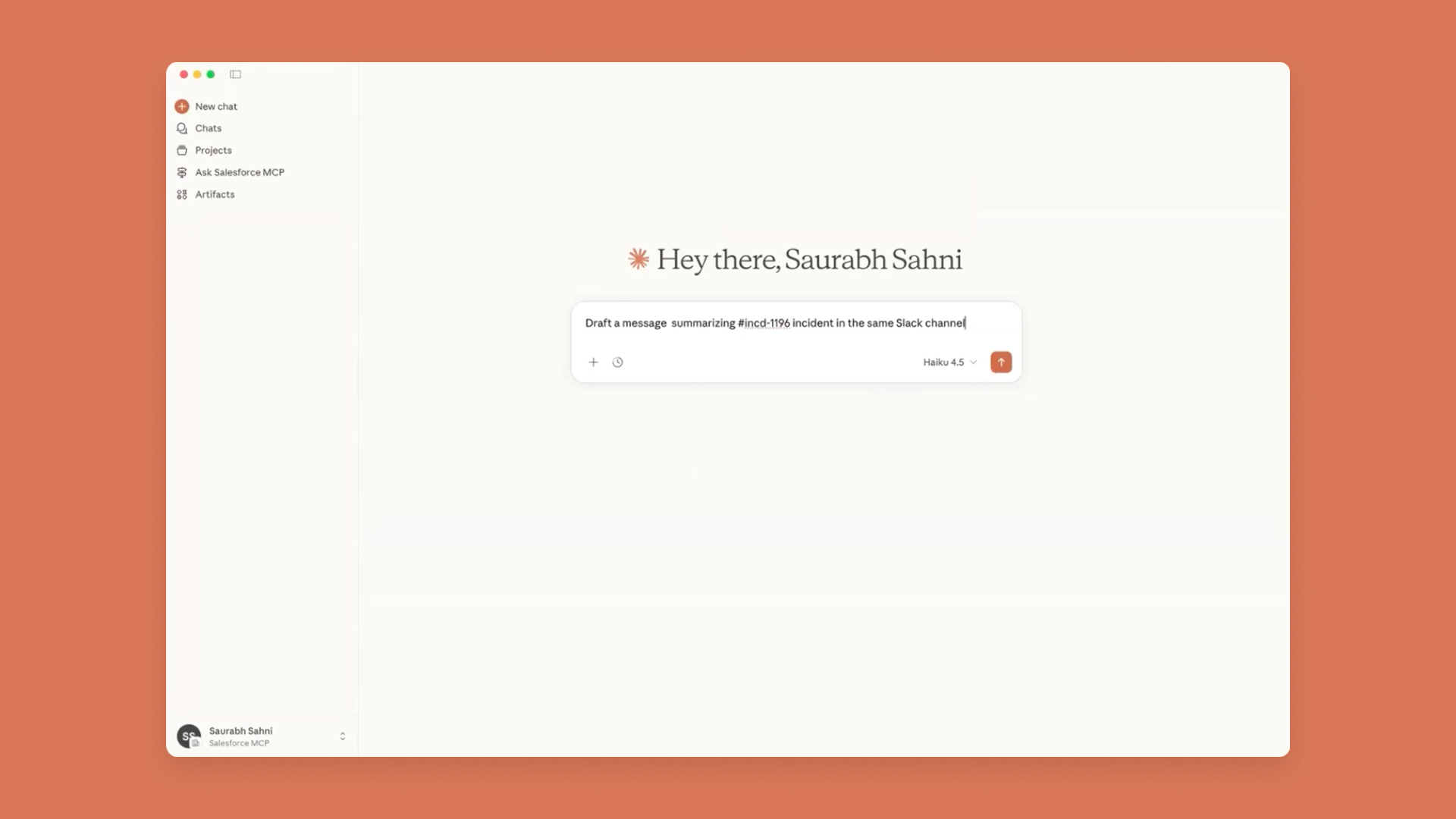

OpenAI shipped its most direct challenge to Claude Code and Cursor today with the Codex app, a standalone workspace designed around managing multiple agents in parallel across long-running tasks. @OpenAIDevs described it as "a command center for building with agents," and the framing is deliberate. This isn't a code completion tool or an inline assistant. It's a workbench that assumes you're orchestrating, not typing.

The immediate community reaction was enthusiastic but revealing. @theo called it "OpenAI's Cursor killer" after a week of access, saying he's "addicted." @polynoamial confirmed switching entirely: "Codex is writing all my code these days, and I've fully switched to using the Codex app." And @heccbrent, who worked on the launch internally, shared that he'd turned Codex into "a fairly competent video editor" that can make rough cuts in Adobe Premiere Pro, stretching the definition of a coding agent.

But the most telling data point came from outside OpenAI. @GergelyOrosz shared a CTO's timeline: all developers on GitHub Copilot, then Cursor rolled out nine months ago, then Claude Code deployed to everyone 1.5 weeks ago with Copilot cancelled. "I hear this exact transition story, a LOT!" he added. The AI coding tool market isn't just competitive; it's experiencing churn rates that would terrify any SaaS company. @flaviocopes piled on with praise for what appears to be the new Codex feature set: "incredible automations, easy to use/create skills, workflow Conductor, integrate external IDEs."

@sama celebrated the launch by doubling rate limits on paid plans for two months and extending access to the Free and Go tiers. But his more candid post was the one that stuck: building with Codex was fun until the AI started generating better feature ideas than he could. @kimmonismus connected the dots: "We got Codex that builds itself and Claude that's being built with Claude. In what way haven't we reached the point of self-improving models?" As @alexalbert__ noted simply, "It's only been one year since vibe coding was coined." The distance between that coinage and today's multi-agent orchestration workspaces is staggering. @emollick put it in measured terms: "I think the implications are pretty large for software development, and beyond."

Agent Skills Standardization and the Agent-Readable Web

A quieter but structurally important conversation emerged around making the web and codebases legible to AI agents. @embirico issued an open call to agent builders: "Let's read agent skills from .agents/skills, so people don't have to manage separate folders per agent." Codex has already migrated, and the goal is to deprecate tool-specific directories like `.codex/skills`. This is the kind of boring infrastructure decision that ends up mattering enormously. If Claude Code, Cursor, Codex, and open-source tools all converge on one directory, agent skills become portable and projects become tool-agnostic.
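To make the convention concrete, here is a minimal Python sketch of how a tool might resolve skills if it adopted the shared directory while still honoring older per-tool folders. It assumes each skill is a subdirectory containing a `SKILL.md` file and uses the directory name as the skill's name. The legacy list (`.codex/skills` from the post above, plus `.claude/skills` as an illustrative second entry) and the `discover_skills` function are hypothetical, not any tool's actual loader.

```python
from pathlib import Path

# Shared directory proposed in the thread; it is searched first.
SHARED_DIR = Path(".agents/skills")
# Tool-specific legacy locations (illustrative; .codex/skills is the one named in the post).
LEGACY_DIRS = [Path(".codex/skills"), Path(".claude/skills")]


def discover_skills(project_root: Path) -> dict:
    """Map skill name -> skill directory, preferring the shared .agents/skills location.

    Assumption: each skill is a subdirectory containing a SKILL.md file,
    and the subdirectory name is used as the skill name.
    """
    skills = {}
    for rel in [SHARED_DIR, *LEGACY_DIRS]:
        base = project_root / rel
        if not base.is_dir():
            continue  # this tool's folder doesn't exist in the project
        for child in sorted(base.iterdir()):
            # setdefault keeps the first hit, so a shared skill shadows a legacy copy.
            if child.is_dir() and (child / "SKILL.md").is_file():
                skills.setdefault(child.name, child)
    return skills


if __name__ == "__main__":
    for name, path in discover_skills(Path.cwd()).items():
        print(f"{name}: {path}")
```

Checking the shared directory first means a skill moved into `.agents/skills/` takes precedence over any stale copy left in a tool-specific folder, which is the migration path the proposal implies.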

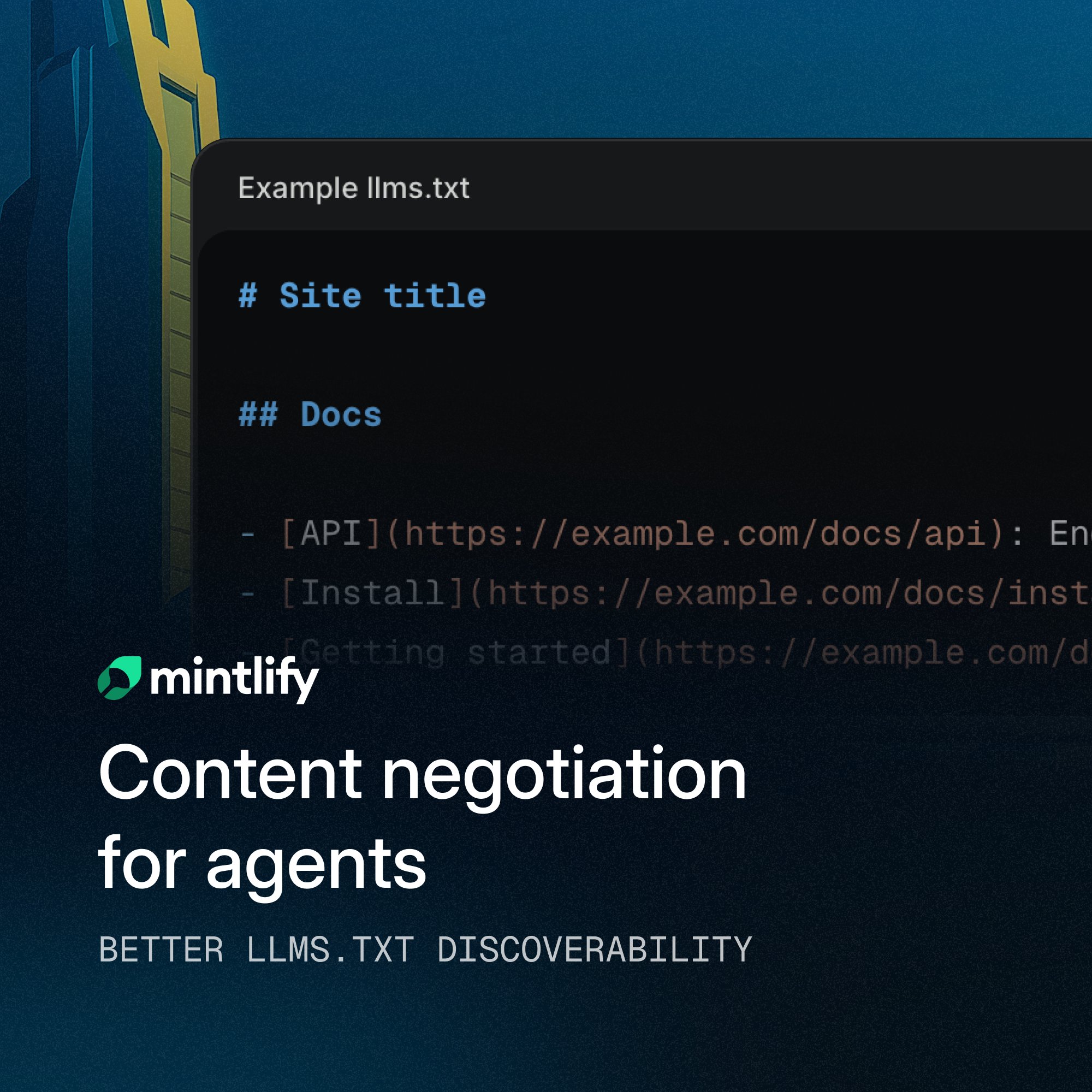

On the web side, @mintlify improved llms.txt discoverability by placing the index instruction at the top of Markdown responses as a blockquote, "so agents see guidance immediately without having to parse the full document." @michael_chomsky went further, sharing optimization advice for agent-facing content:

> "Always respect 'Accept: text/plain' and 'Accept: text/markdown' headers (most agents don't render JS, biggest win). Let agents know about your llms.txt, otherwise they won't check for it. Keep important context for agents at the top of your files (they tend to truncate)."
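Both tips map onto ordinary HTTP content negotiation. The Python sketch below shows one way a docs server could decide between HTML and Markdown from the `Accept` header, and then put an llms.txt pointer as a blockquote on the very first line of the Markdown body. The function names, the notice wording, and the example URL are hypothetical; this is not Mintlify's implementation, and the `Accept` parsing is deliberately simplified (no wildcard subtypes such as `text/*`, no specificity rules).

```python
from typing import List, Optional, Tuple

# Media types for which we serve the raw Markdown version of a page.
TEXT_TYPES = ("text/markdown", "text/plain")
# Media types (or the catch-all) for which we serve the rendered HTML page.
HTML_TYPES = ("text/html", "application/xhtml+xml", "*/*")


def parse_accept(header: str) -> List[Tuple[str, float]]:
    """Parse an Accept header into (media_type, q) pairs, highest q first."""
    entries = []
    for part in header.split(","):
        fields = [f.strip() for f in part.split(";")]
        media = fields[0].lower()
        if not media:
            continue
        q = 1.0
        for param in fields[1:]:
            key, _, value = param.partition("=")
            if key.strip().lower() == "q":
                try:
                    q = float(value)
                except ValueError:
                    q = 0.0  # treat a malformed q-value as "not acceptable"
        entries.append((media, q))
    # sorted() is stable, so equal q-values keep the client's original order.
    return sorted(entries, key=lambda e: e[1], reverse=True)


def choose_media_type(accept: Optional[str]) -> str:
    """Return the Content-Type to serve: a text type if the agent asks for one, else HTML."""
    if not accept:
        return "text/html"
    for media, q in parse_accept(accept):
        if q <= 0:
            continue
        if media in TEXT_TYPES:
            return media  # same Markdown body either way; echo the requested type
        if media in HTML_TYPES:
            return "text/html"
    return "text/html"


def render_markdown(body: str, llms_txt_url: str) -> str:
    """Put the llms.txt pointer first, as a blockquote, so it survives truncation."""
    notice = f"> Documentation index for agents: {llms_txt_url}\n\n"
    return notice + body


if __name__ == "__main__":
    media = choose_media_type("text/markdown, text/html;q=0.9")
    print(media)
    if media in TEXT_TYPES:
        print(render_markdown("# Getting started\n", "https://docs.example.com/llms.txt"))
```

A server would then send the Markdown body with the returned type in its `Content-Type` header, and the full HTML page otherwise. Because the pointer sits on the first line, even an agent that truncates long responses still sees it.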

The thread connecting these posts is that the agentic web is developing its own SEO. Just as the 2000s web optimized for Googlebot crawlers, the 2026 web is starting to optimize for AI agents that fetch, parse, and act on content. The developers who internalize this shift early will have an outsized advantage in making their tools, docs, and APIs agent-friendly.

Engineering Careers and the AI Productivity Paradox

The career implications of AI coding tools generated the day's most heated takes. @Architect9000 delivered the sharpest framing: "Don't worry about a bunch of software engineers losing their jobs. Worry about what a bunch of unemployed engineers will do to yours." The argument is that engineers are lifelong learners who must master both code and a subject domain, and displaced engineers will simply learn another profession's job and automate it. "Software skills are going to be mandatory for any white-collar job by 2030."

@levie, the Box CEO, tackled the corporate strategy angle. If an engineer can produce 2-5x more output, "the general direction will be roadmap expansion. Companies that just use this leverage to cut costs will be outcompeted by those that decide to do more." But he identified the real bottleneck: how quickly customers adopt new software, and whether companies can actually monetize more of it or customer expectations simply inflate. Go-to-market (GTM) and distribution moats become critical when the cost of building each unit of software drops.

The gap between AI-forward and AI-hesitant organizations was a recurring theme. @vig_xyz captured it perfectly: friends at AI labs are "running multiple Claude Code agents in parallel, sometimes with custom cloud infra," while PE-backed companies are "still figuring out what their first AI initiative should be." @gauthampai confirmed the same pattern from a consulting perspective: some clients are shipping agents, skills, and MCPs like there's no tomorrow while others "still complain how ChatGPT didn't answer their questions right." @vasuman pushed back on the hype cycle with practical advice: "New models are great, but everything that truly moves the needle for your business is already possible. Spend more time studying business needs."

Two Schools of AI-Assisted Development

A philosophical divide crystallized today between what @alexhillman identified as two schools of thought. One school uses AI tools to "build factories" with emphasis on scale, speed, output, and less human input. The other, where @alexhillman places himself, emphasizes "depth and understanding, maintaining open loops, low tolerance for errors, MORE human input." @jonhilt noted this mirrors how expertise works in the real world: "You bring all your knowledge, skills and experience to bear on every project. You've packaged some of that up into an AI assistant which now does the same."

@badlogicgames offered the most grounded workflow advice of the day, pushing back against the "run a gazillion things in parallel" productivity hype. "There's likely only a handful of humans that have the mental make-up to survive the permanent context switches," he wrote. His own approach is to limit himself to 2-3 parallel tasks, using virtual desktops as task bundles. He uses a pi extension that shows the relevant GitHub issue for each session and keeps a dedicated entertainment desktop for brain rest.

@solarapparition took the long view with a vivid metaphor: "There's a big fucking wave coming. It's higher than any high ground you can reasonably reach. It's advancing too fast for you to build protection. So, just take a deep breath, maybe try to get a clear space so you don't get dashed against rocks, and just let it carry you to wherever you're going to end up." The advice pairs surprisingly well with @TheAhmadOsman's much more tactical tip to "tell Claude Code or any other agent to generate relevant pre-commit hooks for your project" and @leerob's Cursor workflow of stacking skills like /code-review, /simplify, /deslop, and /commit-pr. Whether you're riding the wave philosophically or tactically, the common thread is letting agents handle the mechanical work while you focus on judgment calls that still require a human brain.

Sources

Codex now pretty much builds itself, with the help and supervision of a great team. The bottleneck has shifted to being how fast we can help and supervise the outcome.

@alexhillman What's interesting about this is that it feels like it models the real world. You, as an individual, bring all your knowledge, skills and experience to bear on every project you touch. You've packaged some of that up into an AI assistant which now does the same :)

We improved llms.txt discoverability for coding agents at the content and HTTP layers. In Markdown responses, the llms.txt index instruction now appears at the top of the page as a clear blockquote, so agents see guidance immediately without having to parse the full document. https://t.co/R8v2u0iaQd

Introducing the Codex app—a powerful command center for building with agents. Now available on macOS. https://t.co/HW05s2C9Nr

SpaceX has acquired xAI, forming one of the most ambitious, vertically integrated innovation engines on (and off) Earth → https://t.co/3ODfcYnqfg https://t.co/el40rCUBGe

For devs asking “how do I run coding agents without breaking my machine?” Docker Sandboxes are now available. They use isolated microVMs so agents can install packages, run Docker, and modify configs - without touching your host system. Read more → https://t.co/VjlWMG5wqF https://t.co/7ssqWboten

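[Translation of a post by a Google employee on US Blind] I heard that OpenAI reduced headcount after Sam Altman's town hall meeting. Some people say recruiters stopped contacting them even after they had completed Team Match or the Onsite Loop. I'm wondering whether this is true, and whether it will also affect people who have already signed offer letters.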

So Anthropic's cooking an in-house image model. sonata is live on LMArena, and it's having a whole identity crisis: it claims Google made it half the time and Anthropic the other half. This is from the Claude config, so it's 100% guaranteed now: it is coming https://t.co/xlYDFWU1BM

We are live. The marketplace to buy high-quality, pre-vetted Claude Skills https://t.co/N7hJjVmsBa https://t.co/LdsTmrFQZd

🚀 Introducing Qwen3-Coder-Next, an open-weight LM built for coding agents & local development. What’s new: 🤖 Scaling agentic training: 800K verifiable tasks + executable envs 📈 Efficiency–Performance Tradeoff: achieves strong results on SWE-Bench Pro with 80B total params and 3B active ✨ Supports OpenClaw, Qwen Code, Claude Code, web dev, browser use, Cline, etc 🤗 Hugging Face: https://t.co/rZoW4vRJpr 🤖 ModelScope: https://t.co/P0vT5zILBZ 📝 Blog: https://t.co/hFfFDYcwvd 📄 Tech report: https://t.co/Qx83PWS3oi

Qwen releases Qwen3-Coder-Next. 💜 The new 80B MoE model excels at agentic coding & local use. With 256K context, it delivers similar performance to models with 10-20× more active parameters. Run on 46GB RAM or less. Guide: https://t.co/wzoXlZwDuL GGUF: https://t.co/rpYrlnazsm

The Second Pre-training Paradigm

Next word prediction was the first pre-training paradigm. Now we are living through the second paradigm shift: world modeling, or “next physical state...

Vibe coding is the new product management. Training and tuning models is the new coding.

@EthanLipnik 👋 Early versions of Claude Code used RAG + a local vector db, but we found pretty quickly that agentic search generally works better. It is also simpler and doesn’t have the same issues around security, privacy, staleness, and reliability.

Agent Skills are now available in Cursor. Skills let agents discover and run specialized prompts and code. https://t.co/aZcOkRhqw8

"Earlier, all devs used GitHub Copilot. 9 months ago, we rolled out Cursor to all devs. 1.5 weeks ago, we rolled out Claude Code to everyone, and cancelled our Copilot subscription" - CTO at a company with 600 engineers (I hear this exact "transition" story, a LOT!)

@embirico change merged in @code for broader copilot adoption https://t.co/JtKTMo4tP8, cheers!

How I made Claude Code 3x faster

Coding agents suck at frontend because translating intent (from UI → prompt → code → UI) is lossy. For example, if you want to make a UI change: Creat...

We're adding support for .agents/skills in the next release! This will make it easier to use skills with any coding agent.