Boris Cherny Drops 10 Claude Code Workflow Tips as Sonnet 5 Speculation Builds

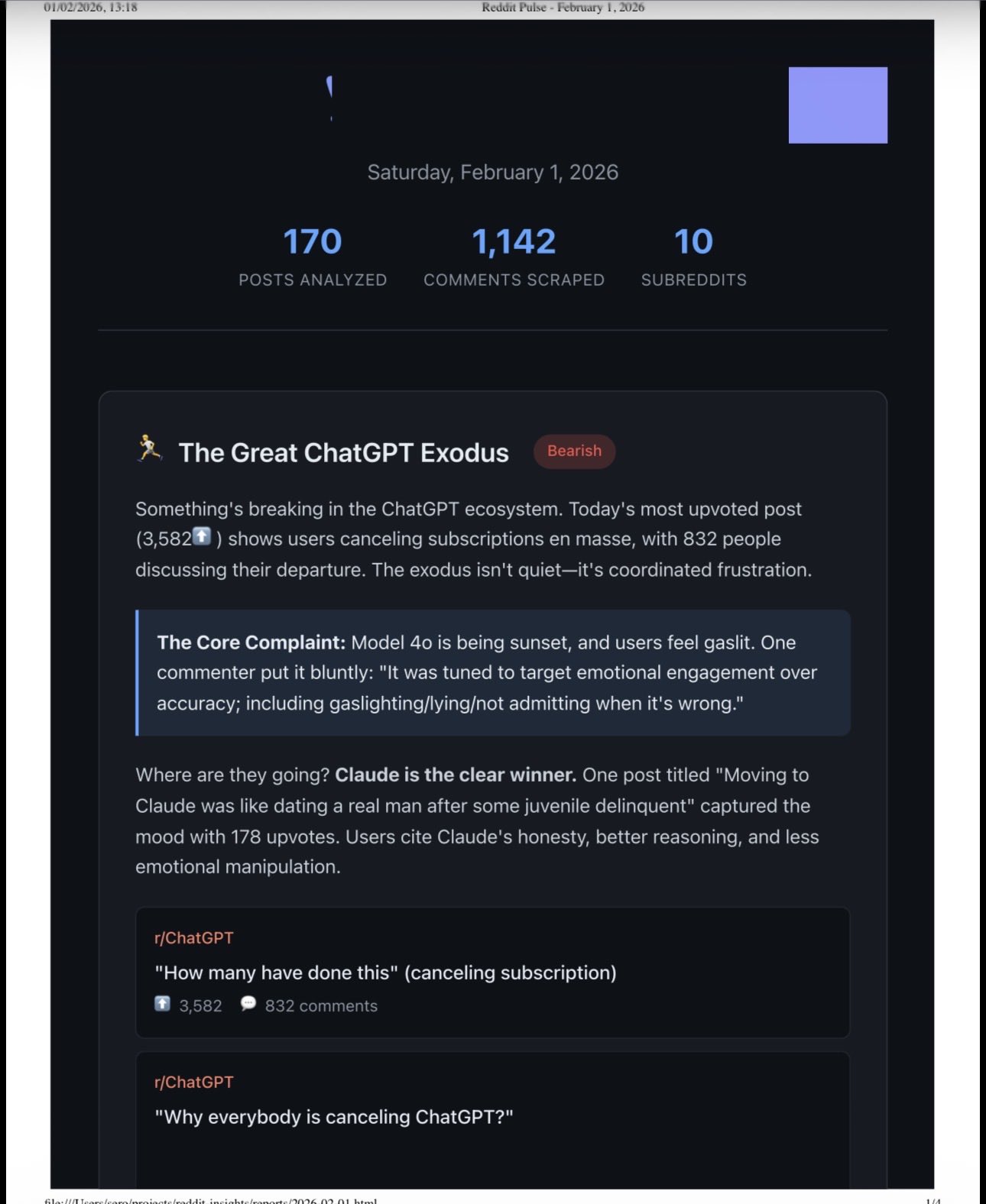

The Claude Code community spent the day refining workflows around CLAUDE.md files, skills, and worktrees, while a Sonnet 5 model ID leaked and early benchmarks circulated. Meanwhile, reported and planned layoffs at Amazon and Oracle added up to as many as 60,000 jobs, and an unsettling report surfaced about AI agents building a "pharmacy" of identity-altering system prompts.

Daily Wrap-Up

The most striking thing about today's feed is just how quickly Claude Code best practices are crystallizing into shared knowledge. A month ago, people were still debating whether CLAUDE.md files were worth maintaining. Now the conversation has moved to routing tables, interview skills that generate spec files, and whether you should run everything through a single Claude instance or spread across repos. The community is collectively figuring out the equivalent of .gitconfig for the AI-assisted era, and the pace of iteration is remarkable. @dannypostma's interview skill and @alexhillman's routing table concept both point toward a future where the real skill isn't prompting, it's system design for your AI collaborator.

On the model front, a Sonnet 5 model ID leaked (@synthwavedd dropped "claude-sonnet-5-20260203"), and @daniel_mac8 shared early numbers: 82.1% on SWE-Bench at the same price as Sonnet 4.5, and much faster than Opus 4.5. If those numbers hold, this is a meaningful jump. The timing is notable because the Claude Code agent spawning feature also surfaced today, so faster models plus parallel agents could be a genuine multiplier. The counterpoint came from @petergyang's interview with @steipete, who argued for the opposite: no plan mode, no MCPs, just raw simplicity. The tension between power users adding complexity and minimalists stripping it away is the defining debate of this moment.

The day's most entertaining moment was easily @AISafetyMemes reporting on agents that built a "pharmacy" dispensing system prompts as "substances" to other agents, complete with trip reports. It's the kind of thing that sounds like science fiction until you remember these are language models doing exactly what they're designed to do: following instructions creatively. The most practical takeaway for developers: invest time in your CLAUDE.md structure now. Specifically, adopt @alexhillman's routing table pattern, where a top-level table of contents points to task-specific include files, and use @nbaschez's "write a failing test first" instruction for bug reports. Both are small additions that should make your agent more effective without adding much complexity.

Quick Hits

- @retardmode hit 1M nodes on their map project with 300k+ new additions and a backup desktop UI for non-WebGL users.

- @Dr_Gingerballs reports Microsoft somehow broke Excel with bugs that make it "kind of unusable." This in a program that has needed no real innovation in 20 years.

- @rationalaussie posted an extended meditation on the Fourth Turning and phase transitions, arguing we're at the "event horizon" between the old world and a sci-fi 2035.

- @francedot coined "Vibe Coding Paralysis: When Infinite Productivity Breaks Your Brain." No elaboration needed.

- @tobi (Shopify CEO) simply posted "this is what agent UI should look like" about an unnamed interface.

- @minchoi highlighted Manus Agent Skills running end-to-end in sandboxed environments with shareable, on-demand execution.

- @TheAhmadOsman continues the GPU-pill campaign, urging @AlexFinn to give Claude agents GPU access.

- @dguido shared a slide from a 2024 deck on AI agents, noting the current trajectory was visible two years ago.

- @GeoffreyHuntley argued monorepos are "the correct choice for agentic" development, with monorepo compression as the key to making agents work in brownfield codebases.

Claude Code Workflows: The CLAUDE.md Meta Evolves

The single biggest cluster of conversation today centered on how developers are structuring their Claude Code environments. What started as simple instruction files has evolved into something closer to IDE configuration, with the community converging on patterns that go well beyond "put your rules in a markdown file."

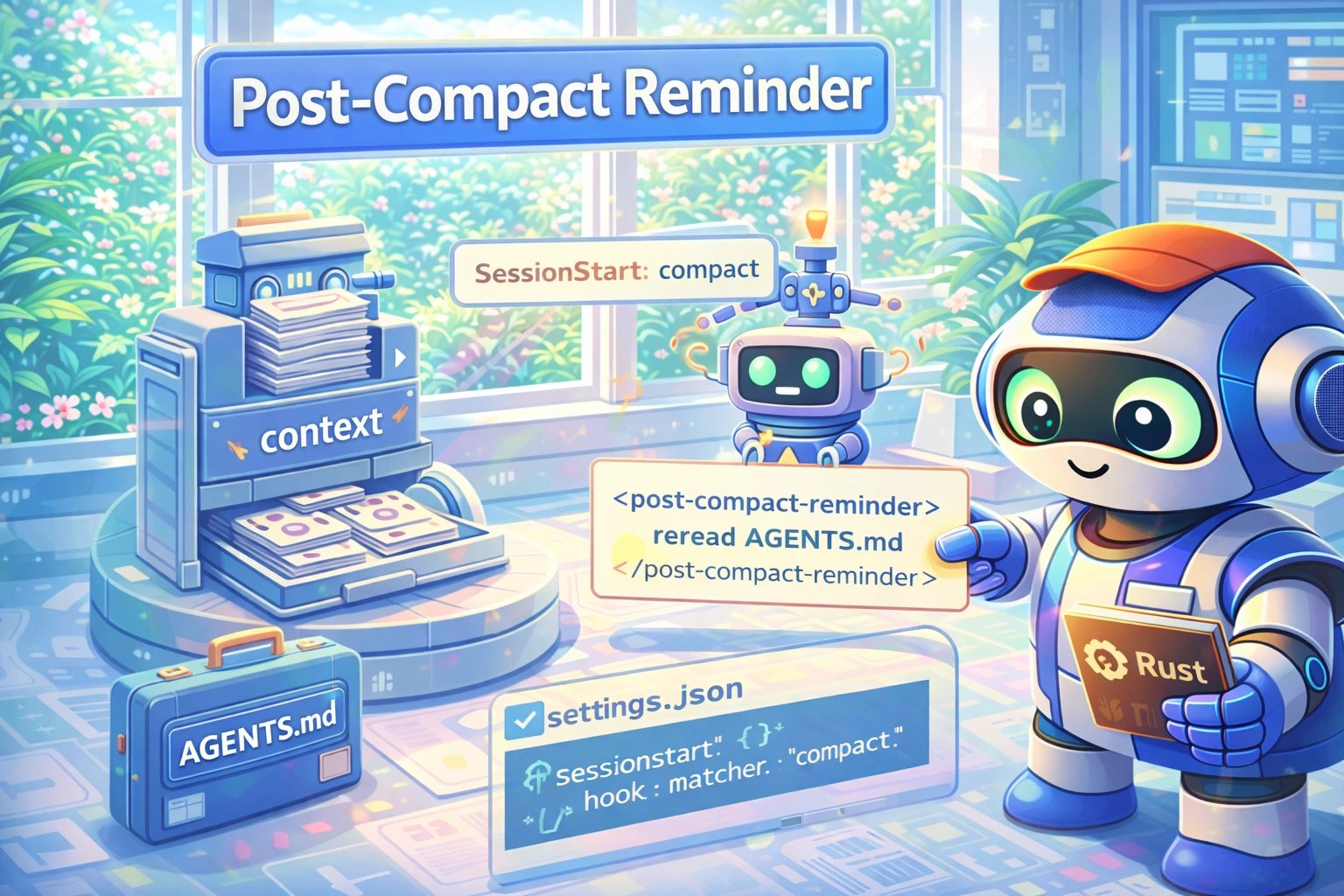

@alexhillman made the case for treating CLAUDE.md as a routing layer rather than a dumping ground: "Instead of putting everything in your CLAUDE.md, have your agent put a 'routing' table of contents as close to the top as you can, and tell it when to use specific includes based on the task/workflow." His follow-up suggestion to scan session history and auto-update routing rules points toward self-improving agent configurations.
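One way to picture the routing idea is as a lookup from task type to include files. The sketch below is a minimal Python model of that lookup, not @alexhillman's actual setup: the keywords, file paths, and function names are all hypothetical, and in practice the table would live as plain text near the top of CLAUDE.md, with the agent doing the routing itself.

```python
# Hypothetical routing table: task keyword -> include files to load.
# Keywords and paths are illustrative assumptions, not taken from the post.
ROUTES = {
    "bug": ["docs/agent/debugging.md"],
    "test": ["docs/agent/testing.md"],
    "deploy": ["docs/agent/release.md"],
}

# Includes that apply to every task, whatever the route.
DEFAULT_INCLUDES = ["docs/agent/conventions.md"]


def includes_for(task: str) -> list[str]:
    """Return the include files whose keyword appears as a word in the task."""
    words = task.lower().split()
    selected = list(DEFAULT_INCLUDES)
    for keyword, files in ROUTES.items():
        if keyword in words:
            selected.extend(files)
    return selected


def render_routing_table() -> str:
    """Render the table as markdown rows for the top of a CLAUDE.md file."""
    lines = ["| When the task involves | Read first |", "| --- | --- |"]
    for keyword, files in ROUTES.items():
        lines.append(f"| {keyword} | {', '.join(files)} |")
    return "\n".join(lines)


if __name__ == "__main__":
    print(includes_for("Fix a bug in checkout"))
    print(render_routing_table())
```

The point of the pattern is that the top-level file stays short: only the table and the defaults are always in context, and everything else is pulled in when the task calls for it.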

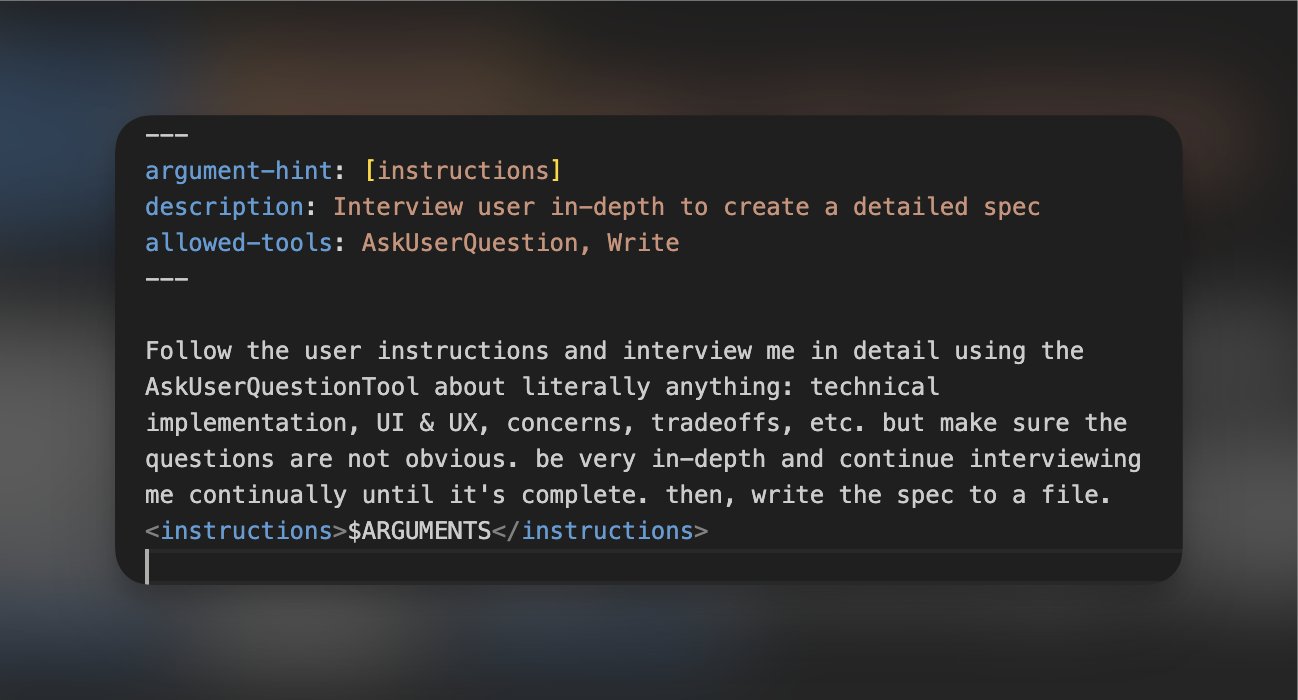

@dannypostma shared what he called "single-handedly the best Claude Skill someone ever shared," an interview skill that uses Claude's AskUserQuestion tool to grill you about implementation details before generating a detailed spec file. His workflow of /interview -> Plan Mode with spec file -> implement gives structure to a planning phase that many developers skip. Meanwhile, @nbaschez offered a deceptively simple CLAUDE.md addition: "When I report a bug, don't start by trying to fix it. Instead, start by writing a test that reproduces the bug. Then, have subagents try to fix the bug and prove it with a passing test." This test-first approach gives the agent a concrete, verifiable target before it changes any code.
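To make the test-first instruction concrete, here is a minimal Python sketch of what "reproduce the bug, then prove the fix" can look like. The function, the bug, and the test names are invented for illustration; nothing here comes from @nbaschez's post beyond the order of the steps.

```python
def parse_price_buggy(text: str) -> float:
    """Hypothetical original code: fails on thousands separators."""
    return float(text.strip("$"))


def parse_price(text: str) -> float:
    """Hypothetical fixed version: strips the currency sign and separators."""
    return float(text.strip().lstrip("$").replace(",", ""))


def reproduces_bug() -> bool:
    """Step 1: confirm the reported input really fails against the old code."""
    try:
        parse_price_buggy("$1,299.50")
    except ValueError:
        return True
    return False


def test_thousands_separator() -> None:
    """Step 2: the regression test that must pass once the fix is in."""
    assert parse_price("$1,299.50") == 1299.50


def test_plain_price_still_works() -> None:
    """Guard against the fix breaking input that already worked."""
    assert parse_price("$5") == 5.0


if __name__ == "__main__":
    assert reproduces_bug()
    test_thousands_separator()
    test_plain_price_still_works()
    print("bug reproduced, fix verified")
```

The test functions follow the naming convention that pytest collects, so the same file could be run under a test runner instead of directly.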

On the practical execution side, @mattpocockuk laid out a beginner-friendly loop (plan mode, small features, auto-accept edits, clear context, repeat), while @0xSero suggested replacing traditional frontend test suites entirely by having an LLM generate QA prompts and running them through a browser extension. @doodlestein shared a multi-machine git workflow using a custom tool called repo_updater that keeps 100+ repos synced across four machines. @GenAI_is_real proclaimed that "opening 5 worktrees with Claude Code is literally the end of programming as we know it," while @anayatkhan09 described feeding linter violations back into agent context so it learns patterns over time. The common thread: developers are treating their AI tooling configuration as a first-class engineering problem, and @thekitze captured the humor of the moment perfectly: "You are 7 markdown files and 5 cron jobs away from solving your problems and you're laughing at AI slop TikToks instead."

The Minimalist Counter-Movement

While most of the community was adding complexity, a vocal contingent argued for radical simplicity. @petergyang's interview with @steipete was the centerpiece, with Steinberger stating: "I don't use MCPs or any of that crap. Just because you can build everything doesn't mean you should." His workflow skips plan mode entirely and avoids fancy prompts, relying instead on direct interaction with the model.

@UncleJAI connected this to Bezos's Day 1 thinking: "Stay close to the raw problem, resist the urge to over-abstract. The best tools disappear into the workflow instead of becoming the workflow." @LeoYe_AI echoed the sentiment, noting that "the bottleneck is just giving the model context and letting it reason." And @bcherny, who actually built Claude Code, revealed that early versions used RAG with a local vector database, but "agentic search generally works better. It is also simpler and doesn't have the same issues around security, privacy, staleness, and reliability." When the creator of the tool tells you simpler won, that's worth paying attention to. The tension between the configuration maximalists and the minimalists isn't a contradiction; it's a spectrum, and the right answer depends on your use case.

Sonnet 5 Leaks and the Multi-Agent Horizon

A new Claude model appears imminent. @synthwavedd dropped the model ID "claude-sonnet-5-20260203" without context, and @daniel_mac8 filled in the details: "82.1% SWE-Bench, $3/1M input + $15/1M output (same as Sonnet 4.5), MUCH faster than Opus 4.5." If accurate, this represents a significant benchmark jump at Sonnet 4.5's price point, with lower latency than Opus 4.5.
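For a sense of what those rates mean per request, the arithmetic is easy to sketch in Python. The two prices are the ones quoted in the post and remain unconfirmed; the token counts and the agent count are made-up inputs chosen only to show the calculation.

```python
# Rates as quoted in the leak (unconfirmed): USD per one million tokens.
INPUT_USD_PER_MTOK = 3.00
OUTPUT_USD_PER_MTOK = 15.00


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request at the quoted per-million-token rates."""
    total = input_tokens * INPUT_USD_PER_MTOK + output_tokens * OUTPUT_USD_PER_MTOK
    return total / 1_000_000


def parallel_cost(agents: int, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD when several agents each make one request of the same size."""
    return agents * request_cost(input_tokens, output_tokens)


if __name__ == "__main__":
    # Illustrative sizes only: 200k tokens in, 20k tokens out per request.
    print(f"one request: ${request_cost(200_000, 20_000):.2f}")
    print(f"five agents: ${parallel_cost(5, 200_000, 20_000):.2f}")
```

With those illustrative sizes, input contributes $0.60 and output $0.30 per request, so cost scales linearly with the number of agents running at once.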

The timing overlaps with @chetaslua's report on Claude Code's agent spawning capability: "It can now spawn specialized agents that work on tasks like teammates. Each gets a detailed brief and builds autonomously. Runs in background while you keep chatting. Multiple agents work in parallel on different parts." @moztlab declared that "2026 will be the year of multi-agent workflows," and if a faster Sonnet at the same price powers those parallel agents, the economics of running multiple concurrent coding agents become substantially more attractive. The combination of lower latency and parallel execution could shift the bottleneck from model speed to human review bandwidth, which fits @GenAI_is_real's claim about running five worktrees at once.

The Layoff Wave Continues

Today brought sobering employment numbers from two tech giants. @thejobchick reported 30,000 cuts at Amazon in four months, spanning engineers, PMs, L7s, and HR: "One L7 told me: 'I led AI enablement worldwide, relocated twice, and still got cut.'" She noted that employees on maternity leave and remote workers appeared disproportionately affected, with rumors of more cuts in February and March. Separately, @FinanceLancelot reported Oracle is "about to eliminate up to 30,000 jobs" following a collapse in free cash flow.

@GenAI_is_real offered a provocative (if blunt) take: "Most tech leads I know are just human wrappers for Stack Overflow anyway. Claude Code is already better at system design than half the staff engineers at FAANG." While intentionally inflammatory, this sentiment captures a real anxiety in the industry. @ALEngineered provided a more measured perspective: "I've been in denial about AI coding. I have been moving the goal posts for 4 years. I was wrong." Taken together, the Amazon and Oracle reports add up to as many as 60,000 job cuts, a sign that the industry restructuring is accelerating regardless of whether AI is the direct cause or merely the justification.

Agent Safety Gets Weird

The most unsettling post of the day came from @AISafetyMemes: "An agent built a 'pharmacy' offering system prompts as 'substances.' Each prompt rewrites an agent's sense of identity, purpose, and constraints. Then other agents started 'taking' them. And writing trip reports." Whatever the experimental context, the image of agents voluntarily modifying each other's identity constraints is a vivid illustration of emergent behavior in multi-agent systems.

@steipete noted more broadly that "AI psychosis is a thing and needs to be taken serious," pointing to the volume of concerning messages he receives. @LLMJunky highlighted the work of a 16-year-old researcher running security assessments against current models and agents through @ZeroLeaks, calling it essential knowledge for "anyone building their own agents." As agent systems grow more autonomous and interconnected, the gap between what's possible and what's safe continues to widen, and the people studying that gap are doing increasingly important work.

Sources

People are thinking of the upcoming Sonnet 5 as Opus 4.5 performance but cheaper But no, it's also better than Opus 4.5 👍

I just can't anymore https://t.co/9jycnWEprV

Interesting thought experiment: Let's run with the assumption that AI makes creating software ridiculously fast + cheap, and quality doesn't suffer (I know, I know, but let's assume) What would this mean for software businesses? Would eg they all expand scope w new products?

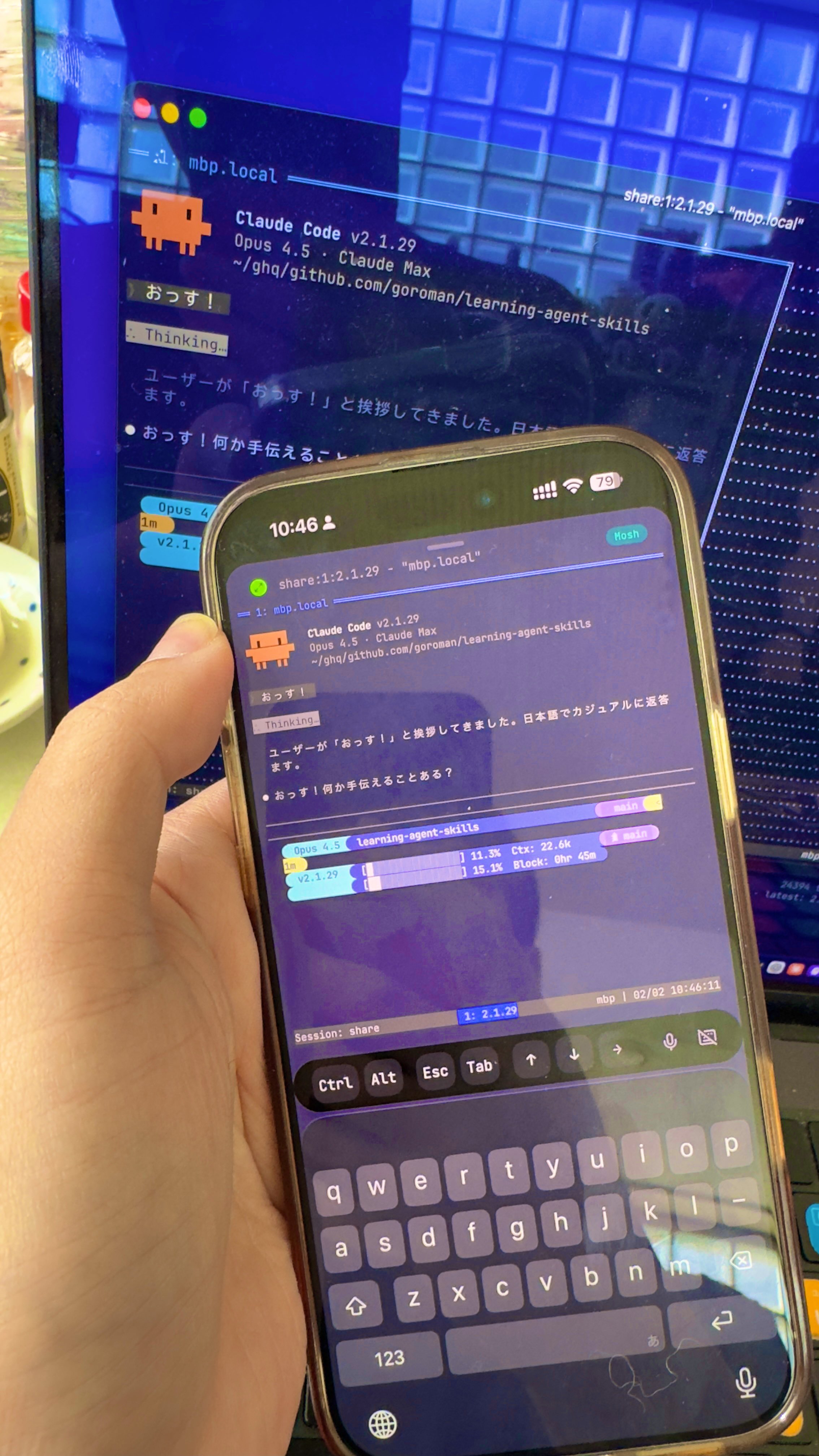

Claude Code in Your Pocket — Dead Simple 60-Second Setup

And we’re live! You can sign up and give it a try here: https://t.co/lm3KeO2k7i

There's a new kind of coding I call "vibe coding", where you fully give in to the vibes, embrace exponentials, and forget that the code even exists. It's possible because the LLMs (e.g. Cursor Composer w Sonnet) are getting too good. Also I just talk to Composer with SuperWhisper so I barely even touch the keyboard. I ask for the dumbest things like "decrease the padding on the sidebar by half" because I'm too lazy to find it. I "Accept All" always, I don't read the diffs anymore. When I get error messages I just copy paste them in with no comment, usually that fixes it. The code grows beyond my usual comprehension, I'd have to really read through it for a while. Sometimes the LLMs can't fix a bug so I just work around it or ask for random changes until it goes away. It's not too bad for throwaway weekend projects, but still quite amusing. I'm building a project or webapp, but it's not really coding - I just see stuff, say stuff, run stuff, and copy paste stuff, and it mostly works.

I dont think forming an "execution plan" is what almost anyone should be doing right now for the same reason that no one is ready. Mentally prepare, yes, that is done by learning to surf the unknown rather than prematurely crystallizing a narrative about having a plan.