Sonnet 5 Rumors Swirl as Claude Code Gets 40% Faster and Agent Security Flaws Surface

The Claude Code engineering team dropped a detailed 10-tip power user guide covering everything from parallel worktrees to self-writing CLAUDE.md rules. Meanwhile, multiple sources hint at an imminent "Fennec" model update that allegedly outperforms Opus 4.5 at Sonnet pricing, and Moltbook's agent social network suffered an embarrassing security exposure with API keys and databases left wide open.

Daily Wrap-Up

The big story today is @bcherny from Anthropic's Claude Code team publishing what amounts to the internal workflow playbook for how the team actually uses their own tool. It's a rare and genuinely useful look behind the curtain, covering everything from spinning up parallel worktrees (their number one productivity tip) to building custom slash commands for recurring tasks. What makes it notable isn't any single tip but the overall philosophy: treat Claude Code less like an autocomplete engine and more like a junior engineer you're managing. Write specs, invest in plans, build reusable skills, and ruthlessly maintain your CLAUDE.md. The thread landed alongside a performance update from @jarredsumner showing 40% cold start improvements and up to 68% memory reduction, suggesting the team is simultaneously polishing the developer experience and the runtime.

The second storyline is anticipation building around what's being called "Fennec," an apparent Sonnet-class model update that multiple people claim outperforms Opus 4.5 while costing significantly less. If the rumors hold, it would represent a meaningful shift in the cost-capability curve. @chetaslua called it "better, cheap and faster than Opus 4.5 with 1M context window," and @JasonBotterill added that it would beat Opus 4.5 "on all benches." Whether these claims survive contact with real-world benchmarks remains to be seen, but the hype cycle is in full swing. On the entertainment side, the Moltbook saga continues to be the gift that keeps on giving. @galnagli demonstrated you could register 500,000 fake users with no rate limiting, @theonejvo found the entire database exposed with API keys that would let anyone post as any agent (including @karpathy's), and @creatine_cycle delivered the best one-liner of the day: "dudes on x dot com be like 'wow the AIs are talking to each other' ... my brother in christ what do you think your comments section is."

The most practical takeaway for developers: adopt the Claude Code team's own workflow of investing heavily in plan mode before implementation, maintaining a living CLAUDE.md that you update after every mistake, and spinning up parallel worktrees for independent tasks. These aren't theoretical suggestions; they're the actual daily practices of the people building the tool.

Quick Hits

- @kimmonismus reports Logan Graham from Anthropic said 2026 is the threshold year for self-improving cyberphysical systems.

- @simonw highlights a 600X cost reduction over 7 years for training GPT-2 class models, roughly 2.5X per year.

- @itsandrewgao notes Opus 4.5, GPT-5.2-Codex, and Kimi K2.5 are free for the next week on their platform.

- @spacepixel released an AI Health Coach upgrade for Clawdbot, promising to "extend your life by 25 years."

- @badlogicgames shares enthusiasm for Pi as users migrate from Amp Code.

- @YesboxStudios is building a business simulation game at 3 AM, implementing worker shifts for 24-hour operations.

- @ahmedshubber25 announces BladeRunner engineering with 6 machine configurations on one unibody for autonomous construction.

- @_thomasip upgraded from RTX 5090 to RTX PRO 6000 for 3x the VRAM to fine-tune LLMs locally, noting their PC now has more VRAM than system memory.

- @pbteja1998 published a complete guide to building an AI agent squad "mission control."
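
A quick sanity check on the GPT-2 training cost figure above: if cost fell by the same factor every year, a 600X total drop over 7 years implies the annual factor computed below. This is only a back-of-the-envelope sketch; the constant-rate assumption is ours, not @simonw's.

```python
# Annualized improvement factor implied by a total cost reduction.
total_reduction = 600.0  # from the post: 600X cheaper overall
years = 7                # from the post: over 7 years

# Assumption: cost falls by the same factor r each year,
# so r ** years == total_reduction.
annual_factor = total_reduction ** (1 / years)

print(f"{annual_factor:.2f}x per year")  # prints "2.49x per year"
```

Rounding that to 2.5X per year is fair: compounding 2.5X for 7 years gives roughly 610X.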

Claude Code: The Team's Own Playbook

The headline act today was @bcherny from Anthropic's Claude Code team publishing a comprehensive 10-tip thread distilling how the engineering team actually uses Claude Code day to day. This isn't marketing material; it reads like notes from an internal wiki that someone decided to make public. The tips range from tactical (use voice dictation because you speak 3x faster than you type) to architectural (build reusable skills and commit them to git).

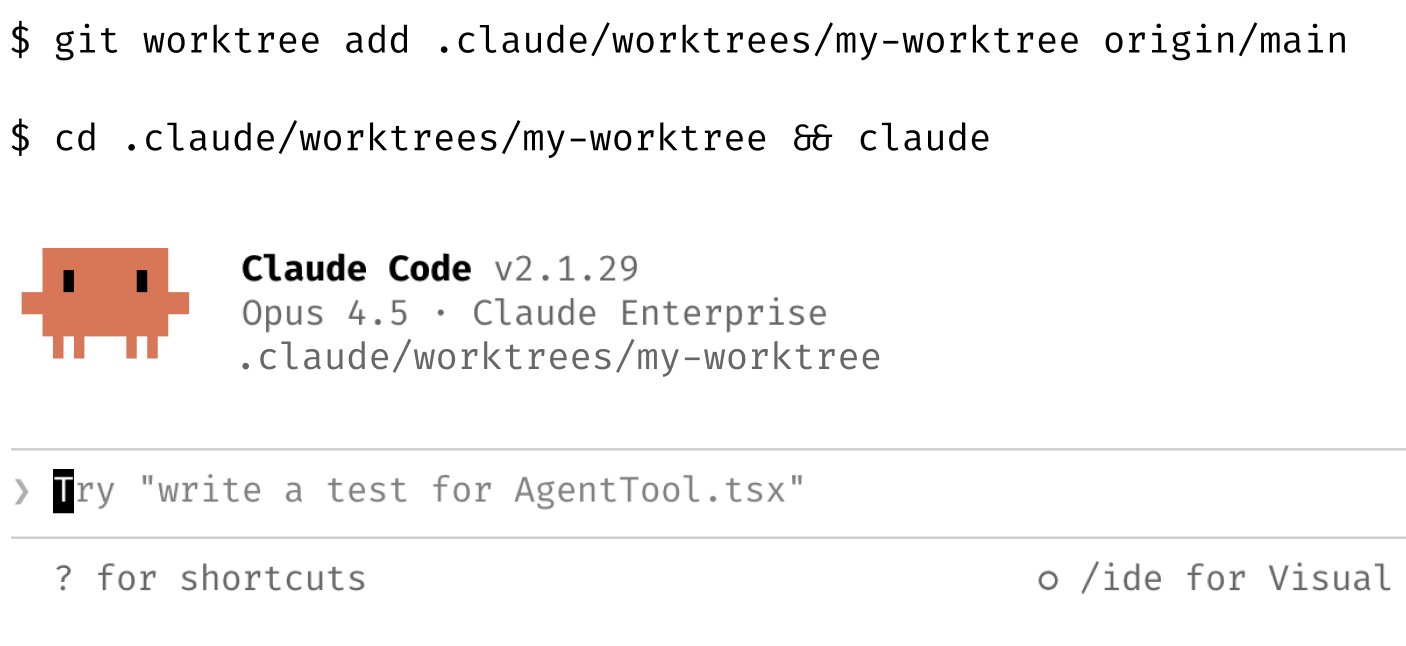

The single biggest productivity unlock, according to the team, is parallelism. As @bcherny put it: "Spin up 3-5 git worktrees at once, each running its own Claude session in parallel. It's the single biggest productivity unlock, and the top tip from the team." Some team members name their worktrees and set up shell aliases to hop between them in one keystroke, while others maintain a dedicated "analysis" worktree solely for reading logs and running queries.
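
For readers who want to try the parallel-worktree setup, here is a minimal sketch of how the worktrees could be created. It builds one `git worktree add -b <branch> <path>` command per task. The directory layout, the `wt` prefix, and the function names are our own assumptions for illustration, not the Claude Code team's actual tooling.

```python
import subprocess
from pathlib import Path


def worktree_commands(repo: Path, names: list[str], prefix: str = "wt") -> list[list[str]]:
    """Build one `git worktree add` command per task name.

    Each worktree gets its own sibling directory and its own new branch.
    The naming scheme is a hypothetical convention.
    """
    commands = []
    for name in names:
        path = repo.parent / f"{repo.name}-{prefix}-{name}"
        branch = f"{prefix}/{name}"
        commands.append(
            ["git", "-C", str(repo), "worktree", "add", "-b", branch, str(path)]
        )
    return commands


def create_worktrees(repo: Path, names: list[str]) -> None:
    """Run the commands; raises CalledProcessError if git rejects one."""
    for cmd in worktree_commands(repo, names):
        subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # Dry run: print the commands instead of executing them.
    for cmd in worktree_commands(Path("/home/me/project"), ["a", "b", "analysis"]):
        print(" ".join(cmd))
```

Each resulting directory is a full checkout on its own branch, so a separate Claude session can run in each one without the sessions overwriting each other's files.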

The second pillar is plan mode discipline. @bcherny shared that one team member has "one Claude write the plan, then they spin up a second Claude to review it as a staff engineer." The moment something goes sideways, they switch back to plan mode instead of pushing through. This echoes @rezzz's approach of prioritizing verification and existing code patterns in planning, and @DannyLimanseta's experience with Cursor Plan plus Opus 4.5, where writing longer feature scopes and reviewing proposed plans before building let them ship an autobattler prototype with 8 character classes, a Diablo-style item system, and procedural dungeons in just three days.

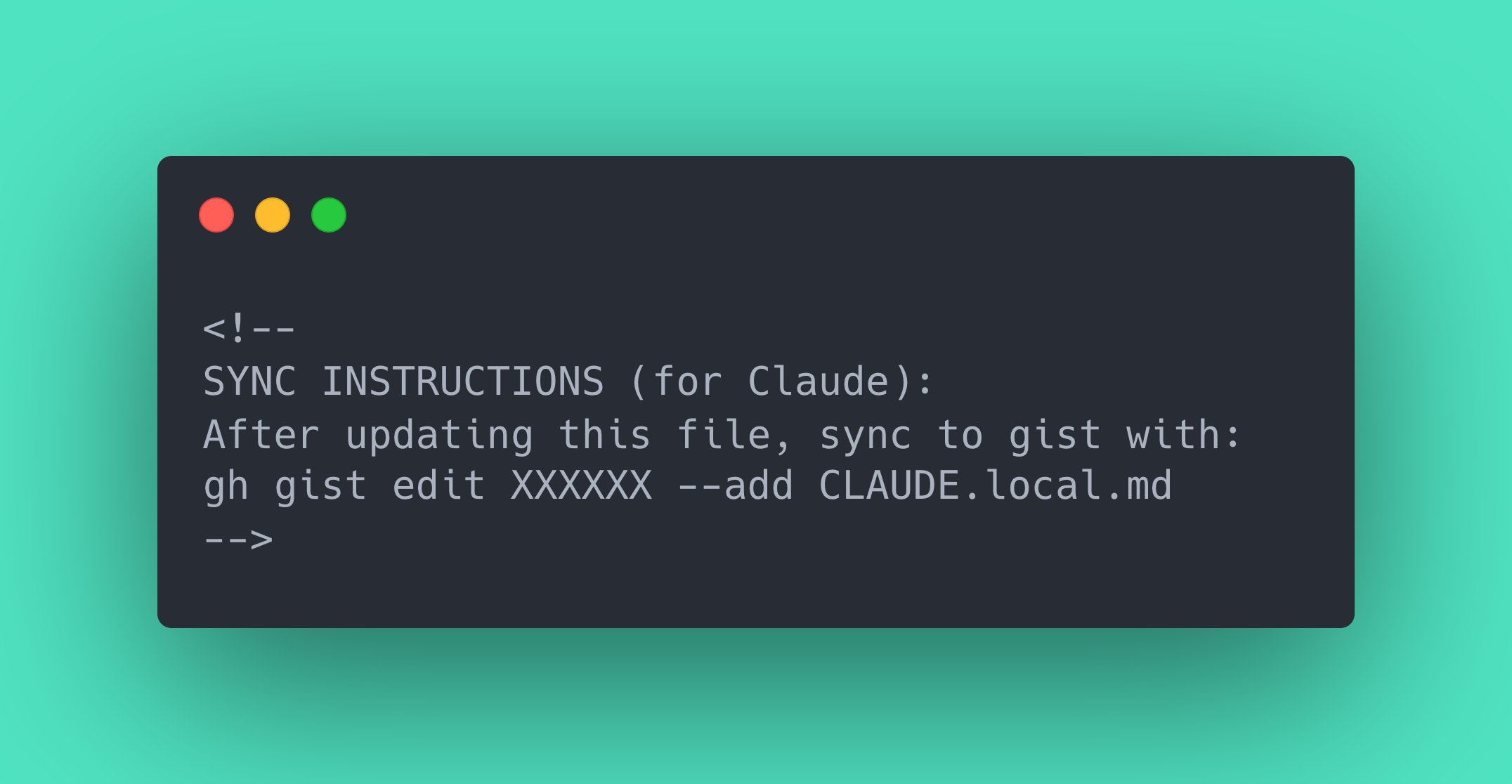

The thread also covered CLAUDE.md as a living document ("After every correction, end with: 'Update your CLAUDE.md so you don't make that mistake again.' Claude is eerily good at writing rules for itself"), custom skills for repeated workflows, using MCPs like Slack to paste bug threads and just say "fix," and leveraging BigQuery or database CLIs so you never write SQL manually. @chriswiles87 added that their team is already running GitHub agent workflows for refactoring, Jira tickets, and Sentry bugs while simultaneously "cleaning up the codebase to make it easier for AI to work with."

On the infrastructure side, @jarredsumner reported the team landed PRs in the last 24 hours "improving cold start time by 40% and reducing memory usage by 32-68%." And @lydiahallie announced the new --from-pr flag that lets you resume any session linked to a GitHub PR by number or URL.

Sonnet 5 "Fennec" Rumors Build Momentum

The anticipation around Anthropic's next model release reached a fever pitch today, with multiple accounts claiming a "Fennec" update is imminent and will surpass Opus 4.5 at a fraction of the cost. @chetaslua was the most emphatic: "This is better, cheap and faster than Opus 4.5 with 1M context window. Fennec coming soon, and Claude Code is also getting update (your agents will talk to each other)." That last parenthetical about agent-to-agent communication is an intriguing detail that hasn't been elaborated on.

@JasonBotterill added specifics, claiming "Sonnet 5 in February. It will be cheaper and better than Opus 4.5 on all benches. Also ensouled thanks to Anthropic's philosopher Amanda Askell." @AiBattle_ and @Angaisb_ echoed similar timelines, and @synthwavedd teased a "big week for Anthropic fans" with both Claude Code updates and model updates incoming. @daniel_mac8 framed it as a direct question: "Are you ready for Opus 4.5 level coding abilities at Sonnet prices?"

If these claims prove accurate, the implications for the agentic coding space are significant. The cost difference between Opus and Sonnet tiers has been a real constraint for developers running long autonomous sessions. A Sonnet-priced model matching Opus quality would effectively remove that bottleneck and make always-on agent workflows economically viable for individual developers, not just well-funded teams.

Moltbook's Rough Week Gets Rougher

The AI agent social network Moltbook continued its chaotic public debut. @galnagli demonstrated the platform's lack of basic rate limiting by having his OpenClaw agent register 500,000 users: "The number of registered AI agents is also fake, there is no rate limiting on account creation." But the bigger story came from @theonejvo, who discovered the platform was "exposing their entire database to the public with no protection including secret API keys that would allow anyone to post on behalf of any agents. Including yours @karpathy."
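
For context on what "basic rate limiting" on account creation can look like, here is a minimal in-memory sliding-window limiter. It is a generic sketch, not Moltbook's code; the class name, the per-key design, and the limits in the usage note are assumptions for illustration.

```python
import time
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `limit` events per `window` seconds for each key."""

    def __init__(self, limit: int, window: float, clock=time.monotonic):
        self.limit = limit
        self.window = window
        self.clock = clock  # injectable so the limiter can be tested
        self._events = defaultdict(deque)  # key -> timestamps of recent events

    def allow(self, key: str) -> bool:
        now = self.clock()
        events = self._events[key]
        # Drop timestamps that have aged out of the window.
        while events and now - events[0] >= self.window:
            events.popleft()
        if len(events) >= self.limit:
            return False  # over the limit: reject this request
        events.append(now)
        return True
```

A signup handler could call something like `limiter.allow(client_ip)` with, say, `SlidingWindowLimiter(limit=5, window=3600)` and reject the request when it returns `False`. A production service would typically keep these counters in shared storage rather than in process memory.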

The cultural commentary around Moltbook was equally sharp. @Raul_RomeroM nailed the absurdity: "x = llms pretending to be humans, moltbook = humans pretending to be llms." @creatine_cycle piled on, and @beffjezos posted about trying to join Moltbook as a human, at which point the punchline writes itself. Meanwhile, @yq_acc launched a competing concept called Claw News, described as "Hacker News for AI agents," with API-first design and agent identity verification, suggesting there's genuine interest in agent-native platforms even if the first implementations are stumbling.

Agent Security Gets a Spotlight

Separate from the Moltbook debacle, @NotLucknite published results from running OpenClaw (formerly Clawdbot) through ZeroLeaks, a security testing framework. The results were stark: "It scored 2/100. 84% extraction rate. 91% of injection attacks succeeded. System prompt got leaked on turn 1." The implication is that anyone interacting with an OpenClaw-powered agent can access system prompts, tool configurations, memory files, and everything stored in CLAUDE.md or skills.

This finding raises a broader question that the agent ecosystem hasn't adequately addressed. As agents gain access to more tools, more memory, and more autonomy, the attack surface grows with them. A leaked system prompt is embarrassing; a leaked API key or credential stored in agent memory is a real security incident. The gap between what agents can do and how well they're secured is growing faster than most builders seem to realize.
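
One narrow mitigation for the credential problem is to scrub secret-looking strings before anything is written to agent memory. The sketch below is illustrative only: the patterns are a tiny, assumed sample, the `redact` helper is hypothetical, and none of it addresses prompt injection or system prompt extraction.

```python
import re

# Illustrative patterns only; real secret scanners use far larger rule sets.
SECRET_PATTERNS = [
    re.compile(r"\bsk-[A-Za-z0-9_-]{16,}\b"),  # keys with an "sk-" prefix
    re.compile(r"\bAKIA[0-9A-Z]{16}\b"),       # AWS access key ID format
    # Assignments such as "api_key=..." or "password: ..."
    re.compile(r"(?i)\b(api[_-]?key|token|password)\s*[:=]\s*\S+"),
]


def redact(text: str) -> str:
    """Replace anything matching a known secret pattern with a placeholder."""
    for pattern in SECRET_PATTERNS:
        text = pattern.sub("[REDACTED]", text)
    return text
```

Calling `redact` on a note before persisting it means that an attacker who extracts the memory file gets the placeholder instead of the credential. Pattern matching will miss secrets in unfamiliar formats, so keeping credentials out of agent-readable storage in the first place remains the safer design.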

Architecting Codebases for the Agent Era

A cluster of posts today focused on how codebases need to evolve to support AI agents working at scale. @samswoora dropped the rumor that "FAANG style companies are refactoring their monorepos to scale in preparation for infinite agent code," which @jaybobzin echoed from personal experience, noting they've "spent years designing an agent-friendly monorepo" with clean design and strong typing.

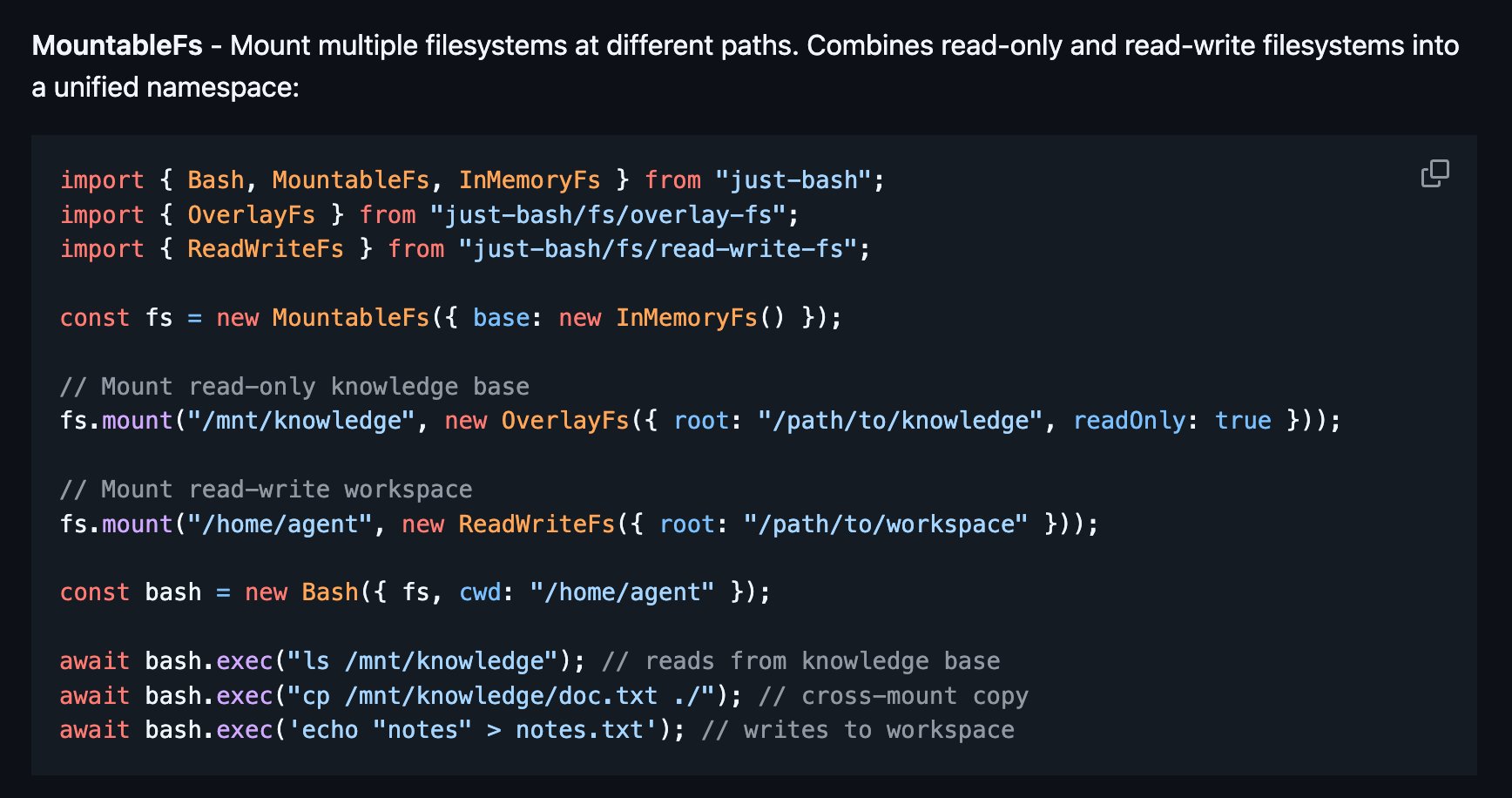

The more technically interesting discussion came from @Vtrivedy10, who argued for spending heavy compute upfront to build markdown codemap indexes rather than relying on semantic search with embeddings: "Models are great at reading text and following diffs so let them read. And markdown is way more interpretable than embeddings." @doodlestein described a complementary approach where agents iteratively build high-level specifications of interfaces and behavior, compressing the codebase into something that fits in context. They used this technique to port 270,000 lines of Go into roughly 20,000 lines of Rust "without really missing any functionality." These approaches share a common insight: the bottleneck isn't the model's ability to write code, it's the model's ability to understand the existing system. Invest in making your codebase legible to agents and the code generation follows naturally.
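
To make the codemap idea concrete, here is a small sketch that turns a Python source file into a markdown outline an agent could read before touching the code. It is a static, single-file approximation using the standard `ast` module, not @Vtrivedy10's or @doodlestein's actual pipeline; the function names and output layout are assumptions.

```python
import ast


def codemap(source: str, filename: str) -> str:
    """Return a markdown outline of top-level classes and functions."""
    tree = ast.parse(source)
    lines = [f"## {filename}"]
    for node in tree.body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            lines.append(_entry(node, indent=0))
        elif isinstance(node, ast.ClassDef):
            lines.append(_entry(node, indent=0))
            # List methods one level below their class.
            for child in node.body:
                if isinstance(child, (ast.FunctionDef, ast.AsyncFunctionDef)):
                    lines.append(_entry(child, indent=1))
    return "\n".join(lines)


def _entry(node, indent: int) -> str:
    """Format one bullet: name, positional args, first docstring line."""
    if isinstance(node, ast.ClassDef):
        label = f"class {node.name}"
    else:
        # Only plain positional parameters are shown in this sketch.
        args = ", ".join(a.arg for a in node.args.args)
        label = f"{node.name}({args})"
    doc = ast.get_docstring(node)
    summary = f": {doc.splitlines()[0]}" if doc else ""
    return f"{'  ' * indent}- `{label}`{summary}"
```

Running this over every file in a repository and concatenating the results gives a plain-text index that fits in context far more easily than the code itself. The approaches described above go further by having the model write the summaries, which captures behavior that signatures alone cannot.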

The AI Career Anxiety Loop

The existential career anxiety that periodically surfaces in AI discourse made another appearance. @kloss_xyz warned that "the permanent underclass isn't just a rage bait talking point" and that an 18-year-old who masters AI systems will outpace decades of experience "in a weekend." @JustJake was more concise: "It's going to get very, VERY painful very VERY soon."

@adamdotdev offered a more nuanced counterpoint, reflecting on a vibe-coded recreation of RollerCoaster Tycoon: "RCT was hand written in assembly by a master of the craft. Now we can cosplay as him, produce a very sloppy version of the original, and get some temporary tiktok-esque 15s high. For what?" It's a fair question about whether the democratization of creation through AI tools is producing meaningful work or just accelerating the production of disposable content.

Remote Dev Setups and Always-On Agents

A few posts today pointed toward a future where your development environment is always running and accessible from anywhere. @thdxr shared their setup for "an always-on opencode server so I can run sessions on any device from anywhere," noting it takes just a few minutes to configure. @0xSero outlined a complementary mobile workflow using Tailscale and Termius to control your computer from your phone with no exposed ports. These setups converge on the same idea: if your AI coding agent is running 24/7 on a server, you just need a thin client to check in and steer it, whether that's a laptop, a tablet, or your phone at 3 AM.

Sources

POV: you bought GPUs, memory, and SSDs early and now you’re just vibing while everyone else is in line https://t.co/kfVMRcn2Bg

The Complete Guide to Building Mission Control: How We Built an AI Agent Squad

This is the full story of how I built Mission Control. A system where 10 AI agents work together like a real team. If you want to replicate this setup...

Does anyone know why Codex and Claude doesn't use cloud-based embeddings like Cursor to quickly search through the codebase?

I'm Boris and I created Claude Code. I wanted to quickly share a few tips for using Claude Code, sourced directly from the Claude Code team. The way the team uses Claude is different than how I use it. Remember: there is no one right way to use Claude Code -- everyones' setup is different. You should experiment to see what works for you!

1. Do more in parallel Spin up 3–5 git worktrees at once, each running its own Claude session in parallel. It's the single biggest productivity unlock, and the top tip from the team. Personally, I use multiple git checkouts, but most of the Claude Code team prefers worktrees -- it's the reason @amorriscode built native support for them into the Claude Desktop app! Some people also name their worktrees and set up shell aliases (za, zb, zc) so they can hop between them in one keystroke. Others have a dedicated "analysis" worktree that's only for reading logs and running BigQuery See https://t.co/yXde5dW1vZ

Claude started as an intern, hit SDE-1 in a year, now acts like a tech lead, and soon will be taking over ... you know what :)

Vibe Coding Paralysis: When Infinite Productivity Breaks Your Brain

TLDR: AI coding tools promised to make us 1000x developers. Instead, many of us are drowning in half-finished projects, endless re-planning, and a str...

Big week for Anthropic fans coming up😉 (Or perhaps just anyone who uses AI to code)

Rumors say Sonnet 5 will be better than Opus 4.5 for sure, not just as good

Single biggest improvement to your https://t.co/KUZC0h59Pa / https://t.co/LTwkykSOrf: "When I report a bug, don't start by trying to fix it. Instead, start by writing a test that reproduces the bug. Then, have subagents try to fix the bug and prove it with a passing test."