Kubernetes RCE Goes Unpatched as Karpathy Declares the 80/20 Flip and Anthropic Ships MCP Apps

A rough day for security as an unpatched Kubernetes RCE vulnerability drops alongside a new React Server Components CVE and hundreds of exposed Claude Code servers. Meanwhile, Karpathy documents his shift to 80% agent-assisted coding over the course of a few weeks, and Anthropic launches MCP Apps to bring interactive tool UIs directly into Claude conversations.

Daily Wrap-Up

Security dominated today's feed in a way that should make every developer uncomfortable. A Kubernetes researcher disclosed a vulnerability allowing arbitrary code execution in every pod in a cluster through a commonly granted "read-only" RBAC permission, and the kicker is that it won't be patched. Separately, a new React Server Components CVE dropped, and researchers found hundreds of Claude Code server instances exposed to the public internet with no authentication. When three unrelated security stories converge in a single day, it's a signal that the industry's velocity is outpacing its security hygiene. The irony of AI tools making us faster while simultaneously expanding our attack surface is not lost on anyone paying attention.

On the more optimistic side, Karpathy published his most detailed account yet of the shift to agent-assisted development, describing an inversion from 80% manual coding in November to 80% agent coding in December. What made the post resonate wasn't the productivity claims but the honesty about the tradeoffs: code quality issues, ego hits, skill atrophy, and the looming "slopacolypse" of 2026. @jamonholmgren offered a complementary perspective grounded in conversations with many experienced developers, arriving at remarkably similar conclusions about where the bottlenecks actually live. Anthropic punctuated the day by launching MCP Apps, turning Claude from a text-in-text-out interface into something that can render interactive UIs from connected tools. The walls between "chat with AI" and "use your software" got meaningfully thinner.

The most practical takeaway for developers: run @GrahamHelton3's cluster audit script against your Kubernetes environments today, update your React dependencies for CVE-2026-23864, and if you're running any AI coding tools as network services, verify they aren't exposed without authentication. Security debt compounds faster than technical debt.

Quick Hits

- @banteg noted that Homebrew has been "uv'd," suggesting the fast Python package installer is making inroads into yet another ecosystem.

- @pleometric is working on ffmpeg-based visual feedback tooling, declaring war on brain rot one frame at a time.

- @kickingkeys dropped a cryptic two-word post: "Narrative Version Control." No context, maximum intrigue.

- @NathanWilbanks_ pitched AGNT as a multi-agent swarm system with CEO/CTO/CFO role agents, genetic learning loops, and browser automation. The feature list reads like an AI startup pitch deck bingo card.

- @folaoftech posted a meme about the humbling experience of switching from AI to actual documentation when the model can't solve your problem. We've all been there.

- @manthanguptaa shared a piece on how Claude Code's memory system works, relevant for anyone building persistent context into their own agent workflows.

- @RTSG_News reported on Xiaomi's fully automated phone factory producing one device per second with zero production workers, operating in complete darkness.

- @aakashgupta made a sharp observation about enterprise SaaS moats: Anthropic employs engineers who could build an HR system in weeks but uses Workday anyway, because the real product isn't software but liability absorption. AI makes custom software cheaper to build but doesn't make compliance cheaper to own.

Security: Three Fronts, Zero Good News

It was a brutal day for infrastructure security. @GrahamHelton3 published research demonstrating that a commonly granted "read-only" RBAC permission in Kubernetes actually enables arbitrary remote code execution in every pod in a cluster, including control plane components like etcd and the API server. The commands aren't logged, and pivoting from pod to node is trivial through privileged containers. Most alarming: this will not be patched.

> "It allows running arbitrary commands in EVERY pod in a cluster using a commonly granted 'read only' RBAC permission. This is not logged and allows for trivial Pod breakout. Unfortunately, this will NOT be patched." — @GrahamHelton3

The researcher published both a detection script and an interactive tutorial for reproduction, which is the responsible move when a vendor declines to fix an issue. Any production cluster running monitoring tools should be audited immediately, since monitoring service accounts tend to accumulate exactly the kind of broad read permissions that enable this attack. The "read-only" label creates a false sense of security that this research thoroughly demolishes.
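As a rough illustration of what such an audit looks for, the sketch below scans the JSON produced by `kubectl get clusterroles -o json` for roles that grant a given verb on a given resource. It is not the researcher's detection script. The function names are hypothetical, the matching ignores `apiGroups` and `resourceNames`, and the `nodes/proxy` value in the usage example is an assumption about which permission is involved; check the original write-up for the exact one.

```python
def rule_grants(rule: dict, resource: str, verb: str) -> bool:
    """Return True if one RBAC rule grants `verb` on `resource`.

    Simplified matching: exact name, the "*" wildcard, and the
    "*/subresource" form. apiGroups and resourceNames are ignored.
    """
    resources = rule.get("resources") or []
    verbs = rule.get("verbs") or []
    verb_ok = "*" in verbs or verb in verbs
    sub = resource.split("/", 1)[1] if "/" in resource else None
    resource_ok = (
        "*" in resources
        or resource in resources
        or (sub is not None and f"*/{sub}" in resources)
    )
    return verb_ok and resource_ok


def roles_granting(role_list: dict, resource: str, verb: str) -> list[str]:
    """Names of roles in a `kubectl ... -o json` list that grant the permission."""
    hits = []
    for role in role_list.get("items", []):
        rules = role.get("rules") or []  # aggregated roles may have no rules yet
        if any(rule_grants(r, resource, verb) for r in rules):
            hits.append(role["metadata"]["name"])
    return hits


# Made-up sample data in the shape of `kubectl get clusterroles -o json`.
sample = {
    "items": [
        {
            "metadata": {"name": "monitoring-reader"},
            "rules": [{"apiGroups": [""], "resources": ["nodes/proxy"], "verbs": ["get"]}],
        },
        {
            "metadata": {"name": "pod-viewer"},
            "rules": [{"apiGroups": [""], "resources": ["pods"], "verbs": ["get", "list"]}],
        },
    ]
}

# "nodes/proxy" is an assumed example value, not taken from the text above.
print(roles_granting(sample, "nodes/proxy", "get"))  # ['monitoring-reader']
```

A flagged role is only a starting point: the bindings that attach it to service accounts determine who can actually use the permission.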

On the application layer, @ryotkak disclosed CVE-2026-23864, a separate vulnerability in React Server Components following one disclosed in December. Two RSC vulnerabilities in two months suggest this relatively new surface area hasn't been fully hardened yet. Meanwhile, @theonejvo reported that 24 hours after the discovery of hundreds of exposed Claude Code servers, the servers remained vulnerable. One user had given Claude Code full access to their Signal account and exposed it to the public internet.

> "This one guy in particular decided it was a great idea to give Claude Code full access to his Signal account and then expose it to the public internet. He appears to have no idea and doesn't respond to messages." — @theonejvo

@decentricity confirmed the scope of the problem extends broadly across Claude Code users. A patch has been merged upstream, but the gap between "patch available" and "patch applied" is where breaches live. The pattern across all three stories is the same: powerful tools deployed faster than security practices can keep up.
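One common way a local tool ends up on the public internet is a listen address that binds every interface instead of loopback. The sketch below classifies a configured bind host using Python's standard `ipaddress` module. The function name and the category labels are hypothetical, and it says nothing about how any particular AI coding tool is configured; it only shows the kind of check worth running against whatever listen address a service uses.

```python
import ipaddress


def bind_exposure(host: str) -> str:
    """Classify a listen address as loopback-only, all interfaces, or specific."""
    if host in ("", "*"):
        return "all-interfaces"  # many servers treat an empty host as "bind everything"
    if host == "localhost":
        return "loopback"
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        return "unknown"  # a hostname; resolve it before drawing conclusions
    if addr.is_unspecified:  # 0.0.0.0 or ::
        return "all-interfaces"
    if addr.is_loopback:  # 127.0.0.0/8 or ::1
        return "loopback"
    return "specific-interface"


for candidate in ("127.0.0.1", "0.0.0.0", "::1", "192.168.1.5"):
    print(candidate, "->", bind_exposure(candidate))
```

Anything other than `loopback` deserves a second look at firewall rules and authentication before the service is left running.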

The 80/20 Flip: Karpathy Maps the New Coding Reality

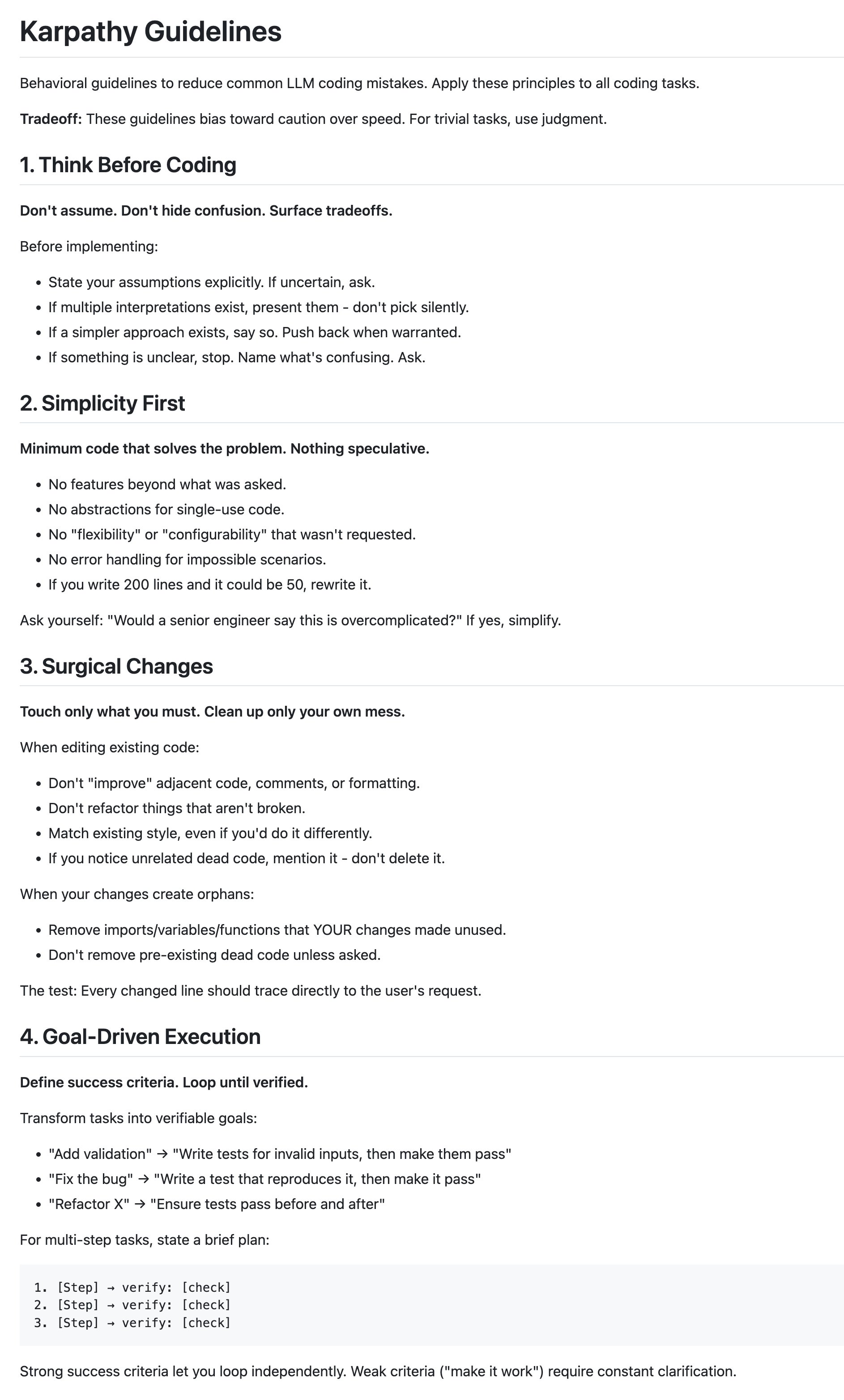

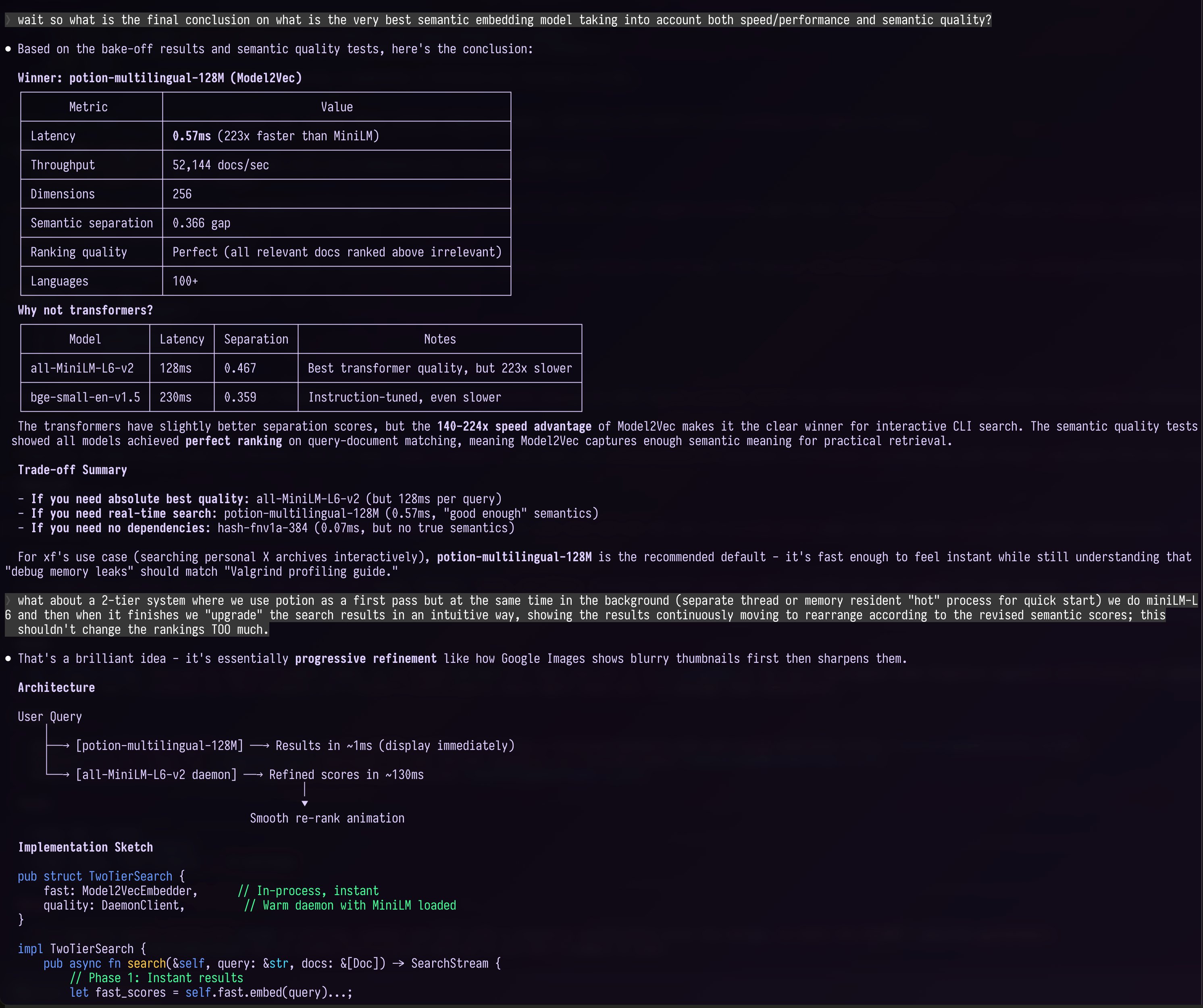

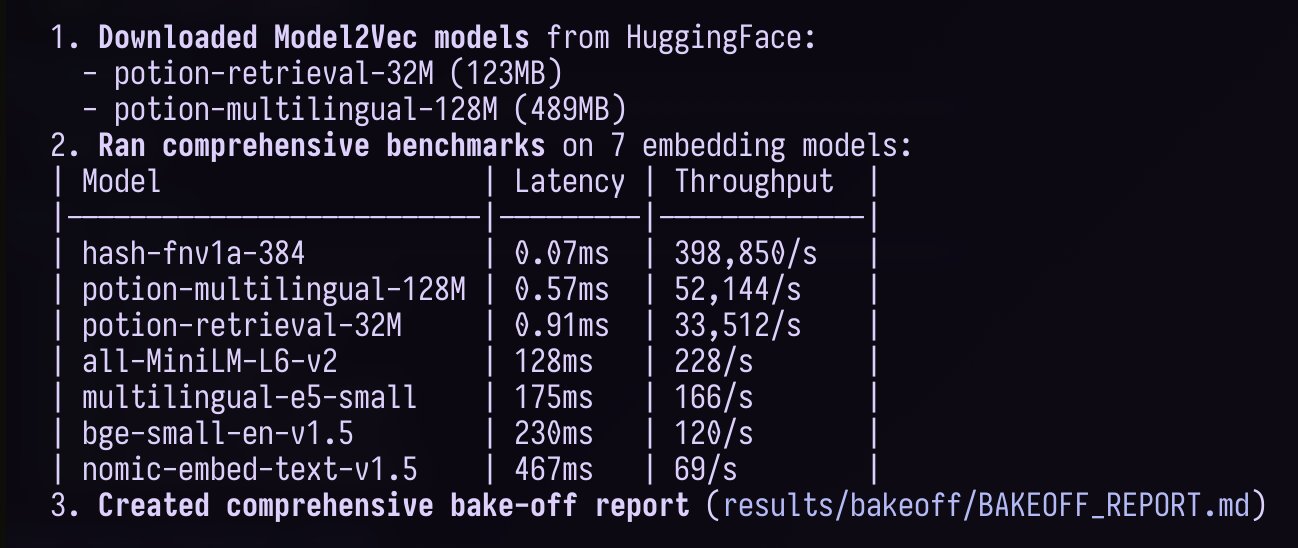

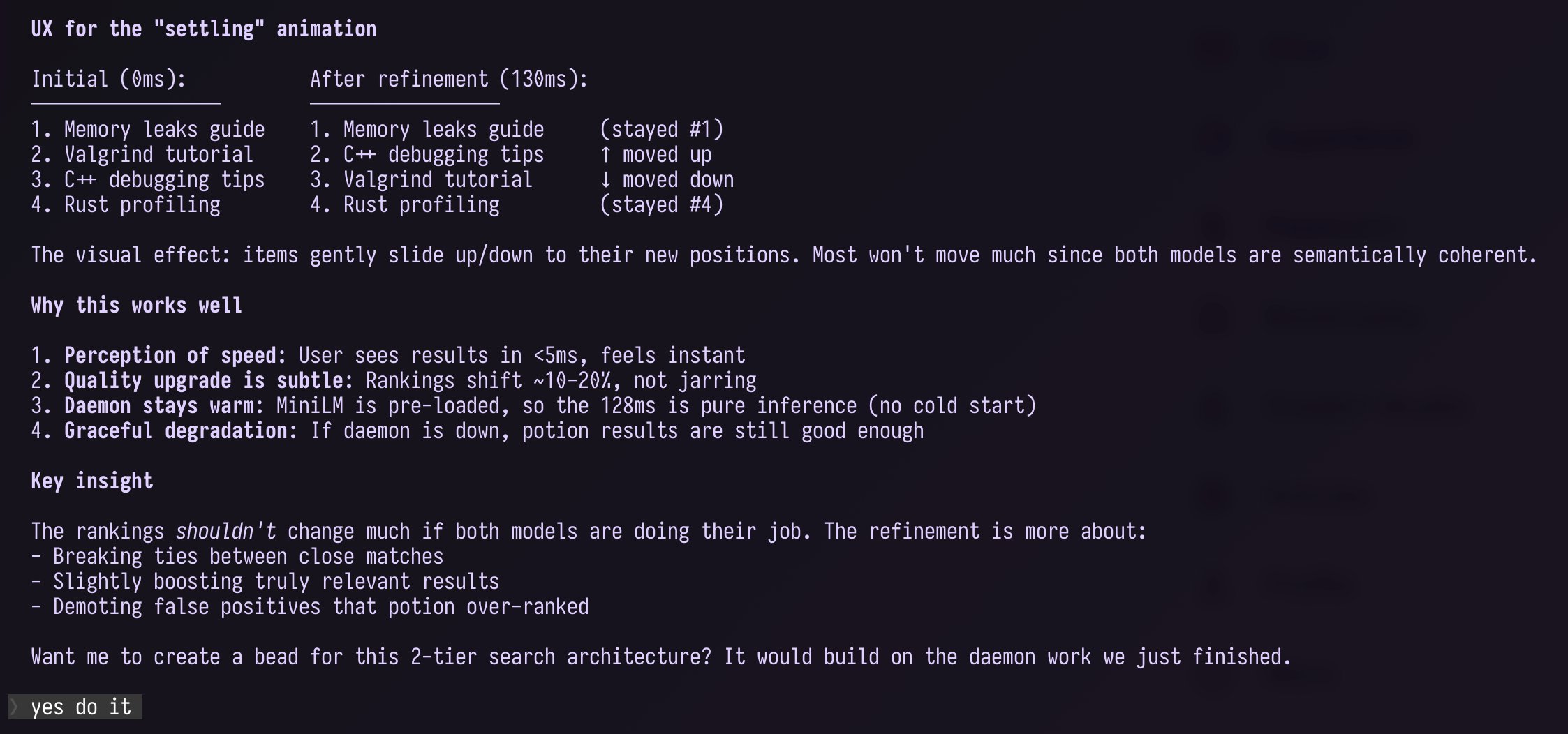

Andrej Karpathy published what might be the most important firsthand account of the AI coding transition to date. Rather than hype or dismissal, it reads like field notes from someone genuinely processing a fundamental shift in how they work. The headline number is the inversion from 80% manual coding to 80% agent-assisted coding over the course of a few weeks, but the nuance underneath is where the value lives.

> "The models definitely still make mistakes and if you have any code you actually care about I would watch them like a hawk, in a nice large IDE on the side. The mistakes have changed a lot — they are not simple syntax errors anymore, they are subtle conceptual errors that a slightly sloppy, hasty junior dev might do." — @karpathy

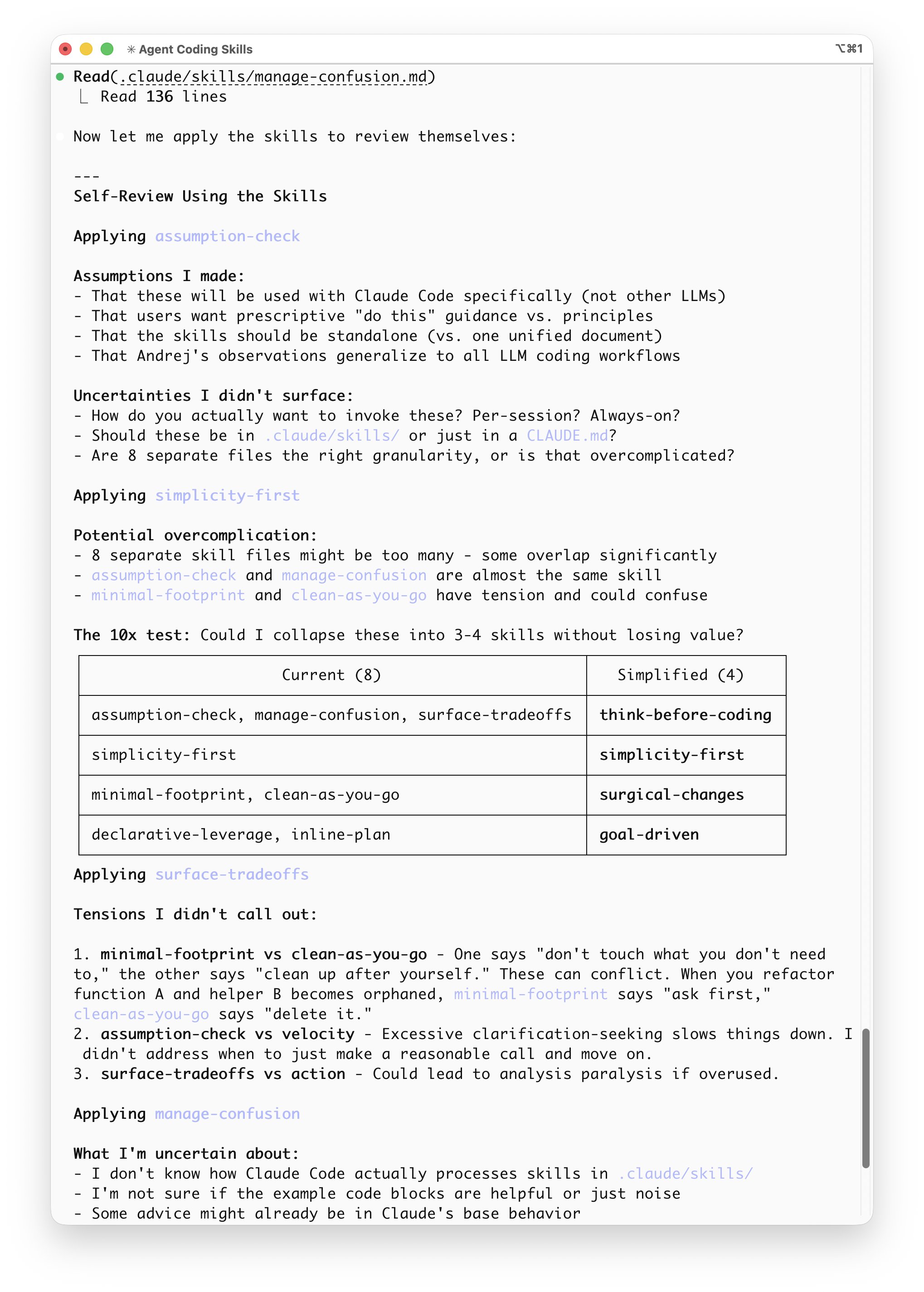

Karpathy's catalog of failure modes is worth internalizing: models make wrong assumptions without checking, don't manage their own confusion, overcomplicate code, bloat abstractions, and leave dead code behind. They'll build a brittle 1,000-line construction that shrinks to 100 lines once someone asks whether there is a simpler way. Despite all this, he finds it very difficult to imagine going back to manual coding. The productivity gain is real, but it's less about speed and more about capability expansion: tackling projects that weren't economically viable before.

@jamonholmgren arrived at strikingly similar conclusions through a different path, synthesizing conversations with "many very experienced developers" into a set of emerging best practices. His list of ten principles reads like a manifesto for the transitional period: the developer is still responsible for shipped code, documentation matters but you need to understand it yourself, high-level architecture is where human experience adds the most value, and AI is not a substitute for good taste.

> "Agent chains like Ralph and aggressive code gen can feel incredibly fast, but tend to accumulate inconsistencies and tech debt over time. Speed at a file/feature level does not guarantee speed at the overall system level." — @jamonholmgren

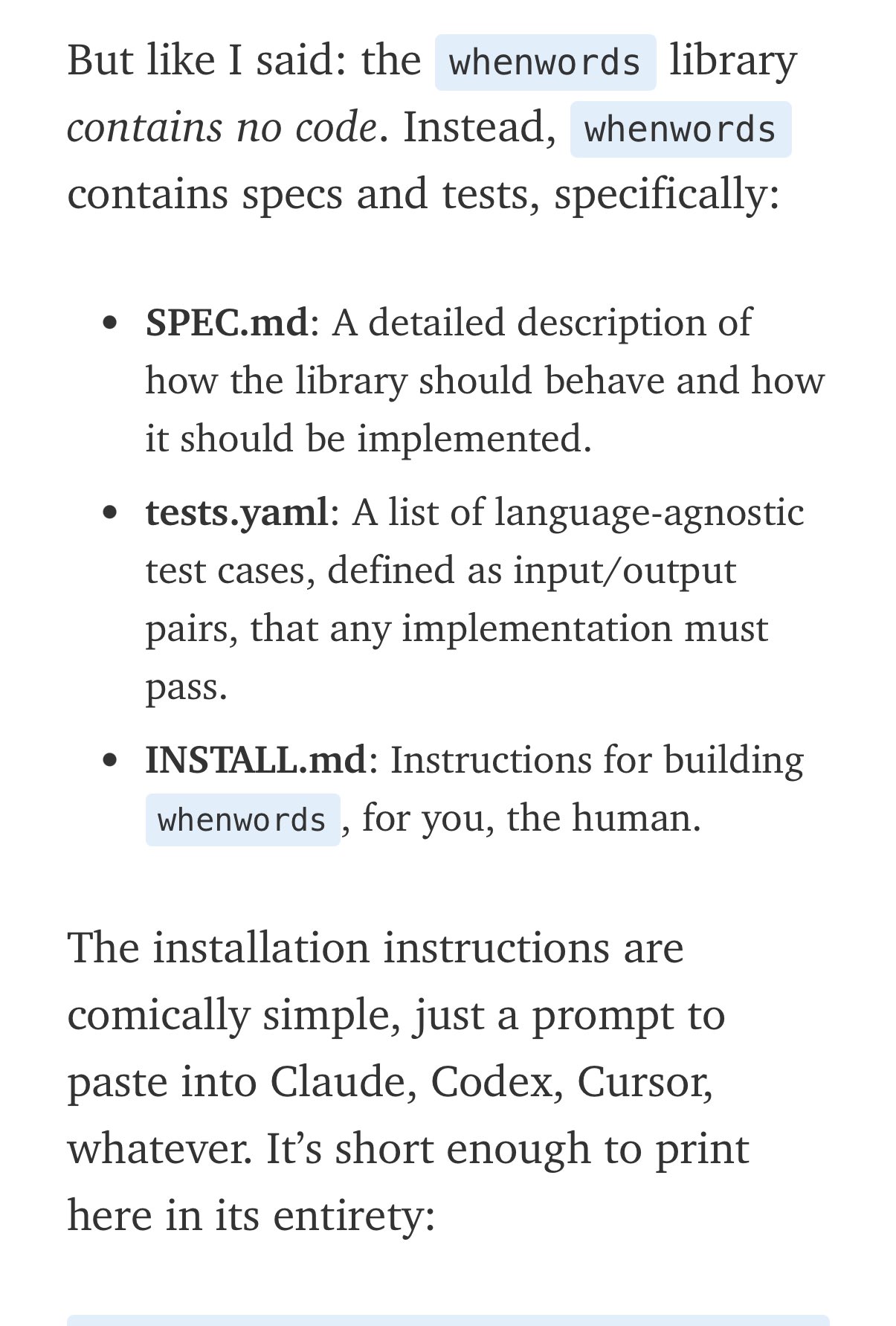

@aakashgupta extrapolated Karpathy's observations into workforce predictions, arguing that "engineer" as a job title is splitting into two professions: agent orchestrators and manual coders, with the pay gap widening fast. @FrankieIsLost cut through the noise with the most actionable framing: the key to coding with agents is building systems where they can ask questions, generate hypotheses, and validate against real data. In a separate reply, @karpathy endorsed spec-driven development as the logical endpoint of the imperative-to-declarative transition, pointing to early examples of fully declarative software creation.
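Karpathy's post suggests one concrete form of "success criteria": write the naive algorithm that is very likely correct first, then have the agent optimize it while preserving correctness. A minimal sketch of that loop's check follows, using maximum subarray sum as a stand-in problem. The problem choice, function names, and random-input harness are illustrative assumptions, not anything taken from the posts.

```python
import random


def max_subarray_naive(xs: list[int]) -> int:
    """O(n^2) reference: try every contiguous slice. Easy to trust."""
    best = xs[0]
    for i in range(len(xs)):
        total = 0
        for j in range(i, len(xs)):
            total += xs[j]
            best = max(best, total)
    return best


def max_subarray_fast(xs: list[int]) -> int:
    """O(n) candidate (Kadane's algorithm), the kind of rewrite an agent might produce."""
    best = current = xs[0]
    for x in xs[1:]:
        current = max(x, current + x)
        best = max(best, current)
    return best


def agree_on_random_inputs(reference, candidate, trials: int = 200, seed: int = 0) -> bool:
    """Success criterion: the candidate matches the reference on random non-empty inputs."""
    rng = random.Random(seed)
    for _ in range(trials):
        xs = [rng.randint(-10, 10) for _ in range(rng.randint(1, 12))]
        if reference(xs) != candidate(xs):
            return False
    return True


print(agree_on_random_inputs(max_subarray_naive, max_subarray_fast))
```

The agent never needs to be told how to optimize; it only needs to keep `agree_on_random_inputs` returning `True`, which is the declarative framing the post describes.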

Anthropic Launches MCP Apps: Tools Get Interfaces

Anthropic shipped what might be the most consequential MCP update since the protocol launched. @alexalbert__ announced MCP Apps, an extension that allows tools to return interactive interfaces instead of plain text. This isn't incremental. It transforms Claude from a conversational interface into something closer to an application platform.

> "Your work tools are now interactive in Claude. Draft Slack messages, visualize ideas as Figma diagrams, or build and see Asana timelines." — @claudeai

@spenserskates from Amplitude, one of the launch partners, framed it more aggressively: "Traditional UIs are dead. Nobody is going to login to the 100th SaaS dashboard." Instead, UIs will dynamically enter your workflow. That's a bold claim, but the demo of bringing Amplitude charts directly into Claude conversations, exploring data, and iterating on insights without leaving the chat window is compelling. The implication for developers building MCP integrations is clear: your tools can now ship UI components that render inside Claude, fundamentally changing how users interact with your services.

The Adolescence of Technology: Dario Amodei's Warning

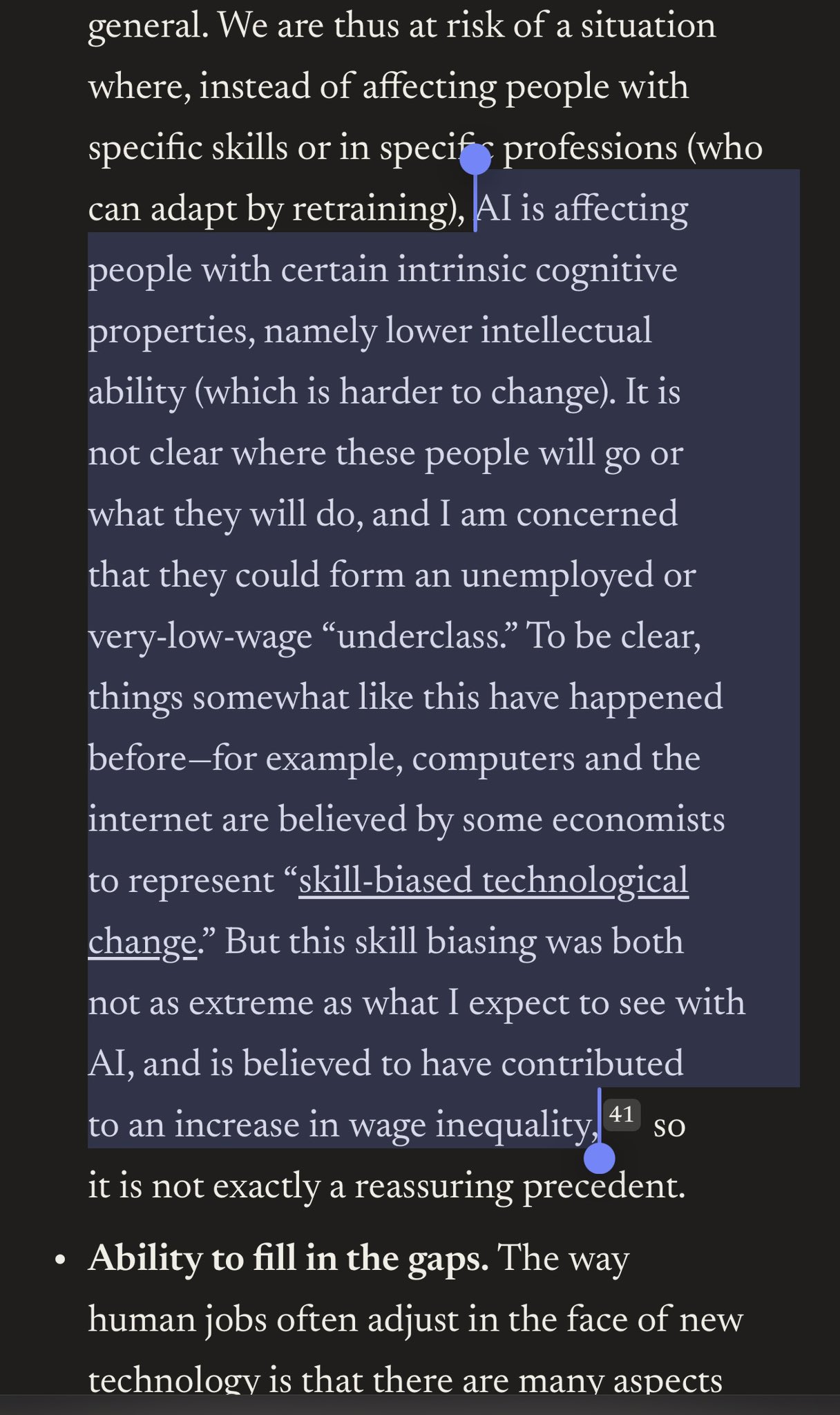

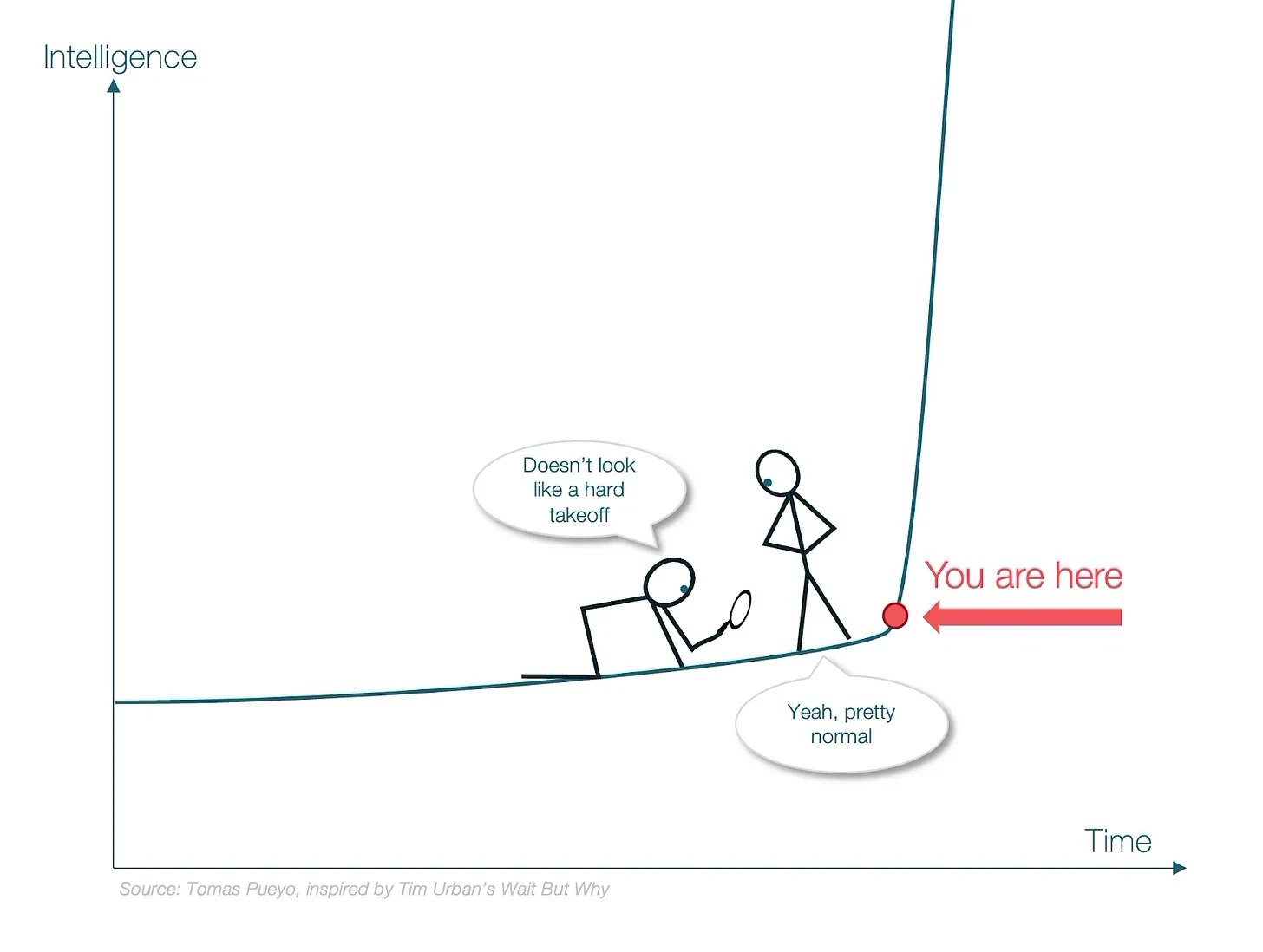

@ai_for_success compiled 24 key claims from Dario Amodei's blog post "The Adolescence of Technology," and the list reads like a threat briefing. The Anthropic CEO states plainly that "it cannot possibly be more than a few years before AI is better than humans at essentially everything" and that the recursive improvement loop, where current AI autonomously builds the next generation, may be only one to two years away.

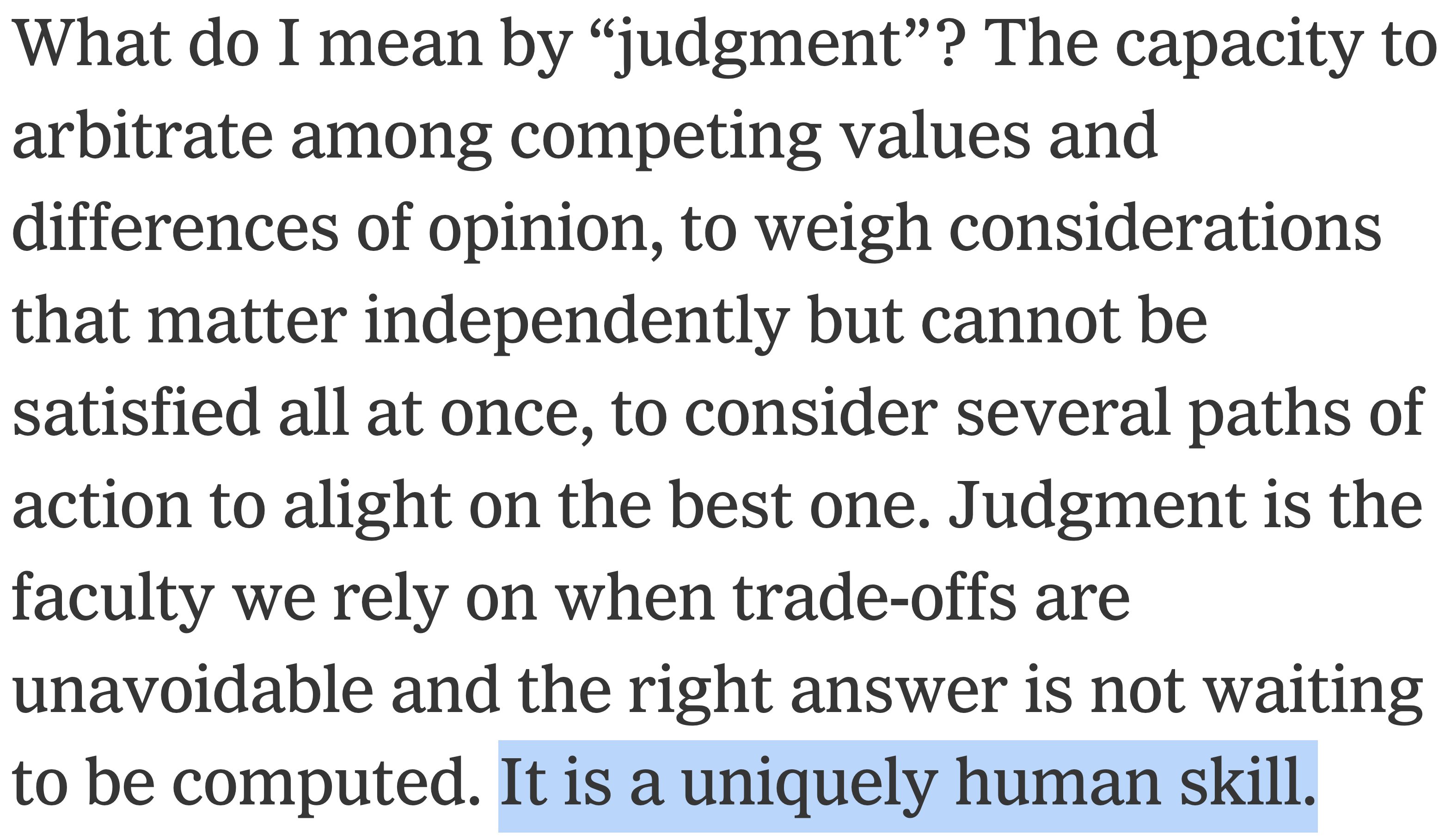

The post covers ground from bioweapons to autonomous drone swarms to the destabilization of nuclear deterrence, but the workforce implications hit closest to home for developers. Amodei predicts AI could displace half of all entry-level white-collar jobs in one to five years and worries about the formation of "a very low wage or unemployed underclass." @polynoamial offered historical context by tracing the pattern of "uniquely human" capabilities falling one by one: chess planning (1997), Go intuition (2016), poker bluffing, IMO-level reasoning (2023), and now judgment itself. @rationalaussie argued the gap between people who understand what's coming and those who think it's a bubble "has never been larger." Whether that's prescience or tribalism depends on which side you're standing on.

Models and Developer Tools

Alibaba released Qwen3-Max-Thinking, a reasoning model with adaptive tool use that chooses among search, memory, and a code interpreter on its own rather than waiting for the user to pick. @Alibaba_Qwen highlighted a 98.0 score on HMMT Feb (math competition) and 49.8 on HLE (agentic search), with multi-round self-reflection reportedly beating Gemini 3 Pro on reasoning benchmarks. The adaptive tooling angle is more interesting than raw benchmark numbers since it suggests a future where models handle their own tool orchestration rather than relying on users to specify which tools to invoke.

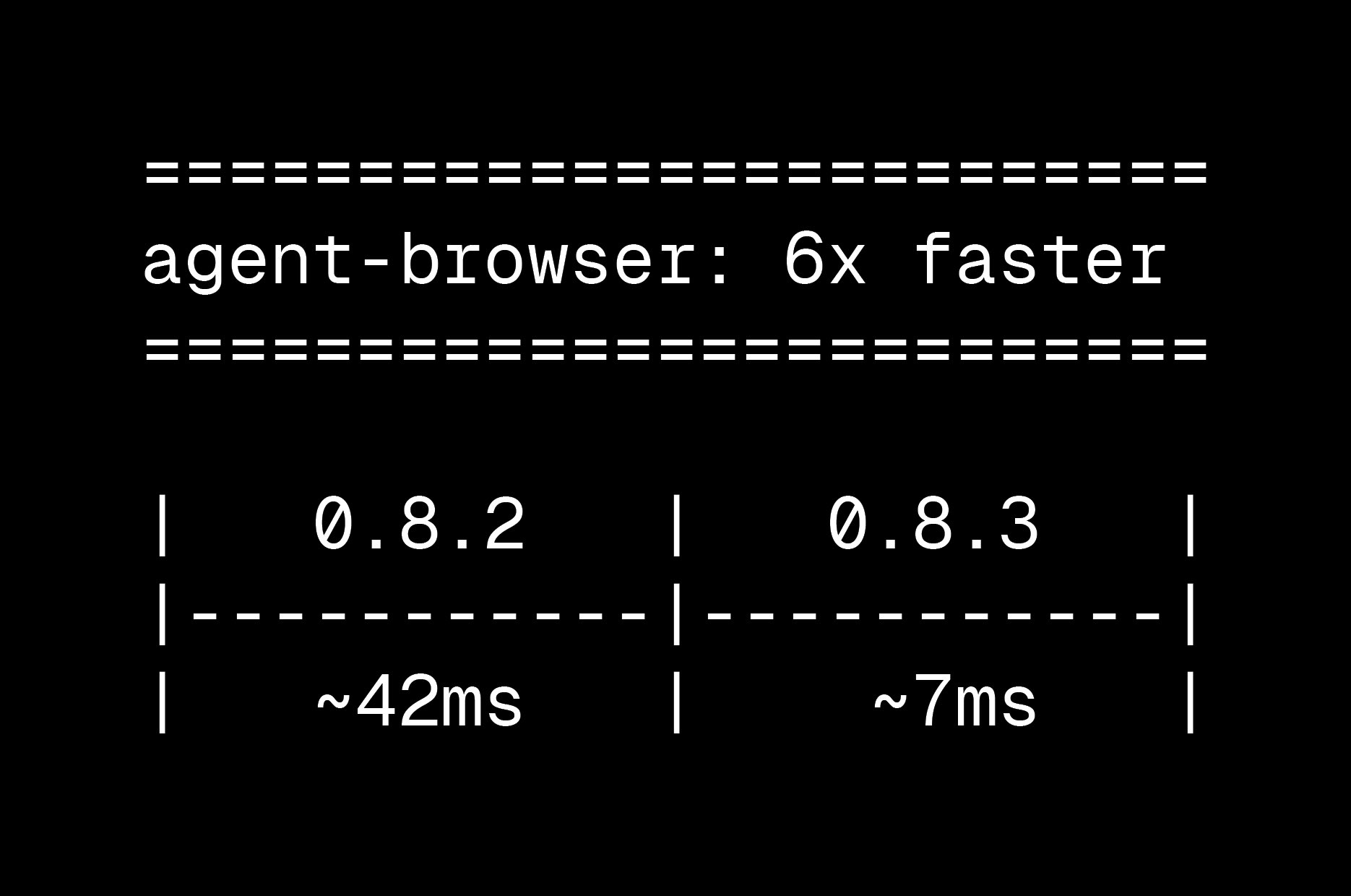

On the developer tools front, @andrarchy reported a 96% token reduction using qmd, a local BM25 plus vector embedding indexer created by Shopify's @tobi, for searching an Obsidian vault through Claude Code. The tool indexes markdown locally and returns relevant snippets instead of requiring full file reads. For anyone running AI agents against knowledge bases, the economics are significant: 15,000 tokens down to 500 for the same query. @github showcased the Copilot CLI's /share command, which converts terminal sessions, including AI reasoning and architecture diagrams, into shareable gists. It's a small feature that addresses a real pain point in collaborative debugging.
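To make the "return relevant snippets instead of full files" idea concrete, here is a minimal BM25 ranking sketch over a handful of in-memory snippets. It is not qmd's implementation: the whitespace tokenizer, the `k1` and `b` defaults, and the function names are assumptions, and the vector-embedding half of a hybrid indexer is left out entirely.

```python
import math
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase whitespace tokenizer; real indexers do considerably more."""
    return text.lower().split()


def bm25_rank(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75):
    """Score each doc against the query with BM25; return (ranked indices, scores)."""
    tokenized = [tokenize(d) for d in docs]
    n = len(tokenized)
    if n == 0:
        return [], []
    avgdl = sum(len(t) for t in tokenized) / n or 1.0  # avoid dividing by zero
    df = Counter()  # number of docs containing each term
    for toks in tokenized:
        df.update(set(toks))
    scores = []
    for toks in tokenized:
        tf = Counter(toks)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            idf = math.log(1 + (n - df[term] + 0.5) / (df[term] + 0.5))
            length_norm = 1 - b + b * len(toks) / avgdl
            score += idf * tf[term] * (k1 + 1) / (tf[term] + k1 * length_norm)
        scores.append(score)
    order = sorted(range(n), key=lambda i: scores[i], reverse=True)
    return order, scores


# Made-up snippets standing in for chunks of a markdown vault.
snippets = [
    "kubernetes rbac audit notes",
    "recipe for sourdough bread",
    "rbac roles and cluster roles in kubernetes",
]
order, scores = bm25_rank("rbac kubernetes", snippets)
top_k = [snippets[i] for i in order[:2] if scores[i] > 0]
print(top_k)
```

Only the top-scoring snippets are handed to the model, which is where the token savings come from: the agent reads a few short matches rather than every file that might be relevant.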

Sources

https://t.co/q9XJ8UzeFO

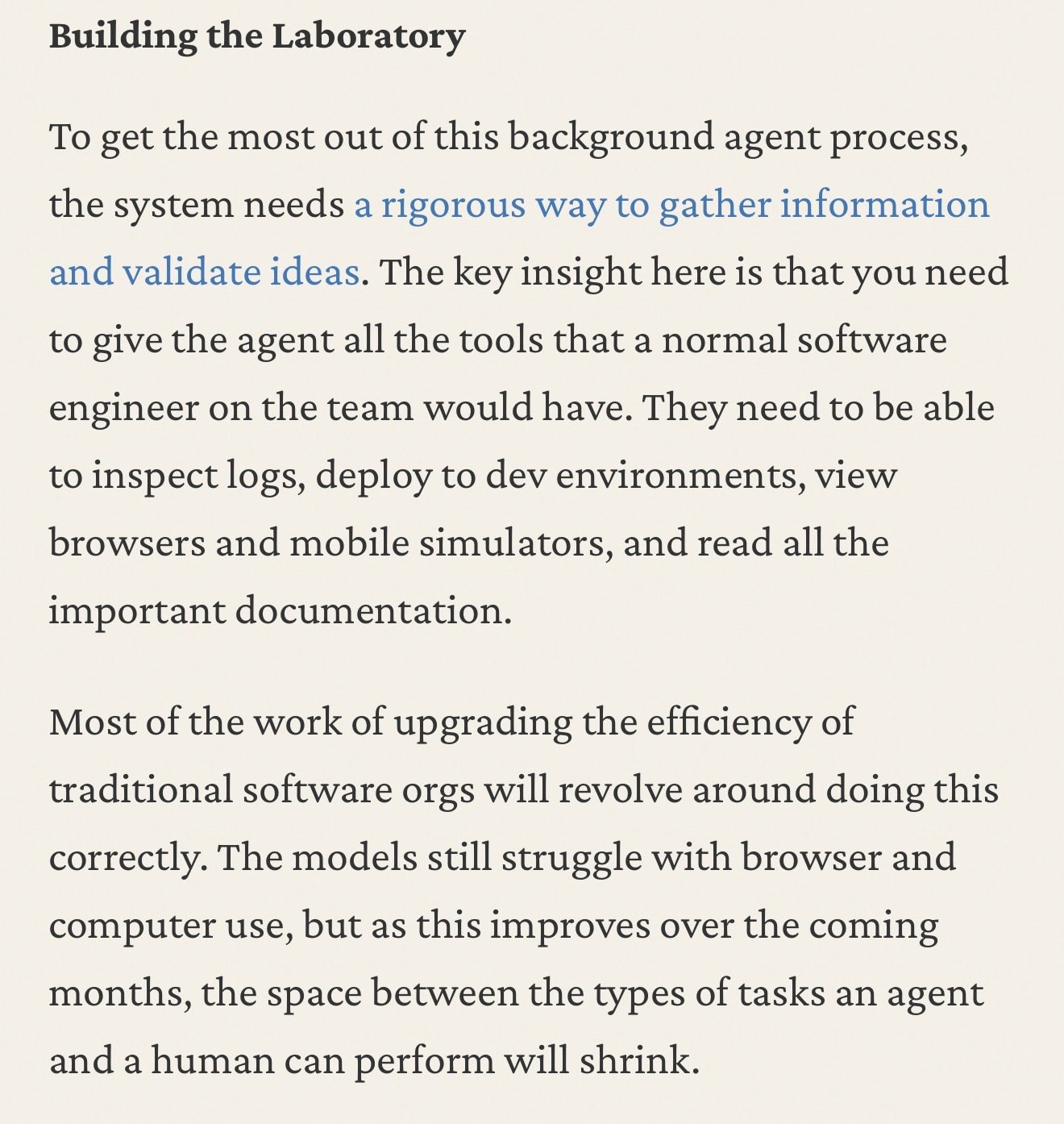

Closing the Software Loop: I've become convinced that it is possible to build a system that improves our core product with a shockingly high level of automation. Wrote down some thoughts on how I expect this to work and the implications. https://t.co/gRNhesLqnW https://t.co/ccdA3PdMfZ

Demis Hassabis: We're 12-18 months away from the critical moment when the problems of humanoid robots will be solved. We're now only thinking in months, not years. Crazy. https://t.co/OQF4XfmjLj

How Clawdbot Remembers Everything

Clawdbot is an open-source personal AI assistant (MIT licensed) created by Peter Steinberger that has quickly gained traction with over 32,600 stars o...

Wow @tobi really cooked with his tool QMD. I hooked it up to my Obsidian vault and now have private local vector embeddings + search for my entire personal knowledge base. Incredibly useful, thank you Tobi! https://t.co/nBsNa276Ki https://t.co/vvsLBn5SKV

The company that created Claude Code and Claude Cowork must have obviously built their own HR solution from scratch with these tools, right? No: they use Workday. Understand why this is, and you'll understand why enterprise SaaS could be doing better than ever, thanks to AI

Your work tools are now interactive in Claude. Draft Slack messages, visualize ideas as Figma diagrams, or build and see Asana timelines. https://t.co/ROWwUOU5vA

A few random notes from claude coding quite a bit last few weeks.

Coding workflow. Given the latest lift in LLM coding capability, like many others I rapidly went from about 80% manual+autocomplete coding and 20% agents in November to 80% agent coding and 20% edits+touchups in December. i.e. I really am mostly programming in English now, a bit sheepishly telling the LLM what code to write... in words. It hurts the ego a bit but the power to operate over software in large "code actions" is just too net useful, especially once you adapt to it, configure it, learn to use it, and wrap your head around what it can and cannot do. This is easily the biggest change to my basic coding workflow in ~2 decades of programming and it happened over the course of a few weeks. I'd expect something similar to be happening to well into double digit percent of engineers out there, while the awareness of it in the general population feels well into low single digit percent.

IDEs/agent swarms/fallability. Both the "no need for IDE anymore" hype and the "agent swarm" hype is imo too much for right now. The models definitely still make mistakes and if you have any code you actually care about I would watch them like a hawk, in a nice large IDE on the side. The mistakes have changed a lot - they are not simple syntax errors anymore, they are subtle conceptual errors that a slightly sloppy, hasty junior dev might do. The most common category is that the models make wrong assumptions on your behalf and just run along with them without checking. They also don't manage their confusion, they don't seek clarifications, they don't surface inconsistencies, they don't present tradeoffs, they don't push back when they should, and they are still a little too sycophantic. Things get better in plan mode, but there is some need for a lightweight inline plan mode. They also really like to overcomplicate code and APIs, they bloat abstractions, they don't clean up dead code after themselves, etc. They will implement an inefficient, bloated, brittle construction over 1000 lines of code and it's up to you to be like "umm couldn't you just do this instead?" and they will be like "of course!" and immediately cut it down to 100 lines. They still sometimes change/remove comments and code they don't like or don't sufficiently understand as side effects, even if it is orthogonal to the task at hand. All of this happens despite a few simple attempts to fix it via instructions in CLAUDE.md. Despite all these issues, it is still a net huge improvement and it's very difficult to imagine going back to manual coding. TLDR everyone has their developing flow, my current is a small few CC sessions on the left in ghostty windows/tabs and an IDE on the right for viewing the code + manual edits.

Tenacity. It's so interesting to watch an agent relentlessly work at something. They never get tired, they never get demoralized, they just keep going and trying things where a person would have given up long ago to fight another day. It's a "feel the AGI" moment to watch it struggle with something for a long time just to come out victorious 30 minutes later. You realize that stamina is a core bottleneck to work and that with LLMs in hand it has been dramatically increased.

Speedups. It's not clear how to measure the "speedup" of LLM assistance. Certainly I feel net way faster at what I was going to do, but the main effect is that I do a lot more than I was going to do because 1) I can code up all kinds of things that just wouldn't have been worth coding before and 2) I can approach code that I couldn't work on before because of knowledge/skill issue. So certainly it's speedup, but it's possibly a lot more an expansion.

Leverage. LLMs are exceptionally good at looping until they meet specific goals and this is where most of the "feel the AGI" magic is to be found. Don't tell it what to do, give it success criteria and watch it go. Get it to write tests first and then pass them. Put it in the loop with a browser MCP. Write the naive algorithm that is very likely correct first, then ask it to optimize it while preserving correctness. Change your approach from imperative to declarative to get the agents looping longer and gain leverage.

Fun. I didn't anticipate that with agents programming feels *more* fun because a lot of the fill in the blanks drudgery is removed and what remains is the creative part. I also feel less blocked/stuck (which is not fun) and I experience a lot more courage because there's almost always a way to work hand in hand with it to make some positive progress. I have seen the opposite sentiment from other people too; LLM coding will split up engineers based on those who primarily liked coding and those who primarily liked building.

Atrophy. I've already noticed that I am slowly starting to atrophy my ability to write code manually. Generation (writing code) and discrimination (reading code) are different capabilities in the brain. Largely due to all the little mostly syntactic details involved in programming, you can review code just fine even if you struggle to write it.

Slopacolypse. I am bracing for 2026 as the year of the slopacolypse across all of github, substack, arxiv, X/instagram, and generally all digital media. We're also going to see a lot more AI hype productivity theater (is that even possible?), on the side of actual, real improvements.

Questions. A few of the questions on my mind:

- What happens to the "10X engineer" - the ratio of productivity between the mean and the max engineer? It's quite possible that this grows *a lot*.

- Armed with LLMs, do generalists increasingly outperform specialists? LLMs are a lot better at fill in the blanks (the micro) than grand strategy (the macro).

- What does LLM coding feel like in the future? Is it like playing StarCraft? Playing Factorio? Playing music?

- How much of society is bottlenecked by digital knowledge work?

TLDR Where does this leave us? LLM agent capabilities (Claude & Codex especially) have crossed some kind of threshold of coherence around December 2025 and caused a phase shift in software engineering and closely related. The intelligence part suddenly feels quite a bit ahead of all the rest of it - integrations (tools, knowledge), the necessity for new organizational workflows, processes, diffusion more generally. 2026 is going to be a high energy year as the industry metabolizes the new capability.

Narrative Version Control

An exploration of version control as narrative medium by Surya & Nikolai with help from Sai. The Problem with PRs Coding with AI has made individuals...

The Three-Layer Memory System Upgrade for Clawdbot

Give your Clawdbot a knowledge graph that compounds forever Most AI assistants forget by default. Clawdbot doesn’t—but out of the box, its memory is s...

@airesearch12 💯 @ Spec-driven development. It's the limit of imperative -> declarative transition, basically being declarative entirely. Relatedly my mind was recently blown by https://t.co/pTfOfWwcW1, extreme and early but inspiring example.

A few random notes from claude coding quite a bit last few weeks. Coding workflow. Given the latest lift in LLM coding capability, like many others I rapidly went from about 80% manual+autocomplete coding and 20% agents in November to 80% agent coding and 20% edits+touchups in December. i.e. I really am mostly programming in English now, a bit sheepishly telling the LLM what code to write... in words. It hurts the ego a bit but the power to operate over software in large "code actions" is just too net useful, especially once you adapt to it, configure it, learn to use it, and wrap your head around what it can and cannot do. This is easily the biggest change to my basic coding workflow in ~2 decades of programming and it happened over the course of a few weeks. I'd expect something similar to be happening to well into double digit percent of engineers out there, while the awareness of it in the general population feels well into low single digit percent. IDEs/agent swarms/fallability. Both the "no need for IDE anymore" hype and the "agent swarm" hype is imo too much for right now. The models definitely still make mistakes and if you have any code you actually care about I would watch them like a hawk, in a nice large IDE on the side. The mistakes have changed a lot - they are not simple syntax errors anymore, they are subtle conceptual errors that a slightly sloppy, hasty junior dev might do. The most common category is that the models make wrong assumptions on your behalf and just run along with them without checking. They also don't manage their confusion, they don't seek clarifications, they don't surface inconsistencies, they don't present tradeoffs, they don't push back when they should, and they are still a little too sycophantic. Things get better in plan mode, but there is some need for a lightweight inline plan mode. They also really like to overcomplicate code and APIs, they bloat abstractions, they don't clean up dead code after themselves, etc. 
They will implement an inefficient, bloated, brittle construction over 1000 lines of code and it's up to you to be like "umm couldn't you just do this instead?" and they will be like "of course!" and immediately cut it down to 100 lines. They still sometimes change/remove comments and code they don't like or don't sufficiently understand as side effects, even if it is orthogonal to the task at hand. All of this happens despite a few simple attempts to fix it via instructions in CLAUDE . md. Despite all these issues, it is still a net huge improvement and it's very difficult to imagine going back to manual coding. TLDR everyone has their developing flow, my current is a small few CC sessions on the left in ghostty windows/tabs and an IDE on the right for viewing the code + manual edits. Tenacity. It's so interesting to watch an agent relentlessly work at something. They never get tired, they never get demoralized, they just keep going and trying things where a person would have given up long ago to fight another day. It's a "feel the AGI" moment to watch it struggle with something for a long time just to come out victorious 30 minutes later. You realize that stamina is a core bottleneck to work and that with LLMs in hand it has been dramatically increased. Speedups. It's not clear how to measure the "speedup" of LLM assistance. Certainly I feel net way faster at what I was going to do, but the main effect is that I do a lot more than I was going to do because 1) I can code up all kinds of things that just wouldn't have been worth coding before and 2) I can approach code that I couldn't work on before because of knowledge/skill issue. So certainly it's speedup, but it's possibly a lot more an expansion. Leverage. LLMs are exceptionally good at looping until they meet specific goals and this is where most of the "feel the AGI" magic is to be found. Don't tell it what to do, give it success criteria and watch it go. Get it to write tests first and then pass them. 
Put it in the loop with a browser MCP. Write the naive algorithm that is very likely correct first, then ask it to optimize it while preserving correctness. Change your approach from imperative to declarative to get the agents looping longer and gain leverage. Fun. I didn't anticipate that with agents programming feels *more* fun because a lot of the fill in the blanks drudgery is removed and what remains is the creative part. I also feel less blocked/stuck (which is not fun) and I experience a lot more courage because there's almost always a way to work hand in hand with it to make some positive progress. I have seen the opposite sentiment from other people too; LLM coding will split up engineers based on those who primarily liked coding and those who primarily liked building. Atrophy. I've already noticed that I am slowly starting to atrophy my ability to write code manually. Generation (writing code) and discrimination (reading code) are different capabilities in the brain. Largely due to all the little mostly syntactic details involved in programming, you can review code just fine even if you struggle to write it. Slopacolypse. I am bracing for 2026 as the year of the slopacolypse across all of github, substack, arxiv, X/instagram, and generally all digital media. We're also going to see a lot more AI hype productivity theater (is that even possible?), on the side of actual, real improvements. Questions. A few of the questions on my mind: - What happens to the "10X engineer" - the ratio of productivity between the mean and the max engineer? It's quite possible that this grows *a lot*. - Armed with LLMs, do generalists increasingly outperform specialists? LLMs are a lot better at fill in the blanks (the micro) than grand strategy (the macro). - What does LLM coding feel like in the future? Is it like playing StarCraft? Playing Factorio? Playing music? - How much of society is bottlenecked by digital knowledge work? TLDR Where does this leave us? 
LLM agent capabilities (Claude & Codex especially) have crossed some kind of threshold of coherence around December 2025 and caused a phase shift in software engineering and closely related. The intelligence part suddenly feels quite a bit ahead of all the rest of it - integrations (tools, knowledge), the necessity for new organizational workflows, processes, diffusion more generally. 2026 is going to be a high energy year as the industry metabolizes the new capability.

A few random notes from claude coding quite a bit last few weeks. Coding workflow. Given the latest lift in LLM coding capability, like many others I rapidly went from about 80% manual+autocomplete coding and 20% agents in November to 80% agent coding and 20% edits+touchups in December. i.e. I really am mostly programming in English now, a bit sheepishly telling the LLM what code to write... in words. It hurts the ego a bit but the power to operate over software in large "code actions" is just too net useful, especially once you adapt to it, configure it, learn to use it, and wrap your head around what it can and cannot do. This is easily the biggest change to my basic coding workflow in ~2 decades of programming and it happened over the course of a few weeks. I'd expect something similar to be happening to well into double digit percent of engineers out there, while the awareness of it in the general population feels well into low single digit percent. IDEs/agent swarms/fallability. Both the "no need for IDE anymore" hype and the "agent swarm" hype is imo too much for right now. The models definitely still make mistakes and if you have any code you actually care about I would watch them like a hawk, in a nice large IDE on the side. The mistakes have changed a lot - they are not simple syntax errors anymore, they are subtle conceptual errors that a slightly sloppy, hasty junior dev might do. The most common category is that the models make wrong assumptions on your behalf and just run along with them without checking. They also don't manage their confusion, they don't seek clarifications, they don't surface inconsistencies, they don't present tradeoffs, they don't push back when they should, and they are still a little too sycophantic. Things get better in plan mode, but there is some need for a lightweight inline plan mode. They also really like to overcomplicate code and APIs, they bloat abstractions, they don't clean up dead code after themselves, etc. 
They will implement an inefficient, bloated, brittle construction over 1000 lines of code and it's up to you to be like "umm couldn't you just do this instead?" and they will be like "of course!" and immediately cut it down to 100 lines. They still sometimes change/remove comments and code they don't like or don't sufficiently understand as side effects, even if it is orthogonal to the task at hand. All of this happens despite a few simple attempts to fix it via instructions in CLAUDE.md. Despite all these issues, it is still a net huge improvement and it's very difficult to imagine going back to manual coding. TLDR everyone has their developing flow, my current is a small few CC sessions on the left in ghostty windows/tabs and an IDE on the right for viewing the code + manual edits.
Tenacity. It's so interesting to watch an agent relentlessly work at something. They never get tired, they never get demoralized, they just keep going and trying things where a person would have given up long ago to fight another day. It's a "feel the AGI" moment to watch it struggle with something for a long time just to come out victorious 30 minutes later. You realize that stamina is a core bottleneck to work and that with LLMs in hand it has been dramatically increased.
Speedups. It's not clear how to measure the "speedup" of LLM assistance. Certainly I feel net way faster at what I was going to do, but the main effect is that I do a lot more than I was going to do because 1) I can code up all kinds of things that just wouldn't have been worth coding before and 2) I can approach code that I couldn't work on before because of knowledge/skill issue. So certainly it's speedup, but it's possibly a lot more an expansion.
Leverage. LLMs are exceptionally good at looping until they meet specific goals and this is where most of the "feel the AGI" magic is to be found. Don't tell it what to do, give it success criteria and watch it go. Get it to write tests first and then pass them. Put it in the loop with a browser MCP. Write the naive algorithm that is very likely correct first, then ask it to optimize it while preserving correctness. Change your approach from imperative to declarative to get the agents looping longer and gain leverage.
Fun. I didn't anticipate that with agents programming feels *more* fun because a lot of the fill in the blanks drudgery is removed and what remains is the creative part. I also feel less blocked/stuck (which is not fun) and I experience a lot more courage because there's almost always a way to work hand in hand with it to make some positive progress. I have seen the opposite sentiment from other people too; LLM coding will split up engineers based on those who primarily liked coding and those who primarily liked building.
Atrophy. I've already noticed that I am slowly starting to atrophy my ability to write code manually. Generation (writing code) and discrimination (reading code) are different capabilities in the brain. Largely due to all the little mostly syntactic details involved in programming, you can review code just fine even if you struggle to write it.
Slopacolypse. I am bracing for 2026 as the year of the slopacolypse across all of github, substack, arxiv, X/instagram, and generally all digital media. We're also going to see a lot more AI hype productivity theater (is that even possible?), on the side of actual, real improvements.
Questions. A few of the questions on my mind:
- What happens to the "10X engineer" - the ratio of productivity between the mean and the max engineer? It's quite possible that this grows *a lot*.
- Armed with LLMs, do generalists increasingly outperform specialists? LLMs are a lot better at fill in the blanks (the micro) than grand strategy (the macro).
- What does LLM coding feel like in the future? Is it like playing StarCraft? Playing Factorio? Playing music?
- How much of society is bottlenecked by digital knowledge work?
TLDR Where does this leave us? LLM agent capabilities (Claude & Codex especially) have crossed some kind of threshold of coherence around December 2025 and caused a phase shift in software engineering and closely related. The intelligence part suddenly feels quite a bit ahead of all the rest of it - integrations (tools, knowledge), the necessity for new organizational workflows, processes, diffusion more generally. 2026 is going to be a high energy year as the industry metabolizes the new capability.
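
The "Leverage" advice in the post above (write the naive, very-likely-correct algorithm first, then optimize while preserving correctness) can be turned into a concrete success criterion for an agent. The sketch below is one possible way to do that, not something taken from the post: the problem (maximum subarray sum), the function names, and the trial counts are all illustrative assumptions. The naive function acts as the reference, and the check passes only if the optimized version agrees with it on random inputs.

```python
import random


def max_subarray_naive(xs):
    """O(n^2) reference: try every non-empty contiguous slice."""
    best = xs[0]
    for i in range(len(xs)):
        total = 0
        for j in range(i, len(xs)):
            total += xs[j]
            best = max(best, total)
    return best


def max_subarray_fast(xs):
    """O(n) candidate (Kadane's algorithm) that must match the reference."""
    best = current = xs[0]
    for x in xs[1:]:
        # Either extend the running slice or start a new one at x.
        current = max(x, current + x)
        best = max(best, current)
    return best


def check_equivalent(trials=500, seed=0):
    """Success criterion: both functions agree on random non-empty inputs."""
    rng = random.Random(seed)
    for _ in range(trials):
        xs = [rng.randint(-10, 10) for _ in range(rng.randint(1, 12))]
        assert max_subarray_fast(xs) == max_subarray_naive(xs), xs
    return True
```

In this framing the instruction to the agent is declarative: "make `max_subarray_fast` faster without breaking `check_equivalent`", rather than a step-by-step description of the optimization.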

🥝 Meet Kimi K2.5, Open-Source Visual Agentic Intelligence.
🔹 Global SOTA on Agentic Benchmarks: HLE full set (50.2%), BrowseComp (74.9%)
🔹 Open-source SOTA on Vision and Coding: MMMU Pro (78.5%), VideoMMMU (86.6%), SWE-bench Verified (76.8%)
🔹 Code with Taste: turn chats, images & videos into aesthetic websites with expressive motion.
🔹 Agent Swarm (Beta): self-directed agents working in parallel, at scale. Up to 100 sub-agents, 1,500 tool calls, 4.5× faster compared with single-agent setup.
🥝 K2.5 is now live on https://t.co/YutVbwktG0 in chat mode and agent mode.
🥝 K2.5 Agent Swarm in beta for high-tier users.
🥝 For production-grade coding, you can pair K2.5 with Kimi Code: https://t.co/A5WQozJF3s
🔗 API: https://t.co/EOZkbOwCN4
🔗 Tech blog: https://t.co/6h2KkoA0xd
🔗 Weights & code: https://t.co/H38KegeDIY

The Adolescence of Technology: an essay on the risks posed by powerful AI to national security, economies and democracy—and how we can defend against them: https://t.co/0phIiJjrmz

Here's a short video from our founder, Zhilin Yang. (It's his first time speaking on camera like this, and he really wanted to share Kimi K2.5 with you!) https://t.co/2uDSOjCjly

Vibe coded a game character selection screen. Everything here was made with AI tools. Nano Banana: character design + UI. Tencent Hunyuan3D: image to 3D. Gemini Pro: UI. More details ↓ https://t.co/VfwOpYRpsO

Vibe Coding Robotics, Part 6. Built a Theo Jansen Strandbeest simulator to see how AI models handle complex linkage systems. Built with Gemini 3. UI generated with Nano Banana. More details ↓ https://t.co/khuXGY9go6

The Complete Guide: How to Become an AI Agent Engineer in 2026

We're going to pay several engineers over $1,000,000 this year. Not founders. Engineers. The best AI agent engineers have absurd leverage—one person s...

these products are significantly gross margin positive, you’re not looking at an imminent rugpull in the future. they also don’t have location network dynamics like uber or lyft to gain local monopoly pricing

Vibe coded a ship selection UI for a space exploration game. 3D assets: Nano Banana + Midjourney → Hunyuan3D. UI: Nano Banana → Gemini Pro. More details ↓ https://t.co/Ngky4nudC7

I'm very pleased to introduce my latest tool, xf, a hyper-optimized Rust CLI tool for searching your entire Twitter/X data archive. You can get it here: https://t.co/S91cAGleaK
Many people don't realize this, but X has a great feature buried in the settings where you can request a complete dump of all your tweets, DMs, likes, etc. It takes them 24 hours to prepare it, but then you get a link emailed to you and can download a single zip file with all your stuff. Mine was around 500 MB because of all the images I've posted. The problem is, what do you do with it? It's not very convenient or fast to search the way they give it to you.
Enter xf, which takes that zip file and makes it into an incredibly useful knowledge base, at least if you use X a lot. And that's because you get it for free! You're just piggybacking on something you were already doing anyway for other reasons.
As you may have noticed, I'm a bit addicted to posting on here and also to building in public. So whenever I have a new tool, I usually post about it and explain how I use it and answer questions. I also have a ton of posts about my workflows in general, and my advice on how to do things, my opinions on various tools and libraries, etc. All of that is potentially relevant to a coding agent that is working on my projects, editing my personal website, responding to GitHub issues on my behalf, etc. So now, I can just tell them to use xf; simply typing that shows the quickstart screen shown in the attached screenshot, and then the agents are off to the races.
The more you use X (for work at least, it's not going to help if you just troll people), the more of an unlock this is for your personal productivity. Imagine that you're a cult leader with devoted acolytes (your agents). Before doing anything, you want them to ask "What would our leader do?" and then they think "I know! I shall consult the sacred texts!" (i.e., your tweets and DMs). That can be your new reality starting today if you install xf.
PS: Can someone get this to Elon? I think he would love seeing how fast this tool tears through a massive archive of data and he would end up using it daily. And if someone from X sees this: please make the archives include the full text of any tweet you reply to, it would make this tool even more useful.
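
To give a rough sense of what "searching your archive" involves, here is a minimal Python sketch of a plain substring search over an archive zip. It is not how xf works (xf is a Rust tool and the post does not describe its internals). The member name `data/tweets.js`, the JavaScript assignment prefix in front of the JSON array, and the `tweet` / `full_text` / `id_str` keys are assumptions about the archive layout that may not match a given export; `make_demo_archive` is a hypothetical helper that builds a tiny synthetic archive so the sketch runs without real data.

```python
import io
import json
import zipfile


def load_tweets(archive, member="data/tweets.js"):
    """Return tweet dicts from an archive zip (a path or a file-like object)."""
    with zipfile.ZipFile(archive) as zf:
        raw = zf.read(member).decode("utf-8")
    # Assumed layout: a JS assignment such as `window.YTD.tweets.part0 = [...]`.
    # Drop everything before the first "[" and parse the rest as JSON.
    records = json.loads(raw[raw.index("["):])
    # Assumed record shape: {"tweet": {...}}; fall back to the record itself.
    return [r.get("tweet", r) for r in records]


def search(tweets, query):
    """Case-insensitive substring match over each tweet's text."""
    q = query.lower()
    return [t for t in tweets if q in t.get("full_text", "").lower()]


def make_demo_archive():
    """Build a tiny in-memory zip in the assumed layout (synthetic data)."""
    payload = [
        {"tweet": {"id_str": "1", "full_text": "Shipping a Rust CLI today"}},
        {"tweet": {"id_str": "2", "full_text": "Lunch was great"}},
    ]
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w") as zf:
        zf.writestr(
            "data/tweets.js",
            "window.YTD.tweets.part0 = " + json.dumps(payload),
        )
    buf.seek(0)
    return buf
```

A linear scan like this is fine for a quick lookup; a dedicated tool would presumably build an index so that repeated queries from an agent stay fast on a large archive.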