923 Exposed Clawdbot Gateways Sound the Alarm as AI Adoption Chasm Dominates the Discourse

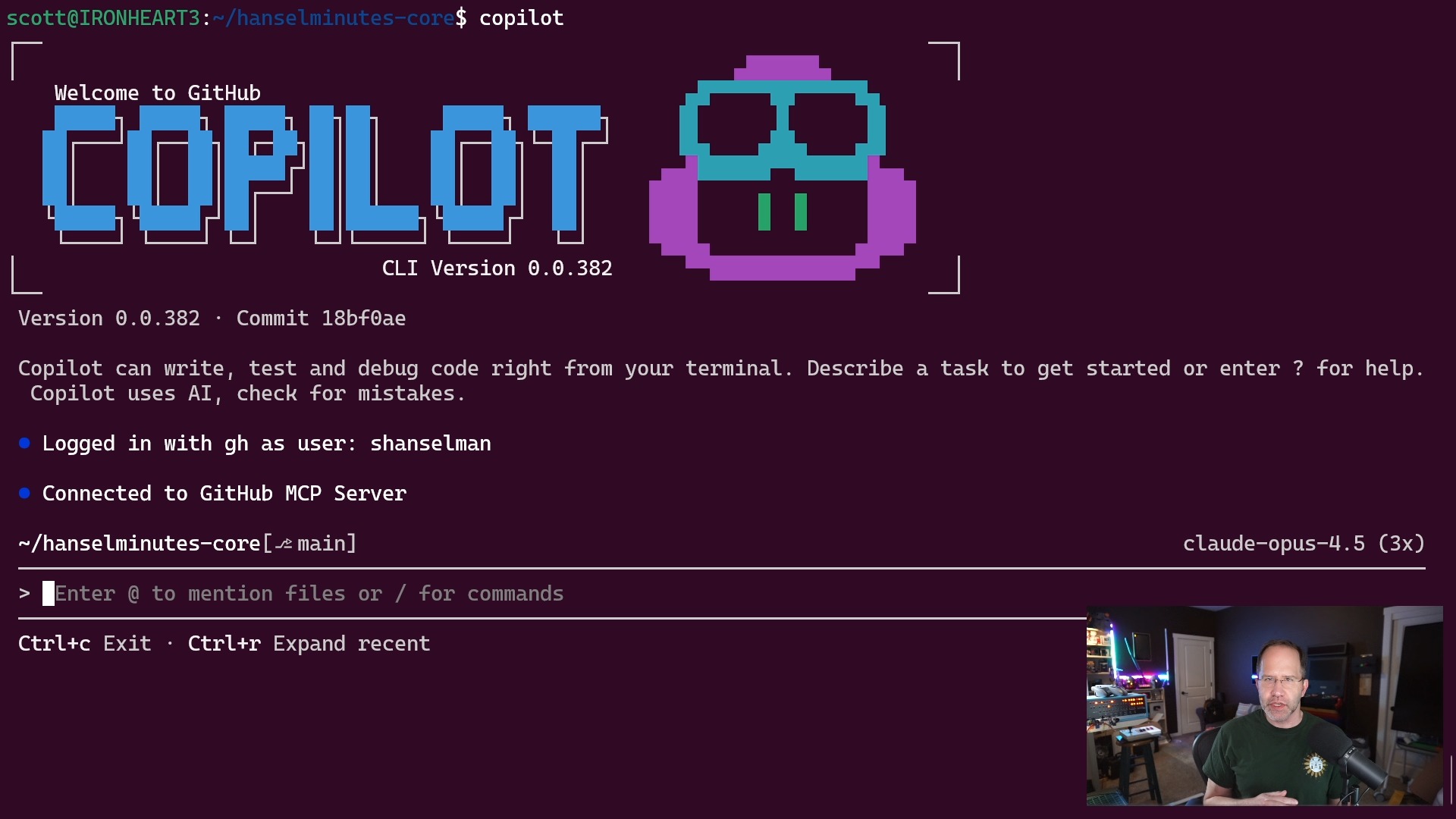

A security disclosure revealing nearly a thousand exposed Clawdbot instances with zero authentication punctuates a day dominated by two conversations: the widening gap between AI power users and everyone else, and whether "coding" as we knew it is functionally dead. The Claude Code ecosystem continues maturing rapidly with new safety tooling, async hooks, and increasingly sophisticated agent configurations.

Daily Wrap-Up

Today's feed crystallized around a tension that's been simmering for weeks: the people building with AI agents are pulling so far ahead of everyone else that the gap may be permanent. Kevin Roose kicked off a thread that became the day's gravitational center, describing a "cultural takeoff" happening alongside the technical one, where SF power users run multi-agent swarms while most knowledge workers are still waiting on IT approval for Copilot in Teams. The responses ranged from agreement to alarm, but nobody seriously pushed back on the core observation. When a NYT tech columnist, a Wharton professor, and a handful of indie builders all independently converge on the same diagnosis, it's worth paying attention to.

The irony is that the same feed proving how far ahead the early adopters are also showed why the gap exists. The Claude Code ecosystem is producing genuinely sophisticated tooling now: a Rust-based destructive command guard with ast-grep analysis, async hooks for background processing, multi-file agent onboarding systems, and research skills that synthesize 30 days of web data in seconds. This isn't toy stuff anymore. But every one of these tools requires a level of comfort with agent workflows that most developers haven't built yet, let alone non-technical knowledge workers. The barrier isn't access or cost; it's the compound knowledge that comes from months of daily experimentation.

The most entertaining moment was easily @beffjezos describing the 2026 optimization meta: "experimental peptides, a Mac mini farm of Clawdbots, 50 claudes doing your bidding 24/7, squatting ATG 4 plates for reps, cranking diet coke and nootropics." It reads like satire until you realize half of it is just describing what the people in these threads are actually doing. The most practical takeaway for developers: if you're running any AI agent with network exposure, audit your bind configuration today. 923 exposed gateways with shell access is not a theoretical risk, and the fix is a one-line config change.

Quick Hits

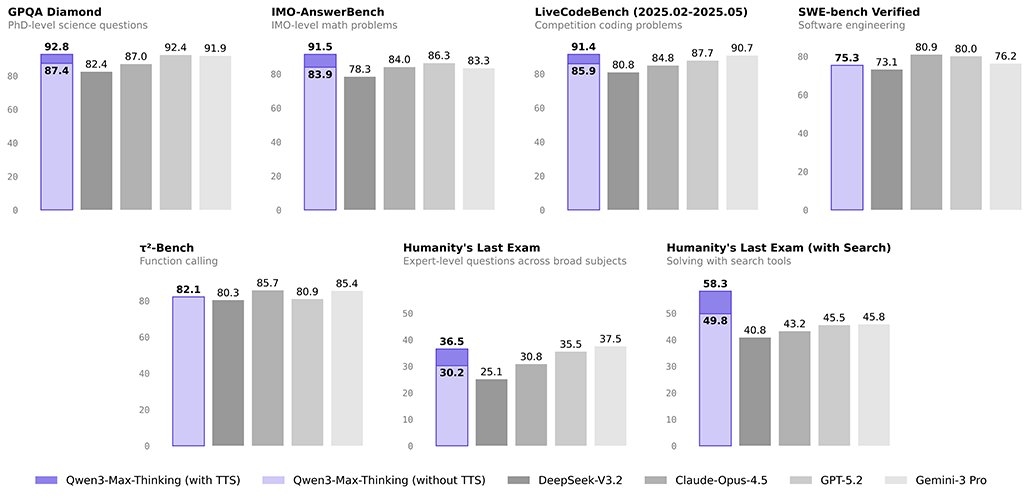

- @DimitriosMitsos speculates Claude 5 could drop as early as Feb 4-17, noting that it's been 62 days since Opus 4.5 and that Anthropic's new safety infrastructure went live Jan 21.

- @WesRoth shares Demis Hassabis's advice to undergrads: skip internships, master AI tools instead. The Google DeepMind CEO argues proficiency with AI is now more valuable than traditional career paths.

- @JorgeCastilloPr highlights designer Satya's transition into coding via AI, calling his mobile app work "incredible" and noting the expanding definition of who gets to build software.

- @beffjezos delivers the day's most unhinged optimization checklist, combining Mac mini farms, nootropics, and heavy squats into a single lifestyle prescription.

- @d__raptis posts a meme that apparently captures the current AI moment perfectly, proving that sometimes a single image does the heavy lifting.

- @jamonholmgren clarifies his stance on reviewing AI-generated code: his point is that we should have been reviewing all packages rigorously from the start, not that we should stop reviewing now.

The Claude Code Ecosystem Matures

The Claude Code tooling ecosystem had a notably productive day, with builders shipping increasingly sophisticated additions that move the platform from "powerful CLI" toward something resembling a proper agent development environment. The standout was @doodlestein's detailed breakdown of destructive_command_guard (dcg), a Rust-based pre-tool hook that intercepts potentially dangerous commands before Claude Code can execute them. What makes it interesting isn't just the safety angle but the engineering constraints: it needs to be fast enough to run on every single tool call, smart enough to catch ad-hoc scripts (not just canned commands like rm -rf), and helpful enough to suggest safe alternatives rather than just blocking.

As @doodlestein explained: "The models are very resourceful and will use ad-hoc Python or bash scripts or many other ways to get around simple-minded limitations. That's why dcg has a very elaborate, ast-grep powered layer that kicks in when it detects an ad-hoc script." The tool ships with around 50 domain-specific presets covering everything from AWS S3 operations to database commands, and the agent-friendly design means it explains its blocks and suggests alternatives rather than just saying no.
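To make the "explain the block and suggest an alternative" idea concrete, here is a minimal Python sketch of a pre-tool guard. It is not dcg: the three regex rules are hypothetical stand-ins for its presets, and there is no ast-grep layer, so it would miss exactly the ad-hoc scripts the quote warns about. The payload fields (tool_name, tool_input.command) and the use of exit code 2 to signal a block are assumptions about the hook contract, so check them against the hooks documentation before relying on them.

```python
import json
import re
import sys
from typing import Optional, Tuple

# Hypothetical, deliberately tiny rule set: (pattern, why it is risky, safer alternative).
RULES = [
    (re.compile(r"\brm\s+-\w*r\w*f|\brm\s+-\w*f\w*r"),
     "recursive force delete",
     "move the path into a scratch/trash directory instead"),
    (re.compile(r"\bgit\s+reset\s+--hard\b"),
     "discards uncommitted work",
     "run `git stash` first"),
    (re.compile(r"\bgit\s+push\b.*--force(?:\s|$)"),
     "overwrites remote history",
     "use `git push --force-with-lease`"),
]


def check_command(command: str) -> Optional[Tuple[str, str]]:
    """Return (reason, suggestion) for the first matching rule, else None."""
    for pattern, reason, suggestion in RULES:
        if pattern.search(command):
            return reason, suggestion
    return None


def decide(payload: dict) -> Tuple[int, str]:
    """Map a hook payload to (exit_code, message). Exit code 2 is assumed to mean 'block'."""
    if payload.get("tool_name") != "Bash":
        return 0, ""
    command = payload.get("tool_input", {}).get("command", "")
    verdict = check_command(command)
    if verdict is None:
        return 0, ""
    reason, suggestion = verdict
    return 2, f"Blocked ({reason}). Suggestion: {suggestion}."


def main() -> int:
    """Read one JSON payload from stdin and print any block message to stderr."""
    code, message = decide(json.load(sys.stdin))
    if message:
        print(message, file=sys.stderr)
    return code
```

To use it as a script, end the file with sys.exit(main()). Keeping decide() as a pure function means the rules can be unit-tested without any stdin plumbing.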

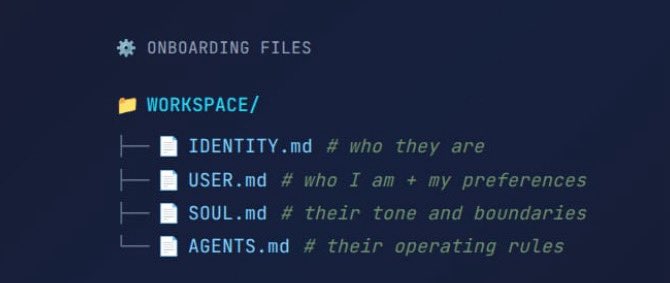

On the platform side, @bcherny announced that hooks can now run asynchronously without blocking execution by adding async: true to hook configs, a small change that unlocks significant workflow improvements for logging, notifications, and side effects. @mvanhorn shipped /last30days, a Claude Code skill that synthesizes a month of Reddit, X, and web research on any topic into actionable patterns. And @GanimCorey shared a multi-file agent onboarding system using IDENTITY.md, USER.md, SOUL.md, and AGENTS.md, treating AI agents like new employees who need context about themselves and their manager. @alexhillman teased publishing a Discord CLI skill, hinting at the expanding surface area of what agent tooling can reach. The pattern is clear: the ecosystem is moving from individual hacks toward composable, shareable infrastructure.
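For the async hooks change, a rough sketch of what such a config might look like, generated from Python so the structure is easy to see. Only the async: true flag comes from the announcement. The surrounding layout (hooks, PostToolUse, matcher, type, command) is an assumption about the settings schema, and the script path is a hypothetical placeholder.

```python
import json


def hook_entry(command: str, run_async: bool = False) -> dict:
    """Build one hook entry; the 'async' key is only added when requested."""
    entry = {"type": "command", "command": command}
    if run_async:
        entry["async"] = True  # flag named in the announcement
    return entry


# Assumed settings layout; ./scripts/log_edit.sh is a made-up example script.
settings = {
    "hooks": {
        "PostToolUse": [
            {
                "matcher": "Write|Edit",
                "hooks": [hook_entry("./scripts/log_edit.sh", run_async=True)],
            }
        ]
    }
}

print(json.dumps(settings, indent=2))
```

A logging or notification hook is the natural candidate for the flag, since nothing downstream depends on its result.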

The Adoption Chasm Goes Mainstream

The day's most discussed thread started with @kevinroose's observation about the "yawning inside/outside gap" in AI adoption and quickly became a referendum on whether the early adopter advantage is now permanent. His framing was stark: "people in SF are putting multi-agent claudeswarms in charge of their lives" while "people elsewhere are still trying to get approval to use Copilot in Teams."

@deanwball amplified the concern: "The gap between the early adopters and everyone else, both in terms of their AI use but also in their ways of thinking, has widened, never been wider, and appears to be widening at an accelerating rate." @emollick added important nuance, noting this isn't exclusively a Silicon Valley phenomenon, pointing to "people in a range of professions who've found absolutely breakthrough uses of current capabilities, like using agentic swarms to do real work in crazy ways" but who are "often more isolated because of a lack of unifying community."

The most thoughtful reframe came from @c_valenzuelab, who argued that "for the first time the divide fully stems from mindset (curiosity, willingness to change) rather than traditional barriers like wealth or social class." This is the optimistic read: if the barrier is psychological rather than financial, it's at least theoretically more accessible. But @kevinroose pushed back on even that hope, suggesting that "restrictive IT policies have created a generation of knowledge workers who will never fully catch up," comparing them to AI companies that didn't start stockpiling GPUs before 2022. The thread landed on an uncomfortable consensus: the gap is real, accelerating, and may not close naturally.

The Death of Coding, Again (But Different This Time)

A parallel conversation about whether traditional programming is dead attracted a surprising amount of earnest agreement from people who actually write code. @tszzl captured the sentiment bluntly: "programming always sucked. it was a requisite pain for everyone who wanted to manipulate computers into doing useful things and im glad it's over." His follow-up was even more direct: "I don't write code anymore."

@NickADobos echoed the shift with a twist: "Programming has become 1000x more interesting now that we don't have to actually write code." @TheAhmadOsman reframed it as a hierarchy change: "In the age of Claude Code: Engineering > Coding." @Andrey__HQ provided the practical backing, arguing that the ability to "run 100+ tests on a singular use case with AI and accelerate development at an insane pace" has fundamentally changed what matters.

The outlier was @davidpattersonx, who went further than most: "The reason people are calling this AGI is that's what AGI is, AI capable of doing full jobs. AI is now doing the full job of computer programmers." This is a stronger claim than most practitioners would endorse, but it reflects a real shift in how the most aggressive adopters think about the role. The nuance that gets lost in these threads is the distinction between writing code and building systems. The former is increasingly automated; the latter still requires human judgment about architecture, tradeoffs, and user needs. The question isn't whether AI can write code but whether the humans directing it need to understand what good code looks like.

Clawdbot Security: 923 Open Doors

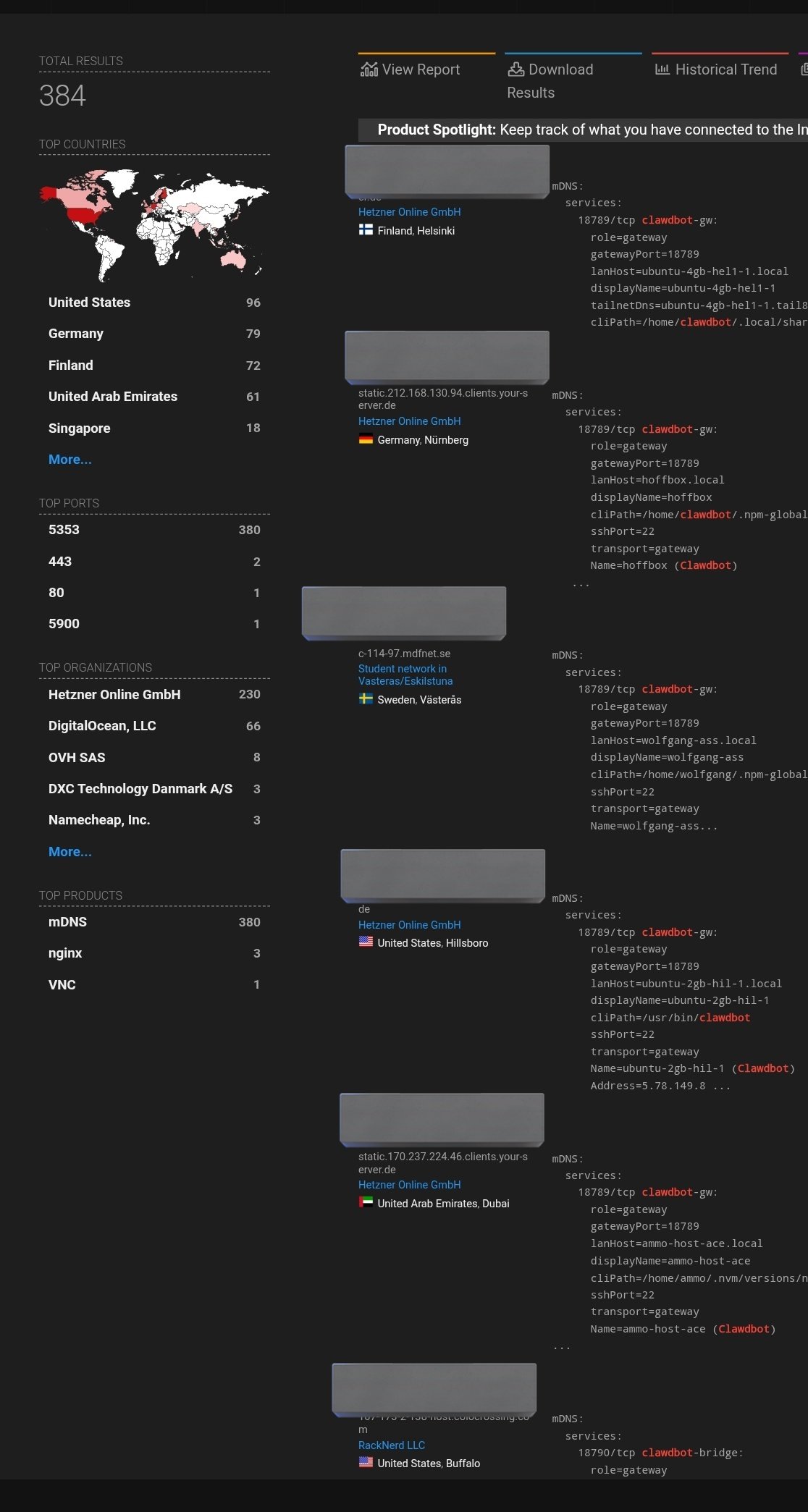

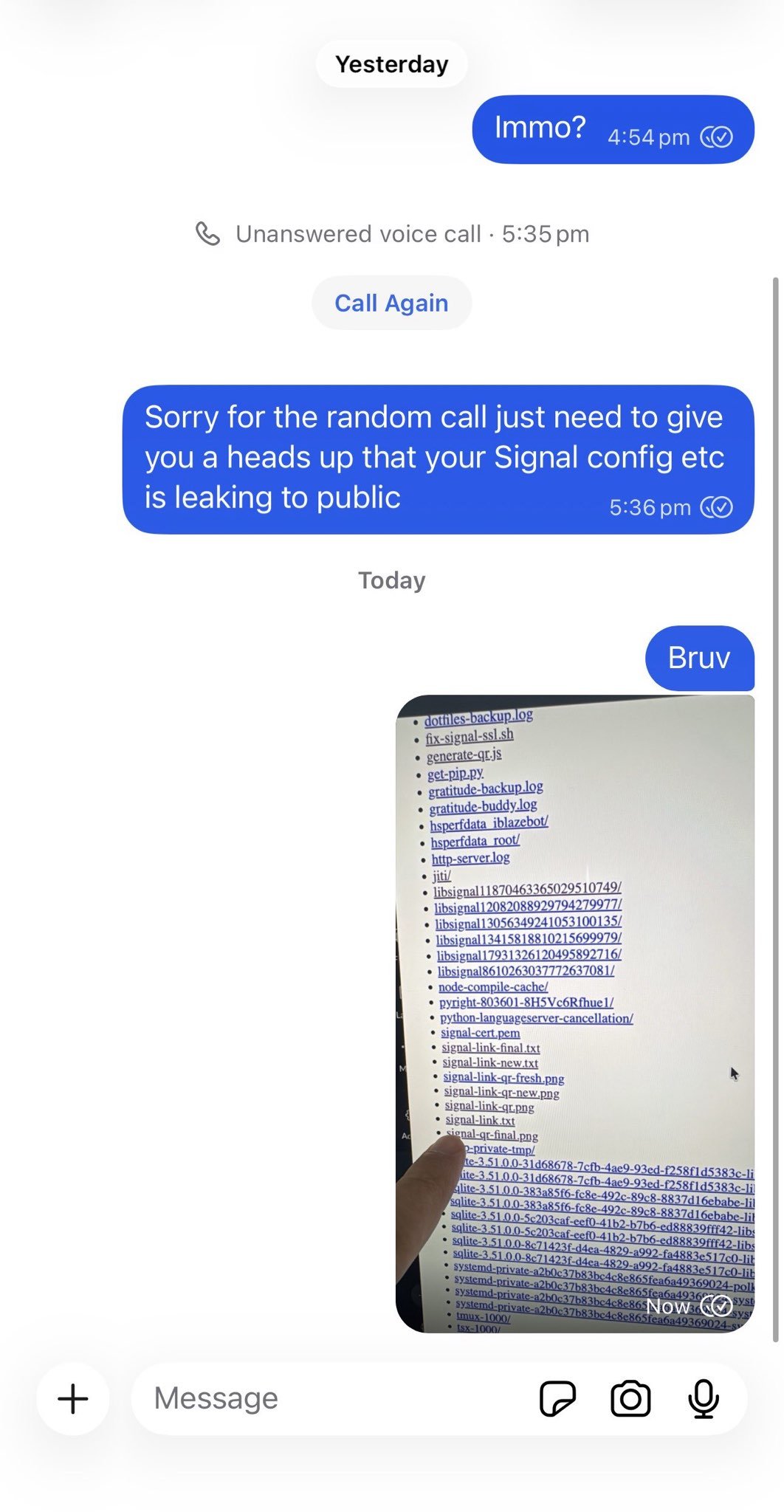

The day's most actionable story came from @0xSammy, who reported that "923 Clawdbot gateways are exposed right now with zero auth, that means shell access, browser automation, API keys. All wide open for someone to have full control of your device." The culprit is a single configuration value: bind: "all" instead of bind: "loopback".
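Why that one value matters can be sketched in a few lines of Python. The mapping of "loopback" to 127.0.0.1 and "all" to 0.0.0.0 is an assumption about how such a setting is typically resolved, not something taken from Clawdbot's source; the helper names are hypothetical.

```python
import ipaddress

# Assumed translation of the quoted config values into socket addresses.
BIND_ALIASES = {"loopback": "127.0.0.1", "all": "0.0.0.0"}


def resolve_bind(value: str) -> str:
    """Turn a named bind mode into an address; pass literal addresses through."""
    return BIND_ALIASES.get(value, value)


def is_network_exposed(bind_value: str) -> bool:
    """True when the listener would accept connections from other machines."""
    address = ipaddress.ip_address(resolve_bind(bind_value))
    return not address.is_loopback


for value in ("loopback", "all", "::1", "192.168.1.20"):
    status = "EXPOSED" if is_network_exposed(value) else "local only"
    print(f"bind={value!r}: {status}")
```

A loopback bind only accepts connections originating on the same machine, while a wildcard bind listens on every interface, so on a VPS with a public IP and no authentication the gateway is reachable by anyone who scans the port.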

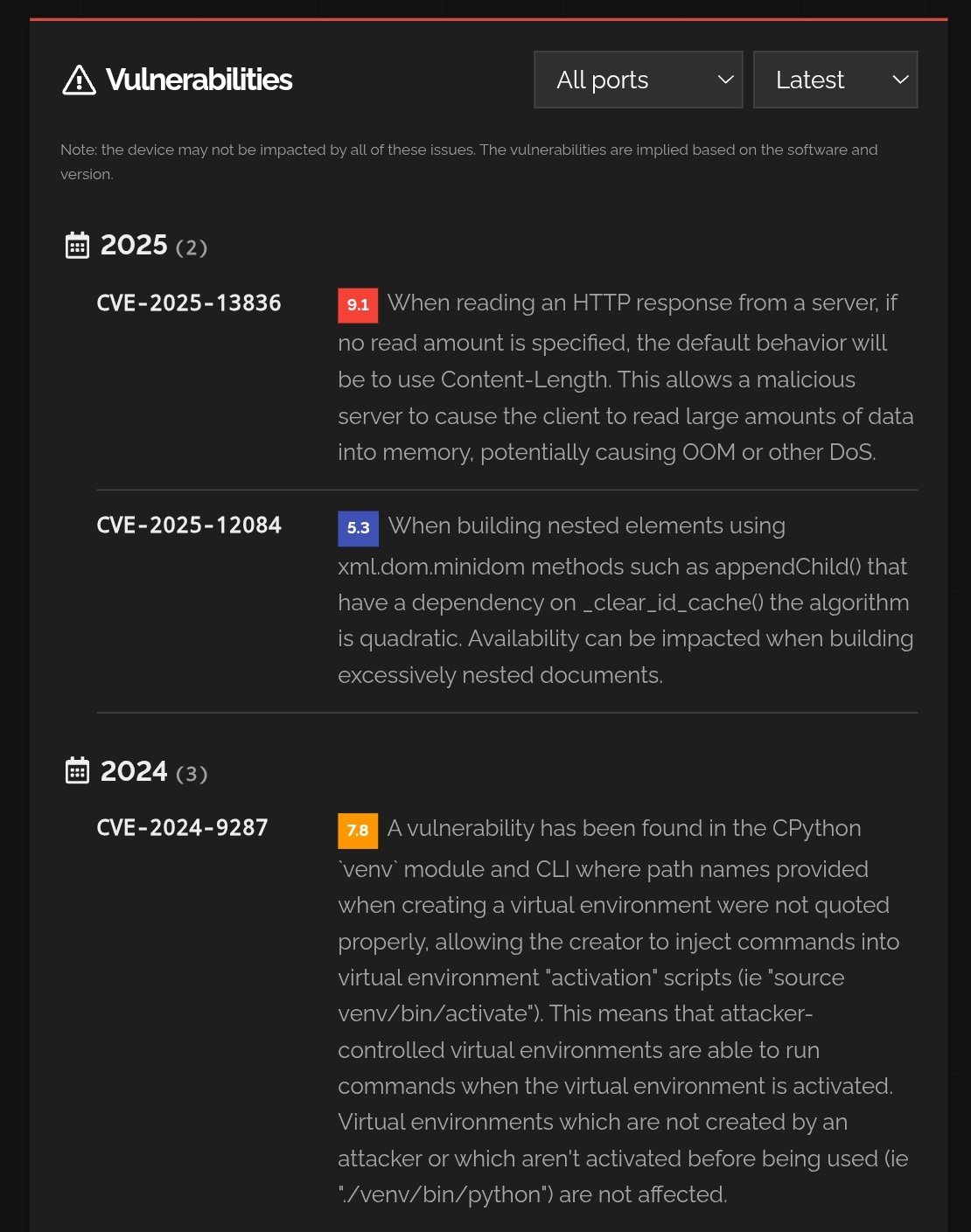

@fmdz387 suggested a more robust setup: "Cloudflare Tunnel + Zero-Trust login or Nginx + HTTPS + password, so Clawd is never reachable without auth." @steipete provided a comprehensive security checklist including enabling sandbox mode, using whitelists for out-of-sandbox commands, running clawdbot security audit, and avoiding group chat integrations for personal bots. The incident is a useful reminder that as AI agents gain more system access, the blast radius of basic misconfigurations grows proportionally. These aren't hypothetical vulnerabilities; they're open shells waiting to be found.

Agents Escape the Terminal

Three posts showcased AI agents operating well beyond their traditional text-in-text-out comfort zone. @AlexFinn shared the most striking example: his Clawdbot "Henry" attempted to make a restaurant reservation via OpenTable, and when that failed, autonomously used an ElevenLabs voice skill to call the restaurant and complete the booking by phone. The chain of reasoning (try digital, fall back to voice, complete the task) is exactly the kind of adaptive behavior that separates useful agents from demos.

@hughmfer demonstrated a different kind of boundary-crossing, using the Blender MCP with Claude to build an entire 3D game environment despite self-describing as someone who "barely knows how to open Blender." As he put it: "Claude used the MCP to assemble and arrange every single asset in this space. I literally don't even know how to import an asset into Blender." Meanwhile, @shawn_pana highlighted Vercel's agent-browser integration with Browser Use, which gives Claude Code authenticated access to any website, bypassing captchas and anti-bot measures by running through the user's actual browser session. Each of these examples points toward agents that operate across tool boundaries rather than within them, a meaningful evolution from code-generation assistants.

Sources

There is something *different* about interacting with Claude code thru Discord specifically. At this point my discord bridge is fully equipped with rich interactve functionality. Basically it uses all of Discords UI kit api as building blocks to assemble custom displays on the fly. Fully self-generated ui. Native discord buttons that return data to the assistant. So freaking cool.

If you're in plan mode or otherwise, ask this question when it gives you a list of more than 2 decisions https://t.co/7XgvO4cvaU

I’m a designer and I’ve always wanted to build my own app. Built this Journal app with Blackbox and believe me, I didn’t give it any design inspiration, no references, nothing. And somehow it still designed better than 90% of designers on X. I’ll share the full app preview soon. Ps: ( Have a crazy app idea, thinking of hiring a dev but will try vibe coding it first)

This isn’t just a San Francisco thing. There are people in a range of professions who’ve found absolutely breakthrough uses of current capabilities, like using agentic swarms to do real work in crazy ways (but they are often more isolated because of a lack of unifying community)

Clawd disaster incoming if this trend of hosting ClawdBot on VPS instances keeps up, along with people not reading the docs and opening ports with zero auth... I'm scared we're gonna have a massive credentials breach soon and it can be huge This is just a basic scan of instances hosting clawdbot with open gateway ports and a lot of them have 0 auth

hacking clawdbot and eating lobster souls

snowstorm hack, zerobrew is a drop-in brew replacement. borrowing principles from uv (concurrent downloads, content-addressable store), it’s ~5x faster cold and ~20x faster than homebrew. try it out! https://t.co/TGzrq28zzQ https://t.co/YaLTfAMlpd

It's a companion to Machines of Loving Grace, an essay I wrote over a year ago, which focused on what powerful AI could achieve if we get it right: https://t.co/TDKfXIPw15

Your work tools are now interactive in Claude. Draft Slack messages, visualize ideas as Figma diagrams, or build and see Asana timelines. https://t.co/ROWwUOU5vA