Claude Code 'Swarms' Unlock Multi-Agent Delegation as Skills-Sharing Ecosystem Takes Off

The Claude Code and Clawdbot community hit a fever pitch with the discovery of a hidden "Swarms" delegation feature, a wave of publicly shared skill libraries, and spirited debate over async subagent workflows. Meanwhile, @alexhillman coined "software tailoring" as a new frame for AI-assisted development, and Alibaba pushed Qwen3-TTS updates that have the open-source voice AI crowd declaring victory over proprietary alternatives.

Daily Wrap-Up

January 24th felt like the day the agentic coding movement graduated from "neat trick" to "genuine workflow." The sheer volume of Claude Code and Clawdbot content in the timeline was staggering, but what stood out wasn't the quantity. It was the maturity. People aren't just prompting anymore. They're building skill libraries, sharing them publicly, orchestrating subagents with explicit guardrails, and discovering features like Claude Code Swarms that turn the AI from a pair programmer into a project manager. The skills-sharing culture that's emerging looks a lot like the early days of npm or VS Code extensions, except the barrier to entry is even lower and the surface area of what you can automate is dramatically wider.

The philosophical counterweight came from @alexhillman, who dropped one of the more memorable frames in recent memory: "software tailoring." The idea that we're not just building software but fitting it to ourselves, the way a tailor adjusts a suit, resonated hard. Combined with @Hesamation's carpenter analogy about the genuine grief developers feel when the craft portion of their work gets automated, the day produced a surprisingly thoughtful conversation about identity alongside all the power-user tips. The tension between "this is incredible" and "what does this mean for us" is getting more nuanced, and that's healthy.

The most practical takeaway for developers: if you're using Claude Code or similar agentic tools, invest time in building reusable skills and CLI wrappers for the APIs you interact with daily. Multiple people independently confirmed that the "CLI for every app" pattern compounds fast, and publicly shared skill libraries like @jdrhyne's 20-skill collection and @LLMJunky's Context7 approach give you a running start instead of building from scratch.

Quick Hits

- @timolins recommends UTM for free, open-source virtualization on Mac.

- @prmshra built their company website personally because "if words were enough to cure cancer, my company would not exist."

- @JohnPhamous shipped an animated dog using a 57kb sprite sheet with zero dependencies.

- @0xgaut posted the universal Claude Code experience: "Claude usage limit reached. Your limit will reset at 7 AM."

- @irl_danB has a request: "I don't want to hear any of you utter the word microservice ever again."

- @milichab teased IsoCity + IsoCoaster coming to desktop with split panes.

- @akshay_pachaar shared "The 2026 AI Engineer roadmap."

- @HuggingModels highlighted a production-ready OCR tool claiming 99% accuracy.

- @CodeByNZ posted the definitive "what to build" list, from operating systems to zero-knowledge proof systems.

- @elonmusk announced xAI is working on topic-specific For You tabs, like a dedicated AI feed with no political rage bait.

- @MiniMax_AI promoted M2-her for optimized roleplay with longer coherence.

- @Sdefendre dropped a link in reply to Matthew Berman's Clawdbot excitement.

Claude Code and Clawdbot: Skills, Swarms, and Psychosis

The agentic coding ecosystem around Claude Code and Clawdbot dominated the timeline with a density that suggests we're past early adoption and into a genuine tooling culture. The biggest discovery of the day came from @NicerInPerson, who described unlocking a hidden "Swarms" capability in Claude Code where the AI stops writing code and starts acting as a team lead, spawning specialist workers who share a task board, work in parallel, and coordinate with each other before reporting back. This isn't theoretical multi-agent orchestration from a research paper. It's someone finding it in a shipping product.
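The "team lead plus shared task board" shape described above can be sketched in a few lines. This is only an illustration of the general pattern, not how Claude Code implements Swarms: `run_swarm`, the worker names, and the placeholder "work" are all hypothetical, and the workers here do not coordinate with each other beyond sharing the board.

```python
import queue
import threading


def run_swarm(tasks, num_workers=3):
    """Fill a shared task board, let workers drain it in parallel, collect reports."""
    board = queue.Queue()      # shared task board (hypothetical stand-in)
    reports = {}               # what each worker reports back to the lead
    lock = threading.Lock()

    for task in tasks:
        board.put(task)

    def worker(name):
        while True:
            try:
                task = board.get_nowait()
            except queue.Empty:
                return         # nothing left on the board, worker retires
            outcome = f"{name} finished {task}"  # placeholder for real work
            with lock:
                reports[task] = outcome

    threads = [
        threading.Thread(target=worker, args=(f"worker-{i}",))
        for i in range(num_workers)
    ]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()          # the lead waits for every worker to report
    return reports
```

Calling `run_swarm(["schema", "api", "tests"])` returns one report per task; which worker picked up which task varies from run to run.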

The skills-sharing ecosystem matured noticeably. @jdrhyne published a collection of 20 skills for Clawdbot, Claude Code, Codex, and Cursor, covering everything from Remotion video creation to multi-agent task orchestration to "Charlie Munger mental models for daily review." Credit went to @LLMJunky, who has been sharing skills publicly and also pushed the idea of using Context7 API access in your CLAUDE.md to keep agents current past their knowledge cutoff. @francedot open-sourced their Remotion video setup after getting flooded with questions, showing how they produced a product launch video in two hours.

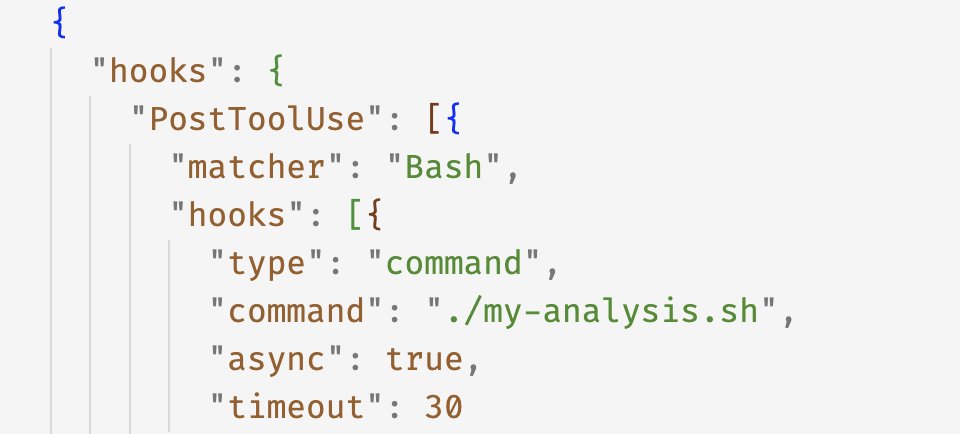

The workflow discourse got specific and tactical. @NicerInPerson evangelized backgrounded async subagents as a major unlock: "It returns control to you, so you can keep discussing things with the main agent and kicking off additional work." Meanwhile @nateliason shared the exact guardrails he uses to force Clawdbot to delegate coding tasks to Codex CLI rather than spawning its own subagents, complete with the CLAUDE.md instructions to make it stick. @alexhillman described building a "CLI creator skill" that he can throw at any API docs, which asks him a few questions and generates a custom CLI plus documentation plus skill. @Voxyz_AI confirmed the pattern: "the CLI for every app approach is underrated... it compounds fast once you have a few of these scripts talking to each other."
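The "returns control to you" idea behind backgrounded subagents maps onto ordinary async task scheduling. The sketch below uses Python's `asyncio` as an analogy only; `subagent` is a hypothetical stand-in that sleeps instead of doing real work, and nothing here reflects Claude Code's or Clawdbot's internals.

```python
import asyncio


async def subagent(name, seconds):
    """Hypothetical delegated task; sleeps in place of real work."""
    await asyncio.sleep(seconds)
    return f"{name} done"


async def main():
    # Kick off background work; create_task schedules it and returns immediately.
    jobs = [
        asyncio.create_task(subagent("tests", 0.02)),
        asyncio.create_task(subagent("docs", 0.01)),
    ]
    # The "main agent" stays free to do other things while the jobs run.
    log = ["main agent still responsive"]
    # Collect results only when they are needed; gather keeps the input order.
    log.extend(await asyncio.gather(*jobs))
    return log
```

Running `asyncio.run(main())` shows the main flow logging its own entry before either background job has reported back.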

On the lighter side, the term "Claude Code psychosis" made multiple appearances. @fabianstelzer offered a guide to recognizing the warning signs early, while @godofprompt simply confirmed: "claude code psychosis is real." And @mrdoob casually ported Quake to Three.js in an hour, which is the kind of flex that makes the psychosis seem worth it.

Software Tailoring and the Developer Identity Crisis

@alexhillman offered what might become one of the defining metaphors for this era of software development. Rather than thinking of AI-assisted coding as "software development," he proposed "software tailoring," comparing it to the difference between a suit off the rack and one fitted specifically to the wearer. The function hasn't changed, but the fit and flow are transformed. "I think we're entering into an era of Custom Tailored Software," he wrote. "Not everyone will build their own, but once you've had one, it's gonna be hard to come back."

He backed it up with specifics about his own toolkit: CLIs for every API-connected app, prototypes in markdown before converting to deterministic code, SQLite or JSON for persistence depending on context, and Laravel plus React for anything more complex. What makes this credible is that @alexhillman is explicit about not being a professional developer. He's a multi-business owner who used Claude Code to understand a complex local tax model and turn it into a calculator any Philadelphia business owner could use. His advice on the learning dimension was equally grounded: "If you're not learning while you're yolo-ing, you're doing yourself a disservice."
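As a rough illustration of the "small CLI plus JSON persistence" combination, here is a minimal sketch. It is not @alexhillman's tooling: the `items` command, the `items.json` file name, and the `add`/`list` subcommands are all assumptions made for the example, and a real wrapper would call an API where this one only stores text.

```python
import argparse
import json
from pathlib import Path


def load(path):
    """Return saved items, or an empty list if the file does not exist yet."""
    return json.loads(path.read_text()) if path.exists() else []


def main(argv=None, path=Path("items.json")):
    """Parse a subcommand, update the JSON file if needed, return the items."""
    parser = argparse.ArgumentParser(prog="items")
    sub = parser.add_subparsers(dest="command", required=True)
    add = sub.add_parser("add", help="store one item")
    add.add_argument("text")
    sub.add_parser("list", help="show stored items")
    args = parser.parse_args(argv)

    items = load(path)
    if args.command == "add":
        items.append(args.text)
        path.write_text(json.dumps(items, indent=2))
    return items
```

Calling `main(["add", "milk"])` appends to the file and `main(["list"])` reads it back. With `argv` left as `None`, `argparse` reads the real command line, so a one-line entry-point script that calls `main()` turns this into an actual CLI.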

The philosophical counterpoint came from @Hesamation, who pushed back on the dismissive "coding was never the goal" crowd with a carpenter analogy that landed hard: "A carpenter's job isn't to cut wood, it's to create objects. But in that process he pours his love of the job and his years of mastering the wood into it." The point isn't that developers are wrong about what their job is. The point is that the craft was the passion, and stripping it away produces real grief regardless of whether the job title survives. @jamonholmgren added a well-timed reality check aimed at the developer who demands that everyone vet every line of AI-generated code while "shipping bundles full of NPM libraries he's never looked at for years."

Personal AI Assistants Hit the Integration Tipping Point

The Clawdbot-as-personal-assistant thread showed signs of crossing from novelty into genuine utility. @MatthewBerman captured the moment of realization that hooks so many people: "Oh ok wow. I see why everyone is talking about this." He listed connecting Slack, Telegram, Gmail, Calendar, Asana, HubSpot, and Obsidian, which is the kind of integration density that turns a chatbot into an operating system for your life.

@ArnovitzZevi described a particularly domestic workflow: connecting a shared Apple Reminders grocery list with a spouse through a Telegram group, using voice transcription via local Whisper to add items throughout the week, then having the AI add everything to a cart on grocery day so "all you need to do is pay." @techfrenAJ showed the deployment side is getting trivial too, claiming a five-minute setup on AWS free tier with interfaces through WhatsApp, Discord, and Telegram, and noting people are connecting it to Ray-Ban smart glasses for real-time price comparisons.

Open Source Voice AI and the Local Inference Push

Alibaba's Qwen team dropped a substantive update on Qwen3-TTS that addressed the community's most common questions. @Alibaba_Qwen confirmed streaming inference support is in progress with the vLLM team, recommended using Voice Design plus the Base model's clone feature for consistent voice tone, and teased an upcoming 25Hz model that will bring Instruct-style emotion and style control to the Base model. @aisearchio went further, declaring "RIP Elevenlabs" and highlighting that Qwen3-TTS is free, open source, requires less than 2GB VRAM, and includes instant voice cloning plus emotion control.

This feeds into a broader local-AI momentum that @TheAhmadOsman captured across two posts. "Calling it now," he wrote. "Open source AI will win. AGI will run local, not on someone else's servers." His follow-up noted that his entire feed was people discussing local models and buying GPUs and Macs to run them, calling it "the good timeline." Whether or not the AGI prediction lands, the practical reality is that capable models running on consumer hardware are getting good enough to matter, and the voice synthesis space is the latest domain where open source is closing the gap fast.

AI-Powered Creative Tools Push Boundaries

The creative applications of AI coding tools produced some of the day's most visually striking results. @minchoi highlighted VIGA, a system that "thinks with Blender," taking any image and writing Blender code to render, compare, and self-correct until the 3D scene matches the input. It's a compelling example of code-generation AI applied to a domain where the feedback loop is visual rather than logical.
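The render, compare, and self-correct loop is easier to see in miniature. The toy below is not VIGA and involves no Blender or model calls: `render` is a hypothetical renderer whose "image" is just a list of numbers, and the correction step is a simple nudge toward the target where a real system would regenerate code.

```python
def render(params):
    """Hypothetical renderer: the 'image' is simply a copy of the parameters."""
    return list(params)


def refine(target, params, step=0.5, tolerance=1e-3, max_rounds=100):
    """Render, compare against the target, and correct until the error is small."""
    for round_number in range(max_rounds):
        image = render(params)
        errors = [t - p for t, p in zip(target, image)]
        if max(abs(e) for e in errors) < tolerance:
            return params, round_number   # close enough, stop correcting
        # Correction step: move each parameter part of the way toward the target.
        params = [p + step * e for p, e in zip(params, errors)]
    return params, max_rounds
```

The structure is the point: each round produces an output, measures how far it is from the reference, and feeds that gap back into the next attempt.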

On the video production side, @fkadev demonstrated using Claude Code with Remotion to build an Instagram content generation app optimized for Reels, complete with correct aspect ratios and safe areas, built across "just a few prompts." This pairs with @francedot's open-sourced Remotion templates, suggesting video production through code is becoming a genuinely accessible workflow rather than a demo curiosity. @nateliason's Clawdbot-generated "video game interface for controlling everything we're building together" rounded out the creative showcase, described simply as "WILD."

Sources

The Longform Guide to Everything Claude Code

In "The Shorthand Guide to Everything Claude Code", I covered the foundational setup: skills and commands, hooks, subagents, MCPs, plugins, and the co...

@lkr @clawdbot It would be amazing to have while travelling! So easy. Check out @alexhillman feed to how to setup on a virtual machine, easy peasy. As in, just ask Claude code 😁 (I’m at your level, I just ask CC for everything, I have no idea what the code is doing, just yolo it)

Qwen3-TTS is officially live. We’ve open-sourced the full family—VoiceDesign, CustomVoice, and Base—bringing high quality to the open community. - 5 models (0.6B & 1.8B) - Free-form voice design & cloning - Support for 10 languages - SOTA 12Hz tokenizer for high compression - Full fine-tuning support - SOTA performance We believe this is arguably the most disruptive release in open-source TTS yet. Go ahead, break it and build something cool. 🚀 Everything is out now—weights, code, and paper. Enjoy. 🧵 Github: https://t.co/X4CNGRpBAG Hugging Face: https://t.co/QzshIqzYDU ModelScope: https://t.co/XaWVuDerZ6 Blog: https://t.co/xPER3lyeb5 Paper: https://t.co/9mi5dFyJza Hugging Face Demo: https://t.co/cL7AyaMDwM ModelScope Demo: https://t.co/MYpIeYdYN5 API: https://t.co/lIEikdB6uM

bro are you fucking serious all of ChatGPT runs on postgres with only one fucking writer??? https://t.co/7SPqe0Kc1i

I am a multi-business owner, not a professional software developer. So since using Claude Code to build more and more custom apps for myself and my businesses, I've started thinking about this process less as software development and more as *software tailoring.* A good suit looks and feels good on anybody. But the same suit tailored for the wearer looks and feels AMAZING. The suit itself hasn't changed. Still the same amount of sleeves, cuffs, collar, etc. Tailoring not about function or form, but about FIT and FLOW. I think we're entering into an era of Custom Tailored Software. Not everyone will build their own, but once you've had one, it's gonna be hard to come back.

Fun new model from @MiniMax_AI: M2-her Optimized for roleplay, with new message roles 👀 https://t.co/iSD05Qit2f

@alexhillman @steipete the "CLI for every app" approach is underrated been doing something similar, it compounds fast once you have a few of these scripts talking to each other

programming always sucked. it was a requisite pain for ~everyone who wanted to manipulate computers into doing useful things and im glad it’s over. it’s amazing how quickly I’ve moved on and don’t miss even slightly. im resentful that computers didn’t always work this way

i follow AI adoption pretty closely, and i have never seen such a yawning inside/outside gap. people in SF are putting multi-agent claudeswarms in charge of their lives, consulting chatbots before every decision, wireheading to a degree only sci-fi writers dared to imagine. people elsewhere are still trying to get approval to use Copilot in Teams, if they're using AI at all. it's possible the early adopter bubble i'm in has always been this intense, but there seems to be a cultural takeoff happening in addition to the technical one. not ideal!

Clawdbot is awesome 🦞 But I just checked Shodan and there are exposed gateways on port 18789 with zero auth That's shell access, browser automation, your API keys Cloudflare Tunnel is free, there's no excuse RT to save a ClawdBot from getting cooked https://t.co/RC08q9Cstm