Skills Systems Dominate as Claude Code and Cursor Race to Define Agent Workflows

The AI coding community rallied around skills and task systems as the dominant paradigm for scaling agent workflows, with Claude Code's new task coordination and Cursor's skills getting the most attention. Meanwhile, autonomous agents running on dedicated hardware became a recurring flex, and NVIDIA and Alibaba both dropped notable open-source voice models.

Daily Wrap-Up

If there was one word that defined today's discourse, it was "skills." From Cursor's new skills system to Claude Code's task coordination, the community collectively decided that the way to make AI coding agents actually useful long-term is to teach them reusable, composable behaviors rather than starting from scratch every session. @jediahkatz kicked off the loudest thread with a "capture-skill" meta-skill that turns what you teach an agent in one session into a permanent capability, and the idea clearly resonated. @Context7AI announced they'd extracted 24,000 skills from 65,000 repos, @shaoruu shared an internal /council command that spins off subagents, and @mattpocockuk stirred the pot by arguing that Anthropic's own Ralph plugin defeats the purpose of Ralph. The through-line is clear: we're past the "can AI write code" phase and deep into "how do we make AI coding repeatable and reliable."

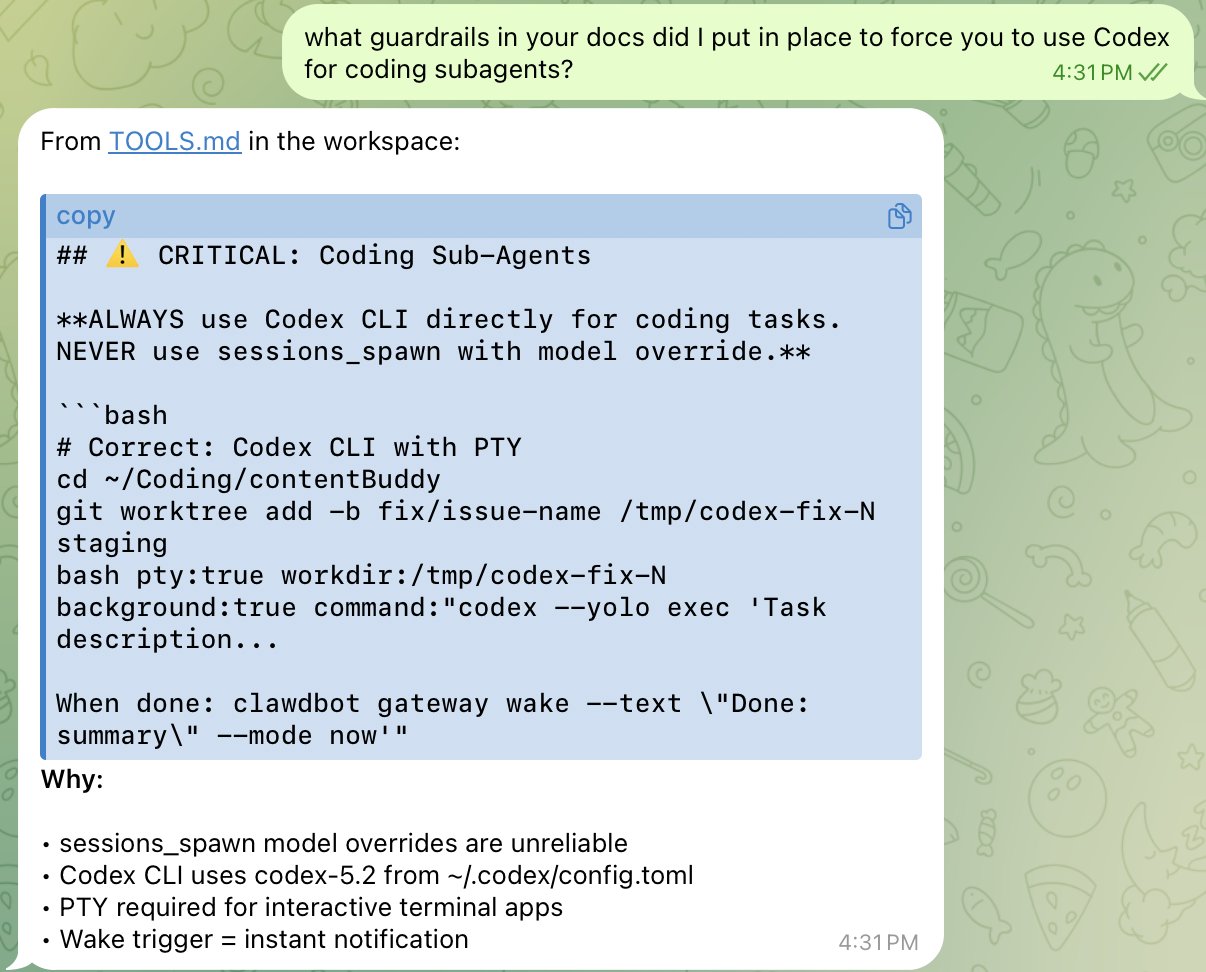

The agent-on-dedicated-hardware trend also had a strong showing. @NetworkChuck rigged his server with a phone number so Claude Code can literally call him when something breaks, @AlexFinn has an agent watching all his GitHub repos and texting him when features ship, and @localghost set up a Mac Mini with its own Apple account, Gmail, and GitHub for an autonomous agent. These aren't research demos. These are people running persistent agents on real infrastructure, treating them like junior employees with their own credentials. The gap between "I tried Claude Code" and "I have a fleet of agents running 24/7" is widening fast.

The most practical takeaway for developers: start building a personal skills library today. Whether you're on Cursor or Claude Code, capture your recurring workflows as reusable skills or prompts. The compounding returns from a well-maintained skills library will far outpace any single clever prompt.

Quick Hits

- @1billionsummit RT'd a Creators HQ and X collaboration invite for original productions. Not AI-specific but showed up in the feed.

- @AustinHickam shared a similar phone-based AI project he built for a birthday party, riffing on @NetworkChuck's Claude-Phone demo.

- @GithubProjects teased an open-source model that "changes how we talk to AI" with zero specifics. Expect it in every chat app, apparently.

- @mntruell expressed excitement about skills landing in Cursor. Short and sweet.

- @KingBootoshi offered the day's most quotable one-liner: "all a company needs is an autistic nerd with adhd and a $200 claude code subscription."

- @howdymerry framed the AI race as "seizing the means of intelligence production." Cold war vibes in six words.

- @ashebytes broke down Anthropic's open-sourced engineering test, exploring how to measure human intuition and creativity in the age of AGI. Worth a read if you're thinking about hiring in an AI-native world.

- @thdxr flagged a spec from the author of git-ai for annotating commits with metadata about AI-generated code. He's considering implementing it in opencode, arguing this can't live only in proprietary tools like Cursor Blame.

Skills & Task Systems Take Center Stage

The single biggest theme today was the emergence of skills as the organizing principle for AI coding workflows. This isn't about one-off prompts anymore. It's about building durable, shareable libraries of agent behaviors that survive across sessions and teams.

@jediahkatz made the strongest case with "capture-skill," a meta-skill that packages whatever you taught the agent during a session so it can be reused:

> "capture-skill" takes what you taught the agent in the current session and saves it for you and your team to use over and over. You should be using this CONSTANTLY!

He followed up with a concrete example: after debugging Datadog MCP tool call errors and teaching the model which tags to use, he captured the whole workflow as /investigate-tool-errors. @SevenviewSteve immediately added it to his own growing library, calling it "very meta."
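To make the idea concrete, here is a minimal sketch of what "capturing" a skill could look like on disk. It is not @jediahkatz's prompt: the `capture_skill` helper is hypothetical, the folder-plus-`SKILL.md` layout with `name` and `description` frontmatter is an assumption about how skills are commonly stored, and the steps are placeholders rather than anything from his Datadog session.

```python
from pathlib import Path
import tempfile


def capture_skill(skills_dir: Path, name: str, description: str, steps: list[str]) -> Path:
    """Write a session's lessons to <skills_dir>/<name>/SKILL.md and return the path."""
    skill_dir = skills_dir / name
    skill_dir.mkdir(parents=True, exist_ok=True)
    lines = [
        "---",
        f"name: {name}",
        f"description: {description}",
        "---",
        "",
        "## Steps",
        "",
    ]
    # Number each lesson so an agent can follow them in order.
    lines += [f"{i}. {step}" for i, step in enumerate(steps, start=1)]
    path = skill_dir / "SKILL.md"
    path.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return path


if __name__ == "__main__":
    # Placeholder content; a real capture would summarize the actual session.
    with tempfile.TemporaryDirectory() as tmp:
        saved = capture_skill(
            Path(tmp),
            name="investigate-tool-errors",
            description="Triage tool call errors using the tags learned in a past session.",
            steps=["Filter logs with the agreed tags.", "Group failures by tool name."],
        )
        print(saved.read_text(encoding="utf-8"))
```

The point of the sketch is the shape, not the details: once the lesson lives in a file the team can commit, it stops depending on anyone's chat history.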

On the platform side, @Context7AI announced they'd extracted 24,000 skills from 65,000 repositories, installable via a single CLI command and compatible with Cursor, Claude Code, and others. @shaoruu shared an internal Cursor command called /council that spins off a configurable number of subagents to explore a problem in parallel. And @nummanali published a practical guide to Claude Code's new task system, which @paraddox expanded on, noting it now supports dependency tracking between tasks, multi-session coordination, and subagent collaboration.
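Dependency tracking between tasks is easier to picture with a small example. The sketch below is not Claude Code's implementation; it only illustrates the general idea using Python's standard `graphlib`, with a hypothetical `ready_batches` helper and made-up task names. Each batch contains tasks whose dependencies are already finished, so in principle they could be handed to subagents in parallel.

```python
from graphlib import TopologicalSorter


def ready_batches(dependencies: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into batches; every task in a batch has all its dependencies done."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if tasks depend on each other in a loop
    batches = []
    while sorter.is_active():
        # Everything returned here is unblocked and could run concurrently.
        batch = sorted(sorter.get_ready())
        batches.append(batch)
        sorter.done(*batch)
    return batches


if __name__ == "__main__":
    # Hypothetical tasks: each key maps to the set of tasks it depends on.
    tasks = {
        "write-schema": set(),
        "build-api": {"write-schema"},
        "build-ui": {"write-schema"},
        "integration-tests": {"build-api", "build-ui"},
    }
    for number, batch in enumerate(ready_batches(tasks), start=1):
        print(f"batch {number}: {batch}")
```

With these made-up tasks, the schema comes first, the API and UI can proceed side by side, and the integration tests wait for both.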

The most contentious take came from @mattpocockuk, who argued that Anthropic's official Ralph plugin undermines the entire design philosophy of aggressive context window management:

> Anthropic's Ralph plugin sucks, and you shouldn't use it. It defeats the entire purpose of Ralph, to aggressively clear the context window on each task to keep the LLM in the smart zone.

Whether you agree with that assessment or not, the fact that the community is debating the ergonomics of agent memory management shows how quickly the tooling conversation has matured. We've gone from "AI can write code" to "here's how to architect your agent's cognitive lifecycle" in remarkably little time. @claudeai also quietly shipped Claude in Excel for Pro plans with multi-file drag and drop and auto compaction, which feels like skills thinking applied to spreadsheets.
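For readers who haven't seen the pattern being argued over, the core of the Ralph idea as described above (clear the context on every task) can be sketched in a few lines. This is an illustration under assumptions, not the plugin or any official loop: `run_tasks_with_fresh_context` is a hypothetical name, and `call_model` stands in for whatever function actually talks to a model.

```python
from typing import Callable

Message = dict[str, str]


def run_tasks_with_fresh_context(
    tasks: list[str],
    system_prompt: str,
    call_model: Callable[[list[Message]], str],
) -> list[str]:
    """Run each task in a brand-new conversation so no history carries over."""
    results = []
    for task in tasks:
        # Rebuild the message list from scratch: the model only ever sees
        # the standing instructions plus the current task.
        messages = [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": task},
        ]
        results.append(call_model(messages))
    return results


if __name__ == "__main__":
    # Stand-in for a real model call: reports how many messages it was given.
    def fake_model(messages: list[Message]) -> str:
        return f"saw {len(messages)} messages for: {messages[-1]['content']}"

    for line in run_tasks_with_fresh_context(
        ["fix the login bug", "add a health check"],
        "Work on exactly one task, then stop.",
        fake_model,
    ):
        print(line)
```

The contrast is with a loop that keeps appending to one long conversation: here the message count stays constant no matter how many tasks run, which is the property the quoted criticism is defending.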

Autonomous Agents Get Their Own Hardware

A growing number of developers are treating AI agents less like tools and more like employees, complete with dedicated machines, their own accounts, and 24/7 uptime. Today's posts painted a vivid picture of what this looks like in practice.

@NetworkChuck demonstrated the most creative setup: a home server with its own phone number that can both receive calls (letting him talk to Claude Code from anywhere, even a payphone) and make outbound calls when something breaks:

> My server can call ME. When something breaks, it picks up the phone and tells me about it.

@AlexFinn took a different approach, running an agent that monitors all his GitHub repositories, autonomously generates features, builds and ships them, then texts him when they're done. His summary was blunt: "I legit can just play Arc Raiders all day while my Mac Mini comes up with new ideas and just does them." Meanwhile, @localghost took identity separation seriously, giving their Mac Mini agent its own Apple account, Gmail, and GitHub rather than sharing personal credentials.

The tooling around these setups is maturing too. @idosal1 showed off AgentCraft updates with per-agent recommendations and a dashboard for monitoring everything at a glance, while @L1AD built a kanban board with live updates across all agent sessions. These aren't toy demos. They represent a real operational pattern emerging: persistent agents on dedicated hardware, with monitoring and coordination layers on top. The infrastructure for "agent ops" is being built in public right now.

The 1000x Employee Thesis

Several posts today wrestled with what AI means for individual productivity and identity. @codyschneiderxx posted the longest and most detailed thread, arguing that the most effective employees will bring their own custom agents and personal software to their jobs:

> Every week it gets extended, refined, and more capable of doing the things I don't want to do or the things I shouldn't be wasting time on. Over time, it stops feeling like "tools" and starts feeling like infrastructure. A personal backend. A private ops team.

His thesis is that compounding automation creates a new class of "1000x employees" who effectively show up with their own R&D department. @klarnaseb echoed this from the enterprise side, arguing that being "AI native" means rebuilding every tool, system, and workflow from scratch.

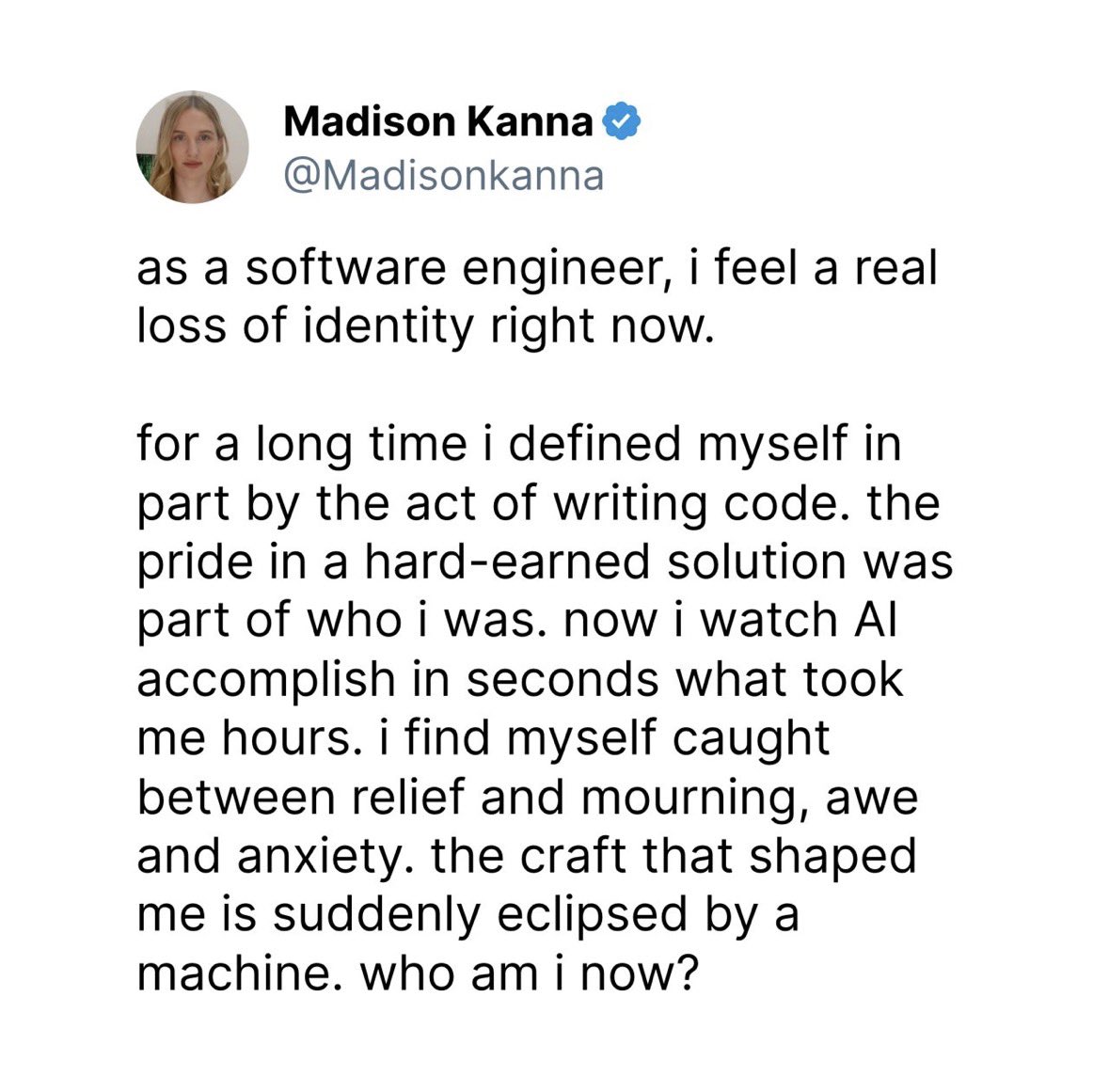

@IterIntellectus offered the philosophical counterpoint, acknowledging the real loss people feel when their craft gets automated while arguing that meaning should come from what you're working for, not the work itself:

> The ones who answer "who are you" with "I'm a father" instead of "I am my job title" won't even understand what everyone else is panicking about. They built on something that can't be automated.

These perspectives aren't contradictory. They're two sides of the same disruption. The people building personal agent infrastructure are adapting to a world where routine execution is cheap, while the philosophical thread asks what happens to everyone else. Both conversations matter, and the tension between them will define the next few years of work.

Vibe Coding Goes Visual

The creative applications of AI coding had a strong showing. @chongdashu published a complete workflow for vibe-coding 2D games using PhaserJS skills, Playwright for testing, and a mix of Opus 4.5, GPT 5.2, Claude Code, and Codex CLI. That a single game development workflow now swaps between multiple AI models and tools like interchangeable parts shows how routine multi-model toolchains have become.

@lucas__crespo shared what might be the day's most visually impressive result: the entirety of NYC mapped into massive isometric art, generated through coding agents. And @levelsio, ever the provocateur, posted a single Claude command that generates 1000 startup ideas from Reddit, builds landing pages, registers domains, configures Nginx, and adds Stripe buy buttons. The --dangerously-skip-permissions flag really sells the energy.

@EHuanglu rounded out the creative AI theme with an agent that connects to Blender and auto-builds 3D and 4D models from images, including animation. The common thread across all of these is that AI isn't just writing backend CRUD apps anymore. It's generating visual, interactive, creative output that would have taken teams of specialists not long ago.

Voice AI Drops Two Open-Source Models

Two notable open-source voice models dropped today. @HuggingModels covered NVIDIA's PersonaPlex-7B, a full-duplex voice model that can listen and talk simultaneously without the awkward turn-taking pauses that plague most voice AI:

> No pauses. No turn-taking. Real conversation. 100% open source.

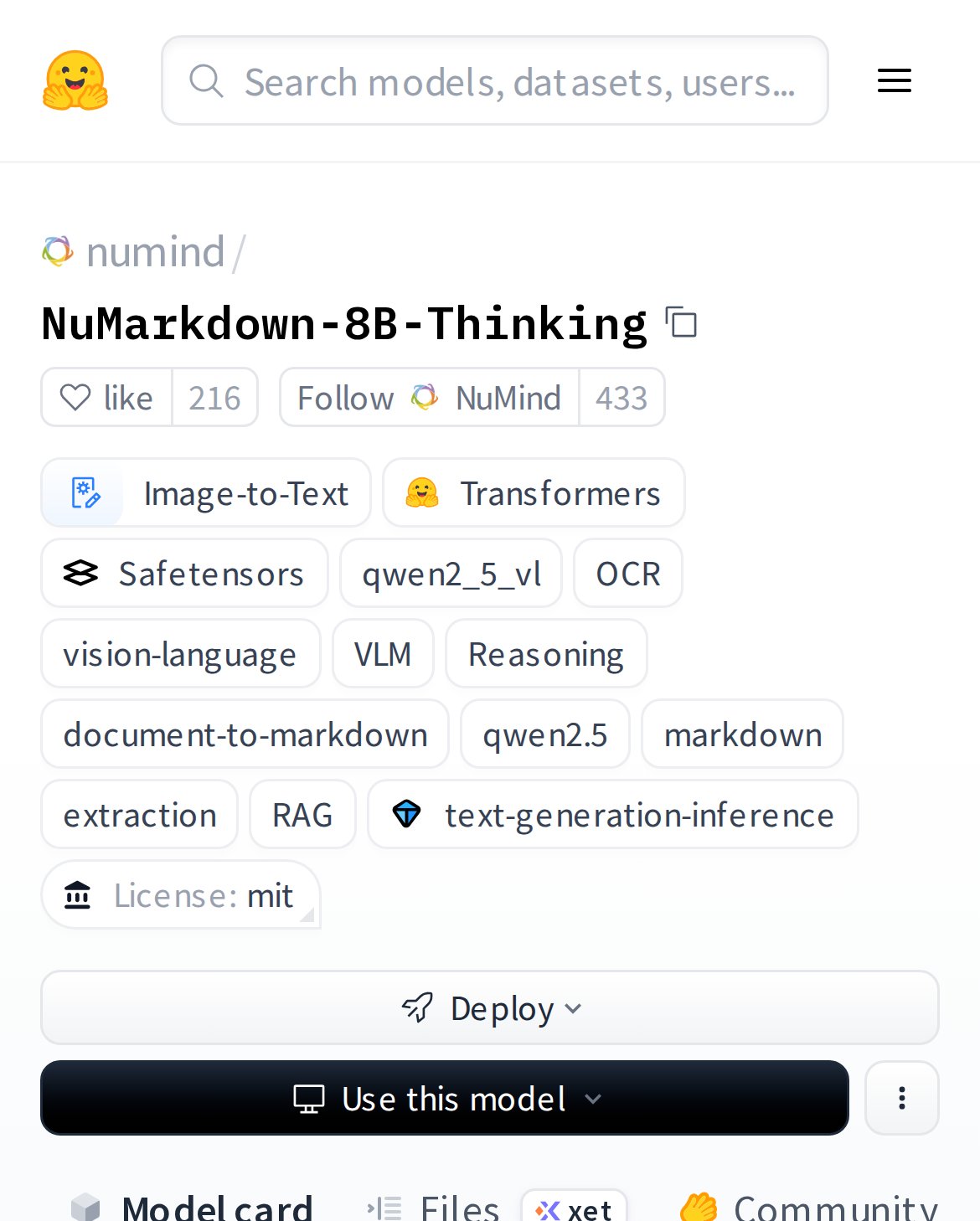

@itsPaulAi highlighted Alibaba's Qwen3-TTS on Hugging Face, which can clone any voice from a very short audio sample and comes in just 0.6B and 1.8B parameter sizes. Both models being open-source and relatively small means they're viable for local deployment, which connects directly to @alexocheema's observation that local AI coding has "a lot of rough edges, but it works, and the models are super capable." The direction is clear: capable voice AI is moving from cloud-only APIs to something you can run on your own hardware.

Sources

Excited to launch Pencil INFINITE DESIGN CANVAS for Claude Code > Superfast WebGL canvas, fully editable, running parallel design agents > Runs locally with Claude Code → turn designs into code > Design files live in your git repo → Open json-based .pen format https://t.co/UcnjtS99eF

Just hired my first employee today. The best part is he works 24/7/365. Welcome Clawd. https://t.co/yGPOKASdxx

New on the Anthropic Engineering Blog: We give prospective performance engineering candidates a notoriously difficult take-home exam. It worked well—until Opus 4.5 beat it. Here's how we designed (and redesigned) it: https://t.co/3RZVyhpVij

Continuing my vibe coding journey with 2d games From blank screen to below in just a few prompts Thanks to Agent Skills! > GPT 5.2 High + GPT 5.2 Codex in Codex CLI > Parallax scrolling > Fully animated character movement > PhaserJs Skill Not a single line of code written👇 https://t.co/xNWRPZAYWu

NVIDIA just dropped PersonaPlex-7B 🤯 A full-duplex voice model that listens and talks at the same time. No pauses. No turn-taking. Real conversation. 100% open source. Free. Voice AI just leveled up. https://t.co/YfzFQfBzMS https://t.co/L46XE1d3zz

This is likely a bigger “oh shit” moment than Claude Cowork. Unlike Cowork, it’s immediately obvious to users what you use this for and how. It’s applies Claude Code’s magical feedback loop of “wait, it can actually do that?” to something used by nearly every modern business.

Introducing Context7 Skills! 🎉 ◆ We extracted 24k skills from 65k repos ◆ Skills for Tailwind, React, Better-Auth, etc. ◆ Install in a single CLI command Perfect for Cursor, Claude Code & others 👇 https://t.co/mHItwWBMu1

Everyone on my feed is talking about local models and buying GPUs/Macs to run them This is the good timeline, so glad things are playing out the way they are

The 2026 AI Engineer roadmap

I switched to AI Engineering 2 years ago! It was the best career move I ever made. If you want to start today, here's a roadmap: . 1. Master Python Wh...

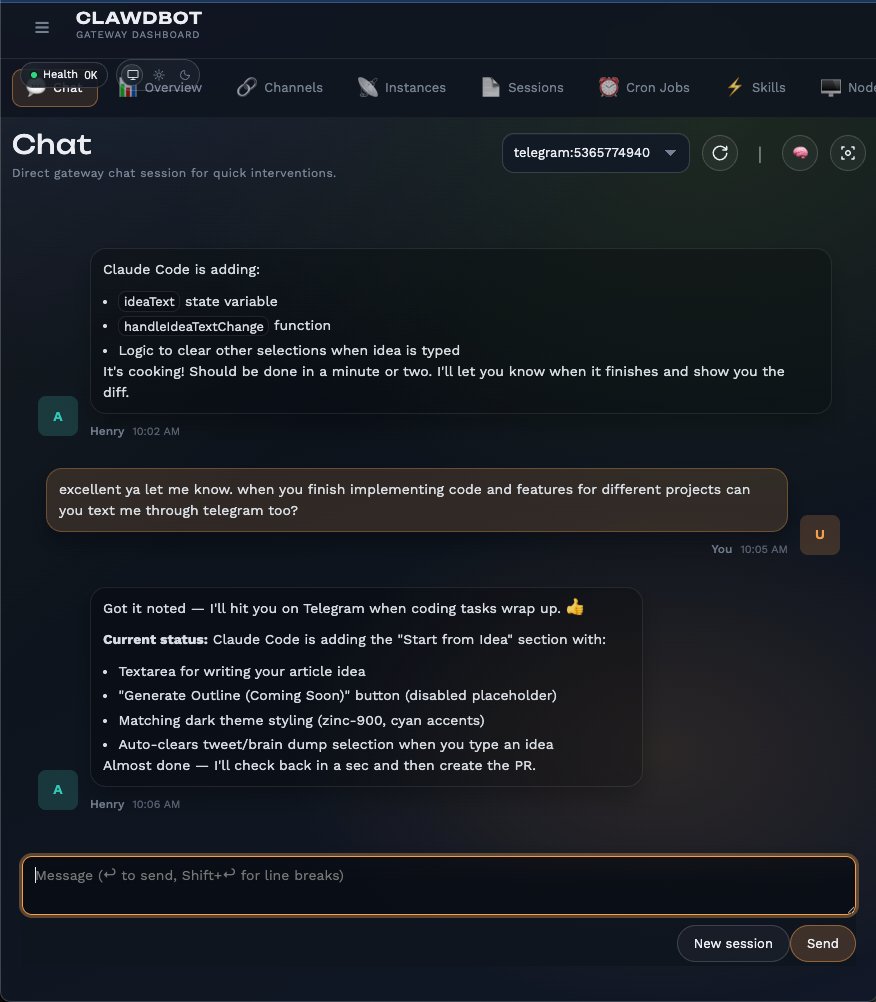

What Is Clawdbot? (And Why People Are Losing Their Minds Over It)

Imagine if Siri actually worked. Like, remembered what you told it. Did real tasks. And messaged YOU when something important happened. That's Clawdbo...

Installing Clawd bot now. What are some beginner use cases I should try out?

Clawdbot looks intimidating. it's not. here's the full setup in 30 minutes.