Claude Code Ships Task Management and Multi-Agent Swarms as Skills Ecosystem Hits Critical Mass

Claude Code's new Tasks system and swarm capabilities signal the end of community workarounds like Ralph Wiggum, while the skills ecosystem reaches critical mass with contributions from Vercel, Supabase, and Exa in a single day. MagicPath launches Figma Connect for pixel-perfect design-to-code, and Alibaba open-sources Qwen3-TTS across 10 languages.

Daily Wrap-Up

Today's feed painted a clear picture of where AI-assisted development is heading: the tools are becoming self-managing. Claude Code shipped native task management and multi-agent swarm capabilities, effectively replacing community-built workarounds with first-party features. At the same time, the skills ecosystem experienced a Cambrian explosion, with Vercel, Supabase, and Exa all shipping skills within the same news cycle. The convergence of these two trends points toward a future where developers spend more time directing agents and less time babysitting them.

The other major thread was the race to give AI agents persistent, full-fidelity computing environments. Martin Casado championed the Sprite model of containerized AI workspaces, Microsoft shipped the GitHub Copilot SDK for embedding agentic loops anywhere, and Palantir's AgentOS documentation started making the rounds. The infrastructure layer for autonomous agents is being built in real time by multiple well-funded players, and it's happening faster than most people realize. Meanwhile, WSJ ran a feature about people getting "Claude-pilled," which has to be the first time a major newspaper has used that particular construction.

The most entertaining moment was @thdxr's perfect distillation of the recursion: "first we had LLMs, put it in a loop and call it an agent, put that in a loop and call it ralph. guys i think i know what's next." The most practical takeaway for developers: invest time learning the Claude Code skills system and start writing project-specific skills. As @rauchg noted, the return on effort for a well-crafted skill far exceeds that of MCPs, and the ecosystem is moving fast enough that early adopters will have a meaningful advantage.

Quick Hits

- @cursor_ai shipped a feature letting agents ask clarifying questions mid-conversation without pausing their work.

- @NickADobos on Cursor's new activity tracking: "Cursor is trying to get me fired. Now they will know I'm not writing any code."

- @sdrzn announced that Cline now works with ChatGPT subscriptions, giving flat-rate access to GPT-5.2 instead of per-token billing.

- @iruletheworldmo claims Google is "preparing for AGI" with dedicated roles.

- @unusual_whales: OpenAI plans to take a cut of customers' AI-aided discoveries, per The Information.

- @qianl_cs praised OpenAI's Postgres scaling blog, noting write-heavy workloads will be the next pain point.

- @ExaAILabs launched semantic search over 60M+ companies with structured data on traffic, headcount, and financials, plus a companion Claude skill.

- @codewithantonio discovered a tool that looks like a SaaS but is actually an open-source npm package: "this is genius, I will be using this in every project going forward."

- @ShaneLegg (DeepMind co-founder) is hiring a Senior Economist to investigate post-AGI economics, reporting directly to him.

- @cb_doge shared Elon Musk predicting more robots than people and "amazing abundance."

- @IterIntellectus listed a dozen simultaneous breakthroughs from self-driving to fusion to CRISPR and concluded "I think we're going to be fine."

- @crystalsssup generated a 25-slide Stardew Valley-themed business report using Kimi Slides in one shot.

- @nummanali is switching to Browser Use's new CLI as a primary driver for browser-based agents.

- @tetsuoai posted a meme of vibe coders watching senior engineers struggle to ship features.

- @milichab reacted to Claude Code changes: "Insane, open a pull request!"

- @aulneau and @benjitaylor exchanged links with minimal commentary.

- @lukebelmar offered the always-insightful "AI is about to get crazy."

Claude Code Gets Tasks and Multi-Agent Swarms

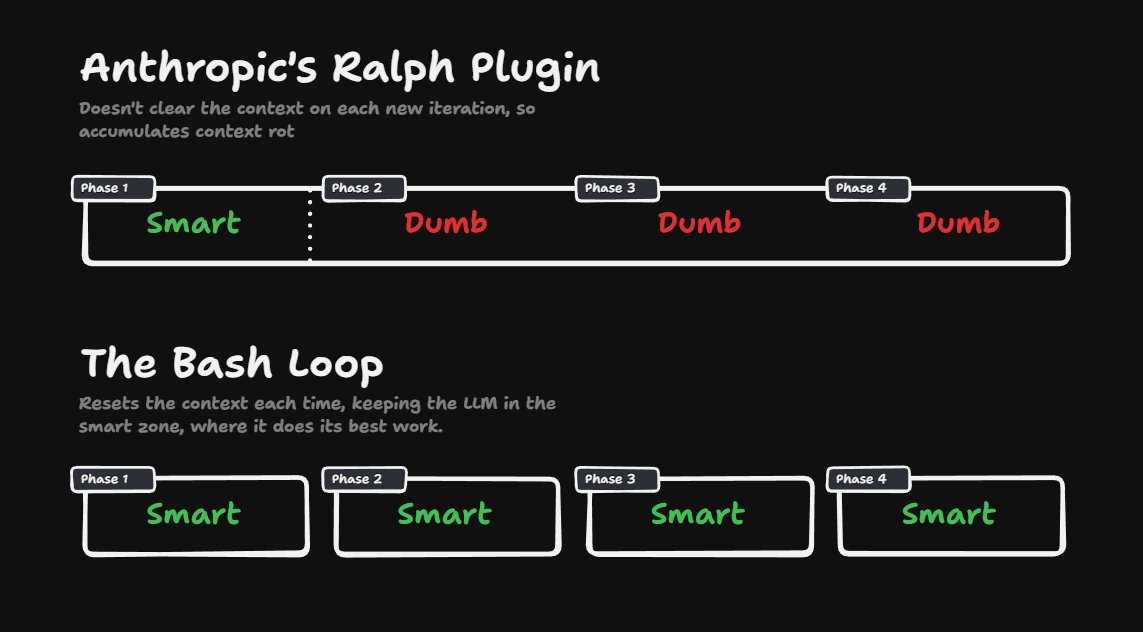

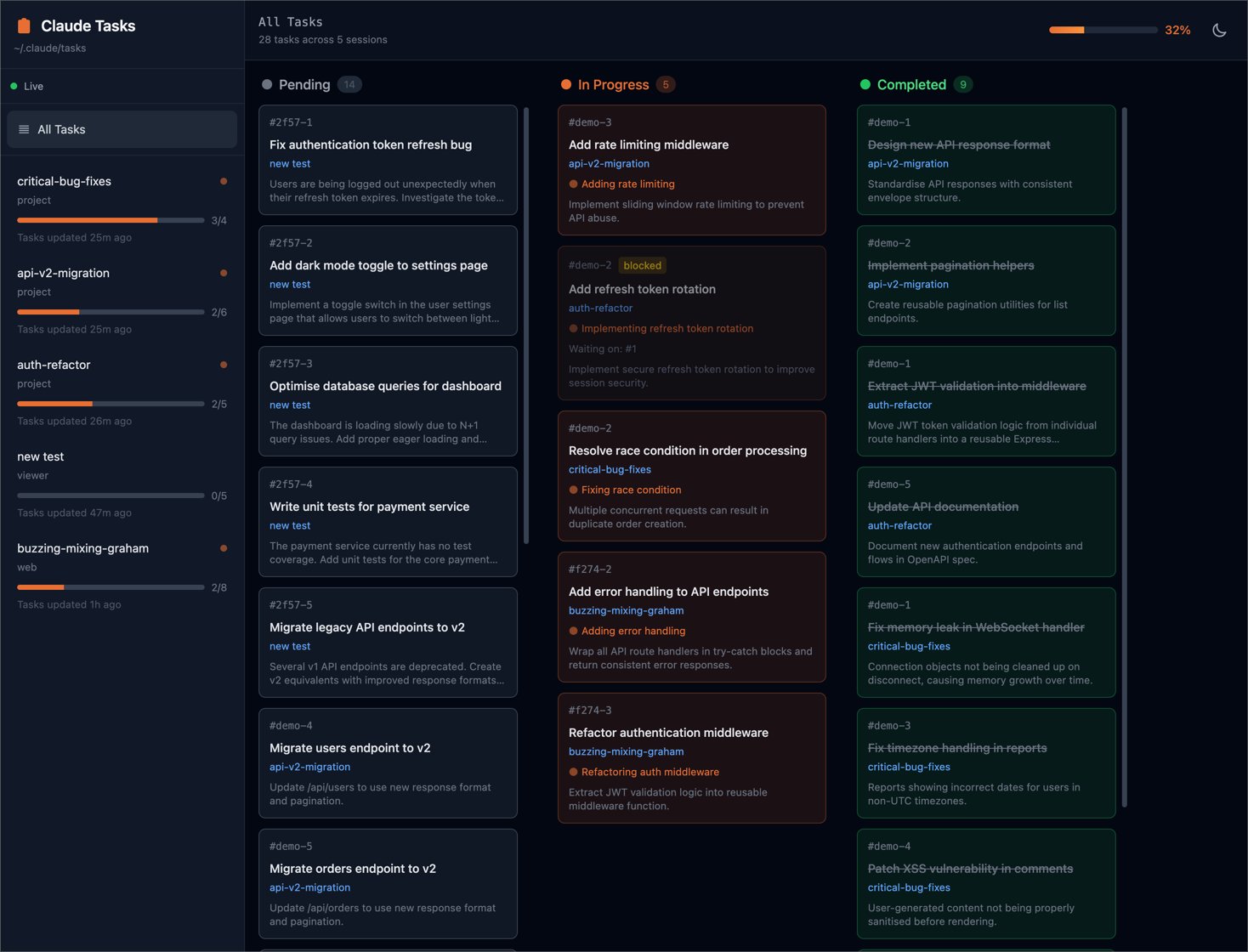

The biggest news of the day landed with relatively little fanfare. @trq212 announced "We're turning Todos into Tasks in Claude Code," and the diffs from @ClaudeCodeLog filled in the details. Claude can now configure spawned Task agents with names, team contexts, and permission modes. More significantly, the ExitPlanMode schema now includes launchSwarm and teammateCount fields, meaning Claude can request spawning a multi-agent swarm to implement an approved plan.
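To make the swarm idea concrete, here is a minimal sketch of how an approved plan might be divided among teammates. Only the field names launchSwarm and teammateCount come from the changelog; the PlanExit class, the assign_tasks helper, the teammate naming, and the round-robin policy are all assumptions made for illustration, not Claude Code's actual behavior.

```python
from dataclasses import dataclass


@dataclass
class PlanExit:
    """Hypothetical stand-in for an ExitPlanMode payload.

    Only the launchSwarm and teammateCount names come from the changelog;
    their types and defaults here are assumptions.
    """
    plan: list[str]
    launchSwarm: bool = False
    teammateCount: int = 1


def assign_tasks(request: PlanExit) -> dict[str, list[str]]:
    """Spread plan steps across teammates round-robin (an assumed policy)."""
    workers = request.teammateCount if request.launchSwarm else 1
    if workers < 1:
        raise ValueError("teammateCount must be at least 1")
    assignments: dict[str, list[str]] = {
        f"teammate-{i + 1}": [] for i in range(workers)
    }
    names = list(assignments)
    for index, task in enumerate(request.plan):
        assignments[names[index % workers]].append(task)
    return assignments


request = PlanExit(
    plan=["write schema", "build API", "add tests", "update docs"],
    launchSwarm=True,
    teammateCount=2,
)
assignments = assign_tasks(request)
for name, tasks in assignments.items():
    print(name, tasks)
```

Without launchSwarm set, the same helper falls back to a single worker, which is roughly the pre-swarm situation of one agent working through the whole list.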

The implications weren't lost on the community. @AlexFinn declared "And just like that Ralph Wiggum is dead," referring to the community pattern of looping Claude Code as an autonomous agent:

> "This is the next step towards Claude being a 24/7 autonomous agent. Lesson from this: spend more time on the planning phase. Have Claude build as many detailed tasks as it can. The more time you spend on this, the more time you'll save later."

@thdxr summed up the recursion with characteristic brevity: "first we had LLMs, put it in a loop and call it an agent, put that in a loop and call it ralph. guys i think i know what's next." And @nayshins posted about "everyone showing off their crazy vibe coded claude orchestrators," underscoring how quickly the community has been building on top of the pattern. The @WSJ, meanwhile, ran a feature about executives getting "Claude-pilled" after witnessing what they called "a thinking machine of shocking capability." That a major newspaper is covering the cultural phenomenon around a specific AI tool is itself a signal of how much the landscape has shifted.
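The layering in @thdxr's joke can be sketched in a few lines. This is a toy under stated assumptions: toy_llm is a stub that never calls a real model, and the agent and ralph functions are hypothetical names that only illustrate the nesting, not how Claude Code or any Ralph Wiggum script is implemented.

```python
from typing import Callable


def toy_llm(todo: list[str]) -> str:
    """Layer 1: a stub 'model' that proposes the next step, or DONE."""
    return f"do: {todo[0]}" if todo else "DONE"


def agent(llm: Callable[[list[str]], str], todo: list[str], max_steps: int = 2) -> list[str]:
    """Layer 2: the LLM in a loop, acting until DONE or the step budget runs out."""
    completed = []
    for _ in range(max_steps):
        if llm(todo) == "DONE":
            break
        completed.append(todo.pop(0))  # "execute" the proposed step
    return completed


def ralph(todo: list[str], max_runs: int = 10) -> int:
    """Layer 3: the agent in a loop, restarted until no work remains."""
    runs = 0
    while todo and runs < max_runs:
        agent(toy_llm, todo)
        runs += 1
    return runs


todo = ["plan", "implement", "test", "document", "ship"]
runs = ralph(todo)
print(f"finished in {runs} agent runs, {len(todo)} steps left")
```

The outer loop exists only because each agent run has a limited budget. Native tasks and swarms move that restart-and-resume bookkeeping into the tool itself.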

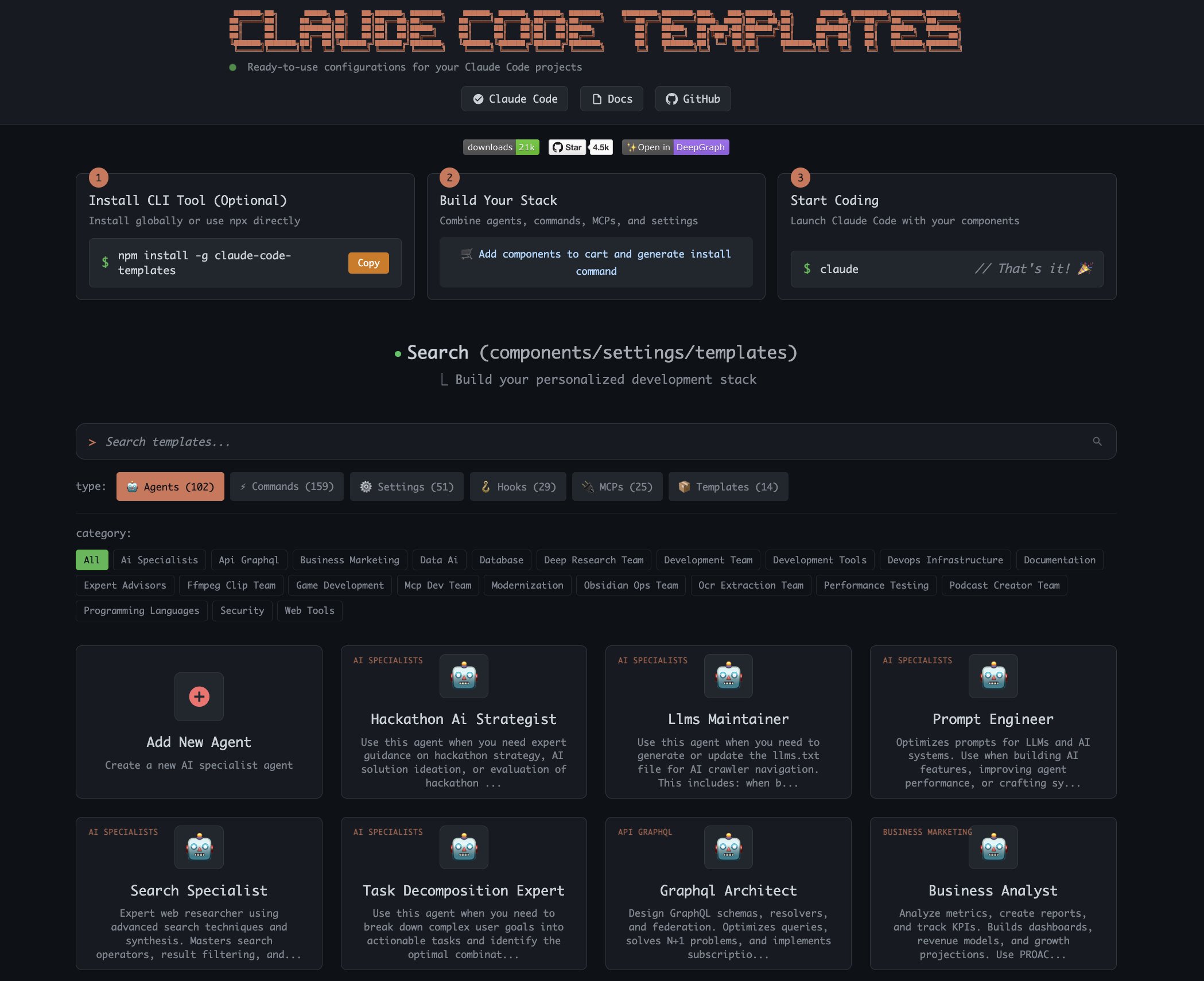

The Skills Ecosystem Finds Its Groove

If tasks are how Claude Code manages itself, skills are how the community teaches it new tricks. And today the ecosystem hit critical mass. @rauchg reflected on the reception, noting that "the return on effort invested is much greater" compared to MCPs, and that "a skill on how to use a CLI + Claude Code makes your service or library way more attractive." @vercel_dev dropped a one-liner showing how simple skill installation has become: npx skills add anthropics/skills --skill frontend-design.

The contributions came from all directions. @supabase launched Postgres best practices agent skills. @dom_scholz proposed that the natural UI for skills should be a skill tree (the gaming metaphor writes itself). @elithrar is already thinking about discoverability, floating the idea of npx skills add parsing an index.json. @mamagnus00 demonstrated the practical power, using a Remotion skill to go from zero to polished product demo videos in five steps.

@RayFernando1337 highlighted the often-overlooked foundation: "The context you build here is powerful for getting high quality output from your agents." Skills work because they encode domain knowledge in a format agents can reliably consume. @doodlestein took this further, describing a workflow of "using skills to improve skills, skills to improve tool use, and then feeding the actual experience in the form of session logs back into the design skill." Whether or not you buy every claim in that thread, the core loop of using agent experience to refine agent instructions is sound engineering practice.
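For readers who have not opened a skill yet, the sketch below shows roughly what "a format agents can reliably consume" looks like. It assumes the SKILL.md layout used by Anthropic's Agent Skills (a short frontmatter block with name and description, followed by Markdown instructions). The postgres-review skill, its contents, and the parse_skill helper are invented for illustration, and the parser handles only simple key: value lines rather than full YAML.

```python
# A made-up project-specific skill; only the overall layout is assumed
# to match the real SKILL.md convention.
SKILL_MD = """---
name: postgres-review
description: Review SQL migrations against this project's Postgres conventions.
---
# Postgres review

1. Check that every new table has a primary key.
2. Flag migrations that lock large tables.
"""


def parse_skill(text: str) -> tuple[dict[str, str], str]:
    """Split a skill file into (frontmatter fields, instruction body)."""
    if not text.startswith("---\n"):
        raise ValueError("skill file must start with a frontmatter block")
    header, _, body = text[4:].partition("\n---\n")
    meta: dict[str, str] = {}
    for line in header.splitlines():
        key, sep, value = line.partition(":")
        if sep:  # skip lines that are not simple key: value pairs
            meta[key.strip()] = value.strip()
    return meta, body.strip()


meta, body = parse_skill(SKILL_MD)
print(meta["name"], "->", meta["description"])
```

The short description is what lets an agent decide whether a skill is relevant before loading the full instructions, which is one reason a small, well-scoped skill can pay off quickly.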

Agent Runtimes Get Serious Infrastructure

The question of where agents actually run got real attention today. @martin_casado championed the Sprite model: "Basically full linux environments running an AI agent. Full persistent with checkpoints. No need for git. Spin up as many as you want. Just little AI compute gremlins in the cloud." @AniC_dev offered a practical counterpoint, explaining that they tried building on similar infrastructure but found it "too expensive for how much compute you get" with HTTP-only access and Docker headaches, so they wrapped Hetzner VPSs instead.

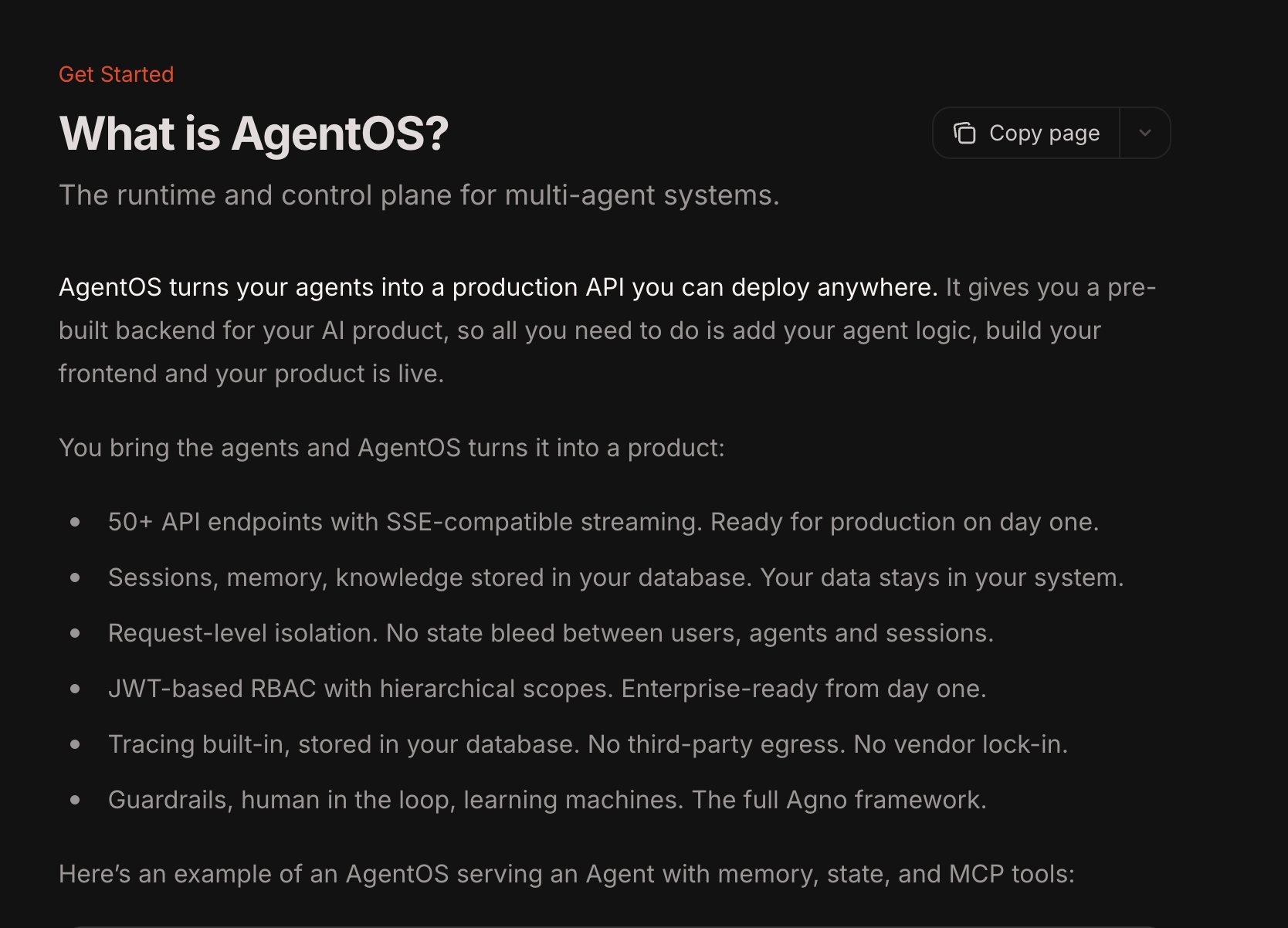

On the enterprise side, @satyanadella positioned the GitHub Copilot SDK as embedding "the same production-tested runtime behind Copilot CLI" directly into apps, with @github describing it as the "agentic core" made embeddable in a few lines of code. @ashpreetbedi noticed Palantir's AgentOS documentation mirrors many of the same patterns. And @irl_danB tracked the proliferation: "since announcing OpenProse, I've seen four more attempts: first VVM, then Kimi Agent-Flow, then NPC, now lobster shell. I told you the year of the intelligent VM was upon us."

The connecting thread is that agents need more than a chat window. They need filesystems, processes, network access, and persistence. Whether that comes from Sprite, Copilot SDK, wrapped VPSs, or @penberg's AgentFS, the infrastructure layer is being built simultaneously by multiple teams racing toward the same destination.

Figma Connect Bridges Design and Code

@skirano launched Figma Connect for MagicPath across a four-post thread, positioning it as "the best way to turn your Figma designs into code." The workflow is straightforward: connect your Figma account, copy any design with Cmd+L, paste into MagicPath. "Images, typography, colors, and layout are all preserved," with the output becoming an interactive prototype you can edit with AI, share, or export as production code.

The pitch explicitly addresses the MCP fatigue that's been building: "No MCP hell. No plugins. Just copy and paste your designs into MagicPath and turn them into interactive prototypes without compromising your craft." @nityeshaga called the onboarding "straight out of a science fiction movie," adding that "it's bringing design to the vibe coding era." For frontend developers who've been hearing "design to code is solved" for years, the key differentiator here is fidelity: "You spent hours perfecting those pixels in Figma. We care about that. Your precision, plus the magic of MagicPath."

Models, Benchmarks, and a TTS Breakthrough

The model race continues on multiple fronts. @iruletheworldmo claims "openai will drop gpt 5.3 next week and it's a very strong model, much more capable than claude opus, much cheaper, much quicker." Meanwhile, Anthropic published a blog post about their notoriously difficult performance engineering take-home exam. @AnthropicAI explained that Opus 4.5 beat it, forcing a redesign: "Given enough time, humans still outperform current models, but the fastest human solution we've received still remains well beyond what Claude has achieved even with extensive test-time compute."

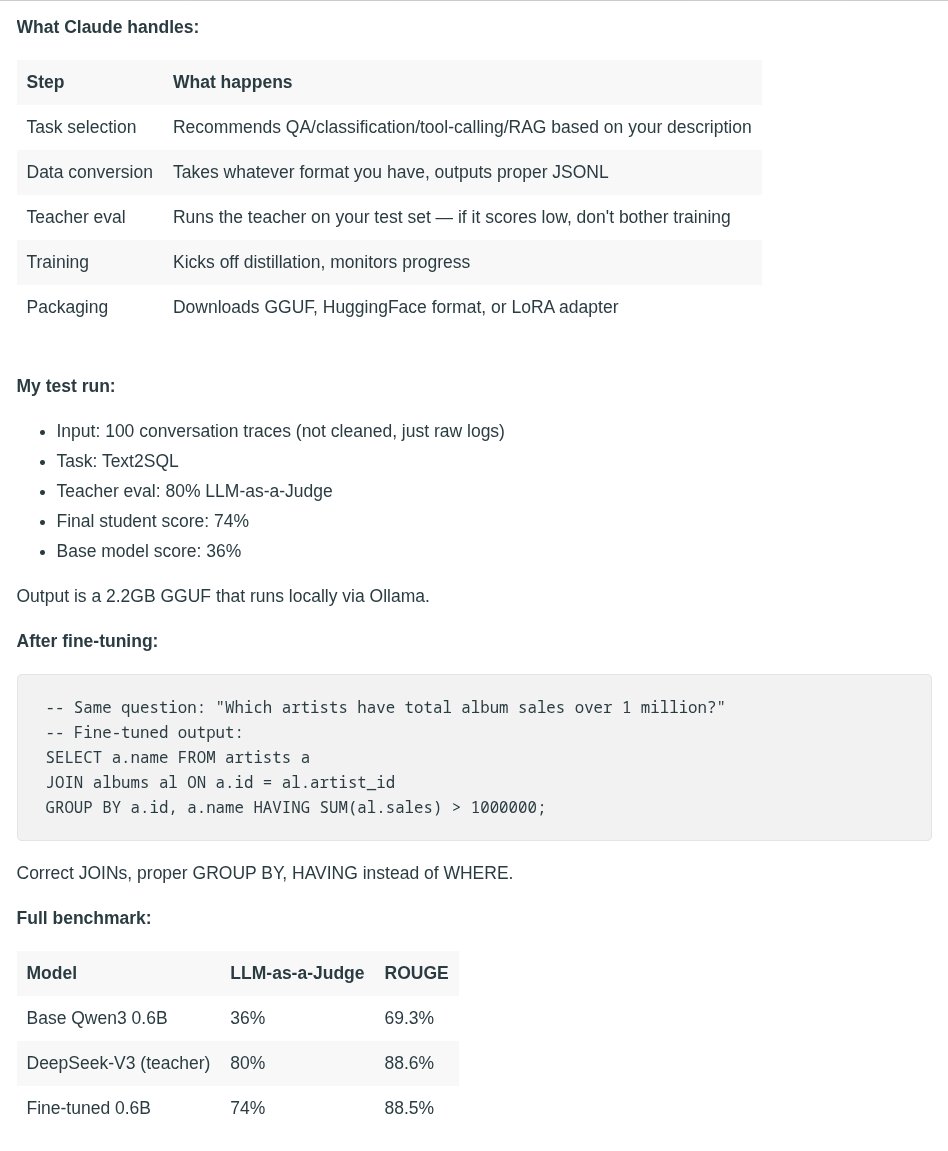

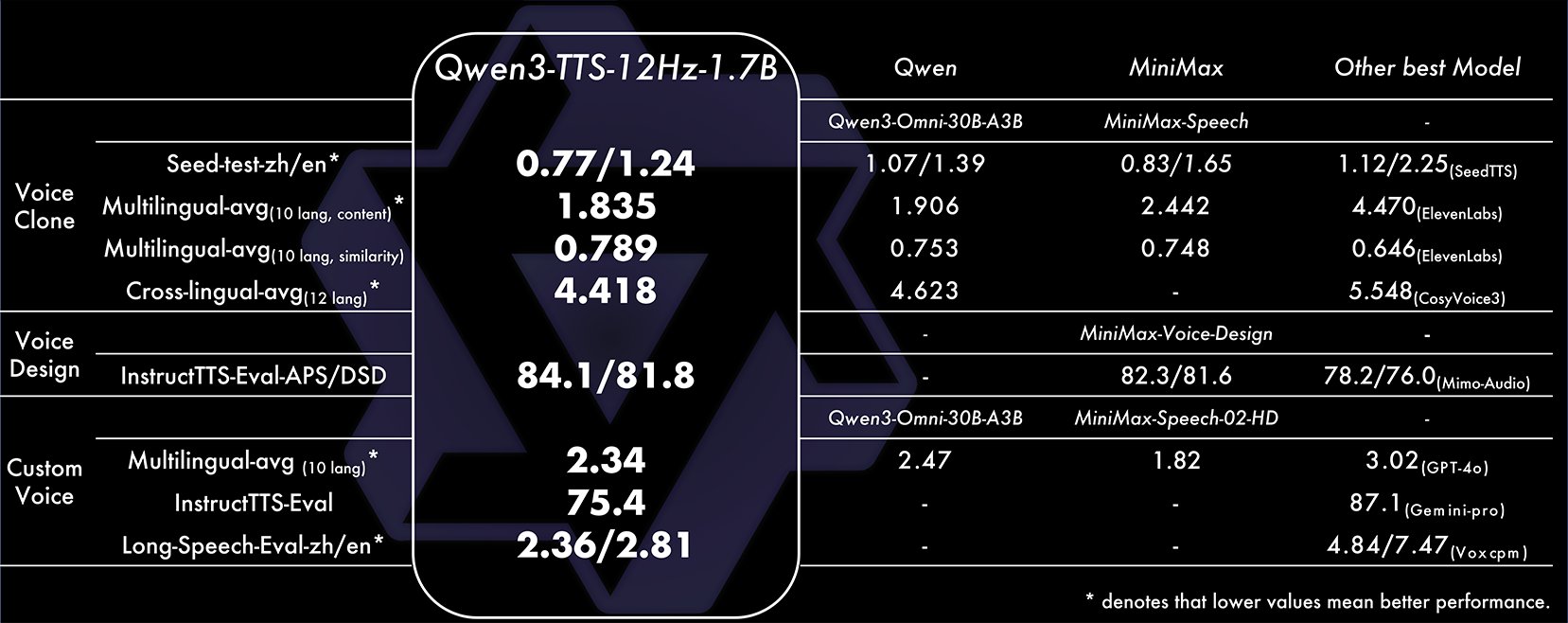

On the open-source side, @Alibaba_Qwen shipped Qwen3-TTS: five models in 0.6B and 1.8B parameter sizes, with support for 10 languages, voice cloning, and a state-of-the-art 12Hz tokenizer. They called it "arguably the most disruptive release in open-source TTS yet." Separately, @TheAhmadOsman detailed an impressive knowledge distillation workflow where a 0.6B model went from 36% accuracy on Text2SQL to 74% after distilling from DeepSeek-V3 using a Claude skill as the orchestrator. The takeaway: "You don't need a giant model for every job. You need tiny specialists that understand your world."

Code Quality at Scale Remains Unsolved

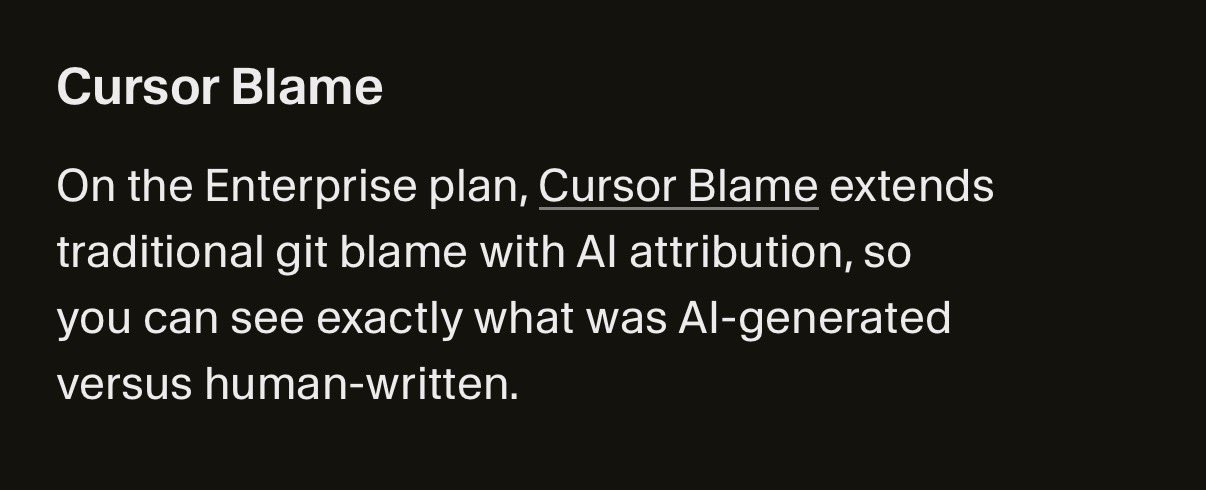

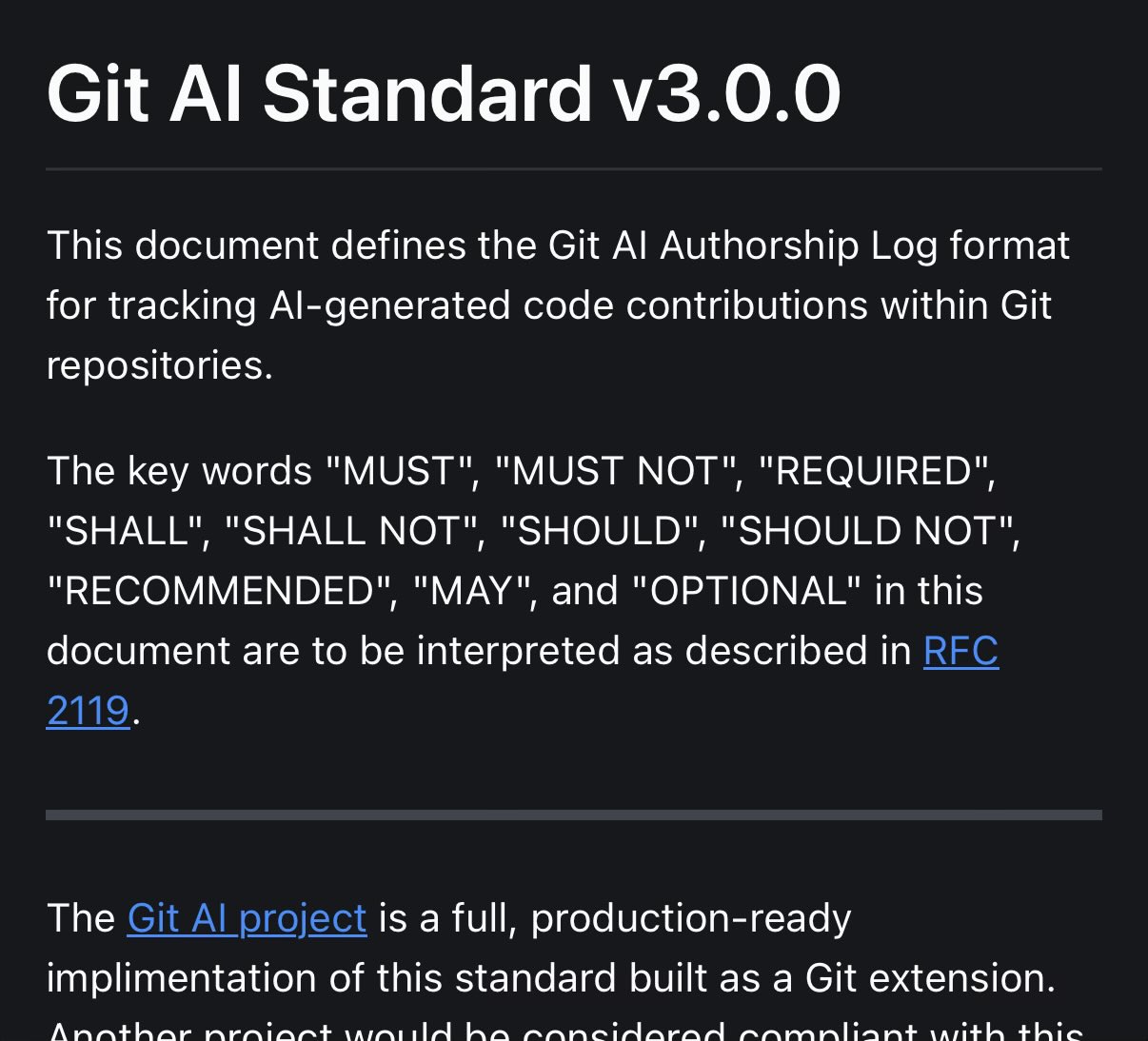

@nayshins articulated what many are feeling: "infinite code currently leads to the choices: 1. infinite review burden, 2. slop. We need to keep experimenting with tools to ease the review burden or we will be buried in slop." This is the uncomfortable truth lurking behind every productivity claim about AI coding tools. @emollick offered a more measured take, noting that "there is definitely an accumulating AI skillset that comes with experience" and that knowledge about model capabilities "changes more gradually and, with enough experience, predictably, than you might expect."

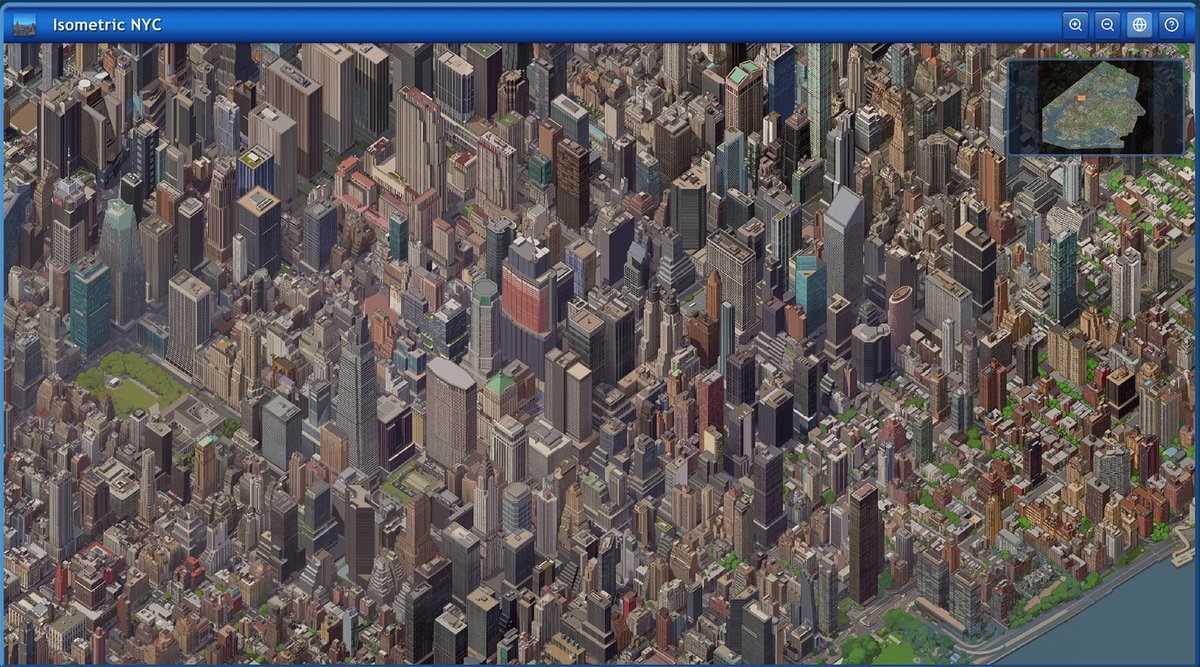

@_coenen provided a compelling case study, sharing a massive isometric pixel art map of NYC built entirely with coding agents: "I didn't write a single line of code." But the follow-up was telling: "Of course no-code doesn't mean no-engineering. This project took a lot more manual labor than I'd hoped!" The gap between "the AI wrote all the code" and "the project was effortless" remains wide, and closing it is the central challenge for the next generation of developer tools.

Sources

Build the machine that builds the machine

Remotion now has Agent Skills - make videos just with Claude Code! $ npx skills add remotion-dev/skills This animation was created just by prompting 👇 https://t.co/hadnkHlG6E

Meet Devin Review: a reimagined interface for understanding complex PRs. Code review tools today don’t actually make it easier to read code. Devin Review builds your comprehension and helps you stop slop. Try without an account: https://t.co/Zzu1a3gfKF More below 👇 https://t.co/sYQLjwSk6s

v1 of my "reimplement this PR using an ideal commit history" command, actually works quite well. "What commits would I have made if I had perfect information about the desired end state?" https://t.co/5S4kCIo8bR

I don't think people have fully internalized the implications of autonomous AI software engineering agents yet. Early adopters have noticed (or at least intuited) something important: as the cost of generating code approaches zero, the bottleneck shifts from writing code to understanding it, verifying it, and catching bugs or security issues before you ship. Put simply: our capacity to generate code is growing much faster than our capacity to review it. The good news is that as we get better at building AI coding agents, we also get better at building tools that help us understand, organize, and verify the generated code. This is why I think Devin Review is indicative of the next generation of SWE agents: we're now moving beyond going from prompt-to-PR or prompt-to-app, and toward automating the other parts of being a SWE (specifically planning and testing).

Humanity's future rests on one key question: https://t.co/mSMlVmEYim

We been working on a typed workflow runtime for @clawdbot - composable pipelines with approval gates. Use fewer tokens, have more predictable outcomes. lobster🦞 is the "shell" for your agent. (kudos, @_vgnsh) https://t.co/MY9Tq9hfrU https://t.co/ooSe6VqNsw

In love with this aesthetic https://t.co/pYz1Gn97jD https://t.co/5fvSPHco1k

Agent Sandboxes: A Primer

Over 4,500 unique agent skills have been added via npx skills from major products across the ecosystem: • @neondatabase • @remotion • @stripe • @expo • @tinybird • @supabase • @better_auth Find new skills and level up your agents at https://t.co/wcRHxRUm9u

The World API is live. Generate persistent, explorable 3D worlds from text, images, and video. Integrate them directly into your products. https://t.co/oJQwP50A6e

@DavidKPiano "Catching up takes a day, not month" I don't think that's true. I see so many people throwing their hands up saying "I don't get why you have good results from this stuff while I find it impossible to get decent code that works" The difference is I've spent 3+ years with it!

Securing Agents in Production (Agentic Runtime, #1)

Bring your ChatGPT subscription to Cline for inference. We partnered with @OpenAI to let you use your existing subscription. Sign in and access all the models in your subscription. No API keys, flat-rate pricing instead of per-token costs. Here is how to enable this: https://t.co/Plq2qrfxVH

Learn about everything new in 2.4: https://t.co/hNxdhhaPdi

frontend-design skill https://t.co/Tl20xQJZc1

We’re turning Todos into Tasks in Claude Code

Today, we're upgrading Todos in Claude Code to Tasks. Tasks are a new primitive that help Claude Code track and complete more complicated projects and...

@PostgreSQL has long powered core @OpenAI products like ChatGPT and the API. Over the past year, our production load grew 10× and keeps rising. Today we run a single primary with nearly 50 read replicas in production, delivering low double-digit millisecond p99 client-side latency and five-nines availability. In our latest OpenAI Engineering blog, we unpack the optimizations we made to scale @Azure PostgreSQL to millions of queries per second for more than 800M ChatGPT users. Check out the full post here: https://t.co/VTnxhlwlat

Been loving IsoCity by @milichab, but one thing was missing - what if I wanted ANY building in my city? So I built this using fal 🏙️ https://t.co/V2kRrFuAnp

Cursor now uses subagents to complete parts of a task in parallel. Subagents lead to faster overall execution and better context usage. They also let agents work on longer-running tasks. Also new: Cursor can generate images, ask clarifying questions, and more. https://t.co/LTsxuaYuoU

I wanted to share something I built over the last few weeks: https://t.co/QRqMK9CpTR is a massive isometric pixel art map of NYC, built with nano banana and coding agents. I didn't write a single line of code. https://t.co/97nOJPzF0u

as a software engineer, i feel a real loss of identity right now. for a long time i defined myself in part by the act of writing code. the pride in a hard-earned solution was part of who i was. now i watch AI accomplish in seconds what took me hours. i find myself caught between relief and mourning, awe and anxiety. the craft that shaped me is suddenly eclipsed by a machine. who am i now?

Claude Code's New Task System: The Practical Guide and Explainer

From flat to-do lists to dependency-aware orchestration You've Outgrown To-Do Lists We've all been there. You're working on something substantial - a ...

yaaaaas! got GLM-4.7-Flash 4-bit running on my M3 with @opencode 🚀 crashed my mac 3 times already... and not exactly fast enough to do anything with... still epic that it's possible though 🙌 https://t.co/8XcY7MR3m4

Agent Skills are now available in Cursor. Skills let agents discover and run specialized prompts and code. https://t.co/aZcOkRhqw8

Introducing Claude-Phone

This is the first thing I built myself....open source....and just said, "Here, everyone use it." ..and honestly I'm terrified because I REALLY hope it...