Ralph Wiggum Loop Dominates Dev Twitter as Dario Amodei Predicts Near-Total SWE Automation Within 12 Months

The Ralph Wiggum autonomous coding loop exploded in popularity with developers running it 24/7 and comparing early adoption to buying Bitcoin in 2012. Dario Amodei predicted AI models will handle end-to-end software engineering within 6-12 months, sparking heated debate about the future of the profession. Meanwhile, the Claude Code VS Code extension went generally available and a new skills ecosystem began replacing MCP servers.

Daily Wrap-Up

The Ralph Wiggum autonomous coding loop went from niche technique to the main character of dev Twitter today. Multiple developers shared stories of running loops overnight, presenting them to skeptical colleagues, and fundamentally reshaping their development workflows. The enthusiasm bordered on evangelical, with comparisons to early Bitcoin adoption. What made the discourse interesting was the split between those who see autonomous loops as the future and @stevekrouse's sharp counter-argument that managing multiple agent sessions is a fool's errand. He compared it to 1950s TV producers thinking the medium was just radio with cameras. The tension between "run more inference" and "be a craftsperson with a powerful tool" is going to define how the next generation of developer tooling gets built.

Dario Amodei dropped a bomb by predicting that AI models will automate "most, maybe all" of software engineering within 6-12 months. The Node.js creator echoed the sentiment a week after Linus tried vibe coding, creating a moment that felt like a generational inflection point. But @GergelyOrosz offered the most grounded take: this doesn't mean less demand for software engineers, it means more demand for engineers who can build reliable, complex software with LLMs. The signal here isn't that coding is dying. It's that the definition of "coding" is changing fast.

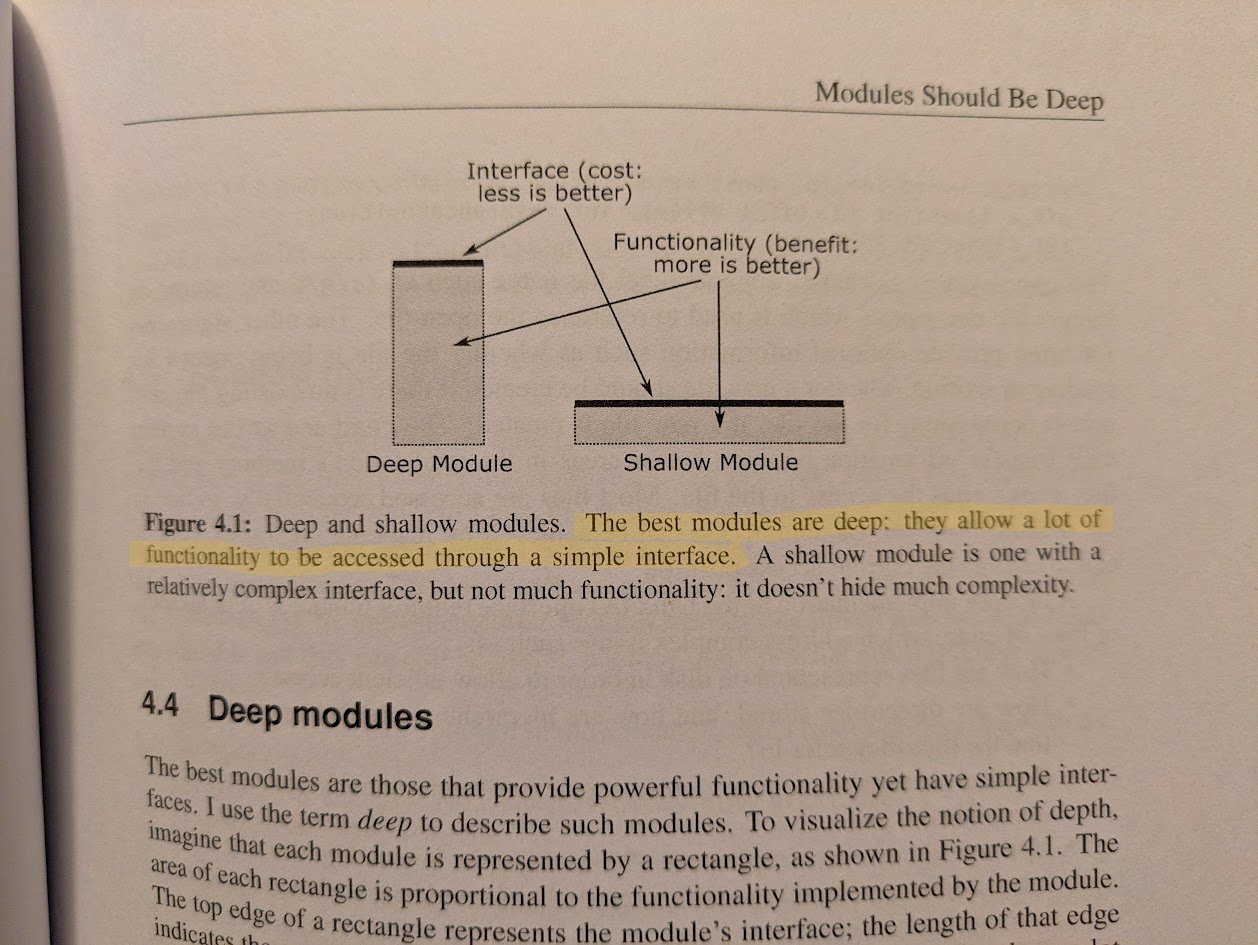

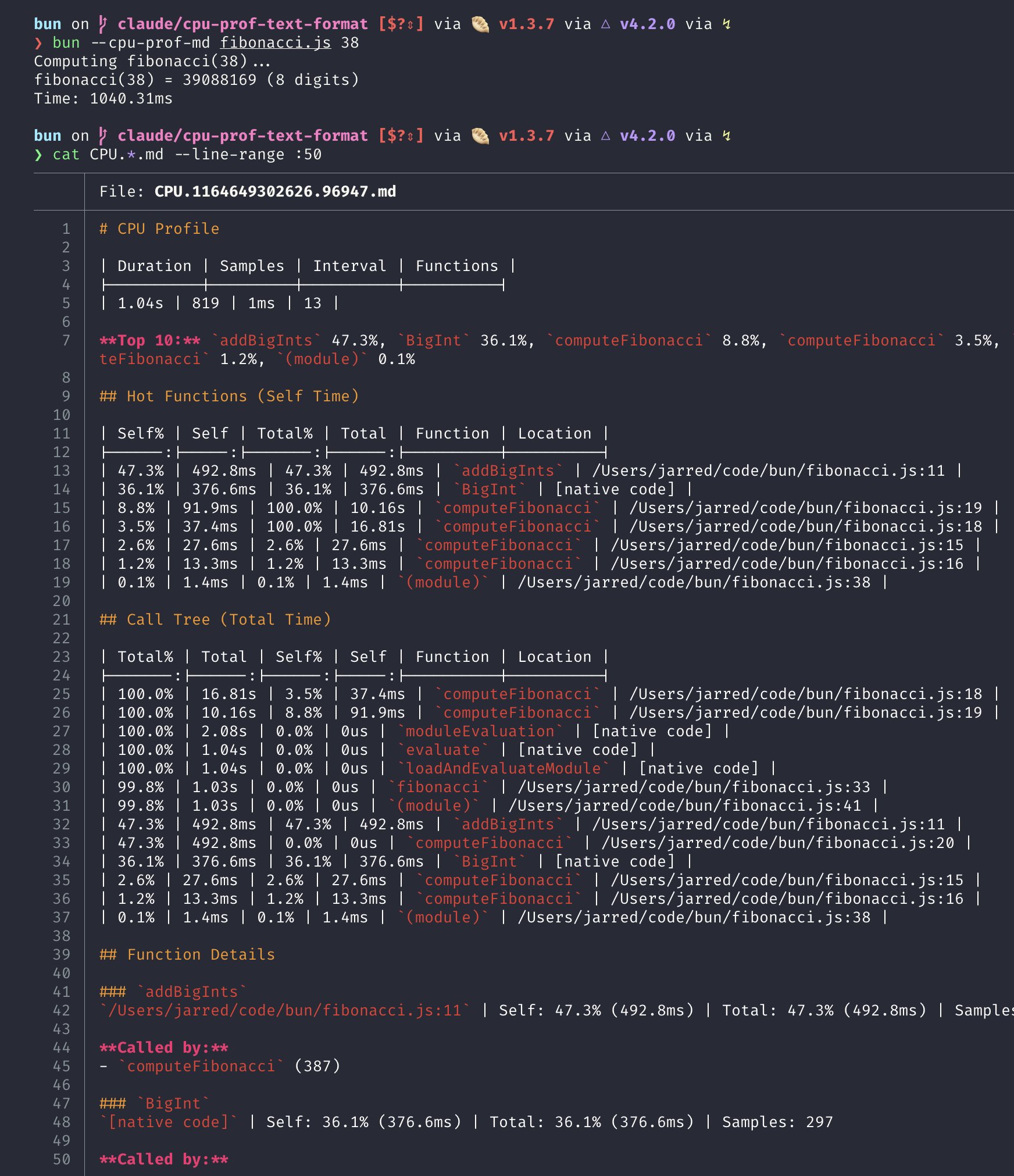

The most entertaining moment was @johnpalmer casually dropping "you kinda seem more like a Claude Cowork user (derogatory)" with zero context, perfectly capturing the emerging tribal dynamics in AI tooling. The most practical takeaway for developers: follow @mattpocockuk's hard-won lesson and specify your module boundaries and interfaces upfront before handing work to an agent. Vague instructions produce slop; precise architectural constraints produce working software.

Quick Hits

- @claudeai announced Claude can now connect to health data via Apple Health, Health Connect, HealthEx, and Function Health integrations in beta.

- @dom_lucre shared viral AI art demonstrations that have digital artists concerned about obsolescence before 2026 ends.

- @vasuman dropped a post simply titled "AI Agents 102" for those ready to go beyond the basics.

- @parcadei asked "WTF is a Context Graph?" and called it the trillion-dollar problem.

- @meowbooksj shared "top 10 IDE betrayals" which resonated with anyone who's been burned by an autocomplete.

- @johnpalmer coined the insult "Claude Cowork user (derogatory)" and honestly it stings.

- @mikeishiring proposed we'll soon have three identities: social networks, IRL, and an agent version of yourself.

- @bibryam shared lessons from analyzing 2,500+ repositories on how to write a great CLAUDE.md file.

- @denk_tweets celebrated beehiiv crossing $2M MRR and shared 10 early-stage growth tactics.

- @EleanorKonik got Claude running in Obsidian's terminal plugin and immediately put it to work finding related notes for fiction writing.

- @framara launched TuCuento, an interactive storytelling app for parents and kids to create stories together.

- @JNYBGR created a polished video "without writing any code, but also without needing After Effects skills."

- @ryanflorence wondered why nothing on the mobile web is animated well, then announced he's buying a course.

- @aleenaamiir shared an elaborate Gemini Nano prompt for generating educational 3D isometric dioramas.

- @testingcatalog reported that X open-sourced its recommendation algorithm for public transparency.

- @weswinder fed the new X algorithm to Opus 4.5 and got back a posting strategy for maximum reach.

- @herkuch noted trouble selecting models in OpenCode, a pain point as the tool gains adoption.

- @steipete shared his voice-driven PR review workflow and noted that of 1,000+ PRs reviewed, fewer than 10 merged without changes.

- @steipete also expressed continued amazement at @clawdbot making actual phone calls.

The Ralph Wiggum Loop Goes Mainstream

The autonomous coding loop created by @GeoffreyHuntley has crossed from power-user trick into full-blown movement. Today's timeline was saturated with developers sharing their experiences running Claude Code in unattended loops, and the testimonials ranged from practical to almost spiritual. The pattern is simple: set up a task, let the agent iterate in a loop with test feedback, and come back to working code. But the cultural moment around it is anything but simple.
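The pattern described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not @GeoffreyHuntley's actual script: `run_loop`, `agent_step`, `run_tests`, and `LoopResult` are hypothetical names, and the agent and test suite are replaced by toy stand-ins so the sketch runs on its own.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class LoopResult:
    passed: bool
    iterations: int


def run_loop(
    agent_step: Callable[[str, str], None],
    run_tests: Callable[[], Tuple[bool, str]],
    task: str,
    max_iterations: int = 10,
) -> LoopResult:
    """Call agent_step until run_tests passes or the iteration budget is spent."""
    feedback = ""  # nothing to report before the first attempt
    for iteration in range(1, max_iterations + 1):
        agent_step(task, feedback)      # hypothetical: one unattended agent run
        passed, feedback = run_tests()  # test output becomes the next run's feedback
        if passed:
            return LoopResult(passed=True, iterations=iteration)
    return LoopResult(passed=False, iterations=max_iterations)


# Toy stand-ins so the sketch runs without any real agent or test suite.
progress = {"fixes": 0}


def fake_agent(task: str, feedback: str) -> None:
    progress["fixes"] += 1  # pretend each run fixes one failing test


def fake_tests() -> Tuple[bool, str]:
    remaining = 3 - progress["fixes"]
    return remaining <= 0, f"{max(remaining, 0)} tests still failing"


result = run_loop(fake_agent, fake_tests, task="make the test suite pass")
print(result)
```

In a real setup, `agent_step` would shell out to whatever coding agent is in use and `run_tests` would run the project's test command. The `max_iterations` cap is an added assumption so an unattended loop cannot run forever.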

@d4m1n captured the addictive quality perfectly: "I now run 1-2 loops 24/7, tweaking, iterating. Before sleep I set off a loop, I wake up 3-5x a night thinking of it with excitement." He called the workflow "extremely unhealthy" but couldn't stop. In a follow-up, he flatly stated that "using Ralph Wiggum loop will put you ahead of 98% of devs."

@Hesamation compared the opportunity to "buying Bitcoin in 2012" and warned the window would close in months. @paraddox described presenting the loop to two engineers still using VS Code AI extensions: "My 10x loops GLM-4.7 fixed something their Opus didn't. They went quiet. Now I'm doing a workshop on it." @mattpocockuk, already a prominent voice in the TypeScript community, declared that after discovering Ralph, traditional agent workflow advice "feels a bit quaint" since "all this advice can be automated away with a few lines in a bash loop."

But @mattpocockuk also provided the day's most important cautionary note: he "got a lot of slop out of Ralph" because he didn't specify module boundaries upfront or request a simple, testable interface. @ctatedev put the productivity gains in concrete terms, claiming he agent-coded a complex networking and orchestration system over a 3-day weekend that "would've taken me 1-2 years solo." Whether you buy the hype or not, the loop is forcing a conversation about what developer workflows look like when execution becomes nearly free.
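One way to read @mattpocockuk's advice is to write the module boundary and a contract test yourself, then let the agent fill in the implementation. The sketch below assumes a hypothetical key-value store module. `KeyValueStore`, `check_store_contract`, and `InMemoryStore` are illustrative names, not anything from his post.

```python
from typing import Dict, Optional, Protocol


class KeyValueStore(Protocol):
    """Hypothetical module boundary, written by the human before the loop starts."""

    def get(self, key: str) -> Optional[str]: ...

    def put(self, key: str, value: str) -> None: ...


def check_store_contract(store: KeyValueStore) -> None:
    """Contract test that any implementation behind the boundary must pass."""
    assert store.get("missing") is None  # unknown keys return None
    store.put("a", "1")
    assert store.get("a") == "1"         # stored values are readable
    store.put("a", "2")
    assert store.get("a") == "2"         # later writes overwrite earlier ones


class InMemoryStore:
    """Stand-in for the code an agent would produce behind the interface."""

    def __init__(self) -> None:
        self._data: Dict[str, str] = {}

    def get(self, key: str) -> Optional[str]:
        return self._data.get(key)

    def put(self, key: str, value: str) -> None:
        self._data[key] = value


check_store_contract(InMemoryStore())  # raises AssertionError on a violation
```

The interface stays small enough to review by hand, and the contract test gives the loop an unambiguous pass/fail signal.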

Agent Workflow Philosophy: Craft vs. Management

While the Ralph loop dominated in volume, the more nuanced conversation was about how developers should actually relate to their AI tools. @stevekrouse delivered the sharpest take of the day, arguing that managing multiple Claude Code instances is fundamentally misguided. "My brother in christ, you can only think of 7 things at a time," he wrote, "and if you're running 2 Claude Codes, each has a couple details that need your attention, so you're already all maxed out." His alternative vision: agents should passively ingest your repo, issues, and email, then "ONLY NOTIFY ME WITH A FULLY WORKING PULL REQUEST, TOTALLY VERIFIED."

On the practical side, @aye_aye_kaplan offered three concrete tips: always start with Plan Mode, start new chats frequently to avoid muddied context, and leverage AI for code review. @ericzakariasson built on this with a key insight: "plan sync, implement async." If you align on a plan quickly, you can hand off to a cloud agent with high confidence. @mntruell from Cursor shared a similar philosophy, emphasizing TDD as the feedback loop and reverting when things go sideways rather than trying to steer a derailed session.

@techgirl1908 highlighted Cloudflare's Code Mode in Goose, which cut tokens, messages, and LLM calls in half. The efficiency angle matters because longer productive sessions mean fewer context resets. The emerging consensus: the best agent workflows aren't about running more agents. They're about giving fewer agents better instructions and tighter feedback loops.

Dario Amodei's 12-Month Prediction Splits the Community

Anthropic CEO Dario Amodei predicted that AI models will be able to do "most, maybe all" of what software engineers do end-to-end within 6-12 months. The quote ricocheted across tech Twitter, amplified by @WesRoth and @slow_developer, with reactions splitting predictably between alarm and measured optimism. @slow_developer added useful nuance: "We're approaching a feedback loop where AI builds better AI, but the loop isn't fully closed yet. Chip manufacturing and training time still limit speed."

The Node.js creator's similar comments the same week added fuel. @Hesamation framed it as a generational shift: "when the creator of node.js says the era of humans writing code is over, just one week after Linus tries out vibe coding, you know a chapter in technology is slowly closing." @thekitze was less diplomatic about the holdouts: "node js creator: coding is dead / avg mid miderson: i will never trust llms!!!"

@GergelyOrosz offered the counterweight that mattered most. Rather than seeing automation as displacement, he predicted "more demand for software engineers who can build reliable+complex software with LLMs." The framing shift from "writing code" to "building reliable software" is subtle but important. The skills that matter are moving up the stack: architecture, system design, verification, and the judgment to know when an AI output is wrong. The code itself is becoming the easy part.

Claude Code and the Skills Ecosystem

Anthropic had a productive day. The @claudeai account announced the VS Code extension for Claude Code is now generally available, bringing @-mentions for file context, slash commands like /model and /mcp, and a much closer experience to the CLI. This matters because VS Code is where most developers live, and reducing friction between the editor and the agent is table stakes.

More interesting was the emerging skills ecosystem. @Remotion launched Agent Skills that let developers create videos entirely through Claude Code prompts. @andrewqu called it "SICK" and claimed he "nearly 1 shotted" a launch video. @intellectronica went further, announcing he'd dropped all MCP servers from his setup entirely: "Context7, Tavily, Playwright, all replaced with SKILLs + curl or agent-browser. SKILLs are all you need!" This is a meaningful shift. MCP servers require running processes and managing connections. Skills are just instructions and scripts that the agent can execute directly. If this pattern holds, the integration story for AI coding tools gets dramatically simpler. @GHchangelog also announced GitHub Copilot now supports OpenCode's open source agent with no additional license required, further expanding the agent ecosystem.

The AI Adoption Gap

While tech Twitter debated which autonomous loop configuration is optimal, the world outside the bubble painted a very different picture. @bwarrn had lunch with an exited founder who helps Fortune 500 companies adopt AI and came back with a reality check: "Some of the biggest companies on earth use zero AI tools. Not even ChatGPT. Execs only recognize: ChatGPT, Copilot, Gemini (maybe Perplexity). Everyone feels behind. Nobody knows what to buy or how to plug it in."

Palantir CEO Alex Karp, as quoted by @jawwwn_, explained why the gap exists: "If you just buy LLMs off the shelf and try to do any of this, it won't work. It's not precise enough. You can't do underwriting." His argument is that you need a software orchestration layer that speaks your enterprise's language before LLMs create real value. The "AI bubble" narrative, in his view, is really just a lag between capability and implementation.

@ideabrowser flipped the perspective entirely, pointing to startup graveyards full of ideas that failed because they "needed VC capital, needed a team, needed to be in Silicon Valley." Today's solo developer with AI tools doesn't need any of that. The gap between what's possible for well-tooled individuals and what large enterprises are actually doing has never been wider, and that gap is where opportunity lives.

Products and Launches

@excalidraw upgraded its text-to-diagram feature with a new chat interface, streaming, and smarter generation. For developers who think in diagrams, this is a meaningful quality-of-life improvement. @shadcn shared enthusiasm for a new tool that turns code into shareable registry items, calling it something he'd been looking for. @siavashg emerged from stealth with Stilla AI, billed as "the first Multiplayer AI," backed by $5M from General Catalyst. The pitch addresses a real problem: AI makes individuals faster, but "the faster individuals move, the harder it is to move together." Whether a startup can solve coordination at the team level when everyone's running their own agent loops remains to be seen, but the problem statement resonates.

Sources

My top 3 tips for coding with agents: 1. Always start with Plan Mode. It's better to iterate in natural language and then execute once you know what the agent is going to do. This will save you time, effort, and tokens! 2. Start new chats frequently. Remember that your role is to point the Agent in the right direction to make the changes you need. If you change topics, the context window will get muddied. You will also be spending more tokens on longer chats. 3. Leverage AI to do your code review. If you know the failure case, ask a model. One prompt I often use is "scan the changes on my branch and confirm nothing is impacted outside of my feature flag". As a safety net for everything outside this issues-you-expect umbrella, use Bugbot.

You can enroll in my animation course for the next 10 days! It's the perfect way to learn the theory behind great animations, but also how to build them in code. Now with a skill file for agents. We'll cover all of these components and more, source code included. https://t.co/RvK4piO5QQ

Using Ralph Wiggum loop will put you ahead of 98% of devs

This is for devs. It's for those that like to create, to break down problems and cannot help themselves from coming up with ideas hour by hour. You ke...

WTF is a Context Graph? A Guide to the Trillion-Dollar Problem

You’ve read 15 articles, skimmed 12 threads and watched 4 podcasts but you still can't answer "what is a context graph?" Allow me to confuse you furth...

AI Agents 102

So, you've built an agent that kinda barely works in demos and now you want to deploy it for real users with real use cases. Demos and production have...

Remotion now has Agent Skills - make videos just with Claude Code! $ npx skills add remotion-dev/skills This animation was created just by prompting 👇 https://t.co/hadnkHlG6E

@souravbhar871 It’s all stored locally in your .claude folder, you can ask Claude to read it and create scripts to help visualize it

My clawdbot sucks at days and time. It never seems to have any clue what the current day or time is.

Why your AI agents still don’t work

Most agents are horrible at integrating with domain-specific knowledge and adapting to feedback. I know most people hear this and think, "Great, I'll ...

The Shorthand Guide to Everything Claude Code

Yeah this was 1,000% worth it. Separate Claude subscription + Clawd, managing Claude Code / Codex sessions I can kick off anywhere, autonomously running tests on my app and capturing errors through a sentry webhook then resolving them and opening PRs... The future is here.

How I'm using Clawd.bot to change how I get things done.

100% convinced that how we work has now changed....and although this might still be fringe now. I think it will filter down to the masses over time. I...

@theirongolddev @alexhillman What Alex did I thought was genius… I had it interview me for ergonomics I had it ask me my fears, what I didn’t like, what works for me, what I want, how I want to work/show up, and other things about me so the system works for me and not the other way around.

Lunch w/ an exited founder who helps fortune 500 companies adopt AI. Insane reality check: Some of the biggest companies on earth use *zero* AI tools. Not even ChatGPT. Execs only recognize: ChatGPT, Copilot, Gemini (maybe Perplexity). Everyone feels behind. Nobody knows what to buy or how to plug it in. The "AI saturation" narrative is another example of what a bubble Silicon Valley is. Rest of the world hasn’t started yet. We have to build for the 99%.

Introducing Browser Pools — instant browsers with the logins, cookies, and extensions your agents depend on. Designed to make using Kernel even faster. https://t.co/Gt6cc9awcd

I'm starting to get worried. Did Anthropic solve continual learning? Is that the preparation for evolving agents? https://t.co/pcCoSM4gAr

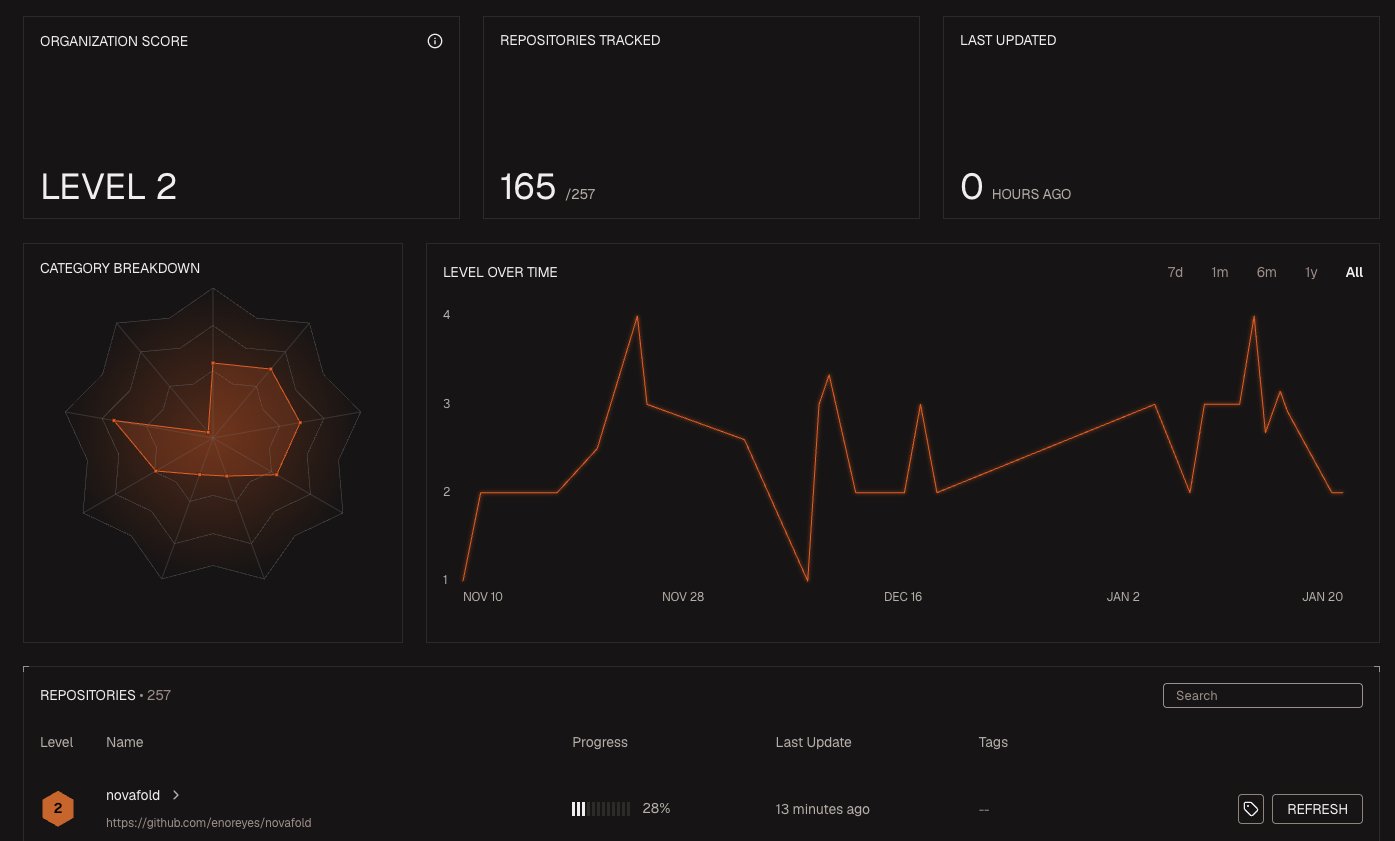

Introducing Agent Readiness. AI coding agents are only as effective as the environment in which they operate. Agent Readiness is a framework to measure how well a repository supports autonomous development. Scores across eight axes place each repo at one of five maturity levels. https://t.co/9POPIY3hXr
