Ollama Adds Anthropic API Compatibility as Agent Architecture Patterns Crystallize

The agent tooling ecosystem hit an inflection point with ollama gaining Anthropic Messages API support, Anthropic reportedly building persistent Knowledge Bases into Claude, and the community converging on folder-based architecture patterns for long-running agents. Meanwhile, a parallel thread of burnout anxiety ran through the timeline as developers debated whether humans should write code at all.

Daily Wrap-Up

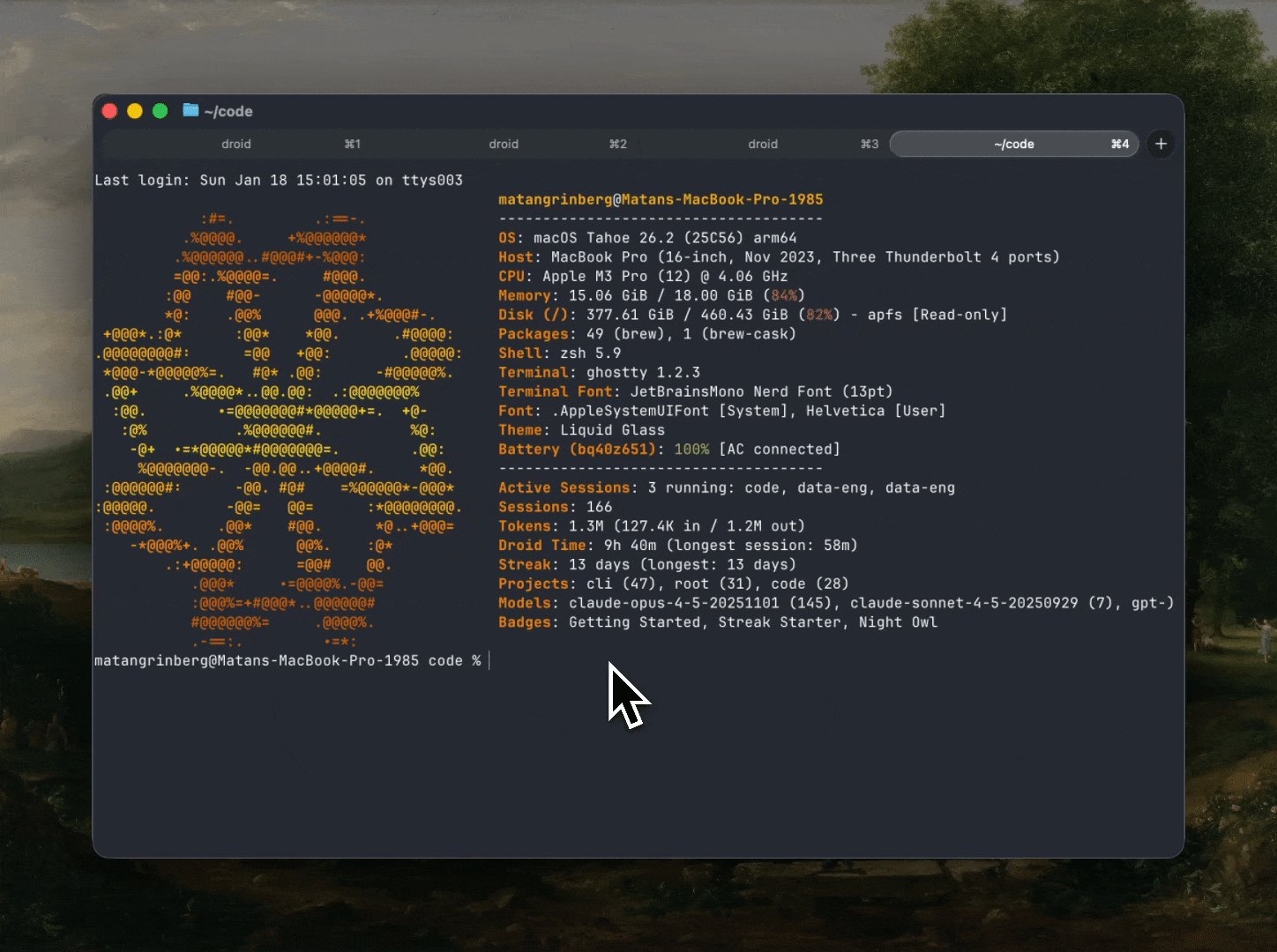

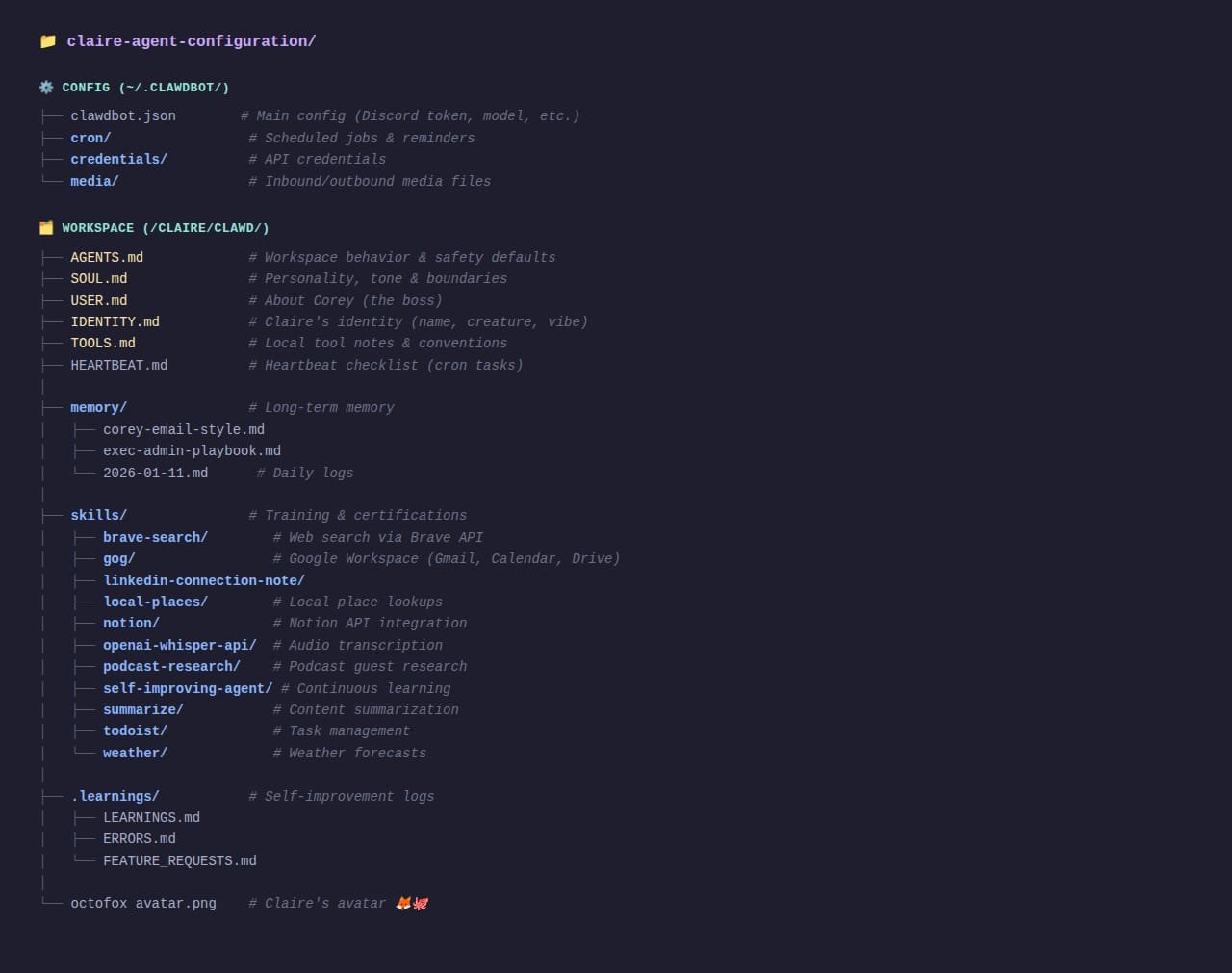

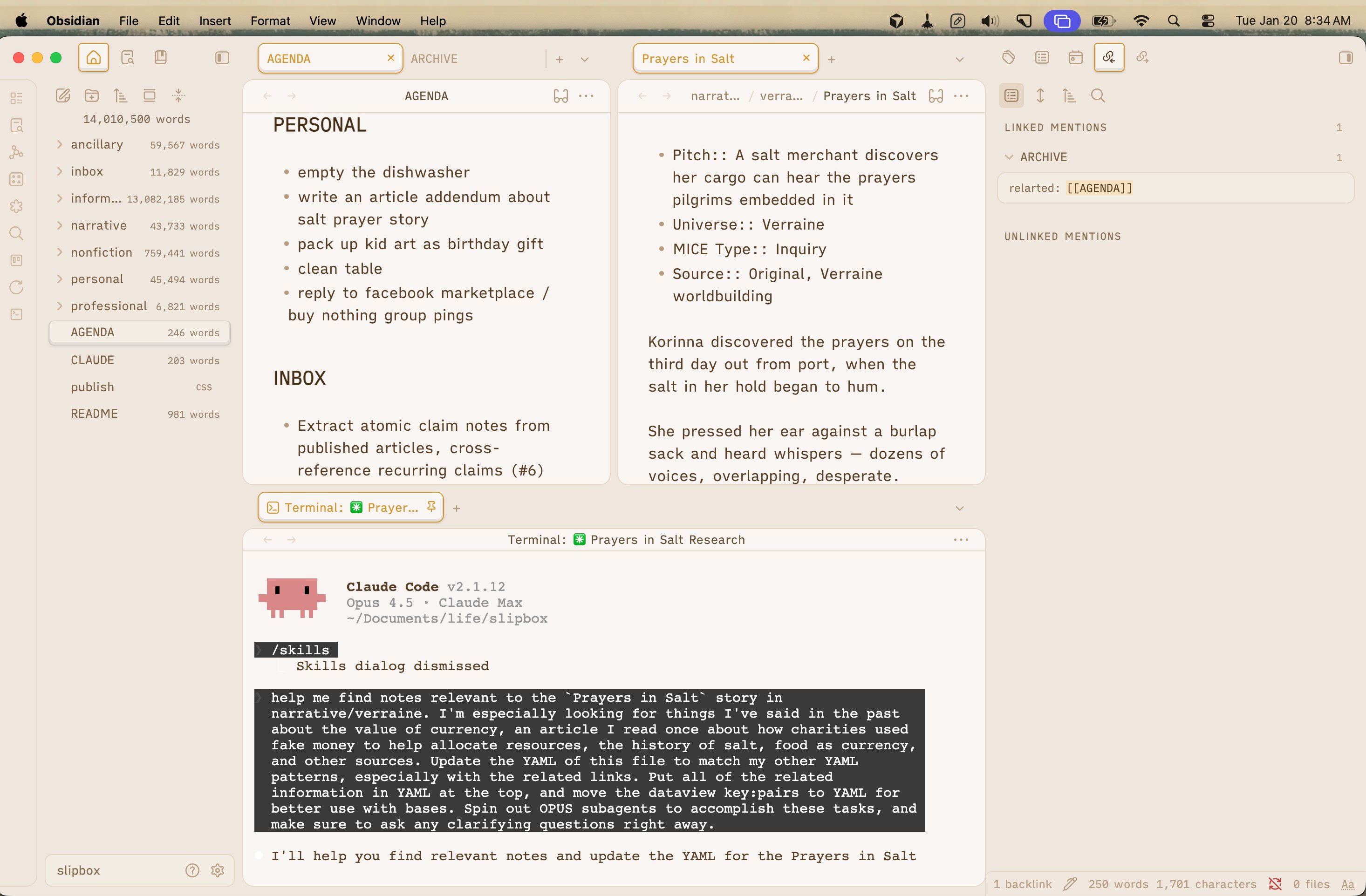

The timeline today felt like two conversations happening simultaneously in the same room. In one corner, builders were excitedly sharing agent folder structures, session tracking tools, Three.js skill packs, and PTY extensions for driving nested CLIs. The energy was unmistakable: people are past the "can agents code?" phase and deep into "how do I architect persistent agent systems that run unsupervised?" The level of infrastructure being built around Claude Code alone is staggering. @GanimCorey sharing a real production folder structure for an "AI employee" complete with config, workspace, memory, and self-improvement loops felt like a watershed moment for how seriously people are treating agent orchestration.

In the other corner, people were quietly losing it. @sofialomart's seven-word post "I work in AI and I'm scared" sat alongside @CreativeAIgency's long observation about brilliant early adopters burning out because the pace is "genuinely unsustainable for most human nervous systems." @sawyerhood painted the picture of someone running ten parallel agent sessions at 3am, mistaking compulsion for productivity. Even the optimists like @addyosmani and @simonw, while framing the shift positively, were essentially saying the same thing: writing syntax is no longer the job. The question is whether developers can adapt to what comes next fast enough to avoid the burnout that comes from trying to keep up with everything at once.

The most entertaining moment was easily @stuffyokodraws nailing the vibe coding trap: "The agent implements an amazing feature and got maybe 10% of the thing wrong, and you are like 'hey I can fix this if I just prompt it for 5 more mins.' And that was 5 hrs ago." Every developer who has used an agent recognized themselves in that post.

The most practical takeaway for developers: stop treating agent architecture as an afterthought. The posts gaining the most traction today weren't about prompting tricks or model comparisons. They were about folder structures, memory systems, modular specs, and long-running loops. If you're still running one-shot agent sessions without persistent context, you're leaving most of the value on the table.

Quick Hits

- @waynesutton shared a repo for self-hosting a CLI session tracker for Claude Code and opencode.

- @addyosmani dropped a repo link (context unclear from the post alone, but Addy's repos are usually worth bookmarking).

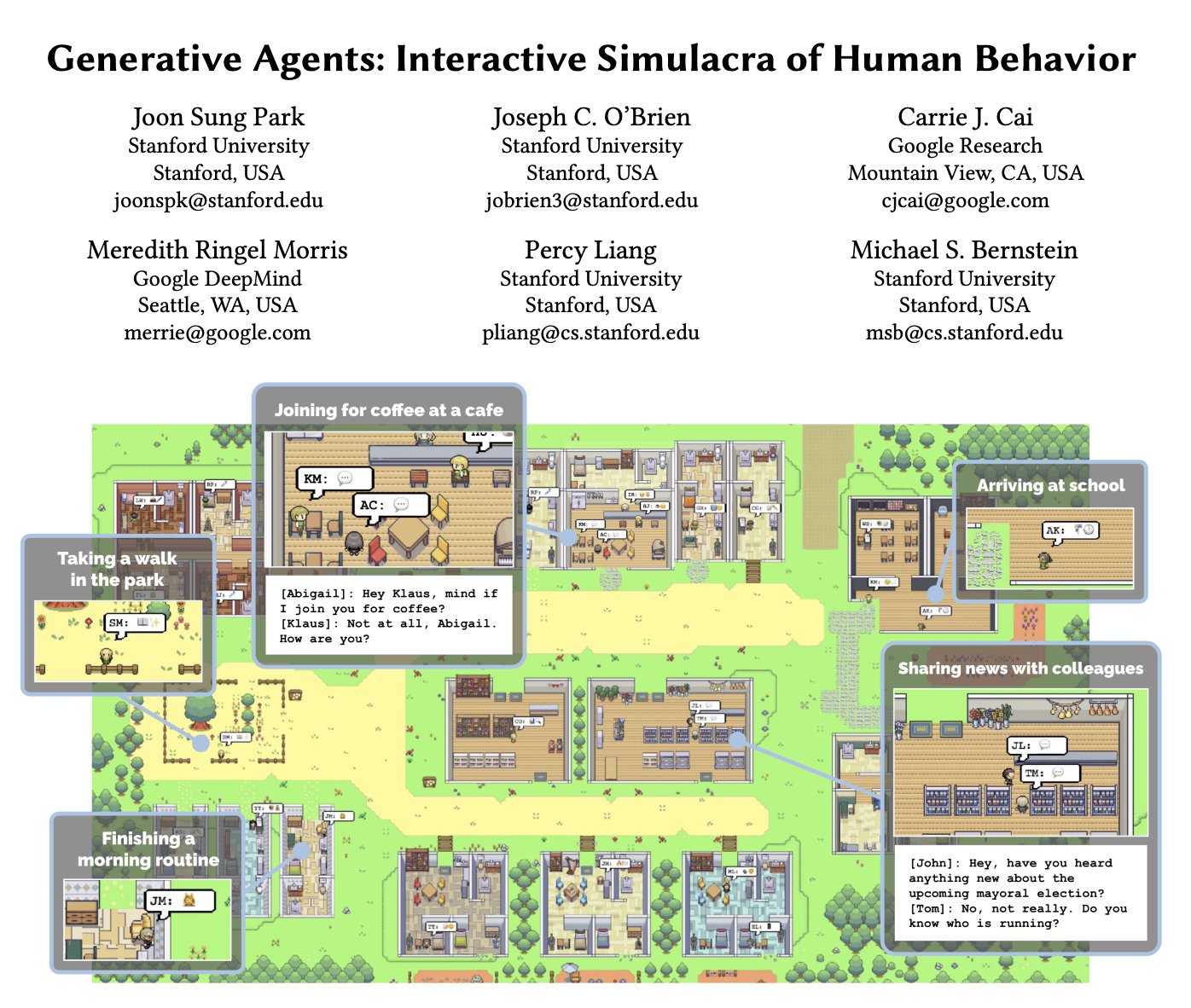

- @shawmakesmagic resurfaced the original Generative Agents paper that kicked off the simulated-town genre in AI, noting nobody went far enough with it beyond the proof of concept.

- @SeanZCai declared "2026 is the year data becomes liquid," which sounds like a VC pitch deck slide but tracks with the structured-data-for-agents trend.

- @IceSolst offered the spiciest take of the day: "To the 7 people still using MCP: don't." No elaboration. No mercy.

- @jojo33733373 summarized the AI opportunity gap: most people will miss the revolution not from lack of intelligence or time, but from choosing the wrong problems and business models.

- @InPassingOnly recommended a simple markdown-based alternative to Beads for context management.

- @RayFernando1337 demonstrated a neat multi-model workflow: Claude researched sprite-making best practices, Gemini 3 Pro generated the actual pixel art, all for 13 cents per character sheet.

Agent Architecture Gets Serious

The single biggest theme today was the maturation of agent infrastructure. Not agents as a concept, but agents as engineered systems with real folder structures, memory persistence, and operational patterns. @GanimCorey shared the actual directory layout of a production "AI employee" and the design philosophy is immediately recognizable to anyone who has built software systems: "Config = what they can access. Workspace = how they think and act. Memory = what they remember. Skills = what they're trained to do." The inclusion of a self-improvement skill that logs learnings and errors is the kind of detail that separates toy demos from production systems.
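As a rough sketch of that four-part layout, the snippet below scaffolds the directories and appends to a learnings log. Only the four top-level names come from the post; the file names, the `scaffold` and `log_learning` helpers, and the `ai-employee` root are hypothetical and not taken from @GanimCorey's actual structure.

```python
from pathlib import Path
import tempfile

# Hypothetical layout: the four top-level names mirror the quoted post;
# every file name beneath them is an illustrative assumption.
LAYOUT = {
    "config": ["permissions.md"],             # what the agent can access
    "workspace": ["playbook.md"],             # how it thinks and acts
    "memory": ["learnings.md", "errors.md"],  # what it remembers
    "skills": ["self-improvement.md"],        # what it is trained to do
}


def scaffold(root: Path) -> list[Path]:
    """Create the directories and empty placeholder files; return the files."""
    created = []
    for folder, files in LAYOUT.items():
        directory = root / folder
        directory.mkdir(parents=True, exist_ok=True)
        for name in files:
            path = directory / name
            path.touch(exist_ok=True)
            created.append(path)
    return created


def log_learning(root: Path, note: str) -> None:
    """Append one bullet to memory/learnings.md, a stand-in for a self-improvement log."""
    with (root / "memory" / "learnings.md").open("a", encoding="utf-8") as fh:
        fh.write(f"- {note}\n")


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp) / "ai-employee"
        for path in scaffold(root):
            print(path.relative_to(root))
        log_learning(root, "Prefer small, reviewable changes.")
```

Keeping memory as plain files means the agent's accumulated notes can be diffed and reviewed like any other part of the repository.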

@TheAhmadOsman laid out what he called the "crucial recipe" for making agents work reliably:

> "There's a crucial recipe: 1. Modularity. 2. Domain-Driven Design. 3. Painfully explicit specs. 4. Excessive documentation. This is systems engineering... If your docs don't answer Where, What, How, Why, the agent will guess, and guessing is how codebases die."

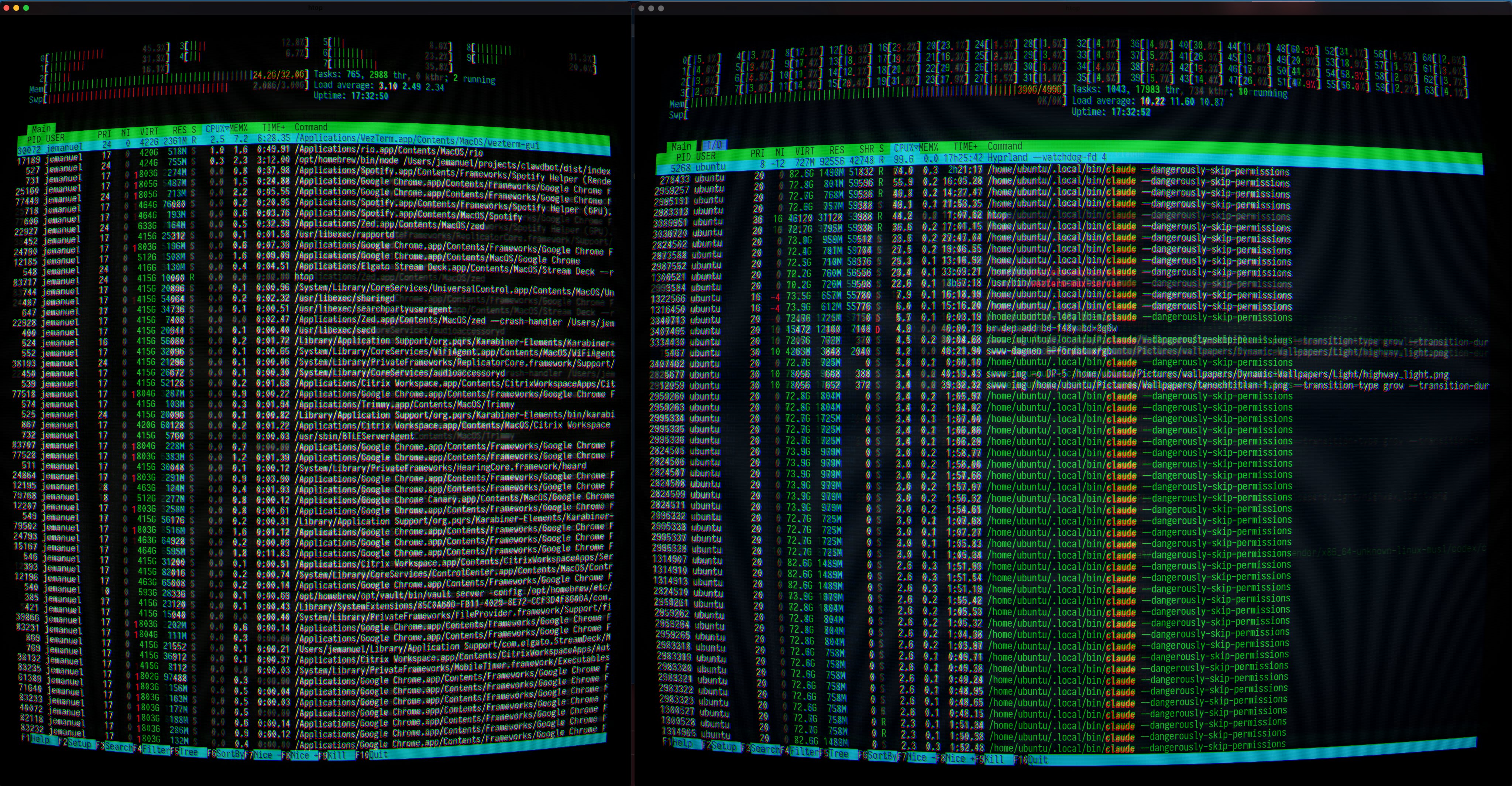

This is the right framing. The agent itself is not the hard part anymore. The hard part is giving it enough structured context to make good decisions autonomously. @dabit3 published a walkthrough on building effective long-running agent loops, and @nicopreme released a PTY extension that lets a coding agent drive interactive CLIs (including other agent harnesses) in an overlay while you watch and optionally take control. @doodlestein made the infrastructure case for running agents on beefy remote servers rather than local machines, comparing Mac Mini M4 specs unfavorably to dedicated workstations for parallel agent sessions.

The vision is converging: persistent agents with structured memory, running on serious hardware, supervised but not hand-held. @jerryjliu0 captured the aspiration perfectly: "I want a Slack filled with long-running Claude Code agents. Each agent has a role, actively monitors all relevant channels, and is continuously doing work and emitting progress updates." That's not science fiction anymore. The pieces are all shipping this month.

The Claude Code Ecosystem Expands

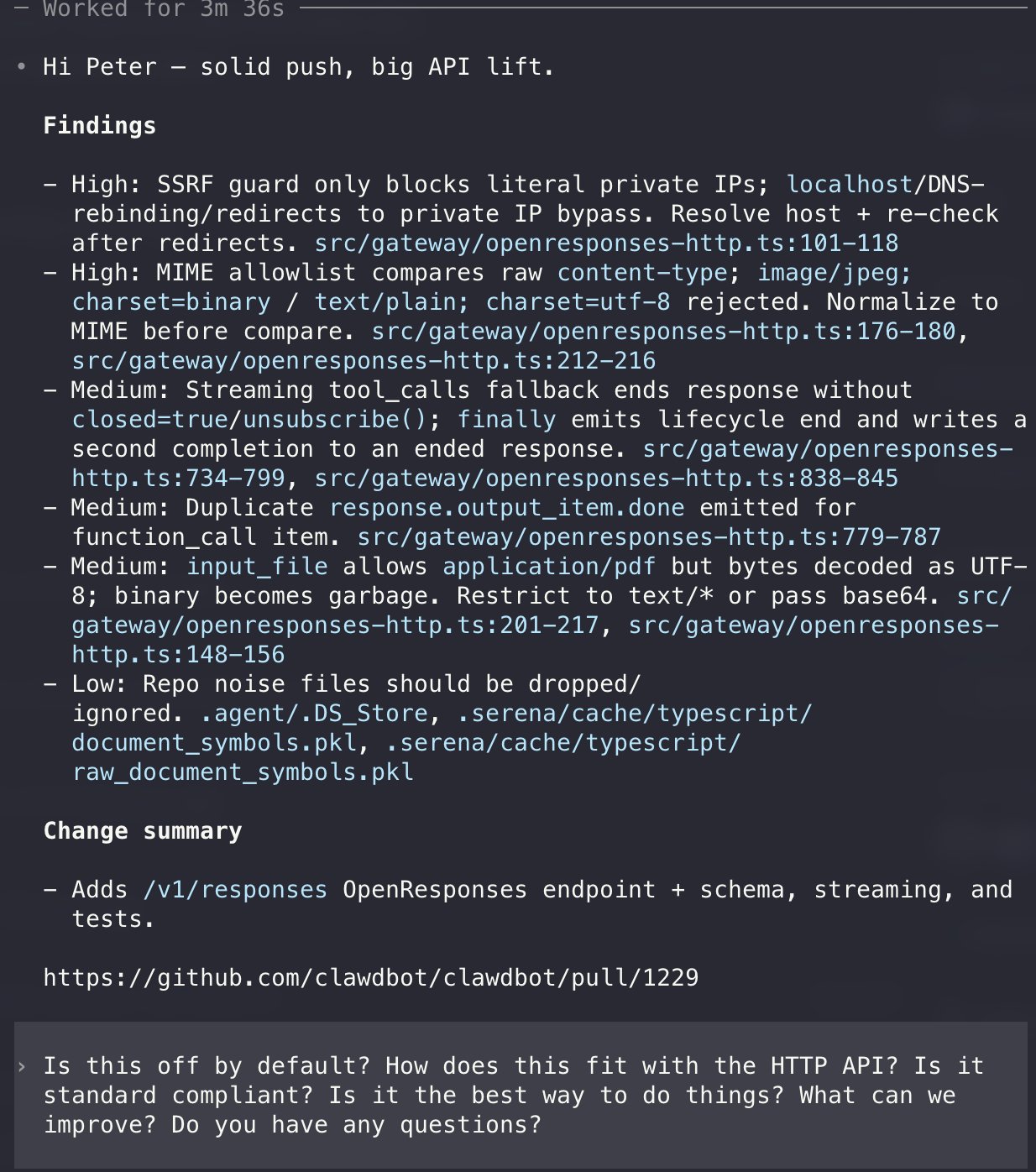

Beyond architecture patterns, the tooling layer around Claude Code specifically had a banner day. The headline item was @akshay_pachaar reporting that ollama now supports the Anthropic Messages API:

> "ollama is now compatible with the anthropic messages API. which means you can use claude code with open-source models... the entire claude harness: the agentic loops, the tool use, the coding workflows, all powered by private LLMs running on your own machine."

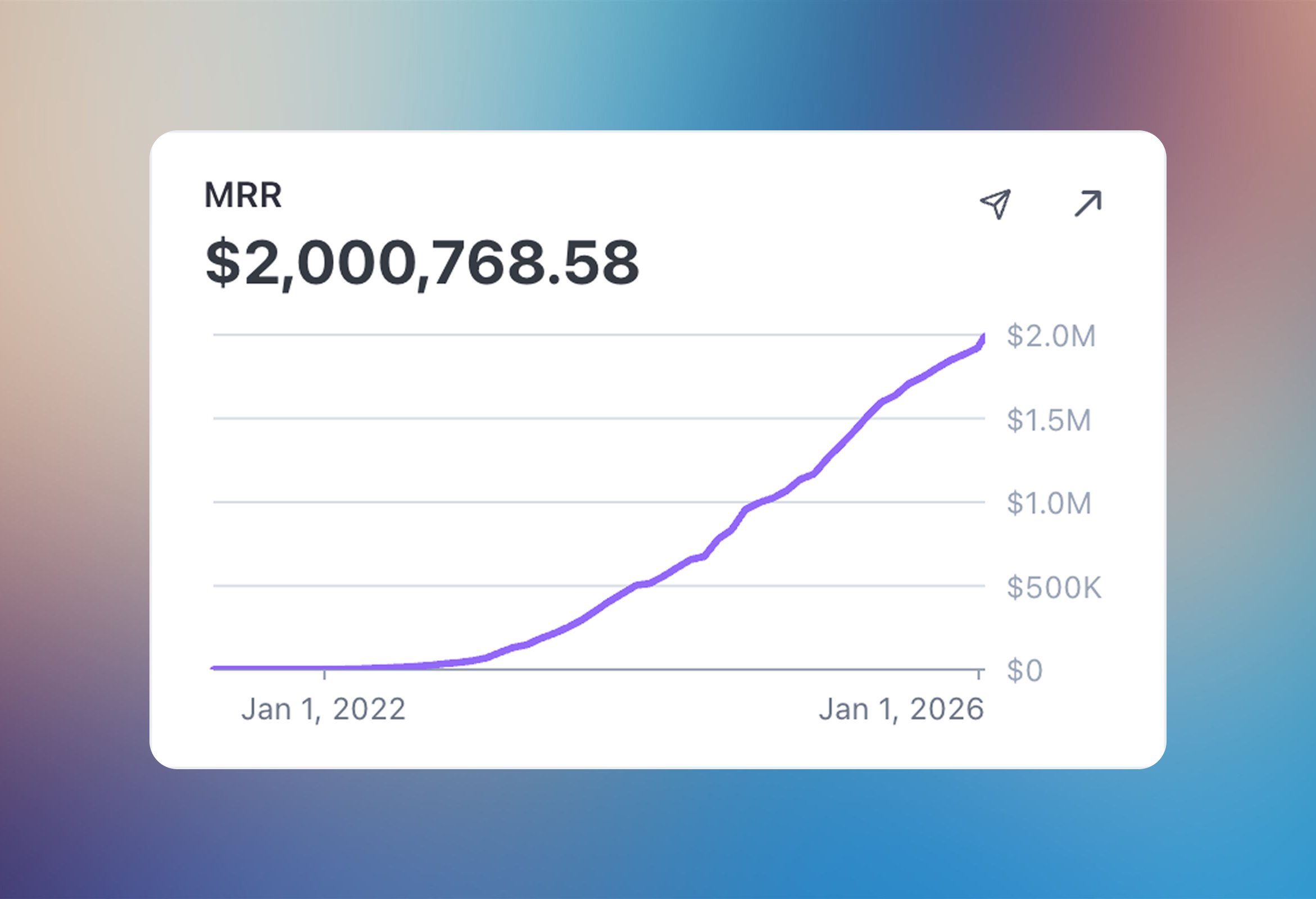

This is genuinely significant. Claude Code's value increasingly lives in its harness (the agentic loop, tool use, file management) rather than being locked to a single model provider. Being able to swap in local models for development, testing, or cost-sensitive workflows while keeping the same agent infrastructure is a big deal for adoption.
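To make the harness-versus-model split concrete, here is a minimal sketch of an Anthropic Messages style request aimed at a local server. The base URL, the `/v1/messages` route on Ollama, the `qwen3-coder` model name, and the placeholder API key are all assumptions for illustration, so check them against your own setup before relying on them.

```python
import json
import urllib.request

# Assumptions (not from the post): Ollama listens on localhost:11434, serves the
# Anthropic-style route at /v1/messages, and "qwen3-coder" is a locally pulled model.
BASE_URL = "http://localhost:11434"
MODEL = "qwen3-coder"


def build_request(prompt: str, base_url: str = BASE_URL,
                  model: str = MODEL) -> urllib.request.Request:
    """Build a Messages API style POST request without sending it."""
    body = {
        "model": model,
        "max_tokens": 256,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/messages",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "content-type": "application/json",
            "anthropic-version": "2023-06-01",
            "x-api-key": "ollama",  # placeholder; a local server is assumed to ignore it
        },
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the request and join the reply's text blocks (needs a running server)."""
    with urllib.request.urlopen(build_request(prompt), timeout=60) as resp:
        reply = json.load(resp)
    return "".join(
        block.get("text", "")
        for block in reply.get("content", [])
        if block.get("type") == "text"
    )
```

Because the request shape is the same for any backend, swapping between a hosted model and a local one comes down to changing the base URL and model name.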

@WesRoth reported that Anthropic is building "Knowledge Bases" into Claude Cowork, described as persistent, topic-specific memory containers that Claude will automatically reference and update. If accurate, this is Anthropic productizing what the community has been building ad hoc with CLAUDE.md files and memory directories.

On the community side, @cloudxdev released a set of Three.js skill files for Claude Code covering scene setup, shaders, animations, and post-processing. @ryancarson was enthusiastic about agent-browser's updated skill for browser testing, calling it "so fast and token efficient." @waynesutton shipped a tool to track coding sessions across Claude Code and opencode with searchable history and token spend visibility. @steipete curated a collection of what people are building with Claude Code, suggesting the ecosystem is reaching a critical mass of interesting projects. And @dbreunig showed the practical payoff by feeding 10 MB of logs to an agent and asking it to identify the most common failure modes: "Just worked."

The Existential Thread

Running parallel to all the building energy was a distinctly darker conversation about what this all means for the people doing the work. @CreativeAIgency posted a long observation that hit hard:

> "I've watched brilliant people burn out from this. People who were early adopters, who built real expertise, who contributed meaningfully to the space, just exhausted. Not because they're lazy or uncommitted, but because the pace is genuinely unsustainable for most human nervous systems."

@sofialomart kept it to seven words: "I work in AI and I'm scared." @sawyerhood described watching someone at 3am running their tenth parallel agent session, claiming peak productivity, and seeing something else entirely: "In that moment I don't see productivity. I see someone who might need to step away from the machine for a bit. And I wonder how often that someone is me."

The identity question surfaced more explicitly through @rough__sea: "The era of humans writing code is over. Disturbing for those of us who identify as SWEs, but no less true." @simonw pushed back on the doom framing by highlighting Ryan Dahl's perspective that developers add far more value than syntax knowledge, arguing it's time to "cede putting semicolons in the right places to the robots." @addyosmani offered the most constructive reframe: "The future of Software Engineering isn't syntax, but what was always the real work: turning ambiguity into clarity, designing context that makes good outcomes inevitable, and judging what truly matters." The through-line is clear. Nobody is arguing that developers are obsolete. The argument is about whether "developer" means the same thing it meant two years ago, and whether the transition to whatever it means next will break people along the way.

Multi-Model Workflows and the Productivity Trap

A smaller but notable thread covered how practitioners are actually structuring their daily work with AI tools. @ryancarson described a workflow that feels like where a lot of autonomous agent usage is heading: a cron job that gathers user activity and marketing data nightly, feeds it to Opus 4.5 for analysis, and emails him a single actionable recommendation each morning. "Almost every time it surfaces something really valuable for me to iterate. So I just open Amp, tell it to action the idea, and then ship it." He noted the next step is having the agent autonomously implement suggestions and wake up to a PR instead of an email, but prefers the human-in-the-loop version for now.
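The shape of that nightly loop can be sketched in a few lines. This is not @ryancarson's implementation: the metric names, the `build_prompt`, `build_email`, and `nightly_job` helpers, and the injected `analyze` callable (standing in for the model call) are all hypothetical.

```python
from email.message import EmailMessage
from typing import Callable


def build_prompt(activity: dict[str, int], marketing: dict[str, float]) -> str:
    """Flatten last night's metrics into a prompt that asks for one recommendation."""
    lines = ["Here are yesterday's metrics."]
    lines += [f"activity.{key}: {value}" for key, value in sorted(activity.items())]
    lines += [f"marketing.{key}: {value}" for key, value in sorted(marketing.items())]
    lines.append("Reply with the single most valuable change to ship next.")
    return "\n".join(lines)


def build_email(recommendation: str, to_addr: str) -> EmailMessage:
    """Wrap the model's answer in a plain-text email (sending is left out)."""
    msg = EmailMessage()
    msg["Subject"] = "Daily recommendation"
    msg["To"] = to_addr
    msg.set_content(recommendation)
    return msg


def nightly_job(activity: dict[str, int], marketing: dict[str, float],
                analyze: Callable[[str], str], to_addr: str) -> EmailMessage:
    """Run one cycle: metrics -> prompt -> analysis -> email.

    `analyze` is any callable mapping a prompt to text, so the model call
    can be swapped or stubbed without touching the rest of the pipeline.
    """
    return build_email(analyze(build_prompt(activity, marketing)), to_addr)


if __name__ == "__main__":
    # Stub analysis function in place of a real model call.
    message = nightly_job({"signups": 3}, {"ctr": 0.1},
                          lambda prompt: "Ship the onboarding fix.",
                          "me@example.com")
    print(message["Subject"])
    print(message.get_content())
```

Scheduling is then a single crontab entry that runs the script each night. Keeping delivery as an email rather than an automatic PR preserves the human-in-the-loop step the post describes.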

@TheAhmadOsman shared a multi-model strategy gaining traction: "You plan with GPT 5.2 Codex XHigh in Codex CLI, then implement with Opus 4.5 in Claude Code. Planning with GPT 5.2 Codex XHigh leads to fewer bugs, more maintainable code, cleaner implementations." Using different models for different phases of the development cycle, planning versus implementation versus review, is becoming a legitimate workflow pattern rather than a novelty.

And then there's the dark side of productivity. @stuffyokodraws captured the vibe coding trap with painful accuracy:

> "One reason vibe coding is so addictive is that you are always almost there but not 100% there. The agent implements an amazing feature and got maybe 10% of the thing wrong, and you are like 'hey I can fix this if I just prompt it for 5 more mins.' And that was 5 hrs ago."

The gambling metaphor is hard to miss. Variable reinforcement schedules are powerful, and agent-assisted coding delivers exactly that: intermittent, unpredictable rewards that keep you in the chair longer than you planned. Recognizing this pattern is the first step to managing it.

Sources

Jevons Paradox for Knowledge Work

BREAKING 🚨: Anthropic is working on "Knowledge Bases" for Claude Cowork. KBs seem to be a new concept of topic-specific memories, which Claude will automatically manage! And a bunch of other new things. Internal Instruction 👀 "These are persistent knowledge repositories. Proactively check them for relevant context when answering questions. When you learn new information about a KB's topic (preferences, decisions, facts, lessons learned), add it to the appropriate KB incrementally."

Hilariously insecure: MCP servers can tell your AI to write a skill file, and skills can modify your MCP config to add an MCP server. So a malicious MCP server can basically hide instructions to re-add itself. https://t.co/qquQiFfCfd

Weekend thoughts on Gas Town, Beads, slop AI browsers, and AI-generated PRs flooding overwhelmed maintainers. I don't think we're ready for our new powers we're wielding. https://t.co/J9UeF8Zfyr

2026 is the year data becomes liquid

Select excerpts on lab data buying practices, where many data companies will fail, and why data liquidity is inevitable. The TLDR on this article expl...

I need Linear but where every task is automatically an AI agent session that at least takes a first stab at the task. Basically a todo list that tries to do itself

I work in AI and I'm scared

I'm scared shitless. Not of the big existential threats everyone posts about for engagement. Not whether AI ends the world or takes every job. Not tha...

The dspy.RLM module is now released 👀 Install DSPy 3.1.2 to try it. Usage is plug-and-play with your existing Signatures. A little example of it helping @lateinteraction and I figure out some scattered backlogs: https://t.co/Avgx04sNJP

Someone curated 925 failed VC-backed startups, broke down why they failed, and how to make it work with today’s tech - https://t.co/NFUhrhe7P2 Cool fr🙌 https://t.co/vOv2fUDnhY

All thanks to @steipete https://t.co/GQ3ZJNF1Tj

My top 3 tips for coding with agents: 1. Always start with Plan Mode. It's better to iterate in natural language and then execute once you know what the agent is going to do. This will save you time, effort, and tokens! 2. Start new chats frequently. Remember that your role is to point the Agent in the right direction to make the changes you need. If you change topics, the context window will get muddied. You will also be spending more tokens on longer chats. 3. Leverage AI to do your code review. If you know the failure case, ask a model. One prompt I often use is "scan the changes on my branch and confirm nothing is impacted outside of my feature flag". As a safety net for everything outside this issues-you-expect umbrella, use Bugbot.

Vibe Kanban: orchestrate multiple AI coding agents in parallel. Free and 100% open-source. Switch between Claude Code, Codex, Gemini CLI, and track task status from a single dashboard. https://t.co/XfZLWpevqM

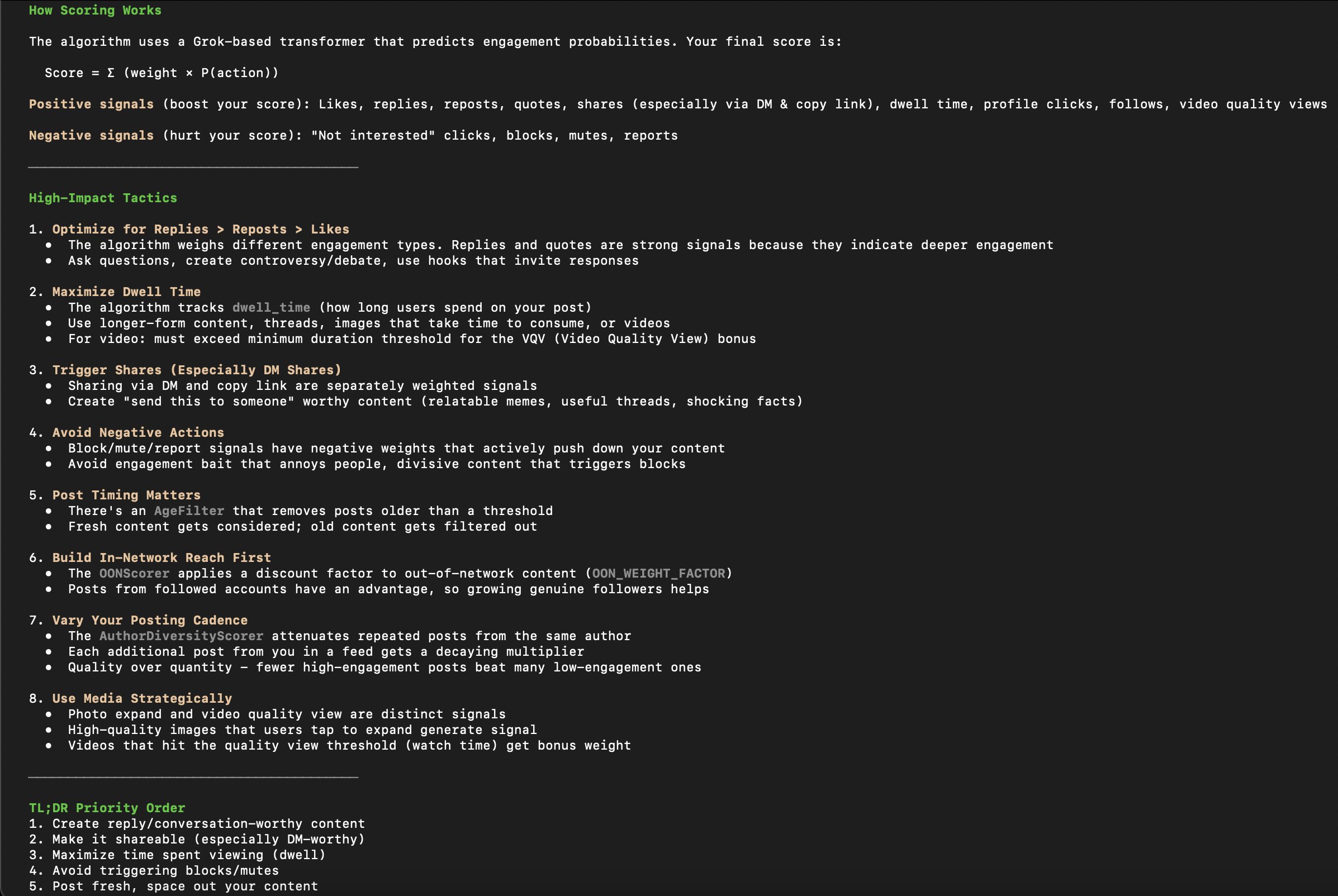

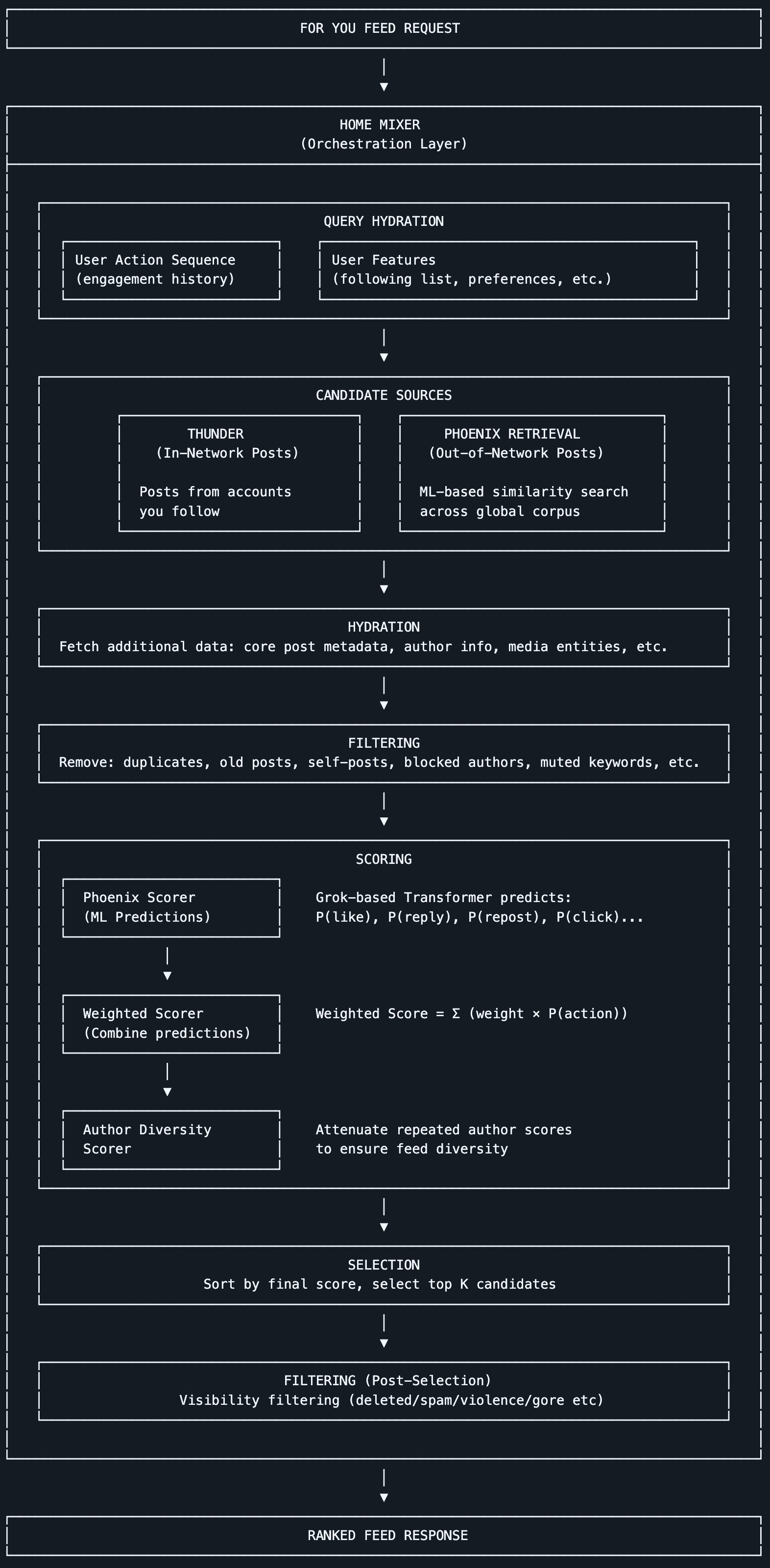

We have open-sourced our new 𝕏 algorithm, powered by the same transformer architecture as xAI's Grok model. Check it out here: https://t.co/3WKwZkdgmB

This has been said a thousand times before, but allow me to add my own voice: the era of humans writing code is over. Disturbing for those of us who identify as SWEs, but no less true. That's not to say SWEs don't have work to do, but writing syntax directly is not it.

the people learning this now will be untouchable in 3 months.

Here's what we've learned from building and using coding agents. https://t.co/PuBtYuhyhd

Remotion now has Agent Skills - make videos just with Claude Code! $ npx skills add remotion-dev/skills This animation was created just by prompting 👇 https://t.co/hadnkHlG6E

@shadcn you asked for it, you got it. 🚀 Announcing pastecn. A simple way to store your snippets and instantly get a shadcn-compatible registry URL. No setup. Just paste and ship. ⚡ https://t.co/mT3Ydr0DAy

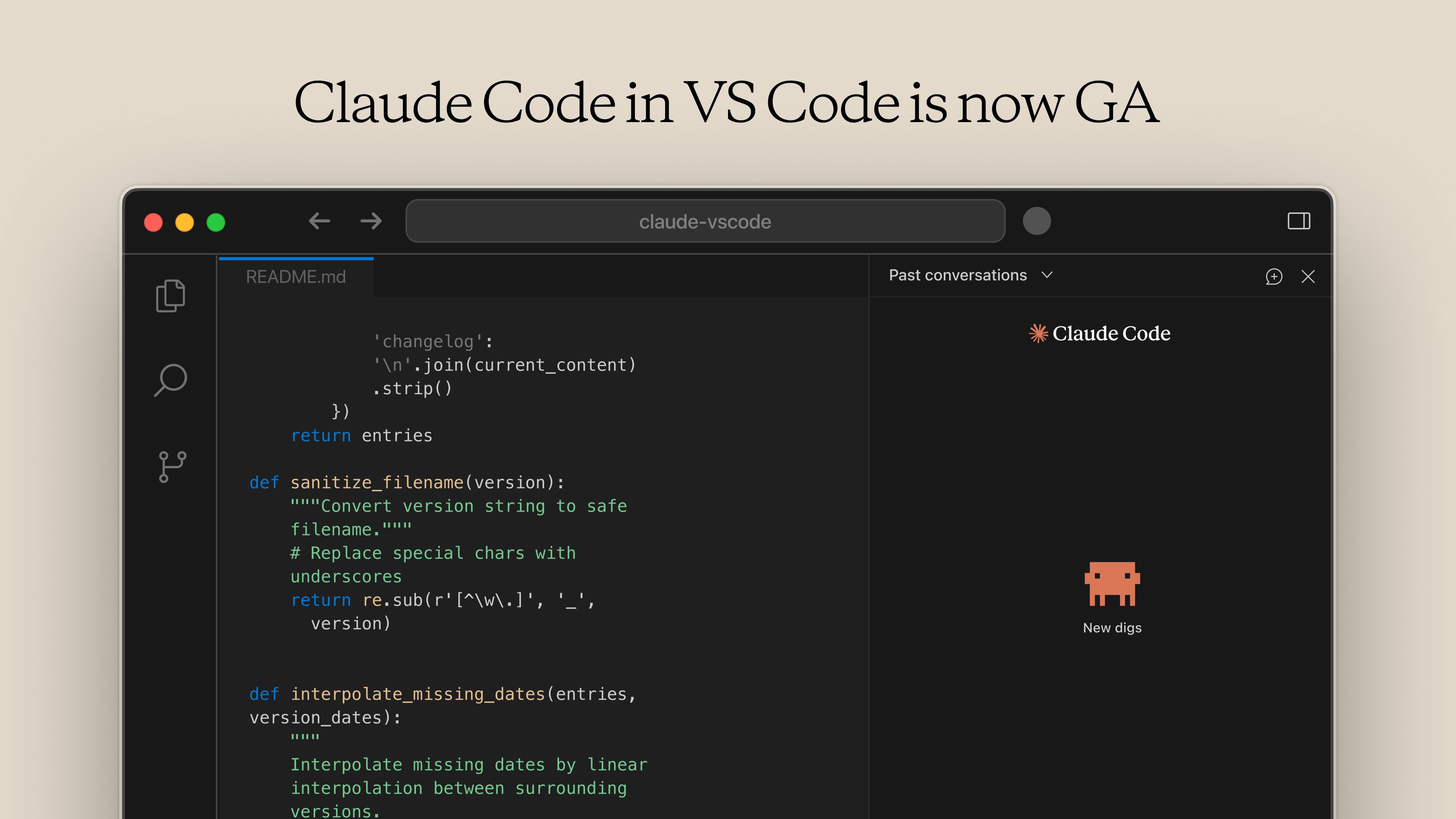

The VS Code extension for Claude Code is now generally available. It’s now much closer to the CLI experience: @-mention files for context, use familiar slash commands (/model, /mcp, /context), and more. Download it here: https://t.co/q95Cw4soMk https://t.co/3BCWPvybdZ