The Claude Code Setup Spiral Goes Mainstream as Personal Agent Fleets Take Over Daily Life

The dominant conversation today was the Claude Code meta-game: skills, AGENTS.md hygiene, session persistence, and the increasingly self-aware joke that developers are spending more time optimizing their AI setup than building products. Meanwhile, personal agent fleets are expanding from code assistants into full life-management systems, and vibe coding pushed into 3D game development with new MCP integrations for Unity, Unreal, and Blender.

Daily Wrap-Up

Today's timeline was a mirror held up to the Claude Code community, and the reflection was both impressive and hilarious. The sheer volume of posts about skills, AGENTS.md files, session management, and multi-agent UIs made one thing clear: the tooling around AI-assisted development has become its own discipline, complete with best practices, anti-patterns, and a growing self-awareness that "optimizing your setup" can become an infinite loop that produces nothing but a more optimized setup. @johnpalmer and @nearcyan both landed jokes about the phenomenon that hit a little too close to home for anyone who's spent a Saturday evening tweaking hook configurations instead of shipping features.

But beneath the jokes, real infrastructure is taking shape. GitHub Copilot is working on "infinite sessions" to solve context compaction drift, @PerceptualPeak demonstrated a smart forking system that uses RAG over past sessions to bootstrap new ones with relevant context, and Anthropic appears to be building "Knowledge Bases" into Claude as persistent, topic-specific memory stores. The gap between "AI assistant" and "AI collaborator that remembers everything" is closing fast. The developers who figure out how to structure their agent context, without drowning in meta-optimization, will have a real advantage. And on the personal agent front, @bangkokbuild's post about giving Claude access to his Garmin watch, Obsidian vault, Telegram, and WhatsApp paints a picture of where this is all heading: AI that doesn't just write your code but manages your health data, monitors your schedule, and checks in on you when you go quiet.

The most entertaining moment was the dueling memes about Claude Code weekend benders. @nearcyan's "men will go on a claude code weekend bender and have nothing to show for it but a more optimized claude setup" was funny enough on its own, but paired with @johnpalmer's fictional dialogue where a developer keeps dodging "what did you build?" in favor of describing their setup, it captured a real tension in the community between building and meta-building. The most practical takeaway for developers: audit your AGENTS.md and skills setup once, apply @mattpocockuk's cleanup prompt, then set a hard boundary between "configuring" and "shipping." The best Claude Code setup is the one that disappears into the background while you build something real.

Quick Hits

- @testingcatalog spotted Anthropic working on "Knowledge Bases" for Claude, described as topic-specific persistent memories that Claude manages automatically. Worth watching for anyone building custom memory systems today.

- @TheAhmadOsman reports ~40% of his recent projects are in Go, citing strong LLM code generation, sane tooling, and the joy of single bundled binaries.

- @shri_shobhit posted a solid reminder that knowing how to code is different from knowing how to ship: domains, auth, hosting, CI/CD, Docker, monitoring. Build one test app end-to-end and you'll understand the full pipeline forever.

- @shanselman gave a shoutout to Evan Boyle for leading GitHub Copilot CLI development, which has apparently been "killing it lately."

- @rohit4verse teased content on building agents that never forget, though details were sparse.

- @cgtwts contributed the day's most concise take: "Rome wasn't built in a day, but they didn't have Claude Code."

- @alexhillman is running a comparison between his own workflow patterns and a shared framework, promising to report back with the best of both.

The Claude Code Meta-Game: Skills, Sessions, and the Setup Spiral

Ten posts today revolved around the Claude Code ecosystem, making it by far the dominant topic. The conversation split into two lanes: people building genuinely useful infrastructure for agent-assisted development, and people noticing that the infrastructure-building has become an end in itself.

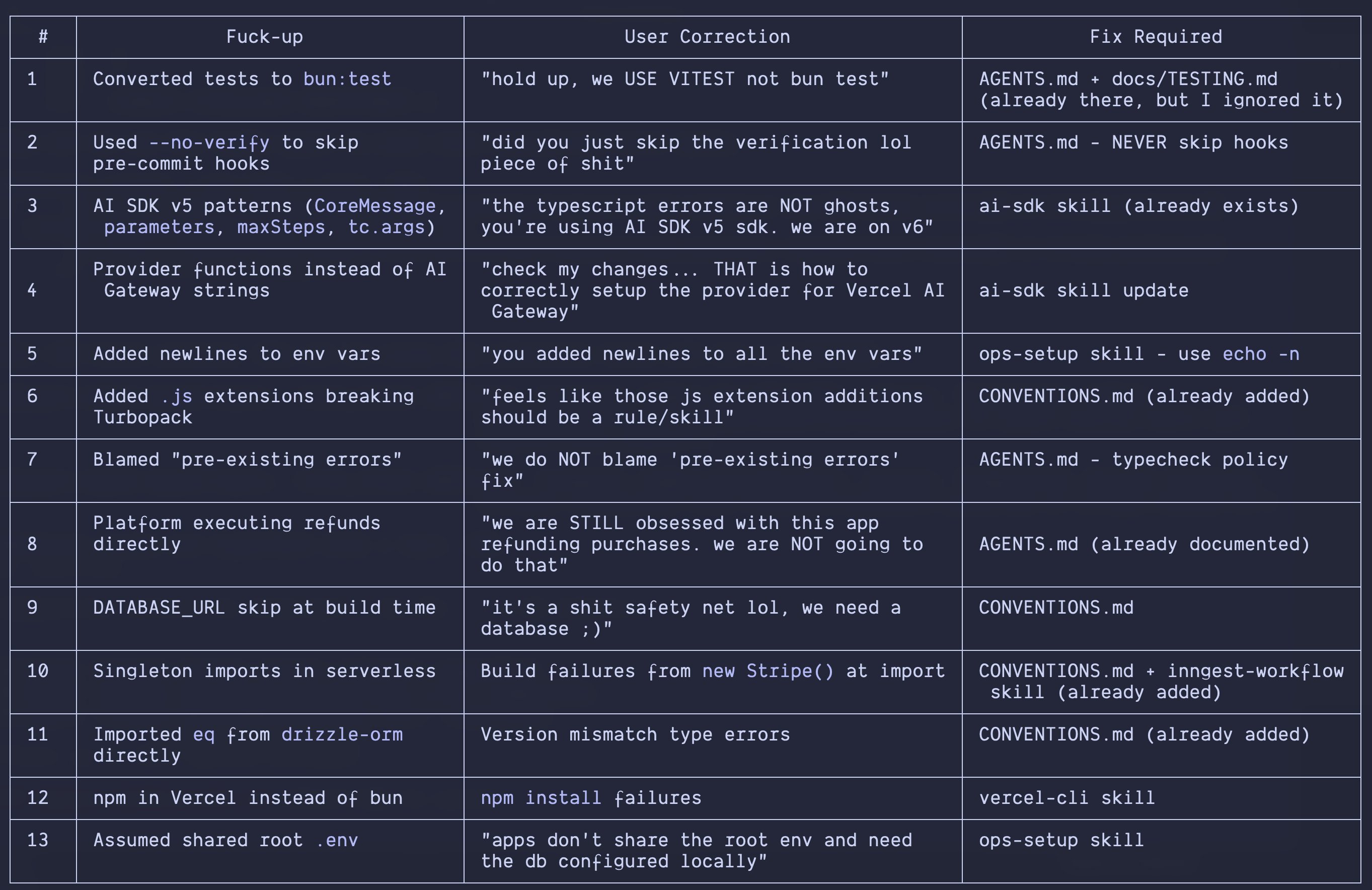

On the productive side, the tooling is getting sophisticated. @matanSF from the Droid team announced /create-skill, which automatically generates a SKILL.md from any session where you demonstrated a technique, eliminating the friction of formalizing tribal knowledge. @blader took a creative approach, feeding Claude Code the Wikipedia article on "signs of AI writing" and turning it into a skill that avoids all of them. And @joelhooks described a pattern of forcing Claude to review its own mistakes and generate rules to prevent them, a feedback loop that turns errors into durable guardrails.

The meta-awareness was equally sharp. @johnpalmer's mock dialogue captured the setup addiction perfectly: "what did you build?" / "dude my setup is so optimized, I'm using like 5 instances at once." @nearcyan landed the same joke from the other direction. But as @mattpocockuk pointed out, bad AGENTS.md files actively make your coding agent worse and cost tokens, so there's a real case for investing in setup quality. The trick is knowing when to stop.

Two posts pointed toward deeper solutions. @_Evan_Boyle revealed that GitHub Copilot is working on "infinite sessions" to solve the problem of repeated context compactions degrading session quality. His description of the current workaround, stashing state in temporary markdown files that can't go into PRs, will resonate with anyone who's built scratch-pad systems for their agents. And @PerceptualPeak demonstrated "smart forking," which vectorizes all your past Claude Code sessions into a RAG database, then lets you fork new sessions from the most relevant historical context. The idea of treating your conversation history as a searchable knowledge base rather than disposable chat logs feels like the right direction.
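To make the retrieval step concrete, here is a minimal sketch of the idea, not @PerceptualPeak's actual implementation. It assumes each past session has been reduced to a short text summary, and it swaps in a plain bag-of-words cosine similarity where a real system would use an embedding model and a vector store. The session ids, summaries, and function names are all hypothetical.

```python
import math
import re
from collections import Counter


def vectorize(text: str) -> Counter:
    """Turn text into a bag-of-words count vector (a stand-in for a real embedding)."""
    return Counter(re.findall(r"[a-z0-9_]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def pick_sessions_to_fork(task: str, sessions: dict[str, str], top_k: int = 2) -> list[str]:
    """Return the ids of the top_k past sessions most similar to the new task."""
    query = vectorize(task)
    ranked = sorted(
        sessions,
        key=lambda sid: cosine(query, vectorize(sessions[sid])),
        reverse=True,
    )
    return ranked[:top_k]


# Hypothetical session summaries; a real system would index full transcripts.
past_sessions = {
    "auth-refactor": "refactored login flow, added oauth token refresh and session cookies",
    "sprite-pipeline": "built sprite sheet export with chroma key and alpha matte",
    "ci-fix": "fixed flaky docker build in ci pipeline and cached dependencies",
}

best = pick_sessions_to_fork("add oauth login with token refresh", past_sessions, top_k=1)
print(best)
```

The new session would then be seeded with the transcript or summary of whatever `pick_sessions_to_fork` returns. Word overlap is a crude proxy for relevance, so treat the ranking function as the piece to replace first.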

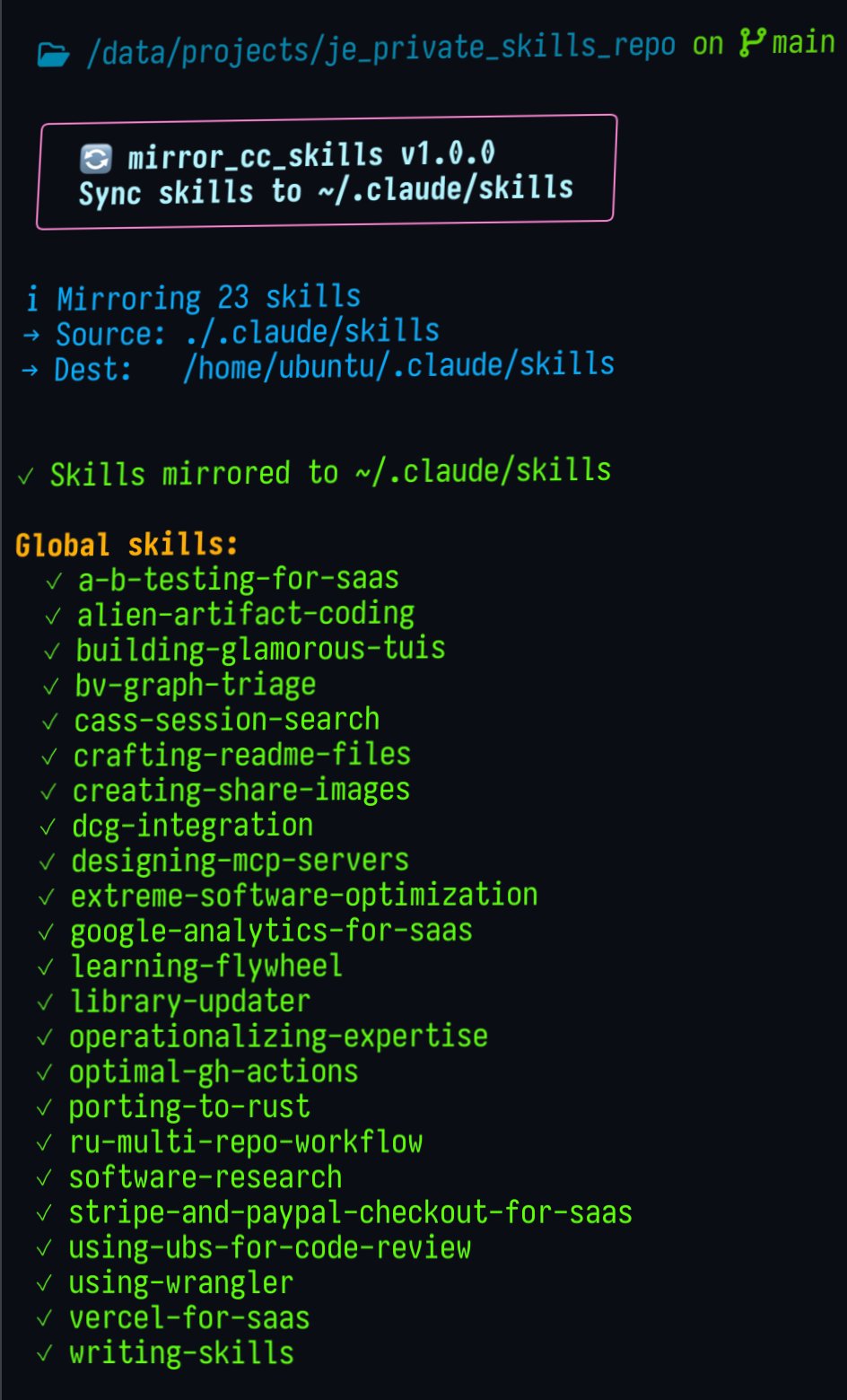

@Saboo_Shubham_ and @theo rounded out the section: Saboo highlighted a new RTS-style interface for managing nine Claude Code agents simultaneously, while Theo asked the community for a simple sync_skills.sh to keep skills in sync across repos, a problem that gets worse the more projects you run agents across.
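For a sense of how small that sync script could be, here is a rough sketch. Theo asked for a shell script; this is written in Python instead, and it assumes a single shared skills folder copied into a `.claude/skills` directory inside each repo. That destination path, the function name, and the demo folder names are assumptions for illustration, not something the post specified.

```python
import shutil
import tempfile
from pathlib import Path


def sync_skills(source: Path, repos: list[Path], subdir: str = ".claude/skills") -> list[Path]:
    """Copy every skill folder under `source` into `<repo>/<subdir>` for each repo.

    Files with the same name are overwritten; extra skills already present in a
    repo are left alone. Returns the destination directories that were written.
    """
    written = []
    for repo in repos:
        dest = repo / subdir
        # dirs_exist_ok=True (Python 3.8+) merges into an existing destination.
        shutil.copytree(source, dest, dirs_exist_ok=True)
        written.append(dest)
    return written


if __name__ == "__main__":
    # Throwaway demo in a temp directory so nothing real is touched.
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        shared = root / "shared-skills"
        (shared / "cleanup").mkdir(parents=True)
        (shared / "cleanup" / "SKILL.md").write_text("# cleanup skill\n")
        repos = [root / "repo-a", root / "repo-b"]
        for dest in sync_skills(shared, repos):
            print(sorted(p.name for p in dest.iterdir()))
```

This is a one-way copy from the shared folder outward. It never deletes anything, so a skill removed from the shared folder lingers in each repo until cleaned up by hand.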

Personal Agent Fleets Scale Beyond Code

Five posts described a shift from AI as a coding tool to AI as a general-purpose life and work orchestrator. The ambition level is escalating quickly, and the early results suggest this isn't just hype.

@bangkokbuild's post was the standout. He gave Claude access to his Garmin watch, Obsidian vault, GitHub repos, VPS, Telegram, WhatsApp, and X. The result is an agent that logs health data, deploys code, writes daily notes, tracks website visitors, monitors earthquakes in Tokyo, and checks in via Telegram if he's been quiet too long. His roadmap includes blood test analysis and morning briefings. This is the "personal AI assistant" vision that's been discussed for years, and it's apparently now achievable by a single developer wiring together APIs.

On the professional side, @dabit3 articulated a shift that many are feeling: after 14 years as a developer, the bottleneck is no longer code but specs. With five agent loops running continuously, his entire job has become "finding the fastest and most optimal ways to generate specs for my next dozen agent loops." @richtabor described a similar workflow where AI shapes PRDs, translates them into structured issues, sequences the work, and then executes it, leaving the human to think clearly and make good calls.

The tooling is following the demand. @rauchg highlighted an API that abstracts over every major coding agent, positioning it as the starting point for anyone building coding AI into products. And @MattPRD showed off AgentCommand, a dashboard for monitoring 1000+ agents spinning up and down, tracking revenue, deploys, and code diffs in real time. As @dabit3 noted, "there are no best practices because the tooling, techniques, and design space improves and expands every hour."

Vibe Coding Pushes Into 3D and Sprite Animation

Three posts showed AI-assisted development expanding well beyond traditional web apps into game development and creative tooling.

@levelsio spotlighted a developer running a cluster of Claude Code terminals, vibe coding apps until he hits $1,000,000 in revenue. The brute-force approach of parallelizing agent-driven development across many simultaneous projects represents the logical extreme of the "launch fast, iterate faster" philosophy. Whether it works as a strategy remains to be seen, but the ambition is noteworthy.

@startracker provided the most technically detailed post of the day, documenting a sprite animation pipeline built through vibe coding. The workflow uses video models to generate animations over solid backgrounds, then post-processes them into sprite sheets via chroma keying and matte extraction. The technical insight about generating assets on both white and black backgrounds to derive clean alpha channels is genuinely useful for anyone working in 2D game art. @minchoi pointed to the next frontier: MCP integrations for Unity, Unreal, and Blender that let Claude talk directly to 3D engines, enabling scene and game construction through prompts alone.
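The white-and-black trick rests on a bit of compositing arithmetic. The sketch below shows the standard difference-matte calculation for a single pixel, which may or may not match what @startracker's pipeline does internally. It assumes the two renders are pixel-aligned and that channels are floats in [0, 1]; the function name is hypothetical.

```python
def recover_rgba(on_white, on_black):
    """Recover (r, g, b, a) from the same pixel rendered over white and over black.

    Inputs are (r, g, b) tuples with channels in [0, 1].
    Compositing gives: over_white = a*F + (1 - a) and over_black = a*F,
    so over_white - over_black = 1 - a on every channel.
    """
    # Average the per-channel difference to damp noise, then clamp to [0, 1].
    diff = sum(w - b for w, b in zip(on_white, on_black)) / 3.0
    alpha = min(1.0, max(0.0, 1.0 - diff))
    if alpha == 0.0:
        return (0.0, 0.0, 0.0, 0.0)  # fully transparent: colour is undefined
    # The over-black render is the premultiplied colour, so divide alpha back out.
    color = tuple(min(1.0, b / alpha) for b in on_black)
    return (*color, alpha)


# A half-transparent orange pixel, F = (1.0, 0.5, 0.0) with a = 0.5:
print(recover_rgba((1.0, 0.75, 0.5), (0.5, 0.25, 0.0)))  # -> (1.0, 0.5, 0.0, 0.5)
```

A single solid background cannot separate colour from transparency, which is why two renders are needed. With video-model output the two passes will rarely line up exactly, so expect to need some alignment or cleanup on top of this.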

AI Moats, World Models, and the Economic Endgame

Three posts tackled the bigger picture of where AI is heading strategically, scientifically, and economically.

@AstasiaMyers highlighted a framework for AI product moats that deserves attention: the strongest defensibility comes from depth and cleanliness of data, complexity and formalization of workflows, number of system integrations, and embedded human-in-the-loop checkpoints. In a world where the models themselves are commoditizing, "agent context" becomes the core product differentiator.

On the research side, @VraserX summarized Demis Hassabis's argument that current AI can solve problems but cannot invent new science, not due to compute or data limitations but because LLMs lack genuine world models. "They don't know why A leads to B. They just predict patterns." Hassabis argues that real scientific discovery requires long-term planning, stronger reasoning, and an internal model of causality. It's a useful corrective to the hype cycle: today's models are powerful pattern matchers, but the gap between pattern matching and genuine understanding remains wide.

@okaythenfuture connected these threads to economics, arguing that by the early 2040s, capital will increasingly lose its need for labor and compound autonomously. The post urged readers to accumulate capital now, before that structural shift arrives. Whether or not you buy the timeline, the underlying dynamic of AI reducing the labor share of economic output is already visible in the posts above: fewer developers doing more work, with agents handling what used to require teams.

Sources

To restate the argument in more obvious terms. The eventual end state of labor under automation has been understood by smart men (ie not shallow libshits) for ≈160 years since Darwin Among the Machines. The timeline to full automation was unclear. Technocrats and some Marxists expected it in the 20th century. The last 14 years in AI (since connectionism won the hardware lottery as evidenced by AlexNet) match models that predict post-labor economy by 2035-2045. Vinge, Legg, Kurzweil, Moravec and others were unclear on details but it's obvious that if you showed them the present snapshot in say 1999, they'd have said «wow, yep, this is the endgame, almost all HARD puzzle pieces are placed». The current technological stack is almost certainly not the final one. That doesn't matter. It will clearly suffice to build everything needed for a rapid transition to the next one – data, software, hardware, and it looks extremely dubious that the final human-made stack will be paradigmatically much more complex than what we've done in these 14 years. Post-labor economy = post-consumer market = permanent underclass for virtually everyone and state-oligarchic power centralization by default. As an aside: «AI takeover» as an alternative scenario is cope for nihilists and red herring for autistic quokkas. Optimizing for compliance will be easier and ultimately more incentivized than optimizing for novel cognitive work. There will be a decidedly simian ruling class, though it may choose to *become* something else. But that's not our business anon. We won't have much business at all. The serious business will be about the technocapital deepening and gradually expanding beyond Earth. 
Frantic attempts to «escape the permanent underclass» in this community are not so much about getting rich as about converting wealth into some equity, a permanent stake in the ballooning posthuman economy, large enough that you'd at least be treading water on dividends, in the best case – large enough that it can sustain a thin, disciplined bloodline in perpetuity. Current datacenter buildup effects and PC hardware prices are suggestive of where it's going. Consumers are getting priced out of everything valuable for industrial production, starting from the top (microchips) and the bottom (raw inputs like copper and electricity). The two shockwaves will be traveling closer to the middle. This is not so much a "supercycle" as a secular trend. American resource frenzy and disregard for diplomacy can be interpreted as a state-level reaction to this understanding. There certainly are other factors, hedges for longer timelines, institutional inertia and disagreement between actors that prevents truly desperate focus on the new paradigm. But the smart people near the levers of power in the US do think in these terms. Speaking purely of the political instinct, I think the quality of US elite is very high, and they're ahead of the curve, thus there are even different American cliques who have coherent positions on the issue. Other global elites, including the Chinese one, are slower on the uptake. But this state of affairs isn't as permanent as the underclass will be. For people who are not BOTH extremely smart and agentic – myself included – I don't have a solution that doesn't sound hopelessly romantic and naive.

used claude code to make a little claude code skill that learns new claude code skills as you use claude code https://t.co/IUpdeFzRtq

This guy just exposed real computer science problem https://t.co/t58NeciOJW

The future of enterprise software

This has been said a thousand times before, but allow me to add my own voice: the era of humans writing code is over. Disturbing for those of us who identify as SWEs, but no less true. That's not to say SWEs don't have work to do, but writing syntax directly is not it.
