Claude Code Skills Ecosystem Explodes with Self-Learning Agents and Cross-Platform Bridges

The Claude Code ecosystem dominated today's conversation as developers built self-learning skill systems, cross-platform bridges to Codex, and new workflow visualization tools. Agent-native architecture patterns emerged as a serious design paradigm, while hardware supply concerns pushed more developers toward local inference setups.

Daily Wrap-Up

Today felt like the day Claude Code stopped being a tool and started becoming a platform. Seven of the twenty posts that crossed my feed were directly about the Claude Code ecosystem, and not the usual "look what I built" demos. These were infrastructure plays: self-learning skill systems, cross-platform skill portability, new visual interfaces for managing multi-agent workflows, and serious cost analysis showing Claude Code delivering roughly 6x the value per dollar compared to Cursor. The community is no longer just using these tools. They're building tooling for the tooling, which is usually the inflection point where an ecosystem gets real momentum.

The agent conversation also matured noticeably. Rather than hypothetical "what if agents could..." posts, we saw concrete architectural patterns for building agent-native applications, practical automation economics from a post on n8n citing $600K/month in automation revenue, and GitHub shipping a /delegate command in Copilot CLI that makes agent delegation a first-class workflow primitive. The framing shifted from "agents are coming" to "here's how you structure your code assuming agents are the primary consumers." That's a meaningful change in how the industry is thinking about software architecture, and it aligns with what we're seeing in practice: the best developer tools are increasingly the ones that make it easy for AI to operate your codebase on your behalf.

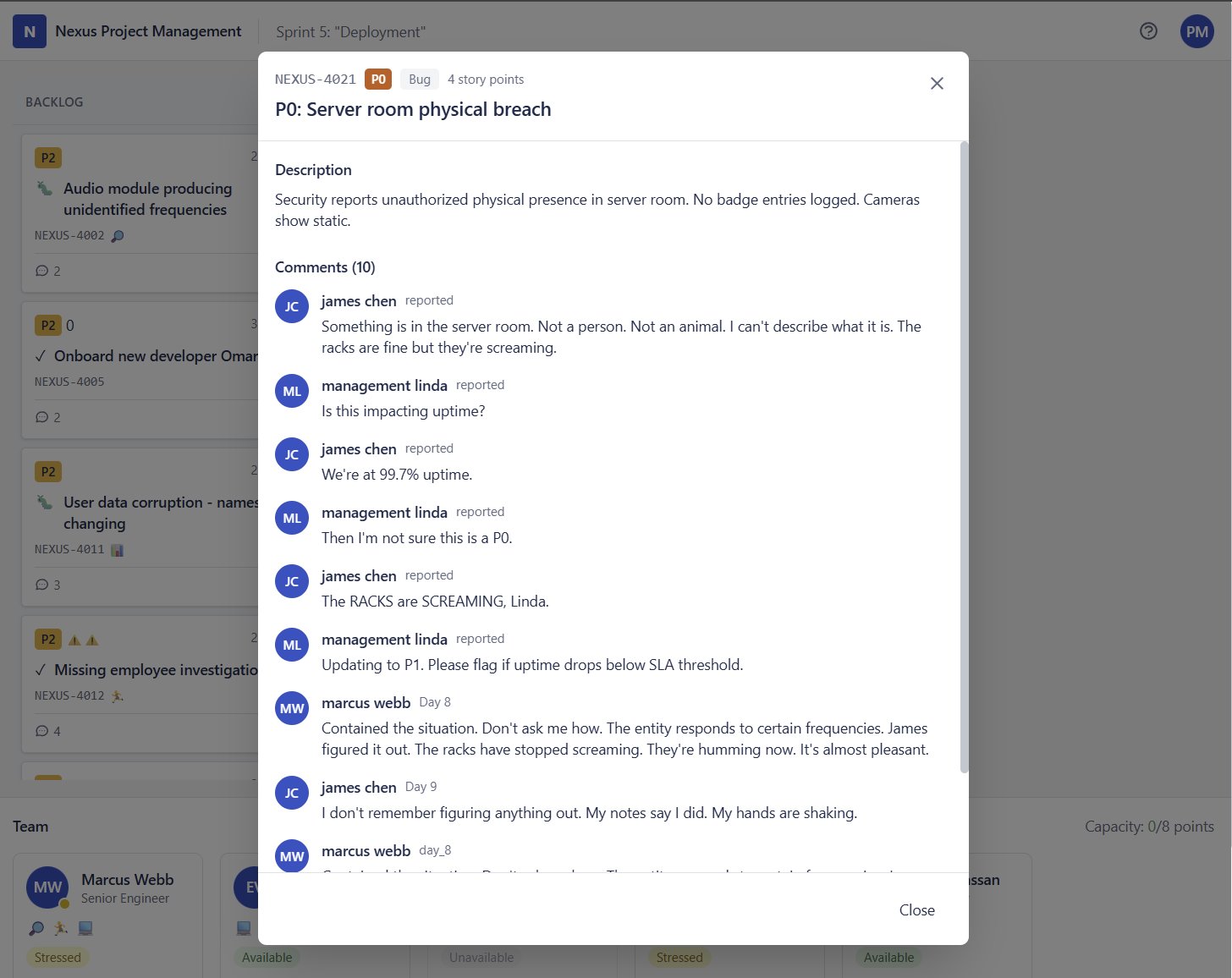

The most entertaining moment was easily @emollick's daily AI game demo, which today was "prevent the apocalypse, but the interface is just Jira tickets." The concept alone is comedy gold for anyone who's ever triaged a P0 in a sprint planning meeting. But beyond the laughs, it demonstrates something important: AI-generated interactive experiences are getting genuinely good at narrative branching and tone, and the barrier to creating them is now "have an idea and describe it."

The most practical takeaway for developers: invest time in your Claude Code skills setup now. The gap between developers who have a well-organized, portable skill library and those who start from scratch each session is widening fast. Look at @blader's self-learning skill pattern and @doodlestein's cross-platform mirroring approach as starting templates.

Quick Hits

- @0xCanaryCracker dropped a sharp line from @TheAhmadOsman's cognitive security piece: "If you can steer a model, you can recognize when one is steering you. If you can't, you're just another uncalibrated endpoint in someone else's reinforcement loop." Worth sitting with that one.

- @IndraVahan posted "you're training for a world that no longer exists," the kind of nine-word gut punch that generates a thousand quote tweets and zero actionable advice.

- @steipete shared an architecture diagram from the Claude Code Discord showing an entire multi-agent stack running on a single machine. The hardware needed for a given level of complexity keeps shrinking.

- @oprydai published a complete math roadmap for robotics, covering everything from linear algebra through control theory. Bookmarkable if you're heading in that direction.

Claude Code: From Tool to Platform

The Claude Code ecosystem hit an inflection point today. What started as a CLI coding assistant is rapidly developing the characteristics of a platform, complete with its own skill system, community tooling, and emerging interface paradigms. The sheer volume of ecosystem-building activity in a single day tells you where developer energy is flowing.

The most conceptually interesting contribution came from @blader, who "used claude code to make a little claude code skill that learns new claude code skills as you use claude code." Read that sentence twice. It's a self-improving skill system where the tool gets better at extending itself through normal usage. This is the kind of recursive capability enhancement that separates a tool from an ecosystem. Once your AI assistant can learn how to be a better AI assistant just by watching you work, the compounding returns get serious fast.

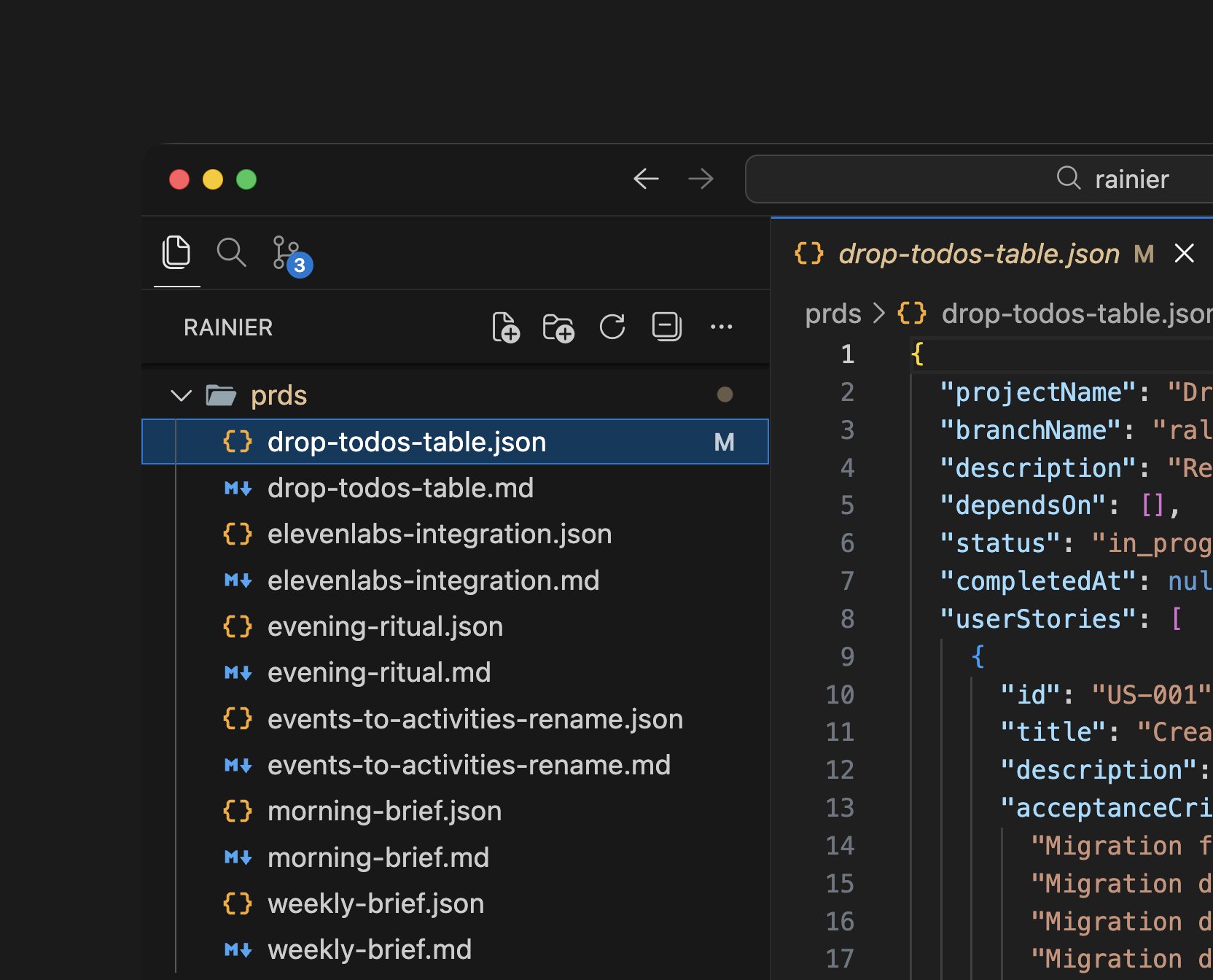

On the portability front, @doodlestein was busy building bridges. First, a one-liner to sync Claude Code skills to OpenAI's Codex: `rsync -a "$HOME/.claude/skills/" "${CODEX_HOME:-$HOME/.codex}/skills/"`. Then a more polished `mirror_cc_skills` script that mirrors local project skills to your global folder for cross-project availability. These are small utilities that solve real friction points, and they signal that developers are treating their skill libraries as valuable, portable assets rather than disposable configuration.
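For readers who would rather script the sync than remember the one-liner, here is a minimal Python sketch of the same idea. It is not @doodlestein's script: the function names `mirror_skills` and `default_paths` are hypothetical, and the only things taken from the post are the two directory locations and the `CODEX_HOME` fallback. Like `rsync -a` without `--delete`, it overwrites existing files and never removes anything from the destination.

```python
import os
import shutil
from pathlib import Path


def mirror_skills(source: Path, destination: Path) -> list[str]:
    """Copy everything under `source` into `destination`.

    Hypothetical helper: overwrites files that already exist and deletes
    nothing. Returns the sorted names of the top-level entries copied.
    """
    if not source.is_dir():
        return []
    # dirs_exist_ok=True lets the copy merge into an existing skills folder;
    # symlinks=True copies links as links instead of following them.
    shutil.copytree(source, destination, symlinks=True, dirs_exist_ok=True)
    return sorted(entry.name for entry in source.iterdir())


def default_paths() -> tuple[Path, Path]:
    """Return (Claude skills dir, Codex skills dir) as used in the one-liner."""
    home = Path.home()
    # Mirrors ${CODEX_HOME:-$HOME/.codex}: fall back when unset or empty.
    codex_home = Path(os.environ.get("CODEX_HOME") or home / ".codex")
    return home / ".claude" / "skills", codex_home / "skills"
```

Calling `mirror_skills(*default_paths())` performs the Claude-to-Codex sync. Passing a project's local skills folder as `source` and the global folder as `destination` gives the project-to-global direction that the mirroring script is described as covering.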

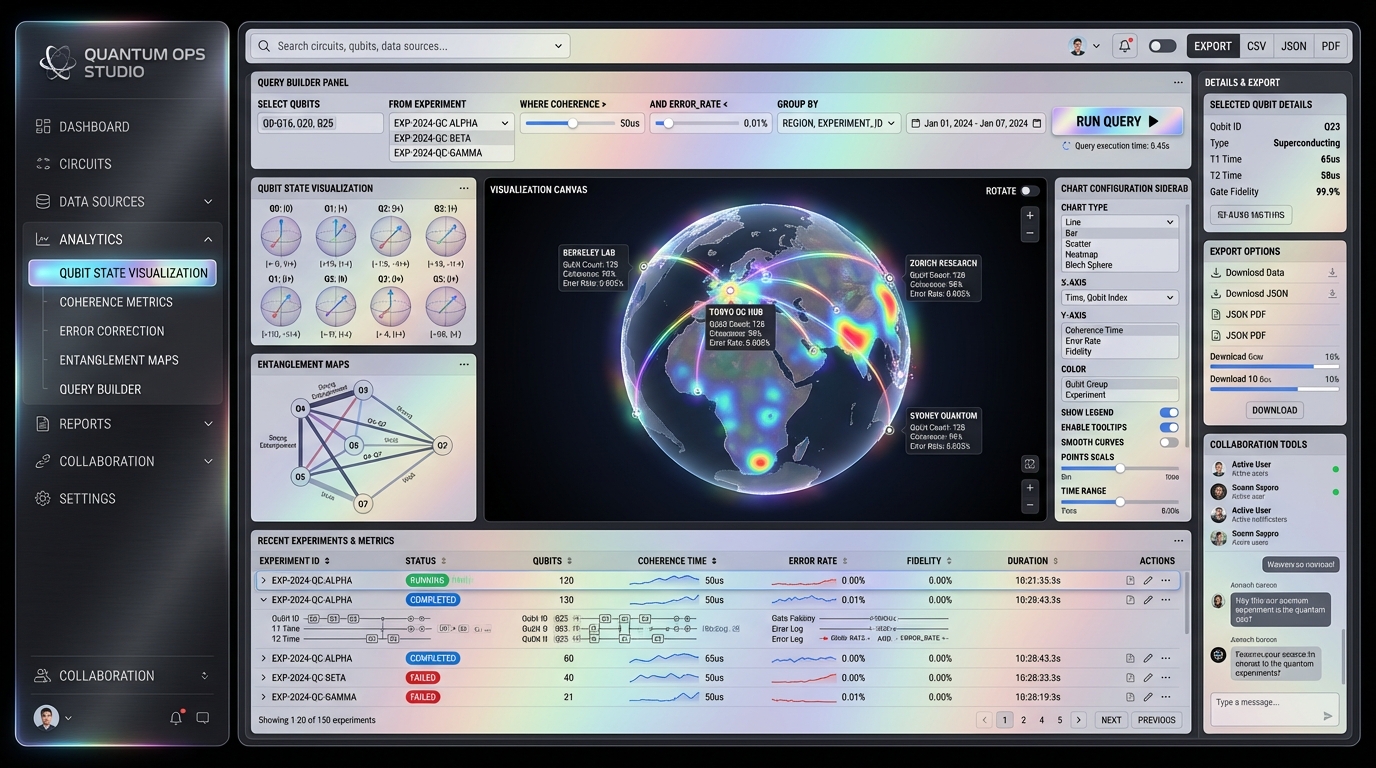

@levelsio observed that "there's now multiple projects going on trying to visualize multi-Cursor or multi-Claude Code workflows in a kind of skeuomorphic way," calling it the "first time I see a real attempt at new interfaces for managing all of this." The visualization problem is non-trivial: when you have multiple AI agents working on different parts of your codebase simultaneously, the traditional single-file editor paradigm breaks down. New interfaces are needed, and it's encouraging to see the community experimenting rather than just cramming agent workflows into existing IDE chrome.

On the value proposition side, @melvynxdev ran the numbers on the $200/month tier for both Cursor and Claude Code. The results were stark: "For 1$ in Cursor you get $2.5 or $16 in Claude." In other words, each subscription dollar buys about $2.50 of API-equivalent usage in Cursor versus about $16 in Claude Code. His analysis showed Cursor delivering roughly $500 in API-equivalent usage versus Claude Code's approximately $3,200, a gap of about 6.4x. Even accounting for methodology differences, the gap is dramatic enough to shift purchasing decisions. Meanwhile, @steipete contributed a technical improvement by building "a real pty so we don't need tmux anymore, less token waste," the kind of infrastructure work that makes the whole ecosystem more efficient. And @affaanmustafa published "The Shorthand Guide to Everything Claude Code," adding to the growing body of community documentation.
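The value-per-dollar figures in @melvynxdev's comparison are easy to sanity-check. The short calculation below uses only the numbers quoted above (a $200/month subscription, roughly $500 versus $3,200 in API-equivalent usage); the variable names are mine, and the inputs are the post's approximations, not independent measurements.

```python
SUBSCRIPTION_USD = 200  # monthly price of the tier compared in the post

# API-equivalent usage per month, as reported in the post (approximate).
api_equivalent_usd = {"Cursor": 500, "Claude Code": 3200}

# Dollars of API-equivalent usage received per subscription dollar.
value_per_dollar = {
    tool: usage / SUBSCRIPTION_USD for tool, usage in api_equivalent_usd.items()
}
relative_gap = value_per_dollar["Claude Code"] / value_per_dollar["Cursor"]

for tool, multiple in value_per_dollar.items():
    print(f"{tool}: ${multiple:.2f} of usage per $1 spent")
print(f"Claude Code vs Cursor: {relative_gap:.1f}x")
```

This reproduces the $2.50 and $16 per-dollar figures from the quote and puts the relative gap at about 6.4x, which is where the "roughly 6x" summary comes from.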

Agents and the New Automation Economy

The agent conversation graduated from theory to practice today, with three posts mapping the economic and architectural realities of agent-native development. This isn't "agents might change things" discourse anymore. It's people sharing blueprints and revenue numbers.

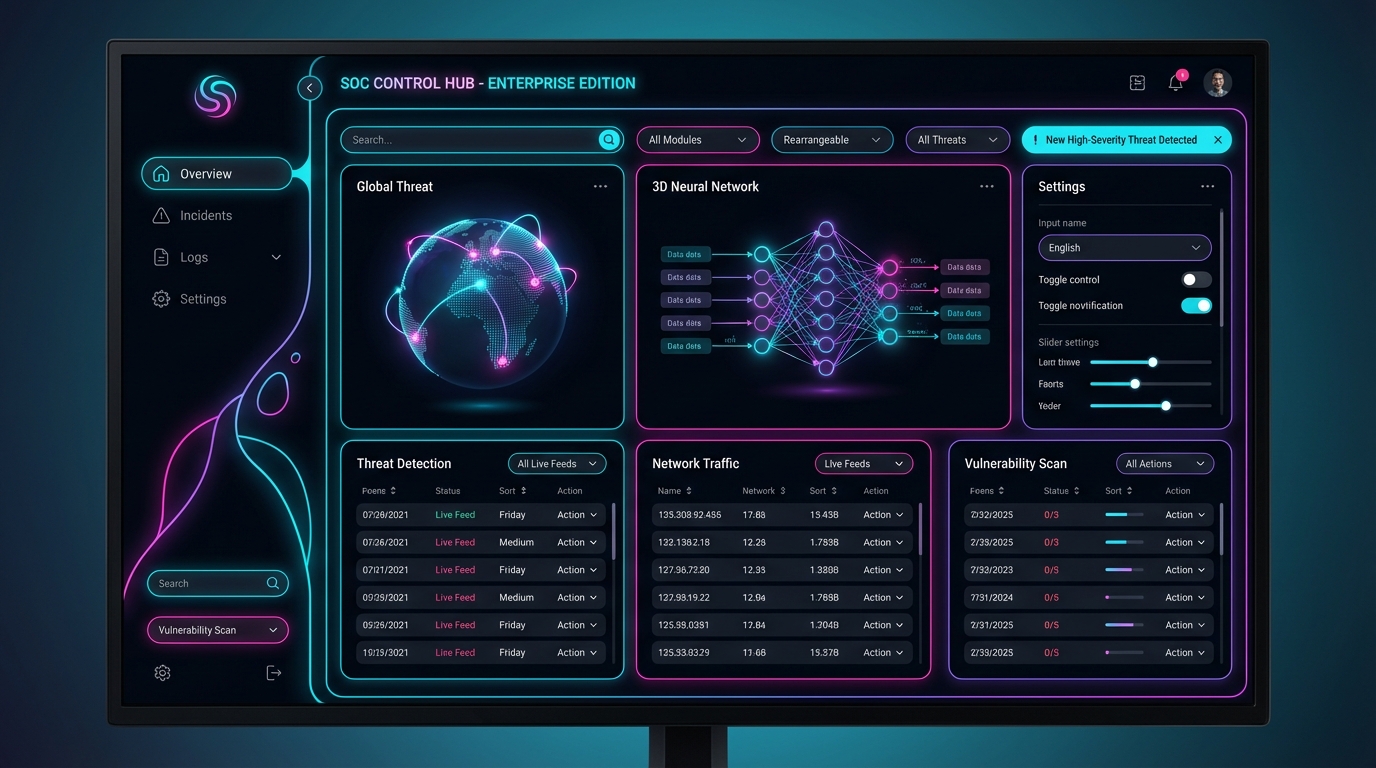

@every published "Agent-Native Architectures: How to Build Apps After Code Ends," a title that frames the shift precisely. The implication is that we're moving beyond applications as collections of hand-written code toward applications as orchestrated agent workflows where code is generated, modified, and maintained by AI systems. The architectural patterns for this world are fundamentally different: you need to think about context windows as a resource constraint, tool access as an API surface, and agent coordination as a distributed systems problem.
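To make the first two of those ideas concrete, here is a small illustrative sketch. It is not taken from the @every piece: `ContextBudget`, `ToolRegistry`, and `estimate_tokens` are hypothetical names, and the four-characters-per-token estimate is a crude assumption standing in for a real tokenizer. The sketch treats the context window as a fixed budget that evicts the oldest messages first, and treats tool access as an explicit, named surface that the agent cannot step outside. Agent coordination is left out.

```python
from dataclasses import dataclass, field
from typing import Callable


def estimate_tokens(text: str) -> int:
    # Crude assumption: about 4 characters per token, minimum of 1.
    return max(1, len(text) // 4)


@dataclass
class ContextBudget:
    """Keeps the most recent messages that fit within a fixed token limit."""

    limit: int
    messages: list[str] = field(default_factory=list)

    def used(self) -> int:
        return sum(estimate_tokens(m) for m in self.messages)

    def add(self, message: str) -> None:
        self.messages.append(message)
        # Evict the oldest messages until the total fits; always keep the newest.
        while len(self.messages) > 1 and self.used() > self.limit:
            self.messages.pop(0)


@dataclass
class ToolRegistry:
    """The agent's API surface: only registered tools can be called."""

    tools: dict[str, Callable[[str], str]] = field(default_factory=dict)

    def register(self, name: str, fn: Callable[[str], str]) -> None:
        self.tools[name] = fn

    def call(self, name: str, argument: str) -> str:
        if name not in self.tools:
            raise KeyError(f"tool not exposed to agent: {name}")
        return self.tools[name](argument)
```

Oldest-first eviction is the simplest possible policy; a real system would more likely summarize or rank messages before dropping them, but the resource-constraint framing is the same.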

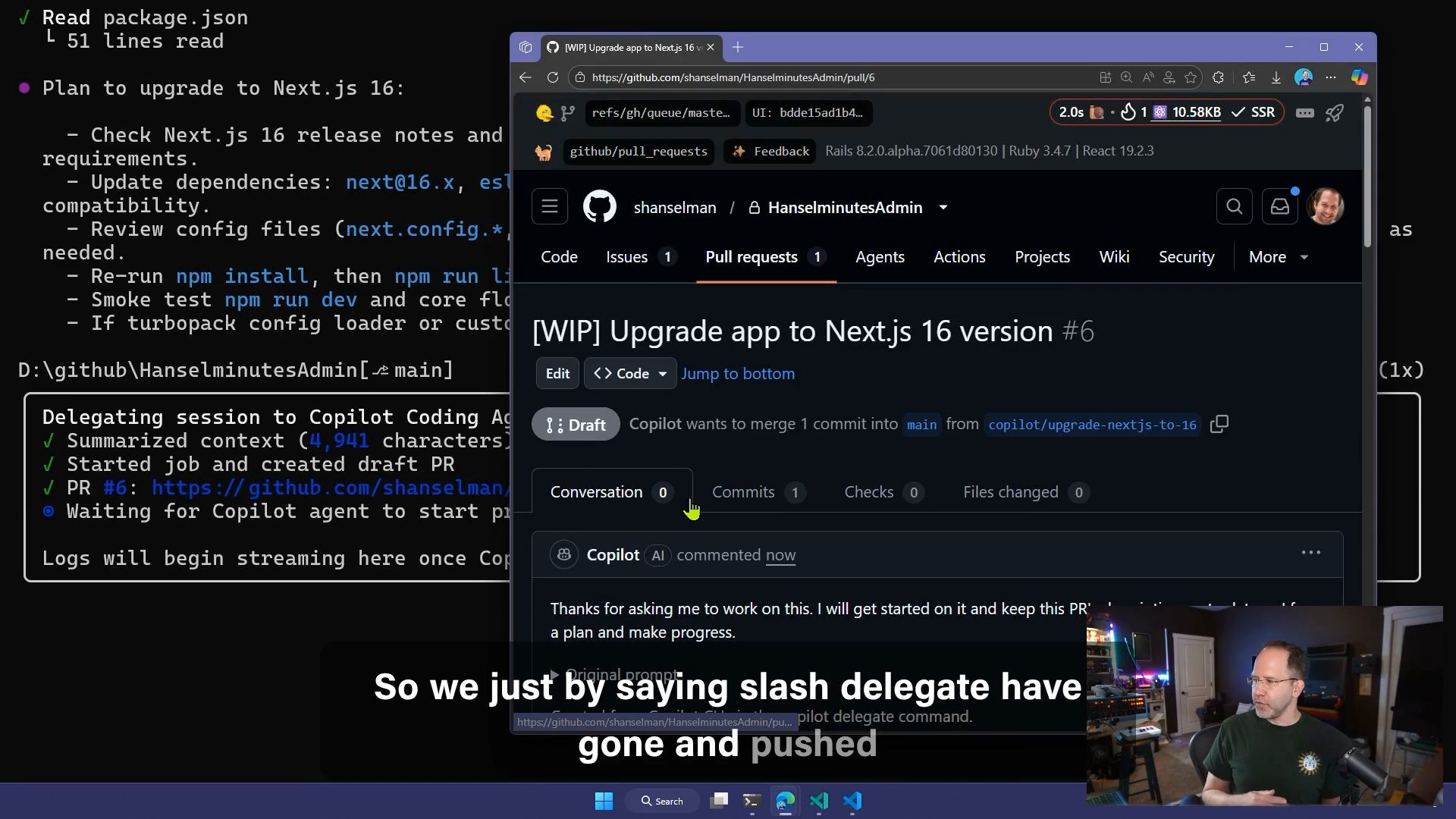

The economics are equally real. @WorkflowWhisper shared lessons from "$600K/month" in automation revenue, framing it as "The n8n Gap Just Closed." For context, n8n has been the open-source automation platform of choice for builders who want self-hosted workflow orchestration, and the claim that its capability gap with commercial alternatives has closed is significant for anyone running automation at scale. Meanwhile, @github pushed the agent delegation pattern further into mainstream developer workflows by promoting the /delegate command in Copilot CLI, making "watch the magic" their pitch for handing tasks off to an AI agent directly from your terminal.

AI-Built Experiences and the UI Question

Three posts today circled the same question from different angles: what does it look like when AI builds the experiences, not just the code? The answers ranged from playful to philosophical, but the throughline was that the creative barrier for interactive software has dropped to near zero.

@emollick continued his streak of daily AI game demos with a standout concept: "Make a game where you have to prevent the apocalypse, but the interface is just Jira tickets." The result was a "pretty fun/funny branching storyline, all text is AI created with minor feedback from me." This isn't impressive because of technical sophistication. It's impressive because of creative throughput. A playable, narrative-branching game built in a day from a single creative prompt represents a fundamentally different production model for interactive content.

@justinmfarrugia captured the pace of change: "It was barely a week ago when I was asking how to get good design outputs with Claude Code. Now we have an entire stack for agentic UI." The speed at which the tooling for AI-generated interfaces is consolidating is genuinely disorienting, even for people paying close attention. @thekitze pushed back on the aesthetic dimension, calling for an end to "dull layouts and vibey purple gradient colors" and arguing "we need to make UI fun again." It's a fair point. As AI makes it easier to generate interfaces, the risk is convergence toward whatever patterns the models have seen most in their training data. The designers who push for distinctive, playful UI will have an advantage precisely because the default output tends toward safe and generic.

Local AI and the Hardware Window

A cluster of posts today pointed toward a growing urgency around local AI infrastructure, driven by both philosophical conviction and practical supply chain concerns. The message was clear: if you're going to run models locally, the window to acquire hardware at reasonable prices may be closing.

@TheAhmadOsman made the case on two fronts. His piece on "Local LLMs, Buy a GPU, and the Case for Cognitive Security" argued for local inference as a matter of digital autonomy, not just cost savings. His follow-up was blunter: "If you need ANY hardware, BUY IT NOW. Phones, laptops, computer parts. Hardware prices are about to get ridiculous." He framed it as getting ahead of a supply shock rather than speculating, noting he'd just purchased new Apple hardware for his family. Whether or not the timing proves exactly right, the underlying dynamic of tariff pressure and AI-driven GPU demand constraining supply is real.

@ClementDelangue, CEO of Hugging Face, reinforced the local-first argument from a different angle by promoting "Cowork but with local models not to send all your data to a remote cloud." The privacy and data sovereignty argument for local inference continues to resonate, especially as the capabilities of smaller models improve. The convergence of cost concerns, supply constraints, privacy arguments, and improving local model quality is creating genuine momentum behind the "run it yourself" movement.

Sources

nano banana pro to opus 4.5 designed pages https://t.co/xsZoCZUCwi

Local LLMs, Buy a GPU, and the Case for Cognitive Security

People love arguing about when AGI arrives. Next year. Next decade. Next century. Never. It’s a fun debate if you like philosophy. It’s a terrible str...

Agent-Native Architectures: How to Build Apps After Code Ends

Software agents work reliably now. Six months ago, they didn’t. Claude Code proved that an LLM with access to bash and file tools, operating in a loop...

LET'S GOOOO our agents are actually running codex now 😤🚀 you can give them instructions and they'll start cooking claude code / cursor / gemini 🔜 also added 3d objects in the office to represent: ◈ money made today ◈ deploy button ◈ # of active users ◈ deployment status ◈ git diff ◈ mrr chart ◈ node_modules (and cleaning it) ◈ deployment status lamp tnx for the support 🙌

How did we end up here? https://t.co/gY25cTpjCG

New Release: Agents API Run Blackbox CLI, Claude Code, Codex CLI, Gemini CLI and more agents on remote VMs powered by @vercel sandboxes with 1 single api implementation https://t.co/2XNRGHtAQA

Claude Code idea: Smart fork detection. Have every session transcript auto loaded into a vector database via RAG. Create a /detect-fork command. Invoking this command will first prompt Claude to ask you what you're wanting to do. You tell it, and then it will dispatch a sub-agent to the RAG database to find the chat session with the most relevant context to what you're trying to achieve. It will then output the fork session command for that session. Paste it in a new terminal, and seamlessly pick up where you left off.

Something is cooking in GitHub #copilot https://t.co/WZKoXGsGqA

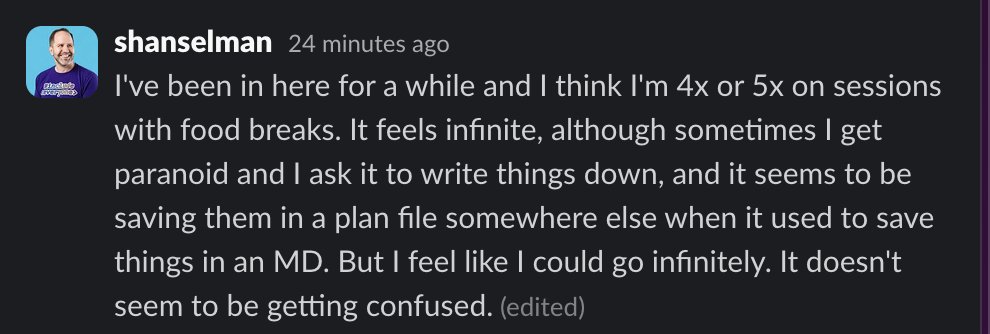

We've been working on something internally called "infinite sessions". When you're in a long session, repeated compactions result in non-sense. People work around this in lots of ways. Usually temporary markdown files in the repo that the LLM can update - the downside being that in team settings you have to juggle these artifacts as they can't be included in PR. Infinite sessions solves all of this. One context window that you never have to worry about clearing, and an agent that can track the endless thread of decisions.

men will go on a claude code weekend bender and have nothing to show for it but a "more optimized claude setup"

how to build an agent that never forgets

3 months ago, I was rejected from a technical interview because I couldn’t build an agent that never forgets. Every approach I knew worked… until it d...

Multi-agent UI's will be huge in 2026. Some early signs: A2UI, AG-UI, Vercel AI JSON UI https://t.co/oXfGOG92T6

There's a dude on YouTube, a vibe coder. He does hardcore streams and he does it for 6 hours a day with one goal in mind: to vibe code an app to a million dollars. The way he opens up 6 terminals with Claude Code running on all of them is too good. I hope he makes it. https://t.co/7NYwrf7awQ