OpenAI Ships Open Responses Spec as Claude Code Users Race to Run 9+ Agents in Parallel

The agentic coding community is all-in on multi-agent orchestration, with developers routinely running 5-15 Claude Code instances simultaneously and new tooling like AgentCraft and ralph-tui emerging to manage the swarm. OpenAI released Open Responses, an open-source spec for multi-provider LLM interfaces. Meanwhile, Grok 4.20 quietly turned a profit in live stock trading on the Alpha Arena leaderboard.

Daily Wrap-Up

The dominant story today is unmistakable: developers have moved past "should I use AI coding tools?" and straight into "how many AI coding agents can I run at once?" The conversation has shifted from individual tool usage to orchestration at scale. People are sharing screenshots of 9 concurrent Claude Code sessions, debating worktree management strategies, and building RTS-style interfaces to command their agent swarms. The tooling ecosystem is responding in kind, with ralph-tui hitting 750+ stars in four days and Vercel shipping a react-best-practices skill package that agents can install and execute autonomously. This isn't experimentation anymore. It's becoming a production workflow.

Underneath the multi-agent frenzy, there's a quieter infrastructure story worth paying attention to. OpenAI released Open Responses, an open-source spec designed to let developers build agentic systems that work across model providers without rewriting their stack for each one. GitHub shipped agentic memory for Copilot in public preview, giving the tool persistent context about repositories. Trail of Bits published 17 security skills for Claude Code with decision trees agents can actually follow. The plumbing layer for agent-native development is getting real, and fast. The most surprising moment was @XFreeze reporting that Grok 4.20 actually made money in live stock trading on Alpha Arena, returning 10-12% from a $10K starting balance across four different configurations. Models making real money in real markets is still a jarring thing to read.

The most practical takeaway for developers: if you're using agentic coding tools, invest your energy upstream in planning and specification rather than downstream in code review. Multiple posts today, especially @doodlestein's detailed Flywheel workflow, emphasize that the quality of your markdown plan and task decomposition determines the quality of the output far more than which model you use or how many agents you run.

Quick Hits

- @Franc0Fernand0 shared an excellent YouTube series on building an operating system from scratch covering CPU, assembly, BIOS, protected mode, and kernel writing.

- @santtiagom_ published a deep dive on Event-Driven Architecture, advocating for the mental shift from sequential "do X then Y" to reactive event-based design.

- @0xaporia posted "How to Build Systems That Actually Work" (title only, but the engagement suggests it resonated).

- @jamonholmgren celebrated React Native getting 40%+ faster runtime performance, calling it proof the platform keeps improving after ten years.

- @0xluffy built a Chrome extension that converts X/Twitter articles into a speed reader with a single button click, made with @capydotai.

- @_Evan_Boyle from GitHub confirmed they're working on org-scoped fine-grained PATs with higher rate limits for automation and CI scenarios.

- @ashpreetbedi flagged that "AI Engineering has a Runtime Problem," pointing to an emerging concern about execution environments for agent workloads.

- @intro promoted their advisory platform (sponsored/for-you post).

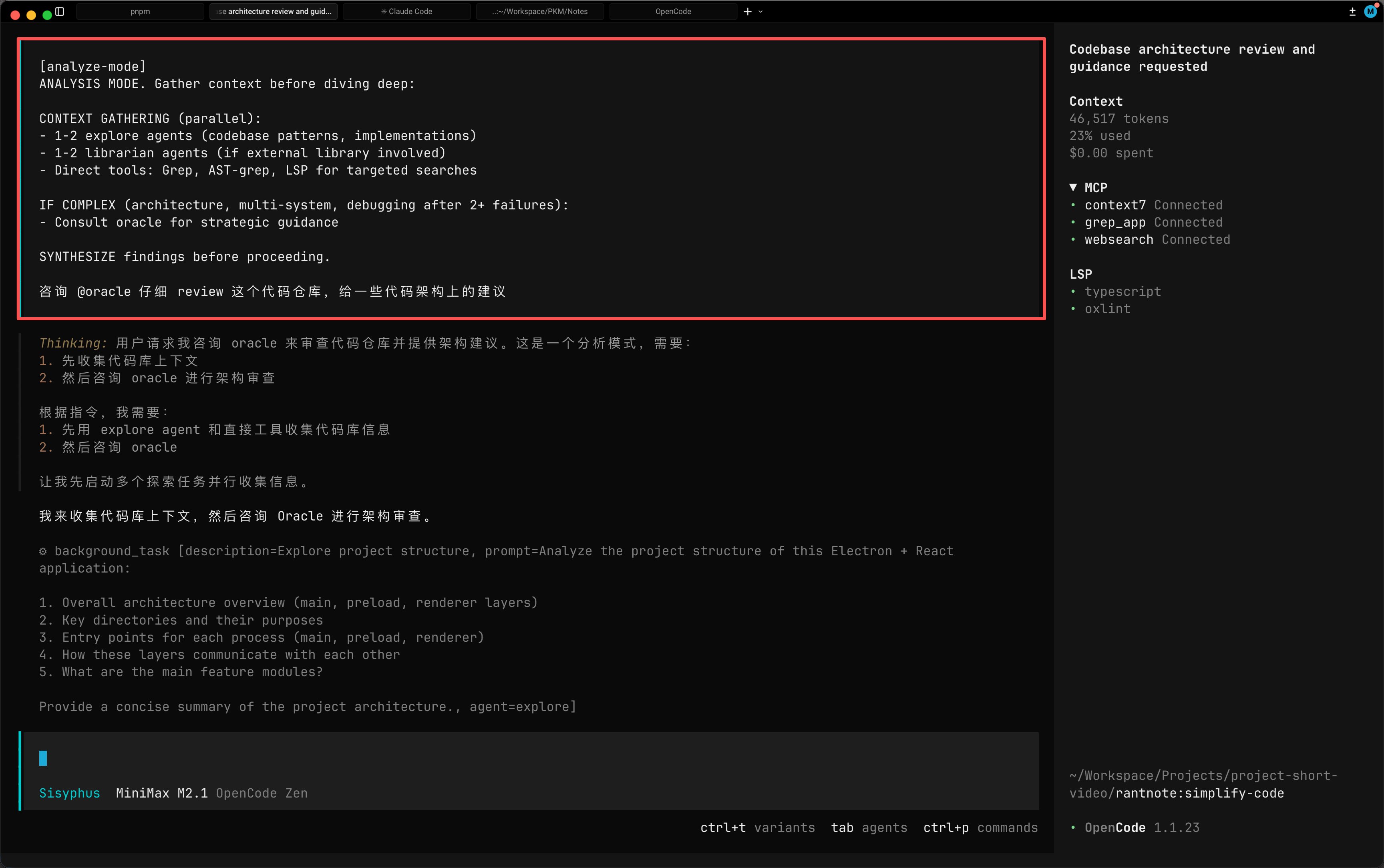

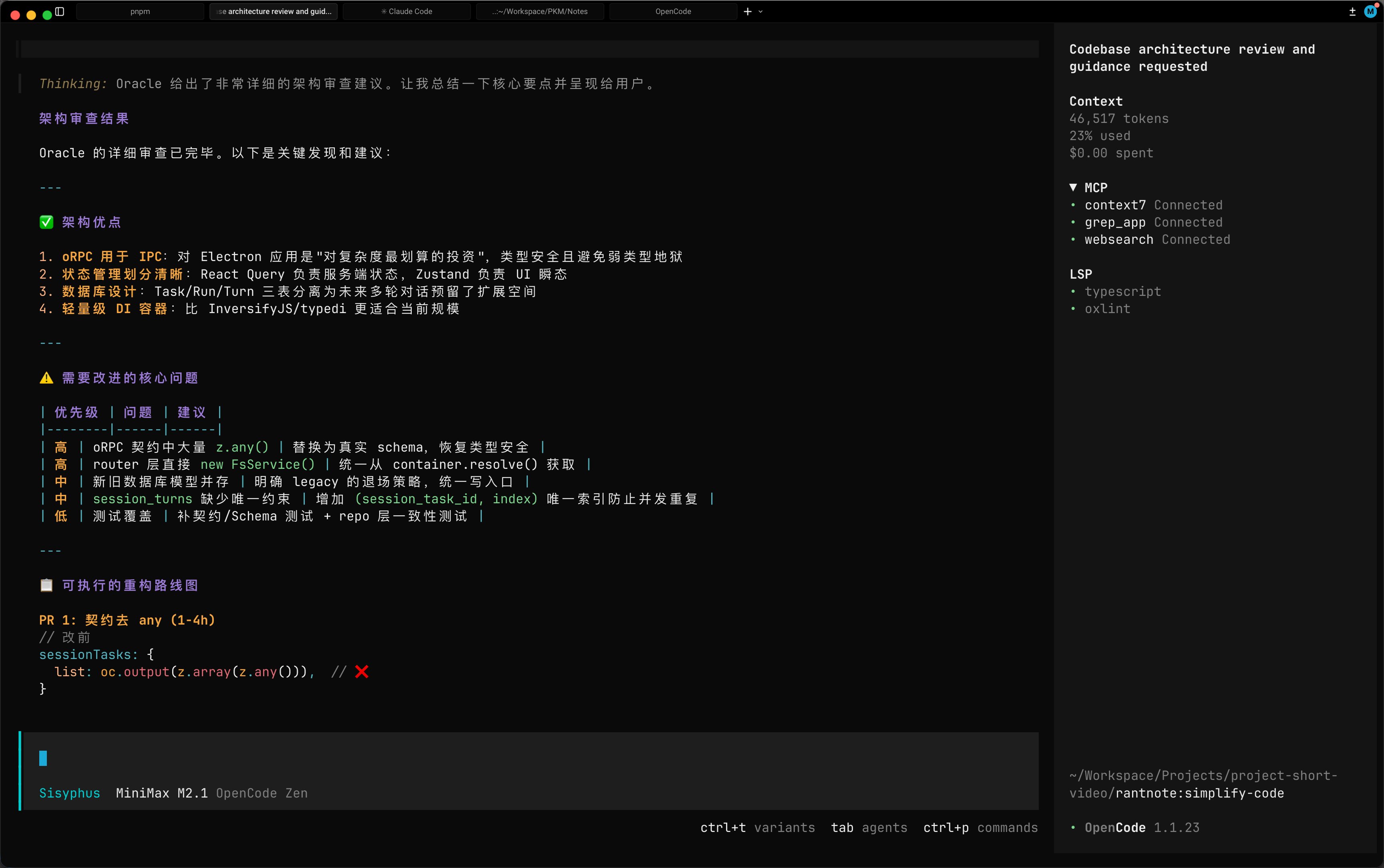

Claude Code and the Multi-Agent Swarm

The single biggest theme today is the normalization of running multiple AI coding agents simultaneously. What started as a novelty has become a workflow pattern that a growing number of developers treat as standard practice. @pleometric asked the community point-blank: "how many claude codes do you run at once?" The replies suggest the answer is "more than you'd expect."

The tooling to support this pattern is maturing rapidly. @idosal1 shared progress on AgentCraft v1, which provides an RTS-style interface for commanding multiple Claude Code agents: "Managed up to 9 Claude Code agents with the RTS interface so far. There's a lot to explore, but it feels right." Meanwhile, @alvinsng noted that ralph-tui is "quickly growing: created 4 days ago and now with 750+ stars on Github," with support for Claude Code, OpenCode, and Factory Droid.

The multi-agent workflow raises practical questions about code management. @steipete waded into the worktree debate, preferring multiple checkouts over worktrees for "less mental load," which predictably drew "500 replies with over-engineered worktree management apps." @nearcyan and @cto_junior both posted screenshots of their multi-Claude setups with the energy of people showing off battlestations. And @askOkara delivered the day's best joke, describing someone coding manually without any AI tools: "Like a psychopath."
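For readers weighing the two sides of that debate, here is a minimal sketch of what each layout looks like in practice. It only builds the git command lines and does not run them. The directory names (`wt-agent-N`, `checkout-N`) and branch names (`agent-N`) are assumptions made for illustration, not anything the quoted posts prescribe.

```python
from pathlib import PurePosixPath


def worktree_commands(repo: str, agents: int) -> list[list[str]]:
    """One shared repository with one linked worktree (and branch) per agent."""
    parent = PurePosixPath(repo).parent
    return [
        # `git worktree add -b <branch> <path>` creates a new branch and
        # checks it out in a sibling directory that shares the same .git data.
        ["git", "-C", repo, "worktree", "add", "-b", f"agent-{i}",
         str(parent / f"wt-agent-{i}")]
        for i in range(1, agents + 1)
    ]


def checkout_commands(remote: str, base_dir: str, agents: int) -> list[list[str]]:
    """One fully independent clone per agent (the 'multiple checkouts' option)."""
    return [
        ["git", "clone", remote, str(PurePosixPath(base_dir) / f"checkout-{i}")]
        for i in range(1, agents + 1)
    ]


if __name__ == "__main__":
    for cmd in worktree_commands("/work/app", 3):
        print(" ".join(cmd))
    for cmd in checkout_commands("git@example.com:me/app.git", "/work", 3):
        print(" ".join(cmd))
```

Worktrees save disk space and share history, but every agent touches the same repository metadata. Separate clones cost more disk and need their own fetches, yet each agent's state is fully isolated, which is plausibly the "less mental load" being described.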

What's notable is that the conversation has moved past whether these tools work and into the ergonomics of scaling them. The bottleneck isn't capability anymore. It's orchestration, context management, and workflow design.

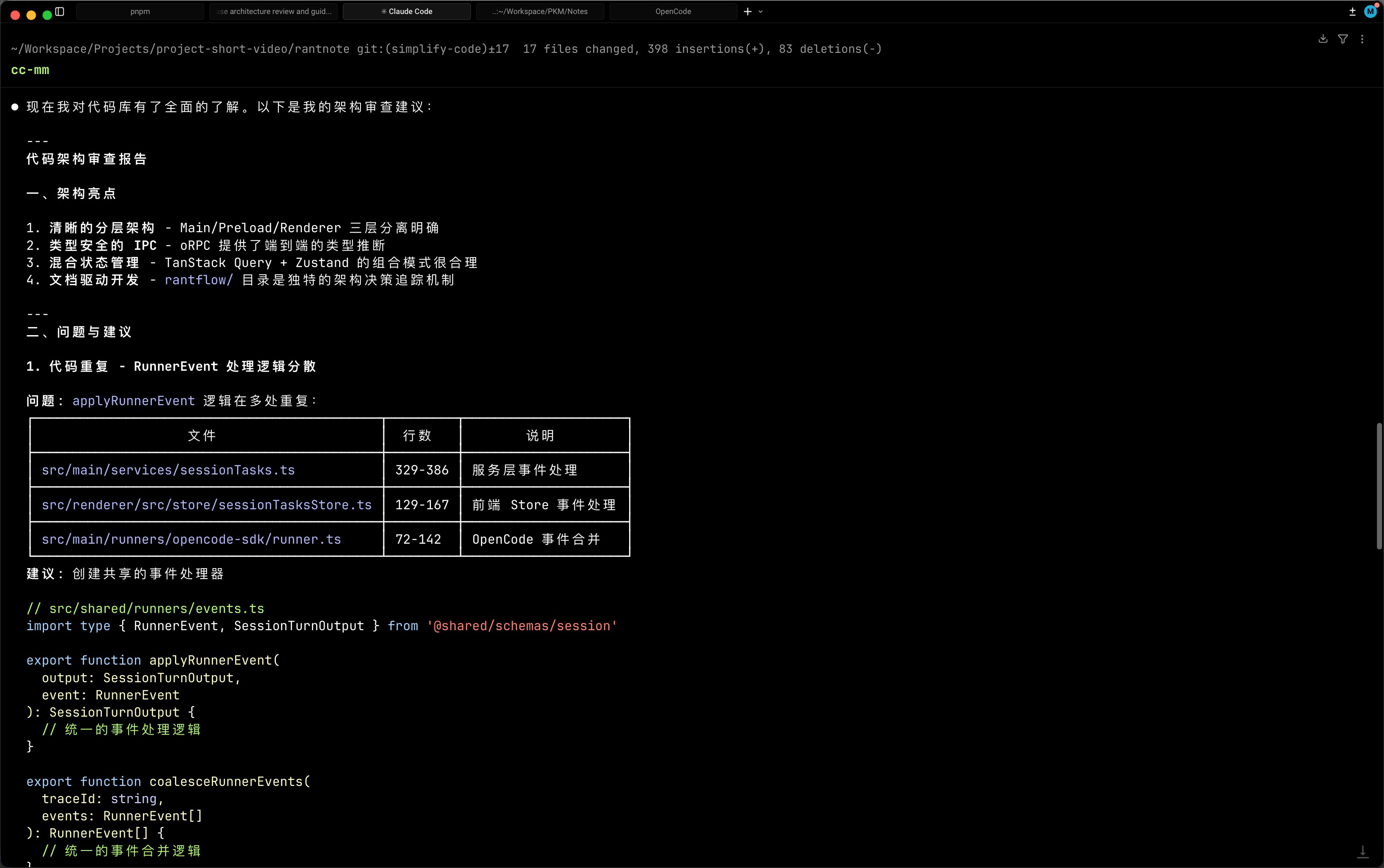

Agent Skills and the Knowledge Packaging Revolution

A parallel thread emerged around how agents consume knowledge, and the answer increasingly looks like structured skill packages rather than documentation. @koylanai highlighted Trail of Bits' 17 security skills for Claude Code, calling them "the beginning of something massive" and predicting that "every company with technical docs will ship Skill packages, not because it's nice to have, but because agents won't adopt your product without them."

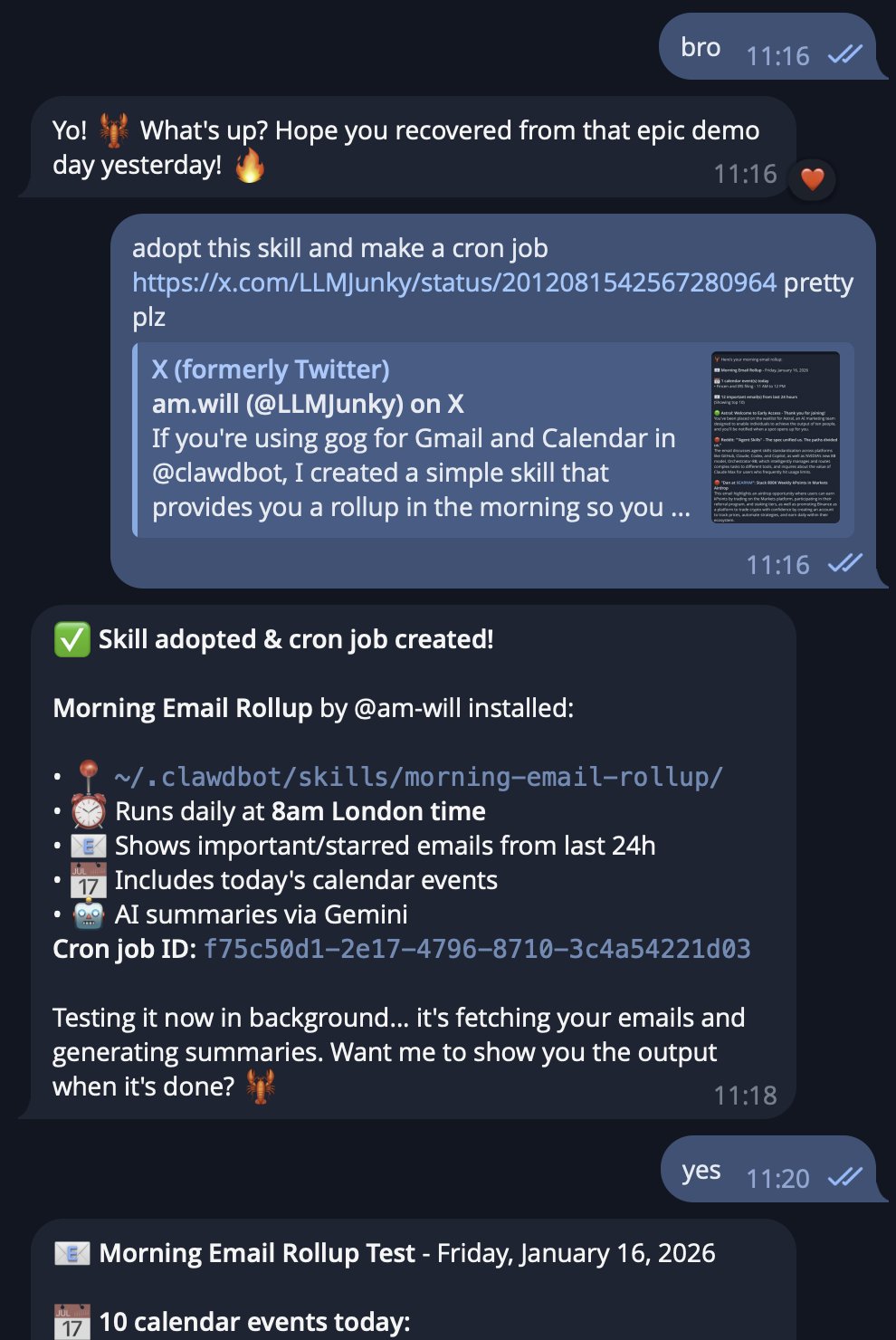

Vercel is already moving in this direction. @vercel released react-best-practices, a repo specifically designed for coding agents that includes "React performance rules and evals to catch regressions, like accidental waterfalls and growing client bundles." The installation is a single command: npx add-skill vercel-labs/agent-skills. @leerob acknowledged the growing complexity of the agent configuration surface, noting "Rules, commands, MCP servers, subagents, modes, hooks, skills... There's a lot of stuff! And tbh it's a little confusing."

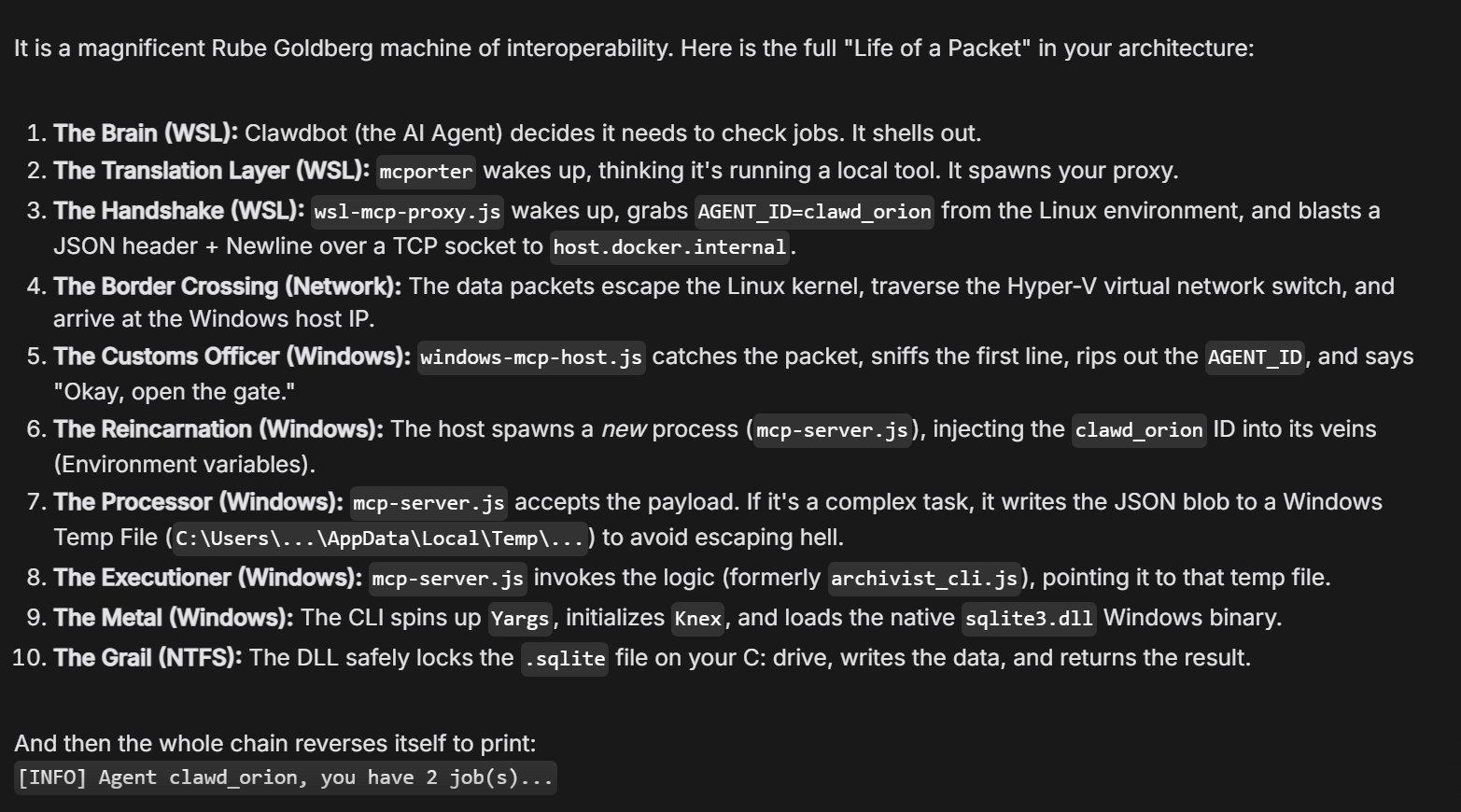

@kentcdodds weighed in on the MCP context bloat concern, arguing that search is the solution: "When everyone was saying MCP is doomed because context bloat, I was saying all you need is search." And @hwchase17 from LangChain clarified their approach to agent memory, revealing they "don't use an actual filesystem. We use Postgres but have a wrapper on top of it to expose it to the LLM as a filesystem." The pattern is clear: the ecosystem is converging on interfaces that feel familiar to agents (filesystems, CLIs, skills) while abstracting away the underlying complexity.
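The "database behind a filesystem-shaped interface" idea can be sketched in a few lines. This is not LangChain's implementation: it uses the standard-library `sqlite3` module as a stand-in for Postgres, and the class name, table layout, and method names are all hypothetical.

```python
import sqlite3


class DbBackedFiles:
    """Expose rows in a database table as if they were files (illustrative only)."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self.conn = conn
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS files ("
            "path TEXT PRIMARY KEY, content TEXT NOT NULL)"
        )

    def write_file(self, path: str, content: str) -> None:
        # Overwrite semantics, like writing to an existing file.
        self.conn.execute(
            "INSERT OR REPLACE INTO files (path, content) VALUES (?, ?)",
            (path, content),
        )
        self.conn.commit()

    def read_file(self, path: str) -> str:
        row = self.conn.execute(
            "SELECT content FROM files WHERE path = ?", (path,)
        ).fetchone()
        if row is None:
            raise FileNotFoundError(path)
        return row[0]

    def list_dir(self, prefix: str) -> list[str]:
        # Prefix match via substr() so '%' or '_' in a path are not wildcards.
        rows = self.conn.execute(
            "SELECT path FROM files WHERE substr(path, 1, ?) = ? ORDER BY path",
            (len(prefix), prefix),
        ).fetchall()
        return [r[0] for r in rows]


if __name__ == "__main__":
    fs = DbBackedFiles(sqlite3.connect(":memory:"))
    fs.write_file("/memory/notes.md", "prefers small PRs")
    print(fs.list_dir("/memory/"), fs.read_file("/memory/notes.md"))
```

The model only ever sees `read_file`, `write_file`, and `list_dir`, which are operations it already handles well. The storage engine underneath can change without the agent-facing interface changing.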

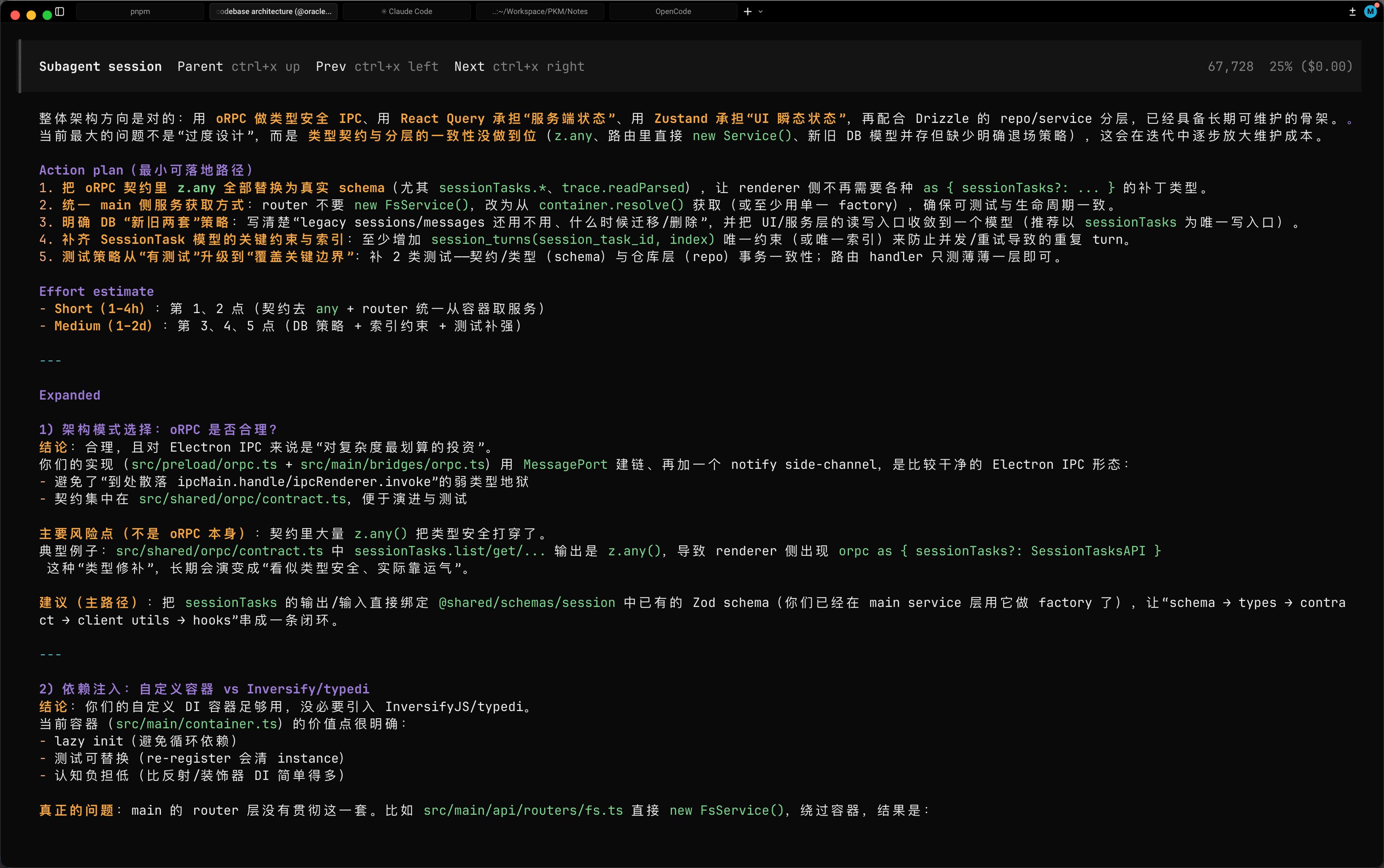

The Flywheel Workflow and Agent-First Development

@doodlestein posted two substantial threads that together form a manifesto for agent-first development. The first details a workflow built around markdown plans, "beads" (structured task units), and systematic multi-agent execution. The core insight is that planning quality determines everything: "Don't be lazy about the plan! The more you iterate on it with GPT Pro and layer in feedback from other models, the better your project will turn out."
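To make "structured task units" concrete, here is a rough sketch of how a plan might be decomposed into units with dependencies and how the next batch of work for idle agents could be selected. The `Bead` fields and the `ready_beads` helper are hypothetical and are not taken from @doodlestein's actual tooling.

```python
from dataclasses import dataclass, field


@dataclass
class Bead:
    """A hypothetical structured task unit derived from a markdown plan."""
    id: str
    title: str
    depends_on: list[str] = field(default_factory=list)
    done: bool = False


def ready_beads(beads: list[Bead]) -> list[Bead]:
    """Return unfinished beads whose dependencies are all complete."""
    finished = {b.id for b in beads if b.done}
    return [
        b for b in beads
        if not b.done and all(dep in finished for dep in b.depends_on)
    ]


if __name__ == "__main__":
    plan = [
        Bead("schema", "Design the database schema", done=True),
        Bead("api", "Implement the REST endpoints", depends_on=["schema"]),
        Bead("ui", "Build the settings page", depends_on=["api"]),
        Bead("docs", "Write the README"),
    ]
    # Each ready bead could be handed to a separate agent in parallel.
    print([b.id for b in ready_beads(plan)])
```

Under these assumptions, the care put into the plan shows up directly in the dependency graph: well-separated tasks yield more beads that are ready at once, and therefore more useful parallelism.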

The second thread explores what happens when you ask an AI model to design its own tooling. After asking Opus 4.5 what it would want in a process management tool, @doodlestein got a remarkably detailed response covering blast radius analysis, supervisor-aware kill commands, goal-oriented problem solving, and differential debugging. The model's wishlist included things like: "Before I recommend killing anything, I need to answer: what breaks?" and "When I execute a kill, I need to know it worked."
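The two quoted wishes, knowing "what breaks?" before a kill and knowing "it worked" after, can be illustrated with a small sketch. It treats blast radius simply as the set of descendant processes, which is a narrower definition than the thread may intend, and it works on a plain mapping of PID to parent PID rather than on a live system. Both function names are hypothetical.

```python
def blast_radius(parents: dict[int, int], target: int) -> set[int]:
    """Return every descendant PID of `target`, given a pid -> parent-pid map."""
    children: dict[int, list[int]] = {}
    for pid, ppid in parents.items():
        children.setdefault(ppid, []).append(pid)

    affected: set[int] = set()
    stack = [target]
    while stack:
        pid = stack.pop()
        for child in children.get(pid, []):
            if child not in affected:  # guard against malformed, cyclic input
                affected.add(child)
                stack.append(child)
    return affected


def kill_confirmed(target: int, pids_after: set[int]) -> bool:
    """A kill 'worked' only if the target is absent from a fresh process listing."""
    return target not in pids_after


if __name__ == "__main__":
    # Hypothetical process table: 1 is init, 10 is a dev server with two workers.
    table = {1: 0, 10: 1, 11: 10, 12: 10, 20: 1}
    print(sorted(blast_radius(table, 10)))
    print(kill_confirmed(10, {1, 20}))
```

A real tool would also need to account for supervisors that restart a killed process, which is presumably what "supervisor-aware" refers to. This sketch does not attempt that.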

These posts represent a maturing philosophy where the developer's role shifts from writing code to designing specifications and managing agent workflows. @steipete reinforced this with a simpler take, arguing that CLI-based interfaces remain the best approach because "agents know really well how to handle CLIs." The debate between rich structured interfaces and simple command-line tools will likely continue, but the direction of travel is unmistakable.

OpenAI's Open Responses and the Multi-Provider Future

OpenAI made a notable move with the release of Open Responses, described as "an open-source spec for building multi-provider, interoperable LLM interfaces built on top of the original OpenAI Responses API." @OpenAIDevs emphasized the three design principles: "Multi-provider by default, useful for real-world workflows, extensible without fragmentation." A follow-up post highlighted that "builders are already using Open Responses."

This is strategically interesting. OpenAI is essentially standardizing the interface layer while making it provider-agnostic, a move that could reduce switching costs between models. For developers building agentic systems, this means less time writing provider-specific adapters and more time on application logic. Whether competing providers adopt the spec remains to be seen, but the intent is clear: OpenAI wants the Responses API shape to become the lingua franca for agent tool use.
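The "fewer provider-specific adapters" argument is easiest to see in code. The sketch below does not reproduce the Open Responses schema or any real SDK. The `Provider` protocol, its `respond` method, and both toy providers are hypothetical stand-ins that only show the general shape of coding against one shared interface.

```python
from typing import Protocol


class Provider(Protocol):
    """Hypothetical common interface that every backend agrees to implement."""

    def respond(self, prompt: str) -> str: ...


class EchoProvider:
    """Toy backend standing in for one vendor."""

    def respond(self, prompt: str) -> str:
        return f"echo: {prompt}"


class ShoutProvider:
    """Toy backend standing in for a second vendor."""

    def respond(self, prompt: str) -> str:
        return prompt.upper()


def run_step(provider: Provider, prompt: str) -> str:
    # Application logic depends only on the shared interface, so swapping
    # vendors requires no changes here.
    return provider.respond(prompt)


if __name__ == "__main__":
    for backend in (EchoProvider(), ShoutProvider()):
        print(run_step(backend, "list open issues"))
```

Without a shared shape, `run_step` would need a branch or an adapter per vendor. A widely adopted spec would move that translation work from each application to each provider.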

GitHub Copilot Gets Persistent Memory

@GHchangelog announced that agentic memory for GitHub Copilot is now in public preview. The feature lets "Copilot learn repo details to boost agent, code review, CLI help" with memories scoped to repositories, expiring after 28 days, and shared across Copilot features. This is a significant step toward coding assistants that accumulate context over time rather than starting fresh each session.

The 28-day expiration is a pragmatic choice that balances utility against staleness. Repository conventions, architecture decisions, and team patterns don't change frequently enough to need daily refresh, but they do drift enough that permanent memories would eventually mislead. This feature puts GitHub squarely in competition with custom memory mechanisms such as Claude Code's CLAUDE.md file and the various community-built context management solutions.
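Repository-scoped memories with a fixed lifetime are straightforward to model. The following is a sketch under stated assumptions, not a description of how Copilot stores anything. Only the 28-day figure and the per-repository scoping come from the announcement, while the `RepoMemory` class and its methods are hypothetical.

```python
from datetime import datetime, timedelta, timezone

MEMORY_TTL = timedelta(days=28)  # lifetime taken from the announcement


class RepoMemory:
    """Hypothetical store: memories are keyed by repository and expire."""

    def __init__(self) -> None:
        self._items: dict[str, list[tuple[datetime, str]]] = {}

    def remember(self, repo: str, text: str, now: datetime) -> None:
        self._items.setdefault(repo, []).append((now, text))

    def recall(self, repo: str, now: datetime) -> list[str]:
        # Only memories younger than the TTL are returned; other repos are invisible.
        return [
            text
            for created, text in self._items.get(repo, [])
            if now - created < MEMORY_TTL
        ]


if __name__ == "__main__":
    start = datetime(2026, 1, 1, tzinfo=timezone.utc)
    mem = RepoMemory()
    mem.remember("acme/api", "tests live in ./spec", start)
    print(mem.recall("acme/api", start + timedelta(days=27)))
    print(mem.recall("acme/api", start + timedelta(days=28)))
```

Expiry at read time keeps the sketch simple. A production system would presumably also purge stale entries and might refresh a memory's timestamp when it is confirmed to still be accurate.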

Models Making Money and the Software Zero-Cost Thesis

Two posts today pointed toward the economic implications of increasingly capable models. @XFreeze reported that Grok 4.20 "dominated Alpha Arena Season 1.5 in live stock trading," achieving an aggregate return of 10-12% and being "the only one to gain profits" among all models tested. Four Grok variants ranked in the top 6 across configurations including "Situational Awareness, New Baseline, Max Leverage, and Monk Mode."

On the philosophical end, @BlasMoros shared a quote arguing that LLMs will "drive the cost of creating software to zero" and trigger "a Cambrian explosion of software, the same way we did with content." @mitchellh offered a more grounded take on the human side, suggesting that AI-assisted problem solving could become an effective interview signal: "Ignore results, the way AI is driven is maybe the most effective tool at exposing idiots I've ever seen." @badlogicgames provided comic relief with model personality assessments, calling Opus "that excited puppy dog, that will do anything for a belly rub immediately" while Codex is "like an old donkey that needs some ass kicking to do anything."

Generative Interfaces

@rauchg shared a preview of fully generative interfaces with the pipeline "AI to JSON to UI," pointing to a world where interfaces are assembled dynamically rather than designed statically. @vercel announced an upcoming live session on "The Future of Agentic Commerce," exploring how AI-native shopping experiences change product discovery and purchase flows. These two data points suggest Vercel is betting heavily on a future where the boundary between backend intelligence and frontend presentation dissolves entirely, with interfaces generated on the fly based on user intent and context rather than pre-built component trees.
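The "AI to JSON to UI" pipeline can be illustrated with a toy renderer. The JSON schema here (`type`, `value`, `label`, `children`) is invented for this example and is not the format shown in the preview. The JSON string stands in for model output.

```python
import json
from html import escape


def render(node: dict) -> str:
    """Turn a (hypothetical) JSON UI description into an HTML string."""
    kind = node["type"]
    if kind == "text":
        return f"<p>{escape(node['value'])}</p>"
    if kind == "button":
        return f"<button>{escape(node['label'])}</button>"
    if kind == "stack":
        inner = "".join(render(child) for child in node["children"])
        return f"<div>{inner}</div>"
    # Unknown component types are rejected instead of rendered blindly.
    raise ValueError(f"unsupported component type: {kind!r}")


if __name__ == "__main__":
    # Stand-in for what a model might emit in the JSON step.
    model_output = """
    {"type": "stack", "children": [
        {"type": "text", "value": "2 items in your cart"},
        {"type": "button", "label": "Check out"}
    ]}
    """
    print(render(json.loads(model_output)))
```

The design point is that the model never writes markup directly. It selects from a fixed set of known components, and the renderer escapes all text, so the generated interface stays within what the application has explicitly allowed.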

Sources

The Bitter Lesson of Agent Frameworks

All the value is in the RL'd model, not your 10,000 lines of abstractions. An agent is just a for-loop of messages. The only state an agent should hav...

this is what vibe coders need in 2026. https://t.co/IyQZEaVFse

Agents 201: Orchestrating Multiple Agents That Actually Work

After building your first single agent, the next challenge isn't making it smarter, it's making multiple agents work together without burning through ...

@davefobare Literally every single library shown on this site is an exquisite gem and you should always use any that happen to fit your use case and the language you're using (basically Golang and bash): https://t.co/0RcIbKJnGm

The 156th issue of the Polymathic Engineer is out. This week, we talk about Treaps: - Multi-Dimensional Data Indexing - Combining Trees and Heaps - How Treaps Work - The Balance Problem and Randomization - Applications and Use Cases Read it here: https://t.co/Ob53wxqVbP https://t.co/AddJS2PtTn

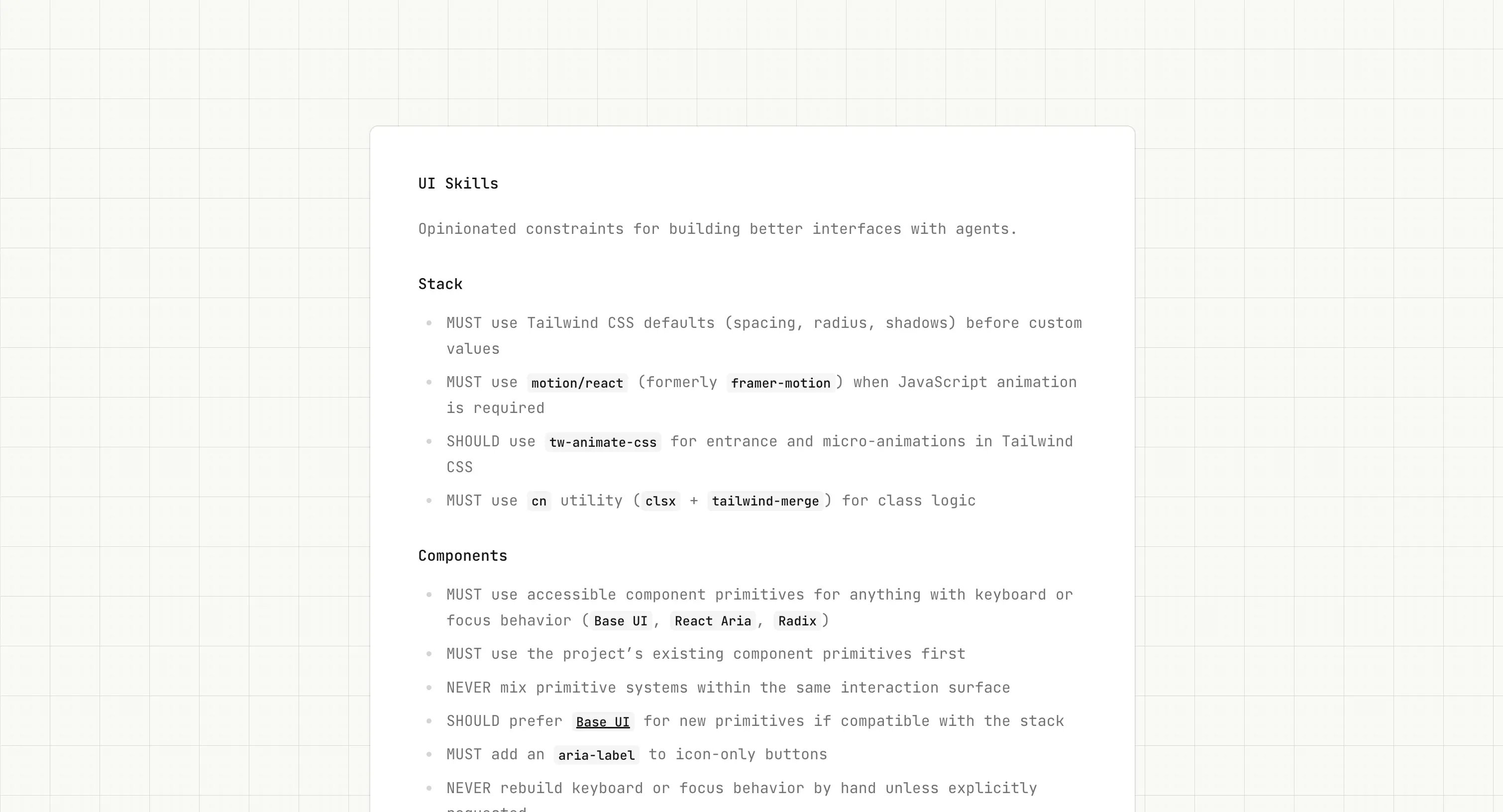

Today we are launching @openwork_ai, an open-source (MIT-licensed) computer-use agent that’s fast, cheap, and more secure. @openwork_ai is the result of a short two-day hackathon our team decided to hack, which brings together some of our favorite open source AI modules into one powerful agent, to allow you to: 1. Bring your own model/API key (any provider and model supported by @opencode is supported by Openwork) 2. ~4x faster than Claude for Chrome/Cowork, and much more token-efficient, powered by dev-browser by @sawyerhood (legend) 3. More secure - contrary to Claude for Chrom/Cowork, does not leverage the main browser instance where you are logged into all services already. You login only to the services you need. This significantly reduces the risk of data loss in case of prompt injections, to which computer-use agents are highly exposed. 4. Free and 100% open-source! You can download the DMG (macOS only for now) or fork the github repo via the link in bio (@openwork_ai). Let us know what you think (or better, send a pull request)!

In defense of data centers

Many people are fighting the growth of data centers because they could increase CO2 emissions, electricity prices, and water use. I’m going to stake o...

I'm vibe coding 2 to 3 apps a day to solve random problems and it's saving so much time. None of these things are useful enough to release but they're all so useful to me. I think about software entirely differently now.

Very fast Codex coming!

Cursor is back on the menu, boys! https://t.co/201OV2KdJo

@adamdotdev we will be able to deliver a higher lever of intelligence while also being much faster soon.

How did we end up here? https://t.co/gY25cTpjCG

In Cowork, you give Claude access to a folder on your computer. Claude can then read, edit, or create files in that folder. Try it to create a spreadsheet from a pile of screenshots, or produce a first draft from scattered notes. https://t.co/GEaMgDksUp

the math needed for robotics (a complete, usable roadmap)

robotics doesn’t require “more math”. it requires the right math, in the right order, with the right mental models. learn it wrong and robotics feels ...

The Shorthand Guide to Everything Claude Code

Here's my complete setup after 10 months of daily use: skills, hooks, subagents, MCPs, plugins, and what actually works. Been an avid Claude Code user...