Claude Code Skills Ecosystem Explodes as MCP Context Pollution Fix Unlocks Hundreds of Tool Integrations

A major Claude Code update solving MCP context pollution dominated the timeline, unleashing a skills marketplace of 60,000+ plugins and prompting Trail of Bits to release their first official skills. Meanwhile, the developer community converged on best practices for agent-assisted coding, and a viral breakdown of the "Ralph loop" pattern laid out a blueprint for AI-native software engineering.

Daily Wrap-Up

Today felt like an inflection point for Claude Code's ecosystem. The fix for MCP context pollution, a longstanding friction point that kept power users from connecting more than a handful of tools, appears to have broken a dam. Within hours of @simonw declaring "there's no reason not to hook up dozens or even hundreds of MCPs," we saw a skills marketplace surface with 60,000+ entries, Trail of Bits dropping official security skills, and multiple people sharing installation guides. The tooling layer around Claude Code is maturing fast, and the gap between "interesting CLI tool" and "extensible development platform" closed meaningfully today.

The other thread worth tracking is the growing consensus on how to actually work with coding agents. Cursor published their best practices, @addyosmani wrote about how code review needs to evolve when agents write the code, and @jaimefjorge dropped a sprawling breakdown of the Ralph loop pattern that reads like a manifesto for AI-native engineering. The consistent message: the value isn't in the code generation itself, it's in the specifications, architecture decisions, and orchestration patterns that guide it. People who treat agents as fancy autocomplete are leaving most of the value on the table.

The most entertaining moment was @emollick's "vibefounding" MBA class, where non-technical students are building working products in four days that would previously have taken a semester. His observation that "AI doesn't just do work for you, it also does new kinds of work" is the kind of reframe that separates people who are annoyed by AI hype from people who are actually shipping. The most practical takeaway for developers: if you've been avoiding MCP integrations due to context pollution issues, today's the day to revisit them. Connect your project management, documentation, and monitoring tools to Claude Code and treat skills as composable capabilities rather than monolithic plugins.

Quick Hits

- @jefftangx reverse-engineered Cowork's VM snapshot and found that it's an Electron app wrapping Claude Code with its own Linux sandbox. The snapshot also contained an "internal-comms skill" by Anthropic and two security vulnerabilities. When asked what questions he should have asked, the agent suggested adding memory and leaving notes for itself "once it dies."

- @steipete reports his productivity roughly doubled moving from Claude Code to Codex, though it took some initial adjustment to figure out the workflow.

- @cryptopunk7213 flagged Google's "Personal Intelligence" announcement, where emails, photos, YouTube history, location, and documents will all train a personalized Gemini. The argument: Google's data moat is something OpenAI and Anthropic simply cannot compete with.

- @gregisenberg posted "40 reasons 2026 is the best time ever to build a startup" without elaboration, which is either inspiring or anxiety-inducing depending on your current runway.

- @vista8 shared an extensive Chinese-language analysis of how AI transitions from personal assistant to organizational intelligence, arguing that the real unlock is AI participating in existing collaboration mechanisms (email, chat, docs) rather than inventing new ones.

- @AngryTomtweets identified a video as Kling AI 2.6 Motion Control, for those tracking the generative video space.

- @pleometric shared what appears to be a humorous take on the Claude Code experience, capturing the emotional rollercoaster many developers know well.

Claude Code Skills & MCP Ecosystem

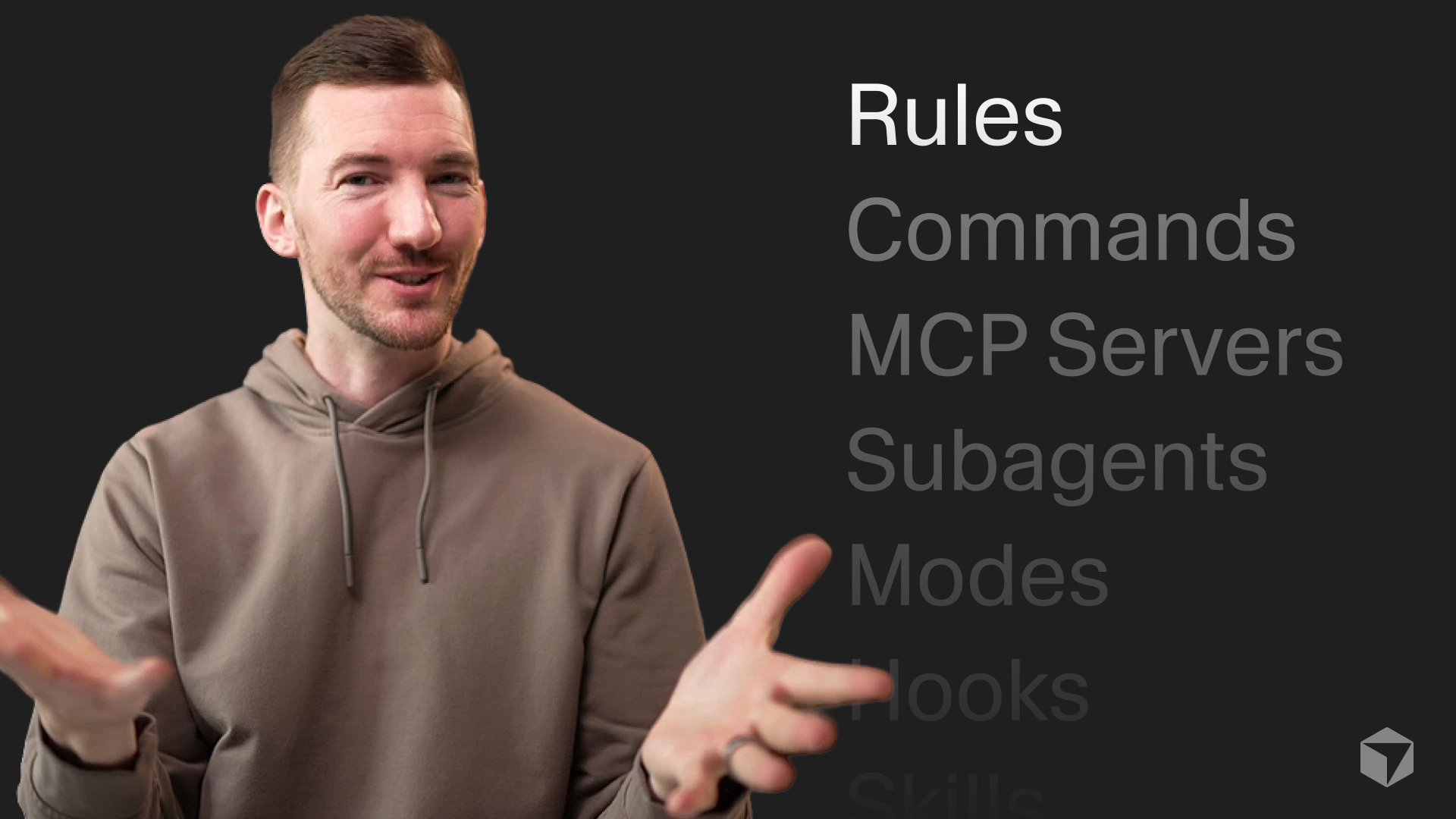

The biggest story today is the Claude Code ecosystem crossing a usability threshold. @simonw captured the mood precisely: "Context pollution is why I rarely used MCP, now that it's solved there's no reason not to hook up dozens or even hundreds of MCPs to Claude Code." That single fix transforms Claude Code from a tool that technically supports extensions to one where extensions are practical at scale. @bcherny, clearly involved in the launch, added that "every Claude Code user just got way more context, better instruction following, and the ability to plug in even more tools." @arlanr put it more bluntly: "it happened, MCP is no longer bs."

The downstream effects were immediate. @milesdeutscher surfaced a skills marketplace at skillsmp.com with 60,000+ Claude Skills ready for use. @dguido announced that Trail of Bits, a well-respected security firm, released their first batch of Claude Skills. @d4m1n shared a practical guide for installing skills: copy the directory including SKILL.md into your project's .claude/skills directory, noting that "skills only take a bit of context and are loaded when needed by the agent." @trq212 flagged Tool Search as a new capability in Claude Code, which makes discovering relevant tools from a large library feasible.
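The install step @d4m1n describes can be sketched in a few lines of Python. This is a minimal illustration, not an official installer: the `.claude/skills` location and the `SKILL.md` file come from the post, while the function names, the `example-skill` directory, and the temp-directory demo are hypothetical.

```python
import shutil
import tempfile
from pathlib import Path


def install_skill(skill_dir: Path, project_root: Path) -> Path:
    """Copy a skill directory (it must contain SKILL.md) into <project>/.claude/skills/."""
    if not (skill_dir / "SKILL.md").is_file():
        raise FileNotFoundError(f"{skill_dir} has no SKILL.md")
    target = project_root / ".claude" / "skills" / skill_dir.name
    target.parent.mkdir(parents=True, exist_ok=True)
    # dirs_exist_ok lets a re-install overwrite an earlier copy (Python 3.8+).
    shutil.copytree(skill_dir, target, dirs_exist_ok=True)
    return target


def demo() -> Path:
    """Build a throwaway skill and project in a temp directory, then install it."""
    tmp = Path(tempfile.mkdtemp())
    skill = tmp / "downloads" / "example-skill"  # hypothetical skill name
    skill.mkdir(parents=True)
    (skill / "SKILL.md").write_text("# example-skill\n")
    project = tmp / "my-project"  # hypothetical project directory
    project.mkdir()
    return install_skill(skill, project)


if __name__ == "__main__":
    print(demo())
```

The check for `SKILL.md` is there because the guide singles out that file; what else a skill directory needs is not covered in the post.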

On the more experimental end, @deepfates noted you can "just make Claude Code a RLM by telling it to look at its own conversation logs." RLM here most likely stands for recursive language model, the idea being that the agent treats its own prior context as data it can query. And @pvncher shared that a big tech company rolling out Claude Code with $100/month budgets found people burning through credits in 2-3 days, raising real questions about how agentic work scales with API pricing. The ecosystem looks ready for prime time, but the economics of heavy usage remain an open question.
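To put @pvncher's numbers in perspective, here is a back-of-the-envelope calculation. The $100 budget and the 2-3 day window come from the post. The constant burn rate and the 30-day month are simplifying assumptions added here, so treat the result as a rough upper bound and not as reported spend.

```python
def implied_monthly_spend(budget: float, days_to_exhaust: float, days_in_month: int = 30) -> float:
    """Extrapolate a full month of spend, assuming the same burn rate every day."""
    return budget * days_in_month / days_to_exhaust


low = implied_monthly_spend(100, 3)   # budget gone in 3 days -> 1000.0
high = implied_monthly_spend(100, 2)  # budget gone in 2 days -> 1500.0

if __name__ == "__main__":
    print(f"${low:,.0f} to ${high:,.0f} per month")  # $1,000 to $1,500 per month
```

Under those assumptions, sustained usage at the reported pace would cost roughly 10 to 15 times the allotted budget.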

Agent-Assisted Development Practices

A clear consensus is forming around how to work effectively with coding agents, and today brought several frameworks worth internalizing. @Hesamation distilled Cursor's official blog post into ten principles, with the most notable being: "use plan mode before any code," "revert and refine instructions rather than fixing hopelessly," and "write tests first so it can iterate." These aren't radical ideas individually, but together they describe a workflow where the human's job is creating guardrails, not writing code.

@addyosmani pushed this further with a provocative take on code review:

> "When agents write the code, review inverts. You stop asking only 'is this correct?' and start asking 'was this intent clear enough to execute safely?'... The diff tells you what shipped. The conversation tells you why."

This lands differently when paired with @bibryam quoting Osmani's longer piece: "The best software engineers won't be the fastest coders, but those who know when to distrust AI." The emerging picture is one where engineering judgment becomes the primary skill, with code generation delegated to agents.

On the architecture side, @forgebitz made a practical observation: "having a monorepo turned out to be a massive advantage for AI coding. All context is inside one repo." @victor_explore distilled it even further: "the real context window was the architecture decisions we made along the way." And @hjcharlesworth shared a mental model for agent pairing that seems to be gaining traction. The through-line across all of these: the developers getting the most from agents are the ones investing in context architecture, specifications, and project structure rather than prompt engineering.

AI & Work Transformation

The "what happens to jobs" conversation took several interesting turns today. @emollick is running an experimental MBA class on "vibefounding" where students have four days to launch a company:

> "Everything they are doing in four days would have taken a semester in previous years, if it could have done it at all. Quality is also far better... The non-coders are all building working products."

His fourth observation is the most important: "The hardest thing to get across is that AI doesn't just do work for you, it also does new kinds of work." This isn't about replacement, it's about capability expansion.

On the organizational side, three posts converged on the same role. @Codie_Sanchez called an internal AI transformation hire "the best money I've ever spent as a CEO," someone who "goes across your entire org and kills stupid manual processes." @jainarvind said Glean calls these "AI Outcomes Managers." @damianplayer warned that if you aren't actively hiring for this role, "you're already behind." The pattern is clear: companies are creating dedicated positions for people who can identify and automate workflows across departments.

@DaveShapi went darker, revising his future labor force participation estimate down to 15%, meaning "less than 1 out of 6 working age adults will have meaningful employment." Whether you find that plausible or alarmist, the direction of the trend lines is hard to argue with when MBA students are shipping in four days what used to take a semester.

The Ralph Loop: A Blueprint for AI-Native Engineering

@jaimefjorge dropped a massive breakdown of his interview with Geoffrey Huntley on the Ralph loop pattern that deserves its own section. The core insight: "The simplest version is a bash loop that deterministically allocates memory, lets the LLM pick one task, executes it, then starts fresh. Every loop gets a brand new context window." This avoids context compaction, where agents degrade as their context fills up, by treating each iteration as stateless with institutional knowledge living in specification files.
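The shape of the loop is easy to sketch. The version below is in Python, not the bash loop Huntley describes, and it swaps the LLM for a stub so it can run anywhere. Every name in it (`ralph_loop`, `build_context`, `stub_agent`) is hypothetical, and the prompt layout is an assumption. The point it illustrates is from the breakdown: each iteration rebuilds its context from the spec and the task list, and nothing else carries over.

```python
from typing import Callable, List, Optional

# An "agent" is any callable that takes a freshly built prompt and returns
# the one task it completed, or None if it did nothing. (Hypothetical interface.)
Agent = Callable[[str], Optional[str]]


def build_context(spec: str, todo: List[str]) -> str:
    """Assemble the prompt the same way every time from the spec and open tasks."""
    return f"SPEC:\n{spec}\n\nOPEN TASKS:\n" + "\n".join(f"- {t}" for t in todo)


def ralph_loop(spec: str, todo: List[str], agent: Agent, max_iterations: int = 50) -> List[str]:
    """Run one task per iteration; every iteration starts from a brand new context."""
    remaining = list(todo)
    done: List[str] = []
    for _ in range(max_iterations):
        if not remaining:
            break
        prompt = build_context(spec, remaining)  # no history from earlier iterations
        finished = agent(prompt)
        if finished is None or finished not in remaining:
            break  # the agent made no recognizable progress; stop instead of spinning
        remaining.remove(finished)
        done.append(finished)
    return done


def stub_agent(prompt: str) -> Optional[str]:
    """Stand-in for an LLM call: picks the first open task listed in the prompt."""
    _, _, tasks = prompt.partition("OPEN TASKS:\n")
    lines = tasks.splitlines()
    return lines[0][2:] if lines else None


if __name__ == "__main__":
    print(ralph_loop("Build a CLI", ["parse args", "add tests"], stub_agent))
```

In a real setup the spec and task list would live in files that the agent itself updates, which is how knowledge persists across otherwise stateless iterations.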

The implications Huntley draws are sweeping. Software development (translating tickets to code) is now a commodity at "$10-42/hour while they sleep," but software engineering (architecture, security, requirements, failure modes) is where humans still matter. He claims any SaaS product can be cloned using AI by running Ralph in reverse over source code and marketing materials. And he offers a programming language tier list for AI agents: S-tier is Rust, TypeScript with Effect.js, and Python with Pydantic due to strong type systems; F-tier is Java and .NET because their dependency systems don't work well with agent search tools.

The most provocative claim: "Senior engineers who refuse to adapt are in more danger than juniors who embrace it." When commit velocity between adopters and non-adopters diverges dramatically, leadership notices. Whether or not you buy the full vision, the Ralph loop as an architectural pattern for autonomous task execution is worth understanding.

Personal AI Workflows

Several posts showcased how people are building personal AI systems beyond just coding. @mattlam_ set up Clawdbot as a 24/7 personal assistant for $5/month on a Hetzner VPS, handling research, repo setup, task planning, calendar management, and even Twitter search. @alexhillman described a "seeds" system for capturing proto-ideas with markdown files that store the idea, context, and goal, currently sitting at 132 seeds with an AI-developed scoring framework for prioritization. In a separate post, he walked through using a Claude assistant to batch-process Whisper transcriptions.
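A seed file along the lines @alexhillman describes could look like the sketch below. The three fields (idea, context, goal) come from his post. The `Seed` class, the title field, and the heading layout are assumptions made for illustration, and his scoring framework is not modeled here.

```python
from dataclasses import dataclass


@dataclass
class Seed:
    """One proto-idea, stored as a small markdown file. (Hypothetical layout.)"""
    title: str
    idea: str
    context: str
    goal: str

    def to_markdown(self) -> str:
        return (
            f"# {self.title}\n\n"
            f"## Idea\n{self.idea}\n\n"
            f"## Context\n{self.context}\n\n"
            f"## Goal\n{self.goal}\n"
        )


if __name__ == "__main__":
    seed = Seed(
        title="Batch transcripts",
        idea="Process Whisper transcriptions in one pass",
        context="Recordings pile up faster than they get reviewed",
        goal="Searchable notes for every recording",
    )
    print(seed.to_markdown())
```

Keeping each seed as plain markdown means an assistant can read, score, and rewrite the files without any special tooling.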

@emollick contributed two gems: a Claude Code plugin that "visualizes the work Claude Code is doing as agents working in an office, with agents doing work and passing information to each other," and the philosophical zinger: "Could this meeting be an email? Could this organization be a set of markdown files?" That last question is becoming less rhetorical by the day.

New Developer Tools

Two notable open-source releases landed today. @_Evan_Boyle announced GitHub's Copilot CLI SDK, supporting Go, Python, TypeScript, and C# with custom tools, BYOK, and any model. It's built on the same agent loop powering Copilot CLI and the GitHub Coding Agent.

@_orcaman launched Openwork AI, an open-source computer-use agent claiming 4x speed improvement over Claude for Chrome/Cowork with better security. The key differentiator: it runs in its own browser sandbox rather than your main browser where you're logged into everything, significantly reducing prompt injection risk. It's MIT-licensed and macOS-only for now.
