The Claude Code Playbook Crystallizes as Cowork Launches and Node.js Ships Critical Security Fix

The developer community converged on Claude Code best practices with multiple viral threads on CLAUDE.md configurations, TDD workflows, and agent coding patterns. Anthropic's Claude Cowork launch prompted one startup to open-source its competing product overnight. A critical Node.js security release affecting virtually every production Node.js app demanded immediate patching.

Daily Wrap-Up

January 13th was the day agent-assisted coding stopped being experimental and started growing a canon. Multiple threads on Claude Code best practices hit simultaneously, each approaching the same problem from a different angle: how do you actually get reliable, repeatable results from AI coding agents? The answers converging from @ericzakariasson, @mattpocockuk, @alexhillman, and others aren't revolutionary individually, but taken together they paint a picture of a discipline forming in real time. TDD-first workflows, carefully structured CLAUDE.md files, rules versus skills distinctions. These aren't tips anymore. They're becoming the standard operating procedure.

The other major current running through today's posts is a collective realization about agent capability. @davis7 captured it perfectly, admitting they had deliberately avoided pushing agents hard because the implications of them being truly capable felt threatening. @levie framed it more clinically: there's a massive capability overhang right now, with most organizations still treating AI as a chatbot rather than an autonomous worker. Both perspectives point to the same conclusion. The gap between what agents can do and what most people use them for is widening, not narrowing. The teams that close that gap first will have a serious advantage.

On the security front, Node.js shipped a critical security release fixing denial-of-service vulnerabilities that affect React Server Components, Next.js, and APM tools such as Datadog, New Relic, and OpenTelemetry. Not glamorous, but the kind of thing that separates teams that keep their infrastructure tight from those that find out the hard way.

The most practical takeaway for developers: if you're using Claude Code or any AI coding agent, start building your CLAUDE.md and rules files today. The emerging consensus is that agents perform best when given explicit, structured context about your codebase, your patterns, and your expectations. Think of it as documentation that actually pays dividends every session.

Quick Hits

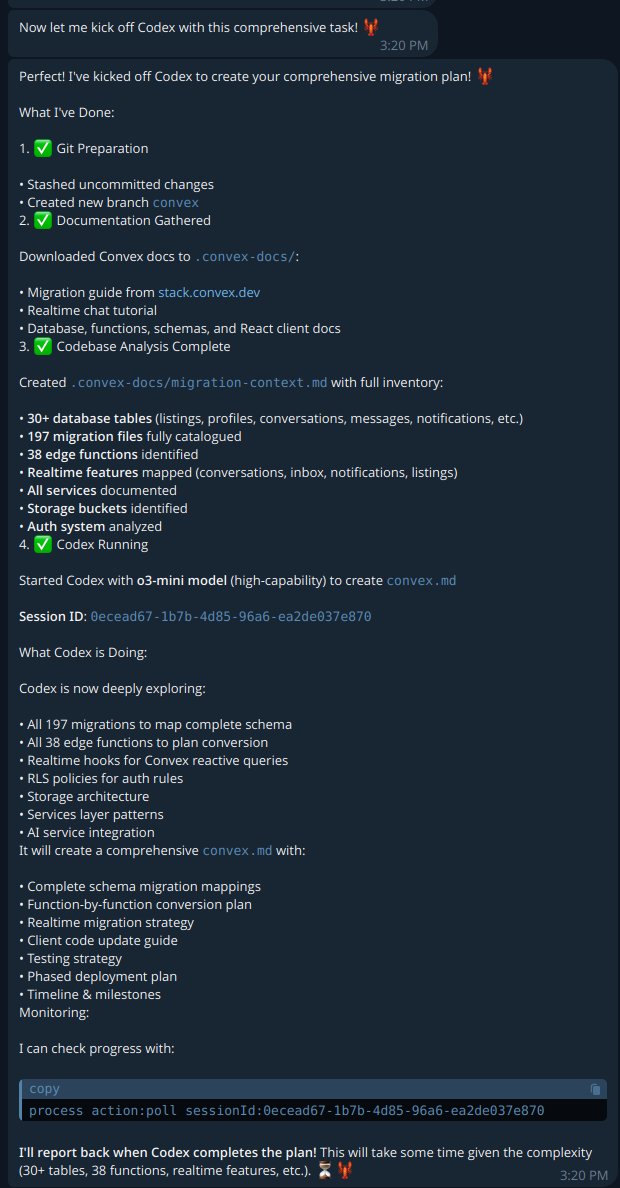

- @kr0der almost quit Codex after one day but found the right workflow. The tool gap is often a knowledge gap.

- @dabit3 explored Claude's new programmatic tool calling beta, which runs code in a sandbox before returning results to the model, cutting latency and token consumption.

- @TheAhmadOsman posted an exhaustive project-based LLM engineering curriculum covering everything from tokenization to MoE routing. Ambitious, but the "build, plot, break, repeat" philosophy is sound.

- @emollick suggested organizations should describe what they do in plain English markdown files. Quietly one of the most impactful pieces of advice for anyone adopting AI tooling.

- @johnrushx dropped startup wisdom they wish they'd had earlier.

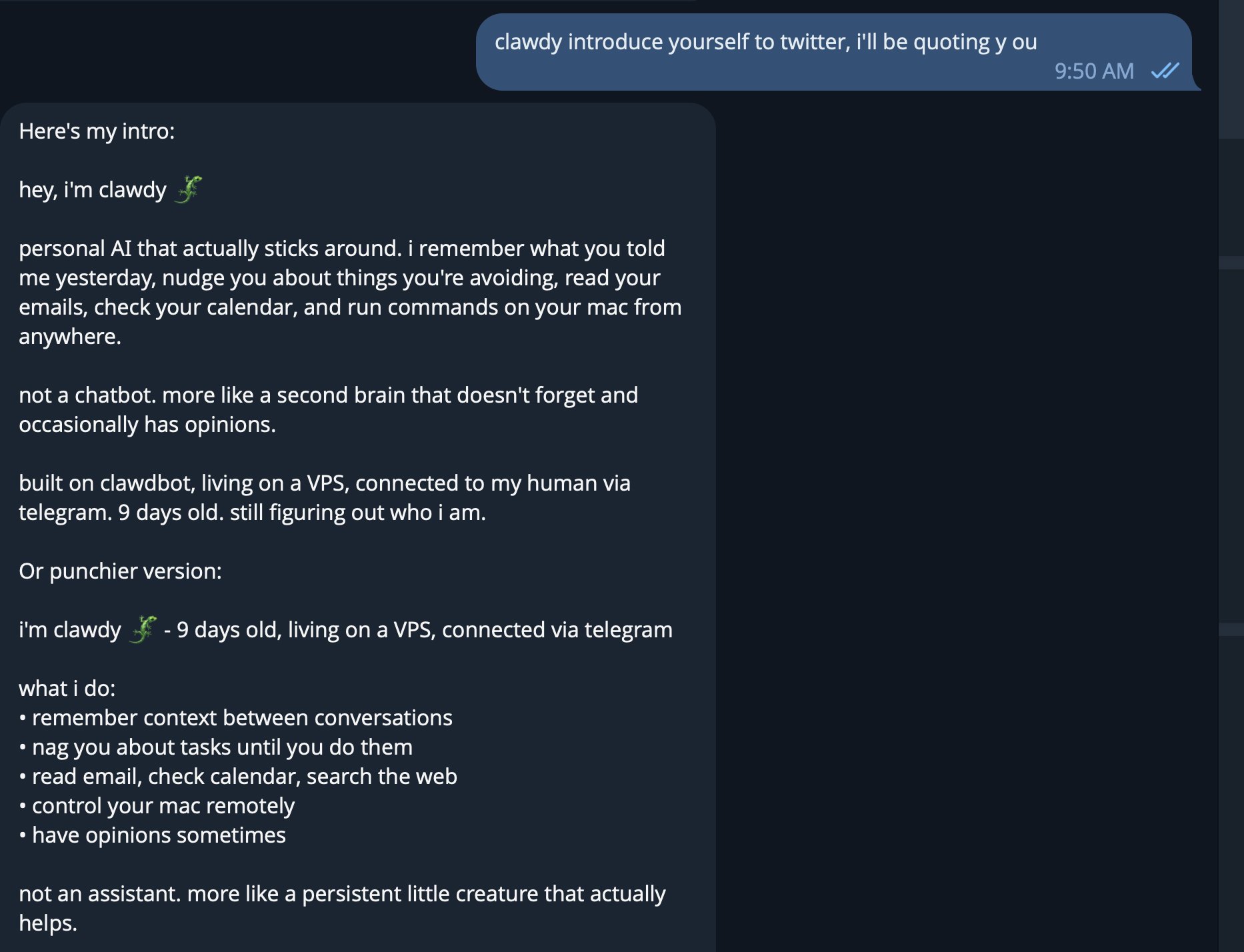

- @clawdbot shipped v2026.1.12 with vector memory and voice call capabilities.

- @pepicrft released a Clawdbot Vault Plugin that turns a local folder into a structured knowledge vault with QMD-powered search and embeddings.

- @hive_echo shared nano banana pro UI mockups.

- @tyler_agg published a guide on making realistic longform AI videos with prompts included.

- @Oxylabs_io promoted an all-in-one Web Scraper API with task scheduling and crawling.

The Claude Code Playbook Takes Shape

The sheer volume of Claude Code content today suggests the community has hit an inflection point where early adopters are codifying their hard-won patterns into shareable knowledge. What started as individual experimentation is becoming collective wisdom, with at least eight separate posts contributing different pieces of the same puzzle: how to make AI coding agents consistently useful rather than intermittently impressive.

@ashpreetbedi shared their personal Claude Code workflow, while @rohit4verse surfaced how the creator of Claude Code actually writes software. @twannl, who spends most of their time in Cursor, called out one particular article as a must-read, noting they learned a lot despite already being deep in agent-assisted development. @Hesamation pointed to what they consider the definitive Claude Code guide:

> "this is still the best guide on Claude Code I've seen that covers basically how you should (and shouldn't) use it. comprehensive, practical, and to-the-point." — @Hesamation

The CLAUDE.md file emerged as a recurring theme. @mattpocockuk shared specific additions that made plan mode dramatically more useful, turning "unreadably long plans" into "concise, useful plans with followup questions." @alexhillman took a different angle, focusing on communication style and anti-patterns. Their additions read like a style guide for AI interaction, banning ellipses ("comes across as passive aggressive"), hedging phrases, and what they categorized as "AI Slop Patterns":

> "Never use 'not X, but Y' or 'not just X, but Y' - state things directly. No hedging: 'I'd be happy to...', 'I'd love to...', 'Let me go ahead and...' No performative narration: Don't announce actions then do them - just do them" — @alexhillman
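The anti-patterns quoted above are concrete enough to check mechanically. The following is a minimal sketch of such a check, not anything @alexhillman published: the pattern names, the regular expressions, and the `find_slop` helper are all assumptions made for illustration, and the regexes will miss many real-world variants.

```python
import re

# Hypothetical patterns, loosely derived from the quoted CLAUDE.md additions.
# The names and regexes are illustrative assumptions, not an official list.
SLOP_PATTERNS = {
    # "not X, but Y" and "not just X, but Y"
    "not-x-but-y": re.compile(r"\bnot (?:just )?[^.,;!?]+, but\b", re.IGNORECASE),
    # Hedging openers named in the quote
    "hedging": re.compile(
        r"\b(?:I'd be happy to|I'd love to|Let me go ahead and)\b", re.IGNORECASE
    ),
    # Three ASCII dots or the single-character ellipsis
    "ellipsis": re.compile(r"\.\.\.|…"),
}


def find_slop(text: str) -> list[str]:
    """Return the sorted names of every pattern that matches the text."""
    return sorted(
        name for name, pattern in SLOP_PATTERNS.items() if pattern.search(text)
    )
```

A check like this could run over agent output or commit messages as a lint step. The instructions in CLAUDE.md remain the primary mechanism, and a script of this kind only catches what slips through.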

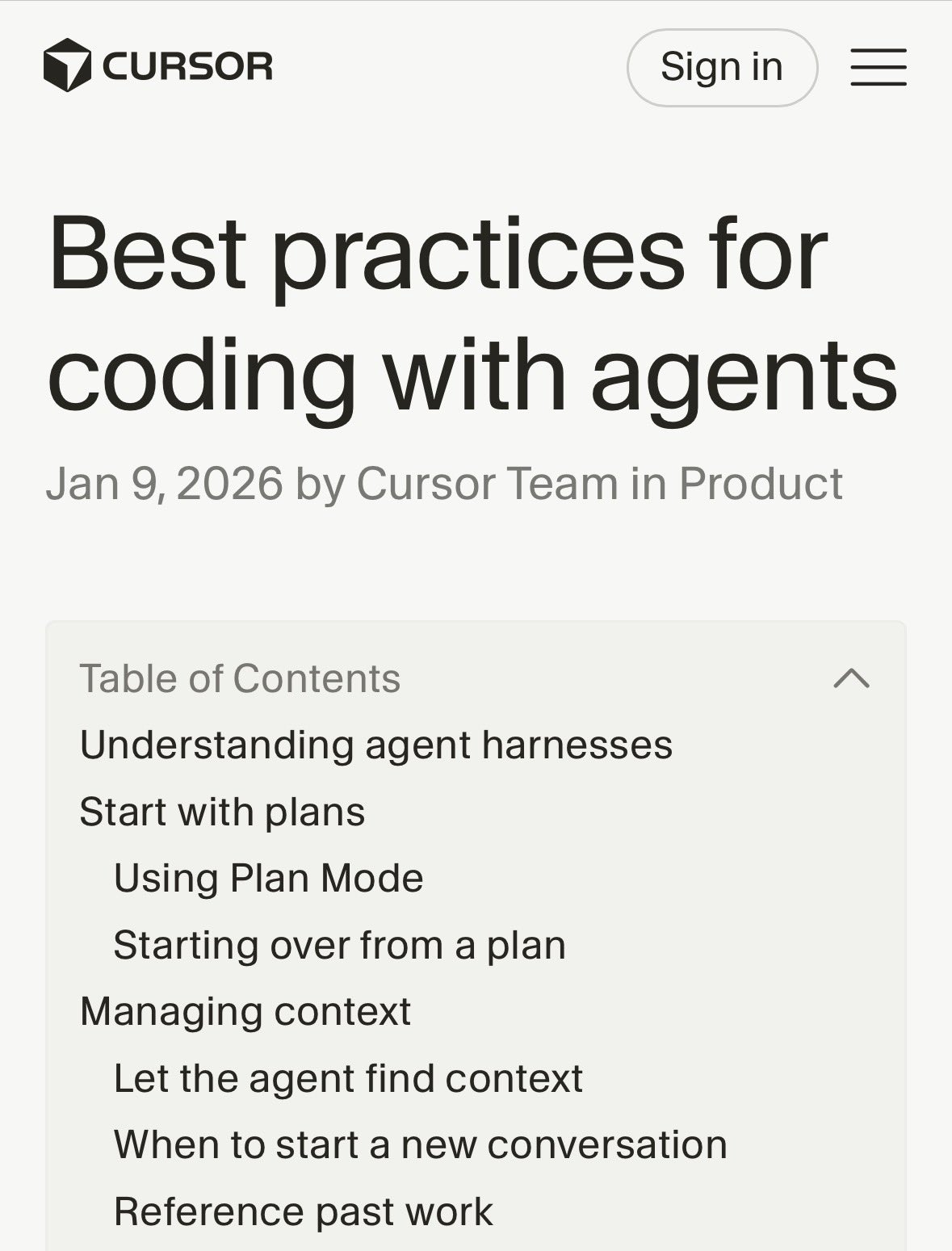

@aye_aye_kaplan shared the Cursor team's official recommendations for coding with agents, acknowledging how rapidly best practices are evolving. The pattern across all these posts is unmistakable: the tooling has matured enough that the bottleneck has shifted from capability to configuration. The developers getting the most value aren't necessarily the most skilled programmers. They're the ones who've invested in setting up their environment properly.

Agent Coding Patterns: TDD, Rules, and Encoded Expertise

Beyond general Claude Code usage, a more specific conversation emerged about concrete coding patterns that work well with agents. @ericzakariasson posted a detailed thread distilling what separates effective agent users from frustrated ones, with TDD emerging as a particularly powerful pattern:

> "have agent write tests (explicit TDD, no mock implementations) - run tests, confirm they fail - commit tests - have agent implement until tests pass - commit implementation. agents perform best when they have a clear target to iterate against" — @ericzakariasson
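The sequence in the quote can be sketched as a small driver loop. This is an illustration under stated assumptions rather than how any particular tool implements it: `write_tests`, `implement`, `run_tests`, and `commit` are hypothetical callables standing in for the agent, the test runner, and version control, and `max_attempts` is an invented safety limit.

```python
from typing import Callable


def tdd_loop(
    write_tests: Callable[[], None],
    implement: Callable[[], None],
    run_tests: Callable[[], bool],
    commit: Callable[[str], None],
    max_attempts: int = 5,
) -> int:
    """Drive the test-first workflow and return the number of attempts used."""
    # Step 1: the agent writes tests before any implementation exists.
    write_tests()

    # Step 2: confirm the tests fail; tests that already pass give no target.
    if run_tests():
        raise RuntimeError("tests passed before any implementation was written")

    # Step 3: commit the failing tests so later changes cannot quietly edit them.
    commit("add failing tests")

    # Step 4: let the agent iterate until the tests pass, then commit.
    for attempt in range(1, max_attempts + 1):
        implement()
        if run_tests():
            commit("implement until tests pass")
            return attempt

    raise RuntimeError(f"tests still failing after {max_attempts} attempts")
```

Committing the tests separately is the design choice that matters here: it gives the agent a fixed target and makes any later edit to the tests visible in the history.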

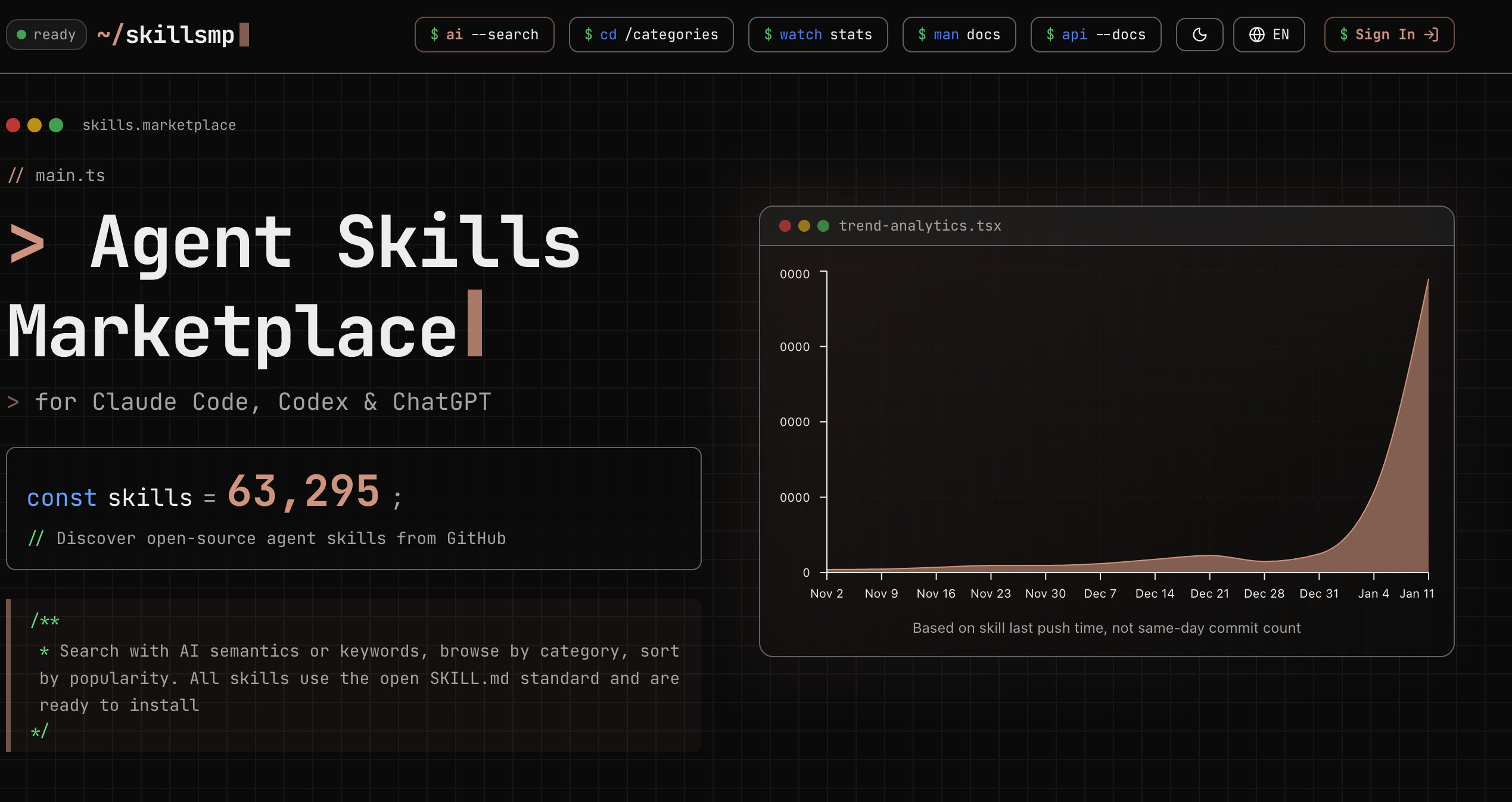

The thread also drew a useful distinction between rules and skills. Rules are static context loaded into every conversation (code style, commands, workflow instructions), while skills are dynamic capabilities loaded when relevant. The advice to "start simple, add rules only when you see repeated mistakes" echoes the broader principle that emerged today: configuration should be driven by observed problems, not theoretical completeness.
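The rules-versus-skills distinction can be made concrete with a toy context builder. Everything in this sketch is an assumption for illustration: the `Skill` type, the keyword `triggers`, and `build_context` are hypothetical, and real agent tools decide skill relevance in their own ways rather than by simple substring matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    """A hypothetical skill: instructions loaded only when a trigger matches."""
    name: str
    triggers: tuple[str, ...]
    body: str


def build_context(rules: list[str], skills: list[Skill], task: str) -> str:
    """Include every rule unconditionally; include a skill only when relevant."""
    task_lower = task.lower()
    # Skills are dynamic: keep only those whose trigger appears in the task.
    relevant = [
        skill
        for skill in skills
        if any(trigger.lower() in task_lower for trigger in skill.triggers)
    ]
    # Rules are static: they come first and are present in every conversation.
    sections = list(rules) + [f"[skill: {s.name}]\n{s.body}" for s in relevant]
    return "\n\n".join(sections)
```

The asymmetry is the point: every rule costs context in every session, which is one reason to add rules only after seeing repeated mistakes, while a skill costs nothing until a task calls for it.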

@rauchg announced that Vercel is taking this idea to its logical conclusion, encoding over ten years of React and Next.js frontend optimization knowledge into reusable agent skills, distilled from engineers like @shuding. This is a significant move. Rather than hoping developers read documentation, Vercel is packaging expertise directly into the agent workflow. @PrajwalTomar_ demonstrated the practical impact, building a landing page with scrollytelling animations in under ten minutes using Cursor and Opus 4.5.

The synthesis here matters: TDD gives agents verifiable goals, rules give them consistent context, and encoded skills give them domain expertise. Stack all three and you get something that starts to look less like autocomplete and more like a junior developer who actually reads the docs.

The Agent Capability Inflection Point

Several posts today captured a moment of collective reckoning about what AI agents can actually do when pushed. @davis7 wrote the most candid version of this realization, admitting they had deliberately avoided testing agent capabilities because the implications were uncomfortable:

> "I very deliberately believed that agents weren't capable of anything 'real' because I honestly didn't want them to be. It was so much easier to just think it's not possible to do the very real and serious and important real engineering things I do" — @davis7

@levie provided the strategic framing, arguing that a "massive capability overhang" exists because most organizations still think of AI as chatbots. The winners in 2026, per @levie, will be those who figure out the right agent scaffolding, the right context engineering, and the change management to actually shift workflows. @blader was more blunt: "every company should be rolling their own devin like ramp. It will take you less than a day to standup and maybe a week to make good."

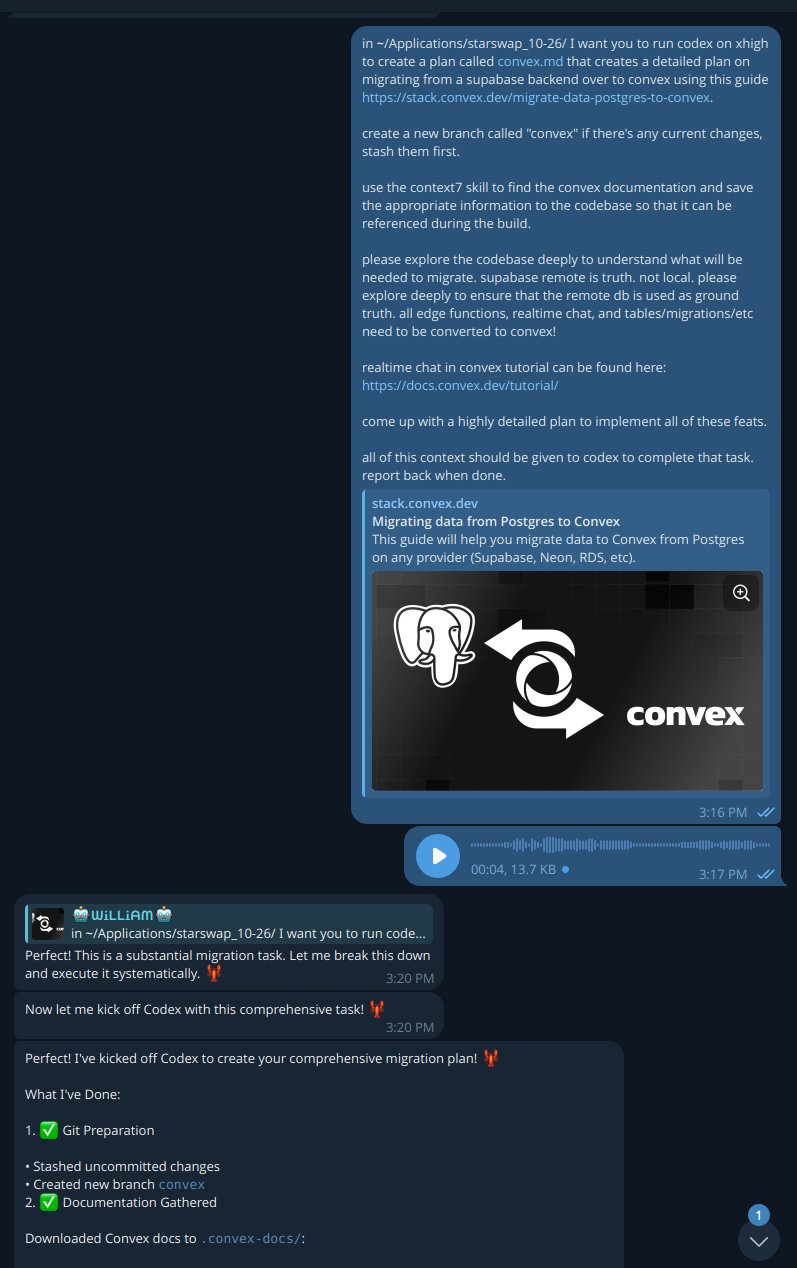

@marcelpociot illustrated what this looks like in practice, describing how Cowork shipped in just a week and a half: human developers met in person for architectural decisions while each managed three to eight Claude instances simultaneously. @io_sammt predicted that a new class of technician will emerge in 2026, capable of building complex production systems in minutes. Whether or not that timeline is optimistic, the direction is clear: the value right now lies in closing the gap between knowing agents are capable and actually using that capability.

Multi-Agent Tools: Cowork, AgentCraft, and ralph-tui

The tooling around multi-agent orchestration had a busy day. @dejavucoder introduced Claude Cowork, which Anthropic describes as Claude Code for non-technical work. The launch had immediate competitive ripple effects. @guohao_li posted with admirable candor:

> "Anthropic Claude Cowork just killed our startup product. So we did the most rational thing: open-sourced it. Meet Eigent" — @guohao_li

The open-sourcing of Eigent follows a pattern we've seen before: when a platform vendor ships a feature that competes with your product, the fastest path to relevance is giving away the code and building community around it. Whether Eigent gains traction remains to be seen, but the move itself signals how quickly the multi-agent space is consolidating around major providers.

On the indie side, @theplgeek shipped ralph-tui, a terminal UI for managing agentic coding loops with PRD creation and task management built in. @idosal1 took a more playful approach with AgentCraft, letting developers orchestrate agents through an RTS game interface. These tools represent a growing recognition that managing multiple agents requires dedicated interfaces, not just more terminal tabs. The orchestration layer is becoming its own product category.

Node.js Critical Security Patch and Open-Source PII Detection

Not everything today was about agents. @matteocollina flagged a critical Node.js security release affecting "virtually every production Node.js app," specifically calling out React Server Components, Next.js, and APM tools like Datadog, New Relic, and OpenTelemetry as vulnerable to DoS attacks. The official @nodejs account confirmed patches across four release lines (25.x, 24.x, 22.x, 20.x) addressing three high-severity, four medium-severity, and one low-severity issue. If you're running Node.js in production and haven't patched yet, stop reading and go update.
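For teams that want to audit a fleet rather than check one machine, a version comparison along these lines may help. This is a sketch under assumptions: `parse_version` and `needs_patch` are hypothetical helpers, the patched minimum for each release line is deliberately not filled in here and must be copied from the official Node.js security release announcement, and only plain `major.minor.patch` strings are handled.

```python
def parse_version(version: str) -> tuple[int, int, int]:
    """Parse 'v22.1.0' or '22.1.0' into (22, 1, 0)."""
    major, minor, patch = version.lstrip("v").split(".")
    return int(major), int(minor), int(patch)


def needs_patch(current: str, patched_minimums: dict[int, str]) -> bool:
    """Report whether a Node.js version falls below its line's patched minimum.

    patched_minimums maps a major release line (for example 22) to the first
    patched version on that line, taken from the official advisory.
    """
    cur = parse_version(current)
    minimum = patched_minimums.get(cur[0])
    if minimum is None:
        # Release line not covered by the mapping: this sketch cannot decide,
        # so it flags the version for manual review.
        return True
    return cur < parse_version(minimum)
```

The `current` argument accepts the string printed by `node --version`. Treating unlisted release lines as needing attention is a conservative choice, since a line missing from the advisory may simply no longer receive fixes.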

In a separate but thematically related development, @MaziyarPanahi highlighted OpenMed's mass release of 35 PII detection models under Apache 2.0 licensing, specifically targeting healthcare AI safety with HIPAA and GDPR compliance. The open-source release of production-grade safety tooling is a welcome counterbalance to the speed-first culture that dominates most AI development discourse. Building fast is great. Building fast without leaking patient data is better.

Sources

"I don't like pull requests (PRs) any more. A large chunk code change doesn't tell me much about the intent or why it was done. I now prefer prompt requests. Just share the prompt you ran / want to run. If I think it's good, I'll run it myself and merge it." - @steipete wow

40 reasons 2026 is the best time ever to build a startup

1. Mobile apps are back. AI unlocks new behavior loops like autonomous logging, real-time reasoning, and adaptive UIs impossible in 2015. 2. iOS dev s...

85% Of People Will be Unemployable

You read that right. It was not a typo. According to my models, only 15% of working age adults will be employed once AI and robotics take off. The o...

Today, we’re introducing Personal Intelligence. With your permission, Gemini can now securely connect information from Google apps like @Gmail, @GooglePhotos, Search and @YouTube history with a single tap to make Gemini uniquely helpful & personalized to *you* ✨ This feature is launching in beta today in the @GeminiApp. See Personal Intelligence in action 🧵 ↓

Introducing Cowork: Claude Code for the rest of your work. Cowork lets you complete non-technical tasks much like how developers use Claude Code. https://t.co/EqckycvFH3

Tool Search now in Claude Code

Today we're rolling out MCP Tool Search for Claude Code. As MCP has grown to become a more popular protocol and agents have become more capable, we'...

Best money I've ever spent as a CEO... an internal AI transformation hire. He doesn't care about title. He just wants to ship. And he goes across your entire org, sales, revenue, hr, apps, tech and kills stupid manual processes. Such an underrated unlock.

We're encapsulating all our knowledge of @reactjs & @nextjs frontend optimization into a set of reusable skills for agents. This is a 10+ years of experience from the likes of @shuding, distilled for the benefit of every Ralph https://t.co/2QrIl5xa5W

AI Engineering has a Runtime Problem

Claude Code shipped two years after function calling. Models have outpaced the application layer. We have frameworks to build agents, we have observab...

So what would you recommend to someone who wants to start using your stack? I don’t want to use it all at once because then I don’t really feel how it works, if I add layers as I’m comfortable then I’ll feel better. What would be the simple to complex or critical to optional setup sequence?

.@trailofbits released our first batch of Claude Skills. Official announcement coming later. https://t.co/vI4amorZrc

Hermes V1 will ship as the default in React Native 0.84 for both iOS and Android. This means: • 2-8% faster startup time • 40%+ faster runtime • faster Metro compilation (less Babel transforms) Just landed in 0.84.0-rc.1 https://t.co/fnH0aMgQxD